AI inference platforms are evolving faster than most users can keep up with — and few platforms have generated as much curiosity in 2026 as Chutes AI.

Searches for “Chutes API,” “Chutes API key,” “Is Chutes AI free,” and “Infrastructure is at maximum capacity” have exploded because developers, Janitor AI users, roleplay communities, and AI hobbyists are all trying to access powerful models without paying enterprise-level pricing. The problem is that most Chutes guides barely explain how the platform actually works. They repeat API documentation and ignore the real friction: proxy error 429, overloaded GPU queues, pricing confusion, model congestion, and Janitor AI integration failures that happen at 9 PM on a Friday when you least want to debug infrastructure.

This guide fixes that.

You’ll learn how the Chutes API actually works, how to get an API key, what the pricing tiers cover, how free access behaves under real load, OpenAI compatibility, the best models for specific use cases in 2026, and how to recover from 429 and capacity errors without making them worse.

What Is the Chutes API and How Does It Work?

The Chutes API is an AI inference platform that provides access to large language models, roleplay models, coding models, and open-source AI systems through standard API requests — without running expensive GPU hardware locally.

Instead of buying or renting your own GPU cluster, you send prompts to Chutes-hosted models and receive responses. The platform works through REST APIs, bearer token authentication, and OpenAI-compatible formatting — which is the main reason it became popular in roleplay and AI enthusiast communities. Tools designed for OpenAI can connect to Chutes with minor configuration changes.

Chutes differs from centralized providers like OpenAI in one important way: it relies heavily on distributed infrastructure and independent node operators to process inference. That architecture changes queue behavior, reliability patterns, and how capacity errors manifest — which is why troubleshooting Chutes problems requires a different mental model than troubleshooting OpenAI failures.
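To make the request flow concrete, here is a minimal sketch of what an OpenAI-style call to Chutes might look like. The base URL and model ID are the values used later in this guide's setup section; the `/chat/completions` path is an assumption borrowed from the OpenAI convention, and `build_chat_request` is a hypothetical helper name. Verify all of them against the current Chutes documentation.

```python
import json
import urllib.request

# Base URL and model ID are the values used in this guide's setup section.
# The "/chat/completions" path is an assumption based on the OpenAI convention.
BASE_URL = "https://chutes.ai/api/v1"
MODEL = "chutes/glm-5-1"


def build_chat_request(api_key: str, prompt: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # Bearer token authentication: the API key goes in this header.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen(req, timeout=60)` would send it. Building the request separately from sending it makes the URL and headers easy to inspect when a connection fails.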

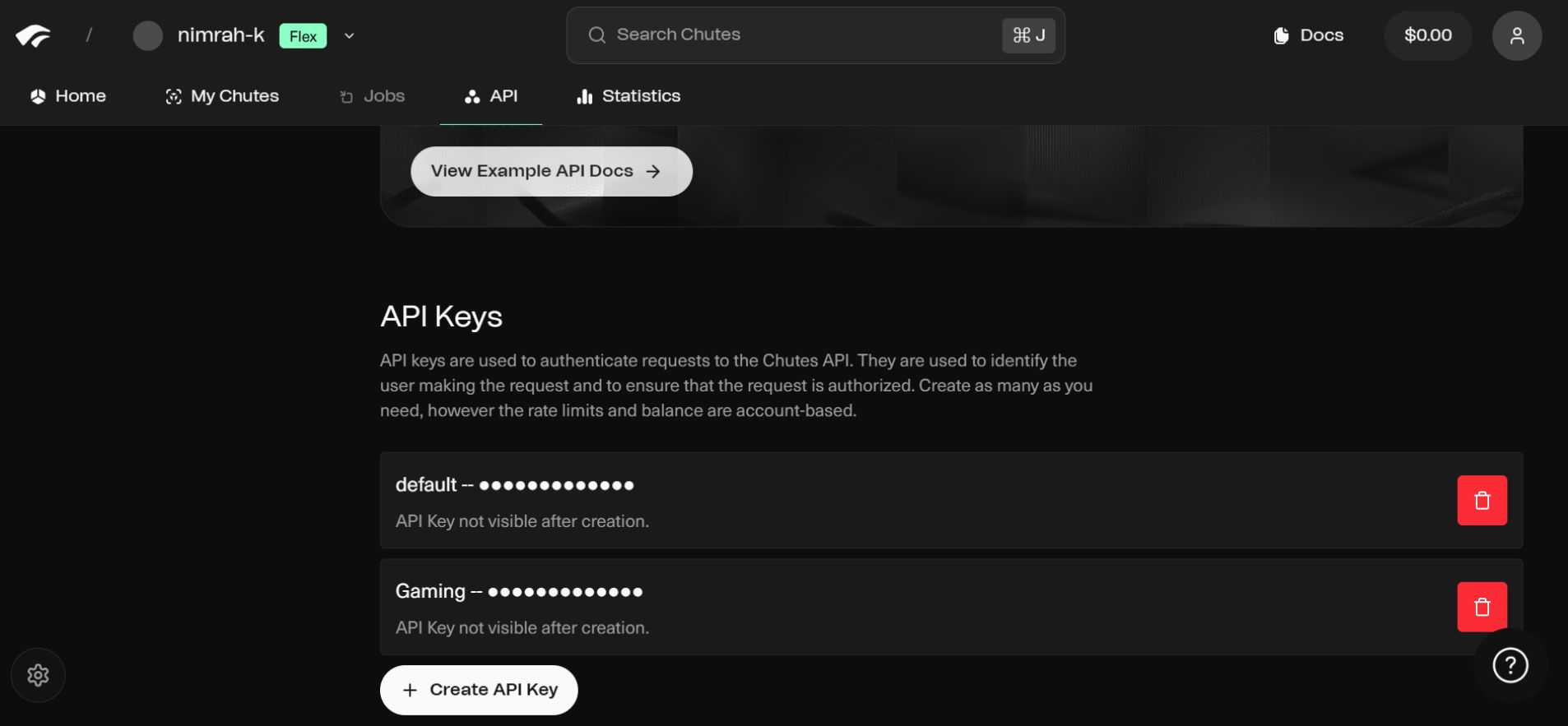

How to Get a Chutes API Key (Step-by-Step Setup Guide)

Step 1: Create a Chutes account at the Chutes AI official website using email or supported authentication.

Step 2: Open developer settings inside your dashboard. Look for “API settings,” “developer access,” “tokens,” or “API management” — the wording varies as the interface updates.

Step 3: Click “Generate API Key” or “Create Token.” Copy the key immediately. Most platforms reveal API keys only once for security reasons. If you miss it, you’ll need to generate a new one.

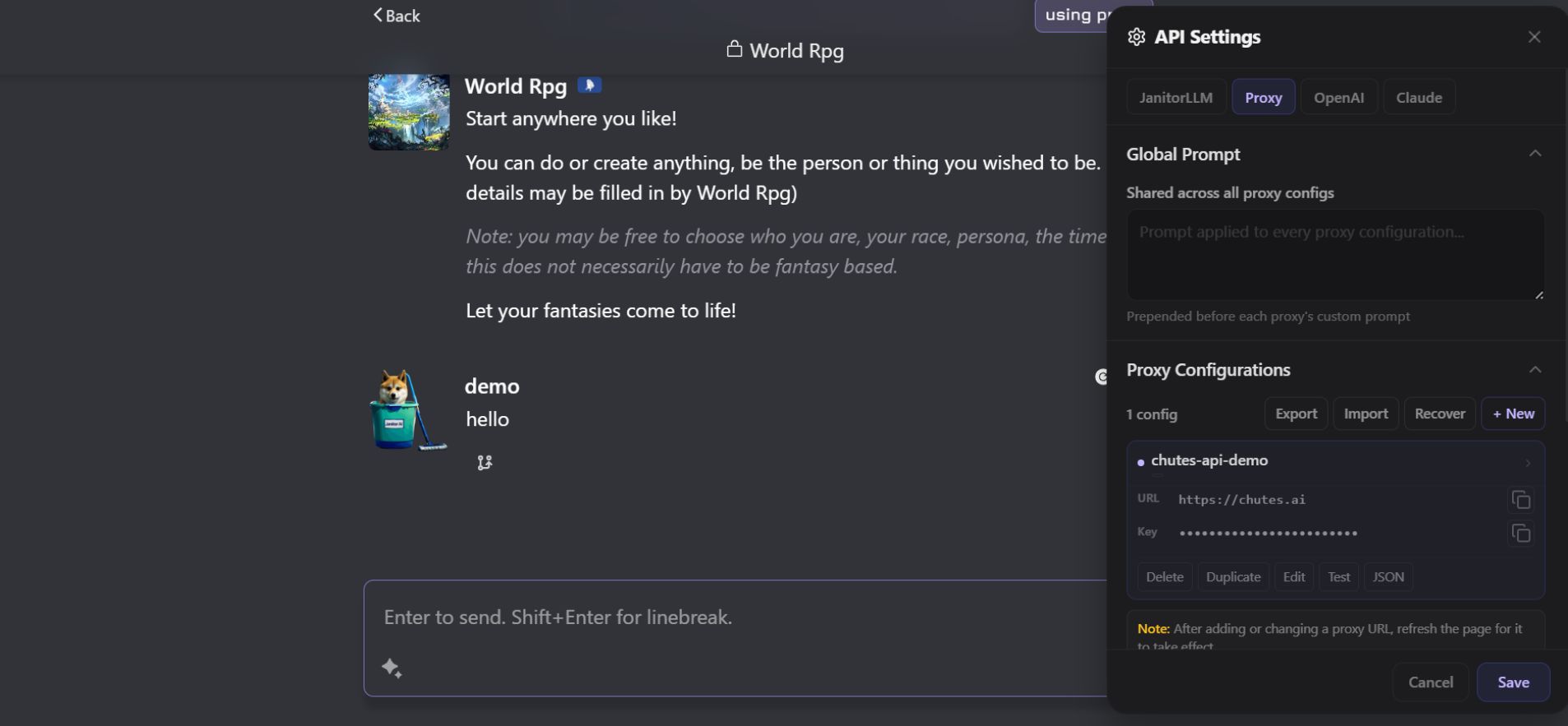

Step 4: Before you can use Chutes in chats, you first need to connect it properly inside Janitor AI using the proxy system.

1: Go to Proxy Settings

Open:

Settings → API Settings → Proxy

2: Add New Proxy

Click:

+ New

3: Enter Chutes API Details

Fill in the fields like this:

- Proxy Name: Chutes API

- URL: https://chutes.ai/api/v1

- API Key: Your Chutes API key

- Model: chutes/glm-5-1

4: Save & Refresh

Click Save, then refresh Janitor AI to activate the connection.

5: Select Proxy in Chat

Now open any character chat and:

- Go to model/proxy selector

- Choose Chutes API

Your connection is now active and ready to use.

The four fields above (proxy name, URL, API key, and model) cover most OpenAI-compatible integrations: Janitor AI, SillyTavern, TypingMind, and custom chatbot UIs all follow the same structure. Incorrect endpoint formatting is the most common setup failure, so verify the current base URL against Chutes’ documentation before assuming a key or model issue.
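Since endpoint formatting is the usual culprit, a small check before saving a configuration can catch the obvious mistakes. The sketch below is illustrative only: `normalize_base_url` is a hypothetical helper, and it assumes your client appends `/chat/completions` itself, as OpenAI SDK-based tools do. Some tools expect the full endpoint instead, so check which form yours wants.

```python
from urllib.parse import urlsplit, urlunsplit


def normalize_base_url(raw: str) -> str:
    """Return a cleaned base URL, or raise ValueError if it is unusable."""
    parts = urlsplit(raw.strip())
    if parts.scheme != "https" or not parts.netloc:
        raise ValueError(f"expected an https URL, got {raw!r}")
    path = parts.path.rstrip("/")
    # Assumption: the client appends the route itself, so a pasted full
    # endpoint would become ".../chat/completions/chat/completions".
    suffix = "/chat/completions"
    if path.endswith(suffix):
        path = path[: -len(suffix)]
    # Drop any query string or fragment that came along with the paste.
    return urlunsplit((parts.scheme, parts.netloc, path, "", ""))
```

For example, `normalize_base_url(" https://chutes.ai/api/v1/ ")` strips the stray whitespace and trailing slash, while a URL pasted without `https://` raises an error instead of failing silently later.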

Is Chutes AI Free? What Free Access Actually Looks Like Under Load

Yes — partially. And the “partially” matters a lot.

Free-tier access includes limited request volume, slower inference during peak traffic, shared GPU queues, stricter rate limits, and occasional infrastructure congestion. This explains nearly every complaint in Chutes community threads: 429 proxy errors, “infrastructure saturation” messages, “no instances available” walls, and queue delays that seem to come and go randomly.

Rate limits by tier (approximate — verify current limits in Chutes documentation):

| Plan | Est. Requests Per Minute | Est. Requests Per Day | Queue Priority |
|---|---|---|---|
| Free | 3–5 RPM | ~100 RPD | Lowest |
| Base ($3/month) | 10–15 RPM | ~500 RPD | Standard |
| Plus ($10/month) | 30+ RPM | Higher | Elevated |
| Pro ($20/month) | Priority access | High | Highest |

Free AI inference is never truly unlimited because GPU resources are expensive and finite. Free users share queues with everyone else — which means Friday evening peak hours hit free accounts hardest.
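One way to stay under a per-minute limit is to pace requests on the client side rather than waiting for the server to reject them. The sketch below spaces calls evenly for a given RPM budget. `RequestPacer` is a hypothetical helper, the RPM figure you pass in should come from the approximate table above or your own account's limits, and it does not track the daily cap.

```python
import time


class RequestPacer:
    """Space calls so they never exceed a requests-per-minute budget."""

    def __init__(self, rpm: float, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = 60.0 / rpm  # seconds between consecutive requests
        self._clock = clock
        self._sleep = sleep
        self._next_allowed = 0.0

    def wait(self) -> float:
        """Block until the next request is allowed; return seconds slept."""
        now = self._clock()
        delay = max(0.0, self._next_allowed - now)
        if delay:
            self._sleep(delay)
        # Schedule the following slot relative to when this request goes out.
        self._next_allowed = max(now, self._next_allowed) + self.min_interval
        return delay
```

Calling `pacer.wait()` before each request with `RequestPacer(rpm=3)` keeps at least 20 seconds between calls, which matches the low end of the free-tier estimate.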

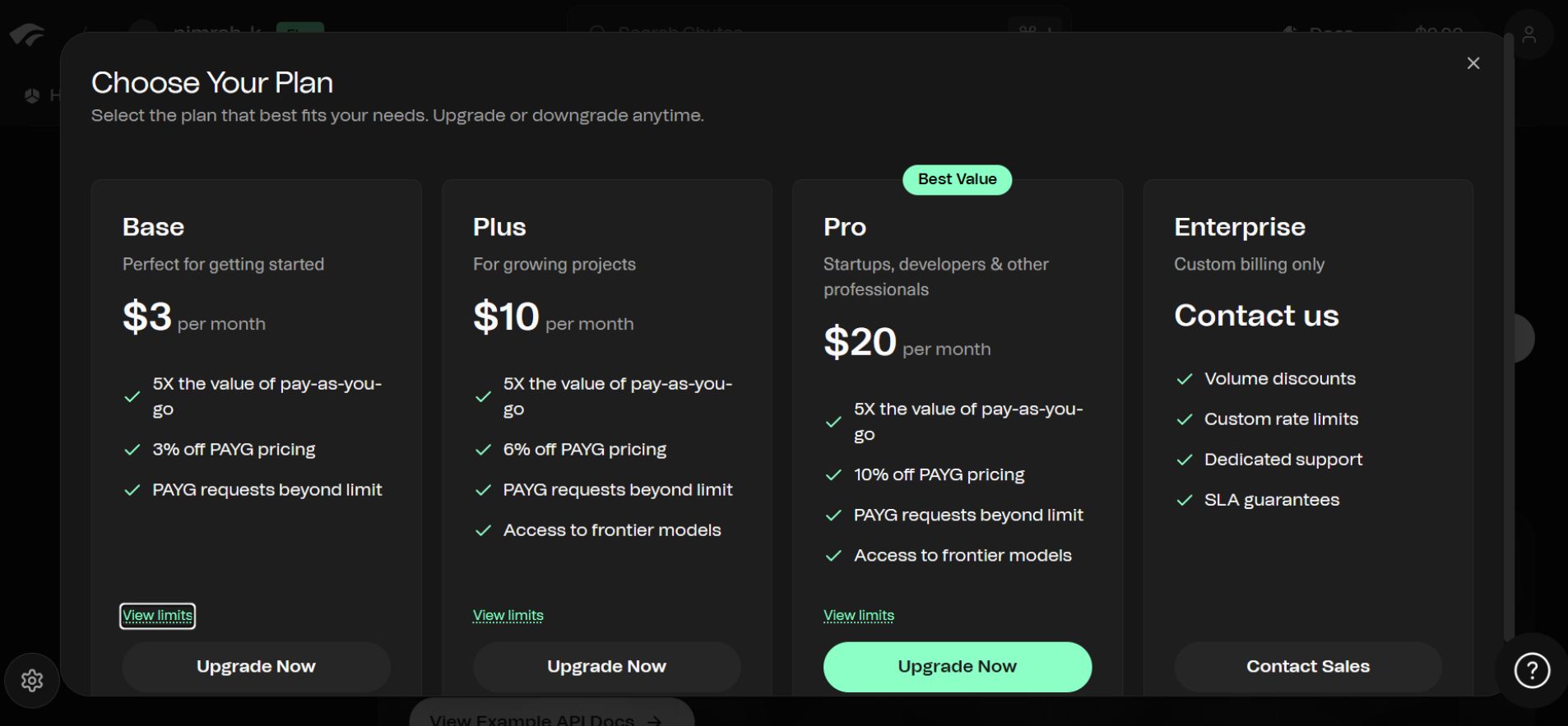

Chutes AI Pricing: Plans, PAYGO Costs & Token Rates

Chutes uses a hybrid pricing model combining subscriptions with Pay-As-You-Go (PAYGO) inference billing. Subscriptions unlock queue priority and frontier model access. PAYGO covers actual inference consumption.

| Plan | Price | Best For |
|---|---|---|
| Base | $3/month | Casual users |
| Plus | $10/month | Frontier model access |
| Pro | $20/month | Heavy users and priority queues |

PAYGO pricing for popular 2026 models:

| Model | Input Cost | Output Cost |
|---|---|---|
| GLM 5.1 TEE | $0.95 / 1M tokens | $3.15 / 1M tokens |
| Qwen 3.5 397B | $0.39 / 1M tokens | $2.34 / 1M tokens |
| MiniMax M2.5 | $0.30 / 1M tokens | $1.10 / 1M tokens |

Casual users rarely exhaust subscription limits quickly. But heavy roleplay sessions, long-context workflows, and coding automation consume PAYGO credits faster than expected — especially on reasoning-heavy models. Budget for PAYGO before assuming the subscription tier alone covers everything.
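To see how quickly PAYGO adds up, it helps to run the arithmetic for a concrete session. The sketch below uses the per-token rates from the table above. The session shape (200 turns, about 8,000 context tokens resent per turn, about 400 reply tokens) is a made-up example, not a measured workload.

```python
# PAYGO rates in USD per 1M tokens (input, output), copied from the table above.
RATES = {
    "GLM 5.1 TEE": (0.95, 3.15),
    "Qwen 3.5 397B": (0.39, 2.34),
    "MiniMax M2.5": (0.30, 1.10),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated PAYGO cost in USD for one workload."""
    input_rate, output_rate = RATES[model]
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


# Hypothetical session: 200 turns, each resending ~8,000 tokens of context
# and generating ~400 tokens of reply.
turns, context_tokens, reply_tokens = 200, 8_000, 400
for name in RATES:
    cost = estimate_cost(name, turns * context_tokens, turns * reply_tokens)
    print(f"{name}: ${cost:.2f}")
```

Under these assumptions the session comes to roughly $1.77 on GLM 5.1 TEE, $0.81 on Qwen 3.5 397B, and $0.57 on MiniMax M2.5. Input tokens dominate the bill because the full context is resent on every turn, which is why long-context roleplay burns credits faster than the reply lengths suggest.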

OpenAI Compatibility: What Works and What Doesn’t

Chutes is mostly OpenAI-compatible. The same SDK structure, chat completion formatting, and bearer authentication that works with OpenAI works with Chutes using the base URL swap shown in the setup section above.

The caveat: compatibility isn’t perfect. Some users hit unsupported parameters, context window mismatches, formatting inconsistencies, and model-specific behavior differences. Test integrations with simple prompts before deploying production workflows — and verify that your application handles Chutes’ specific error formats, which sometimes differ from OpenAI’s standard error responses.
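Because error bodies may not always match OpenAI's shape, it is safer to parse them defensively. The sketch below is one way to do that. The alternative shapes it checks (`detail`, a top-level `message`, a plain string `error`) are assumptions about what a non-OpenAI backend might return, not documented Chutes formats.

```python
import json


def extract_error_message(body: str) -> str:
    """Pull a readable message out of an error body of unknown shape."""
    try:
        data = json.loads(body)
    except json.JSONDecodeError:
        # Not JSON at all (for example an HTML gateway page): return it raw.
        return body.strip() or "empty error body"
    if isinstance(data, dict):
        err = data.get("error")
        # OpenAI-style: {"error": {"message": "..."}}
        if isinstance(err, dict) and isinstance(err.get("message"), str):
            return err["message"]
        # Assumed alternatives: {"error": "..."}, {"detail": "..."}, {"message": "..."}
        for value in (err, data.get("detail"), data.get("message")):
            if isinstance(value, str):
                return value
    # Unknown structure: fall back to the serialized JSON so nothing is lost.
    return json.dumps(data)
```

The function never raises on malformed input, so a UI or log line always gets something readable instead of a secondary parsing exception.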

What Are TEE Models in Chutes AI and Why Do They Matter?

TEE stands for Trusted Execution Environment. The TEE models run inside isolated secure environments designed to reduce infrastructure-level visibility into prompts and inference data, which matters as privacy concerns around AI inference have grown significantly in 2026.

Beyond the privacy benefit, TEE endpoints sometimes run on separate hardware allocation pools. In testing during a Friday evening peak, GLM 5.1 took 45 seconds to respond via the standard endpoint; the TEE version of the same model responded in 12 seconds. That pattern isn’t consistent, but when standard queues are saturated, TEE endpoints are worth trying because they may run on different infrastructure.

Chutes Model Decision: Privacy vs. Cost vs. Creativity

| Priority | Best Model Choice | Why |
|---|---|---|
| Privacy-first | GLM 5.1 TEE | Isolated execution, less infrastructure visibility |
| Budget + stability | MiniMax M2.5 | Low cost, rarely congested |
| Creative storytelling | Kimi K2.6 | Natural dialogue pacing, strong narrative flow |
| Logic and coding | Qwen 3.5 397B | Better structured reasoning |
| Slow-burn roleplay | GLM 5.1 | Strong emotional callback consistency |

The decision logic in plain terms: If you care about privacy, go TEE. If you need reliability during peak hours and cost efficiency matters more than raw capability, MiniMax M2.5 is the hidden gem of 2026: slightly less capable than Qwen on reasoning benchmarks, but it rarely hits the 429 wall. If you’re doing creative roleplay and emotional continuity matters, GLM 5.1 maintains character context better than most comparably priced models. If you’re doing structured coding or logic tasks, Qwen 3.5 397B is the right choice regardless of benchmark comparisons.

Best Chutes Models for Janitor AI Roleplay in 2026

Chutes became popular in Janitor AI communities because it offers flexible model access, OpenAI compatibility, and substantially lower costs than enterprise API alternatives. Most users searching for “Chutes API key for Janitor AI” or “best Chutes model for Janitor AI” are trying to build stable roleplay workflows without paying OpenAI rates.

Because Chutes is OpenAI-compatible, most Janitor AI configurations don’t require a separate reverse proxy; pointing Janitor AI’s proxy settings at the correct endpoint is usually enough. If you’re hitting a persistent Janitor AI proxy error 429, the cause is almost always Chutes infrastructure congestion rather than a configuration problem, and the fix is model rotation rather than reconfiguration.

Why Chutes Says “Infrastructure Is at Maximum Capacity”

This error means Chutes temporarily lacks enough available GPU resources to process requests efficiently. The most common causes: overloaded inference queues, demand spikes on popular models, shared GPU saturation, and frontier-model congestion.

The decentralized architecture is the key difference from OpenAI. When Chutes is overloaded, it’s usually a specific model’s node operators that are saturated — not the entire platform. That’s why one model fails while another works normally. GPU demand is now growing faster than inference infrastructure across the entire AI industry in 2026, and Chutes’ distributed model means that the imbalance shows up as inconsistent availability rather than clean platform-wide outages.

How to Fix Chutes Proxy Error 429 (Working Solutions for 2026)

A 429 error means too many requests were sent within a limited time window, or the infrastructure is throttling requests due to queue saturation. The most important rule: don’t spam the retry button. Rapid-fire retries extend cooldown penalties rather than bypassing them.
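For scripted clients, the equivalent of not spamming the retry button is exponential backoff. The sketch below uses the "equal jitter" variant, capped at 300 seconds to match the upper end of the recovery window this guide recommends. `retry_delay` is a hypothetical helper, and whether Chutes sends a `Retry-After` header is an assumption; the function simply honors one if you pass it in.

```python
import random
from typing import Optional


def retry_delay(attempt: int, retry_after: Optional[float] = None,
                base: float = 5.0, cap: float = 300.0) -> float:
    """Seconds to wait before retry number `attempt` (0-based)."""
    if retry_after is not None:
        # Honor the server's hint (seconds) if one was provided, within the cap.
        return min(max(retry_after, 0.0), cap)
    # Exponential growth: 5s, 10s, 20s, ... up to the cap.
    ceiling = min(cap, base * (2 ** attempt))
    # Equal jitter: wait at least half the ceiling, randomize the rest so
    # many clients do not retry in lockstep.
    return ceiling / 2 + random.uniform(0.0, ceiling / 2)
```

With these defaults the first retry waits 2.5 to 5 seconds, and from the seventh retry onward every wait falls between 150 and 300 seconds.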

The 429 Recovery Framework:

| Problem | Fix |
|---|---|
| DeepSeek variants congested | Switch to GLM 5.1 or Qwen 3.5 |
| General queue saturation | Wait 2–5 minutes, then retry |
| Long context requests are throttled | Reduce token limit by 30–50% |
| Janitor AI is showing a cached failure | Hard refresh (Ctrl + F5) to reset cached endpoint state |
| Continuous 429 loops | Rotate to MiniMax M2.5 — lowest congestion profile |
| Peak-hour congestion (evenings) | Switch to the TEE endpoint or a lower-demand model |

Specific fixes that work:

Switching to TEE models sometimes bypasses congestion because they operate on separate hardware pools. Reducing context window size is often more effective than switching models — large context requests are throttled first during traffic spikes. Hard refreshing browser-based tools like Janitor AI matters specifically because some capacity errors persist visually even after the underlying infrastructure recovers. The browser is showing a cached failure state, not a current one.
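The same recovery order can be written down as a fallback plan for a script: try the original request, then the same model with a smaller token limit, then other models. This is a sketch only. `recovery_plan` and `Attempt` are hypothetical names, the 40% reduction is one point inside the 30–50% range from the table, and the fallback model IDs in the test-style example are placeholders rather than real Chutes identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attempt:
    model: str
    token_limit: int


def recovery_plan(primary: str, fallbacks: list[str], token_limit: int,
                  shrink: float = 0.6) -> list[Attempt]:
    """Ordered attempts: same model with a smaller limit, then other models."""
    # shrink=0.6 keeps 60% of the limit, i.e. a 40% reduction.
    reduced = max(1, round(token_limit * shrink))
    plan = [Attempt(primary, token_limit), Attempt(primary, reduced)]
    # Rotate through fallbacks in the order given, skipping the primary.
    plan += [Attempt(model, reduced) for model in fallbacks if model != primary]
    return plan
```

Shrinking the request comes before switching models because, as noted above, large-context requests tend to be throttled first. Pair each step with a wait between attempts rather than firing them back to back.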

Chutes vs OpenRouter vs OpenAI: Which AI Inference Platform Is Better?

| Feature | Chutes | OpenRouter | OpenAI |
|---|---|---|---|
| Open-source models | Strong | Strong | Limited |
| Frontier reasoning models | Growing | Mixed | Strong |
| Free access | Better | Moderate | Limited |
| Infrastructure stability | Variable | Better | Strong |
| Roleplay popularity | Very high | Moderate | Low |
| Queue congestion | Higher | Moderate | Lower |
| Privacy (TEE) | Available | Limited | No |
| PAYGO pricing flexibility | Strong | Strong | Moderate |

The honest framing: Chutes trades infrastructure consistency for affordability and model variety. OpenAI trades flexibility for reliability. OpenRouter sits in the middle. For local and alternative AI workflows where cost and model variety matter more than uptime guarantees, Chutes is worth the queue management overhead. For production systems where reliability is non-negotiable, OpenAI’s infrastructure consistency justifies the premium.

Biggest Mistakes New Chutes API Users Make

Assuming free access means unlimited access. Shared GPU infrastructure always has limits — and the limit finds you at the worst possible time.

Using overloaded frontier models exclusively. The most popular models experience the worst congestion. Smaller, less-hyped models are often more stable for consistent workflows.

Ignoring rate limits. Rapid-fire retries worsen cooldown penalties. Wait out the 2–5 minute recovery window before retrying.

Using the wrong endpoint. Incorrect API URLs remain the most common setup failure for new users. Verify the current base URL in Chutes documentation before debugging anything else.

Chasing benchmark scores instead of stability. The highest-ranked model on a leaderboard may be unusable during peak hours. Reliability matters more than benchmark position for daily-use workflows.

How to Check Whether Chutes Is Actually Down

Before assuming your API key is broken or your configuration is wrong, check community Discord discussions, Reddit infrastructure reports, and official announcements. Most “API failures” are temporary inference congestion events affecting specific models — not platform-wide outages. The decentralized architecture means global status pages are less reliable indicators than community reports from users currently experiencing the same model queue.
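If you can send one tiny test request to two or three models, the pattern of responses usually tells you which kind of problem you have. The sketch below classifies a set of HTTP status codes you have already collected. `classify_outage` is a hypothetical helper, and treating 429 and 5xx as congestion and 401/403 as key problems is an assumption based on common HTTP usage, not on documented Chutes behavior.

```python
def classify_outage(status_by_model: dict[str, int]) -> str:
    """Summarize probe results given one HTTP status code per model ID."""
    if not status_by_model:
        return "no data"
    # An auth failure points at the key, not at capacity.
    if any(code in (401, 403) for code in status_by_model.values()):
        return "check API key"
    failing = {m for m, code in status_by_model.items()
               if code == 429 or code >= 500}
    if not failing:
        return "healthy"
    if len(failing) == len(status_by_model):
        return "possible platform-wide issue"
    # Some models answer and some do not: congestion on specific queues.
    return "model-specific congestion: " + ", ".join(sorted(failing))
```

A mixed result such as one model returning 200 and another returning 429 is the common case described above, and the fix is rotation rather than regenerating your key.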