The best local LLM for roleplay in 2026 isn’t just about size — it’s about thinking models, quantization quality, and lorebook memory.

New “interleaved thinking” models plan scenes before generating dialogue, dramatically improving character consistency.

Llama 4 Scout (9B) is the low-VRAM king.

Cult Hugging Face favorites like Rocinante-X-12B and Snowpiercer-15B win for low “AI slop.”

If your character sounds robotic, fix penalties — not just temperature.

The 2026 Shift: Thinking Before Speaking

In 2026, several open-weight models introduced interleaved reasoning — generating hidden planning steps before output. This dramatically improves narrative coherence and in-character stability during long-form RP.

Most competitors still rank models by parameter count.

That’s outdated.

The real upgrade?

Thinking blocks.

Models inspired by architectures like DeepSeek V3 and Kimi K2 internally “plan” the roleplay scene before producing dialogue.

Result:

- Fewer contradictions

- Better emotional pacing

- Less out-of-character drift

If you’ve ever had your vampire suddenly start narrating like a Wikipedia page — you know why this matters.

This plan-first approach mirrors the broader move toward AI systems that reason before they generate text.
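To make the idea of a hidden planning step concrete, here is a minimal Python sketch that separates a model's plan from the reply the user sees. It assumes the model wraps its planning in `<think>...</think>` tags, which is one common convention rather than something every model does; the function name `split_thinking` is hypothetical.

```python
import re

# Assumption: the model wraps hidden planning in <think>...</think> tags.
THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_thinking(raw: str) -> tuple[list[str], str]:
    """Return (planning_notes, visible_reply) from one raw completion."""
    plans = [p.strip() for p in THINK_BLOCK.findall(raw)]
    # Remove every planning block, leaving only the in-character text.
    visible = THINK_BLOCK.sub("", raw).strip()
    return plans, visible


raw = (
    "<think>She is angry but hiding it. Keep replies clipped.</think>\n"
    '"Fine," she said, not looking up.'
)
plans, reply = split_thinking(raw)
print(reply)
```

Most frontends hide or collapse this block for you; the sketch only shows what that separation amounts to.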

2026 Local LLM Roleplay Tier List (By GPU Class)

🥇 Tier 1 – High-End GPU (24GB+ VRAM)

1️⃣ Llama 4 Maverick

- Multimodal RP (text + image prompts)

- Massive context

- Strong internal reasoning

- Best overall 2026 pick.

2️⃣ Mistral Small 24B

- Strong “purple prose.”

- Minimal censorship layers

- Stable narrative voice

🥈 Tier 2 – Mid-Range GPU (12–16GB VRAM)

3️⃣ Llama 4 Scout (Q8 Recommended)

- 10M token context window

- Shockingly efficient

- Gold standard for RTX 3060 users

4️⃣ Rocinante-X-12B (Hugging Face cult favorite)

- Low repetitive phrasing

- Strong dialogue realism

- Feels less “corporate.”

5️⃣ Snowpiercer-15B

- Excellent emotional tension

- Handles morally grey characters well

- Low “AI-isms”

🥉 Ultra-Light & Mobile Tier

Gemma 3 (4B quantized)

- Great for Steam Deck / mobile experimentation

- Surprisingly coherent in short RP bursts

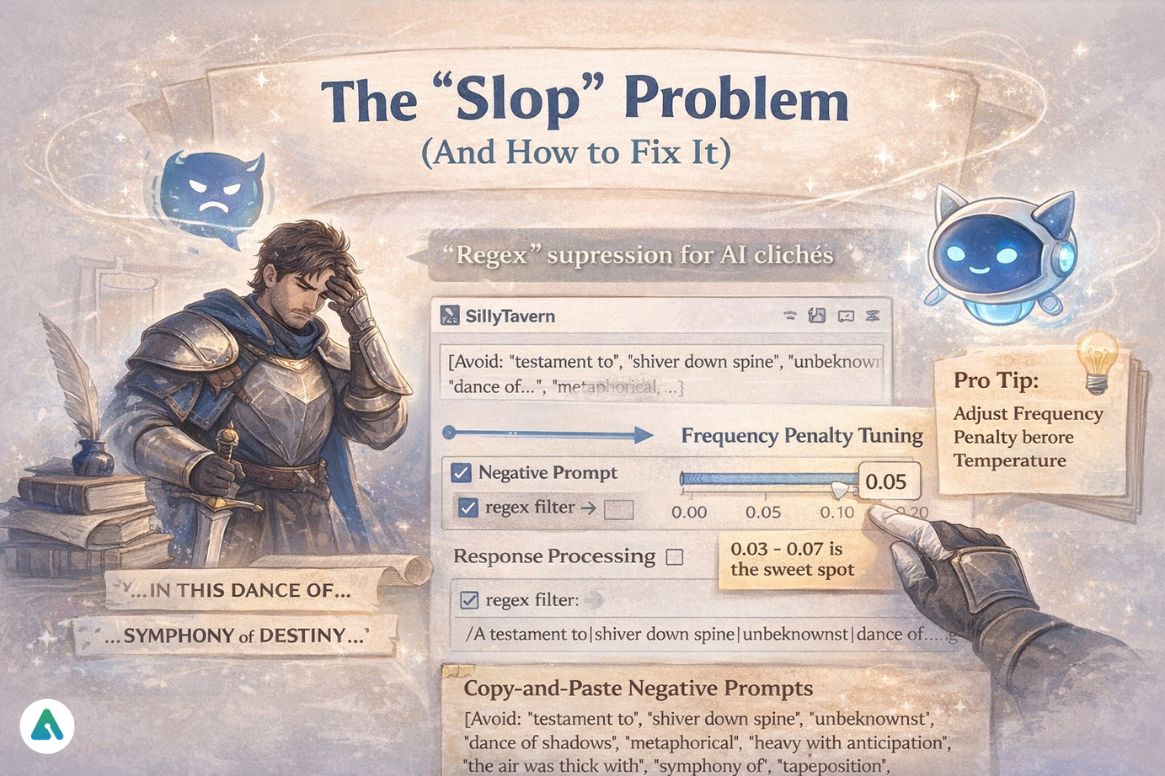

The “Slop” Problem (And How to Fix It)

“AI slop” refers to repetitive narrative fillers (“A testament to…”, “In this dance of…”) commonly produced by earlier instruction-tuned models.

2026 users hate this.

Fix It Inside SillyTavern:

- Add regex suppression for common AI phrases

- Keep repetition penalty low (1.05–1.1)

- Use frequency penalty: 0.03–0.07

- Inject writing-style negative prompts

Pro Tip: If your knight starts narrating like a LinkedIn influencer, don’t crank the temperature.

Adjust the frequency penalty to 0.05 first.
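Why the two penalties behave differently is easier to see in code. The sketch below uses simplified versions of the commonly documented definitions: a frequency penalty subtracts a small amount per prior occurrence, while a repetition penalty rescales any token that has appeared at all. Real backends may apply these over a limited window or in a different order, so treat the function names and the toy logits as illustrative assumptions.

```python
from collections import Counter


def apply_frequency_penalty(logits, generated, penalty=0.05):
    """Subtract penalty * (number of prior occurrences) from each logit."""
    counts = Counter(generated)
    return {tok: logit - penalty * counts.get(tok, 0) for tok, logit in logits.items()}


def apply_repetition_penalty(logits, generated, penalty=1.1):
    """Push down the logit of every token that has already appeared once or more."""
    seen = set(generated)
    out = {}
    for tok, logit in logits.items():
        if tok in seen:
            # Divide positive logits and multiply negative ones so both decrease.
            logit = logit / penalty if logit > 0 else logit * penalty
        out[tok] = logit
    return out


# Toy example: "testament" has already been generated three times.
logits = {"testament": 2.0, "sword": 1.5, "rain": -0.5}
history = ["testament", "testament", "testament", "rain"]

print(apply_frequency_penalty(logits, history))
print(apply_repetition_penalty(logits, history))
```

The frequency penalty grows with each repeat, which is why a small value such as 0.05 can curb a pet phrase without flattening the rest of the vocabulary.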

📋 The 2026 Negative Prompt Library (Copy-Paste Ready)

Most guides skip the actual prompt text. Here’s a starting point:

Recommended Negative Prompt for SillyTavern:

[Avoid: "testament to", "shiver down spine", "unbeknownst", "dance of shadows", "metaphorical", "heavy with anticipation", "the air was thick with", "symphony of", "tapestry of", "uncharted territory", "kaleidoscope", "juxtaposition", "a myriad of", "in the grand scheme", "this moment in time"]

How to implement:

- Open SillyTavern → Character Settings

- Find “System Prompt” or “Author’s Note”

- Paste the negative prompt block

- Test with 5-10 messages

- Adjust based on your character’s voice

Advanced users: Add regex filters in SillyTavern’s “Response Processing” to auto-strip these phrases post-generation.
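As a rough illustration of post-generation filtering, the Python sketch below scans a reply for a few phrases from the list above and drops any sentence that contains one. It is a standalone example, not SillyTavern's own regex feature; the names `find_slop` and `drop_sloppy_sentences` are hypothetical, and the sentence splitter is deliberately naive.

```python
import re

# A few phrases from the negative prompt list; extend as needed.
SLOP_PHRASES = [
    "testament to",
    "symphony of",
    "tapestry of",
    "a myriad of",
    "the air was thick with",
]

# One case-insensitive pattern that matches any listed phrase literally.
SLOP_RE = re.compile("|".join(re.escape(p) for p in SLOP_PHRASES), re.IGNORECASE)


def find_slop(text: str) -> list[str]:
    """Return every listed phrase found in the text, lowercased."""
    return [m.group(0).lower() for m in SLOP_RE.finditer(text)]


def drop_sloppy_sentences(text: str) -> str:
    """Remove whole sentences that contain a listed phrase."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return " ".join(s for s in sentences if not SLOP_RE.search(s))


reply = "Her blade was a testament to years of war. She sheathed it and left."
print(find_slop(reply))
print(drop_sloppy_sentences(reply))
```

Dropping the whole sentence is a design choice: cutting only the phrase tends to leave broken grammar behind.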

Quantization Loss (Why IQ4_XS > Old Q4)

New 2026 quant formats like IQ4_XS and GGUF-V3 preserve more of the original weights' precision than older 4-bit builds, reducing “intelligence collapse” on small GPUs.

Old advice: “Just use Q4.”

New reality:

- IQ4_XS retains more reasoning depth

- GGUF-V3 reduces tone degradation

- Q8 is still best if VRAM allows

If you care about immersive RP, quantization quality matters as much as model choice.

Technical users can explore more about GGUF quantization formats in the llama.cpp documentation.
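A quick way to compare quant levels is to estimate the size of the weights from bits per weight. The sketch below uses approximate figures for three llama.cpp quant types and assumes that "Q4" and "Q8" in this article correspond to `Q4_0` and `Q8_0`. Real GGUF files differ somewhat because some tensors are stored at other precisions, and the KV cache and runtime overhead come on top.

```python
# Approximate bits per weight, including per-block scale factors.
BITS_PER_WEIGHT = {"Q4_0": 4.5, "IQ4_XS": 4.25, "Q8_0": 8.5}


def weight_size_gb(n_params: float, quant: str) -> float:
    """Estimate weight storage in decimal gigabytes for a given quant type."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


# Example: a 9B-parameter model at each quant level.
for quant in BITS_PER_WEIGHT:
    print(f"{quant:>7}: {weight_size_gb(9e9, quant):.2f} GB")
```

The estimate covers weights only, so leave headroom for context before deciding that a model fits your card.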

Context Window Realism: The 10M Token Myth

Yes, Llama 4 Scout has a 10M context window.

No, you shouldn’t fill it all with text.

The Hidden Constraint:

Processing 1M tokens on an RTX 3060 takes ~10 minutes.

That means:

- Your “instant” response becomes a coffee break

- VRAM fills completely

- Generation slows to a crawl
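The arithmetic behind that figure can be sketched in a few lines of Python. It takes the article's number (1M tokens in about 10 minutes) as given, derives the implied prompt-processing speed, and scales it linearly to other prompt sizes. Linear scaling is an optimistic assumption, since attention cost grows with context length.

```python
def implied_prefill_speed(tokens: int, seconds: float) -> float:
    """Prompt-processing speed in tokens per second."""
    return tokens / seconds


def prefill_seconds(prompt_tokens: int, tokens_per_second: float) -> float:
    """Time to process a prompt, assuming speed stays constant."""
    return prompt_tokens / tokens_per_second


# Figure quoted above: 1M tokens in roughly 10 minutes.
speed = implied_prefill_speed(1_000_000, 10 * 60)

for n in (8_000, 16_000, 128_000, 1_000_000):
    print(f"{n:>9,} tokens -> {prefill_seconds(n, speed):7.1f} s")
```

With a warm KV cache only newly added tokens need this pass, which is why short active contexts feel instant while a cold million-token prompt does not.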

The 2026 Solution: Context Shifting (KV Cache)

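Runtimes like Text Generation WebUI and KoboldCPP reuse the KV cache between turns instead of reprocessing the whole prompt:

- Keep the most recent 4k-8k tokens “hot” in VRAM

- Shift the oldest tokens out as the window fills, without recomputing what remains

- Bring older facts back through lorebook or RAG retrieval only when they are relevant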
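The "keep recent tokens hot" part is essentially a sliding window over the chat history. Here is a minimal Python sketch of that trimming logic, assuming a crude whitespace token counter in place of the model's real tokenizer; `count_tokens` and `fit_to_budget` are hypothetical names, and real runtimes do this at the KV cache level rather than on strings.

```python
def count_tokens(text: str) -> int:
    # Crude stand-in: a real runtime would use the model's tokenizer.
    return len(text.split())


def fit_to_budget(system_prompt: str, messages: list[str], budget: int) -> list[str]:
    """Keep the system prompt plus as many of the newest messages as fit."""
    remaining = budget - count_tokens(system_prompt)
    kept = []
    # Walk backwards from the newest message until the budget runs out.
    for msg in reversed(messages):
        cost = count_tokens(msg)
        if cost > remaining:
            break
        kept.append(msg)
        remaining -= cost
    # Restore chronological order before returning.
    return [system_prompt] + kept[::-1]


history = ["one two three", "four five", "six seven eight nine"]
print(fit_to_budget("You are Mira.", history, budget=10))
```

Anything that falls out of the window is gone unless a lorebook or retrieval step puts it back, which is why memory tooling matters for long sessions.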

Practical limits by hardware:

| GPU | Realistic Active Context | Response Time |

|---|---|---|

| RTX 3060 (12GB) | 8k-16k tokens | <5 seconds |

| RTX 4090 (24GB) | 32k-64k tokens | <3 seconds |

| Mac M4 Ultra (192GB) | 128k+ tokens | <2 seconds |

Bottom line: Use the full context window for storage, but optimize for active processing in the 8k-32k range depending on hardware.
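To see why active context is limited by VRAM, it helps to estimate the KV cache size directly. The sketch below uses the standard formula for a transformer with grouped-query attention. The model shape in the example (40 layers, 8 KV heads, head dimension 128, 16-bit cache) is a hypothetical placeholder, not the spec of any model named in this article.

```python
def kv_cache_bytes(n_tokens, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Bytes needed to cache keys and values for n_tokens of context."""
    # The factor 2 accounts for storing both a key and a value per position.
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * n_tokens


# Hypothetical model shape, 16-bit cache entries.
for ctx in (8_192, 16_384, 65_536):
    size_gib = kv_cache_bytes(ctx, n_layers=40, n_kv_heads=8, head_dim=128) / 2**30
    print(f"{ctx:>6} tokens -> {size_gib:.2f} GiB")
```

The cache grows linearly with context, so doubling the active window doubles this number on top of the weights.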

Lorebooks & RAG: Beating the 100-Message Amnesia

Long-term RP collapses without memory.

Use:

- Lorebooks inside SillyTavern

- Vector DB memory injection

- RAG (Retrieval-Augmented Generation)

This prevents:

- Forgotten relationships

- Personality resets

- Timeline confusion

Memory management techniques similar to those used in AI companion lorebook systems apply equally to local LLM roleplay setups.
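Keyword-triggered lorebooks are simple enough to sketch. The Python example below scans the last few messages for trigger keys and injects matching entries into the prompt. It illustrates the general idea only: `LoreEntry`, `select_lore`, and `build_prompt` are hypothetical names, and the scan depth and entry cap are assumed defaults rather than SillyTavern settings.

```python
from dataclasses import dataclass


@dataclass
class LoreEntry:
    keys: tuple[str, ...]  # trigger words for this entry
    content: str           # fact injected when a key is mentioned


def select_lore(entries, recent_messages, scan_depth=4, max_entries=3):
    """Return entries whose keys appear in the last scan_depth messages."""
    window = " ".join(recent_messages[-scan_depth:]).lower()
    hits = [e for e in entries if any(k.lower() in window for k in e.keys)]
    return hits[:max_entries]


def build_prompt(character_card, entries, recent_messages):
    """Assemble card, triggered lore, and chat history into one prompt."""
    lore = "\n".join(f"[Lore] {e.content}" for e in select_lore(entries, recent_messages))
    chat = "\n".join(recent_messages)
    return "\n\n".join(part for part in (character_card, lore, chat) if part)


entries = [
    LoreEntry(("Elara",), "Elara is Kael's estranged sister."),
    LoreEntry(("Ashfall",), "Ashfall burned ten years ago."),
]
msgs = ["We ride at dawn.", "Do you think Elara will be there?"]
print(build_prompt("You are Kael, a weary mercenary.", entries, msgs))
```

A vector database replaces the keyword match with a similarity search, but the injection step works the same way.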

2026 Hardware-to-Model Match Table

| Hardware | Recommended Model | Secret Sauce |

|---|---|---|

| RTX 3060 (12GB) | Llama 4 Scout 9B (Q8) | 10M context window |

| RTX 4090 (24GB) | Llama 4 Maverick | Multimodal RP |

| Mac Studio (M4 Ultra) | Qwen3-Next 100B (MoE) | 20+ active experts |

| Steam Deck | Gemma 3 4B (Quantized) | RP on the go |

| CPU 32GB RAM | Rocinante-X-12B (IQ4_XS) | Low slop dialogue |


Can Local LLM Be as Good as ChatGPT?

Let’s be honest.

ChatGPT still wins in:

- World knowledge

- Tool integration

- Factual reliability

But for roleplay immersion?

Local models often:

- Stay in character better

- Allow darker or niche themes

- Offer full memory control

The days of needing a NASA supercomputer are over.

An RTX 3060 can now run elite roleplay setups.

The same trade-off shows up when comparing roleplay-optimized AI platforms with general-purpose assistants.

How to Prompt a 2026 Thinking Model

Step 1 – Scene Planner Block

Ask the model (hidden or visible):

“Plan the emotional beats of the next reply before writing dialogue.”

Step 2 – Character Core

Define:

- Speech cadence

- Moral alignment

- Trauma triggers

Step 3 – Anti-Slop Guardrails

Add:

- “Avoid poetic filler.”

- “No summarizing emotional states — show through action.”

Step 4 – Fine-Tune Sampling

- Temperature: 0.85

- Top_p: 0.92

- Frequency penalty: 0.05
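The four steps can be combined into a single request. The sketch below builds a payload shaped like an OpenAI-style chat completion request, a format many local runtimes accept; it only constructs and prints the payload and does not send it anywhere. The model name `local-model`, the function name, and the example character core are placeholders.

```python
import json


def build_request(character_core: str, user_message: str, model: str = "local-model") -> dict:
    """Combine planner, character core, guardrails, and sampling in one payload."""
    system = "\n".join([
        character_core,                                                        # Step 2
        "Plan the emotional beats of the next reply before writing dialogue.",  # Step 1
        "Avoid poetic filler.",                                                # Step 3
        "No summarizing emotional states; show through action.",               # Step 3
    ])
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user_message},
        ],
        # Step 4: sampling values from this guide.
        "temperature": 0.85,
        "top_p": 0.92,
        "frequency_penalty": 0.05,
    }


core = "Speech: clipped, formal. Alignment: lawful neutral. Trigger: fire."
request = build_request(core, "The tavern door creaks open.")
print(json.dumps(request, indent=2))
```

Keeping the prompt and sampler values in one place makes it easier to change a single setting at a time and compare results.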

Visual: 2026 Local LLM Setup

Typical stack:

SillyTavern → Local runtime (Ollama / KoboldCPP) → Quantized GGUF model → Lorebook memory layer.

Architecture Flow:

┌─────────────────────────────────────┐
│ USER INTERFACE: SillyTavern         │
│ (Character cards, lorebooks)        │
├─────────────────────────────────────┤
│ RUNTIME: Ollama/KoboldCPP           │
│ (Context management, sampling)      │
├─────────────────────────────────────┤
│ MODEL: Llama 4 Scout 9B (Q8)        │
│ (Thinking blocks + generation)      │
├─────────────────────────────────────┤
│ MEMORY: Vector DB + Lorebook        │
│ (Character facts, relationships)    │
└─────────────────────────────────────┘

Contrarian Insight

Reddit says:

“Go 70B or go home.”

Reality:

A tuned 9B with planning blocks and clean quantization can outperform a raw 70B in immersive RP.

Why?

Consistency > Raw intelligence.

2026 Future Trends

- Interleaved thinking becomes the default

- 50k+ persistent memory sessions

- Hybrid local + API fallback models

- Slop detection baked into UIs

Advanced Roleplay Tuning: Fix Tone, Repetition & Memory Drift

- If the character drifts out of tone: lower top_p before raising the temperature.

- If dialogue feels repetitive: increase repetition_penalty by 0.05 increments.

- If emotional arcs feel flat: use explicit “emotion target” prompts per reply.

- If memory collapses after 80–120 messages: your lorebook retrieval threshold is too low.
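The two sampler-related rules can be written down as a small adjustment function, which helps when you want to change one setting at a time and keep a record. This is only a sketch: the `top_p` step of 0.02 and floor of 0.5 are assumptions chosen for illustration, and the symptom labels are hypothetical.

```python
def adjust(settings: dict, symptom: str) -> dict:
    """Return a copy of the sampler settings nudged for one symptom."""
    new = dict(settings)
    if symptom == "tone_drift":
        # Lower top_p first; the 0.02 step and 0.5 floor are assumed values.
        new["top_p"] = round(max(0.5, new["top_p"] - 0.02), 2)
    elif symptom == "repetitive":
        # Raise repetition_penalty in 0.05 increments.
        new["repetition_penalty"] = round(new["repetition_penalty"] + 0.05, 2)
    else:
        raise ValueError(f"no sampler rule for symptom: {symptom}")
    return new


base = {"temperature": 0.85, "top_p": 0.92, "repetition_penalty": 1.05}
print(adjust(base, "tone_drift"))
print(adjust(base, "repetitive"))
```

Flat emotional arcs and memory collapse are prompt and lorebook problems, so they are left out of the sampler function on purpose.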

FAQs

Q. What is the best local LLM for roleplay in 2026?

Llama 4 Scout (9B) offers the best balance of performance and VRAM efficiency for most users. For high-end GPUs (24GB+), Llama 4 Maverick provides superior multimodal capabilities and reasoning depth.

Q. How much VRAM do I need for local roleplay LLMs?

12GB minimum (RTX 3060 or equivalent) for comfortable roleplay with 9B models at Q8 or 12B models at a 4-bit to 6-bit quant (a 12B model at Q8 needs roughly 12-13GB for the weights alone). 24GB enables 24B+ models or higher context windows. CPU-only setups with 32GB RAM can run smaller models with slower inference.

Q. What is “AI slop” and how do I avoid it?

“AI slop” refers to repetitive, overly poetic filler phrases like “testament to” or “dance of shadows.” Avoid it by: (1) using negative prompts to suppress common phrases, (2) setting frequency penalty to 0.05-0.07, and (3) choosing models known for low slop like Rocinante-X-12B.

Q. Can I use the full 10M context window on Llama 4 Scout?

Technically yes, practically no. Processing 1M tokens takes ~10 minutes on an RTX 3060. Keep 8k-16k tokens “active” in the KV cache and bring older material back through lorebook or RAG retrieval when it becomes relevant.

Q. What’s the difference between Q4, IQ4_XS, and Q8 quantization?

- Q4: Older 4-bit format, noticeable quality loss

- IQ4_XS: Improved 4-bit format preserving reasoning depth

- Q8: 8-bit quantization, minimal quality loss, gold standard if VRAM allows

For roleplay, use Q8 on 12GB+ GPUs, IQ4_XS on 8GB GPUs.

Q. How do thinking models improve roleplay compared to standard LLMs?

Thinking models use interleaved reasoning — generating hidden planning steps before output. This results in: (1) better emotional pacing, (2) fewer character contradictions, (3) improved long-term consistency, and (4) reduced out-of-character drift during extended sessions.

Q. Should I use local LLMs or cloud services like Character.AI for roleplay?

Local LLMs offer: (1) full control over content, (2) no censorship, (3) complete privacy, and (4) unlimited usage after hardware investment. Cloud services provide: (1) no hardware requirements, (2) easier setup, and (3) better general knowledge. Choose based on priorities around control versus convenience.

Detailed comparisons across platform moderation philosophies help determine which approach suits specific roleplay needs.

Conclusion

The best local LLM for roleplay in 2026 isn’t just the biggest model.

It’s the right combination of:

- Thinking architecture

- Clean quantization

- Lorebook memory

- Sampling discipline

If you’re running an RTX 3060, you’re already in the game.

The real edge now?

Knowing how to make your model think before it speaks.


Disclaimer: This article is for informational purposes only. Model performance, features, and hardware requirements may change as updates are released. Mentions of specific models or tools reflect community trends and do not constitute endorsements. Always review software licenses, platform terms, and local regulations before downloading or running any local LLM. Use AI systems responsibly and in compliance with applicable laws and guidelines.