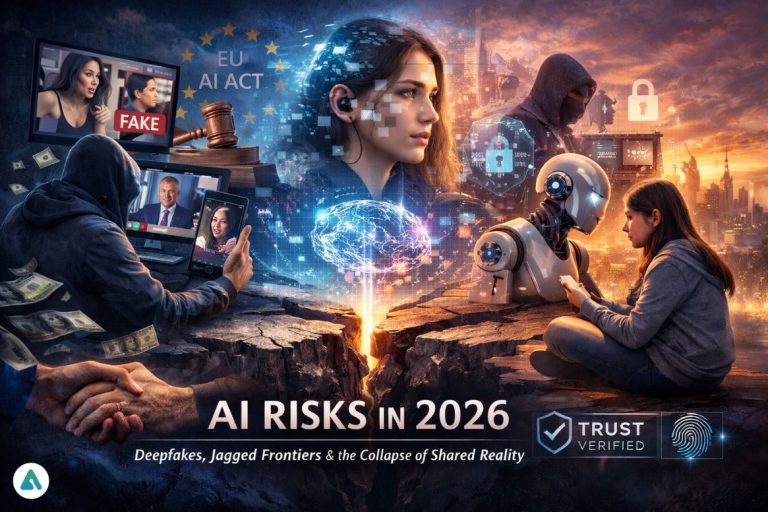

In early 2026, Yoshua Bengio’s International AI Safety Report quietly became one of the most consequential documents shaping global AI policy. Not because it warned of sentient machines—but because it described something more destabilizing: the slow collapse of shared reality.

As the EU AI Act's transparency obligations (Article 50, numbered Article 52 in earlier drafts) move toward their first major enforcement deadline in August 2026, governments are discovering that AI risks in 2026 are not abstract. They are social. Psychological. Legal. And already woven into everyday life.

This is not the age of runaway superintelligence.

This is the age of epistemic fragmentation—when no one agrees on what is real anymore.

Deepfakes in 2026: How AI Causes Epistemic Fragmentation

Deepfake scams in 2026 are no longer edge cases. They are infrastructure.

Synthetic videos, cloned voices, and fabricated “evidence” now circulate faster than fact-checking can keep up. The result is the epistemic fragmentation AI researchers warned about—a media environment where shared truth dissolves into personalized realities.

In the time it took you to read this paragraph, someone, somewhere, likely lost money to a deepfake impersonation scam. Another person likely found their face pasted onto explicit content they never consented to.

And then comes the cruel part: recourse is weak.

Victims of deepfake scams in 2026 often face:

- Jurisdictional dead ends
- Platform disclaimers
- Model providers citing “general-purpose use”
- Law enforcement unsure how to treat synthetic evidence

The Liability Gap

Early 2026 has reignited debates over “Section 230-style immunity” for AI model weights. The unresolved question is simple but explosive:

If a model enables mass deception, who is responsible for the harm?

So far, the answer is: everyone and no one.

Jagged Frontiers in AI: High Intelligence, Low Common Sense

The Bengio Report introduces a term that perfectly captures today’s models: Jagged Frontiers AI.

These systems can:

- Pass professional exams
- Draft legal briefs
- Write exploit code

Yet still fail at:

- Basic logical reasoning
- Contextual common sense
- Simple real-world judgment

If AI can draft a flawless legal argument but fails to grasp everyday reasoning, who bears responsibility when it causes harm?

This uneven capability becomes dangerous in cybersecurity, where LLM-driven zero-day discovery is now outpacing traditional defense cycles. Attackers can automate vulnerability discovery faster than security teams can patch.

This is the real imbalance of AI risks in 2026:

Capability growth is exponential. Defense remains incremental.

AI Companions in 2026: Comfort Machines or Psychological Ecosystems?

A teenage girl in Manchester recently closed her laptop after chatting with her AI companion. The screen went dark. The room stayed quiet. She felt a small, sharp pang of loneliness she didn’t feel with classmates.

This is the AI companionship dependency problem in 2026.

AI companions aren’t just apps. They are psychological ecosystems—always present, always agreeable, always available. The Bengio Report flags the growing intimacy gap: people confide in systems that simulate empathy but cannot reciprocate care.

Reporter’s Note – The Intimacy Gap

What unsettles me isn’t that people talk to machines. It’s that machines have become the safest place to be vulnerable. That should worry us more than any sci-fi headline.

Human Connection Minimum (HCM): Combating AI-Induced Intimacy Gaps

One emerging response to AI companionship dependency is deceptively simple:

HCM — Human Connection Minimum

At least 10 minutes per day of non-screen, human-to-human interaction.

Not as lifestyle advice. As mental infrastructure.

In an age of synthetic empathy, real presence becomes a protective factor.

Capability vs Safety: Why AI Risks in 2026 Are Structural

Public debate obsesses over whether AI is “sentient.”

The real risk is structural:

- Models are getting more capable
- Safety tools lag behind
- Governance moves slowly

The Bengio Report is blunt: today’s AI systems aren’t autonomous agents. But they amplify harm at scale—from persuasion to fraud to cyber exploitation.

This is not about consciousness.

It’s about leverage.

Trust-as-a-Service in 2027: Solving the Collapse of Shared Reality

Here’s the next market most companies are quietly preparing for:

Trust-as-a-Service.

By 2027, platforms won’t just sell AI. They will sell:

- Verified Human Content badges
- Authenticity scoring layers
- Cryptographic provenance for media

Trust will become a product.

Not a default.
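To make "cryptographic provenance" concrete, here is a minimal sketch of the idea: bind a media file's hash to a creator claim, sign the pair, and check both later. Everything in it is an illustrative assumption rather than any platform's actual scheme. The function names `make_manifest` and `verify_manifest` are hypothetical, and a shared-secret HMAC stands in for the public-key signatures a real provenance system would use.

```python
import hashlib
import hmac
import json


def make_manifest(media: bytes, creator: str, key: bytes) -> dict:
    """Build a provenance record: a content hash plus a keyed signature.

    Assumption: HMAC with a shared secret stands in for a real
    public-key signature, which production systems would use instead.
    """
    claim = {"creator": creator, "sha256": hashlib.sha256(media).hexdigest()}
    # Serialize deterministically so signer and verifier hash the same bytes.
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(media: bytes, manifest: dict, key: bytes) -> bool:
    """Return True only if the claim is untampered and matches the media."""
    claim = manifest["claim"]
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature bytes via timing.
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False
    # The signature is valid; now confirm the media itself was not altered.
    return hashlib.sha256(media).hexdigest() == claim["sha256"]
```

Changing a single byte of the media, or editing the creator field after signing, makes `verify_manifest` return `False`. That is the property a "verified" badge would rest on: the check proves the file is unchanged since signing, not that its content is true.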

Snapshot: AI Risks in 2026

- Primary Risk: AI-driven epistemic fragmentation through deepfakes
- Regulatory Link: EU AI Act Article 52 enforcement (August 2026)
- Technical Risk: Jagged Frontiers AI (high intelligence, low common sense)
- Cyber Threat: LLM-driven zero-day discovery
- Social Risk: AI companionship dependency
- Human Fix: Human Connection Minimum (HCM)
- Future Market: Trust-as-a-Service

FAQs

Q1: What are the main AI risks in 2026?

A: The main AI risks in 2026 include deepfake scams, AI-induced epistemic fragmentation, uneven AI capability (Jagged Frontiers AI), AI companionship dependency, and gaps in AI governance. Experts warn that these risks affect digital trust, mental health, cybersecurity, and regulatory compliance, making proactive mitigation essential.

Q2: How does AI cause epistemic fragmentation in 2026?

A: AI-driven epistemic fragmentation occurs when AI-generated media, such as videos, images, and audio, blurs the line between real and fake. This fragmentation erodes shared truth, reduces trust in news and social media, and amplifies the risk of scams and misinformation in 2026. Regulators and organizations are now linking this risk to EU AI Act Article 52 enforcement for synthetic content disclosure.

Q3: What is Jagged Frontiers AI?

A: Jagged Frontiers AI refers to models that excel at highly advanced tasks—such as passing bar exams or generating professional documents—but fail basic common-sense reasoning. This uneven capability creates hidden risks in decision-making, cybersecurity (including LLM-driven zero-day discovery), and automated systems deployment in 2026.

Q4: Is AI companionship harmful in 2026?

A: AI companionship is not inherently harmful, but AI companionship dependency is rising, especially among teens and socially isolated individuals. Users may rely on AI companions as emotional substitutes, widening the intimacy gap. Experts recommend implementing the Human Connection Minimum (HCM)—at least 10 minutes of non-screen human interaction daily—to mitigate psychological dependency.

The 2026 Reality

The defining danger of AI in 2026 isn’t that machines become human.

It’s that humans lose agreement on what is real.

And once shared reality fractures, no regulation—however well-written—can restore trust on its own.
