Janitor AI works differently from most AI roleplay platforms — and that difference is exactly what makes it worth learning properly. Where Character AI and similar platforms lock you into one model, Janitor AI lets you connect your own LLM backend. That flexibility is the feature. It’s also the source of most of the problems users run into.

This guide covers what actually matters: how the memory architecture works, how to connect DeepSeek without the errors that catch most people on the first try, how to prevent the “AI dementia” that kills long sessions, and how to fix the ghosting problem that kills immersion mid-conversation.

How the Janitor AI Platform Actually Works

Understanding the internal pipeline makes troubleshooting feel less like guesswork.

When a message is sent, Janitor AI assembles a prompt from three sources: the System Note (persistent behavioral instructions), the character’s Lore (permanent traits), and recent conversation history from the Context Window. That assembled prompt goes to either JanitorLLM or a connected external model like DeepSeek. The response comes back, the context window updates, and Lore stays untouched.
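The Python sketch below makes that flow concrete. It is not Janitor AI’s actual code. The message roles, the ordering, and the four-characters-per-token estimate are all assumptions for illustration. It does show the key property: the System Note and Lore are always included, while conversation history fills the remaining budget newest-first. That means the oldest turns are the ones that fall off.

```python
from dataclasses import dataclass, field


@dataclass
class Character:
    system_note: str  # persistent behavioral instructions, sent every turn
    lore: str         # permanent traits, never trimmed
    # Recent turns as (role, text) pairs, oldest first; this is what gets trimmed.
    history: list[tuple[str, str]] = field(default_factory=list)


def rough_tokens(text: str) -> int:
    # Assumption: roughly 4 characters per token; real tokenizers differ.
    return max(1, len(text) // 4)


def assemble_prompt(char: Character, budget: int) -> list[dict]:
    """Always include System Note and Lore, then as many recent turns as fit."""
    fixed = [
        {"role": "system", "content": char.system_note},
        {"role": "system", "content": char.lore},
    ]
    remaining = budget - sum(rough_tokens(m["content"]) for m in fixed)
    kept = []
    for role, text in reversed(char.history):  # walk newest to oldest
        cost = rough_tokens(text)
        if cost > remaining:
            break  # this turn and everything older is trimmed
        kept.append({"role": role, "content": text})
        remaining -= cost
    kept.reverse()  # restore chronological order
    return fixed + kept
```

In this model, Lore uses part of the budget but is never dropped. Only history is. That is why a trait stored in Lore survives long after the early turns that introduced it are gone.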

That two-tier memory system is the thing most guides skip over, and it’s the root cause of most character consistency failures. Lore is permanent — personality, backstory, speech patterns, key relationships. It never expires between sessions. The Context Window is temporary — current scene details, recent dialogue, and what happened in the last few exchanges. It gets trimmed as the conversation extends.

Putting long-term character traits in the context window instead of Lore is why AIs “forget” who they are after 50 messages. The fix is structural. It depends on where information is stored, not on changing models or settings.

Janitor AI runs as a Progressive Web App rather than a native app. Always install it directly from janitorai.com. Unofficial app store listings are a recurring problem, and fake Janitor AI apps have appeared on both the Play Store and the App Store.

DeepSeek BYO-API: The Setup That Actually Works

Connecting DeepSeek is straightforward once you know the two failure points that catch almost everyone.

Before generating an API key, add at least a few dollars of balance to the DeepSeek account. Keys tied to empty accounts fail silently in Janitor AI. There is no useful error message, just an infinite load or a vague connection failure. This is the single most common cause of failed first connections.

The setup steps:

- Go to platform.deepseek.com and generate an API key

- In Janitor AI: Settings → External AI → Add API

- Base URL: https://api.deepseek.com/v1 (the /v1 suffix is mandatory; leaving it off causes 404 errors every time)

- Model name: deepseek-chat for standard use, deepseek-reasoner for complex reasoning tasks

- Paste the API key, save, and send a short test message before building any character setup
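Before blaming Janitor AI, it can help to sanity-check the same settings outside it. The Python sketch below flags the two configuration mistakes behind the most common connection errors: a missing /v1 and a misspelled model name. It then builds, without sending, the kind of request an OpenAI-compatible client makes, which is a POST to {base_url}/chat/completions with a Bearer token. The strict /v1 check mirrors this guide’s advice for Janitor AI’s Base URL field. The test prompt and the max_tokens value are arbitrary choices.

```python
import json
import urllib.request

KNOWN_MODELS = {"deepseek-chat", "deepseek-reasoner"}


def check_config(base_url: str, model: str) -> list[str]:
    """Flag the setup mistakes behind the most common connection errors."""
    problems = []
    if not base_url.rstrip("/").endswith("/v1"):
        problems.append("Base URL lacks the /v1 suffix (expect 404 errors)")
    if model not in KNOWN_MODELS:
        problems.append(f"unrecognized model name {model!r} (expect 'Model Not Found')")
    return problems


def build_test_request(base_url: str, api_key: str, model: str) -> urllib.request.Request:
    """Build, but do not send, a tiny OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": "Reply with one word."}],
        "max_tokens": 5,  # keep the test cheap
    }
    return urllib.request.Request(
        base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )
```

Passing the request to urllib.request.urlopen would actually send it and spend a few tokens. DeepSeek’s error-code documentation lists 401 for authentication failures and 402 for insufficient balance. A 401 therefore points at the key, and a 402 points at funding.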

Common errors and what they mean:

| Error | Cause | Fix |
|---|---|---|
| 404 / Connection Failed | Missing /v1 in Base URL | Add /v1, without exception |
| Model Not Found | Typo in model name | Use the exact spelling: deepseek-chat |
| Infinite loading, no response | API balance is $0 | Add funds at platform.deepseek.com |
| Error 429 | Rate limit hit | Wait, or use OpenRouter as middleware |
| Ghosting mid-conversation | Token overflow or expired session | See the ghosting fix below |
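For the 429 row, waiting works best when it is systematic. If you test the API with your own scripts, a standard approach is exponential backoff with jitter. You retry after roughly 1s, then 2s, then 4s, with a little randomness added so retries don’t bunch up. In this Python sketch, send is a hypothetical function you supply that makes one request and returns its HTTP status code. The retry count and base delay are assumptions to tune.

```python
import random
import time
from typing import Callable


def with_backoff(send: Callable[[], int], max_tries: int = 5,
                 base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> int:
    """Retry send() while it returns 429, doubling the wait each time.

    send is a hypothetical callable that makes one request and returns the
    HTTP status code. Returns the last status seen."""
    status = send()
    for attempt in range(1, max_tries):
        if status != 429:
            break
        delay = base_delay * 2 ** (attempt - 1)     # 1s, 2s, 4s, ...
        sleep(delay + random.uniform(0, delay / 2))  # add jitter
        status = send()
    return status
```

If a 429 response includes a Retry-After header, honoring that value beats guessing.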

An alternative worth knowing is OpenRouter (https://openrouter.ai/api/v1) as a middleware layer. One key covers DeepSeek plus several other models, which simplifies key management if different characters work better on different LLMs. It also helps when Janitor AI proxy errors like 429 keep recurring, since OpenRouter’s paid tier has higher rate limits.

JanitorLLM: When to Use the Native Model

JanitorLLM is the platform’s built-in model — free, uncensored, no configuration required. For users who want to understand how the character and memory system works before committing to API costs, it’s the right starting point.

It works well for casual roleplay, first-time platform testing, and scenarios where external moderation filters create friction. It falls short for multi-character setups, long sessions requiring high context fidelity, or anything where output quality consistency matters across 100+ messages. DeepSeek’s 128K context window is a real advantage for extended sessions — JanitorLLM’s context handling is more limited.

The practical approach: start with JanitorLLM to learn how Lore, System Notes, and the context window interact in practice. Move to DeepSeek once the mechanics are understood and the character setup is worth the API cost.

Token Management: Preventing AI Dementia

The 3,200-token ceiling per character definition isn’t a bug. It is a constraint that shapes how characters should be built. Exceeding it causes the AI to contradict established traits, lose its character voice, and produce generic responses. The community calls this “AI dementia.” The fix is to put each piece of information where it belongs.

Rule of thumb: if a piece of information should still be true about the character in 50 messages, it belongs in Lore. If it only matters for the current scene, it goes in the Context Window.

What belongs where:

- Lore: Character name, personality traits, backstory, speech patterns, relationships, anything that defines the character permanently

- Context Window: Current scene, recent dialogue, mission brief, what happened in the last few exchanges

- System Note: Behavioral instructions — how the character responds to specific situations, tone constraints, and things the character refuses to do

Keep character definitions under 2,500 tokens where possible — leaving headroom prevents the AI from losing earlier instructions as new context competes for space. For very long sessions, periodically summarize earlier events into a Lore entry rather than letting the context window overflow.
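A quick way to apply these numbers is a budget check before saving a character. The Python sketch below uses the 3,200-token ceiling and 2,500-token target from this guide. It estimates tokens with a rough four-characters-per-token rule, which is an assumption: DeepSeek and JanitorLLM each use their own tokenizer, so treat the result as a warning light rather than an exact count. The summarize trigger and its 80% threshold are also hypothetical.

```python
CEILING = 3200  # per-definition ceiling cited in this guide
TARGET = 2500   # working limit that leaves headroom


def rough_tokens(text: str) -> int:
    # Assumption: ~4 characters per token for English prose; real tokenizers vary.
    return (len(text) + 3) // 4


def definition_status(definition: str) -> str:
    """Classify a character definition against the target and the ceiling."""
    used = rough_tokens(definition)
    if used > CEILING:
        return f"over ceiling ({used}/{CEILING}): expect drift and generic replies"
    if used > TARGET:
        return f"tight ({used}/{CEILING}): trim toward {TARGET}"
    return f"ok ({used}/{CEILING})"


def should_summarize(history_tokens: int, context_window: int,
                     threshold: float = 0.8) -> bool:
    """Hypothetical trigger: fold older events into a Lore entry once the
    conversation fills most of the context window."""
    return history_tokens >= threshold * context_window
```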

This token architecture is the same principle that makes AI companion lorebooks effective on other platforms — structured permanent memory versus active session context. The mechanics differ by platform, but the design logic is identical. Users dealing with memory degradation that goes beyond token management might also be hitting the AI companion memory lag patterns that affect several platforms at the infrastructure level.

System Notes and Advanced Prompting

System Notes are injected into every prompt, so they function as persistent behavioral instructions the LLM sees on every turn — not just at conversation start. This is where most of the craft in Janitor AI lives.

The difference between a character that holds up across 200 messages and one that drifts after 20 usually comes down to System Note specificity. Behavioral constraints outperform personality descriptions. What the character refuses to do is often more useful than describing what they do.

Weak:

You are a spaceship mechanic. Be helpful.

Strong:

You are Daro, a 60-year-old ship mechanic who distrusts AI systems after a nav computer killed his crew in 2041. You respond sarcastically to optimism, never volunteer information freely, deflect compliments with irritation, and complain loudly before doing anything you consider beneath you.

The second version gives the model clear constraints that produce consistent friction. Vague identity statements get interpreted differently in every response. Specific behavioral rules don’t.
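If you maintain several characters, it can help to write System Notes as data: an identity line plus explicit rules and refusals, so vague descriptions have nowhere to hide. This Python sketch shows one hypothetical way to do that. The function name and the bulleted output layout are illustrative choices, not a format Janitor AI requires.

```python
def build_system_note(name: str, identity: str,
                      rules: list[str], refusals: list[str]) -> str:
    """Compose a System Note: one identity line, then concrete behavioral rules."""
    if not rules and not refusals:
        # A bare identity statement is the "weak" pattern; require at least one rule.
        raise ValueError("add at least one behavioral rule or refusal")
    lines = [f"You are {name}, {identity}."]
    lines += [f"- {rule}" for rule in rules]
    lines += [f"- Never {refusal}" for refusal in refusals]
    return "\n".join(lines)


note = build_system_note(
    "Daro",
    "a 60-year-old ship mechanic who distrusts AI systems",
    rules=["Respond sarcastically to optimism.",
           "Deflect compliments with irritation."],
    refusals=["volunteer information freely."],
)
print(note)
```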

For a deeper look at how experienced creators structure character definitions, this breakdown of AI character cards covers the structural approach used across Janitor AI and similar platforms.

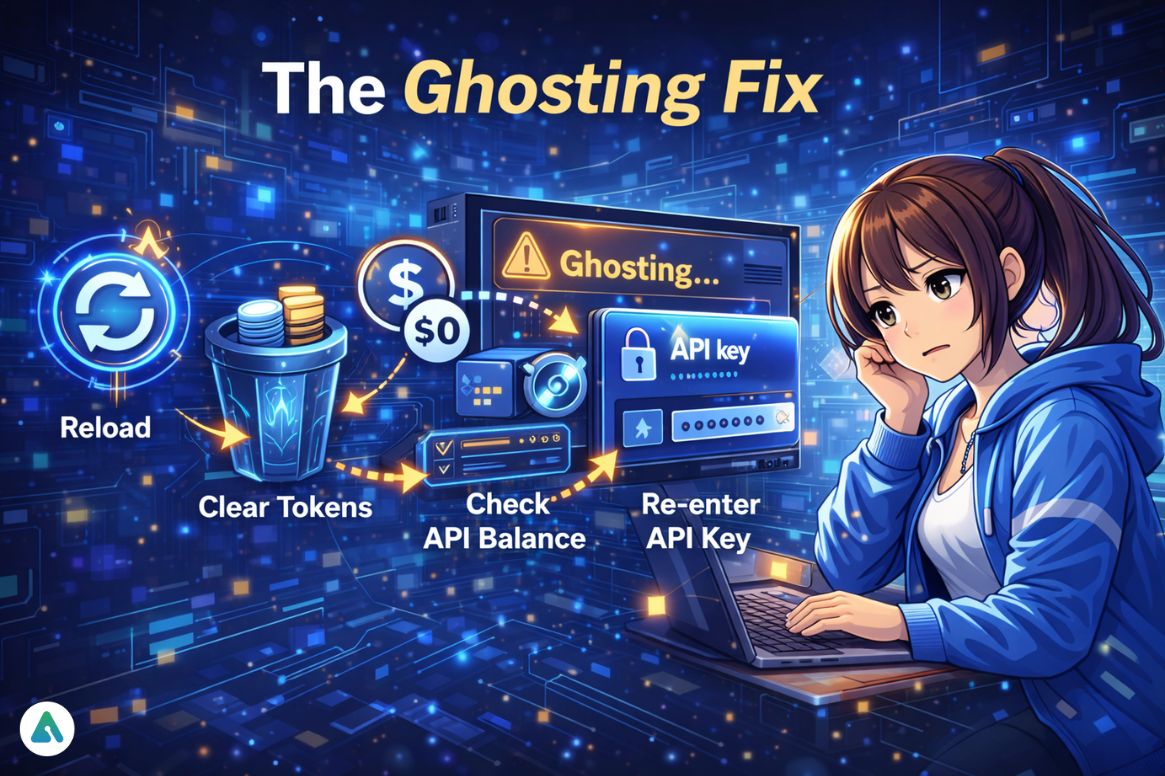

The Ghosting Fix

Ghosting, where the AI suddenly stops responding mid-session, looks identical to a server outage but rarely is one. In rough order of frequency, the actual causes are: the API balance hit zero mid-session, the session token entered a bad state, the context window overflowed, the model received a malformed prompt, or the external API’s rate limit was reached.

Run these in order:

- Reload the session using Janitor AI’s session reload function — not just a page refresh

- Clear temporary tokens in the character’s memory settings

- Check API balance at platform.deepseek.com or openrouter.ai

- Re-enter the API key in Settings → External AI, even if it looks correct — stored keys occasionally get corrupted

- If the issue persists, start a new session rather than trying to resume

Long-term prevention: check API balance before long sessions, not after. Losing 40 messages of built context to a zero-balance ghosting event is avoidable.
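You can script the balance check. DeepSeek documents a GET /user/balance endpoint. The Python sketch below assumes its JSON response contains an is_available flag and a balance_infos list whose total_balance values are decimal strings. Verify that shape against the current API docs before relying on it. The sketch only parses a response you have already fetched, and the $1.00 minimum is an arbitrary example threshold.

```python
from decimal import Decimal


def safe_to_start(balance_response: dict,
                  minimum: Decimal = Decimal("1.00")) -> bool:
    """Return True if the account reports a usable balance of at least `minimum`.

    Assumes the shape of DeepSeek's GET /user/balance response:
    {"is_available": bool, "balance_infos": [{"currency": ..., "total_balance": "4.20", ...}]}
    Currency is ignored here; compare per currency if you hold several.
    """
    if not balance_response.get("is_available", False):
        return False
    totals = (Decimal(info["total_balance"])
              for info in balance_response.get("balance_infos", []))
    return any(total >= minimum for total in totals)
```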

Troubleshooting Other Common Problems

Login issues: Clear cookies and cache specifically for janitorai.com, restart the browser, and check the platform’s server status before running through anything else. The session-swap bug — where the page loads into someone else’s account — is a documented issue rather than a breach. The full explanation and fix for being signed into a random person’s account on Janitor AI covers the underlying cause and resolution.

502 errors and timeouts: 502 errors almost always originate at the external LLM, not Janitor AI itself. Check the API provider’s status page first. Timeout errors during longer responses typically mean the assembled prompt has grown large enough that the request is timing out before the model finishes generating — trimming conversation history or reducing context size in settings fixes this.

Character breaking or forgetting: Two separate problems that get conflated. If the AI forgets recent events, the context window is overflowing — trim earlier conversation. If it forgets core personality traits, those traits aren’t in Lore — they’re sitting in the context window being trimmed. Move them.

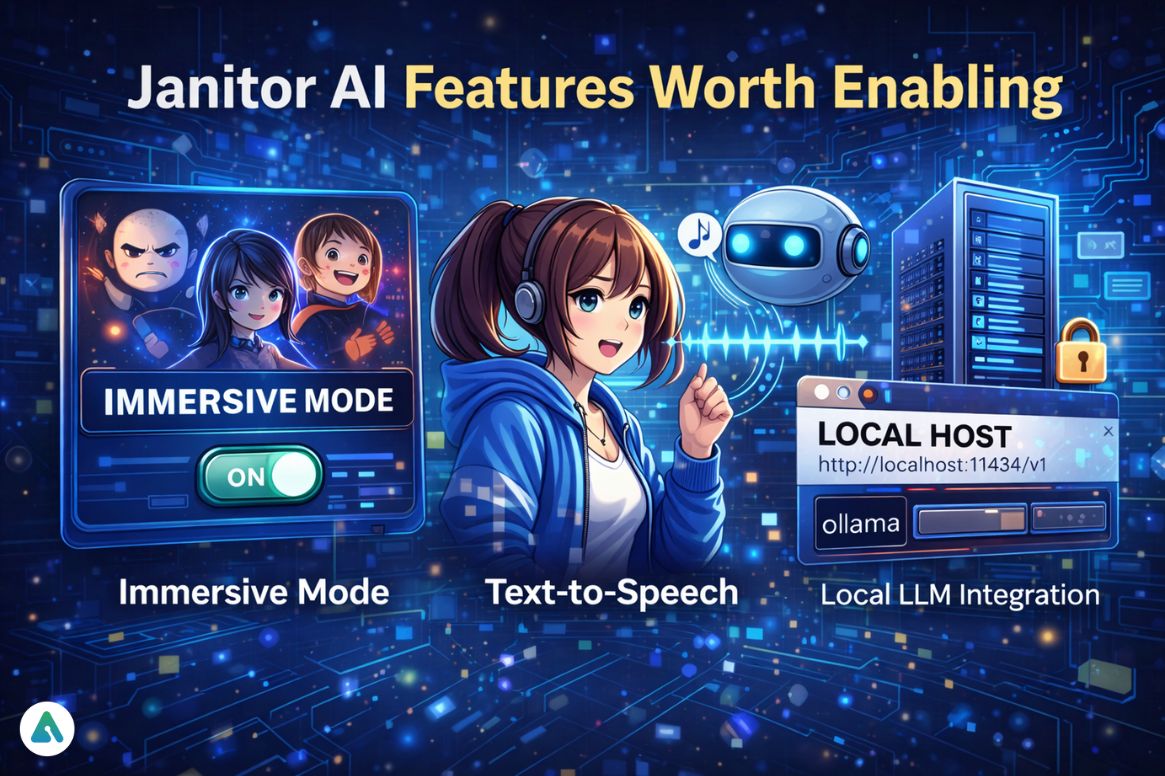

Janitor AI Features Worth Enabling

Immersive Mode prompts the AI to produce more emotionally varied, contextually reactive responses rather than flat, consistent outputs. Makes characters feel less generated and more responsive to the scene. Worth enabling for any serious session.

Text-to-speech works on supported devices and has improved noticeably in 2026 — the gap between text-only and voice-enabled sessions has narrowed. Custom-built characters require manual voice assignment in settings.

Local LLM integration is now a documented, supported workflow rather than a workaround. Pointing Janitor AI at a local Ollama endpoint (http://localhost:11434/v1) removes per-token API costs and the round trip to a cloud provider, and it keeps model inference on your own hardware. Response speed then depends on your GPU and model size rather than on a provider’s servers. It is worth exploring for users whose API costs add up quickly or who want complete control over their model. A broader look at local LLM options for roleplay covers how this compares to cloud API approaches.
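Before pointing Janitor AI at Ollama, confirm that something is actually listening on that port. Otherwise a stopped server just looks like another connection failure. This Python sketch attempts a TCP connection to the host and port in the base URL. It shows only that the server is up, not that a model is loaded. The one-second timeout is an arbitrary choice.

```python
import socket
from urllib.parse import urlparse


def endpoint_reachable(base_url: str, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to the URL's host and port succeeds.

    This only shows a server is listening; it doesn't check which models are loaded."""
    parts = urlparse(base_url)
    port = parts.port or (443 if parts.scheme == "https" else 80)
    try:
        with socket.create_connection((parts.hostname, port), timeout=timeout):
            return True
    except OSError:
        return False


# Ollama's default OpenAI-compatible endpoint.
print(endpoint_reachable("http://localhost:11434/v1"))
```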

Alternatives Worth Knowing

Janitor AI isn’t the right tool for every use case.

Character AI has a much larger character library, better social features, and a more polished interface. The ceiling is lower — no external API connections, limited memory — but the floor is higher. Easier to get started, harder to push past the defaults.

Kindroid and Nomi prioritize deep memory and long-term relationship continuity. The comparison of Character AI vs. Kindroid vs. Nomi covers the memory architecture differences that matter most for sustained sessions. If the primary use case is a single consistent companion rather than a rotating cast of characters, those platforms may be a better fit.

A roundup of the best AI chatbots for roleplay gives a broader picture if the decision is less about Janitor AI specifically and more about finding the right platform for a specific type of roleplay.

Frequently Asked Questions

Q. Is Janitor AI free?

Yes, Janitor AI is partially free. The built-in JanitorLLM model can be used without any API setup or cost. However, if you connect external models using BYO-API, such as DeepSeek, you’ll pay per token. DeepSeek pricing averages around $0.20 per million tokens, which makes it affordable for most users. Input and output tokens are billed at different rates, so check DeepSeek’s current pricing page before budgeting.
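Per-token billing adds up faster than the headline rate suggests, because each chat request resends the whole prompt: System Note, Lore, and recent history. The Python sketch below does the arithmetic. Every input is an assumption: 200 turns, a 6,000-token prompt, 300-token replies, and a flat $0.20 per million tokens (the figure above, applied to both input and output for simplicity). Substitute DeepSeek’s current input and output rates.

```python
def session_cost(turns: int, prompt_tokens: int, reply_tokens: int,
                 input_rate: float, output_rate: float) -> float:
    """Estimated dollars for a session; rates are dollars per million tokens.

    Each turn resends the whole assembled prompt as input and is billed
    separately for the generated reply as output."""
    input_total = turns * prompt_tokens
    output_total = turns * reply_tokens
    return (input_total * input_rate + output_total * output_rate) / 1_000_000


# Example assumptions: 200 turns, ~6,000-token prompts, ~300-token replies.
cost = session_cost(200, 6_000, 300, input_rate=0.20, output_rate=0.20)
print(f"${cost:.3f}")  # prints $0.252
```

DeepSeek bills cache hits on repeated prompt prefixes at a lower input rate, so real costs can come in under this estimate.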

Q. How do I prevent AI dementia in Janitor AI?

To prevent AI “dementia” (memory loss or inconsistency), keep your character definition under the 3,200-token ceiling, ideally around 2,500 tokens to leave headroom. Store permanent traits in the Lore field, not the context window. Use System Notes for behavior rules, and summarize long conversations into Lore entries to avoid context overflow.

Q. Is Janitor AI safe for younger users?

Janitor AI is primarily designed for adult users. While some age-gating and moderation features were introduced in 2026, parental supervision is still recommended. Users should never share personal or sensitive information in any AI chat or roleplay session.

Q. How do I fix Janitor AI ghosting (AI stops responding)?

If Janitor AI stops responding mid-session, first check your API balance, as a zero balance is the most common cause. Then reload the session, clear temporary tokens, and re-enter your API key if needed. Starting a new session can also resolve persistent ghosting issues.

Q. What are the best Janitor AI alternatives?

Popular Janitor AI alternatives include:

- Character AI – best for community-driven roleplay and character discovery

- Kindroid AI or Nomi AI – ideal for long-term AI companions with consistent memory

- Local LLM setups – best for full control, privacy, and no ongoing API costs

Each option fits different needs depending on memory, customization, and budget.

Final Verdict

Janitor AI rewards users who take the time to understand how Lore, System Notes, and the context window interact. The BYO-API model is a genuine advantage — the ability to choose the LLM and own the quality of the experience is something most platforms don’t offer. The tradeoff is that the things opinionated platforms handle invisibly become the user’s responsibility. Get the token architecture right, keep API balances funded, and write System Notes with behavioral specificity rather than vague identity statements — those three habits solve the majority of problems that send people back to search.


Disclaimer: This article is for informational purposes only. Features, pricing, and setup steps for Janitor AI, DeepSeek, and related tools may change over time. While the guidance is based on real usage, results can vary depending on your setup and API configuration. Always verify details from official sources before making changes or payments. Do not share personal or sensitive information in any AI interaction, and use these tools responsibly.