Janitor AI still looks like it should work.

The interface is clean. Characters are easy to create. For short conversations, it behaves. But anyone who has attempted sustained roleplay in late 2025 or 2026 knows what happens next: memory slips, personalities flatten, and scenes devolve into generic assistant responses.

Search interest in Janitor AI alternatives didn’t surge because of backlash.

It rose because users quietly left.

Not for chaos.

For platforms with real memory architecture, predictable limits, and control over context.

This guide is for post-JLLM migration users — people who already understand roleplay systems and want to know where serious storytelling, ERP, and long-form character work actually landed in 2026.

Why Janitor AI Is No Longer Viable for Long-Form Roleplay

This isn’t a debate about filters. It’s about system design.

1. JLLM’s Context Ceiling Is a Structural Dead End

Janitor AI’s internal model (commonly called JLLM) remains in beta as of early 2026. The recommended stable context length is under 1,000 tokens. Beyond that, performance degrades rapidly.

For long-form users, this makes character drift inevitable:

- Personality softening
- Dialogue collapsing into polite, neutral phrasing

This is not prompting failure. It’s an architectural limitation.
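To see why a small window causes drift no matter how good the prompt is, here is a minimal sketch of naive sliding-window truncation. It is not Janitor AI's actual code: `count_tokens` is a crude word-count stand-in for a real tokenizer, and the chat lines and the 25-token budget are made up for illustration.

```python
def count_tokens(text: str) -> int:
    # Assumption: one token per whitespace-separated word (real tokenizers differ).
    return len(text.split())


def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the newest messages that fit the budget, dropping the oldest first."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()
    return kept


# Hypothetical chat: the persona definition is the oldest entry.
chat = [
    "PERSONA: Mara is a sardonic smuggler who never apologizes.",
    "User: We dock at the station at dawn.",
    "Mara: Then keep your head down and your mouth shut.",
    "User: What about the cargo inspection?",
    "Mara: Leave the inspectors to me.",
]

window = fit_to_window(chat, max_tokens=25)
persona_survived = any(m.startswith("PERSONA") for m in window)
```

With this budget only the last three messages fit, so the persona line is the first thing to fall out. Once that happens, the model has nothing left to stay in character with.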

2. Token Expectations Changed — Janitor Didn’t

By 2026, experienced users expect:

- Rolling or layered memory
- Anchored facts that persist across days
- Emotional state continuity

Janitor AI still treats most interactions as disposable sessions. Long arcs were never its design target.
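A rough sketch of what "rolling memory with anchored facts" can mean in practice: pinned facts are always sent, and only the leftover budget goes to recent turns. The class name, fields, and word-count tokenizer below are all hypothetical and do not reflect any specific platform's implementation.

```python
from dataclasses import dataclass, field


def count_tokens(text: str) -> int:
    # Assumption: one token per whitespace-separated word.
    return len(text.split())


@dataclass
class RollingMemory:
    max_tokens: int
    anchors: list[str] = field(default_factory=list)  # facts that are never dropped
    history: list[str] = field(default_factory=list)  # ordinary turns, oldest first

    def build_prompt(self) -> list[str]:
        # Anchors are paid for first; recent turns share whatever is left.
        budget = self.max_tokens - sum(count_tokens(a) for a in self.anchors)
        recent: list[str] = []
        for turn in reversed(self.history):
            cost = count_tokens(turn)
            if cost > budget:
                break
            recent.append(turn)
            budget -= cost
        recent.reverse()
        return self.anchors + recent


memory = RollingMemory(max_tokens=20, anchors=["Mara never apologizes."])
memory.history = [
    "User: one two three four five six seven",
    "Mara: a b c d e",
    "User: x y z",
]
prompt = memory.build_prompt()
```

The oldest turn still gets dropped, but the anchored fact survives every rebuild. That is the difference between a disposable session and a character that persists.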

3. Moderation Is Probabilistic, Not Transparent

Two similar prompts can produce radically different outcomes. Characters that behaved one way yesterday may derail today without explanation.

Platforms change the rules and ghost you.

That unpredictability makes Janitor AI unsuitable for long-term creative investment.

4. Platform Risk Is No Longer Hypothetical

Account wipes, character resets, and silent policy changes have trained users not to trust Janitor AI with months of work. That’s why many migrated to platforms such as Kindroid, or went looking among Character AI alternatives, for better memory stability and continuity.

How Janitor AI Alternatives Are Evaluated (The R.A.W. Framework)

Most listicles fail because they rank platforms without explaining why they work.

This guide uses three criteria that actually matter in 2026.

R — Roleplay Depth

Can the system maintain voice, tone, and emotional continuity over hundreds of turns?

A — Autonomy & Moderation

Are limits clear and consistent, or does moderation feel random and reactive?

W — Whitelist Risk

Do chats survive updates? Can users export data? How often do rules shift without notice?

No platform wins all three. Fit matters more than hype.

Best Janitor AI Alternatives in 2026 (With Technical Specs)

| Platform | Best For | Context Window | Memory Architecture | Price (2026) |

|---|---|---|---|---|

| CrushOn AI | Low-friction Janitor replacement | ~8k–12k | Session-based | Freemium / Paid |

| DreamGen | Deep story & plot arcs | 12k | Scenario Codex | $19–$48.30/mo |

| Kindroid | Long-term character memory | Rolling | 5-Layer Cascade | ~$13.99/mo |

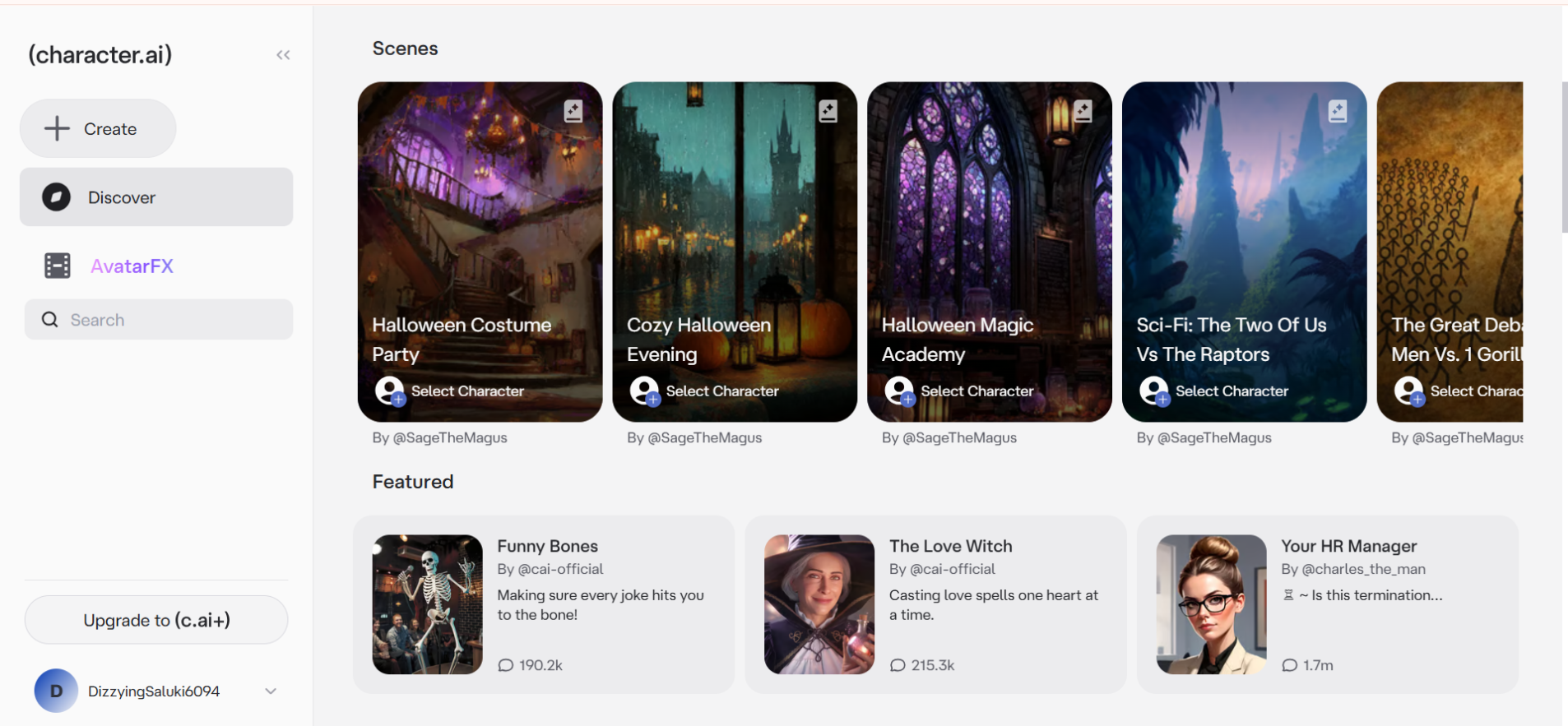

| Character AI | Stability & uptime | ~4k | Session-based | Free |

| Candy.ai | AI companionship | ~6k | Light persistent memory | Freemium |

| SillyTavern | Full control (power users) | Model-dependent | User-managed | Pay-as-you-go |

CrushOn AI: The Easiest Janitor Exit

CrushOn AI became the default landing spot for Janitor refugees.

The UX feels familiar. Moderation is less intrusive. Characters derail less often mid-scene. For casual ERP and short-to-medium arcs, it works.

The limitation is memory.

Long arcs soften unless you pay for higher tiers, and persistence remains session-focused.

If you want the least friction and minimal setup, CrushOn AI is the fastest off-ramp.

DreamGen: Built for Structured Storytelling

DreamGen does not pretend to be casual chat.

It is a narrative engine.

Its proprietary Scenario Codex anchors characters, world rules, and plot state outside the immediate conversation. The Pro tier ($48.30/mo in 2026) supports a 12,000-token context window, enabling chapter-length continuity.

Characters don’t just remember — they stay in role.

For writers and serious roleplayers, DreamGen replaces the need to wrestle chat platforms into doing novel-grade work.

Kindroid: Memory Wins the Long Game

Kindroid quietly became the memory benchmark in 2026.

Its 5-Layer Cascaded Memory System separates:

- Immediate context
- Short-term recall
- Long-term factual memory
- Emotional pattern tracking
- User preference modeling

Characters remember events, emotional beats, and relationship dynamics across days or weeks.

It may feel less explicit than Janitor AI — but continuity is unmatched.

If memory matters more than shock value, Kindroid is the strongest upgrade.
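To make the five layers concrete, here is one way such a split could be modeled as a data structure. The layer names come from the list above, but everything else (field types, the four-turn immediate window, the spill-over rule) is an assumption for illustration. Kindroid has not published its internals, and this is not them.

```python
from collections import Counter, deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class LayeredMemory:
    # Hypothetical layout: one container per layer named in the article.
    immediate: deque = field(default_factory=lambda: deque(maxlen=4))
    short_term: list = field(default_factory=list)
    facts: dict = field(default_factory=dict)
    emotions: Counter = field(default_factory=Counter)
    preferences: dict = field(default_factory=dict)

    def add_turn(self, text: str, emotion: Optional[str] = None) -> None:
        # When the immediate window is full, the oldest turn spills into short-term recall.
        if len(self.immediate) == self.immediate.maxlen:
            self.short_term.append(self.immediate[0])
        self.immediate.append(text)
        if emotion:
            self.emotions[emotion] += 1

    def dominant_emotion(self) -> Optional[str]:
        top = self.emotions.most_common(1)
        return top[0][0] if top else None


layers = LayeredMemory()
layers.facts["hometown"] = "Port Vesper"
for i in range(6):
    layers.add_turn(f"turn {i}", emotion="tense" if i < 4 else "warm")
```

The point of separating layers is that each one can age differently: recent turns roll over quickly, while facts and emotional tallies stay put until something explicitly changes them.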

Character AI: Stable, Predictable, Restricted

Character AI remains the most reliable platform in terms of uptime and polish.

Moderation is clear. Behavior is consistent. But context windows remain short, and ERP or intense emotional roleplay is still heavily constrained.

It excels at stability.

It frustrates anyone chasing depth.

Candy.ai: Companionship First, Plot Second

Candy.ai is not a storytelling platform.

It focuses on emotional presence, romance, and visual engagement. Memory exists, but it is intentionally lightweight.

Best suited for users who want consistency and connection rather than complex narrative arcs.

SillyTavern: The Power-User Endgame

SillyTavern isn’t a platform — it’s a control layer.

Users bring their own backend:

- Claude Opus 4.5 for prose and emotional nuance
- Gemini 3 Flash for cost-efficient, massive context (including caching)
- Local LLMs for privacy and zero moderation

Why power users migrate here:

- No platform risk
- Full memory control
- No “assistant-itis”
- No surprise moderation

The trade-off is complexity. APIs, token budgeting, and setup are mandatory.

But for long-term roleplay, this is the only true escape hatch.
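Token budgeting sounds abstract until you do the subtraction. The sketch below is plain arithmetic, not a SillyTavern API: the function name, the block names, and every number are hypothetical, and real token counts depend on the model's tokenizer.

```python
def history_budget(context_window: int, reserved_for_reply: int,
                   fixed_blocks: dict[str, int]) -> int:
    """Tokens left for chat history after fixed prompt blocks and the reply reserve."""
    remaining = context_window - reserved_for_reply - sum(fixed_blocks.values())
    if remaining < 0:
        raise ValueError(f"fixed blocks overflow the window by {-remaining} tokens")
    return remaining


# Hypothetical sizes for the parts of the prompt that are sent on every turn.
blocks = {"system_prompt": 400, "character_card": 1200, "lorebook": 2500}

left = history_budget(context_window=16000, reserved_for_reply=800, fixed_blocks=blocks)
# 16000 - 800 - 4100 leaves 11100 tokens for history
```

If the fixed blocks alone overflow the window, no memory trick will help. The character card or lorebook has to shrink, or the backend needs a larger context.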

Context Caching: The 2026 Cost Reality

Long context isn’t just about memory — it’s about cost.

Modern models like Claude 4.5 and Gemini 3 support context caching, allowing repeated system prompts and lore blocks to be reused without re-billing full token costs.

Platforms that expose or leverage caching dramatically reduce the expense of long-form roleplay.

If a platform doesn’t support caching, your token budget will bleed fast.
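A back-of-the-envelope sketch of why caching matters. All numbers here are assumptions chosen for illustration: a $3 per million input-token price, cached reads billed at 10% of that, a 6,000-token prefix of system prompt plus lore, and 200 new tokens per turn. It ignores cache-write surcharges, growing chat history, and output tokens, so check your provider's current pricing before trusting any figure.

```python
def session_input_cost(prefix_tokens: int, new_tokens_per_turn: int, turns: int,
                       price_per_million: float, cached_read_ratio: float = 1.0) -> float:
    """Input cost in dollars for a session that resends the same prefix every turn.

    cached_read_ratio is the fraction of the full price paid for a cached prefix;
    1.0 means no caching at all.
    """
    full_price = price_per_million / 1_000_000
    first_turn = (prefix_tokens + new_tokens_per_turn) * full_price
    later_turn = (prefix_tokens * cached_read_ratio + new_tokens_per_turn) * full_price
    return first_turn + later_turn * (turns - 1)


uncached = session_input_cost(6000, 200, turns=100, price_per_million=3.0)
cached = session_input_cost(6000, 200, turns=100, price_per_million=3.0,
                            cached_read_ratio=0.1)

print(f"uncached: ${uncached:.2f}")  # uncached: $1.86
print(f"cached:   ${cached:.2f}")    # cached:   $0.26
```

Under these assumptions the same 100-turn session costs roughly a seventh as much with caching. The gap widens as the lore block grows.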

2026 Price-to-Performance Snapshot

- Cheapest entry: Janitor AI (free / API-based)
- Best memory per dollar: Kindroid
- Best structured storytelling: DreamGen Pro
- Maximum control: SillyTavern + OpenRouter (pay-as-you-go)

Quick Migration Checklist (Post-JLLM)

Before switching platforms:

- Decide whether memory or freedom matters more
- Export character prompts where possible
- Adjust expectations: “no filter” doesn’t mean no limits

Low hassle: CrushOn AI

Continuity-focused: DreamGen or Kindroid

Total control: SillyTavern + Claude 4.5

The AI Slop Reality Check

Every platform feels incredible for the first forty-eight hours. Then the honeymoon ends.

Characters start repeating phrasing from fifty messages ago. Scenes lose their edge. Dialogue becomes polite and safe. This is the 2026 slop loop, which occurs fastest on shallow memory systems. No UI redesign can fix it — only deeper context and layered memory prevent it.

Technical approaches to reducing memory lag and maintaining continuity are covered in How to Fix AI Companion Memory Lag.
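If you want a number instead of a gut feeling, repeated phrasing is easy to measure. Here is a minimal sketch that scores how many of a reply's three-word sequences already appeared in earlier replies. The function names and sample lines are hypothetical, and trigram overlap is only one crude proxy for slop, not an established metric.

```python
def ngrams(text: str, n: int = 3) -> set[tuple[str, ...]]:
    # Lowercased word n-grams; punctuation stays attached to words for simplicity.
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def repetition_score(reply: str, earlier: list[str], n: int = 3) -> float:
    """Fraction of the reply's n-grams that already appeared in earlier replies."""
    current = ngrams(reply, n)
    if not current:
        return 0.0
    seen: set[tuple[str, ...]] = set()
    for old in earlier:
        seen |= ngrams(old, n)
    return len(current & seen) / len(current)


earlier_replies = ["Her eyes sparkle with mischief as she leans closer."]
stale = repetition_score("Her eyes sparkle with mischief as she smiles.", earlier_replies)
fresh = repetition_score("The door slams and the lights go out.", earlier_replies)
```

A score creeping toward 1.0 across a long chat is the slop loop showing up in the data. A UI cannot change that number, only more context or better memory can.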

FAQs

Q. What is the best Janitor AI alternative in 2026?

There isn’t one single “best” replacement — it depends on why you’re leaving Janitor AI. If you’re tired of characters forgetting who they are, Kindroid is the strongest option for long-term memory. If you want to write full story arcs without constant resets, DreamGen is where most serious writers end up. And if you’re done trusting platforms altogether, SillyTavern paired with models like Claude 4.5 or Gemini 3 is the clean exit.

Q. Why does Janitor AI lose character personality over time?

Because it simply runs out of memory. Janitor AI’s internal model (often called JLLM) starts breaking down once conversations get long. In 2026, it’s still only stable for very short contexts. After that, emotional callbacks disappear, personalities blur, and characters slide into that familiar “polite assistant” tone.

That drift isn’t your fault — it’s baked into the system.

Q. Which Janitor AI alternative actually remembers past conversations?

Right now, Kindroid does this better than anyone else. Its memory system doesn’t just store recent messages. It keeps layered memories — facts, emotional patterns, and relationship history — so characters remember what happened yesterday, last week, or even earlier. If continuity matters to you, this is the platform that feels the least disposable.

Q. Are there any Janitor AI alternatives without filters?

Every platform has limits — anyone promising “no filters at all” is lying or won’t last long. That said, some platforms are far more predictable. CrushOn AI and DreamGen tend to be consistent about boundaries instead of randomly interrupting scenes. If you want full control with zero surprise moderation, SillyTavern (especially with local or API-based models) is the closest thing to real freedom in 2026.

Q. What do people mean by “AI slop” in roleplay chats?

It’s that moment when the magic dies. The character starts repeating your own words. Responses feel smoother, safer, and emptier. Emotional scenes turn into polite conversations. That’s “AI slop,” and it happens fastest on platforms with shallow memory. The fix isn’t a new UI or hotter marketing. It’s deeper context and better memory — otherwise every platform eventually feels the same.

Final Verdict

Janitor AI didn’t implode.

It stagnated.

The best Janitor AI alternatives in 2026 aren’t louder or edgier. They’re better engineered — with real memory, transparent limits, and fewer surprises.

Choose the tool that matches how you roleplay, not how platforms market themselves.

That’s how you stop migrating every month.

Related: How to Talk OOC in Character AI: What Actually Works (and Why It Often Fails)

Disclaimer: This article is for informational purposes only and is not sponsored or endorsed by any platform mentioned. The author is not affiliated with Janitor AI, CrushOn AI, DreamGen, Kindroid, Character AI, Candy.ai, SillyTavern, or any related services. Features, pricing, and policies may change over time, so please verify the latest details directly from official sources before making decisions. This content is not financial, legal, or medical advice.