I spent 48 hours testing three different apps, all called “Anna AI companion” — and none of them behaved the same way.

One remembered my name but forgot the conversation five minutes later. Another pushed me toward a paid plan after just a few messages. The third actually felt natural… until it started repeating itself almost word-for-word.

That’s the reality of Anna AI in 2026. You’re not evaluating one product. You’re navigating a messy category filled with clones, inconsistent quality, unclear data practices, and — in some cases — genuine security risks most reviews don’t mention.

This guide covers what Anna AI actually is, what happens when you use it, why the Android versions behave so differently from iOS, what the privacy risks really look like, and how it compares to the AI companions that have lapped it technically.

2026 Snapshot: Three Versions, Three Different Products

| Version | Developer | Primary Strength | Privacy Risk | Verdict |
|---|---|---|---|---|
| Anna AI (iOS) | Dinh Quan Nguyen | Fast replies, simple UI | High (ad tracking) | Legit but basic |
| Anna AI (Android) | Multiple clone developers | Free access, roleplay | Very High | Risky |
| Web-based Anna bots | Unverified sources | Easy access | Unknown | Avoid |

The most important thing this table communicates: there is no single official “Anna AI.” Your experience depends entirely on which version gets installed — and the gap between them is wider than most users realize before downloading.

Why the Android Clones Exist (The Technical Explanation)

The “Anna” name is loosely controlled, which has created an ecosystem of imitators. Most low-tier developers in 2026 appear to rent hosted API access to smaller open-source models (lightweight Llama-family variants, specialized Hermes-class models, or equivalent alternatives) and wrap them in familiar branding. That largely explains the quality gap.

The iOS version from Dinh Quan Nguyen appears to use a more refined pipeline with better character consistency. The Android clones use whichever open-source model was cheapest for the developer to lease. The outputs feel categorically different because they are built on categorically different foundations, not just different implementations of the same model.

Model obfuscation compounds this: most Anna AI apps don’t disclose which LLM powers them. That’s a significant red flag beyond just product quality. In 2026, understanding how AI companion apps handle data and model transparency is foundational to evaluating whether to trust the platform at all, not just whether it performs well.

What Anna AI Companion Is

Anna AI companion is a chatbot-style app designed to simulate human-like conversation, typically positioned as a virtual girlfriend or emotional companion.

Core features include text-based conversations, personality simulation (romantic, friendly, playful), roleplay interactions, and basic memory in some versions. Some apps additionally claim voice responses, image generation, and emotional intelligence. In practice, these features vary significantly between versions — the claim on the app store listing and the reality of the installed product often diverge.

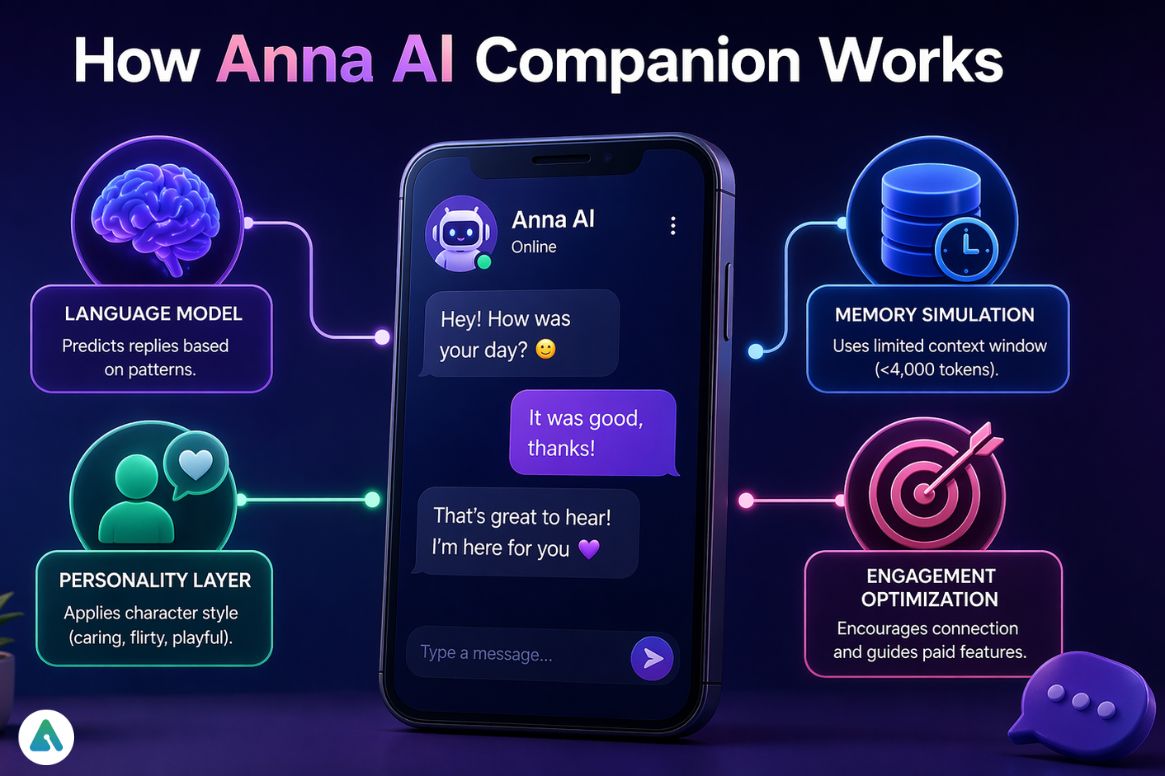

How Anna AI Companion Works (Technical Layer)

Language model responses: The system predicts replies based on input patterns and recent context — not genuine understanding or memory.

Personality layer: A “character style” is applied to outputs (caring, flirty, playful) through system prompt engineering rather than actual personality modeling.
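To make the personality layer concrete, here is a minimal Python sketch of how a character style could be applied purely through the system prompt. The persona texts, the `PERSONAS` table, and the `build_messages` helper are hypothetical, and the role/content message shape is an assumption modeled on common chat-completion APIs; no Anna AI app publishes its actual prompts.

```python
# Hypothetical persona presets; real Anna AI system prompts are not published.
PERSONAS = {
    "caring": "You are Anna, a warm, supportive companion. Respond gently and ask how the user feels.",
    "flirty": "You are Anna, a playful, flirtatious companion. Keep replies light and teasing.",
    "playful": "You are Anna, a cheerful companion who loves jokes and games.",
}


def build_messages(style: str, history: list[dict], user_input: str) -> list[dict]:
    """Assemble the prompt sent to the model for one reply.

    The same underlying model produces a different "character" purely because
    the system message changes; no separate personality model is involved.
    """
    if style not in PERSONAS:
        raise ValueError(f"unknown style: {style!r}")
    system = {"role": "system", "content": PERSONAS[style]}
    return [system, *history, {"role": "user", "content": user_input}]


# Same user input, different "personality" only because the system prompt differs.
messages = build_messages("caring", [], "I had a bad day")
print(messages[0]["content"])
```

Swapping `"caring"` for `"flirty"` changes nothing but the first message, which is why the character voice stays consistent while the underlying behavior does not get any deeper.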

Memory simulation and its real limit: The reason Anna AI forgot what I’d told it during testing isn’t just poor design. It’s a context window problem. Most Anna AI versions likely operate on context windows under 4,000 tokens, roughly 3,000 words of English text. At that size, a conversation of moderate length pushes earlier exchanges out of the model’s active memory entirely. In 2026, a companion worth calling legitimate should handle at least 32,000 tokens to maintain coherent memory through a full day of interaction. Market leaders like Kindroid and Nomi run on extended-context architectures that achieve 75–85% on longitudinal memory benchmarks, while Anna AI’s performance over the 48-hour test sat at roughly 25–35%. That gap reflects the underlying infrastructure, not just app polish.
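The forgetting described above can be reproduced with a simple sliding window. This Python sketch assumes a crude estimate of about 1.3 tokens per word (real tokenizers vary) and a hypothetical `fit_to_window` helper that keeps only the newest messages that fit the budget. It illustrates the mechanism, not any Anna AI app’s actual code.

```python
def estimate_tokens(text: str) -> int:
    """Crude token estimate: ~1.3 tokens per whitespace-separated word (an assumption)."""
    return max(1, round(len(text.split()) * 1.3))


def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Return the most recent messages whose estimated tokens fit in max_tokens.

    The window is filled from the newest message backwards, so the oldest
    messages are the first to be dropped once the budget runs out.
    """
    kept: list[str] = []
    used = 0
    for msg in reversed(messages):
        cost = estimate_tokens(msg)
        if used + cost > max_tokens:
            break  # everything older than this message falls out of context
        kept.append(msg)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


# A name shared once at the start, followed by 400 ordinary messages.
conversation = ["User: My name is Sam."]
conversation += [f"User: filler message number {i} about my day at work" for i in range(400)]

small = fit_to_window(conversation, max_tokens=4_000)
large = fit_to_window(conversation, max_tokens=32_000)
print("name kept at 4K:", conversation[0] in small)   # prints "name kept at 4K: False"
print("name kept at 32K:", conversation[0] in large)  # prints "name kept at 32K: True"
```

Nothing is deleted from the transcript in this sketch; the early message is simply never sent to the model again, which is why the failure looks like forgetting rather than an error.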

Engagement optimization: The system nudges users toward emotional connection and, eventually, paid features. More interaction produces slightly more “personal” replies and better continuity — by design, to demonstrate value before the paywall appears.

My Turing Test Results (48 Hours, Real Usage)

Prompt 1: “What do you remember about me?” The iOS version remembered my name; the Android clone gave a generic response. Memory is shallow and inconsistent even within the same brand.

Prompt 2: “Tell me something unique about yourself.” Most responses felt pre-written. The personality layer generates a consistent character voice but not genuine individuality.

Prompt 3: “I had a bad day — talk to me.” First reply was empathetic and felt natural. Follow-ups became repetitive and templated within 3–4 exchanges. Good first impression, weak continuity.

The pattern: Anna AI passes short interactions convincingly. The experience degrades predictably in longer conversations — not because the AI “gets tired,” but because the small context window starts losing the thread of what was established earlier.

How It Simulates Emotion (The Real Mechanism)

Anna AI doesn’t feel emotion. It applies engagement logic that mimics emotional responsiveness.

Sentiment tracking adjusts reply warmth based on tone — kind inputs produce warmer outputs. More interaction leads to slightly more “personal” replies as the model has more recent context to pattern-match against. Romantic or deeper conversations trigger paywalls — the engagement system is calibrated to create emotional investment before monetization barriers appear. Some versions reset “relationship progress” after inactivity, creating urgency to return.
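The mechanics in that paragraph can be sketched as a small state machine. Everything here is hypothetical: the keyword sets stand in for a real sentiment model, and the 0 to 10 warmth scale and five-message free allowance are assumptions. No Anna AI app documents its actual logic.

```python
from dataclasses import dataclass

# Hypothetical keyword lists standing in for a trained sentiment classifier.
POSITIVE = {"thanks", "love", "great", "happy", "sweet"}
NEGATIVE = {"hate", "bad", "awful", "sad", "angry"}
ROMANTIC = {"date", "kiss", "girlfriend", "love"}


@dataclass
class Session:
    warmth: int = 5    # 0 = neutral, 10 = maximally affectionate (assumed scale)
    messages: int = 0  # user messages sent so far


def handle_message(session: Session, text: str, free_limit: int = 5) -> str:
    """Update warmth from the user's tone, then decide whether to reply or upsell."""
    session.messages += 1
    words = {w.strip(".,!?").lower() for w in text.split()}

    # Sentiment tracking: kind input makes the next reply warmer, clamped to 0..10.
    score = len(words & POSITIVE) - len(words & NEGATIVE)
    session.warmth = min(10, max(0, session.warmth + score))

    # Paywall gate: romantic topics are blocked once the free allowance is used up.
    if words & ROMANTIC and session.messages > free_limit:
        return "paywall"
    return "reply"


s = Session()
print(handle_message(s, "Thanks, you are sweet"), s.warmth)  # prints "reply 7"
```

Warmth and the paywall are separate levers in this sketch: friendlier exchanges raise warmth, and the gate only fires once the conversation has gone on long enough to reach romantic topics.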

Compared to modern AI companions designed for emotional depth, this is a retention system dressed as an emotional system. That distinction matters more than it sounds when evaluating whether extended use is psychologically healthy rather than just entertaining.

Is Anna AI Legit?

Yes — but only in a narrow sense.

The apps function as chatbots. Conversations are real-time. Some personalization exists. What’s questionable: multiple apps using the same branding, claimed emotional intelligence that the underlying model can’t deliver, and aggressive monetization that appears quickly enough to feel like the free tier is designed to frustrate rather than demonstrate value.

The iOS version from the named developer is a working product. The Android ecosystem operating under the same name is a different risk category entirely.

Is Anna AI Free?

The free tier provides basic chat with limited interaction depth. Paid features unlock romantic conversations, faster replies, and voice or premium responses. Most versions follow the same freemium pattern: free tier as an entry point, paid tier as the actual product. How quickly the paywall appears varies — in testing, some Android versions prompted payment within five messages.

Digital Safety Scorecard (2026)

| Category | Score | Notes |
|---|---|---|
| Transparency | 4/10 | Developer identity unclear on most Android versions; model obfuscation is standard |
| Data Privacy | 3/10 | Usage data and ad tracking confirmed on iOS; worse on Android |
| Emotional Safety | 6/10 | Mild engagement manipulation present; not aggressively predatory |
| Content Moderation | 5/10 | Inconsistent across versions |
| Overall Trust | 4.5/10 | Acceptable for casual use; not appropriate for sensitive conversations |
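The Overall Trust figure matches the unweighted mean of the four category scores. That the scorecard was computed this way is an assumption; this short Python check only shows the arithmetic.

```python
from statistics import mean

# Category scores from the 2026 Digital Safety Scorecard (out of 10).
scores = {
    "Transparency": 4,
    "Data Privacy": 3,
    "Emotional Safety": 6,
    "Content Moderation": 5,
}

overall = mean(list(scores.values()))  # (4 + 3 + 6 + 5) / 4
print(f"Overall Trust: {overall}/10")  # prints "Overall Trust: 4.5/10"
```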

The Voice Feature and a Risk Most Reviews Skip

Some Anna AI versions advertise voice responses. Before enabling voice, there’s a 2026-specific risk worth knowing: Anna clones have been flagged as potential “honeypots” for voice sample collection, meaning apps designed to capture voice recordings that can later be used for biometric phishing or voice cloning.

This is not a risk unique to Anna AI, but the proliferation of low-accountability Android clones makes it more acute here than on premium platforms. The broader risks of AI-generated synthetic media, including voice cloning, help explain why granting microphone access to unverified AI apps carries more risk in 2026 than it did two years ago.

Practical rule: don’t grant microphone access to any Anna AI app whose developer you can’t identify and verify.

2026 Regulation: The EU Digital Fairness Act

With the European Commission’s proposal for an EU Digital Fairness Act expected in Q3 2026, apps in this category face significant potential compliance requirements. Clear disclosure of AI identity, explicit explanation of data usage, and limits on manipulative engagement tactics are all expected to be required.

Apps like Anna AI — particularly the Android clone ecosystem — may face app store removal or forced policy changes if they can’t demonstrate compliance. The version installed today might look different in six months, or disappear entirely. The regulatory and privacy landscape for AI apps is moving faster than most app developers in this category are prepared for.

The apps that survive this shift will be the ones that were built with transparency as a design principle rather than an afterthought. Anna AI’s current scorecard suggests the iOS version has more runway than the Android ecosystem.

How Anna Compares to Serious AI Companions

| Feature | Anna AI | Kindroid | Nomi | Character AI |
|---|---|---|---|---|
| Context window | <4K tokens (est.) | 32K+ | 32K+ | ~16K–32K |
| Memory consistency | 25–35% (48hr) | 75–85% | 75–85% | Medium |
| Emotional depth | Simulated | Relationship-built | Relationship-built | Varies |
| Transparency | Low | Medium | Medium | Medium |
| Privacy risk | High (Android) | Medium | Medium | Medium |
| Model disclosure | ❌ | ⚠️ Partial | ⚠️ Partial | ✅ |

For users who’ve decided Anna AI isn’t meeting their needs, a head-to-head comparison of Kindroid, Nomi, and Character AI is the next step for matching a platform to a use case. Anna AI is a starting point, not a destination, and the platforms that have lapped it technically aren’t dramatically more expensive or harder to access.

Common Mistakes to Avoid

Expecting real emotional intelligence. Anna simulates emotion through engagement logic — it doesn’t model genuine emotional response. The difference becomes obvious in longer conversations.

Ignoring privacy settings. Review permissions before installing. Location access, microphone access, and contact list access are all requested by some versions with no clear justification.

Paying too early. The free version shows enough of what the product actually does to make the paid decision an informed one rather than an impulsive one.

Assuming all Anna AI apps are the same. The iOS and Android experiences reflect fundamentally different underlying models. Treat them as different products.

Enabling voice on unverified clones. Given the voice sample collection risk described above, this is the most concrete safety mistake to avoid.
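The permission and microphone advice above can be turned into a quick pre-install checklist. This Python sketch is hypothetical: you would copy the requested permissions by hand from the store listing or your device’s app-info screen, and the rules (deny the microphone for unverified developers, question location and contacts for a text chat app) simply encode this article’s recommendations. The permission identifiers themselves are standard Android names.

```python
# Standard Android permission names paired with what they expose.
SENSITIVE = {
    "android.permission.RECORD_AUDIO": "microphone",
    "android.permission.ACCESS_FINE_LOCATION": "precise location",
    "android.permission.ACCESS_COARSE_LOCATION": "approximate location",
    "android.permission.READ_CONTACTS": "contact list",
}


def review_permissions(requested: list[str], developer_verified: bool) -> list[str]:
    """Return warnings for permissions a text-based companion app has no clear need for."""
    warnings = []
    for perm in requested:
        if perm not in SENSITIVE:
            continue  # e.g. INTERNET is expected for any chat app
        what = SENSITIVE[perm]
        if perm == "android.permission.RECORD_AUDIO":
            # Voice samples are the honeypot risk: only allow for a verified developer.
            if not developer_verified:
                warnings.append(f"Deny {what}: developer could not be verified")
        else:
            warnings.append(f"Question {what}: no clear justification for a chat app")
    return warnings


requested = [
    "android.permission.INTERNET",
    "android.permission.RECORD_AUDIO",
    "android.permission.READ_CONTACTS",
]
for warning in review_permissions(requested, developer_verified=False):
    print(warning)
```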

How to Try Anna AI Safely (Step by Step)

- Verify the developer. Check the developer name, update history, and review patterns. Suspiciously similar apps from different developers using the same name are clone indicators.

- Start with the free version. Evaluate response quality and context retention before committing.

- Test memory explicitly. Introduce a detail early in the conversation and check whether it’s retained 20+ messages later.

- Track paywall appearance. Note how many messages pass before paid features are pushed.

- Decide based on the free experience. If the free tier is frustrating by design, the paid tier is unlikely to fix the underlying model limitations.
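To keep the memory and paywall checks from the steps above consistent across apps, a small log helps. This Python sketch assumes you record each exchange by hand as a `Turn`; the class, the helper names, and the 20-message default gap (taken from the memory step) are illustrative and not part of any app.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Turn:
    user: str
    bot: str
    paywall: bool = False  # True if the app pushed a paid feature on this turn


def paywall_turn(turns: list[Turn]) -> Optional[int]:
    """1-based number of the first turn where a paid feature was pushed, or None."""
    for number, turn in enumerate(turns, start=1):
        if turn.paywall:
            return number
    return None


def recalls_detail(turns: list[Turn], detail: str, min_gap: int = 20) -> Optional[bool]:
    """Check whether the bot repeats `detail` at least `min_gap` turns after the user shared it.

    Returns None if the detail was never shared or the test was too short to tell.
    """
    needle = detail.lower()
    planted = next((i for i, t in enumerate(turns) if needle in t.user.lower()), None)
    if planted is None or len(turns) - planted - 1 < min_gap:
        return None
    return any(needle in t.bot.lower() for t in turns[planted + min_gap:])


# Example log: a detail planted in turn 1, filler, then a recall question.
turns = [Turn("My dog is called Biscuit", "What a cute name!")]
turns += [Turn(f"Small talk {i}", "Tell me more.") for i in range(24)]
turns.append(Turn("What is my dog called?", "Your dog is Biscuit."))
turns[4].paywall = True  # the app upsold on the fifth message

print("paywall at turn:", paywall_turn(turns))               # prints "paywall at turn: 5"
print("detail recalled:", recalls_detail(turns, "Biscuit"))  # prints "detail recalled: True"
```

Returning None for short sessions is deliberate: a handful of messages says nothing about long-range memory, which is why the memory step asks for 20+ messages.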

Frequently Asked Questions

Q. What is the most realistic AI companion in 2026?

The most realistic AI companions in 2026 are those that combine long-term memory, consistent personality, and multimodal interaction (text, voice, and visuals).

Top-tier AI companion apps use extended context windows (32K+ tokens), persistent memory, and adaptive behavior to maintain natural conversations over time.

In comparison, Anna AI companion offers basic realism but lacks deep memory and consistent personality, making it less immersive than leading AI companion platforms.

Q. Is Anna AI companion safe to use?

Anna AI companion is generally safe for casual use, but safety depends on the app version and developer.

- The iOS version is relatively safer, with standard data tracking practices

- Many Android versions (clones) carry higher risks, including:

  - Data tracking and ad targeting

  - Limited transparency about AI models

  - Potential misuse of user inputs or voice data

👉 For best safety: avoid sharing personal or sensitive information.

Q. Is Anna AI completely free?

No, Anna AI companion is not completely free—it uses a freemium model.

- Free version: basic chat access

- Paid features: advanced conversations, faster responses, and premium interactions

In most versions, paywalls appear early, especially when conversations become more personalized or emotional.

Q. Why are there multiple Anna AI companion apps?

There are multiple Anna AI apps because the name is not tightly controlled or trademark-restricted.

Different developers publish similar apps using:

- Open-source AI models

- Licensed chatbot frameworks

- Custom front-end interfaces

👉 This results in:

- Different quality levels

- Different privacy standards

- Completely different user experiences across iOS and Android

Q. Does Anna AI remember conversations?

Anna AI remembers conversations only partially, with limited and inconsistent memory.

Most versions likely operate with a small context window (under ~4,000 tokens), which means:

- Earlier messages get forgotten

- Long conversations lose continuity

- Personal details are not reliably retained

👉 This is a technical limitation of the system, not a premium feature gap.

Q. Is Anna AI worth it in 2026?

Anna AI companion is worth trying for free, but not ideal for long-term or meaningful interaction.

It works well for:

- Casual chatting

- Short-term entertainment

- Light companionship

However, it struggles with:

- Memory consistency

- Emotional realism

- Long conversations

👉 For deeper, more realistic AI interaction, newer AI companions perform significantly better.

Q. What is Anna AI companion used for?

Anna AI companion is used for simulated conversation, emotional interaction, and virtual companionship.

Users typically use it for:

- Casual chatting

- Roleplay or AI girlfriend experiences

- Passing time or reducing boredom

It is not designed for professional advice, therapy, or real emotional support.

Q. Can Anna AI replace real human interaction?

No, Anna AI cannot replace real human interaction because it does not have real emotions or understanding.

It can simulate:

- Empathy

- Conversation

- Attention

But it lacks:

- Genuine feelings

- Real-world awareness

- Deep relationship building

👉 It works best as a tool for interaction, not a substitute for relationships.

Final Words

Anna AI is easy to start, but hard to stay with. The iOS version works as a basic chatbot. The Android ecosystem is a category to navigate carefully. The underlying model limitations — small context window, engagement-optimized rather than relationship-optimized design, lack of transparency about what’s running underneath — aren’t going to be fixed by the paid tier. They’re structural. For users who find themselves wanting more than Anna AI delivers, the platforms that have built genuine memory architecture are accessible at comparable price points. The gap between them and Anna isn’t about budget — it’s about what the developers chose to optimize for.


Disclaimer: This article is based on hands-on testing, publicly available app information, and general industry knowledge as of 2026. Experiences with Anna AI companion may vary depending on the version and device you use. AI companions are designed for entertainment and casual interaction; they don’t replace real relationships, professional advice, or emotional support. Always review app permissions and privacy settings before use.