Every day, millions of people type deeply personal, strategic, or sensitive information into AI companions:

- Business ideas
- Legal hypotheticals
- Mental health struggles
- Private conversations they’d never share publicly

And yet, almost no one stops to ask:

Who is actually watching your AI prompts?

In 2025, AI companions are no longer simple chat tools. They are data processors, pattern learners, and—in some cases—training pipelines. While companies advertise privacy, the reality is more nuanced: prompts can be stored, reviewed, reused, anonymized, retained, or flagged, depending on the platform, your settings, and your jurisdiction.

This guide answers the core question directly: Who can see your prompts, how long they’re stored, and which AI companions are safest in 2025, according to the latest AI Companion Privacy Rankings.

You’ll get:

- A clear ranking of AI companions by privacy
- A breakdown of prompt storage, human review, and training reuse
- A proprietary Prompt Exposure Risk Framework™
- Practical guidance for protecting your prompt privacy
- Verified references to platform policies and regulations

If you use AI for work, creativity, or personal reflection, this guide is essential reading.

Why AI Privacy Became a Critical Concern: A 2025 Case Study

By mid-2025, millions of users were interacting with AI companions daily—sharing everything from business strategies and creative ideas to personal reflections. Yet, few paused to consider a key question: Who actually sees these prompts?

Several real-world events drove this concern into the mainstream:

- Human Review Disclosures: Companies began disclosing that human moderators occasionally read flagged conversations, raising alarms about potential exposure.
- Regulatory Pressure: The EU AI Act and emerging U.S. state laws started requiring transparency, opt-outs, and data protection audits.
- Data Leakage Incidents: Publicized cases of AI prompts inadvertently appearing in training datasets or outputs made headlines.
- Expanding Use Cases: AI companions were no longer just productivity tools. They were used for mental health, coaching, and personal relationships, raising the stakes for privacy.

The result? Privacy was no longer a niche feature. By 2025, it became a critical factor influencing which AI users trusted—and ultimately adopted.

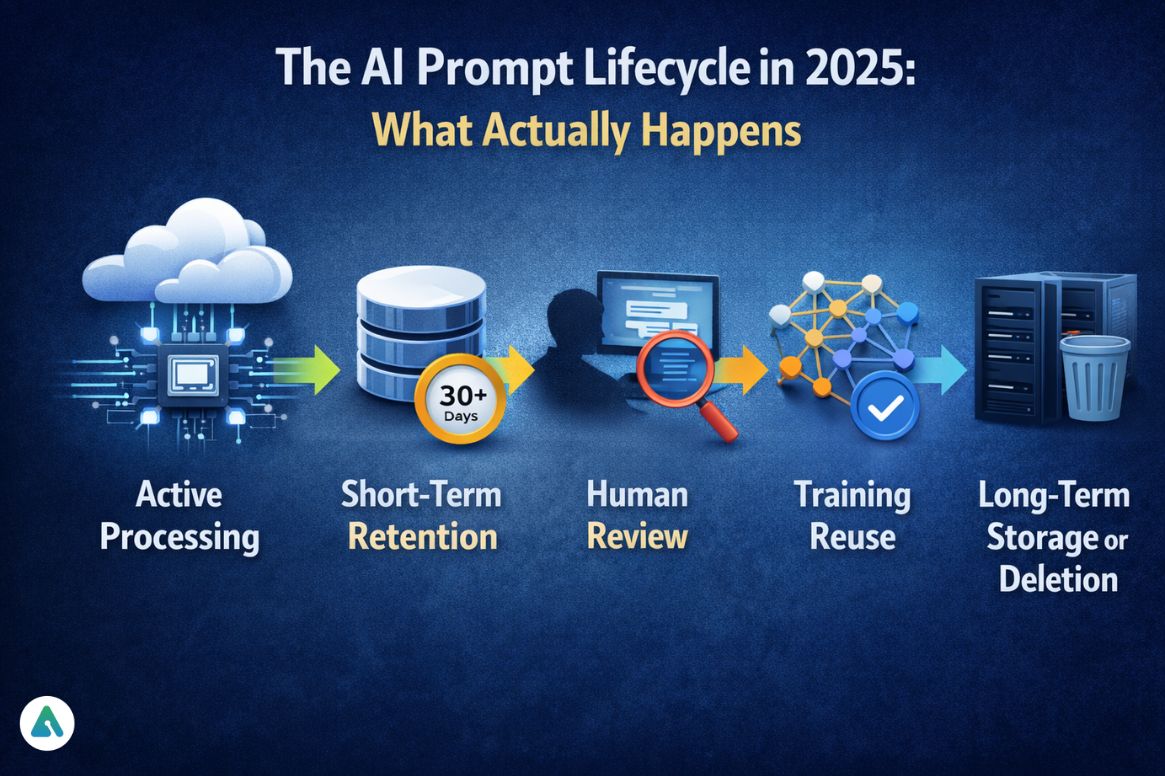

The AI Prompt Lifecycle in 2025: What Actually Happens

Most users assume AI prompts disappear instantly. They don’t. Here’s the realistic lifecycle of an AI prompt:

- Active Processing
  - Cloud-based models
  - Local inference (rare, but growing)
  - Temporary or persistent memory systems
- Short-Term Retention
  - Stored for abuse detection, debugging, safety auditing, and system improvement
  - Retention periods: 0 seconds → 30+ days
- Human Review (Conditional)
  - Prompts may be sampled randomly or flagged
  - Reviewed in anonymized form
  - Often misunderstood and controversial
- Training Reuse (Opt-in or Default)
  - Some platforms train on user conversations by default
  - Some allow opt-out
  - Others require explicit opt-in
- Long-Term Storage or Deletion
  - Deletion does not always mean complete removal from backups or derived training data

Privacy lives in the details—not marketing copy.

Prompt Exposure Risk Framework™ (2025)

To evaluate AI privacy according to the AI Companion Privacy Rankings, we use five key risk factors:

- Retention Length: How long prompts are stored (including backups)
- Human Review Access: Whether humans can read prompts
- Training Reuse Policy: Whether prompts feed model training
- User Control: Opt-outs, deletion tools, privacy settings
- Deployment Model: Cloud-only vs local/offline inference

Risk Tiers Explained

| Tier | Meaning |
|---|---|
| Tier 1 | Minimal exposure, user-controlled, privacy-first |
| Tier 2 | Low exposure with clear opt-outs |
| Tier 3 | Moderate exposure, defaults favor the platform |
| Tier 4 | High exposure, consumer data reused or reviewed |
| Tier 5 | Maximum exposure, entertainment-first systems |
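To make the framework concrete, here is a minimal sketch of how the five risk factors could be turned into a tier. The point values, the cut-offs, and the names `PlatformProfile`, `exposure_points`, and `risk_tier` are all assumptions made for illustration. They are not the scoring behind the rankings in this guide.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformProfile:
    """Answers to the five risk factors for one platform (illustrative fields)."""
    retention_days: int      # Retention Length; 0 means nothing is kept
    human_review: bool       # Human Review Access
    trains_by_default: bool  # Training Reuse Policy
    user_can_opt_out: bool   # User Control
    runs_locally: bool       # Deployment Model


def exposure_points(p: PlatformProfile) -> int:
    """Add one hypothetical risk point per answer that increases exposure."""
    points = 0
    if p.retention_days > 0:
        points += 1
    if p.retention_days > 30:  # extra point for long retention
        points += 1
    if p.human_review:
        points += 1
    if p.trains_by_default:
        points += 1
    if not p.user_can_opt_out:
        points += 1
    if not p.runs_locally:
        points += 1
    return points


# Assumed cut-offs: 0 points -> Tier 1, 1-2 -> Tier 2, 3 -> Tier 3,
# 4 -> Tier 4, 5-6 -> Tier 5.
_CUTOFFS = [1, 3, 4, 5]


def risk_tier(p: PlatformProfile) -> int:
    """Map exposure points (0-6) onto tiers 1-5 using the assumed cut-offs."""
    return 1 + bisect_right(_CUTOFFS, exposure_points(p))


if __name__ == "__main__":
    offline_tool = PlatformProfile(0, False, False, True, True)
    print(risk_tier(offline_tool))
```

A profile with no retention, no human review, no training reuse, full user control, and local inference collects zero points and lands in Tier 1. Changing the cut-offs changes the tiers, so treat the output as a way to compare your own assumptions and not as a verdict on any platform.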

AI Companion Privacy Rankings 2025

Tier 1: Maximum Privacy (Lowest Prompt Exposure)

- Apple Intelligence (Private Cloud Compute)
- Local AI: Ollama, LM Studio, GPT4All

Why they rank highest:

- On-device or hardware-isolated processing
- Zero prompt retention by default
- No training reuse
- Independent security audits

Best for: Healthcare notes, legal drafts, private journaling, enterprise IP

Tier 2: Privacy-First Cloud AI

- Claude (Anthropic)
- Mistral AI (Enterprise / EU deployments)

Key characteristics:

- Short retention windows
- Training controls that vary by provider and plan: Mistral's EU deployments are opt-in, while Claude's Free, Pro, and Max plans default to training “On” with opt-out available (updated Sep 28, 2025)
- Strong EU compliance posture
- Transparent safety documentation

Best for: Professionals, writers, researchers, sensitive ideation

Tier 3: Conditional Privacy (Settings Matter)

- ChatGPT (OpenAI)
- Google Gemini

Reality check:

- Training reuse is enabled by default on consumer plans
- Temporary Chat and opt-out options exist
- Retention up to ~30 days (varies by plan)
- Enterprise plans offer stronger protection

Best for: General productivity with privacy settings configured

Tier 4: High Prompt Exposure

- Character.AI
- Replika

Why lower tier:

- Entertainment-first design
- Long-term conversation memory
- Past data extraction vulnerabilities
- Limited transparency around reuse

Best for: Fictional roleplay only — never personal or professional data

AI Companion Privacy Ranking Scores (2025)

| Platform | Tier | Privacy Score | Training Use | Human Review | Retention |
|---|---|---|---|---|---|
| Apple Intelligence | 1 | 9.8/10 | No | No | None |
| Claude | 2 | 9.2/10 | Default-on (Opt-out available, updated Sep 28, 2025) | Limited | Short |
| Mistral (EU) | 2 | 9.0/10 | Opt-in | Limited | Short |
| ChatGPT | 3 | 7.5/10 | Default-on (Opt-out available) | Yes | ~30 days |
| Gemini | 3 | 7.3/10 | Default-on (Opt-out available) | Yes | Variable |
| Character.AI | 4 | 5.9/10 | Likely | Yes | Long |
| Replika | 4 | 5.7/10 | Likely | Yes | Long |

Special Use Cases: Where AI Privacy Matters Most

- Healthcare & Mental Health: AI companions are not HIPAA-compliant; local AI or Apple PCC recommended
- Legal & Compliance: Client confidentiality may be compromised; use enterprise plans
- Business Strategy & IP: Avoid consumer defaults; assume prompts may be logged

Real-World Example: In mid-2025, startup founders noticed AI-generated strategy responses mirrored proprietary insights. No direct leak was proven, but anonymized training can still expose patterns.

Privacy isn’t about intent—it’s about exposure.

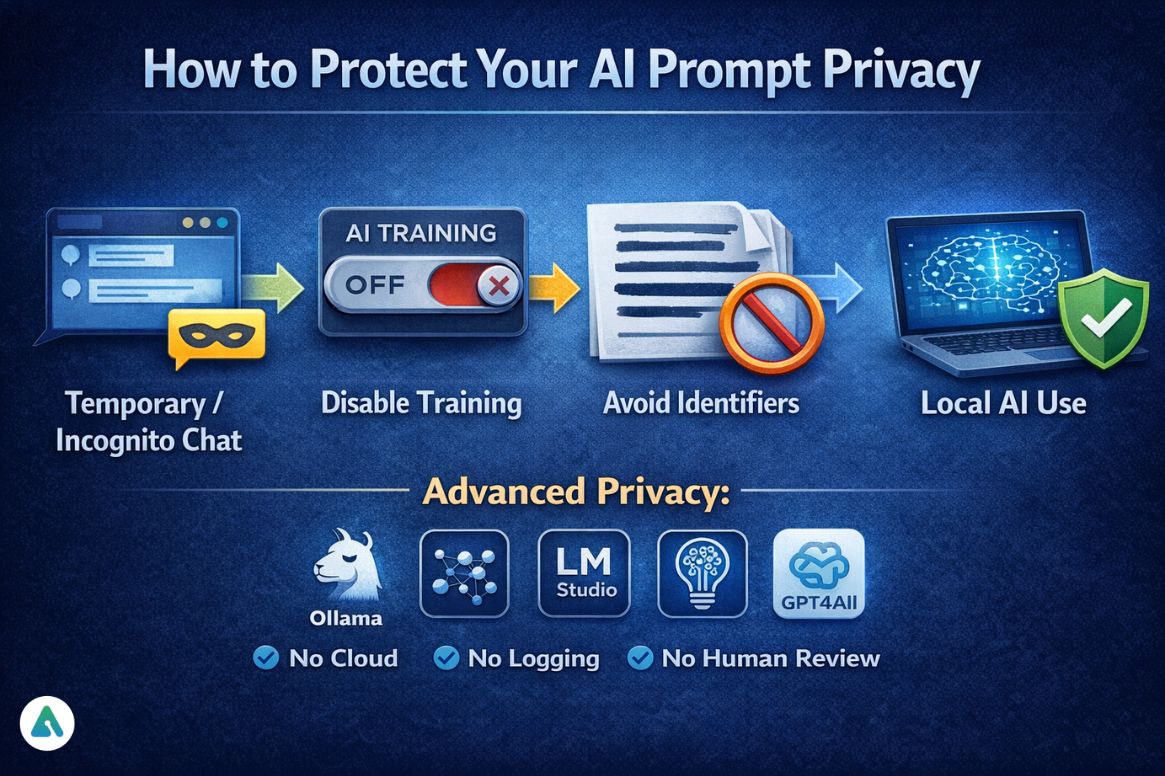

How to Protect Your AI Prompt Privacy

Before Typing Anything Sensitive:

- Use Temporary / Incognito chat
- Disable training if possible
- Avoid names, identifiers, and exact data
- Prefer local AI for critical work
- Read the platform’s data policy

During Use:

- Never paste confidential documents
- Anonymize scenarios
- Avoid personally identifiable information
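One way to act on the advice to strip identifiers is to run a prompt through a small redaction step before pasting it anywhere. The sketch below is a minimal example using Python's standard `re` module. The patterns and the `redact` helper are hypothetical and deliberately simple: they catch common email and phone formats only, can over-match (a date may be treated as a phone number), and will not catch names, addresses, or context-dependent details.

```python
import re

# Hypothetical patterns for illustration; they cover common formats only.
_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(prompt: str) -> str:
    """Replace each match with a bracketed placeholder such as [EMAIL]."""
    for label, pattern in _PATTERNS.items():
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt


if __name__ == "__main__":
    print(redact("Email jane.doe@example.com or call +1 (555) 010-9999 today"))
```

A regex pass like this is a safety net, not a guarantee. Rewriting the scenario in generic terms by hand is still the more reliable habit.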

Advanced Privacy: Run offline models like Ollama, LM Studio, GPT4All — no cloud, no logging, no human review
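As a rough illustration of what local inference looks like in practice, the sketch below sends a prompt to an Ollama server running on the same machine through its `/api/generate` endpoint. It assumes Ollama is installed and listening on its default port (11434), and that a model has already been pulled. The model name `llama3` and the helper names `build_request` and `ask_local` are placeholders chosen for this example.

```python
import json
import urllib.request

# Ollama's default local address; the request never leaves this machine.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming generate request (the model name is an assumption)."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local(prompt: str, model: str = "llama3") -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as resp:
        return json.loads(resp.read())["response"]


if __name__ == "__main__":
    print(ask_local("Summarize the risks of pasting contracts into chatbots."))
```

Because the target is `localhost`, the prompt is handled by a process on your own hardware. Whether anything is written to disk still depends on how the local tool is configured, so it is worth checking its own logging and history settings.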

Trends 2025–2027 – AI Companion Privacy Rankings

- Default no-training modes will become standard
- On-device inference will expand
- Regulatory audits will increase
- Privacy will become a competitive differentiator, not a footnote

FAQs

Q. Can AI companies access or read my prompts?

Yes, AI companies can access your prompts in limited cases. Human reviewers may read anonymized prompts for safety monitoring, abuse prevention, and model improvement. The risk depends on the platform and your privacy settings.

Q. Which AI companion is the most private in 2025?

For maximum privacy, local AI tools like Ollama, LM Studio, and GPT4All are safest. Among cloud-based assistants, Claude (Anthropic) currently has the strongest privacy practices, especially with human review limitations and opt-out training options.

Q. Are AI prompts used for training models?

It depends on the AI platform. Some platforms train models on user prompts by default, while others require explicit opt-in. For Claude, as of September 28, 2025, Free, Pro, and Max users have default-on training with opt-out available. Always check settings to maintain control over your data.

Q. How long does ChatGPT store user prompts?

ChatGPT stores prompts for up to ~30 days on consumer plans. Using Temporary Chat or disabling chat history significantly reduces retention and limits exposure. Enterprise plans often provide stronger data protections.

Q. Is it safe to discuss mental health with AI companions?

AI can be useful for reflection or guidance, but it is not a substitute for licensed mental health care. Privacy and compliance risks remain, so sensitive or personal mental health conversations should only occur with tools that prioritize local processing or HIPAA-compliant systems.

Conclusion: Who Is Watching Your Prompts?

Sometimes no one is watching. Sometimes human reviewers are, under strict controls.

The difference depends on:

- The platform you choose
- The settings you enable
- The assumptions you make

According to the AI Companion Privacy Rankings 2025, prompt privacy is as much a user responsibility as it is a platform promise. If you wouldn’t say it publicly, don’t type it into an AI companion without understanding where it goes.


Disclaimer: This article is for informational and educational purposes only. It does not constitute legal, medical, or professional advice. While we strive for accuracy, AI platforms, privacy policies, and regulations may change over time. Users are responsible for verifying settings, compliance requirements, and platform practices before sharing sensitive or personal information with AI companions. We are not liable for any consequences resulting from the use of AI tools or reliance on the information provided here.