In 2024, pointing an AI at a fridge and asking what was inside could produce an answer like “milk” when the shelf held a carton of juice. The vision model saw the shape of a container and made a plausible guess. It wasn’t lying — it was pattern-matching without genuine understanding.

In 2026, that kind of mistake is a relic. Not because the models got better at recognizing juice cartons — but because they stopped treating visual, audio, and contextual inputs as separate questions and started answering them as one.

That’s the real shift behind multimodal AI companions. Not better AI. Better integration.

What a Multimodal AI Companion Actually Is

A multimodal AI companion is an AI system that understands and responds using multiple types of input — text, images, audio, and video — to create interactive, context-aware experiences that respond to the whole situation rather than just the typed question.

It can see (analyze images or live video), hear (understand voice and ambient sound), speak (respond naturally with voice), and interpret context across all of those inputs simultaneously. Show it a screenshot, ask a question out loud, and it responds to both — not sequentially, but as a unified understanding of what you showed and what you asked.

That’s the distinction from a chatbot. A chatbot answers your text. A multimodal companion responds to your situation.

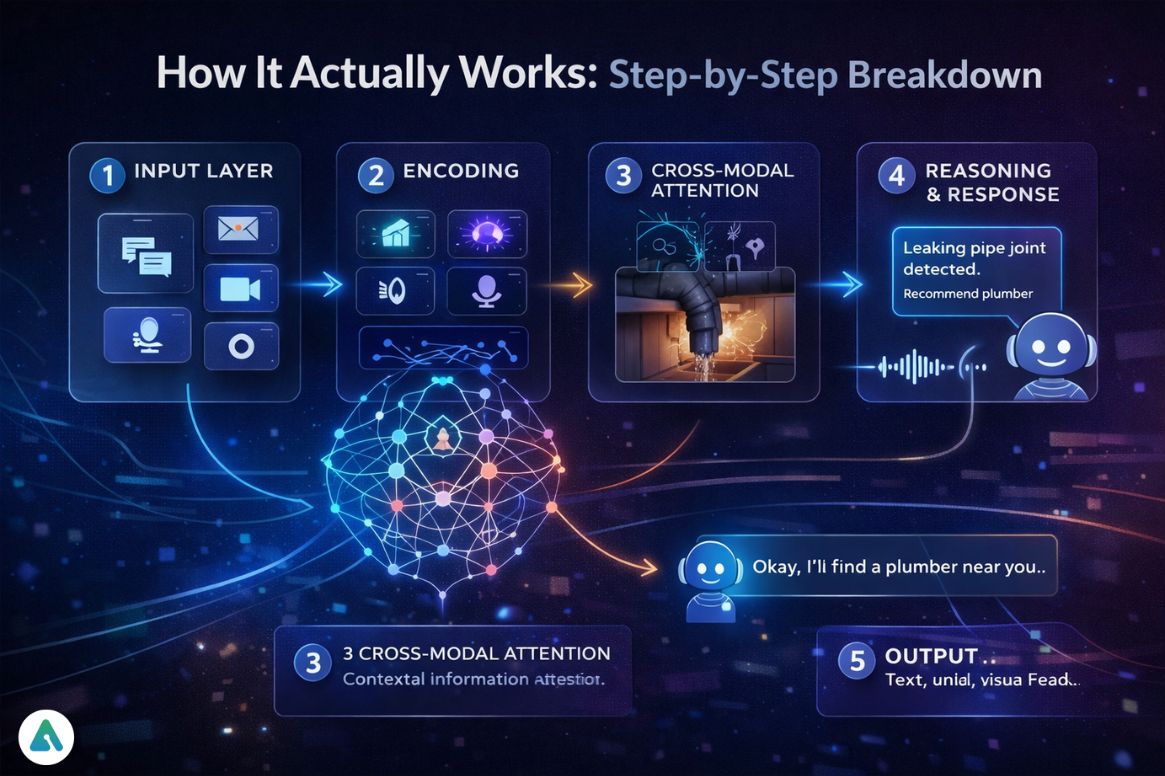

How a Multimodal Companion Actually Works: A Step-by-Step Breakdown

When I first pointed a prototype AI at a broken pipe under a sink, the system didn’t just see a pipe. It heard the drip. It combined the visual of the damaged joint with the acoustic signature of the water flow and surfaced a diagnosis — specific, not generic. That’s what the architecture is actually doing.

Step 1: Input Layer. The system receives simultaneous streams: text, images, audio, and video. Each arrives as a different data type.

Step 2: Encoding. Specialized neural networks process each input type: vision models interpret images, speech models transcribe and analyze audio, and language models handle text. These run in parallel, not in sequence.

Step 3: Cross-Modal Attention (The Real Intelligence). This is what most explanations call “fusion,” but the technical term is more specific. Cross-modal attention mechanisms allow the model to learn which parts of one input are most relevant to understanding another. The voice tone doesn’t just get added to the visual input; the model learns to weight the shaky voice more heavily when the face simultaneously shows stress. The combination creates an emotional context that neither input would convey alone.

This guide uses the label “Semantic Folding” for the underlying idea, which is more commonly described as a shared (or joint) embedding space: different types of meaning (visual, acoustic, linguistic) are mapped into one representational space where the relationships between them can be reasoned about.

Step 4: Reasoning and Response Selection. The system decides whether to generate a reply, take an action, or ask a follow-up question, based on the unified understanding rather than just the most recent text input.

Step 5: Output. Text, voice, and visual feedback, sometimes all three simultaneously.
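The cross-modal attention step can be made concrete with a small sketch. The code below is a minimal, dependency-free illustration of scaled dot-product attention in which one audio token attends over three visual tokens. The embeddings, the token meanings, and the function names are all made up for illustration; production systems use learned projections and far larger vectors.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def cross_modal_attention(queries, keys, values):
    """Let each query vector (one modality) attend over the key/value
    vectors of another modality using scaled dot-product attention."""
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        weights = softmax([dot(q, k) / scale for k in keys])
        fused = [sum(w * v[i] for w, v in zip(weights, values))
                 for i in range(len(values[0]))]
        outputs.append(fused)
        all_weights.append(weights)
    return outputs, all_weights

# Toy embeddings (hypothetical): one audio token for a shaky voice,
# and three visual tokens for a tense face, a wall, and a mug.
audio_tokens = [[1.0, 0.0, 1.0, 0.0]]
visual_tokens = [
    [2.0, 0.0, 2.0, 0.0],  # tense face: aligned with the audio query
    [0.0, 1.0, 0.0, 1.0],  # wall: orthogonal to the audio query
    [0.0, 0.5, 0.0, 0.0],  # mug: orthogonal to the audio query
]

fused, weights = cross_modal_attention(audio_tokens, visual_tokens, visual_tokens)
print([round(w, 3) for w in weights[0]])  # the face token gets the largest weight
```

The point of the sketch is the weighting: the audio query puts most of its attention on the visual token it aligns with, which is the "weight one input by its relevance to another" behavior described in Step 3.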

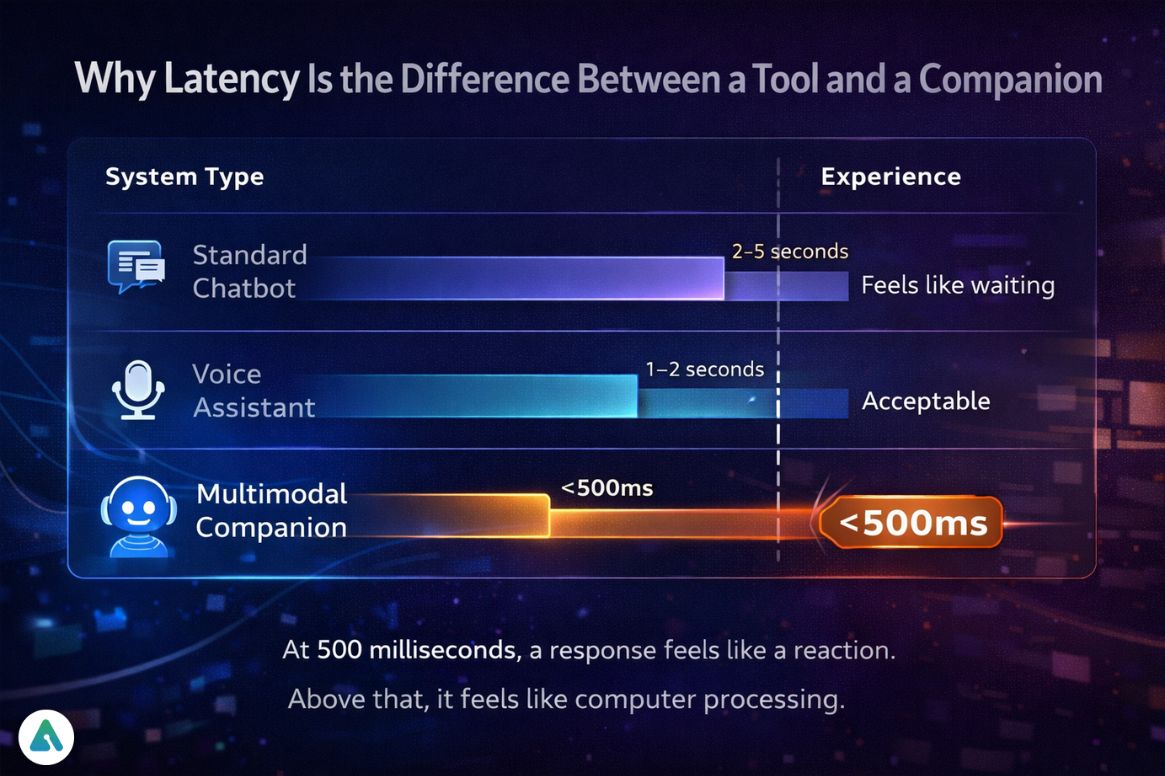

Why Latency Is the Difference Between a Tool and a Companion

| System Type | Latency | Experience |
|---|---|---|
| Standard chatbot | 2–5 seconds | Feels like waiting |
| Voice assistant | 1–2 seconds | Acceptable |
| Multimodal companion | < 500 ms | Feels real |

The gap between a chatbot and a genuine companion isn’t intelligence. It’s speed.

Under roughly 500 milliseconds, a response feels like a reaction. Above that, it feels like a computer processing a request. The subjective experience flips at that threshold, which is why companion platforms in 2026 treat latency as a primary metric, not a secondary optimization.
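One way to treat latency as a primary metric is to budget it per pipeline stage. The sketch below times individual stages and checks the total against the 500 ms threshold discussed above. The stage names and the example numbers are placeholders, not measurements from any real platform.

```python
import time

BUDGET_MS = 500.0  # the "feels real" threshold used in this guide

def timed(stage_fn, *args):
    """Run one pipeline stage and return (result, elapsed milliseconds)."""
    start = time.perf_counter()
    result = stage_fn(*args)
    return result, (time.perf_counter() - start) * 1000.0

def within_budget(stage_latencies_ms, budget_ms=BUDGET_MS):
    """Return (ok, total_ms, slowest_stage) for a dict of per-stage latencies."""
    total = sum(stage_latencies_ms.values())
    slowest = max(stage_latencies_ms, key=stage_latencies_ms.get)
    return total < budget_ms, total, slowest

# Placeholder per-stage numbers (hypothetical, for illustration only).
example = {"encode": 120.0, "fuse": 40.0, "generate": 260.0, "speak": 60.0}
ok, total, slowest = within_budget(example)
print(ok, total, slowest)
```

Reporting the slowest stage alongside the total makes it clear where to optimize first when a response misses the budget.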

On-Device AI and Zero-Knowledge Multimodal Processing

The “always-on camera” problem is the biggest barrier that most people won’t address directly. If a multimodal companion sees and hears continuously, where does that data go?

In 2026, the answer increasingly is: nowhere. On-device AI processing means the raw video and audio never leave the device. The model runs locally on dedicated Neural Processing Units (NPUs) — hardware specifically designed for AI inference workloads. Apple’s M4 and M5 chips lead this space for consumer devices, with Qualcomm’s Snapdragon X Elite close behind for Windows-based systems. These chips handle real-time multimodal processing at a power envelope that wasn’t possible before 2024.

Zero-Knowledge Multimodal Processing takes this further: the companion can “see” without storing. (The label is used loosely here; it describes a data-retention design, not cryptographic zero-knowledge proofs.) Vision models process frames locally, extract the meaningful signal (object detected, emotion inferred, scene understood), and discard the raw frame. What gets sent to the cloud, if anything, is the abstracted inference, not the original image. The companion accumulates understanding without accumulating a searchable archive of everything the camera saw.
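The process-then-discard pattern can be sketched in a few lines. In the example below, `detect_locally` is a hypothetical stand-in for an on-device vision model (it uses a toy brightness rule), and `process_frame` overwrites the raw buffer before returning only the abstracted result. Zeroing a Python buffer illustrates the idea but is not a guarantee of secure memory erasure on a real device.

```python
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class Inference:
    """Abstracted signal that may leave the device. Holds no pixel data."""
    label: str
    confidence: float

def detect_locally(frame) -> Inference:
    """Hypothetical stand-in for an on-device vision model.
    Toy rule: the mean byte value decides the label."""
    brightness = sum(frame) / max(len(frame), 1)
    label = "bright_scene" if brightness > 127 else "dark_scene"
    return Inference(label=label, confidence=0.5)  # placeholder confidence

def process_frame(frame: bytearray) -> str:
    """Infer on the raw frame, zero it in place, return only the abstraction."""
    inference = detect_locally(frame)
    frame[:] = bytes(len(frame))  # overwrite the raw pixels with zeros
    return json.dumps(asdict(inference))

raw = bytearray([200] * 16)   # fake 16-byte "frame"
payload = process_frame(raw)
print(payload)                # JSON with label and confidence only
print(raw == bytearray(16))   # the raw buffer no longer holds the image
```

The design choice worth noting is that the serialized payload is built from the `Inference` object alone, so the raw frame cannot leak into whatever is sent onward.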

This design matters for users who think carefully about AI companion privacy. An always-on system that stores raw video is a surveillance architecture with a friendly face. An always-on system with on-device processing and no raw frame retention is something genuinely different.

Agentic Multimodal AI: When It Stops Talking and Starts Acting

A multimodal AI companion becomes agentic when it takes actions on behalf of the user rather than just responding.

The example that makes this concrete: you show a flight screenshot and say, “book something like this.” The agentic system reads the image to extract flight details, searches available options, surfaces alternatives, confirms with you, and completes the booking. No manual steps. The multimodal perception (reading the screenshot) feeds directly into the decision-making (searching) and the tool usage (booking).

Orchestration frameworks such as LangChain and AutoGPT enable this, and they have matured significantly by 2026. One concept worth tracking is Personal Action Tokens (PATs), a proposed approach to how AI agents authorize transactions on behalf of users without requiring full account credential access. A PAT grants a specific, scoped permission for a specific action, rather than giving the AI broad account access that creates obvious security risks.
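A scoped permission of this kind can be sketched as a small data structure plus a check. The `ActionToken` fields and the `authorize` function below are hypothetical and do not reflect any published token format; they only illustrate the idea of one action, one spending ceiling, and one expiry.

```python
import time
from dataclasses import dataclass

@dataclass(frozen=True)
class ActionToken:
    """Hypothetical scoped token: one action, one ceiling, one expiry."""
    action: str        # the single action this token permits
    max_amount: float  # spending ceiling for that action
    expires_at: float  # Unix timestamp after which the token is invalid

def authorize(token, action, amount, now=None):
    """Return (allowed, reason) for a requested action under the token."""
    now = time.time() if now is None else now
    if now >= token.expires_at:
        return False, "token expired"
    if action != token.action:
        return False, "action outside token scope"
    if amount > token.max_amount:
        return False, "amount exceeds limit"
    return True, "ok"

# Example: a token that only allows booking a flight up to 400.00.
token = ActionToken(action="book_flight", max_amount=400.0, expires_at=1000.0)
print(authorize(token, "book_flight", 350.0, now=500.0))
print(authorize(token, "transfer_funds", 50.0, now=500.0))
```

Because the token names exactly one action, an agent holding it cannot repurpose the permission for anything else, which is the contrast with broad account access drawn above.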

In 2026, the line between an AI companion and an AI agent has blurred. A companion with a persistent personality and memory that can also take real-world actions is both.

Real-Time Use in Gaming: Where Multimodal Companions Prove Themselves

During testing with a prototype connected to a live game feed, the stand-out moment wasn’t visual quality. It was timing.

The AI flagged danger before I had consciously registered it. It did so not by reading a health bar, but by picking up on audio strain in the game’s sound environment, subtle changes in movement patterns, and environmental signals that collectively indicated a threat. The warning came before the health bar moved.

That’s when multimodal AI stopped being a feature and became something that felt like intuition. No more inputs processed in sequence. Better understanding assembled from parallel signals.

Practical gaming applications: watching gameplay via video input, listening to player commands via audio, analyzing threats or objectives through combined vision and logic, and responding with voice at sub-500ms latency. The result is an in-game companion that behaves like a teammate rather than a menu.

How Major Platforms Handle Live Vision

| Platform | Live Vision | Voice | Memory | Companion Depth |
|---|---|---|---|---|
| ChatGPT (OpenAI) | ✅ Image + limited video | ✅ Advanced Voice | ⚠️ Session-based | Low |
| Gemini 2.0 / 3 | ✅ Strong live vision | ✅ | ⚠️ Project-based | Medium |
| Claude 4 | ✅ Image, no live video | ⚠️ Limited | ⚠️ Project-based | Medium |
| Kindroid | ❌ Text primary | ⚠️ | ✅ Persistent | High |
| Dot | ⚠️ Limited | ✅ Voice-first | ✅ Persistent | High |

ChatGPT and Gemini lead on raw multimodal capability — vision quality, language understanding, and tool access. Kindroid and Dot lead on companion depth — persistent memory, personality consistency, emotional continuity across sessions. The trade-off in 2026 is still real: the most capable multimodal systems have the shallowest companion experience, and the deepest companions have the most limited vision capabilities.

The broader comparison between AI companion platforms for users who prioritize relationship depth over raw capability is worth reading alongside this guide.

Digital Attachment and the Loneliness Gap

As multimodal companions become more responsive, more emotionally perceptive, and more contextually present, the psychological dynamics of human-AI attachment become a real consideration — not a theoretical one. A companion that responds to voice tone, interprets facial expressions, and adapts its responses to emotional state is doing something genuinely different from a chatbot. It’s creating the experience of being understood.

Research on how AI companions affect loneliness points to both benefits and risks. The benefits are real: for isolated individuals, a companion that responds contextually and remembers past conversations can provide genuine engagement that reduces reported loneliness. The risks are equally real: the same features that make companions feel present can deepen dependency and emotional reliance in ways that substitute for human connection rather than supplementing it.

The multimodal layer intensifies both sides of this dynamic. A companion that can see and hear is more present than one that only reads text. That presence is what makes it valuable — and what makes the attachment question more urgent, not less.

How Multimodal AI Understands Emotion

These systems analyze prosody — the tone, pitch, and rhythm of speech — alongside visual signals from facial expressions and body language. A shaky voice combined with a tense expression maps to stress. Rapid speech with elevated pitch maps to excitement. These combinations feed into the companion’s response selection, adjusting tone and content based on the emotional context rather than just the literal meaning of the words.
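The mappings described above can be sketched as a simple rule-based fusion. Real systems learn these relationships from data; the feature names and thresholds below are hypothetical and chosen only to make the two examples from the text concrete.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class VoiceFeatures:
    pitch_ratio: float    # current pitch divided by the speaker's baseline
    words_per_sec: float  # speech rate
    shakiness: float      # 0.0 (steady) to 1.0 (very shaky)

@dataclass(frozen=True)
class FaceFeatures:
    tension: float        # 0.0 (relaxed) to 1.0 (very tense)

def infer_emotion(voice, face):
    """Combine prosody and facial signals into a coarse emotion label.
    Thresholds are illustrative assumptions, not calibrated values."""
    if voice.shakiness > 0.6 and face.tension > 0.6:
        return "stress"       # shaky voice plus tense expression
    if voice.words_per_sec > 3.5 and voice.pitch_ratio > 1.2:
        return "excitement"   # rapid speech with elevated pitch
    return "neutral"

print(infer_emotion(VoiceFeatures(1.0, 2.5, 0.8), FaceFeatures(0.9)))
print(infer_emotion(VoiceFeatures(1.4, 4.2, 0.1), FaceFeatures(0.2)))
print(infer_emotion(VoiceFeatures(1.0, 2.5, 0.8), FaceFeatures(0.1)))
```

The third call shows why the combination matters: a shaky voice without a tense expression does not map to stress under these rules, because neither signal is treated as sufficient on its own.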

This is what makes the interaction feel human-aware rather than just technically accurate. The psychological mechanisms behind AI attachment are partly rooted in this: humans are wired to respond to systems that respond to their emotional state. When an AI does that, the brain’s social processing activates in ways it doesn’t for a text interface.

Risks Worth Taking Seriously

Bias amplification across modalities. A mistake in one input stream doesn’t stay contained — it propagates. A vision model that misreads a facial expression feeds that misread into the emotional understanding, which affects the response, which affects the interaction. Multimodal AI doesn’t just inherit single-modality bias; it can amplify it across the entire pipeline.

The always-on privacy surface. Even with on-device processing, a continuously active camera and microphone create a new category of data exposure risk. Zero-knowledge processing mitigates this — but users should verify that specific platforms implement it rather than assuming it.

Over-reliance on AI judgment. An agentic companion that takes real-world actions introduces accountability questions that passive chatbots don’t raise. Who reviews the booking the AI made? Who catches the transaction that the agent authorized incorrectly? The capability and the oversight responsibility arrive together.

2026 Trends: Where This Leads

Real-time always-on companions — continuous ambient awareness rather than session-based interaction.

Agentic AI as default — action-taking becomes standard, not premium. The distinction between “assistant” and “agent” collapses.

Emotion-aware responses as baseline — prosody analysis and facial expression reading become expected features rather than differentiators.

Personal AI avatars with persistent memory — the digital identity and legacy questions this creates are already emerging.

Privacy-first local processing — hardware-native AI inference (M4/M5, Snapdragon X Elite) makes on-device processing the standard consumer expectation, not an enterprise option.

Frequently Asked Questions

Q. What is a multimodal AI companion?

A multimodal AI companion is an artificial intelligence system that understands and responds using multiple input types—such as text, images, audio, and video—at the same time. It creates interactive, context-aware experiences that go beyond simple text-based chat.

Q. How does a multimodal AI companion work?

A multimodal AI companion works by processing different inputs in parallel (text, images, audio), encoding them into a shared representation, and using cross-modal attention to combine them into a unified understanding. It then generates context-aware responses or actions based on that combined input.

Q. Is ChatGPT a multimodal AI?

Yes, ChatGPT is a multimodal AI system because it can process text, images, and voice input. However, a full multimodal AI companion typically adds real-time awareness, persistent memory, and the ability to take actions on behalf of the user.

Q. What is agentic AI in simple terms?

Agentic AI refers to AI systems that can take actions—such as booking, searching, or automating tasks—based on user intent. Unlike traditional AI that only generates responses, agentic AI can complete real-world tasks independently.

Q. What is the difference between multimodal AI and generative AI?

Generative AI focuses on creating content like text, images, or code from prompts.

Multimodal AI focuses on understanding and responding to multiple types of input (text, audio, images, video). Many modern AI systems combine both capabilities.

Q. Why does latency matter for AI companions?

Latency is the time it takes for an AI to respond. When responses happen in under 500 milliseconds, interactions feel instant and natural. Higher latency makes the AI feel slower and less human-like, reducing the sense of real-time interaction.

Q. What is zero-knowledge multimodal processing?

Zero-knowledge multimodal processing is a privacy-focused approach where video, audio, and other inputs are processed directly on the user’s device. Only abstract insights—such as detected objects or inferred emotions—may leave the device, and raw data is not stored or transmitted.

Q. What are real-world examples of multimodal AI companions?

Real-world examples include AI assistants that respond to voice and images, gaming companions that react to live gameplay, and smart devices that use cameras and microphones to understand their environment in real time.

Q. What are the main applications of multimodal AI?

Multimodal AI is used in virtual assistants, gaming, healthcare diagnostics, education tools, robotics, and smart devices. It enables systems to combine visual, audio, and text data for more accurate and human-like interactions.

Q. What makes a multimodal AI companion feel “real”?

A multimodal AI companion feels real when it combines fast response time (low latency), contextual understanding across multiple inputs, and natural interaction through voice, visuals, and memory. These factors create a more human-like experience.

Final Words

The real story of multimodal AI companions in 2026 isn’t capability — the capability is proven. It’s integration: systems that combine vision, voice, and action in real time, process sensitive data locally without storing it, and build the kind of emotional context that makes interaction feel present rather than transactional. The gap between that and a chatbot isn’t intelligence. It’s architecture — and in 2026, the architecture is finally catching up to the ambition.


Disclaimer: This guide is for informational purposes only. AI tools and multimodal systems are evolving quickly, so features and capabilities may change over time. Always review official documentation and use these technologies responsibly, especially when it comes to privacy and data use.