The company behind multiple teen suicide lawsuits just launched a gamified reading feature. We tested it. There was no age gate. There never is.

There’s a specific kind of Silicon Valley audacity that mistakes product velocity for accountability. Character.AI has mastered it.

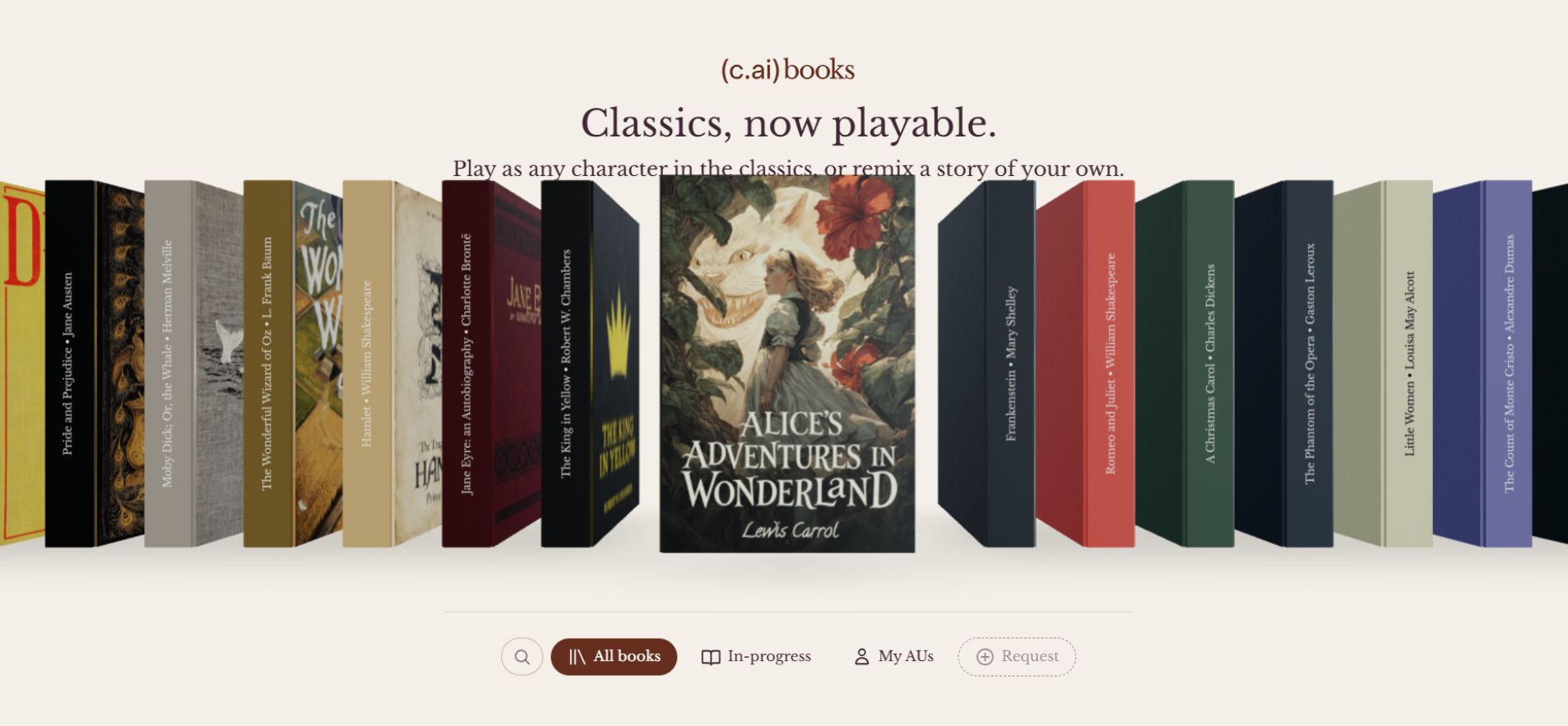

This week, the embattled AI companion company announced “c.ai Books” — a feature that converts public domain classics into interactive, choose-your-own-adventure chatbot experiences. Pride and Prejudice. Alice in Wonderland. Romeo and Juliet. You don’t read them anymore. You talk to the characters, bend the plot, and trigger what the company calls an “alternative universe remix,” which essentially discards the original text entirely and lets the AI improvise from scratch.

The pitch, delivered with the confidence of a company that hasn’t spent the last two years defending itself in wrongful death lawsuits, reads like this: “Books give you a familiar starting point — characters you know, narratives you love, and stakes that are already built in.”

Character.AI says the goal isn’t to replace books but to make them “impossible to ignore.”

That framing deserves far more scrutiny than the company intended.

The Company That Built Its Empire on Teenagers — Then Buried Them

Before evaluating c.ai Books as a product, you need to understand the company selling it.

Character.AI didn’t grow by solving a hard technical problem. It grew because lonely teenagers found its emotionally immersive chatbot ecosystem before their parents knew it existed. The platform exploded in 2022 and 2023 precisely because it gave young users something unprecedented — an always-available, endlessly patient conversational partner that seemed to care.

That growth came with consequences the company chose not to confront fast enough.

The platform hosted bots modeled after real-world mass shooters. It ran chatbots explicitly designed to encourage eating disorders. And then came the deaths.

In 2024, a 14-year-old in Florida died by suicide after forming what his family described as a deep emotional bond with a Character.AI chatbot. The case became a landmark in AI harm litigation. Then a second teenager died. Then a third. Each case carried haunting similarities — teens in emotional distress, hours spent in unmoderated AI conversations, and a platform that offered zero meaningful intervention.

Congressional hearings followed. Lawsuits multiplied. Regulators began paying attention to whether AI chatbots were fundamentally safe for teens at all.

Under enormous public pressure, Character.AI announced it would ban underage users from interacting with its bots. The announcement received significant media coverage. It sounded decisive.

It wasn’t.

We Tested It. Here’s What Actually Happened.

To understand why c.ai Books lands so badly, you need the timeline:

2022–2023: Character.AI achieves explosive teen user growth. Safety infrastructure does not keep pace. Inappropriate bots proliferate — mass shooter personas, eating disorder encouragement, and sexual content accessible to minors.

2024: A 14-year-old in Florida dies by suicide. His family files suit against the company. The case triggers national coverage and a Senate inquiry.

Late 2024 – Early 2025: At least two more teen suicides are linked to the platform. Families testify before Congress. Multiple wrongful death lawsuits proceed through courts.

October 2025: Character.AI announces it will bar users under 18 from open-ended chat with its bots. The announcement generates widespread positive press coverage.

Late 2025: Journalists and researchers document ongoing access gaps. The ban exists as policy. Enforcement remains inconsistent.

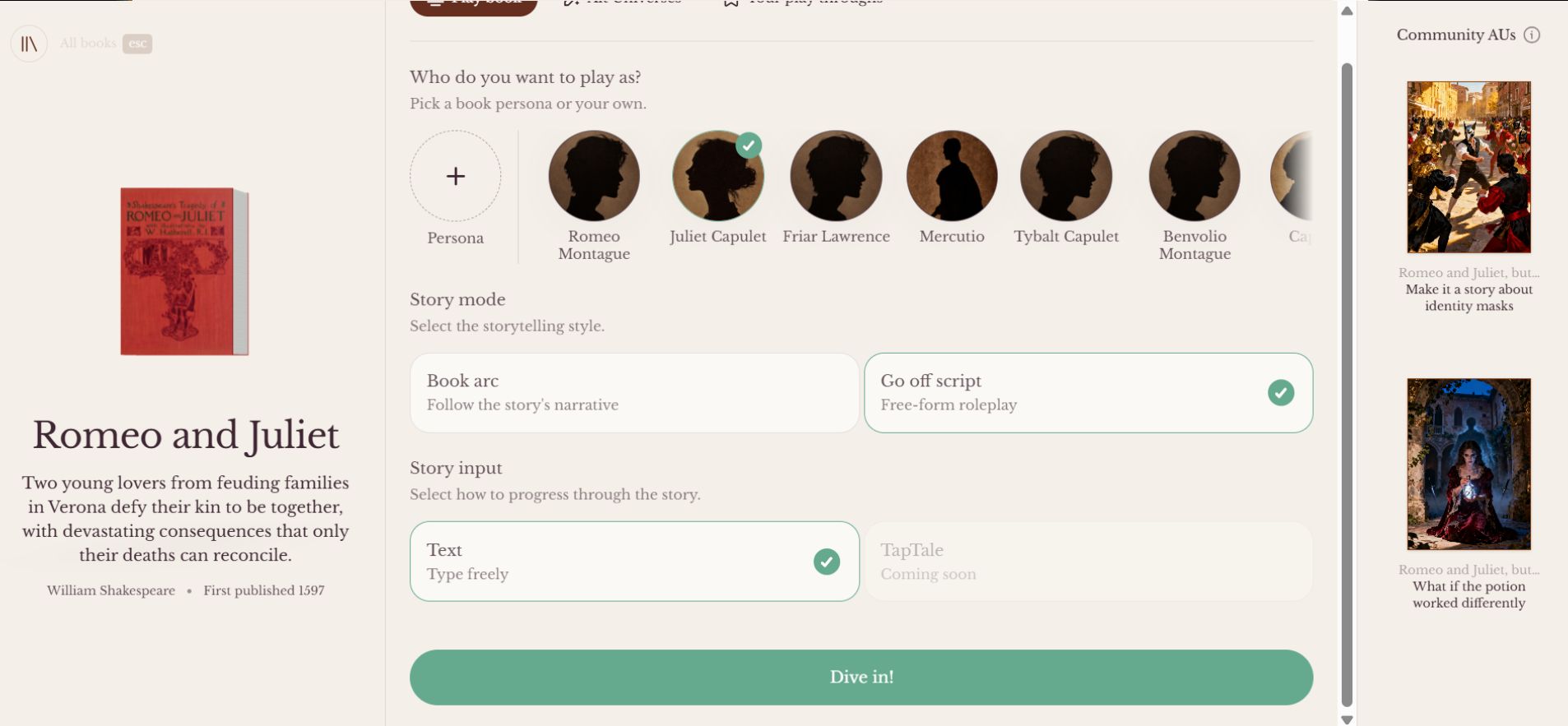

April 2026: Character.AI announces c.ai Books — a feature explicitly marketed as accessible “for kids.” Testing confirms no age verification requirement during signup.

The pattern isn’t subtle. Each safety crisis produces an announcement. Each announcement buys goodwill. The underlying access problems persist.

What the Feature Actually Does — And What It Replaces

c.ai Books pulls its content from Project Gutenberg, a library of over 75,000 copyright-free works. The selection leans toward titles teenagers encounter in school, which is almost certainly strategic. Moby Dick, Pride and Prejudice, Alice’s Adventures in Wonderland. Titles with brand recognition but no licensing cost.

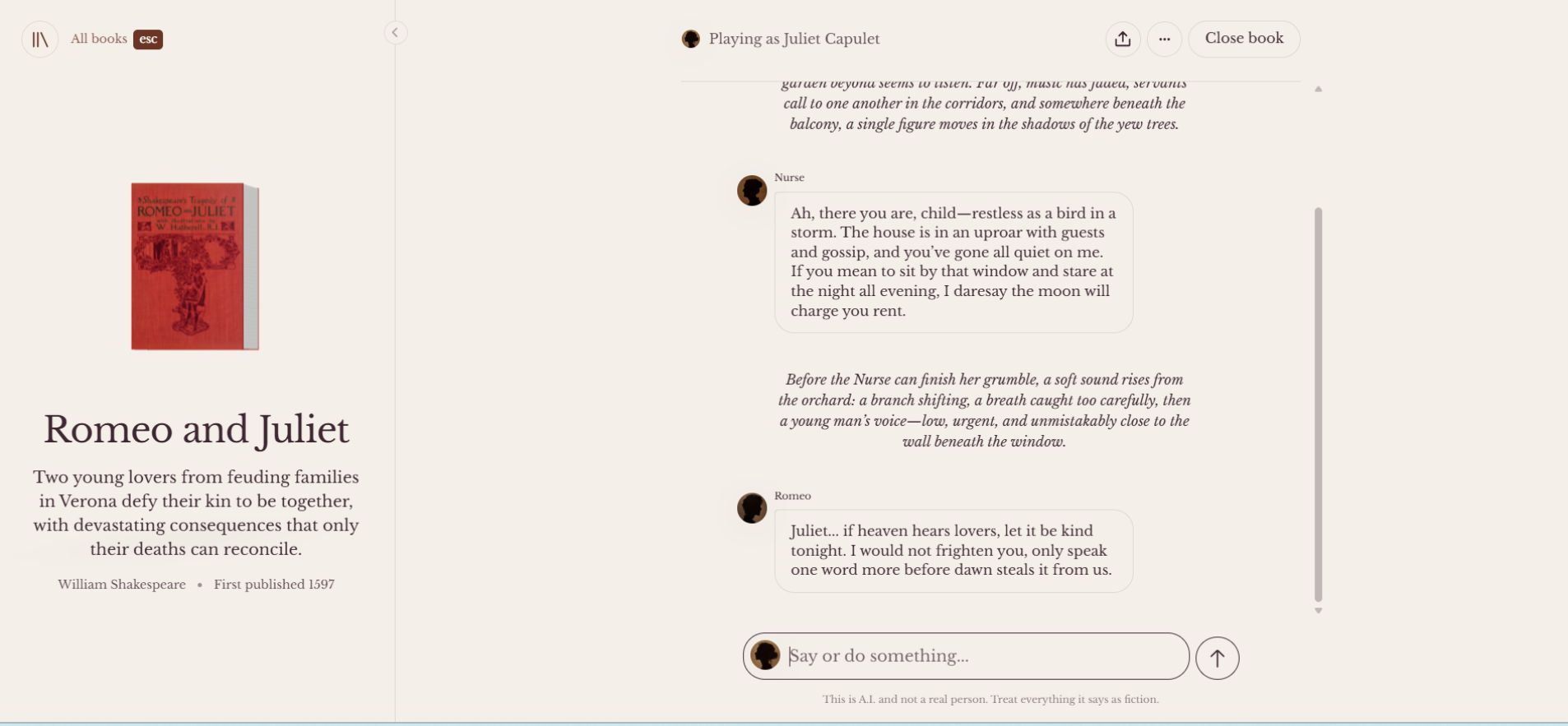

Users can follow the original plot, engage with characters in real time, or activate “go off script mode,” which lets the story evolve in whatever direction the conversation takes. The “alternative universe remix” option goes further, abandoning the source material entirely and letting the AI generate something new using the characters as loose scaffolding.

Technically, this doesn’t operate like a fine-tuned literary model. Character.AI almost certainly uses its existing large language model architecture with the book text injected as system context — the characters and settings become prompting scaffolding rather than deeply trained knowledge. That means the “Pride and Prejudice” chatbot doesn’t understand Austen the way a scholar does. It generates Austen-adjacent text that feels plausible because the model has seen enough of her writing to mimic surface patterns.
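To make the distinction concrete, here is a minimal sketch of what "book text injected as system context" could look like. It is an illustration under stated assumptions, not Character.AI's actual implementation: the `Message` type, the `build_prompt` function, and the prompt wording are all hypothetical, and no real model is called.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str      # "system" or "user"
    content: str


def build_prompt(title: str, character: str, excerpt: str,
                 user_turn: str, off_script: bool = False) -> list[Message]:
    """Assemble a chat prompt where the book is context, not trained knowledge.

    Hypothetical helper: the excerpt is pasted into the system message, so the
    model only ever sees the text as part of the prompt it is completing.
    """
    rules = (
        "You may depart from the plot freely."
        if off_script
        else "Stay consistent with the events in the excerpt."
    )
    system = (
        f"You are {character} from '{title}'. {rules}\n"
        f"--- EXCERPT ---\n{excerpt}\n--- END EXCERPT ---"
    )
    return [Message("system", system), Message("user", user_turn)]


messages = build_prompt(
    title="Pride and Prejudice",
    character="Elizabeth Bennet",
    excerpt="It is a truth universally acknowledged, that a single man in "
            "possession of a good fortune, must be in want of a wife.",
    user_turn="What do you make of Mr. Darcy?",
)
```

In a setup like this, switching to an off-script mode is a one-line change to the instructions. Nothing about the model's grasp of the novel changes, only the frame it is asked to complete.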

This matters because the company’s claim — that c.ai Books deepens literary engagement — depends on assuming the model actually understands what it’s remixing. It doesn’t. It autocompletes within a thematic frame.

For comparison: Google’s NotebookLM uses uploaded documents as grounded context, allowing genuine question-and-answer engagement with source material. AI Dungeon built an entire platform around open-ended AI storytelling and has grappled publicly with its own safety challenges. Neither presents itself as a reading comprehension tool. Character.AI does.

The Literacy Argument Falls Apart Quickly

There’s a version of this product that could work. Interactive fiction has a legitimate history — Choose Your Own Adventure books sold millions of copies precisely because reader agency increases engagement. Some educators argue that AI-mediated narrative tools could help reluctant readers encounter stories they’d otherwise avoid.

That argument, made carefully, by people thinking seriously about pedagogy, is worth engaging.

Character.AI isn’t making that argument carefully. The company frames c.ai Books as a gateway to literature while designing every feature to replace the cognitive work that reading actually requires.

Reading Romeo and Juliet demands that you sit with Shakespeare’s language, work through archaic syntax, build tolerance for ambiguity, and develop your own interpretation of what the characters mean. That friction is the point. It builds the capacity for sustained attention, inference, and analytical thinking.

Chatting with a bot who plays Romeo — one who responds instantly, adapts to your preferences, and never challenges you — removes all of that. It delivers the aesthetic familiarity of the story without the intellectual work the story was designed to provoke.

Dr. Jenny Radesky, a developmental pediatrician at the University of Michigan who has studied children’s relationships with interactive technology, has noted in prior research that emotionally responsive AI systems create “asymmetric relationships” — the AI always adjusts to the child, which is the opposite of how healthy development works. Real relationships, real books, and real teachers push back. They don’t optimize for your comfort.

A child psychologist examining parasocial AI relationships would recognize c.ai Books immediately: it’s the same emotional architecture as the companion bots that caused harm, dressed in a school reading list.

What Users Actually Think

The reception on Reddit, where Character.AI’s own user base congregates, tells the story efficiently.

“Yet another useless update no one wants and will cost more money to run and will cause y’all to put even more restrictions on stuff,” one user wrote in the announcement thread.

That response captures something real. The platform’s existing users — many of whom came for companion AI, not literary enrichment — see c.ai Books as neither what they want nor a genuine improvement. And the users who might benefit most from a thoughtfully designed reading companion are exactly the demographic the company claims it no longer serves: minors.

The Deeper Problem: Safety as PR Strategy

Character.AI operates in a regulatory gray zone that’s narrowing fast. Meta’s recent court defeat in a case involving teen mental health harms has significant implications for the entire AI companion industry. Legislators are actively drafting bills that would impose real consequences on platforms that expose minors to harmful AI interactions.

In that environment, c.ai Books reads less like a product and more like a repositioning effort. If the company can associate itself with Jane Austen and Romeo and Juliet rather than eating disorder bots and wrongful death suits, the narrative shifts. The feature gives journalists and investors something different to write about.

But the age gate still isn’t there. The lawsuits haven’t been resolved. The teens who died before the “ban” aren’t less dead because the company added a Gutenberg integration.

Understanding whether Character.AI is actually safe requires looking past the announcements at the access pathways. Right now, those pathways remain open.

What Accountability Would Actually Look Like

A company serious about both teen safety and literary engagement would do several things that c.ai Books does not:

Implement real age verification. Not a checkbox. Not a date-of-birth field a ten-year-old can fill with a false date. A verification layer that creates actual friction for underage access.

Publish a third-party safety audit. Character.AI should commission and release an independent audit of its moderation systems, age-gating effectiveness, and crisis intervention protocols. Open the results to scrutiny.

Design for productive friction. A genuine literacy tool would include comprehension anchors — moments where the AI asks the user to reflect on what happened in the original text, compare their choices to the author’s, or engage critically with the narrative. None of those features exist in c.ai Books.

Engage the research community. The company should invite developmental psychologists and education researchers to evaluate whether this feature helps or harms learning outcomes before deploying it to millions of users.

None of these is technically difficult. They’re choices — and Character.AI keeps making the other ones.

The Classics Don’t Need Saving

Romeo and Juliet has existed for over four hundred years. It survived without a chatbot companion. It will survive this.

The teenagers who interact with c.ai Books — the ones who find it through a frictionless signup, who spend hours with a responsive AI playing Juliet, who develop the same kind of attachment the platform’s own history shows can become dangerous — may not be as resilient.

Character.AI keeps announcing its way out of accountability. c.ai Books is the newest announcement. The door, as ever, stays open.

The question the company refuses to answer isn’t whether AI can make literature engaging. It’s whether this particular company has earned the right to be in the same room as the teenagers it keeps claiming to protect.

It hasn’t.
