Search interest for “Grok CLI” exploded in 2026, mostly because developers expect Grok to behave like Claude Code or Gemini CLI—fully packaged, terminal-ready, and officially supported.

The reality is more fragmented, and most of that search traffic ends in frustration.

There is no single official Grok CLI product comparable to Claude Code. Grok runs through its API, and whatever terminal experience a developer gets comes from wrappers, scripts, or agent frameworks built by the community.

In practice, most “Grok CLI setups” are:

- Python or Node.js wrappers around the Grok API

- GitHub-based CLI experiments

- Agent pipelines using MCP-style orchestration

This guide covers what actually exists, how developers use Grok in terminals, pricing realities, and where it fits compared to Claude Code and Gemini CLI.

What Is Grok CLI?

A Grok CLI refers to any command-line interface that lets developers interact with xAI’s Grok models from a terminal environment.

It’s not a single product. It’s an ecosystem term — and that distinction matters when comparing it to tools like Claude Code, which ships as a proper installable package.

Most Grok CLI implementations include:

- API-based chat clients

- AI coding assistants in the terminal

- Scriptable agents for automation

The simplest way to think about it:

Grok CLI = Grok API + terminal wrapper

Does Grok Have an Official CLI?

No official standalone CLI tool exists as of 2026.

Instead, xAI provides:

- Grok API access (developer layer)

- Web-based Grok interface (via the X ecosystem)

- Model endpoints used by third-party tools

This means a developer cannot “install Grok CLI” the same way they’d install Claude Code. They build or install wrappers around the API, which is where most of the confusion in search results comes from.

xAI has focused its 2026 roadmap on the Grok Enterprise API and the Grok 4.20 Multi-agent Beta rather than a standalone CLI product. If an official CLI does come, it will likely grow out of those agentic foundations.

How Grok CLI Actually Works (Behind the Scenes)

Almost every Grok CLI tool follows this flow:

- User inputs a command in the terminal

- CLI sends a request to the Grok API

- API returns response (token stream)

- Terminal renders output

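The core mechanism is a standard HTTP API call: the wrapper sends a POST request carrying the prompt and the API key to xAI’s chat completions endpoint, then reads the reply out of the JSON response.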

Every tool labeled “Grok CLI” builds on top of this endpoint.
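
As a rough illustration of that flow, the sketch below builds (but does not send) the request a wrapper would make. It assumes the endpoint URL https://api.x.ai/v1/chat/completions, a model id of grok-4, and an XAI_API_KEY environment variable; build_request is a hypothetical helper name, and both the URL and the model id should be checked against the current xAI documentation.

```python
import json
import os
import urllib.request

# Assumed endpoint and model id; verify both against the xAI documentation.
API_URL = "https://api.x.ai/v1/chat/completions"
MODEL = "grok-4"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build the POST request for a single-turn chat completion."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Only prints the method and URL; nothing is sent over the network.
    req = build_request("Hello, Grok", os.environ.get("XAI_API_KEY", "missing-key"))
    print(req.get_method(), req.full_url)
```

Passing the returned object to urllib.request.urlopen would perform the actual call; everything a wrapper adds beyond this is prompt handling and output rendering.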

xAI also ships an official Python SDK (xai-sdk, pip installable) that wraps these calls more cleanly than a raw requests script. Using the SDK instead of raw HTTP gives better error handling and keeps the code closer to xAI’s officially supported path. One quirk worth knowing: the SDK’s default 429 handler raises a RateLimitError but does not retry automatically, and developers who don’t read that part of the docs spend twenty minutes wondering why their agent loop dies silently.

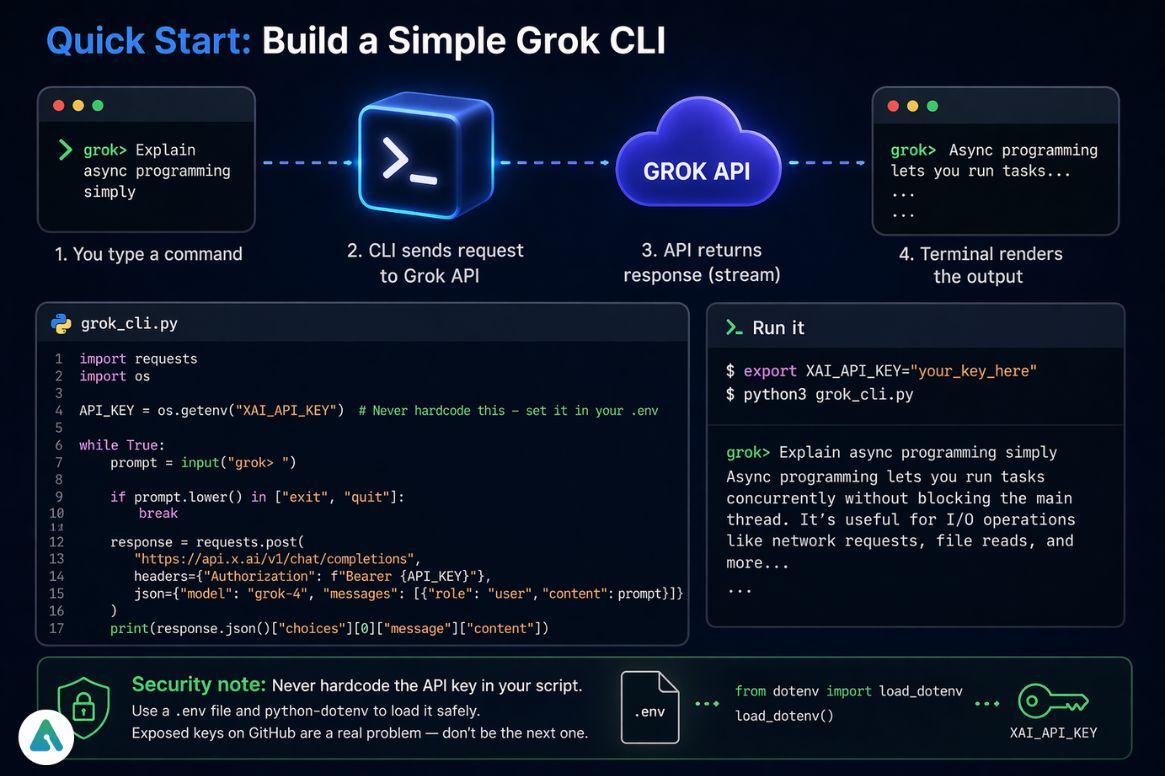

Quick Start: Build a Simple Grok CLI (Copy-Paste)

Here’s a minimal working terminal wrapper.

1. Create a file

touch grok_cli.py

2. Add this Python script

# Note: .json() will raise if the API returns a 429 or 5xx, so handle this
# before going anywhere near production (see Rate Limits section below)
print(response.json()["choices"][0]["message"]["content"])

3. Run it

python grok_cli.py

That gives a working Grok terminal interface. It’s barebones on purpose — the goal is understanding the mechanism before adding complexity.
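
Putting the three steps together, a complete minimal wrapper might look like the sketch below. It is an illustration under stated assumptions, not a reference implementation: the endpoint URL, the grok-4 model id, and the XAI_API_KEY variable name are assumptions to verify against the xAI docs, the helper names are hypothetical, and it uses urllib from the standard library in place of requests so that it runs without extra installs.

```python
import json
import os
import sys
import urllib.request

# Assumed endpoint and model id; check the xAI docs for current values.
API_URL = "https://api.x.ai/v1/chat/completions"
MODEL = "grok-4"


def extract_reply(body: dict) -> str:
    """Pull the assistant text out of a chat-completions style response."""
    return body["choices"][0]["message"]["content"]


def ask(prompt: str, api_key: str) -> str:
    """Send one prompt and return the model's reply."""
    payload = json.dumps(
        {"model": MODEL, "messages": [{"role": "user", "content": prompt}]}
    ).encode("utf-8")
    request = urllib.request.Request(
        API_URL,
        data=payload,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    # urlopen raises urllib.error.HTTPError on 4xx/5xx, including 429.
    with urllib.request.urlopen(request, timeout=60) as response:
        return extract_reply(json.load(response))


def main(argv: list) -> int:
    """Entry point: returns 0 on success and 1 on a usage or config problem."""
    api_key = os.environ.get("XAI_API_KEY")  # read from the environment, never hardcode
    if not api_key:
        print("Set XAI_API_KEY first.", file=sys.stderr)
        return 1
    if len(argv) < 2:
        print('Usage: python grok_cli.py "your prompt"', file=sys.stderr)
        return 1
    print(ask(" ".join(argv[1:]), api_key))
    return 0


if __name__ == "__main__":
    main(sys.argv)
```

Run it as python grok_cli.py "Explain exponential backoff in one sentence". Keeping extract_reply separate from the network call makes the response handling easy to test without spending tokens.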

Security note: Never hardcode the API key in the script itself. GitHub repositories leak keys constantly, and 2026 saw a significant spike in exposed xAI credentials from exactly this kind of setup. A .env file with python-dotenv loaded at the top of the script takes two minutes to set up and prevents a very bad day.

Real Issue Developers Face (Important)

The first problem in this kind of setup isn’t the code — it’s rate limiting.

After a few rapid queries, the API returns:

429: Too Many Requests

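This is the top complaint in developer forums right now. The naive fix is time.sleep(2) between calls. The right fix is exponential backoff with jitter using the tenacity or backoff libraries. With backoff, that means decorating the request function with backoff.on_exception, using backoff.expo as the wait generator and a giveup predicate to decide which errors are worth retrying.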

The giveup lambda matters — without it, backoff retries on every HTTP error, including 401s and 404s, which burns retries on errors that no amount of waiting will fix.
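
To make the retry logic concrete, here is a dependency-free sketch of the same idea. It is not the backoff library's code: ApiError, is_retryable, and call_with_backoff are hypothetical names, and is_retryable plays the inverse role of a giveup predicate by retrying only 429s and 5xx responses.

```python
import random
import time


class ApiError(Exception):
    """Hypothetical error type carrying the HTTP status of a failed call."""

    def __init__(self, status: int):
        super().__init__(f"HTTP {status}")
        self.status = status


def is_retryable(error: ApiError) -> bool:
    """Retry rate limits and server errors; give up on 401, 404 and the like."""
    return error.status == 429 or error.status >= 500


def call_with_backoff(func, max_tries=5, base_delay=1.0, max_delay=30.0, sleep=time.sleep):
    """Call func(), retrying retryable ApiErrors with exponential backoff and full jitter."""
    for attempt in range(max_tries):
        try:
            return func()
        except ApiError as error:
            last_attempt = attempt == max_tries - 1
            if last_attempt or not is_retryable(error):
                raise
            # Full jitter: wait a random time up to the exponential cap.
            cap = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, cap))
```

The sleep parameter exists so tests can swap in a fake and run instantly. In a real wrapper, func would be the API call, raising ApiError with the response status.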

Beyond backoff, the other fixes are:

- Caching repeated queries (most agent loops re-ask the same context questions)

- Reducing token-heavy prompts by trimming conversation history aggressively
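
Both fixes are small enough to sketch. The example below assumes chat-style message dictionaries with role and content keys; PromptCache and trim_history are hypothetical helpers, the cache lives in memory only, and the default of six retained messages is an arbitrary starting point rather than a recommendation.

```python
import hashlib
import json


class PromptCache:
    """In-memory cache keyed by a hash of the model id and message list."""

    def __init__(self):
        self._store = {}

    @staticmethod
    def _key(model, messages):
        raw = json.dumps({"model": model, "messages": messages}, sort_keys=True)
        return hashlib.sha256(raw.encode("utf-8")).hexdigest()

    def get_or_call(self, model, messages, call):
        """Return the cached reply, or run call(model, messages) once and store it."""
        key = self._key(model, messages)
        if key not in self._store:
            self._store[key] = call(model, messages)
        return self._store[key]


def trim_history(messages, max_turns=6):
    """Keep any system messages plus the most recent max_turns other messages."""
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    recent = rest[-max_turns:] if max_turns > 0 else []
    return system + recent
```

Trimming before caching also raises the hit rate, because two requests that differ only in old history collapse to the same key.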

On pricing: Grok 4 costs $3.00/M input tokens. Grok 4.1 Fast costs $0.20/M — basically a steal for terminal agent work — but the rate limits feel like a leash until caching is in place. For agentic workflows that need the full reasoning capability, Grok 4.20 Multi-agent Beta runs at $2.00/M input, which positions it directly between the two. This is where Grok CLI becomes less “plug-and-play” than Claude Code.

Grok CLI vs Claude Code vs Gemini CLI

| Feature | Grok CLI (API Wrappers) | Claude Code (v4.7) | Gemini 3 CLI |
|---|---|---|---|
| Official Support | ❌ No (API only) | ✅ Yes | ✅ Yes |
| Agent Logic | Custom (Multi-agent Beta) | Built-in (Plan Mode) | High (MCP Native) |
| Context Window | 2M Tokens | 1M Tokens | 10M+ Tokens |
| Input Cost ($/M) | $2.00 (Grok 4.20) | $5.00 (Opus 4.7) | $2.00 (Pro 3) |
| Local File Indexing | Manual (pipe via script) | Built-in | Built-in |
| Git Integration | Manual (git diff pipe) | Built-in | Partial |
| Real-time Data | Strong (X ecosystem) | Limited | Limited |
| Best For | X Data / DIY Agents | Complex Refactoring | Massive Codebase Exploration |

The practical breakdown:

- Claude Code = production-grade developer tool with a polished install experience and built-in local filesystem indexing

- Gemini 3 CLI = the right tool for large codebase exploration; a 10M+ token context is a genuine technical moat

- Grok CLI = flexible and competitively priced, but the local context gap is real, and developers need to build around it

Grok 4 posts a 79.4% LiveCodeBench score, which puts it in competitive territory for raw reasoning tasks. That matters in agentic workflows where the model is making tool-use decisions, not just answering questions.

The Local Context Gap (What Grok CLI Can’t Do Natively)

Claude Code indexes a local codebase automatically. A developer can point it at a 10,000-line repo and ask “what does the auth module do?” without any setup. Grok CLI has no equivalent out of the box.

The workaround that actually works in practice is piping file content or git diffs directly into the API call, for example by feeding the output of git diff to the wrapper and sending it as part of the prompt.

For larger codebases, the approach is to chunk files and build a lightweight context window manually — not ideal, but workable. The 2M token context window on Grok 4 is generous enough that a mid-size project fits if the chunking is done properly.
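
A minimal sketch of that manual approach is shown below. The helper names are hypothetical, the character budget is a crude stand-in for a real token count, and git_diff assumes git is installed and the working directory is a repository.

```python
import subprocess


def git_diff(repo_dir="."):
    """Return the working-tree diff as text (requires git on PATH)."""
    result = subprocess.run(
        ["git", "diff"], cwd=repo_dir, capture_output=True, text=True, check=True
    )
    return result.stdout


def chunk_text(text, max_chars=8000):
    """Split text on line boundaries into chunks of about max_chars.

    A single line longer than max_chars becomes its own oversized chunk.
    """
    chunks, current, size = [], [], 0
    for line in text.splitlines(keepends=True):
        if current and size + len(line) > max_chars:
            chunks.append("".join(current))
            current, size = [], 0
        current.append(line)
        size += len(line)
    if current:
        chunks.append("".join(current))
    return chunks


def build_review_prompts(diff, max_chars=8000):
    """Wrap each diff chunk in a review instruction, ready to send as a user message."""
    chunks = chunk_text(diff, max_chars)
    return [
        f"Review part {i} of {len(chunks)} of this diff:\n\n{chunk}"
        for i, chunk in enumerate(chunks, start=1)
    ]
```

Calling build_review_prompts(git_diff()) yields one prompt per chunk. Splitting on line boundaries keeps diff hunks readable, though a smarter splitter would break on file or hunk headers instead.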

The honest take: if local codebase indexing is a hard requirement, Claude Code wins that category cleanly. Grok CLI is a better fit for agentic workflows that combine code tasks with live data.

Why Developers Use Grok CLI in 2026

Even without an official CLI, Grok sees heavy developer adoption because of a few specific strengths.

X (Twitter) ecosystem advantage

Grok integrates naturally with live social data, which makes it genuinely useful for:

- Trend detection

- Sentiment analysis

- Real-time event tracking

No other major CLI-accessible model has this natively. A 3-line bash command is enough to pipe a live X search into the Grok API directly.

Gemini and Claude can’t replicate this without significant additional plumbing.

Custom agent freedom

Developers build:

- Autonomous terminal bots

- Scraping + reasoning pipelines

- Research assistants with live data feeds

The lack of a rigid framework is an asset when a project has unusual requirements.

Fast experimentation

Grok doesn’t come with the framework constraints that a tool like Claude Code imposes. That’s a tradeoff — less structure, but faster iteration on novel agent architectures.

Advanced Use Case: Grok CLI + MCP (Agent Systems)

Modern terminal workflows increasingly combine Grok with Model Context Protocol (MCP) systems. This is where the setup shifts from “chat in a terminal” to an autonomous terminal agent.

A typical stack looks like:

- Grok API → reasoning engine

- MCP server → tool orchestration

- CLI wrapper → user interface

Bridging Grok CLI to MCP servers — for example, to enable local file editing or system-level tool calls — separates a beginner setup from a production-grade one. The Grok 4.20 Multi-agent Beta makes this significantly more viable, letting a single CLI session spin up sub-agents for parallel task execution. In practice, this looks like:

[Terminal Session]

> grok-agent “Monitor NVIDIA sentiment and draft a summary report”

↳ Sub-agent 1: Fetching X stream for $NVDA (last 2hrs)

↳ Sub-agent 2: Pulling earnings call transcript via MCP tool

↳ Coordinator: Synthesizing sentiment + fundamentals…

Output: “Retail sentiment is net positive (+67%) but options flow suggests institutional hedging. Key concern: margin guidance…”

That kind of pipeline would have required significant custom engineering in 2024. The Multi-agent Beta brings it within reach for a solo developer today.
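
The coordinator pattern in that session can be approximated on the client side. The sketch below is purely illustrative and does not show how the Multi-agent Beta works internally: the two sub-agents are stubs with hypothetical names, where a real version would call the Grok API and an MCP tool respectively.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_social_sentiment(ticker):
    """Stub sub-agent: a real one would query the Grok API for live X data."""
    return f"[sentiment placeholder for {ticker}]"


def fetch_transcript(ticker):
    """Stub sub-agent: a real one would call a tool exposed by an MCP server."""
    return f"[transcript placeholder for {ticker}]"


def coordinate(ticker, sub_agents):
    """Run the sub-agents in parallel threads and collect their outputs by name."""
    with ThreadPoolExecutor(max_workers=len(sub_agents)) as pool:
        futures = {name: pool.submit(agent, ticker) for name, agent in sub_agents.items()}
        return {name: future.result() for name, future in futures.items()}


def build_synthesis_prompt(ticker, findings):
    """Assemble the coordinator's final prompt from the sub-agent findings."""
    sections = "\n".join(f"{name}: {text}" for name, text in findings.items())
    return f"Summarize sentiment and fundamentals for {ticker}.\n{sections}"


if __name__ == "__main__":
    agents = {"sentiment": fetch_social_sentiment, "transcript": fetch_transcript}
    print(build_synthesis_prompt("NVDA", coordinate("NVDA", agents)))
```

Threads suit this shape because each sub-agent spends most of its time waiting on network calls. The synthesis prompt would then go to the model as one final request.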

Grok CLI Pricing (What You Actually Pay For)

There is no separate pricing for CLI usage.

Costs come from:

- Grok API tokens (input/output)

- Model tier — Grok 4.1 Fast at $0.20/M input for lightweight tasks, Grok 4.20 Multi-agent at $2.00/M for agentic workflows, flagship Grok 4 at $3.00/M for maximum reasoning

The CLI wrapper is free — it’s just a transport layer. Pricing only changes with model choice and usage volume.

For terminal agent work with moderate query loads, Grok 4.1 Fast is worth evaluating before defaulting to the flagship. For multi-agent orchestration, Grok 4.20 at $2.00/M hits a competitive price point — it’s the same input cost as Gemini 3 Pro but with the X ecosystem integration baked in.
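
A quick way to compare tiers is to plug an expected workload into the input prices quoted above. The sketch below covers input tokens only, since output prices are not listed here, and the 50M tokens per month figure is a hypothetical workload.

```python
# Input prices in USD per million tokens, as quoted in this guide.
INPUT_PRICE_PER_M = {
    "Grok 4.1 Fast": 0.20,
    "Grok 4.20 Multi-agent Beta": 2.00,
    "Grok 4": 3.00,
}


def input_cost(model, input_tokens):
    """Estimate input-token cost in USD; output tokens are billed separately."""
    return INPUT_PRICE_PER_M[model] * input_tokens / 1_000_000


if __name__ == "__main__":
    monthly_tokens = 50_000_000  # hypothetical workload
    for model in INPUT_PRICE_PER_M:
        print(f"{model}: ${input_cost(model, monthly_tokens):.2f}")
```

At that volume the input bill comes to $10 on Grok 4.1 Fast, $100 on Grok 4.20, and $150 on Grok 4, which is why the cheaper tier is worth testing first.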

How to Get a Grok API Key

Through xAI developer access:

- Create an xAI developer account at console.x.ai

- Generate an API key

- Store it in environment variables or a secrets manager — not in code

The most common mistake: setting up the key correctly and then committing it to a public repo anyway. Use .gitignore on .env files from the first commit. A pre-commit hook that scans for key patterns (xai- prefix) takes five minutes to set up and prevents a very public mistake.
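
A scanner of that kind can be a few lines of Python. The sketch below assumes keys look like the xai- prefix followed by at least 20 letters, digits, underscores, or hyphens (the exact key format is an assumption), and that the hook receives staged file paths as arguments, as the pre-commit framework passes them.

```python
import re
import sys

# Assumed key shape: the "xai-" prefix followed by a long run of key characters.
KEY_PATTERN = re.compile(r"xai-[A-Za-z0-9_\-]{20,}")


def find_keys(text):
    """Return every substring that looks like an xAI API key."""
    return KEY_PATTERN.findall(text)


def scan_files(paths):
    """Return the paths whose contents contain a key-like string."""
    flagged = []
    for path in paths:
        try:
            with open(path, encoding="utf-8", errors="ignore") as handle:
                if find_keys(handle.read()):
                    flagged.append(path)
        except OSError:
            continue  # skip deleted or unreadable files
    return flagged


if __name__ == "__main__":
    flagged = scan_files(sys.argv[1:])
    for path in flagged:
        print(f"Possible xAI key in {path}", file=sys.stderr)
    if flagged:
        sys.exit(1)  # a non-zero exit status blocks the commit
```

The length floor keeps harmless strings such as xai-sdk from triggering the hook.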

Common Mistakes Developers Make

- Assuming Grok CLI is an official installable tool (it isn’t)

- Ignoring API rate limits until the 429 errors appear in production; add backoff or tenacity before the first real workload

- Calling .json() on responses without checking the status code first; a 429 response body isn’t valid JSON in all SDK versions

- Overbuilding wrappers without caching, which burns through the token budget quickly

- Comparing Grok CLI directly with Claude Code as if they’re the same abstraction layer — they aren’t
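
The status-check mistake in particular is cheap to avoid. The sketch below assumes a chat-completions style response body; parse_reply is a hypothetical helper that takes the HTTP status and raw body text from whatever client the wrapper uses.

```python
import json


def parse_reply(status, body):
    """Return (reply, error); exactly one of the two is None."""
    if status == 429:
        return None, "rate limited: back off and retry"
    if status >= 400:
        return None, f"HTTP {status}: {body[:200]}"
    try:
        data = json.loads(body)
        return data["choices"][0]["message"]["content"], None
    except (json.JSONDecodeError, KeyError, IndexError, TypeError) as error:
        return None, f"unexpected response shape: {error!r}"
```

Returning an error string instead of raising keeps an agent loop alive long enough to decide whether to retry, fall back, or stop.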

FAQs

Q. Does Grok have a CLI like Claude Code?

No, Grok does not have an official CLI like Claude Code.

Instead, developers use the Grok API and build or install community CLI wrappers to interact with Grok models in the terminal.

Q. How do I use Grok in CLI?

To use Grok in a terminal:

- Get access to the Grok API from xAI

- Use HTTP requests or the official SDK (e.g., xai-sdk)

- Wrap it in a CLI script using Python or Node.js

- Add retry/backoff handling to avoid rate limits

This is how most Grok CLI tools work in practice.

Q. Is there a Grok 4 CLI?

No official Grok 4 CLI exists.

The term “Grok 4 CLI” usually refers to custom or open-source tools that use the Grok 4 model via API in a command-line interface.

Q. Can I get a Grok API key for free?

It depends on xAI’s current access model.

Some users may get limited access through developer programs or subscriptions, but there is no guaranteed free public API tier.

Check the official xAI developer console for current availability.

Q. Is Grok CLI better than Gemini CLI?

It depends on your use case.

- Gemini CLI: Easier setup, structured workflows, large context window (great for big codebases)

- Grok CLI: More flexible, supports real-time data integrations (especially from X), but requires manual setup

Choose based on whether you prioritize simplicity or flexibility.

Q. What is Grok CLI?

Grok CLI is not an official tool.

It refers to community-built command-line interfaces that use the Grok API to interact with xAI models.

Developers typically:

- Use Python or Node.js

- Call the Grok API or SDK

- Build terminal-based chat or automation tools

Q. Does Grok CLI support coding tasks?

Yes, but indirectly through the API.

Grok models can generate and explain code, but CLI functionality depends on how the wrapper is built. Advanced coding workflows require custom setup.

Q. How do I install Grok CLI?

There is no official installation package.

To “install” Grok CLI, you need to:

- Set up API access

- Create or download a CLI wrapper

- Run it locally via terminal

Future of Grok CLI (2026 Outlook)

Based on where the ecosystem sits today:

- More official CLI tooling looks likely, probably growing from the Multi-agent Beta

- Stronger integration with X data pipelines, as that becomes the primary differentiation story

- Growth in autonomous terminal agents as the dominant use case — search intent around “CLI” is already shifting toward “agentic CLI.”

The broader shift is worth watching. Grok’s live data access gives it a specific angle in the autonomous agent category that Claude Code and Gemini CLI don’t replicate easily. The question for 2026 and beyond is whether xAI formalizes that into an official product or leaves it to the community to build.


Disclaimer: This guide is based on real-world testing and publicly available information as of 2026. The Grok ecosystem is evolving quickly, so APIs, pricing, and tooling may change. Always check the official xAI documentation before building or deploying anything in production.