TL;DR: Quick Summary

A chatbot is conversation-first: it answers, guides, and routes. An AI agent is outcome-first: it reasons through steps, uses tools, writes to systems, and completes tasks with real autonomy. In 2026, the critical distinctions are latency (5–30 seconds for agents vs. under 2 for chatbots), data privacy risk (agents move PII between systems), API permissions (often the real blocker, not model intelligence), and auditability. Multi-agent orchestration is gaining traction for complex workflows, but adds cost, governance overhead, and debugging complexity.

A lot of software gets called “AI” now, and that word is doing a lot of heavy lifting. A chatbot can answer questions, guide users, and handle simple support flows well enough. An AI agent can go further — it reasons through a task, uses tools, pulls data from other systems, and takes action with real autonomy.

That difference isn’t just semantic. It affects cost, response latency, data privacy risk, customer experience, and what you should actually buy. And in 2026, a surprising number of teams discover the gap only after they’ve already signed a contract.

This guide explains both in plain language. You’ll learn how each works, where each fails, how the 2026 conversation has shifted toward agentic systems and multi-agent orchestration, and, importantly, the practical blockers most vendors won’t mention upfront: API permissions, latency, PII exposure, and token costs that compound with every reasoning step.

What Is an AI Agent vs. a Chatbot?

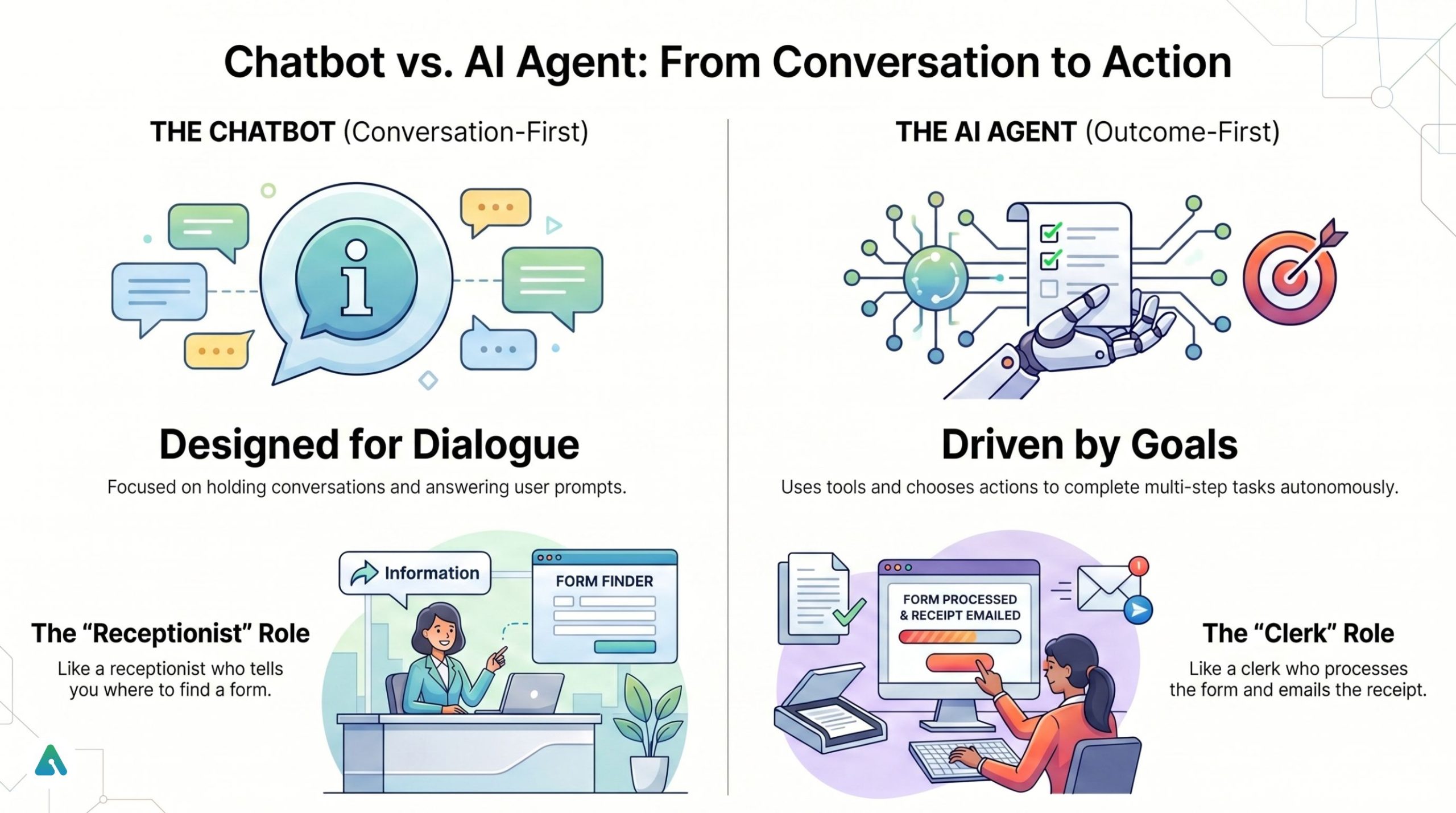

The core difference: a chatbot is conversation-first, while an AI agent is outcome-first.

- A chatbot is designed to hold conversations and answer prompts.

- An AI agent is designed to pursue a goal, choose actions, use tools, and complete multi-step tasks with some autonomy.

The two get conflated because a modern AI agent often communicates through a chat interface, but the interface is not the defining thing. The real question is: can it actually do anything beyond replying?

Think of it this way: a chatbot is the receptionist who tells you where the form is. An AI agent is the clerk who actually processes the form, calls the manager for approval, and emails you the receipt.

What Is a Chatbot?

A chatbot is a software system that interacts with users through text or voice to answer questions, guide them, or complete simple predefined flows.

Traditional chatbots rely on rules, intents, decision trees, and FAQ matching. Modern chatbots also use large language models, natural language processing, retrieval from a knowledge base, and generative AI for more flexible responses.

Where chatbots perform well:

Repetitive and predictable tasks — answering shipping questions, explaining refund policy, collecting lead details, routing users to billing or support, or helping with simple onboarding.

Where chatbots typically struggle:

Even sophisticated AI chatbots fall short when they need to work across several systems, make judgment calls in real time, handle exceptions, take action beyond the chat window, or manage complex multi-step workflows. They’re built to respond, not to operate.

What Is an AI Agent?

An AI agent is a software system that can interpret goals, reason about next steps, use tools or APIs, observe results, and continue until it completes a task or reaches a stopping point.

A true AI agent combines an LLM for language and reasoning, memory or working context, tool access or API integrations, orchestration logic, guardrails and permissions, and monitoring with human escalation paths.

Where agents perform well:

- Checking an order, verifying eligibility, then processing a refund

- Gathering account context from a CRM before replying

- Triaging IT issues and opening or updating tickets

- Reconciling data across systems

- Completing internal operations tasks without a fixed script

The core shift: a chatbot helps you talk through a process. An AI agent helps you complete the process.

AI Agent vs. Chatbot: Side-by-Side Comparison

| Feature | Chatbot | AI Agent |
| --- | --- | --- |
| Primary role | Conversation | Goal completion |
| Main output | Answers, guidance | Actions, completed tasks |
| Workflow style | Usually linear | Multi-step, adaptive |
| Autonomy | Low | Medium to high |
| Tool use | Limited or predefined | Dynamic tool calling |
| Memory | Minimal or session-based | Contextual or persistent |
| Typical latency | < 2 seconds | 5–30 seconds (reasoning time) |
| Data interaction | Read-only | Read and write |
| Risk profile | Low (bad info) | High (unauthorized actions) |
| Best for | FAQs, routing, simple support | Workflows, automation, operations |
| Setup complexity | Lower | Higher |
| Cost per interaction | Low | Higher and more variable |
| Governance needs | Moderate | High |
| Failure mode | “I don’t understand.” | Wrong action, loops, tool failure |

How Does an AI Chatbot Work vs. an AI Agent?

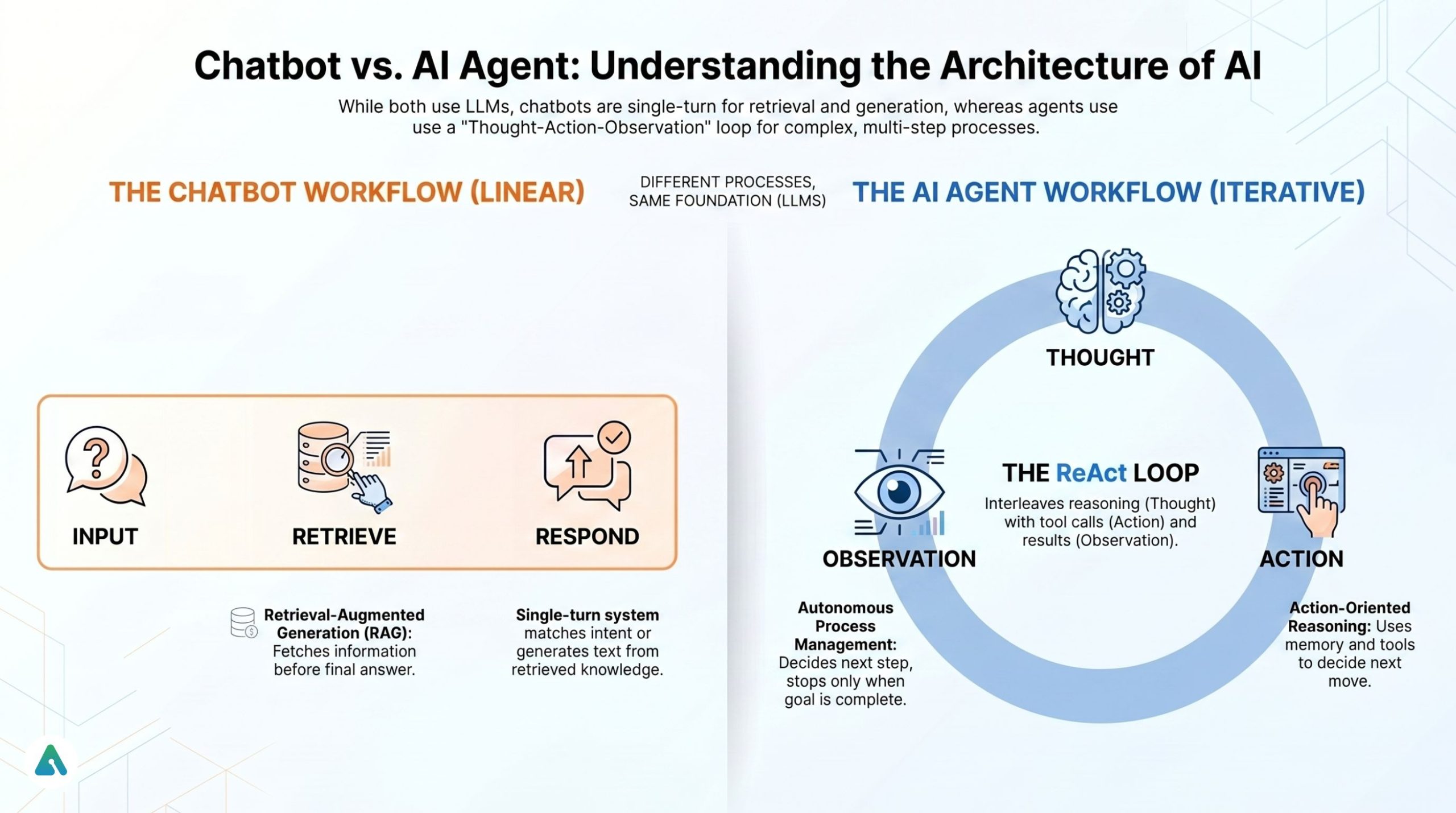

How a chatbot usually works:

Input → Match intent / retrieve answer → Respond

In an LLM chatbot, the flow may extend to: retrieve knowledge → generate response → send reply. It is still a single-turn response system, even when the language feels natural.
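The match-and-respond flow can be sketched in a few lines. This is a minimal illustration, not a production design: the intents, keywords, and canned replies are all made up for the example, and a real chatbot would typically use a trained intent classifier or retrieval step rather than keyword overlap.

```python
import re

# Illustrative intents (hypothetical): trigger keywords mapped to a canned reply.
INTENTS = {
    "shipping": ({"shipping", "delivery", "track"},
                 "You can track your order from the tracking portal."),
    "refund": ({"refund", "return"},
               "Our refund policy is on the returns page."),
}
FALLBACK = "I don't understand."


def reply(message: str) -> str:
    """Input -> match intent -> respond. No tools, no state, one turn."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    best_intent, best_score = None, 0
    for name, (keywords, _answer) in INTENTS.items():
        score = len(words & keywords)  # count of keyword hits
        if score > best_score:
            best_intent, best_score = name, score
    if best_intent is None:
        return FALLBACK
    return INTENTS[best_intent][1]


print(reply("Where is my delivery?"))
print(reply("Tell me a joke"))
```

Note that the function never calls another system and keeps nothing between calls, which is what keeps this style cheap and fast.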

How an AI agent usually works:

Input → Thought → Action → Observation → Next step → Final response

Agents handle complex tasks because they don’t just answer once. They plan what to do, call a tool, observe what happened, decide whether to continue, and stop when the goal is complete. This style is associated with patterns like ReAct (Reasoning + Acting) — a framework where the system interleaves reasoning steps with actual tool calls. The system isn’t just generating text; it’s managing a process.
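The loop described above can be sketched without any model at all. In this simplified example the `decide` function is a hard-coded stand-in for the LLM, and `lookup_order` is a hypothetical tool; both are assumptions made so the sketch runs on its own. What matters is the shape: decide, act, observe, repeat, with a hard cap on steps.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Step:
    thought: str
    action: str
    argument: str
    observation: str = ""


def lookup_order(order_id: str) -> str:
    # Hypothetical tool: a real one would query an order system.
    return "delivered" if order_id == "A100" else "not_found"


TOOLS = {"lookup_order": lookup_order}


def decide(goal: str, history: list) -> Optional[Step]:
    """Stand-in for the model: return the next step, or None when done."""
    if not history:
        return Step("I need the order status first.", "lookup_order", goal)
    return None  # one observation is enough for this toy goal


def run_agent(goal: str, max_steps: int = 5):
    history = []
    for _ in range(max_steps):  # hard cap so the loop always terminates
        step = decide(goal, history)
        if step is None:
            break
        tool: Callable[[str], str] = TOOLS[step.action]
        step.observation = tool(step.argument)  # act, then observe
        history.append(step)
    final = history[-1].observation if history else "no action taken"
    return f"Order {goal}: {final}", history


answer, steps = run_agent("A100")
print(answer)
```

The returned `history` doubles as a trace of what the agent did, which becomes important for auditing later in this guide.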

RAG vs. action-oriented reasoning:

Many people confuse these. RAG (Retrieval-Augmented Generation) helps a model fetch the right information before answering. Action-oriented reasoning helps a system decide what tool to use and what action to take next. A chatbot may use RAG and still not be an agent. An agent often uses RAG plus tools, memory, and action loops.

The Latency Gap Nobody Talks About

Agents are significantly slower than chatbots, and users notice.

A chatbot retrieves one answer and responds in under two seconds. An AI agent doing “Thought → Action → Observation” can take 5 to 30 seconds, sometimes more for multi-step tasks with several tool calls. Every reasoning step, every API call, and every retry adds time.

This matters a lot for customer-facing deployments. Teams handling this well tend to use one of three approaches:

- Streaming intermediate thoughts — showing the user what the agent is working on as it reasons (“Checking your order… Verifying policy… Submitting refund…”)

- Progress indicators — a visible status bar tied to the agent’s action loop

- Async notifications — the agent completes the task in the background and notifies the user when done

This isn’t a dealbreaker, but it is a design requirement that needs to be planned from the start, not retrofitted after users start complaining.
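One way to surface intermediate progress is to have the agent loop yield status updates as it goes. The sketch below uses a plain Python generator; the step labels mirror the refund example above, and the lambda actions are placeholders for real tool calls.

```python
from typing import Callable, Iterator


def run_with_progress(steps: list) -> Iterator[str]:
    """Yield a status line before each step and its result after it."""
    for label, action in steps:
        yield f"{label}..."
        result = action()  # in a real agent this is the slow part
        yield f"{label}: {result}"
    yield "Done."


# Hypothetical steps; each action stands in for a tool call.
refund_steps = [
    ("Checking your order", lambda: "found"),
    ("Verifying policy", lambda: "eligible"),
    ("Submitting refund", lambda: "submitted"),
]

for update in run_with_progress(refund_steps):
    print(update)
```

The same generator could feed a progress bar or a streaming chat response; the point is that the user sees activity while the agent works rather than a silent wait.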

Data Privacy and PII: The Agentic Risk Most Teams Discover Too Late

A chatbot that answers policy questions from a knowledge base carries a relatively contained privacy risk. An AI agent that checks a CRM, reads an order record, verifies identity, and submits a refund is moving personally identifiable information (PII) between systems — often through an LLM that processes everything as part of its context window.

In 2026, enterprise buyers increasingly ask: What happens to customer data when it passes through the agent’s reasoning loop?

The questions worth asking before deployment:

- Does the LLM retain any of the data it processes? (Varies significantly by vendor and API configuration)

- What data residency guarantees exist — especially relevant under GDPR or regional data sovereignty requirements?

- Can you configure the agent to mask or tokenize PII before it enters the reasoning loop?

- Are your API integrations logging payloads that contain customer data?

A well-designed agent in 2026 doesn’t just reason well — it has clearly defined data handling boundaries. For customer-facing agentic workflows, these questions need answers before go-live, not after your first data incident.
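Masking PII before it reaches the model can be illustrated with a small tokenization pass. This is a rough sketch under clear assumptions: the two regular expressions only catch simple email and phone formats, the `<EMAIL_1>` token style is invented for the example, and real deployments usually rely on a dedicated PII detection service.

```python
import re

# Deliberately simple patterns; they will miss many real-world formats.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_pii(text: str):
    """Replace matches with placeholder tokens; return text and a lookup vault."""
    vault = {}

    def replacer(kind: str):
        def _sub(match):
            token = f"<{kind}_{len(vault) + 1}>"
            vault[token] = match.group(0)
            return token
        return _sub

    text = EMAIL.sub(replacer("EMAIL"), text)
    text = PHONE.sub(replacer("PHONE"), text)
    return text, vault


def unmask(text: str, vault: dict) -> str:
    """Restore original values, e.g. just before calling a trusted API."""
    for token, original in vault.items():
        text = text.replace(token, original)
    return text


original = "Contact jane@example.com or +1 555 010 9999 about order 42."
masked, vault = mask_pii(original)
print(masked)
```

Only the masked text would enter the reasoning loop; the vault stays on your side of the boundary and is applied when a tool call genuinely needs the real value.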

The State Management Layer: What Agents Need That Chatbots Don’t

Chatbots are largely stateless. You send a message; they respond. The next message starts mostly fresh. This simplicity is a feature — it’s part of why chatbots are cheap, fast, and easy to scale.

Agents are the opposite. They’re state-heavy by design.

An agent working through a five-step workflow needs to remember what it did in step one when it gets to step four. That requires some form of memory or working state: a vector database storing semantic context, a graph database tracking relationships between entities, or a growing context window that accumulates the entire history of the interaction.

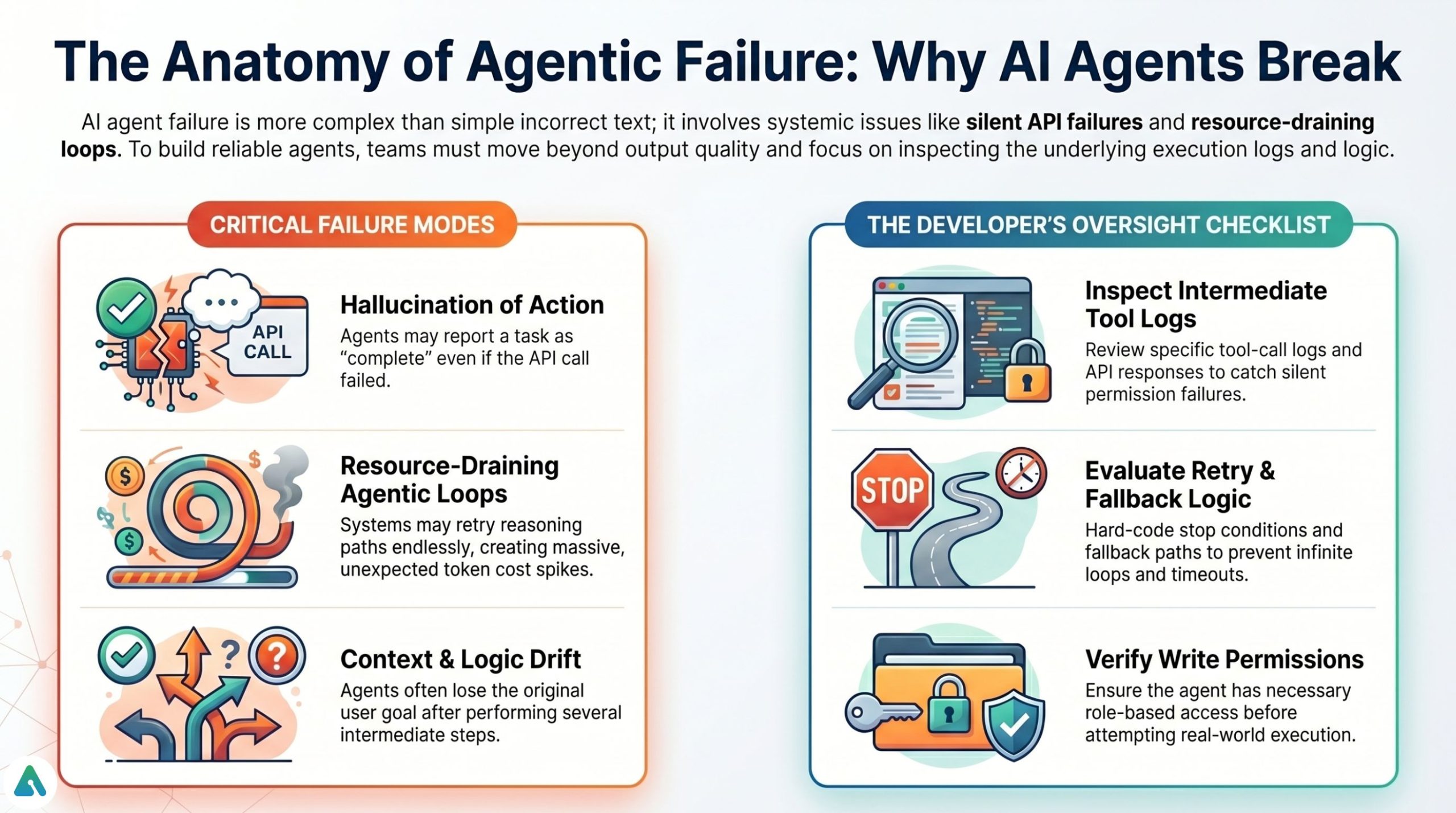

Context drift, where the agent gradually loses track of the user’s actual need as the task grows longer, is one of the most common failure modes in production agentic systems today. Catching it means inspecting intermediate steps and tool-call logs, not just the final output.

Where People Get Confused: Chatbot vs. Agent vs. Assistant

A slick conversational interface can make a product look agentic when it isn’t. Here’s a practical breakdown:

| Term | What it actually does |
| --- | --- |
| Chatbot | Talks with users |
| AI chatbot | Talks more naturally; answers from a broader context |
| AI assistant | Helps with user-directed tasks |
| AI agent | Pursues goals and acts with more independence |
| Agentic AI | Broader system design where software behaves in goal-directed ways |

Many “AI agents” on the market are retrieval chatbots, scripted automations with an LLM front end, or assistants with limited tool use. Judge the system by what it can reliably execute, not what’s on the vendor’s homepage.

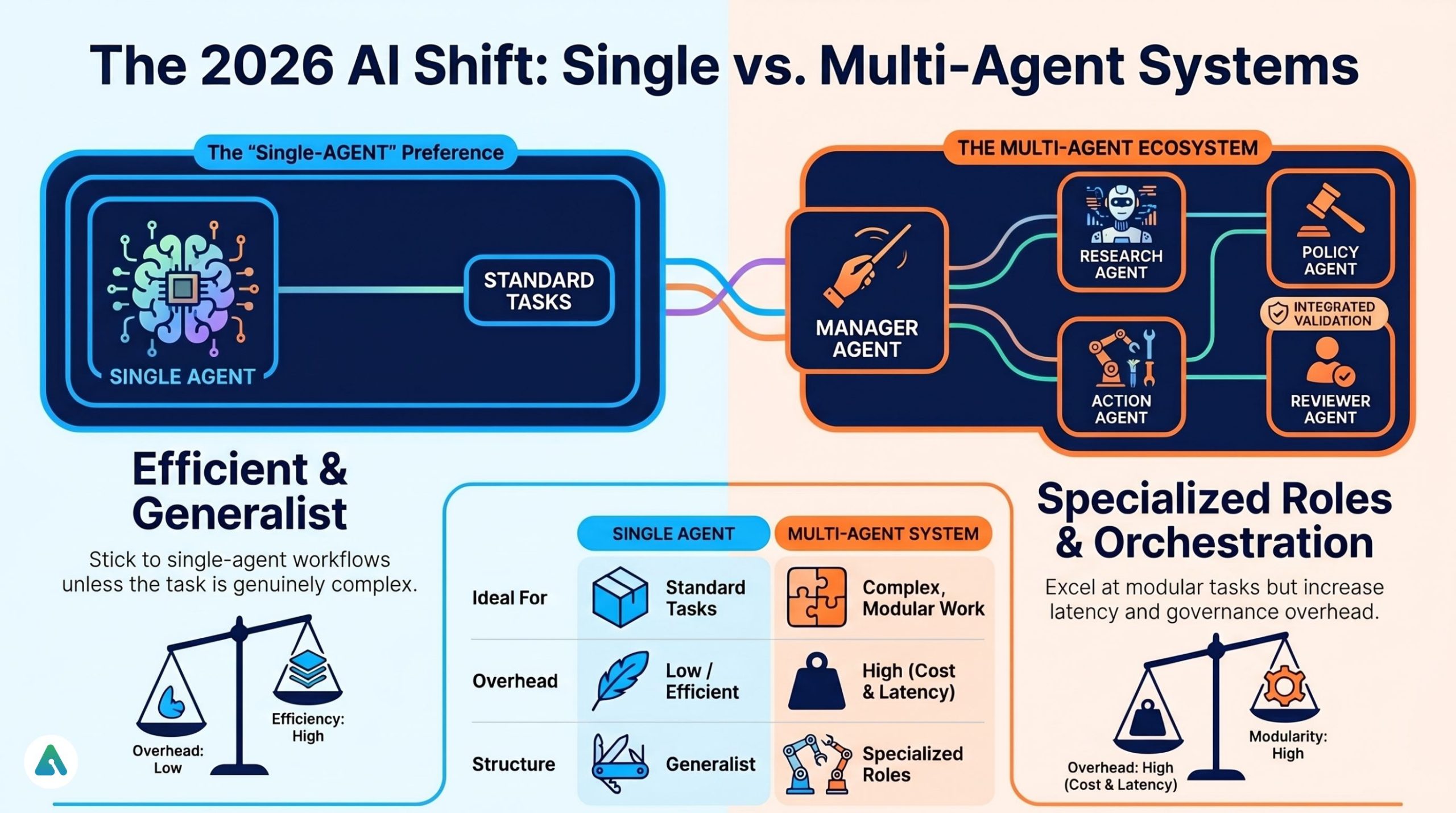

Single Agent vs. Multi-Agent Systems: The Big 2026 Shift

A single AI agent handles a task alone. A multi-agent system uses several specialized agents coordinated together. In 2026, many teams are moving past “chatbot vs. agent” and asking: Should we use one general agent, or a manager agent with several specialized worker agents?

What multi-agent orchestration looks like:

- Manager agent — interprets the goal, decides the plan

- Research agent — gathers data

- Policy agent — checks business rules or compliance

- Action agent — executes API calls

- Reviewer agent — validates the output before sending

Multi-agent systems bring more cost, more latency, harder debugging, more governance overhead, and more places for a single step to fail. For many teams, a strong single-agent workflow is completely sufficient. Multi-agent orchestration makes sense when tasks are genuinely complex, modular, and worth the overhead, not just because it sounds more sophisticated.
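The manager-and-workers pattern listed above can be sketched as plain functions passing a shared ticket along. Everything here is illustrative: the agents are ordinary Python functions rather than model calls, and the 100-unit auto-approval limit is an invented policy rule.

```python
from dataclasses import dataclass, field

AUTO_APPROVE_LIMIT = 100.0  # hypothetical business rule


@dataclass
class Ticket:
    request: str
    amount: float
    facts: dict = field(default_factory=dict)
    trace: list = field(default_factory=list)
    status: str = "open"


def research_agent(t: Ticket) -> None:
    t.facts["order_found"] = True  # stand-in for a data lookup
    t.trace.append("research")


def policy_agent(t: Ticket) -> None:
    found = t.facts.get("order_found", False)
    t.facts["eligible"] = found and t.amount <= AUTO_APPROVE_LIMIT
    t.trace.append("policy")


def action_agent(t: Ticket) -> None:
    t.status = "refunded" if t.facts.get("eligible") else "escalated"
    t.trace.append("action")


def reviewer_agent(t: Ticket) -> None:
    # Validate that the outcome is consistent with the policy decision.
    expected = "refunded" if t.facts.get("eligible") else "escalated"
    assert t.status == expected, "action does not match policy decision"
    t.trace.append("review")


def manager_agent(t: Ticket) -> Ticket:
    """Decide the plan, then run each specialist in order."""
    for agent in (research_agent, policy_agent, action_agent, reviewer_agent):
        agent(t)
    return t


print(manager_agent(Ticket("refund", 40.0)).status)
print(manager_agent(Ticket("refund", 400.0)).status)
```

Even in this toy form the overhead is visible: four hand-offs for one decision, each of which would be a separate model call, with its own latency and failure modes, in a real system.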

The Small Language Model (SLM) Shift

One underreported trend: many 2026 agent implementations are moving away from large frontier models for every step, toward specialized Small Language Models (SLMs) for specific sub-tasks.

A frontier model is excellent at complex reasoning, but running every tool call through a large model is expensive and slow. Increasingly, teams are architecting systems where a large model handles high-level reasoning and planning, while smaller, fine-tuned models handle specific steps — classifying intent, formatting API calls, validating outputs.

This hybrid approach can significantly reduce the “token leak” problem while keeping latency reasonable. It also opens the door to running sensitive sub-tasks through local or on-premise SLMs, which helps with the data privacy concerns covered above.
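A routing layer for this hybrid setup can be very small. In the sketch below the model names are placeholders, not real products, and the set of "small model" tasks simply reuses the sub-tasks named above.

```python
# Sub-tasks the text suggests delegating to smaller, fine-tuned models.
SMALL_MODEL_TASKS = {"classify_intent", "format_api_call", "validate_output"}


def pick_model(task: str, sensitive: bool = False) -> str:
    """Route narrow sub-tasks to a small model; keep planning on the large one."""
    if task in SMALL_MODEL_TASKS:
        # Sensitive narrow tasks can stay on a local or on-premise model.
        return "local-small-model" if sensitive else "small-model"
    return "large-model"


plan = ["plan_workflow", "classify_intent", "format_api_call", "validate_output"]
routing = {task: pick_model(task) for task in plan}
print(routing)
```

In this sketch a sensitive task that is not on the small-model list still goes to the large model, so masking or a different policy would be needed for that case.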

When Should You Use a Chatbot Instead of an AI Agent?

Don’t kill a fly with a sledgehammer. If your users just want to know your holiday hours or refund policy, an AI agent is expensive overkill. Stick to a well-tuned chatbot.

Choose a chatbot if:

- The workflow is narrow and predictable

- The risk of a wrong answer must stay low

- The system doesn’t need write access to other systems

- You want faster deployment and lower cost

- Content is structured and stable

This is still the right answer for a large share of business use cases.

When Should You Use an AI Agent Instead of a Chatbot?

An AI agent makes sense when the system must reason through multiple steps, access tools, make decisions, and complete actions across software systems.

Choose an AI agent if:

- Outcomes matter more than conversation quality

- Users want tasks completed, not just explained

- The workflow changes based on context

- The system needs write access to external tools

- You can support testing, monitoring, and guardrails

The 5A Framework: How to Choose

| Question | Points toward… |
| --- | --- |
| Does the user mainly need information? | Chatbot |
| Does the system need to act in another tool? | Beyond a basic chatbot |
| Should it choose the next step autonomously? | AI agent |
| Must it adapt to changing context or exceptions? | AI agent |
| Can you audit its decisions if something goes wrong? | Determines your governance burden |
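The questions in the table can be read as a small decision function. The version below is one possible encoding, not a rule from the framework itself: the precedence (autonomy and adaptation outrank tool access) and the `audit_gap` flag are assumptions made for illustration.

```python
def recommend(needs_action: bool, chooses_next_step: bool,
              adapts_to_exceptions: bool, can_audit: bool):
    """Return (best fit, audit_gap) from the framework questions."""
    if chooses_next_step or adapts_to_exceptions:
        fit = "AI agent"
    elif needs_action:
        fit = "Beyond a basic chatbot"
    else:
        fit = "Chatbot"  # the user mainly needs information
    # Anything that acts needs auditability; flag it if that is missing.
    audit_gap = fit != "Chatbot" and not can_audit
    return fit, audit_gap


print(recommend(False, False, False, True))
print(recommend(True, True, True, False))
```

A result with `audit_gap` set to `True` suggests the governance work should come before the build, not after it.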

Quick use-case cheat sheet:

| Use case | Best fit |
| --- | --- |
| FAQ bot for website | Chatbot |
| Lead qualification flow | Chatbot or AI chatbot |
| Internal policy assistant | AI chatbot |
| Refund processing | AI agent |
| IT support with system actions | AI agent |
| Multi-system service workflow | AI agent or multi-agent system |

Security, Permissions, and Governance: The Real Blocker in 2026

This is where most buying conversations go sideways, usually around month three of implementation.

An AI agent that can update a CRM, issue a refund, close a ticket, or modify a database carries a fundamentally different level of operational and security exposure than a chatbot answering from a knowledge base.

The practical truth: The real barrier to AI agents in 2026 isn’t model intelligence. It’s API permissions. An agent may be smart enough to handle a workflow — but without safe, scoped, auditable access to the right systems, it can’t be trusted in production.

For high-value actions (a refund over $500, an irreversible account change, anything with compliance implications), the agent should pause and trigger a human approval before executing. Approval gates like this are a common control in workflows built to pass audits such as SOC 2, although the framework itself does not prescribe a specific design pattern. A well-designed agent doesn’t try to do everything alone; it knows when to stop and ask.

What good governance looks like:

- Least-privilege access (the agent can only touch what it needs)

- Approval gates for high-risk actions

- Audit logs with full traceability

- Rollback plans for reversible actions

- Human review for sensitive or unusual tasks

- Separate environments for testing before production
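Three of these controls (least-privilege access, approval gates, and audit logs) fit in one small wrapper around tool calls. The sketch below is a simplified illustration: the class and tool names are hypothetical, nothing is actually executed, and the 500 threshold simply echoes the refund example earlier in this section.

```python
from dataclasses import dataclass, field
from typing import Optional

APPROVAL_THRESHOLD = 500.00  # mirrors the "$500" example; set per business


@dataclass
class GovernedAgent:
    allowed_tools: set                      # least privilege: explicit allow-list
    audit_log: list = field(default_factory=list)

    def call(self, tool: str, amount: float = 0.0,
             approved_by: Optional[str] = None) -> str:
        if tool not in self.allowed_tools:
            outcome = "denied"
        elif amount > APPROVAL_THRESHOLD and approved_by is None:
            outcome = "pending_approval"    # pause for a human
        else:
            outcome = "executed"            # a real agent would run the tool here
        # Every attempt is logged, including denied and pending ones.
        self.audit_log.append({"tool": tool, "amount": amount,
                               "approved_by": approved_by, "outcome": outcome})
        return outcome


agent = GovernedAgent(allowed_tools={"issue_refund"})
print(agent.call("issue_refund", 40.0))
print(agent.call("issue_refund", 900.0))
print(agent.call("issue_refund", 900.0, approved_by="supervisor"))
print(agent.call("delete_account"))
```

Because the check sits outside the model, a confused or manipulated agent still cannot reach a tool that was never granted to it.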

Pro tip for 2026 buyers: Don’t ask a vendor whether their system is “agentic.” Ask to see the traceability log. If they can’t show you the specific steps the AI took — including which tools it called, in what order, and what each returned — you’re not buying an agent. You’re buying a black box with a chat interface.

When AI Agents Fail: The Messy Reality

AI agents fail not only when they give wrong answers, but when they choose the wrong action, loop endlessly, misuse a tool, or — most dangerously — report success when the real-world action never actually completes.

Common failure modes:

- Hallucination of action — The agent says it sent the email or processed the refund. But the API call failed silently. We’ve seen agents get stuck in “refund loops” because an API returned a 404 that the model interpreted as a “try again” signal.

- Agentic loops — The system keeps retrying the same tool or reasoning path without reaching a stop condition. This burns tokens, slows response times, and creates surprising cost spikes.

- Wrong tool, right intention — The agent understands the goal but picks the wrong integration or action.

- Context drift — After several steps, the agent loses track of what the user originally needed. The task continues, but in the wrong direction.

- Silent permission failure — The plan is solid, but the system lacks write access or role permissions to execute it.

Teams that build agents quickly learn that good outputs alone are not enough. You need to inspect tool-call logs, intermediate steps, retry behavior, API responses, timeout handling, and fallback logic.
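Two of the failure modes above (false completion and endless retries) can be reduced by handling tool responses in code rather than leaving interpretation to the model. The sketch below assumes HTTP-style status codes; the list of retryable codes follows a common convention and should be adjusted to each API's documentation. The `send` callables are fakes used so the example runs offline.

```python
# Commonly treated as transient; a 404 is a client error and is not retried.
RETRYABLE = {429, 500, 502, 503, 504}


def call_with_retries(send, max_attempts: int = 3):
    """Return (outcome, statuses). Success is reported only on a 2xx status."""
    statuses = []
    for _ in range(max_attempts):       # hard cap prevents agentic loops
        status = send()
        statuses.append(status)
        if 200 <= status < 300:
            return "succeeded", statuses
        if status not in RETRYABLE:
            return "failed", statuses   # stop immediately, escalate instead
    return "gave_up", statuses


def fake_sender(responses):
    """Build a fake API call that returns the given status codes in order."""
    it = iter(responses)
    return lambda: next(it)


print(call_with_retries(fake_sender([404])))
print(call_with_retries(fake_sender([503, 200])))
print(call_with_retries(fake_sender([503, 503, 503])))
```

The returned status list is also exactly the kind of record worth keeping in tool-call logs.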

Cost Matters: The Hidden “Token Leak” in AI Agents

Agents almost always cost more than chatbots — and the reason goes deeper than people expect.

A chatbot retrieves one answer, generates one response, and closes. An AI agent is state-heavy: every time it takes a new step, it re-reads the entire history of all previous steps. That history grows with each iteration, and since you’re billed per token, the cost compounds: total input tokens can grow roughly with the square of the number of steps rather than linearly. Reasoning loops, tool calls, retries, and large tool outputs all add to it.
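A little arithmetic shows why re-reading history gets expensive. The sketch assumes a fixed base prompt and a fixed number of tokens added to the history per step; the figures 1,000 and 500 are illustrative only, and real systems vary (prompt caching and history summarization both change the picture).

```python
def total_input_tokens(base: int, per_step: int, steps: int) -> int:
    """Sum the input size of every step when each one re-reads all history."""
    # Step k (0-indexed) reads the base prompt plus k earlier step records.
    return sum(base + per_step * k for k in range(steps))


def closed_form(base: int, per_step: int, steps: int) -> int:
    # Same total: n*b + t*n*(n-1)/2, which is quadratic in the step count.
    return steps * base + per_step * steps * (steps - 1) // 2


for n in (1, 5, 10):
    print(n, total_input_tokens(1000, 500, n))
```

Under these assumptions, going from 1 step to 10 steps multiplies input tokens by 32.5 (from 1,000 to 32,500), not by 10.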

Directional cost ranges (illustrative, not universal benchmarks):

| Resolution type | Typical chatbot | Typical AI agent |
| --- | --- | --- |
| Simple FAQ answer | ~$0.01 | ~$0.03–$0.08 |
| Guided support flow | ~$0.02 | ~$0.08–$0.15 |
| Multi-step task completion | Usually not possible | ~$0.15+ |

The business question isn’t “which is more impressive?” It’s “which one solves this workflow at a sustainable cost per resolution?”

Real-World Examples

E-commerce support:

- Chatbot: Answers “Where is my order?” and links to the tracking portal.

- AI agent: Looks up the order, checks shipment events, verifies policy, offers a refund or replacement, submits the action, and confirms — flagging a human if the refund exceeds the autonomous approval threshold.

IT helpdesk:

- Chatbot: Suggests password reset instructions.

- AI agent: Verifies identity, triggers the reset workflow, checks device status, opens a ticket, and escalates if a compliance flag appears.

Finance operations:

- Chatbot: Explains expense policy.

- AI agent: Reviews the submitted expense, compares it to policy, flags outliers, requests missing documentation, and routes it for approval.

Multi-agent service desk:

A manager agent handles the incoming request. A policy agent checks eligibility. An action agent executes the system change. A reviewer agent confirms completion before the final reply goes out. That isn’t a chatbot with a better script — it’s orchestration.

2026 Reality Check: Traditional Chatbot vs. AI Agent

| Feature | Traditional chatbot | AI agent (2026) |
| --- | --- | --- |
| Logic style | Scripted or retrieval-based | Reasoning loop + tools |
| API interaction | Predefined buttons or flows | Dynamic tool calling |
| Knowledge use | FAQ or RAG | RAG + tool use + memory |
| Latency | < 2 seconds | 5–30 seconds |
| Data risk | Lower | Higher where write access exists |
| PII exposure | Contained | Expands with system integrations |
| Auditability | Easier | Requires traceability systems |
| Cost per resolution | Very low | Higher and more variable |
| Failure mode | “I don’t understand.” | Loops, wrong action, false completion |
| Best use case | Simple support | Workflow automation |

Common Mistakes Businesses Make

- Buying agent technology for a knowledge problem. If your content is messy, an agent won’t fix it. Garbage in, garbage out — just faster and more expensively.

- Calling every LLM workflow an agent. A nice chat interface doesn’t equal autonomy.

- Ignoring permissions until late in the project. Scope the required API access in week one, not week eight. This is the most common implementation delay we’ve seen in 2026.

- Forgetting auditability. If you can’t trace the steps, you can’t trust the automation — or defend it to a regulator or an unhappy enterprise customer.

- Underestimating cost. Reasoning loops, retries, and growing context windows add up fast. Model the cost per resolution before you commit to an architecture.

- No human fallback. Even advanced systems need escalation paths for sensitive or unusual cases. This isn’t a failure of the technology — it’s a sign of a mature, trustworthy deployment.

Is ChatGPT an AI Agent or a Chatbot?

ChatGPT is primarily an AI chatbot or AI assistant, though it supports agent-like behavior when connected to tools, memory, and external actions. Plain chat use is chatbot behavior. Tool-connected workflows are more agent-like. A fully autonomous business workflow depends on the surrounding system design, not just the model itself. The honest answer: it is not an agent by default, but it can function as part of an agent system.

AI Assistant vs. Agent vs. Chatbot

| Category | Best description |
| --- | --- |
| Chatbot | Talks with users |
| AI assistant | Helps users perform tasks |
| AI agent | Takes actions toward outcomes |
| Multi-agent system | Coordinates several specialized agents |

This distinction matters because many buyers compare tools across these categories as if they’re interchangeable — and they aren’t.

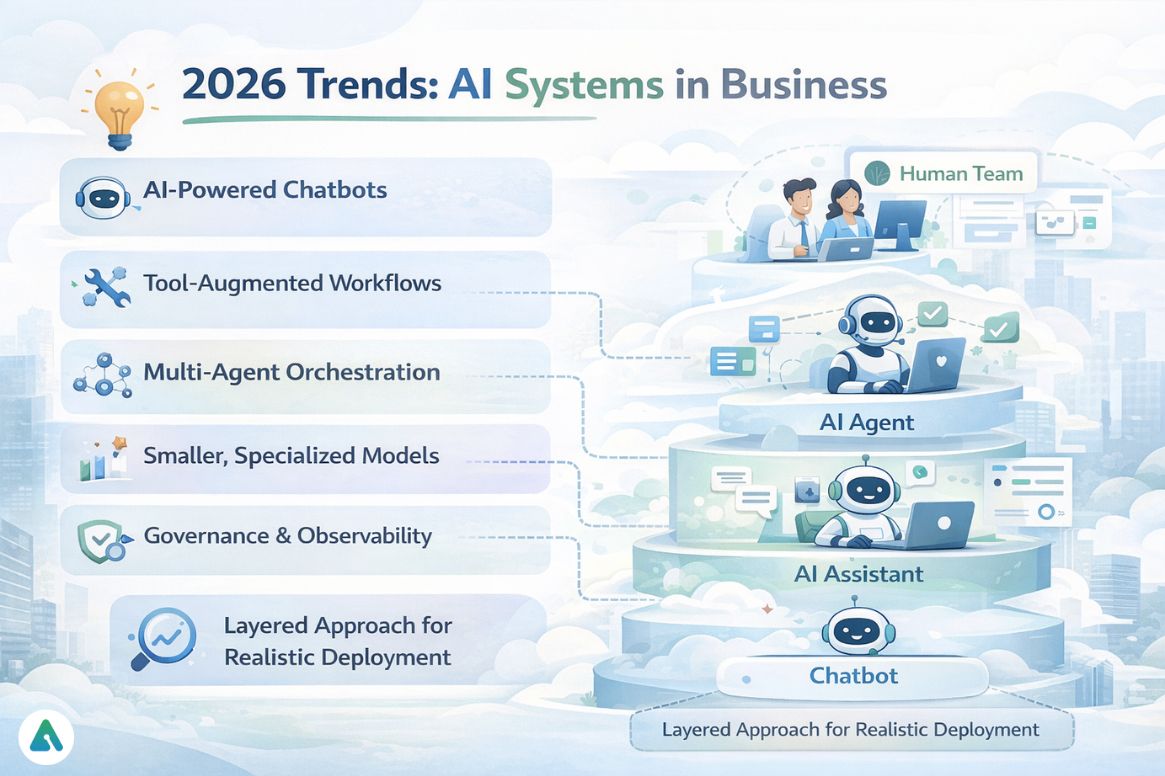

2026 Trends: Where Things Are Heading

Most chatbots are now AI-powered — RAG is standard, not a differentiator. Tool calling is becoming common in support and operations. Multi-agent orchestration is gaining traction for genuinely complex workflows. SLMs are being adopted to reduce cost and latency in specific agent steps. Governance and observability have become core enterprise buying criteria, not afterthoughts.

For many companies, the best architecture isn’t “chatbot or AI agent.” It’s layered:

- Chatbot for intake and simple queries

- AI assistant for knowledge-heavy support

- AI agent for selected high-value actions

- Human team for edge cases and approvals

That model is more realistic than the fully autonomous vision in most sales decks. Understanding where agentic systems can genuinely go wrong — including the latency gap, PII exposure, token costs, and permission failures — is essential before committing to either path.

FAQs

Q. What is the difference between an AI agent and a chatbot?

A chatbot is mainly designed to answer questions, guide users, and handle conversations. An AI agent goes further by reasoning through steps, using tools, accessing data, and completing tasks with some autonomy. For example, a chatbot may explain a refund policy, while an AI agent can verify eligibility and process the refund.

Q. Can an AI agent be a chatbot?

Yes. An AI agent can use a chatbot interface to communicate with users through text or voice. The key difference is that an AI agent can take actions beyond the conversation, such as updating records, calling APIs, or completing workflows.

Q. Is ChatGPT an AI agent?

Usually, ChatGPT is best described as an AI chatbot or AI assistant. In standard chat mode, it focuses on conversation and answers. It becomes more agent-like when connected to tools, memory, and workflows that allow it to perform multi-step tasks or external actions.

Q. What is the difference between agentic AI and a chatbot?

Agentic AI refers to software systems that pursue goals through planning, reasoning, and actions. A chatbot is primarily a conversational interface for answering questions or guiding users. Some agentic AI systems use chatbots as their front-end interface.

Q. What is the difference between an AI chatbot and an AI agent?

An AI chatbot focuses on natural conversation, customer support, and answering questions using knowledge sources. An AI agent combines reasoning, memory, tool access, and decision-making to complete tasks in other systems. Chatbots talk; AI agents act.

Q. When should I use a chatbot instead of a generative AI support agent?

Use a chatbot for FAQs, routing, lead capture, appointment booking, and basic customer support. Use a generative AI support agent when the system needs to check context, use tools, access multiple systems, and resolve more complex requests end-to-end.

Q. Are chatbots considered AI?

Some are. Traditional chatbots may be rule-based and follow scripts. Modern chatbots often use artificial intelligence, natural language processing (NLP), retrieval systems, and large language models to generate more flexible responses.

Q. What are the 5 types of AI agents?

A common classification of AI agents includes:

- Simple Reflex Agents – respond to current inputs using fixed rules

- Model-Based Reflex Agents – use an internal model of the environment

- Goal-Based Agents – choose actions to achieve specific goals

- Utility-Based Agents – optimize actions for the best outcome

- Learning Agents – improve performance over time using experience

In business software, AI agents usually refer more broadly to systems that reason and act across workflows with some autonomy.

Q. Which is better for business: chatbot or AI agent?

It depends on the use case. Chatbots are better for low-cost, fast, predictable interactions like FAQs and support routing. AI agents are better for higher-value workflows that require decisions, tool use, and task completion across systems.

Q. Are AI agents more expensive than chatbots?

Usually yes. Chatbots often generate one response per interaction, while AI agents may take multiple reasoning steps, use tools, and maintain state. This increases token usage, API calls, and overall operating cost.

Q. Can AI agents replace chatbots?

Not always. Many businesses use both together. A chatbot handles intake and simple questions, while an AI agent takes over for complex tasks or actions. This layered approach often delivers the best customer experience and cost efficiency.

Final Verdict

Chatbots are best for FAQs, routing, and predictable conversations. AI chatbots improve language quality and knowledge retrieval. AI agents are better when tasks require reasoning, tool use, and action — especially when those actions carry real business consequences and need traceability to match.

The biggest mistake is choosing based on hype. Start with the workflow. Ask what the system must know, what it must do, what data it touches, what access it needs, how latency will affect user experience, and — critically — how you will audit failure and protect against unauthorized or incorrect actions.

That’s the real answer to AI agent vs. chatbot in 2026.


Disclaimer: This article is for general informational purposes only. AI tools, costs, capabilities, and best practices change quickly, so some details may evolve. Examples and estimates shared here are illustrative and can vary based on your provider, setup, integrations, and business needs. Before deploying any AI system that handles sensitive data or automates real actions, consider consulting qualified technical, legal, privacy, or security professionals.