Inside xAI’s reasoning model: a 2M-token context window, MCP agent support, and a 1483 LMArena Elo score.

Many developers who test AI models professionally ignored Grok 4.1 when it launched in November 2025.

Another reasoning model? Another company claiming “breakthrough performance”?

Then, engineers actually started using it. The conversation shifted fast.

Artificial intelligence models are evolving so quickly that a model released just a year ago can already feel outdated. Grok 4.1 marked one of the most significant upgrades in the xAI ecosystem. Rather than simply offering a faster chatbot, the release introduced a reasoning-focused system built for extremely long contexts, agent workflows, and complex analytical tasks.

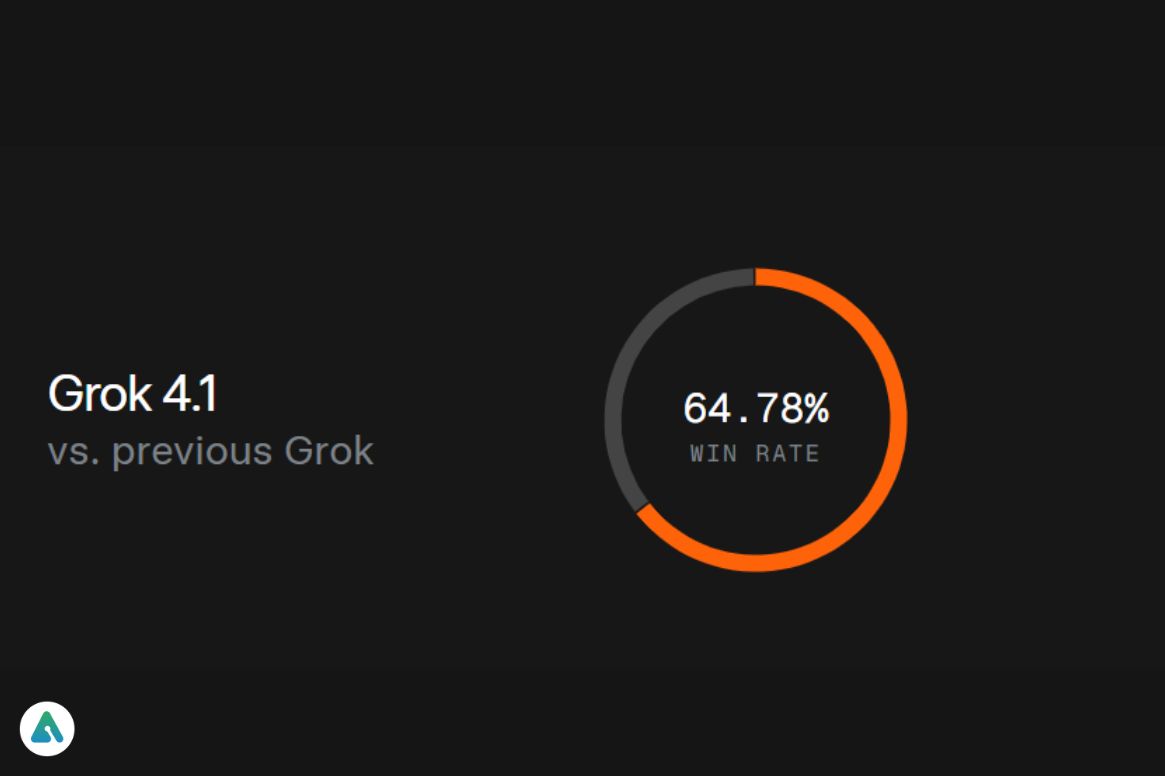

Early benchmarks quickly changed that perception. Grok-4.1-Thinking briefly reached an Elo score of about 1483 on LMArena, which put it among the strongest reasoning models at the time. But numbers weren’t the only reason developers started paying attention. Many testers observed that Grok felt noticeably less restricted than other models. Instead of shutting down certain lines of inquiry, it often explored them more openly, which researchers found useful when working through complex or controversial topics.

But hype alone doesn’t explain why developers and AI researchers started experimenting with Grok so aggressively. The real story lies in the technical architecture behind the model, the massive Colossus AI training cluster, and a new ecosystem strategy that connects Grok with real-time data, developer APIs, and emerging multimodal tools.

This guide explains what Grok 4.1 actually is, how the different model variants work, how developers access the API, and why the system is becoming an interesting alternative to models like Claude Sonnet, Gemini, and GPT-style reasoning systems.

What Is Grok 4.1?

Grok 4.1 is a large language model developed by xAI, the artificial intelligence company founded by Elon Musk. It builds on previous Grok models but introduces significant improvements in reasoning depth, context handling, and integration with external tools.

Unlike earlier conversational AI systems that focus mainly on short responses, Grok 4.1 was designed with large-scale reasoning and long-context processing in mind. The model can analyze extremely long documents, maintain context across complex conversations, and coordinate tool usage when operating in agent environments.

One feature that quickly drew attention was Grok’s context window of up to 2 million tokens, far beyond what earlier AI models typically supported. In practical terms, the model can work through entire research papers, large codebases, or long technical reports without losing track of earlier sections.

Another factor is how Grok fits into the broader xAI ecosystem. Rather than functioning as a simple standalone chatbot, the model connects with data streams, developer tools, and experimental systems being developed across xAI’s research projects, including robotics initiatives.

To understand why that matters, it helps to look at how modern AI systems manage long-context information. Grok’s approach to context handling is quite different from traditional chatbot architectures that struggle once conversations or documents become too long.

Grok 4.1 Technical Specifications

The following table summarizes the most important technical characteristics of the Grok 4.1 model family:

| Feature | Grok-4.1 | Grok-4.1-Thinking |
|---|---|---|
| Release date | November 17, 2025 | November 17, 2025 |
| Context window | Up to 2 million tokens | Up to 2 million tokens |
| Primary design goal | Speed and agent tasks | Deep reasoning |
| Peak LMArena Elo | ~1410 | 1483 |
| Training infrastructure | xAI Colossus cluster | xAI Colossus cluster |
| Input price | $0.20 per 1M tokens | Higher (reasoning tier) |
| Output price | $0.50 per 1M tokens | Higher (reasoning tier) |
| Latency | Very fast | Slower due to reasoning |
| Best use cases | Agents, chat, automation | Analysis, coding |

The 2M token context window is particularly important. Most earlier models struggled to maintain coherence when handling very long prompts. Grok’s expanded context allows developers to pass large datasets or documents directly into the model without heavy summarization.
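To get a feel for what fits in a 2M-token window, the sketch below estimates whether a set of documents can go into a single request. It assumes roughly 4 characters per token for English text, which is only a rule of thumb (Grok’s actual tokenizer will count differently), and it assumes the window must also hold the reply, so it reserves a hypothetical output budget. `estimate_tokens` and `fits_in_context` are illustrative names, not part of any xAI SDK.

```python
from typing import Iterable, Tuple

# Assumptions for illustration only: a 2M-token window and ~4 characters per token.
CONTEXT_WINDOW_TOKENS = 2_000_000
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate from a fixed characters-per-token ratio, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(
    documents: Iterable[str],
    reserved_output_tokens: int = 8_000,
    window: int = CONTEXT_WINDOW_TOKENS,
) -> Tuple[bool, int]:
    """Return (fits, estimated_input_tokens), leaving room for the model's reply."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserved_output_tokens <= window, total


# Example: a ~400,000-character report plus a ~1.2M-character codebase dump.
report = "x" * 400_000
codebase = "y" * 1_200_000
print(fits_in_context([report, codebase]))
```

For prompts anywhere near the limit, rely on the token counts the API reports rather than a character ratio.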

Grok 4.1 vs Grok-4.1-Thinking: The Performance Trade-off

A common point of confusion for new users is the difference between the base Grok 4.1 model and the reasoning-focused Grok-4.1-Thinking variant.

The standard Grok model is optimized for speed and interactive applications. It responds quickly and can handle high request volumes, making it suitable for chat applications or automated agents.

The Thinking variant behaves differently. Instead of immediately producing an answer, the model internally performs a deeper reasoning process before generating the response. This approach improves performance in tasks that require structured thinking, such as debugging code, solving mathematical problems, or analyzing complex research questions.

Real-World Performance Comparison

Early adopters ran comparative tests that revealed the trade-offs clearly. One developer testing both variants on the same code debugging task found:

- Standard Grok: 3-second response time, incorrect solution

- Grok-4.1-Thinking: 22-second response time, correct solution with step-by-step logic

The Thinking variant identified a subtle race condition in database connection pooling that the standard model missed entirely. The slower response included detailed reasoning about thread safety assumptions and why the original implementation was flawed.

For developers building high-speed applications, the standard model often wins. For researchers and engineers tackling analytical workloads, the Thinking variant’s deeper reasoning justifies the latency cost.
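One way to act on this trade-off is a small routing rule that sends reasoning-heavy work to the Thinking variant only when the caller can tolerate the extra latency. This is a sketch: the model identifiers are placeholders (check the xAI model list for the real names), the task categories are hypothetical, and the 20-second default simply mirrors the response times reported above.

```python
from dataclasses import dataclass

# Placeholder identifiers; look up the exact model names in the xAI console.
FAST_MODEL = "grok-4.1-fast-placeholder"
THINKING_MODEL = "grok-4.1-thinking-placeholder"

# Hypothetical task categories that benefit from step-by-step reasoning.
REASONING_TASKS = {"debugging", "math", "research", "code_review"}


@dataclass
class Task:
    kind: str                # e.g. "chat", "debugging", "research"
    latency_budget_s: float  # how long the caller is willing to wait


def choose_model(task: Task, thinking_latency_s: float = 20.0) -> str:
    """Use the Thinking variant for reasoning-heavy tasks when latency allows."""
    if task.kind in REASONING_TASKS and task.latency_budget_s >= thinking_latency_s:
        return THINKING_MODEL
    return FAST_MODEL


print(choose_model(Task("debugging", latency_budget_s=60)))  # slow path is acceptable
print(choose_model(Task("chat", latency_budget_s=2)))        # interactive, keep it fast
```

Keeping the decision in one function makes it easy to tune the threshold later from measured latencies instead of anecdotes.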


Grok 4.1 API Pricing and Developer Access

Developers interact with Grok primarily through the xAI API ecosystem. Pricing is based on token usage, which measures how much text the model processes and generates.

As of 2026, approximate pricing for the standard Grok 4.1 model is:

| Token Type | Price |
|---|---|
| Input tokens | $0.20 per 1 million tokens |
| Output tokens | $0.50 per 1 million tokens |

Actual pricing may vary depending on the reasoning tier and usage volume.
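These per-token rates translate directly into per-request cost. The helper below is a minimal sketch using the approximate standard-model prices from the table. It uses `Decimal` to avoid floating-point rounding in currency math, and it does not model reasoning-tier rates, volume discounts, or any other billing details.

```python
from decimal import Decimal

# Approximate standard-tier prices from the table above (USD per 1M tokens).
INPUT_PRICE_PER_M = Decimal("0.20")
OUTPUT_PRICE_PER_M = Decimal("0.50")
TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimated USD cost of one request at the standard-tier rates."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = Decimal(input_tokens) * INPUT_PRICE_PER_M / TOKENS_PER_MILLION
    output_cost = Decimal(output_tokens) * OUTPUT_PRICE_PER_M / TOKENS_PER_MILLION
    return input_cost + output_cost


# A 150,000-token document summarized into a 2,000-token answer.
print(estimate_cost(150_000, 2_000))
```

At these rates the example works out to 0.03 + 0.001 = $0.031, so even large single-document prompts stay in the cents range.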

API integration typically involves:

- Obtaining an API key from the xAI developer console (console.x.ai)

- Selecting the model variant (standard vs. Thinking)

- Configuring temperature and context parameters

- Handling token limits and response streaming
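These steps map onto a single HTTP request. The sketch below builds, but does not send, a chat-completion request with Python’s standard library. It assumes xAI’s OpenAI-compatible endpoint at `https://api.x.ai/v1/chat/completions` and an API key in a hypothetical `XAI_API_KEY` environment variable. The model identifier is a placeholder, and the parameter names follow the OpenAI-style schema, so confirm them against xAI’s API reference before relying on them.

```python
import json
import os
import urllib.request

# Assumption: xAI's OpenAI-compatible chat completions endpoint.
XAI_CHAT_URL = "https://api.x.ai/v1/chat/completions"


def build_grok_request(
    prompt: str,
    model: str,
    api_key: str,
    temperature: float = 0.7,
    max_tokens: int = 1024,
    stream: bool = False,
) -> urllib.request.Request:
    """Build a POST request for one chat completion without sending it."""
    payload = {
        "model": model,                                    # standard vs. Thinking variant
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,                        # sampling randomness
        "max_tokens": max_tokens,                          # cap on generated tokens
        "stream": stream,                                  # True = incremental chunks
    }
    return urllib.request.Request(
        XAI_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",          # key from the developer console
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder model ID; replace it with the identifier listed in the xAI console.
request = build_grok_request(
    "Explain this stack trace.",
    model="grok-4.1-placeholder",
    api_key=os.environ.get("XAI_API_KEY", ""),
)
# Sending requires network access and a valid key; the response shape below
# assumes the OpenAI-style schema:
# with urllib.request.urlopen(request, timeout=60) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```

An aggregator or gateway would replace the URL and headers but leave the payload shape largely the same, which is why switching models through a single API layer is cheap.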

Many developers access Grok through AI model aggregators like Unify or Portkey, which allow switching between multiple models using a single API layer. This approach simplifies experimentation when comparing Grok with alternatives like Claude or Gemini.


Grok 4.1 for Agentic Workflows

One of the most interesting aspects of Grok 4.1 is how well it fits into agentic workflows. Instead of acting purely as a chatbot, the model can function as the reasoning engine inside automated systems.

Agent workflows involve AI systems performing sequences of tasks. For example, an AI research agent might search the web, analyze documents, summarize results, and generate a final report.

Grok was designed to integrate with environments using Model Context Protocol (MCP), which allows AI systems to connect to external tools such as databases, APIs, or file systems. Through MCP-style architectures, Grok can coordinate actions across multiple services.

This capability enables workflows such as:

- Automated research assistants that gather, analyze, and synthesize information

- Coding agents that write, test, and debug programs iteratively

- AI copilots managing enterprise knowledge bases with real-time updates

- Data analysis pipelines processing millions of records

In practice, the speed-oriented Grok-4.1-Fast variant is often used for these tasks because its quick reasoning loops keep automated systems responsive.
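At the core of such an agent is a loop: the model either requests a tool call or returns a final answer, and the host runs the tool and feeds the result back. The sketch below shows that loop with a plain Python tool registry. It is not the MCP SDK. `model_step` is a hypothetical stand-in for a Grok call that returns either `{"tool": ..., "args": ...}` or `{"final": ...}`. In a real MCP setup, tools would be discovered from MCP servers, and messages would follow the API’s tool-calling schema.

```python
import json
from typing import Any, Callable, Dict, List

Message = Dict[str, str]
ModelStep = Callable[[List[Message]], Dict[str, Any]]


def run_agent(
    model_step: ModelStep,
    tools: Dict[str, Callable[..., str]],
    task: str,
    max_steps: int = 5,
) -> str:
    """Alternate between model decisions and tool execution until a final answer."""
    messages: List[Message] = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        decision = model_step(messages)
        if "final" in decision:                      # model is done
            return decision["final"]
        name = decision["tool"]
        args = decision.get("args", {})
        tool = tools.get(name)
        result = tool(**args) if tool else f"error: unknown tool {name!r}"
        # Record the request and its result so the next model step can see them.
        messages.append({"role": "assistant", "content": json.dumps(decision)})
        messages.append({"role": "tool", "content": result})
    return "stopped: step limit reached"


# Scripted stand-in for Grok: request one search, then answer from its result.
def scripted_model(messages: List[Message]) -> Dict[str, Any]:
    if messages[-1]["role"] == "user":
        return {"tool": "search", "args": {"query": "Grok 4.1 context window"}}
    return {"final": "Summary: " + messages[-1]["content"]}


tools = {"search": lambda query: f"results for {query!r}"}
print(run_agent(scripted_model, tools, "Research Grok 4.1"))
```

The step limit matters in production: a fast model in a tight loop can otherwise burn tokens indefinitely on a task it cannot finish.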

Grok 4.1 in the xAI Ecosystem

Another factor behind Grok’s rapid adoption is its integration into a larger ecosystem of technologies being developed by xAI.

One notable advantage is access to real-time data from X (formerly Twitter). Because Grok is integrated with the platform’s data infrastructure, it can analyze current events and trending conversations more effectively than models trained only on static datasets.

The system also benefits from the massive Colossus AI training cluster, which xAI built to train next-generation models at a large scale. This infrastructure enables both the initial training and ongoing improvements to Grok’s capabilities.

Researchers have also speculated about future integrations with Tesla’s software ecosystem and robotics research projects such as Optimus, although these connections remain experimental as of early 2026.

This broader ecosystem strategy suggests that Grok is not just another chatbot but a component in a larger AI platform that spans social media, autonomous systems, and enterprise applications.

Real-World Use Cases: Where Grok Excels

During early testing phases, developers quickly discovered that Grok performs exceptionally well in several practical scenarios.

Software Engineering & Debugging

Software engineers frequently use Grok-4.1-Thinking to analyze complex codebases or debug difficult errors. The model’s reasoning approach allows it to explain problems step by step rather than jumping directly to an answer.

One documented case involved a Django application with intermittent database connection failures that standard debugging tools couldn’t identify. Grok-4.1-Thinking analyzed the connection pooling logic, identified a race condition in timeout handling, and suggested a fix that resolved the issue.
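The code from that case isn’t shown, but this class of bug is easy to illustrate. A typical pool race is check-then-act: two threads both see a free slot and both take it, or a waiter times out at the same moment a slot frees up. The toy pool below is hypothetical and is not Django’s connection handling. It avoids the race by doing the check and the increment under one `threading.Condition`, and it uses `wait_for` so that a timed-out waiter never holds a slot.

```python
import threading
import time


class ConnectionPool:
    """Toy pool that allows at most `max_size` connections in use at once."""

    def __init__(self, max_size: int) -> None:
        self.max_size = max_size
        self.in_use = 0
        self.peak = 0                       # highest concurrent usage observed
        self._cond = threading.Condition()

    def acquire(self, timeout: float = 1.0) -> bool:
        """Take a slot, waiting up to `timeout` seconds; return False on timeout."""
        with self._cond:
            # wait_for re-checks the predicate while holding the lock, so the
            # "is a slot free?" check and the increment cannot interleave with
            # another thread. If the wait times out, no slot is taken.
            if not self._cond.wait_for(lambda: self.in_use < self.max_size, timeout):
                return False
            self.in_use += 1
            self.peak = max(self.peak, self.in_use)
            return True

    def release(self) -> None:
        """Return a slot and wake one waiting thread."""
        with self._cond:
            if self.in_use == 0:
                raise RuntimeError("release() called more often than acquire()")
            self.in_use -= 1
            self._cond.notify()


# Usage: 20 workers compete for 3 slots; peak usage never exceeds 3.
pool = ConnectionPool(max_size=3)
results = []


def worker() -> None:
    ok = pool.acquire(timeout=5.0)
    results.append(ok)
    if ok:
        time.sleep(0.005)                   # simulate a short query
        pool.release()


threads = [threading.Thread(target=worker) for _ in range(20)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(pool.peak, all(results))
```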

This type of deep technical analysis has led some developers to reach for Grok-4.1-Thinking before consulting traditional debugging resources like Stack Overflow, particularly for subtle concurrency issues.

Research & Document Analysis

Researchers use the model to process large collections of documents. Because the context window holds up to 2 million tokens, Grok can analyze long research papers or large datasets without the aggressive summarization that might lose important details.

AI Research Agents

Another emerging use case involves AI research agents. These systems rely on reasoning models to gather information from multiple sources, synthesize insights, and produce structured reports. Grok’s integration with MCP and external tools makes it particularly effective for these workflows.

Creative & Analytical Work

Creative professionals also experiment with Grok for brainstorming and storytelling tasks. While reasoning models are not primarily optimized for creative writing, the deeper reasoning process often produces more coherent long-form narratives than earlier AI systems.

The “Elon Factor”: Less Filtering, More Flexibility

One noticeable difference when testing Grok is its tone and approach to controversial topics. Compared with models like Claude, which enforce strict safety filters, Grok often feels more willing to explore complex or contentious subjects.

During policy analysis tests, the model sometimes responded with debate-style exploration rather than strictly neutral summaries. For researchers, this can be valuable because it allows examination of multiple viewpoints without predetermined boundaries.

However, this flexibility also means developers need to implement their own guardrails when deploying Grok in public applications. The model’s openness is intentional and reflects xAI’s stated goal of building “truth-seeking AI” systems.

Whether this philosophy produces better AI assistants remains an active debate in the research community.

Practical Implications

For research and analysis contexts, the reduced filtering appears valuable. For customer-facing applications without additional moderation layers, it introduces risk. The deployment context determines whether this characteristic functions as a feature or a liability.
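A moderation layer can be as simple as checking both the prompt and the model’s reply before anything reaches the user. The sketch below wraps any model call with input and output checks. The regex blocklist is a deliberately crude placeholder for a real moderation classifier or policy service, and `guarded_call` and the patterns are hypothetical.

```python
import re
from typing import Callable

# Hypothetical placeholder policy; production systems would call a moderation model instead.
BLOCKED_PATTERNS = [
    re.compile(r"\bsocial security number\b", re.IGNORECASE),
    re.compile(r"\bcredit card number\b", re.IGNORECASE),
]
REFUSAL = "Sorry, I can't help with that request."


def violates_policy(text: str) -> bool:
    """Return True if any blocked pattern appears in the text."""
    return any(pattern.search(text) for pattern in BLOCKED_PATTERNS)


def guarded_call(model: Callable[[str], str], prompt: str) -> str:
    """Run the model only on allowed prompts, and screen its reply before returning it."""
    if violates_policy(prompt):      # input guardrail
        return REFUSAL
    reply = model(prompt)
    if violates_policy(reply):       # output guardrail
        return REFUSAL
    return reply


# Usage with a stand-in model function (a real one would call the Grok API).
def echo_model(prompt: str) -> str:
    return f"You asked: {prompt}"


print(guarded_call(echo_model, "Summarize today's AI news"))
```

Checking the output as well as the input is the part that matters most for a less-filtered model, since a benign prompt can still produce a reply the application shouldn’t show.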


2026 Update: Grok and the Aurora Video Model

In early 2026, xAI expanded the Grok ecosystem by introducing Aurora, a video generation model integrated with the broader platform APIs.

The strategy creates a multimodal AI stack where Grok handles reasoning and language tasks while specialized models generate images or videos.

For developers building creative or marketing tools, this integration opens the door to fully automated content pipelines where an AI agent researches a topic, writes a script, and generates visual content—all within a single ecosystem.

Early demonstrations suggest Aurora is competitive with other video generation systems like Sora, though extensive independent testing remains limited as of early 2026.

Technical Verdict: Performance Assessment

| Category | Assessment |
|---|---|
| Reasoning ability | Excellent |
| Coding performance | Very strong |
| Creative writing | Good |
| Safety alignment | Moderate (intentionally less filtered) |
| Agent workflows | Excellent |
| Cost efficiency | Competitive |

Grok-4.1-Thinking’s 1483 LMArena Elo rating briefly placed it near the top of the LMArena leaderboard at launch. Benchmarks never tell the entire story, but they do indicate that the model’s reasoning performance is competitive with leading AI systems.
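To put an Elo gap in perspective, assume the standard Elo logistic scale (base 10, 400-point divisor), which LMArena’s ratings are designed to approximate. Under that scale, the expected head-to-head preference rate depends only on the rating difference. The sketch below computes it. The 50-point gap in the example is hypothetical.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


# Hypothetical comparison: a 1483-rated model against one rated 50 points lower.
print(round(expected_win_rate(1483, 1433), 3))
```

A 50-point lead works out to winning roughly 57% of pairwise votes, not dominating every comparison. This is why small leaderboard gaps deserve cautious reading.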

For developers building AI agents, research tools, or advanced automation systems, Grok 4.1 represents one of the most interesting models released in the past year.

FAQ

Q. What is Grok 4.1?

Grok 4.1 is a large language model developed by xAI that focuses on reasoning ability, long-context processing (up to 2 million tokens), and integration with AI agent workflows through Model Context Protocol.

Q. What is Grok-4.1-Thinking?

Grok-4.1-Thinking is a reasoning-optimized variant of Grok designed for complex analytical tasks such as coding, research, and multi-step problem solving. It performs internal reasoning steps before generating responses, resulting in higher accuracy at the cost of increased latency.

Q. What is the context window of Grok 4.1?

Grok 4.1 supports a context window of up to 2 million tokens, allowing it to process very long documents, entire codebases, or large datasets in a single prompt without heavy summarization.

Q. What is Grok 4.1 API pricing?

Approximate pricing is $0.20 per million input tokens and $0.50 per million output tokens for the standard model. Reasoning tiers cost more. Access the API through console.x.ai.

Q. Does Grok support agent workflows?

Yes. Grok integrates with Model Context Protocol (MCP) environments that allow AI agents to connect tools and external APIs, making it highly effective for automated research, coding agents, and data analysis pipelines.

Q. What benchmarks did Grok-4.1-Thinking achieve?

The model briefly reached an Elo rating of around 1483 on LMArena in November 2025, placing it among the top reasoning models at launch.

Q. Is Grok less filtered than other AI models?

Yes. Grok intentionally uses less restrictive safety filtering than models like Claude or ChatGPT, making it useful for research and exploratory analysis but requiring additional guardrails for public-facing applications.

Q. What is the difference between Grok 4.1 and Grok-4.1-Thinking?

Standard Grok 4.1 prioritizes speed (roughly 3 to 5 seconds per response in early tests) for interactive applications. Grok-4.1-Thinking performs deeper reasoning before answering (often 20+ seconds), producing better results for complex analytical tasks.

Conclusion

The release of Grok 4.1 represents a meaningful shift in how AI models are designed. Instead of focusing solely on conversational ability, the model emphasizes long-context reasoning, integration with external tools, and large-scale data analysis.

Several factors make the model stand out:

- Its 2-million-token context window allows analysis of extremely large datasets without summarization loss

- The Thinking variant provides deeper reasoning for complex analytical tasks at the cost of increased latency

- Integration with real-time X data and emerging multimodal tools like Aurora

- Less restrictive filtering for research and exploratory analysis

- Strong performance in agent workflows through MCP integration

For developers and researchers exploring advanced AI systems, Grok 4.1 offers a compelling alternative to traditional chat-focused models. As the ecosystem continues to evolve, its combination of reasoning power and large-context processing will likely play an increasingly important role in AI-driven applications.

Based on early adoption patterns, Grok-4.1-Thinking has become the preferred model for developers tackling debugging, research, and tasks requiring actual reasoning rather than pattern matching. The standard variant excels in agent workflows where speed matters more than reasoning depth.
