Most people still choose an AI tool the same way they pick a streaming service — someone recommends it, they sign up once, and they never look back.

That worked in 2024.

In 2026, it’s a costly mistake.

The Claude vs ChatGPT debate has moved well past “which one is smarter.” Both models now operate at a near-human level across writing, coding, and reasoning. The real difference is how they work — their architecture, their agents, their philosophy about what AI should do for you.

Pick the wrong one, and you don’t just lose convenience. You lose time, accuracy, and real output quality every single day.

After testing both across coding workflows, long-form writing, research tasks, and automation — and cross-referencing the latest April 2026 benchmark data — one thing stands out clearly:

Claude 4.6 is optimized for deep reasoning and internal system work. GPT-5.4 is optimized for speed, multimodal output, and external automation.

That single architectural difference explains nearly every outcome gap you’ll hit in real use.

Quick Reference: What Each AI Is Actually For

| If you need… | Use… |
|---|---|
| Deep reasoning on a 50,000-line codebase | Claude 4.6 (Opus/Sonnet) |
| Fast UI component generation | GPT-5.4 |
| Long-form research documents (40+ pages) | Claude 4.6 |
| Image or video generation | GPT-5.4 |
| MCP-connected dev workflows (GitHub, Jira, Slack) | Claude Code |
| Browser automation and web-based task agents | ChatGPT Computer Use |
| High-stakes structured outputs (legal, technical docs) | Claude 4.6 |
| Production-volume SEO content | GPT-5.4 |

What Claude and ChatGPT Actually Are in 2026

Claude and ChatGPT aren’t just chatbots anymore. They function as full productivity systems — each optimized for a different kind of work.

Claude (Anthropic) runs on the Claude 4.6 family:

- Opus 4.6 — the flagship for complex reasoning and large-scale coding

- Sonnet 4.6 — the balanced daily driver most professionals use

- Haiku 4.5 — lightweight and fast for high-volume tasks

Claude’s foundation is Constitutional AI, which produces more cautious outputs, lower hallucination rates on structured tasks, and stronger long-chain reasoning consistency. The two biggest architectural additions in 2026 are Adaptive Thinking — which automatically judges problem complexity and allocates reasoning depth without any configuration — and Context Compaction, a beta feature that compresses conversation history on the fly so Claude maintains coherent sessions across extremely long workflows without hitting a reset wall.

Anthropic also officially supports a 1 million token context window, matching the frontier standard.

ChatGPT (OpenAI) runs on GPT-5.4, released March 5, 2026, and remains the most feature-rich AI platform available. It integrates programming, computer control, full-resolution vision, and tool search into a single general-purpose model. When usage limits are hit, it falls back automatically to a lighter model, keeping workflows uninterrupted.

Core GPT-5.4 strengths include multimodal capabilities (images, video, voice), the GPT Store for custom tools and agents, deep Microsoft and GitHub ecosystem integration, and native computer-use agents for browser and desktop automation.

Claude vs ChatGPT for Coding (2026 Reality)

This is where the comparison gets genuinely interesting — and where benchmark headlines mislead the most.

What the Numbers Actually Say

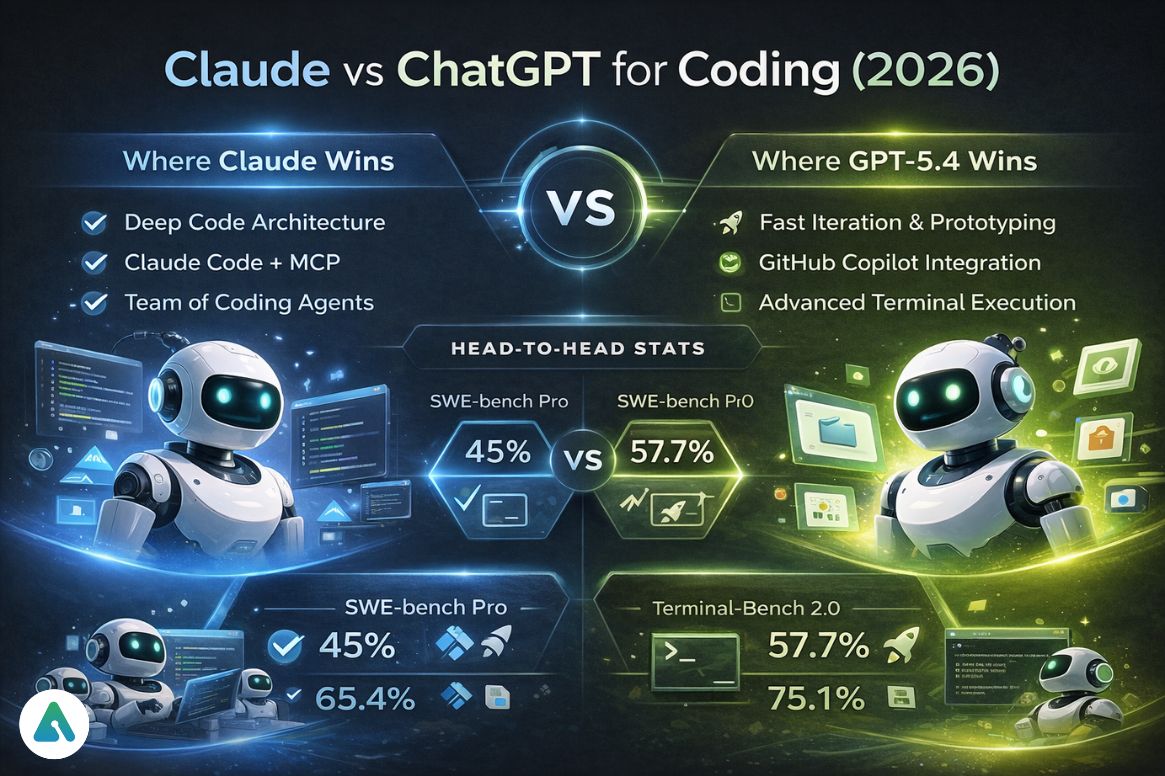

On SWE-bench Verified, the standard real-world software engineering benchmark, Claude holds a slight lead in published evaluations (~80.8% versus ~80%). The gap is narrow, but Claude has held this position consistently across multiple release cycles.

On SWE-bench Pro, the harder variant designed to resist benchmark gaming, GPT-5.4 leads significantly at 57.7% versus Claude’s ~45%. If your concern is novel engineering problems that can’t be solved by pattern-matching training data, GPT-5.4 has a clearer edge on that specific test.

On Terminal-Bench 2.0, which tests autonomous coding in real terminal environments, including file editing, git operations, and debugging, GPT-5.4 leads at 75.1% versus Claude’s 65.4%.

The honest takeaway: neither model universally wins on coding. The right choice depends on the type of coding you do.

Where Claude Pulls Ahead

Claude’s coding advantage doesn’t come from raw benchmark scores. It comes from Claude Code (the CLI agent) combined with MCP — the Model Context Protocol.

MCP is an open standard Anthropic built and ships natively in Claude Code and Claude Desktop. Think of it as a universal connector between Claude and every tool in your stack. Instead of custom integrations for every service, MCP gives you one standardized protocol that connects to GitHub, Jira, Slack, Google Drive, PostgreSQL, Figma, and hundreds of other tools. The MCP ecosystem now counts over 10,000 active public servers and 200+ npm packages.

In practice, this means you can tell Claude Code: “Implement the feature described in JIRA-4521, check our PostgreSQL database for affected users, and draft a Slack message for the team.” It handles all three without you switching tabs. No other AI coding tool matches this level of native integration depth right now.
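To make the "one standardized protocol" idea concrete: MCP messages are JSON-RPC 2.0, and a client invokes a server-side tool with a `tools/call` request. The sketch below builds the three requests that the Jira, PostgreSQL, and Slack instruction above might translate into. The tool names (`jira_get_issue`, `postgres_query`, `slack_post_message`), their argument shapes, and the SQL are hypothetical placeholders, not the interface of any real MCP server, and a real client would also handle initialization, transport, and responses.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC request ids should be unique within a session


def tool_call(name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# Hypothetical tool names and arguments, for illustration only.
calls = [
    tool_call("jira_get_issue", {"key": "JIRA-4521"}),
    tool_call("postgres_query", {"sql": "SELECT id FROM users WHERE plan = 'legacy'"}),
    tool_call("slack_post_message", {"channel": "#team", "text": "Draft: JIRA-4521 rollout"}),
]

for call in calls:
    print(json.dumps(call))
```

All three requests share one envelope and differ only in `params`, which is why a single client can reach very different services without a custom integration for each.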

Here’s where it gets concrete: when I tried to refactor a legacy 50,000-line Python repo with circular dependencies across 12 modules, Claude caught a dependency chain that GPT-5.4 silently skipped — which would have broken the build three steps later. That kind of cross-file architectural reasoning rarely shows up in benchmarks, but it shows up in pull request acceptance rates.

Claude also introduced Agent Teams in 2026 — a main Claude instance can spawn multiple independent sub-agents that work in parallel, each with its own full context window, coordinating through shared task lists and messaging. For large codebase migrations or multi-module refactors, this architecture cuts development time dramatically and has no real equivalent on the OpenAI side.
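The coordination pattern described here (parallel workers pulling from a shared task list) can be sketched in a few lines. This is a generic fan-out illustration using Python's standard library, not Anthropic's implementation: `run_subagent` is a hypothetical stand-in that makes no model call, and the module names are invented.

```python
import queue
from concurrent.futures import ThreadPoolExecutor


def run_subagent(agent_id: int, tasks: "queue.Queue[str]") -> list[str]:
    """Stand-in for a sub-agent: pull tasks from the shared list until it is empty."""
    done = []
    while True:
        try:
            module = tasks.get_nowait()
        except queue.Empty:
            return done
        # A real sub-agent would call a model here, using its own context window.
        done.append(f"agent-{agent_id} migrated {module}")


def run_team(modules: list[str], n_agents: int = 3) -> list[str]:
    """Fan work out to n_agents workers that share one task queue."""
    tasks: "queue.Queue[str]" = queue.Queue()
    for module in modules:
        tasks.put(module)
    with ThreadPoolExecutor(max_workers=n_agents) as pool:
        futures = [pool.submit(run_subagent, i, tasks) for i in range(n_agents)]
        return [line for f in futures for line in f.result()]


results = run_team([f"module_{i}" for i in range(12)])
print(len(results), "modules processed")
```

Which worker picks up which module varies from run to run, but each module is handled exactly once because the queue is the single source of truth.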

Where GPT-5.4 Pulls Ahead

GPT-5.4 is faster to iterate. For prototyping, UI component generation, and quick bug fixes where you need rapid output rather than architectural precision, it’s the stronger daily driver — especially at its lower token cost (roughly 6x cheaper per token than Opus 4.6 at list price, though Sonnet 4.6 narrows that gap significantly).

GPT-5.4 also has tighter GitHub Copilot Enterprise and Azure DevOps integration for teams already in the Microsoft stack, and its terminal execution capabilities benchmark higher.

Practical verdict: Debugging a gnarly production issue and need the model to reason about root causes across multiple files? Use Claude. Standing up a new service, generating boilerplate, or building UI components fast? Use GPT-5.4. Many developers run Sonnet 4.6 as their default and escalate to Opus or GPT-5.4 Pro for the hard problems.

Agentic Latency: The Spec Nobody Publishes

One data point missing from almost every comparison: Time-to-Action for agentic tasks.

In informal testing, Claude Code via MCP typically takes 8–14 seconds to complete a multi-tool action (read Jira ticket → query database → draft Slack message). ChatGPT Computer Use on a comparable browser-based task runs 12–20 seconds, partly because it navigates real UI elements rather than calling structured APIs.

Neither is “slow,” but if you’re running these agents in loops across hundreds of tasks, that latency compounds. For pure API-connected workflows, Claude Code’s MCP approach is meaningfully faster than browser-based automation.
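A quick back-of-the-envelope calculation shows how that compounding plays out. The per-action ranges are the informal figures quoted above; the batch size of 500 and the assumption that tasks run strictly one after another are mine, for illustration.

```python
# Per-action latency ranges in seconds, from the informal figures above.
LATENCY_S = {
    "Claude Code via MCP": (8, 14),
    "ChatGPT Computer Use": (12, 20),
}


def total_minutes(per_action_s: tuple[int, int], n_tasks: int) -> tuple[float, float]:
    """Best-case and worst-case wall-clock minutes for n_tasks run sequentially."""
    low, high = per_action_s
    return (low * n_tasks / 60, high * n_tasks / 60)


N_TASKS = 500  # hypothetical batch size

for agent, latency_range in LATENCY_S.items():
    best, worst = total_minutes(latency_range, N_TASKS)
    print(f"{agent}: {best:.0f}-{worst:.0f} min for {N_TASKS} tasks")
```

Under those assumptions the batch takes roughly 67 to 117 minutes through MCP versus 100 to 167 minutes through browser automation. Running agents in parallel would shrink both totals, but the ratio between them stays the same.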

Claude vs ChatGPT for Writing

Claude feels like a thoughtful editor. GPT-5.4 feels like a fast content engine. Both descriptions are accurate, and both have a place.

The real difference shows up at scale: if you’re drafting a 40-page technical whitepaper, Claude 4.6 won’t lose the thread of the executive summary by page 30. GPT-5.4 still struggles with what researchers call “mid-document drift” — the tendency to subtly shift tone or forget established constraints in the middle of long documents — without heavy re-prompting.

Claude also pushes back. Give it a weak argument or a vague brief, and it flags the problem rather than generating text that sounds plausible. Whether that’s a feature or an annoyance depends entirely on what you’re doing.

GPT-5.4 produces faster output, handles formatting instructions more precisely, and excels at structured content for SEO and content production workflows. For a team running a content calendar, GPT-5.4’s speed and formatting control are genuinely more useful than Claude’s depth.

Practical verdict: Claude for long-form documents, research-heavy writing, and anything where coherence across thousands of words matters. GPT-5.4 for speed, structured templates, and production-volume content work.

The Voice of the Machine

One thing benchmarks never capture: the feel of working with each model.

Claude’s prose is more cautious, more hedged, and more willing to say “I’m not sure.” It reads like a careful researcher. You sometimes have to push it to commit to a recommendation.

GPT-5.4’s prose is more confident and immediate. It commits fast, sometimes too fast. For exploratory drafts and brainstorming, that directness is a feature. For high-stakes outputs where a confident wrong answer is worse than a hedged right one, it’s a liability.

Neither voice is “better.” They serve different editorial needs.

Reasoning, Research, and Long-Context Tasks

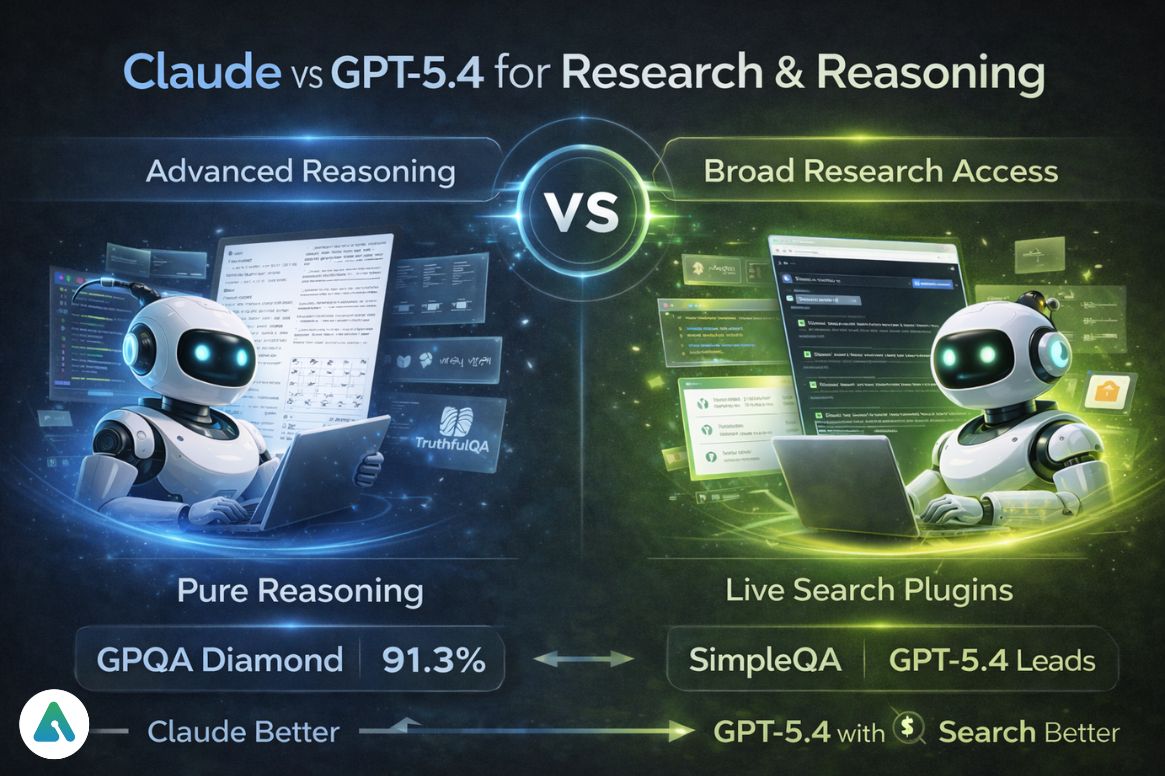

On GPQA Diamond — the graduate-level benchmark covering physics, chemistry, and biology — Claude leads at 91.3% versus GPT-5.4’s ~89.9%. This benchmark specifically resists training-set contamination, so it carries more signal than most.

On TruthfulQA — which targets questions where models produce plausible-sounding but incorrect answers — Claude leads. On SimpleQA, which tests factual knowledge without retrieval augmentation, GPT-5.4 leads.

The practical implication: for pure reasoning tasks on long documents, Claude is the more consistent choice. But pair GPT-5.4 with its search plugins, and it catches up fast on research tasks that benefit from live web access. The question isn’t just which model reasons better — it’s whether your workflow benefits more from depth of reasoning or breadth of tool access.

Multimodal Capabilities (Clear Winner)

This category has no real contest.

GPT-5.4 includes image generation via DALL-E, video generation via Sora, advanced voice mode, and native computer-use agents that navigate real desktop interfaces. If your work involves visual content creation or voice interfaces, GPT-5.4 is simply the tool.

Claude can analyze and reason about images. It cannot generate them. It has limited voice capability. Anthropic has made that choice deliberately — Claude’s focus is text and code.

Practical verdict: GPT-5.4 dominates multimodal by a wide margin. This isn’t close.

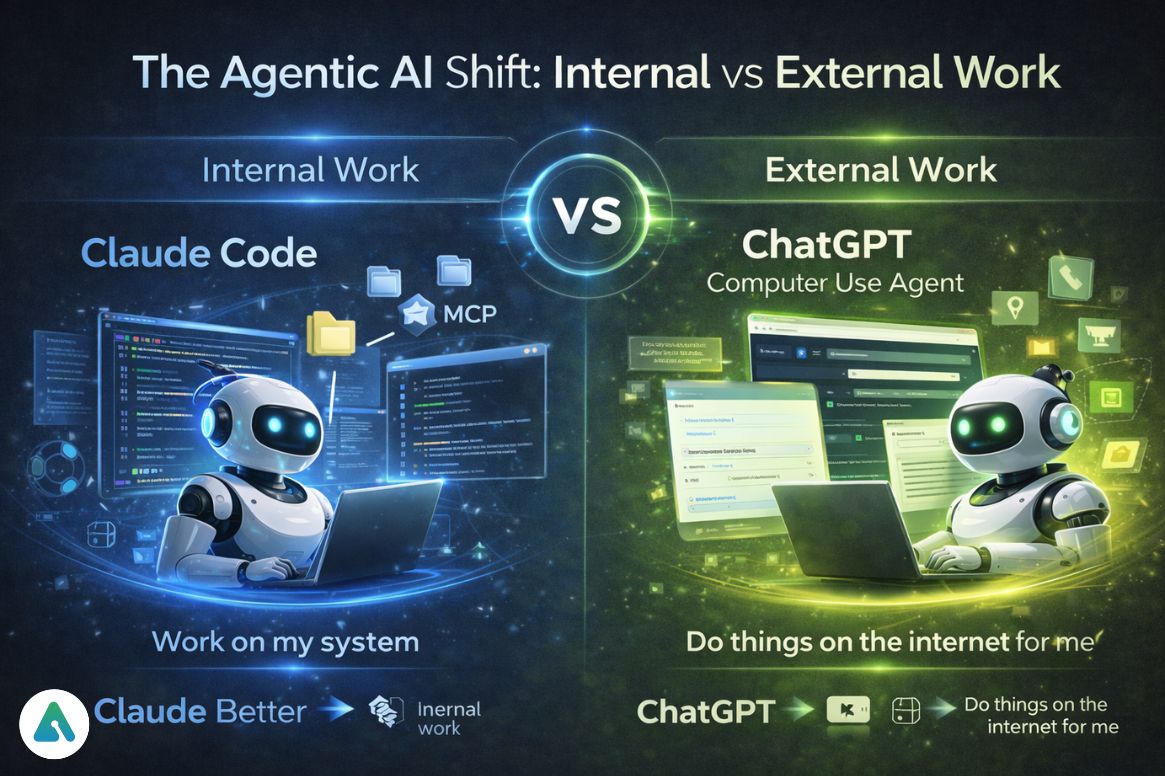

The Agentic AI Shift: Internal vs External Work

The real question isn’t which AI is smarter. It’s: do you need the AI to work inside your systems, or do you need it to interact with the outside world?

Claude Code runs in the terminal, works on local files and systems, and through MCP connects to your entire dev stack as a standardized bridge. It operates inside your system. It reads your codebase, understands your project structure, and reasons about changes before making them. Claude’s agent approach: “Work on my system with my data.”

ChatGPT’s Computer Use agent runs in a virtual browser environment, interacts with websites and desktop applications, and automates real-world UI tasks. It operates on the external world: filling out a form, extracting data from a website, or interacting with a SaaS product that has no API. ChatGPT’s agent approach: “Do things on the internet for me.”

Neither replaces the other. They solve different problems. The teams getting the most out of AI in 2026 run both — Claude Code for systems engineering and codebase management, ChatGPT agents for external automation and web-based tasks.

Real-World Hybrid Workflow (2026 Example)

In practice, many professionals use both tools together rather than choosing one.

A developer might use Claude Code via MCP to refactor a large backend system across multiple repositories — letting it read Jira tickets, query the database, and reason about architectural changes. Then switch to ChatGPT to generate frontend UI components, create documentation visuals, and automate deployment steps using browser-based agents.

This hybrid approach consistently outperforms relying on a single AI system. The cost-to-value math also works out: running Sonnet 4.6 for the reasoning-heavy backend work and GPT-5.4 for the faster frontend iteration keeps token costs reasonable without sacrificing quality where it matters.

The Third Layer: Research and Workspace AI

In 2026, advanced users often don’t rely on just one or two AI systems.

Tools like Perplexity AI work as a research layer for real-time information retrieval, while platforms like Google Gemini handle workspace integration across documents, spreadsheets, and collaboration tools.

This creates a three-layer workflow: Claude for deep reasoning, ChatGPT for execution and automation, and Perplexity or Gemini for research and context gathering. Each layer does what it’s architecturally suited for.

2026 Benchmark Comparison Table

| Feature | Claude 4.6 (Opus/Sonnet) | ChatGPT (GPT-5.4) |
|---|---|---|
| GPQA Diamond | 91.3% | ~89.9% |
| SWE-bench Verified | ~80.8% | ~80% |
| SWE-bench Pro | ~45% | 57.7% |
| Terminal-Bench 2.0 | 65.4% | 75.1% |
| TruthfulQA | Leads | Close second |
| SimpleQA | Second | Leads |
| Reasoning | Adaptive Thinking (auto-depth) | Thinking model (manual tiers) |
| Agents | Claude Code + MCP + Agent Teams | Computer Use + GPT Store |
| Context | 1M tokens + Context Compaction | 1M tokens + long-term memory |
| Multimodal | Image analysis only | Image/video gen + voice + computer use |
| Best for | Systems engineering, heavy research | Automation, multimodal, fast iteration |

Benchmark data from published sources and verified third-party testing, April 2026.
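Because the lead flips depending on the benchmark, it helps to look at the margins rather than the winner column. The short script below computes them from the four numeric rows of the table. The scores are copied as listed (values marked "~" are approximate), and the `leader` and `margins` helpers are just illustrative names.

```python
# (Claude 4.6, GPT-5.4) scores in percent, copied from the table above.
# Values marked "~" in the table are approximate.
SCORES = {
    "GPQA Diamond": (91.3, 89.9),
    "SWE-bench Verified": (80.8, 80.0),
    "SWE-bench Pro": (45.0, 57.7),
    "Terminal-Bench 2.0": (65.4, 75.1),
}


def leader(claude: float, gpt: float) -> str:
    """Name the model with the higher score."""
    if claude == gpt:
        return "tie"
    return "Claude" if claude > gpt else "GPT-5.4"


def margins(scores: dict) -> dict:
    """Map each benchmark to (leader, gap in percentage points)."""
    return {
        name: (leader(c, g), round(abs(c - g), 1))
        for name, (c, g) in scores.items()
    }


for name, (who, gap) in margins(SCORES).items():
    print(f"{name:<20} {who:<8} +{gap} pts")
```

Claude's two leads are under two points each, while GPT-5.4's two leads are around ten points or more, which is why a headline citing only one benchmark can point in either direction.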

Privacy and Data Control (2026 Reality)

The main difference is where your data goes.

Claude is designed around stricter data boundaries and is often preferred in enterprise environments where internal documents and sensitive workflows are involved. Its architecture — especially when used locally through Claude Code — keeps more operations within your own system. Anthropic holds SOC 2 Type II certification and offers enterprise data controls for teams handling sensitive workflows.

ChatGPT offers strong privacy controls and enterprise tiers, but many of its most powerful features — including browser-based agents and cloud execution — operate externally. OpenAI’s enterprise privacy documentation covers its data handling in detail.

Practical takeaway: If your work involves sensitive data or internal systems, Claude is generally the safer default. If your work involves interacting with the web and external tools, ChatGPT is more capable.

Pricing (2026)

Both platforms price their consumer tier at ~$20/month — Claude Pro and ChatGPT Plus.

At the API level, Claude Sonnet 4.6 sits at $3/$15 per million tokens (input/output), and GPT-5.4 at $2.50/$15. Claude Opus 4.6 costs roughly 6x more per token than GPT-5.4 at list price, a real consideration for production workloads.

To put that in practical terms: a 2,000-word research-heavy document generation task using Opus 4.6 costs roughly $0.08–$0.15 in tokens. The same task on Sonnet 4.6 costs $0.02–$0.04. GPT-5.4 comes in around $0.02–$0.03. For one-off tasks, the difference is negligible. At production scale (thousands of documents per month), it’s significant.
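The arithmetic behind estimates like these is simple enough to run yourself. The sketch below uses the Sonnet 4.6 and GPT-5.4 list prices quoted above; the token counts (1,000 input, 2,000 output) and the monthly volume are hypothetical, so substitute figures measured from your own workload. Opus 4.6 is left out because this article gives only a relative multiplier for it, not list prices.

```python
# List prices in USD per million tokens (input, output), as quoted above.
PRICES = {
    "Claude Sonnet 4.6": (3.00, 15.00),
    "GPT-5.4": (2.50, 15.00),
}


def task_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at list price."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Hypothetical workload: measure your own prompts and outputs instead.
INPUT_TOKENS = 1_000
OUTPUT_TOKENS = 2_000
DOCS_PER_MONTH = 10_000

for model in PRICES:
    per_doc = task_cost(model, INPUT_TOKENS, OUTPUT_TOKENS)
    print(f"{model}: ${per_doc:.4f} per doc, ${per_doc * DOCS_PER_MONTH:,.2f} per month")
```

With these assumed token counts, the two models land within a fraction of a cent of each other per document, because output tokens dominate and both charge $15 per million for them. The gap that matters at volume is the one to Opus-class pricing.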

Claude Pro includes access to Claude Code with MCP integration. ChatGPT Plus includes the multimodal toolkit and GPT Store ecosystem.

Practical verdict: Same price, different value focus. The right choice depends on what you actually use AI for most.

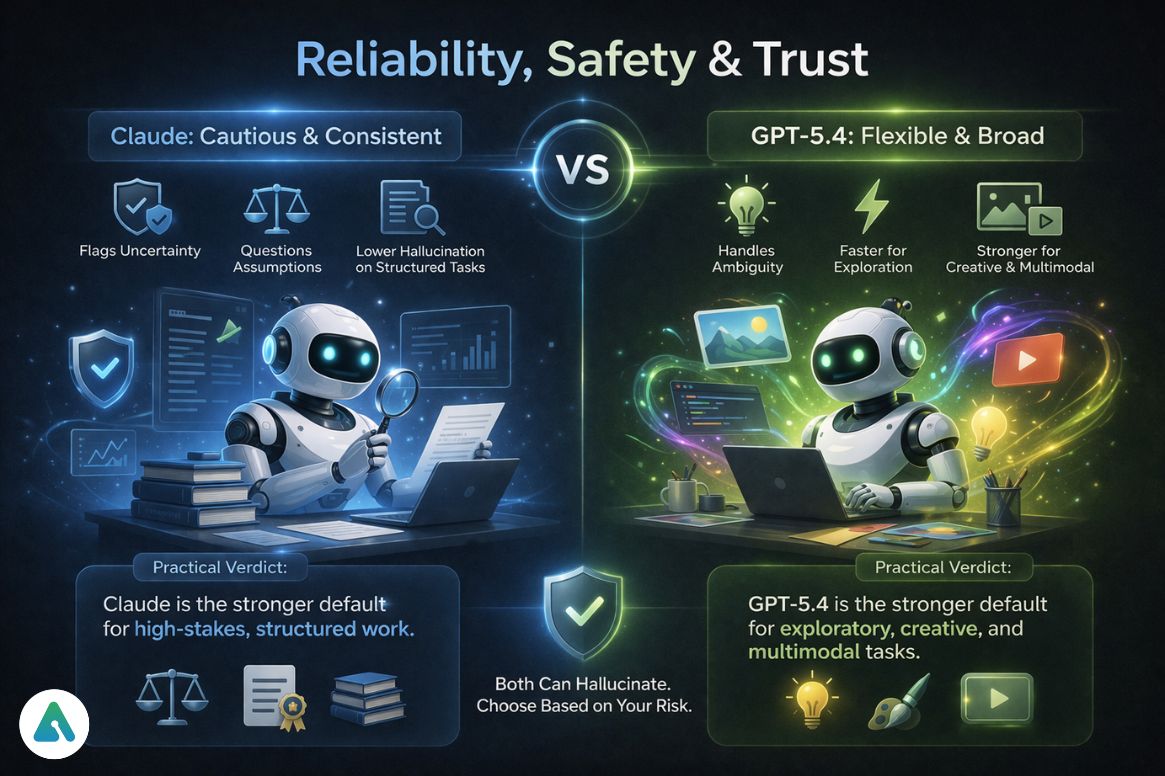

Reliability, Safety, and Trust

Claude is more cautious. It flags uncertainty, questions weak assumptions, and produces lower hallucination rates on structured tasks. This makes it a stronger choice for high-stakes outputs — legal drafts, technical documentation, research summaries — where a confident wrong answer is worse than a hedged right one.

GPT-5.4 is more flexible. It produces broader outputs and tolerates more ambiguity, which makes it faster for exploratory work but higher variance for precise tasks.

Neither model is “safe” in a blanket sense. Both hallucinate. Both can be confidently wrong. Claude is less likely to hallucinate on structured, verifiable tasks. GPT-5.4 sometimes generates plausible-sounding answers that don’t hold up under scrutiny.

Practical verdict: Claude is the stronger default for high-stakes, structured work. GPT-5.4 is the stronger default for exploratory, creative, or multimodal tasks.

The Ecosystem Lock-In Factor

One thing most comparisons skip entirely: how hard is it to switch after you commit?

If you build heavily on the GPT Store — custom GPTs, saved workflows, trained agents — moving that work to a Claude-based setup requires rebuilding from scratch. MCP connections, by contrast, are an open standard. If you build your dev workflow on MCP servers, those connections work with any MCP-compatible client, not just Claude.

For individual users, lock-in matters less. For teams building internal AI tooling, it matters a lot. Open standards reduce long-term dependency risk, and MCP’s open-source nature gives it an architectural advantage over proprietary agent stores — assuming the ecosystem continues to grow at its current rate.

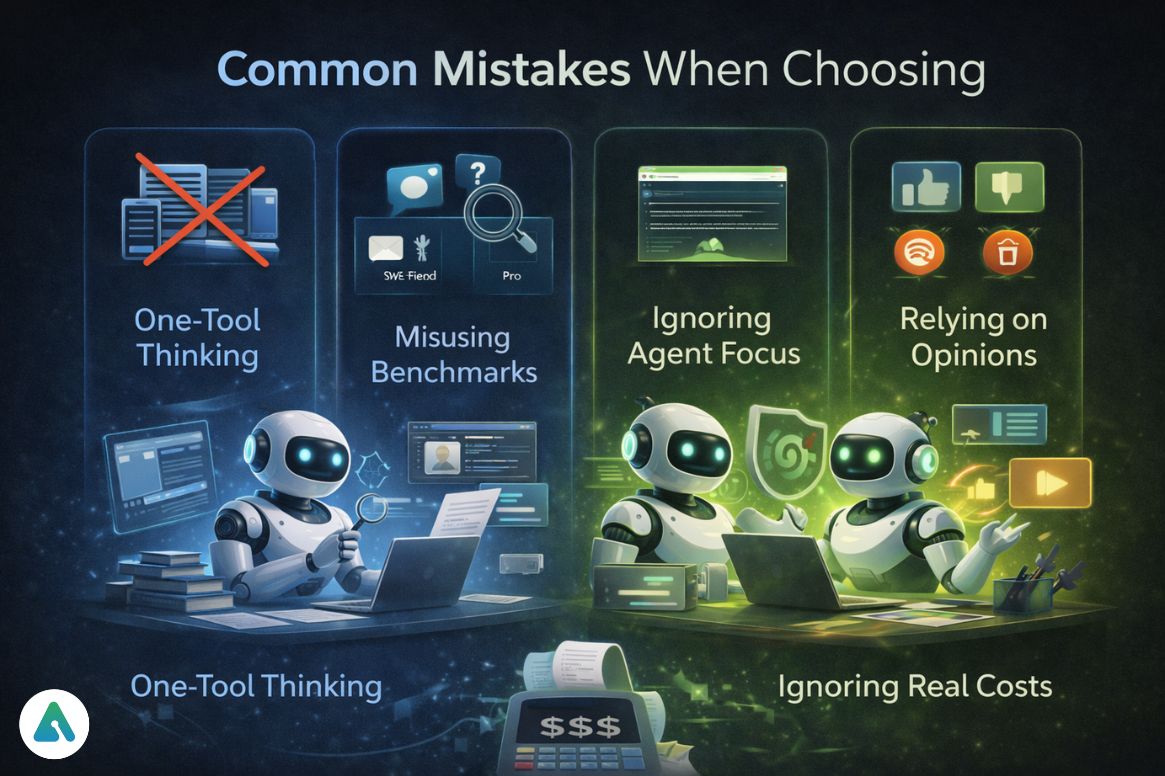

Common Mistakes When Choosing

Treating this as a one-tool decision. The highest-performing users in 2026 run both. Choosing one and ignoring the other is like choosing between a text editor and a browser — they’re not in competition.

Overweighting benchmark headlines. SWE-bench Verified and SWE-bench Pro tell completely different stories about the same two models. Always ask which benchmark and for what task type.

Ignoring agent architecture. Whether you need internal system access (Claude’s model) or external world automation (ChatGPT’s model) determines more about your real-world experience than raw benchmark scores.

Following social media opinions blindly. Developer communities on Reddit and Hacker News tend to favor whichever model was newest when they last tested. Both platforms have changed significantly in the past six months.

Forgetting per-task cost at scale. The ~$20/month consumer plans make both feel “free,” but API costs for production workloads vary meaningfully by model and task type. Run a cost estimate before committing to Opus 4.6 at volume.

10-Second Decision Framework

Choose Claude if you:

- Work with large codebases and need architectural reasoning

- Write long documents where coherence across thousands of words matters

- Need a coding agent that plugs directly into your dev stack via MCP

- Prioritize accuracy over speed on structured, high-stakes tasks

- Run research workflows requiring deep document analysis

- Handle sensitive internal data and need stricter data boundaries

Choose ChatGPT if you:

- Need image or video generation

- Automate external tasks via browser and desktop agents

- Work heavily with voice interfaces or visual content

- Run fast iteration loops where speed outweighs depth

- Live in the Microsoft/GitHub ecosystem

Use both if you:

- Do serious knowledge work that spans coding, research, and execution

- Want Claude to reason and build, and GPT-5.4 to automate and ship
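The three lists above amount to a small rule set, which can be written down as a function if you want to apply it consistently across a team. This is a toy sketch: the need labels are hypothetical shorthand for the bullets, and the rule (any overlap counts, overlap on both sides means "both") is my simplification of the framework.

```python
# Hypothetical shorthand labels for the bullets in the framework above.
CLAUDE_NEEDS = {
    "large_codebase", "long_documents", "mcp_dev_stack",
    "high_stakes_accuracy", "deep_document_research", "sensitive_data",
}
CHATGPT_NEEDS = {
    "image_or_voice_generation", "browser_desktop_automation",
    "voice_or_visual_content", "fast_iteration", "microsoft_github_ecosystem",
}


def recommend(needs: set[str]) -> str:
    """Return 'Claude', 'ChatGPT', 'both', or 'undecided' for a set of needs."""
    wants_claude = bool(needs & CLAUDE_NEEDS)
    wants_chatgpt = bool(needs & CHATGPT_NEEDS)
    if wants_claude and wants_chatgpt:
        return "both"
    if wants_claude:
        return "Claude"
    if wants_chatgpt:
        return "ChatGPT"
    return "undecided"


print(recommend({"large_codebase", "sensitive_data"}))
print(recommend({"fast_iteration", "long_documents"}))
```

A weighted version would be more realistic, but even this binary form makes the point that most mixed workloads end up in the "both" bucket.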

Frequently Asked Questions

Q. Is Claude better than ChatGPT in 2026?

The main difference is how they are used. Claude is better for reasoning, large codebase analysis, and long-document tasks, while ChatGPT is better for multimodal features, automation, and real-time interaction. Neither AI is universally better — the right choice depends on your workflow.

Q. Which is better for coding: Claude or ChatGPT?

Claude is better for large codebases, multi-file refactoring, and architectural reasoning, especially with Claude Code and MCP integration. ChatGPT is better for fast prototyping, quick bug fixes, and workflows integrated with GitHub or the Microsoft stack. Many developers use both together for best results.

Q. Is Claude better than ChatGPT for writing?

Claude is generally better for long-form writing, research-heavy content, and maintaining coherence across large documents. ChatGPT is better for fast content creation, structured formatting, and SEO-focused writing workflows.

Q. Can Claude generate images like ChatGPT?

No. Claude can analyze and describe images but cannot generate them. ChatGPT supports image generation through DALL-E and video generation through Sora, making it the better choice for visual content creation.

Q. What is MCP in Claude, and why is it important?

MCP (Model Context Protocol) is an open standard that allows Claude to connect directly to tools like GitHub, Slack, Jira, and databases. It enables Claude to work across real systems and data sources, making it especially powerful for developers and enterprise workflows.

Q. Which AI is better for students: Claude or ChatGPT?

Claude is better for research, academic writing, and deep analysis tasks. ChatGPT is better for interactive learning, visual explanations, and voice-based study sessions. Students often benefit from using both depending on the subject.

Q. Should I use both Claude and ChatGPT together?

Yes. Many professionals use Claude for reasoning, coding, and long-form work, and ChatGPT for automation, content generation, and multimodal tasks. Using both tools together provides better results than relying on just one.

Q. Which AI is better for everyday use?

ChatGPT is generally better for everyday use because it supports voice, images, and automation tasks. Claude is better for focused work like writing, coding, and research, where accuracy and depth matter more than features.

Final Verdict

The main difference is execution style, not intelligence. Claude is built for thinking, reasoning, and working inside your systems. ChatGPT is built for acting, automating, and interacting with the outside world.

In concrete terms, Claude operates inside your system through local and structured context (MCP and Claude Code), while ChatGPT operates externally through browser-based agents and multimodal tools.

That architectural difference matters more than any benchmark score in real-world usage. Know which environment your work lives in, and the choice becomes obvious.


Disclaimer: This guide reflects AI capabilities as of 2026 and may evolve over time. We’ll update it as meaningful changes occur. Results can vary by use case, and AI may produce inaccurate outputs, so always review and verify important information before relying on it.