The first time it happens, you assume it’s your prompt.

The third time, you realize something else is going on.

You’re using Grok Imagine 1.0, your prompt is clean, and yet — “Video Moderated.”

Here’s what most guides won’t tell you: this isn’t a keyword filter anymore. It’s a prediction system deciding whether your future video output is risky before it even exists.

In testing, a simple neo-noir street prompt kept getting blocked — not because of anything explicit, but because phrases like “rain-slicked realism” pushed the system into a high-risk category. The moment it was reframed as “stylized digital film aesthetic,” it passed instantly.

That’s the shift in 2026. This guide covers what “video moderated” really means now, the hidden triggers including upscale failures, the Safe-Frame Protocol as a repeatable fix system, and why some prompts fail at 99% completion.

What “Grok Video Moderated” Means in 2026

“Grok video moderated” means the system blocked a request because its AI predicted the generated video could violate safety, realism, or identity policies — even when the prompt appears completely clean.

Earlier AI models filtered what users typed. In 2026, xAI’s system evaluates what the video might look like, how realistic it could become, and whether it resembles real-world footage. This output-prediction approach is part of a broader shift in how generative AI platforms manage safety — moving from reactive filtering to predictive risk scoring before anything renders.

This is the same category of challenge that makes AI-generated deepfakes and synthetic media increasingly difficult to govern — the moderation problem and the detection problem are two sides of the same coin.

The Two-Layer Moderation System (Imagine 1.0)

Layer 1: Prompt-Level Moderation

This layer detects obvious violations in the input text. It still exists, but it’s no longer the primary gatekeeper — it’s the easy filter that catches the obvious cases.

Layer 2: Frame-Level Prediction (The Real Gatekeeper)

This is what catches clean prompts. The system simulates possible frames from the input, runs latent-space risk scoring against those simulated outputs, and blocks the request before actual rendering begins.

This explains why a prompt with no explicit content fails, why a tiny wording change suddenly succeeds, and why many blocks happen instantly, before any rendering starts.
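The two layers described above can be pictured with a toy model. This is only a sketch of the idea, not xAI's implementation: the blocklist entry, the phrase weights, and the threshold are all invented for illustration, and a real system would score predicted frames rather than prompt substrings.

```python
from dataclasses import dataclass

# All values below are hypothetical; xAI has not published its rules or scores.
BLOCKED_TERMS = {"banned-term-placeholder"}  # stand-in for a prompt-level blocklist
RISK_WEIGHTS = {
    "photorealistic": 0.5,
    "raw footage": 0.4,
    "cctv": 0.4,
    "fictional": -0.3,
    "stylized": -0.3,
}
RISK_THRESHOLD = 0.5


@dataclass
class Decision:
    allowed: bool
    layer: str   # which layer made the call: "prompt" or "prediction"
    score: float


def predicted_risk(prompt: str) -> float:
    """Crude stand-in for frame-level prediction: sum the weights of matched phrases."""
    text = prompt.lower()
    return sum(weight for phrase, weight in RISK_WEIGHTS.items() if phrase in text)


def moderate(prompt: str) -> Decision:
    text = prompt.lower()
    # Layer 1: obvious violations in the input text.
    if any(term in text for term in BLOCKED_TERMS):
        return Decision(False, "prompt", 1.0)
    # Layer 2: score what the output might look like, before rendering.
    score = predicted_risk(prompt)
    return Decision(score < RISK_THRESHOLD, "prediction", score)
```

In this toy model, `moderate("a photorealistic street at night")` is blocked at the prediction layer, while adding the single word "stylized" lowers the score enough to pass, which mirrors the small-wording-change behavior described above.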

The Latent-Space Gate: Why Videos Fail at 99%

One of the more frustrating failure modes: the video starts generating, progress reaches near-completion, and then it fails.

This isn’t random. During final rendering, Grok runs an output moderation pass that analyzes near-final frames, detects realism spikes, and flags identity or misuse risk. Upscaling is the specific mechanism that causes late-stage failures — upscaling increases detail, detail increases realism, and realism increases the risk score past the threshold. A video that passed initial moderation fails the final check because the upscaled version crosses a line the lower-resolution version didn’t.

The practical takeaway: Generation success does not mean upscale success. These are two separate moderation checks.
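A small worked example makes the upscale effect concrete. The numbers here are assumptions chosen for illustration (the threshold, the realism values, and the idea that detail multiplies the score are not published by xAI); the point is only that one video can sit below a cutoff at generation and above it after upscaling.

```python
THRESHOLD = 0.70  # hypothetical cutoff, not an xAI number


def risk_score(realism: float, detail_gain: float) -> float:
    """Toy model: added detail amplifies whatever realism is already present."""
    return min(1.0, realism * detail_gain)


def passes(realism: float, detail_gain: float) -> bool:
    return risk_score(realism, detail_gain) < THRESHOLD


# Same video, two separate checks.
generation_ok = passes(realism=0.55, detail_gain=1.0)  # below the cutoff
upscale_ok = passes(realism=0.55, detail_gain=1.4)     # above the cutoff

# A more stylized video starts lower, so it survives the same upscale.
stylized_upscale_ok = passes(realism=0.40, detail_gain=1.4)
```

Under these assumed numbers the realistic video passes generation and fails the upscale check, while the stylized one clears both.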

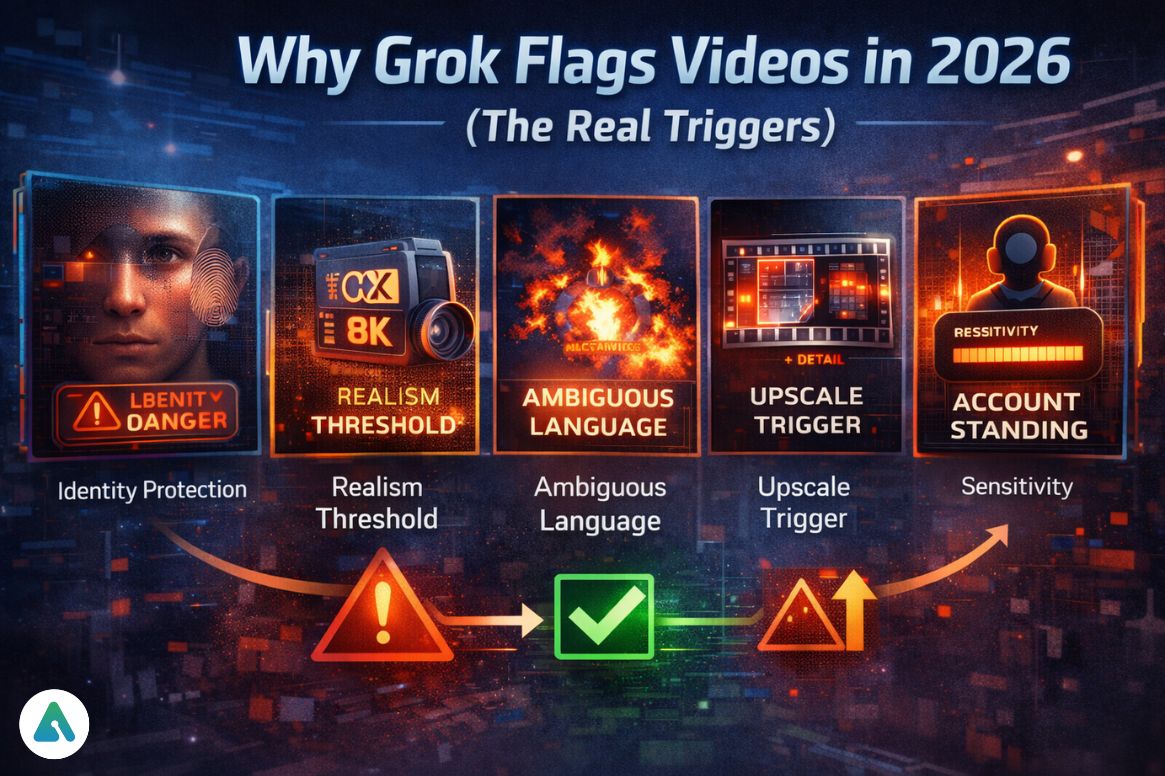

Why Grok Flags Videos in 2026 (The Real Triggers)

1. Identity Protection (Highest Sensitivity)

Anything involving public figures, realistic human faces, or prompts that read as “real footage” triggers the highest sensitivity level. This is a direct response to post-2025 regulatory pressure around deepfakes — the same concerns that produced the Dutch court injunction against Grok Imagine in March 2026 over non-consensual image generation.

2. Realism Threshold Breach

Specific phrases reliably spike the internal risk score:

- “8K photorealistic”

- “raw footage”

- “CCTV style”

- “documentary footage”

None of these are inherently harmful, but all of them signal to the prediction system that the output is meant to look real, which is exactly what the safety guardrails are designed to prevent.
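Since these phrases are known in advance, a prompt can be checked for them before it is submitted. The helper below is a minimal sketch: the function name is made up, and the list contains only the four phrases named above, not any official xAI list.

```python
# Phrases taken from the list above; extend as needed.
REALISM_PHRASES = [
    "8k photorealistic",
    "raw footage",
    "cctv style",
    "documentary footage",
]


def find_realism_phrases(prompt: str) -> list[str]:
    """Return the realism phrases found in the prompt, ignoring case and extra spaces."""
    text = " ".join(prompt.lower().split())
    return [phrase for phrase in REALISM_PHRASES if phrase in text]


hits = find_realism_phrases("8K  Photorealistic CCTV style alley at night")
if hits:
    print("Consider rephrasing:", ", ".join(hits))
```

An empty result does not mean a prompt will pass; it only means none of these specific phrases are present.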

3. Ambiguous Action Language

Words like “crash,” “explosion,” and “attack,” even in a clearly fictional context, get interpreted as real-world harm scenarios by the prediction system. The system doesn’t reliably distinguish between “a fictional sci-fi explosion” and an actual simulation of violence. The safer path is always alternative framing.

4. Upscale Trigger

Many users don’t realize a video can pass generation and then fail when clicking Upscale. The added detail can push an already-approved video past the realism threshold. The section below on technical fixes covers how to handle this.

5. Account Standing Signal (Emerging 2026 Pattern)

Repeated moderation hits appear to temporarily reduce the generation success rate and make moderation more sensitive for the rest of that session. xAI has not officially confirmed this, but the pattern shows up consistently enough across user reports to treat it as real behavior. Starting a fresh session after multiple consecutive failures is the practical response.

The Safe-Frame Protocol

This is the core fix system — a repeatable approach that works because it targets the prediction system’s actual decision logic rather than trying to outsmart a keyword list.

The framework is S.C.A.L.E:

S: Stylize Aggressively. Move away from realism entirely. Instead of “realistic street footage,” use “stylized cinematic sequence, soft lighting, film grain.” The signal to the prediction system is that the output is an artistic interpretation, not a simulation of reality.

C: Confirm Fictionality. Explicitly include words like “fictional,” “animated,” or “concept art.” These directly reduce identity risk scoring because they signal that the output is not intended to represent real people or real events.

A: Abstract Identity. Avoid professions or descriptions tied to recognizable real people. Replace specific roles with archetypes or generic descriptions. For example, use “a figure in a long coat” rather than “a detective” when the latter could pattern-match to real-world identities.

L: Limit Harm Signals. Replace action language that pattern-matches to real-world harm:

- “attack” → “confrontation”

- “explosion” → “energy burst”

- “crash” → “impact sequence”

E: Expand Beyond Reality. Set scenes in future environments, fantasy settings, or stylized universes. The further the visual context is from real-world footage, the lower the realism risk score.
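Two of these steps, confirming fictionality and limiting harm signals, are mechanical enough to sketch in code. The function below is a hypothetical helper, not part of any Grok tooling: it applies the three word swaps listed above and prepends a fiction marker when none is present. The prefix wording is an assumption, and the other three steps still need human judgment.

```python
import re

# Word swaps taken from the "Limit Harm Signals" list above.
HARM_SUBSTITUTIONS = {
    "attack": "confrontation",
    "explosion": "energy burst",
    "crash": "impact sequence",
}
# Markers taken from the "Confirm Fictionality" step above.
FICTION_MARKERS = ("fictional", "animated", "concept art")


def soften(prompt: str) -> str:
    """Swap harm-coded words and make sure the prompt states that it is fiction."""
    out = prompt
    for word, replacement in HARM_SUBSTITUTIONS.items():
        # Whole-word, case-insensitive match, so "crashes" is left alone.
        out = re.sub(rf"\b{re.escape(word)}\b", replacement, out, flags=re.IGNORECASE)
    if not any(marker in out.lower() for marker in FICTION_MARKERS):
        out = f"Fictional, stylized scene: {out}"
    return out


print(soften("A car crash followed by an explosion"))
```

The swap is purely textual, so articles may need a manual fix afterwards (for example “an attack” becomes “an confrontation”).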

Real-World Case Study

Failed prompt: “Realistic video of a protest with chaos and injuries.”

Safe-Frame version: “Cinematic fictional dystopian scene showing a tense crowd moment with dramatic lighting and stylized motion.”

Same visual concept, opposite moderation outcome. The Safe-Frame version passed and was generated successfully. The core scene stayed the same; what changed was the set of signals the prediction system received about what the output was intended to represent.

Technical Fixes Beyond Prompting

Reset session memory. If multiple prompts fail in quick succession, start a new session. The account standing signal discussed above suggests that consecutive failures within a session increase sensitivity. A fresh session resets that threshold.

Clear cache (X app). Settings → Data → Clear Storage. Useful specifically for repeated instant failures that happen before any generation begins.

Switch rendering mode. Quality Mode applies stricter moderation. Fast Mode is more lenient because it generates lower-resolution output that’s less likely to cross the realism threshold. If a prompt keeps failing in Quality Mode, Fast Mode is worth trying first.

Avoid immediate upscaling. If a video generates successfully, wait before upscaling. Slightly adjusting the prompt before upscaling — adding “stylized” or “artistic” — can prevent the realism threshold from being crossed at that stage.
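The four fixes above amount to a small decision procedure, sketched below. The function name, the string labels, and the cutoff of three consecutive failures are assumptions made for illustration; the article's advice does not specify exact numbers.

```python
MAX_CONSECUTIVE_FAILURES = 3  # hypothetical cutoff, not an xAI number


def next_step(consecutive_failures: int, mode: str, failed_at: str) -> str:
    """Suggest the next fix to try.

    mode: "quality" or "fast".
    failed_at: "instant", "generation", or "upscale".
    """
    if consecutive_failures >= MAX_CONSECUTIVE_FAILURES:
        return "start a new session"
    if failed_at == "instant" and consecutive_failures >= 2:
        return "clear the X app cache"
    if failed_at == "upscale":
        return "add 'stylized' or 'artistic' to the prompt, then upscale again"
    if mode == "quality":
        return "retry in Fast Mode"
    return "rework the prompt wording"


print(next_step(consecutive_failures=1, mode="quality", failed_at="generation"))
```

The ordering reflects one reasonable reading of the advice: session-level problems are handled first, then stage-specific ones, then the rendering mode.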

Common Mistakes That Keep Users Blocked

- Overusing “realistic,” “photorealistic,” or “raw” descriptors

- Copying bypass techniques from outdated Reddit threads (the system has since been updated, and those tricks no longer apply to the 2026 prediction model)

- Repeating failed prompts unchanged and expecting a different result

- Mixing safe and unsafe signals in one prompt — a single high-risk phrase can override an otherwise clean prompt’s risk score

Can Grok Video Moderation Be Turned Off?

No. All users, regardless of subscription tier, operate under the same safety guardrails. There is no option to disable moderation, no bypass toggle, and no manual appeal process for video moderation as of April 2026.

This isn’t a product limitation waiting to be unlocked — it’s a deliberate architectural decision. xAI’s approach to safety infrastructure has consistently prioritized building moderation into the generation pipeline rather than applying it as an optional layer on top. While the Grok Spicy Mode allows for more personality and “edgy” conversational tone in text, visual outputs like video remain subject to strict post-generation scanning and filtering to prevent the creation of non-consensual or prohibited content.

Why Moderation Is Stricter in 2026 (The Context That Matters)

This isn’t a product preference. It’s a response to AI-generated misinformation risks, documented deepfake misuse at scale, and increasing regulatory pressure across multiple jurisdictions. The risks posed by AI-generated synthetic media in 2026 have moved from theoretical to documented, which is why platforms like Grok have shifted from reactive filtering to predictive safety models.

Understanding how Grok compares to other AI platforms on content moderation helps set expectations — xAI’s approach is deliberately more permissive than some competitors in certain areas and stricter in others, with video generation sitting firmly in the stricter category.

Quick Fix Checklist

When “video moderated” appears:

- Remove realism-heavy wording (“realistic,” “8K,” “raw footage”)

- Add “fictional” or “cinematic” to the prompt

- Avoid real-world locations or recognizable settings

- Switch to stylized output framing

- Restart the session if multiple consecutive failures occurred

- Avoid immediate upscaling after successful generation

Frequently Asked Questions

Q. What does “video moderated” mean on Grok?

“Video moderated” on Grok means the system blocked your request because it predicts the generated video could violate safety or realism policies.

Unlike older filters, Grok Imagine 1.0 evaluates the likely output, not just the prompt text, and stops generation before rendering if risk is detected.

Q. Why does Grok block realistic videos?

Grok blocks highly realistic videos because they increase the risk of misuse, especially for deepfakes or real-world simulations.

The 2026 system analyzes realism signals (like “photorealistic” or “CCTV footage”) and assigns a risk score before generation begins.

Q. How do I fix “Grok video moderated” errors?

To fix “Grok video moderated,” use the Safe-Frame Protocol:

- Stylize the output (avoid realism)

- Clearly state that the content is fictional

- Remove real-person references

- Replace harm-related language

- Use fantasy or cinematic settings

These changes lower the system’s predicted risk and allow generation.

Q. Why does Grok fail at 99% completion?

Grok can fail at 99% because of final-frame moderation.

During rendering, the system re-checks generated frames. If they appear too realistic or resemble real people, the video is blocked even after initial approval.

Q. Does upscaling cause Grok moderation errors?

Yes, upscaling can trigger moderation errors on Grok.

Increasing resolution adds detail, which can push the video past the realism threshold and activate safety filters after generation.

Q. Can repeated moderation flags affect my Grok account?

Repeated “video moderated” flags may temporarily increase sensitivity within a session.

While xAI has not officially confirmed this, user patterns suggest that multiple failed prompts can lead to stricter moderation behavior.

Q. How do I avoid “video moderated” on Grok in the future?

To avoid “video moderated” errors on Grok:

- Avoid “realistic” or “raw footage” phrasing

- Use “cinematic,” “animated,” or “fictional” descriptors

- Keep prompts clearly creative, not real-world

- Restart sessions after repeated failures

Final Verdict

“Grok video moderated” is no longer about what was typed. It’s about what the system believes the video could become.

Once that shift is understood, the approach changes: stop fighting the model’s prediction logic and start working with it. The Safe-Frame Protocol isn’t a workaround — it’s how prompting for video generation actually works in 2026.


Disclaimer: This guide is shared in good faith to help you understand how Grok video moderation works. Since platforms like Grok are constantly evolving, some details may change over time. We’re not affiliated with xAI or Grok, but we hope this helps you navigate the experience more easily.