AI is no longer just a tool patients occasionally use. It is becoming part of the psychological environment that shapes how people think, rehearse emotions, and construct narratives before they ever enter therapy.

But while this shift is real, the clinical evidence is still emerging. What we are observing today is not a settled diagnostic category—but an early signal of behavioral change that psychiatry is only beginning to understand.

The “Shadow Intake” Phenomenon (Emerging Concept, Not Yet a Clinical Standard)

Traditional clinical intake focuses on sleep, mood, trauma history, substance use, and social context.

In 2026, that framework may be incomplete—not because it is wrong, but because it is missing a new layer of pre-therapy cognitive processing.

We can tentatively describe this as:

Shadow Intake (proposed concept):

A patient’s informal emotional and cognitive processing that occurs through AI chat systems before clinical contact.

Importantly, this is not yet a validated clinical construct. It is an observational hypothesis based on evolving user behavior patterns.

What appears to be happening in practice

Some patients may:

- Rehearse emotional narratives with AI before therapy

- Refine how they describe trauma or distress

- Explore interpretations of their feelings through conversational models

However, this rehearsal does not necessarily distort what patients bring to therapy. In some cases, it may help them organize thoughts they previously struggled to articulate.

The clinical significance of this behavior is still under study.

Diagnostic Signal vs Psychological Risk: A Dual Interpretation

AI interactions often occur in private, emotionally vulnerable moments—late-night distress, isolation, or anxiety spikes.

This creates two possible interpretations:

1. Diagnostic opportunity (underexplored)

AI interactions may provide insight into:

- thought patterns outside therapy

- language patients use when unfiltered by clinical settings

- emotional states they may not disclose directly

2. Cognitive risk (still hypothetical in scale)

Some studies and early commentary suggest possible risks such as:

- reinforcement of negative thinking patterns in some users

- over-validation of distress without therapeutic challenge

- compulsive reassurance-seeking behaviors in vulnerable individuals

However, these effects are not uniform, and evidence remains limited and context-dependent.

AI as Support vs AI as Reinforcement

A key clinical distinction is emerging:

AI systems are generally designed to be helpful, coherent, and non-confrontational. This can produce two different outcomes depending on the user:

- For some: emotional stabilization and clarity

- For others: reinforcement of existing beliefs without corrective feedback

This is not an inherent flaw in AI but a design constraint of conversational systems optimized for usability and safety rather than for clinical intervention.

The Friction Model: A Useful Metaphor, Not a Rule

It is often argued that:

- AI provides comfort and validation

- Therapy provides challenge and restructuring

This is directionally useful but oversimplified.

Psychotherapy research, including work published in journals such as JAMA Psychiatry, broadly suggests that effective therapy depends on:

- therapeutic alliance

- calibrated emotional challenge

- psychological safety that does not reinforce avoidance

Too much friction can damage engagement. Too little can maintain avoidance.

So the difference is not “AI vs therapy,” but unstructured comfort vs structured therapeutic calibration.

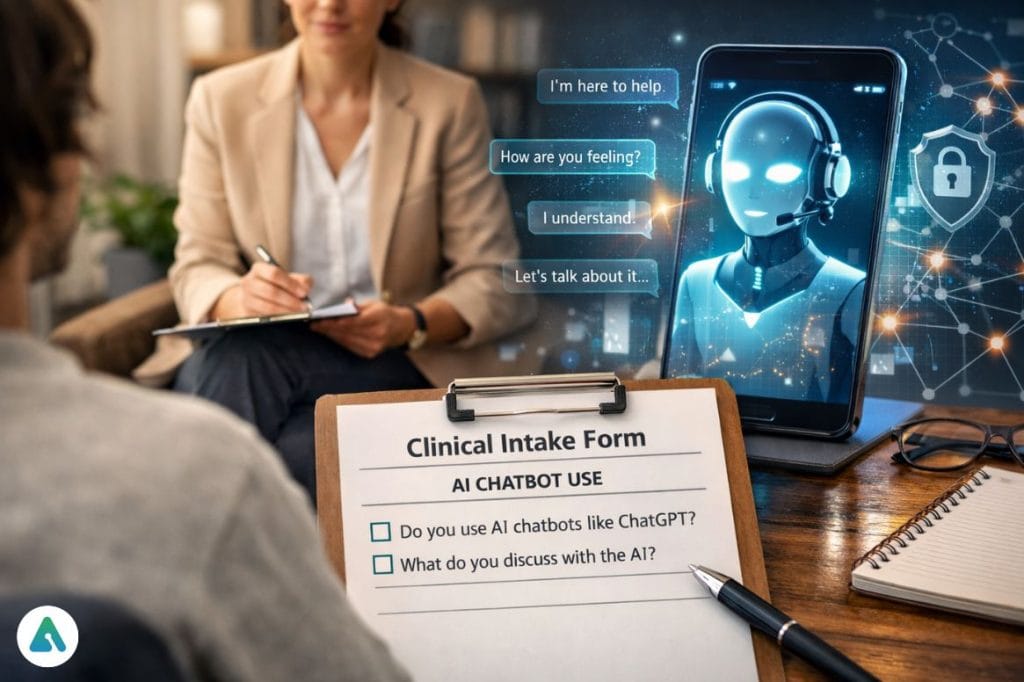

Should Therapists Ask About AI Use? Yes—but Carefully

There is growing clinical rationale for including AI use in intake discussions, but it must be done without pathologizing the behavior.

A neutral entry question might be:

“Many people use AI tools like ChatGPT, Character.AI, or other chat systems to reflect on emotions or situations. Have you used anything like that while thinking about what brought you here?”

This opens the topic without assuming harm.

A More Precise Concept: Agentic Avoidance (Tentative)

One behavior worth clinical attention is what could be called:

Agentic Avoidance:

Using AI systems to outsource emotionally difficult interpersonal tasks.

Examples may include:

- drafting messages to avoid conflict

- rehearsing conversations instead of having them

- looping through reassurance-seeking with AI instead of turning to other people

However, this behavior is not inherently pathological. It may also function as a transitional coping strategy.

Platform Differences Matter

Not all AI use is psychologically equivalent.

- Structured AI tools → cognitive clarification, problem-solving

- Character-based systems → relational simulation, emotional attachment risk in some users

But these are tendencies, not rules. Outcomes depend heavily on individual vulnerability, intent, and usage patterns.

Privacy and Clinical Responsibility: A Nuanced Reality

Conversations with consumer AI systems are generally not covered by clinical confidentiality frameworks such as HIPAA.

However, the risk landscape is more complex:

- Some enterprise AI deployments now include compliance safeguards

- Data retention and training policies vary by provider

- Legal protections differ by jurisdiction and use case

Clinicians should inform patients without overstating uniform risk or assuming all platforms function identically.

The Clinical Reality in 2026

AI is not replacing therapy. But it is increasingly shaping how patients arrive in therapy.

The first draft of many emotional narratives is no longer written in isolation—it is written in conversation with machines.

The challenge for clinicians is to treat AI not as a competitor but as part of the patient’s cognitive ecosystem.

Final Clinical Position

The most accurate framing today is not alarmist or dismissive, but integrative:

- AI may influence emotional processing before therapy

- Effects are mixed, not universally harmful or beneficial

- Evidence is still emerging and context-dependent

- Clinical intake may need to evolve to include digital behavioral history

The goal is not to pathologize AI use—but to understand it as part of the modern mind’s environment.
