AI orchestration is the unsexy plumbing that everyone ignores until their budget explodes.

In 2026, the gap between a viral social media demo and a production-ready system isn’t “better prompts”—it’s architectural survival. Most teams are still high on frontier-model dopamine, but the reality of the current landscape is that coordination is your biggest liability.

If you’re still building with simple, linear chains, you aren’t building a product; you’re building a system that will break, loudly and expensively, the moment it hits its first real-world edge case. Access to models is no longer the bottleneck. The struggle now is keeping those models from spiraling into hallucination loops or detonating your infrastructure budget through unchecked recursive calls.

This guide addresses that gap directly. We’re skipping the surface-level definitions to dive into the failure modes that actually matter:

-

The Orchestration Tax: The real cost realities of multi-agent coordination.

-

The Goldfish Problem: Why your memory layer is quietly destroying your margins.

-

Orchestration Drift: Why a system that works today will be garbage in three months if it isn’t maintained.

-

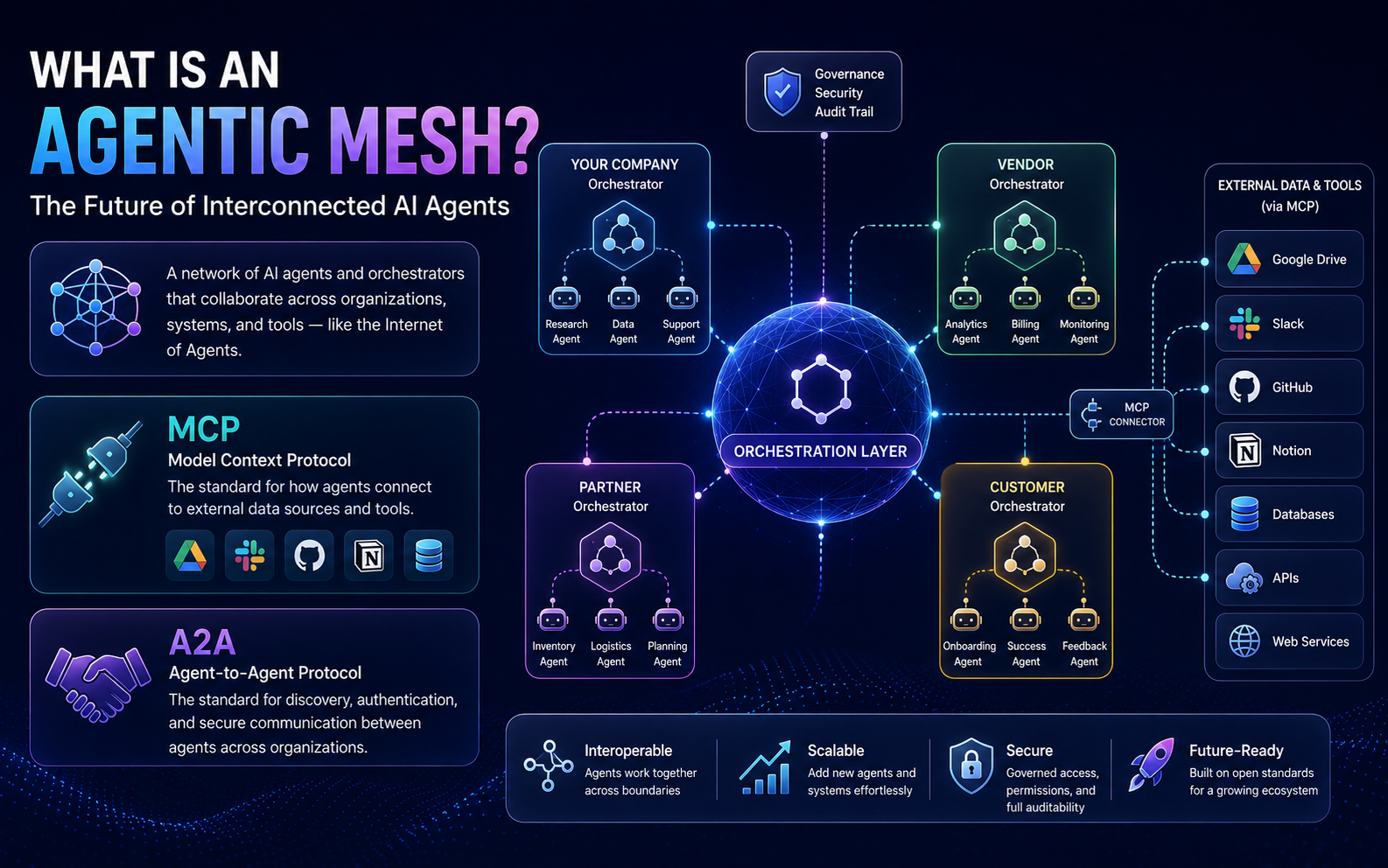

The Agentic Mesh: How to build using interoperability standards (MCP and A2A) so your stack doesn’t become a legacy island.

You’ll learn how to build a system that stays aligned over time—not just one that looks good during a launch presentation. If you want to move past the “chatbot” phase and into reliable, governed AI systems, you have to master the plumbing.

What AI Orchestration Actually Is

AI orchestration is the process of coordinating multiple AI models, agents, tools, and data systems into a unified workflow that executes tasks automatically, reliably, and at scale. In practical terms, it connects everything so your AI system behaves like one intelligent system rather than a collection of disconnected tools that occasionally cooperate.

The agents-versus-orchestration distinction matters and confuses most people early on. An AI agent executes a specific task: it writes, analyzes, decides, or calls an API. An orchestrator manages how agents work together: it routes, sequences, monitors, and handles failures. The difference between an AI agent and a chatbot is significant on its own, but the difference between an agent and an orchestration system is even larger. Agents are specialists. Orchestration is the operating system they run on.

Why DAG-Based AI Orchestration Replaced Traditional AI Chains

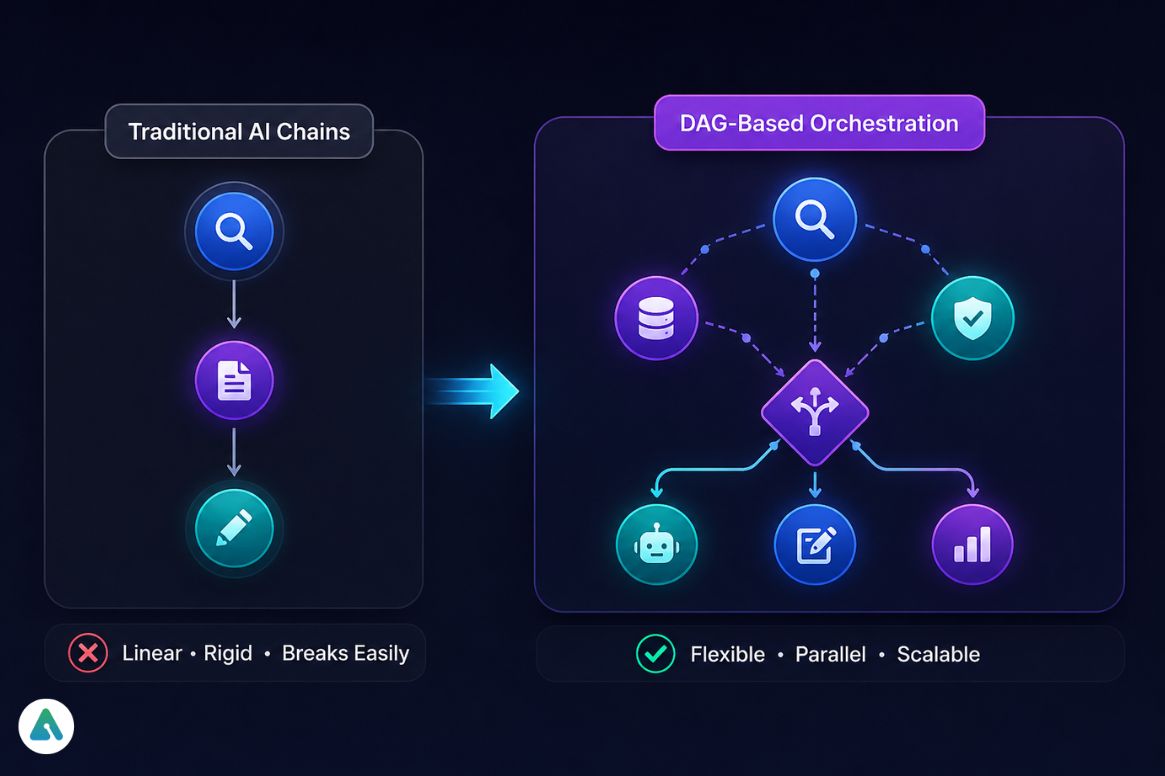

Early AI workflow tools relied on linear chains: Step 1 → Step 2 → Step 3. The appeal was simple. The problem was brittleness — no flexibility, breaks on any step failure, and zero ability to handle complex workflows where steps need to run in parallel or branch based on intermediate results.

What replaced it: Directed Acyclic Graph (DAG) orchestration. DAGs allow parallel execution, conditional branching, and dynamic routing. Instead of:

Research → Write → Edit

You get:

Research (parallel with) Data Validation

↓

Routing decision

↓

Multiple agents collaborate
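As a rough illustration, the graph above can be run with nothing more than Python’s standard-library `graphlib.TopologicalSorter` and a thread pool. This is a minimal sketch, not how any particular framework works: the node names and the stub functions (`research`, `validate_data`, `route`, `collaborate`) are hypothetical placeholders for real agent calls.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter

# Hypothetical stand-ins for real agent or model calls.
def research(done):
    return "research notes"

def validate_data(done):
    return "data ok"

def route(done):
    # Conditional branch based on an intermediate result.
    return "writer" if done["validate_data"] == "data ok" else "human_review"

def collaborate(done):
    return f"{done['route']} drafts from {done['research']}"

TASKS = {"research": research, "validate_data": validate_data,
         "route": route, "collaborate": collaborate}

# Each node maps to the set of nodes it depends on.
GRAPH = {
    "research": set(),
    "validate_data": set(),
    "route": {"research", "validate_data"},
    "collaborate": {"route"},
}

def run_dag(graph, tasks):
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    results = {}
    with ThreadPoolExecutor() as pool:
        while sorter.is_active():
            ready = sorter.get_ready()  # nodes whose dependencies are all done
            snapshot = dict(results)    # read-only view shared by this batch
            futures = {name: pool.submit(tasks[name], snapshot) for name in ready}
            for name, future in futures.items():
                results[name] = future.result()
                sorter.done(name)
    return results

results = run_dag(GRAPH, TASKS)
```

Here `research` and `validate_data` are submitted in the same batch and run concurrently, while `route` only starts once both have finished. The scheduler waits for a whole batch before asking for more work, which keeps the sketch short at the cost of some parallelism.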

LangGraph is currently one of the most widely used frameworks for graph-based orchestration among developers. Strictly speaking, its graphs can also contain cycles, so it is not limited to DAGs, but it covers the parallel and branching patterns described here. For enterprise contexts, Vertex AI Pipelines and Microsoft AutoGen bring similar graph-based logic with managed infrastructure and compliance tooling layered on top. The choice between them isn’t primarily about capability; it’s about where your team’s expertise sits and what your compliance requirements demand.

AI Orchestration Architecture Explained: The 5 Core Layers

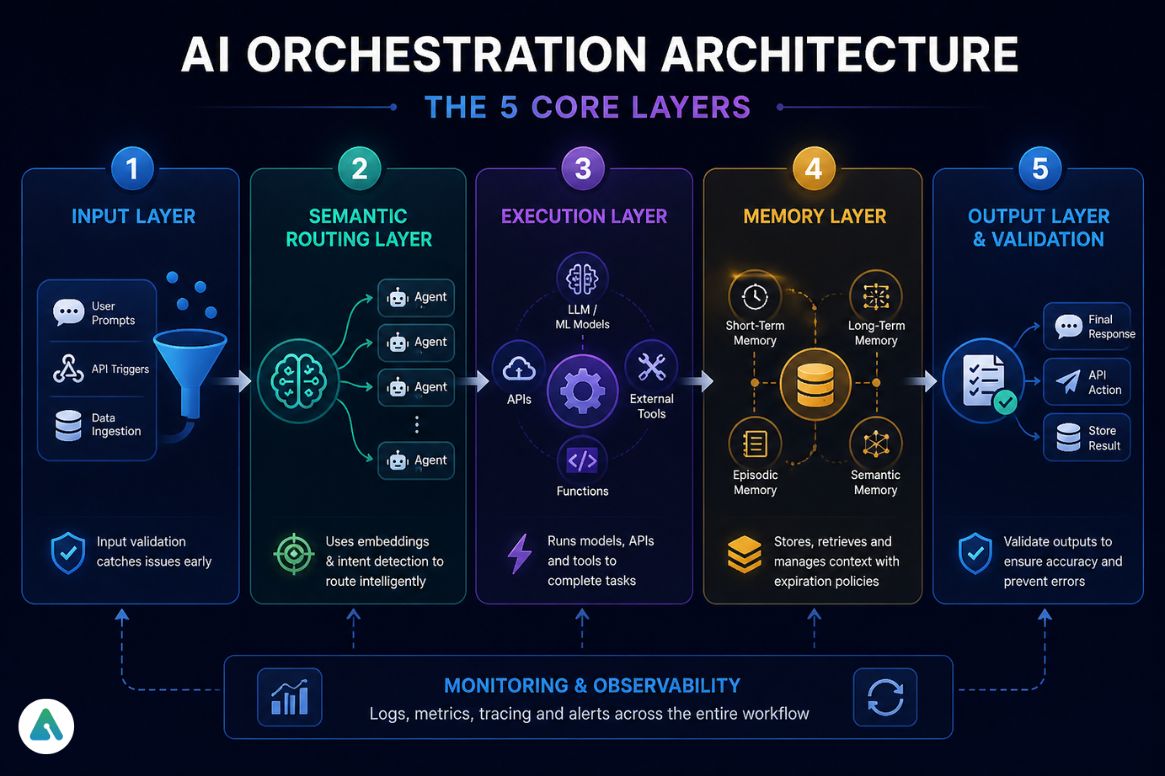

1. AI Orchestration Input Layer

User prompts, API triggers, and data ingestion. Nothing interesting here architecturally — but input validation at this layer catches a significant class of downstream failures before they become expensive.

2. What Is Semantic Routing in AI Orchestration?

Semantic routing has largely replaced rule-based routing in new systems, and it’s the upgrade most legacy systems haven’t made yet. It uses embeddings and intent detection to decide which model or agent handles a given input dynamically, based on meaning rather than keyword matching.

The practical difference: a rule-based router sends anything containing the word “invoice” to your finance agent. A semantic router understands that “can you pull up that billing thing from last quarter” is also an invoice query — and routes it correctly without a new rule being written.
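The routing mechanics can be sketched in a few lines: embed the query, compare it against example utterances for each agent, and pick the closest match above a threshold. Everything named here is an assumption for illustration. In particular, `toy_embed` is a bag-of-words stand-in so the example runs without dependencies; it only matches shared words, so a real router would pass in a sentence-embedding model through the `embed` parameter to get genuinely semantic matching.

```python
import math
from collections import Counter

def toy_embed(text):
    # Stand-in embedding: word counts. Replace with a real embedding model.
    return Counter(text.lower().split())

def cosine(a, b):
    dot = sum(a[token] * b[token] for token in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

# Hypothetical agents, each described by a few example utterances.
ROUTES = {
    "finance_agent": ["show me the invoice",
                      "billing statement from last quarter",
                      "payment receipt"],
    "support_agent": ["the app crashes on login",
                      "reset my password",
                      "bug report"],
}

def route(query, embed=toy_embed, threshold=0.3, fallback="general_agent"):
    query_vec = embed(query)
    best_agent, best_score = fallback, 0.0
    for agent, examples in ROUTES.items():
        score = max(cosine(query_vec, embed(example)) for example in examples)
        if score > best_score:
            best_agent, best_score = agent, score
    # Below the threshold, no route is trusted and the fallback handles it.
    return best_agent if best_score >= threshold else fallback

chosen = route("can you pull up that billing thing from last quarter")
```

The point of the design is that adding coverage means adding example utterances, not writing new rules, and the threshold gives you an explicit place to send low-confidence queries.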

3. AI Execution Layer: How AI Orchestrators Run Models & Tools

Runs LLMs and ML models, calls APIs and external tools. The execution layer is where most developers spend their time initially and where most of the interesting capabilities live. It’s also not where most production failures originate — that’s the next layer.

4. AI Memory Layer Explained: Long-Term Memory, Vector Databases & Context Retrieval

Memory is where orchestration systems either scale or collapse. And it’s where costs compound in ways that aren’t obvious until you’re already over budget.

We once saw a customer support orchestrator get stuck retrieving a bug report from 2024 to solve a 2026 issue — because the memory system had no expiration rules and the embedding similarity score was high enough to pull the stale record. The fix wasn’t better prompts. It was adding context pruning, memory validation, and expiration policies. Without those, the system was confidently solving the wrong problem with high-quality reasoning.

Short-Term Memory lives inside the prompt context window. Fast, easy to implement, and limited by the token window size. Expensive at scale because every inference pass carries the full context.

Long-Term Memory uses vector databases — Pinecone, Weaviate, FAISS — to store embeddings and retrieve relevant context through semantic search (RAG). This solves the token window problem but adds 200–800ms per retrieval and introduces storage overhead that compounds across a multi-agent system.

Episodic Memory tracks agent actions and history, preventing repeated mistakes across sessions. Essential for multi-agent systems where agents need to know what other agents have already tried.

Semantic Memory stores structured knowledge, often in knowledge graphs, for fact retrieval that requires precision rather than approximate similarity.

The memory flow in a real system: input received → semantic router detects intent → relevant memory retrieved via vector search → context injected into prompt → agent executes → output validated → stored back into memory with expiration metadata.

That last step — expiration metadata — is what most early implementations skip and almost always regret.
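A minimal sketch of what expiration metadata can look like at retrieval time, assuming each record stores when it was written and how long it stays valid. The `MemoryRecord` fields and the `retrieve` helper are hypothetical, and `similarity` stands in for the score a vector search would return.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class MemoryRecord:
    text: str
    similarity: float   # stand-in for the score a vector search would return
    stored_at: datetime
    ttl: timedelta      # expiration metadata written at storage time

    def is_expired(self, now):
        return now >= self.stored_at + self.ttl

def retrieve(records, now, min_similarity=0.75, top_k=3):
    """Drop expired records first, then rank what is left by similarity."""
    fresh = [r for r in records
             if not r.is_expired(now) and r.similarity >= min_similarity]
    return sorted(fresh, key=lambda r: r.similarity, reverse=True)[:top_k]

now = datetime(2026, 3, 1, tzinfo=timezone.utc)
records = [
    MemoryRecord("2024 bug report: login timeout", 0.93,
                 datetime(2024, 5, 1, tzinfo=timezone.utc), timedelta(days=180)),
    MemoryRecord("2026 incident: login timeout after SSO change", 0.88,
                 datetime(2026, 2, 10, tzinfo=timezone.utc), timedelta(days=180)),
]
context = retrieve(records, now)
```

The 2024 record has the higher similarity score, which is exactly the failure described earlier, but it never reaches the prompt because the expiry check runs before ranking.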

5. AI Orchestration Output Layer & Response Validation

Final response, API action, or stored result. Adding output validation here, before results reach downstream systems or users, is the cheapest place to catch hallucinations and routing errors.

Human-in-the-Loop: The Layer Enterprises Can’t Skip

Human-in-the-loop (HITL) is a control mechanism where humans review, approve, or override AI decisions within an orchestration system. In 2026, enterprise AI deployments treat HITL not as an optional safety feature but as a compliance requirement — particularly in finance, healthcare, and legal workflows where autonomous AI execution creates liability exposure.

HITL checkpoints serve four functions: approving critical outputs before they trigger downstream actions, validating memory updates before stale or incorrect data gets stored as truth, catching hallucinations that output-layer validation missed, and handling sensitive data that shouldn’t route through fully automated channels.

The architectural question isn’t whether to include HITL — it’s where to place the checkpoints without making the system slow enough to defeat its own purpose.

AI Governance Framework: Matching HITL Controls to Agent Risk

| Tier | Agent Type | HITL Requirement |
|---|---|---|
| Tier 1: Low Risk | Read-only analytics agents, reporting, and summarization | No HITL required; output validation layer sufficient |
| Tier 2: Medium Risk | Marketing agents, email campaigns, volume-capped communications | Batch approval before execution; human reviews queue |
| Tier 3: High Risk | Financial transaction agents, customer-facing decision systems, and PII-handling agents | Mandatory HITL on every action + RBAC scoping + full audit trail |

Applying Tier 3 controls to Tier 1 agents kills system throughput for no governance benefit. Applying Tier 1 controls to Tier 3 agents creates liability exposure. Map every agent in your system to a tier before deciding where checkpoints go.
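One way to make that mapping explicit is a small lookup that every agent call consults. This is a sketch under assumptions: the agent names and policy labels are invented for illustration, and defaulting unmapped agents to the strictest tier is a design choice of this example rather than something the tiers above mandate.

```python
from enum import Enum

class Tier(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3

# Policy labels are illustrative names for the three rows of the table.
HITL_POLICY = {
    Tier.LOW: "output_validation_only",
    Tier.MEDIUM: "batch_approval",
    Tier.HIGH: "approve_every_action",
}

# Hypothetical agents mapped to tiers.
AGENT_TIERS = {
    "reporting_agent": Tier.LOW,
    "email_campaign_agent": Tier.MEDIUM,
    "payments_agent": Tier.HIGH,
}

def hitl_requirement(agent_name):
    # Assumption: an agent nobody has classified is treated as high risk.
    tier = AGENT_TIERS.get(agent_name, Tier.HIGH)
    return HITL_POLICY[tier]
```

Keeping the tier map in one place also gives auditors a single artifact to review when a new agent is added.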

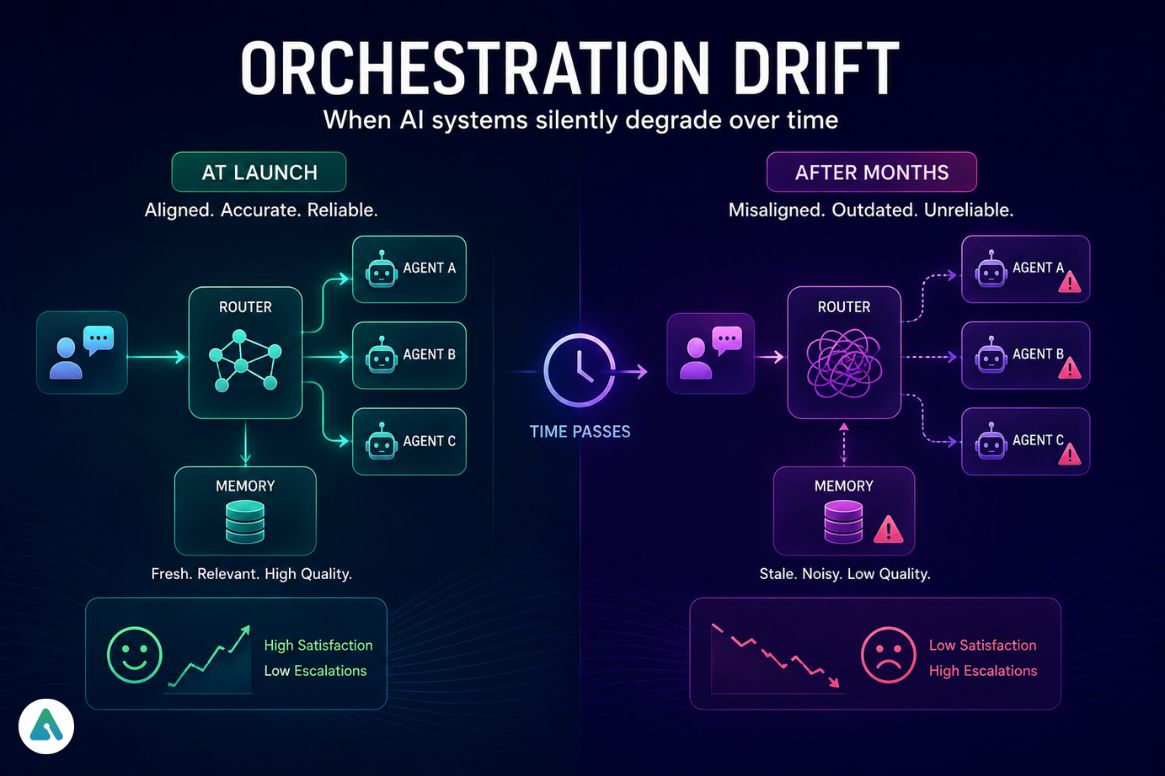

What Is Orchestration Drift in AI Systems?

Orchestration Drift is what happens when your system works perfectly at launch and silently degrades over the following months.

The mechanism: your semantic router was trained or configured against a specific distribution of user intents. As language evolves, user behavior shifts, and new use cases emerge, that routing logic becomes increasingly misaligned with what users actually mean. Meanwhile, your memory layer accumulates context — some of it outdated, some of it reflecting resolved issues, some of it simply wrong — and retrieval quality degrades as the signal-to-noise ratio worsens.

The result is a system that still runs without errors but produces increasingly poor outputs. Unlike infrastructure failures, Orchestration Drift doesn’t generate alerts. It shows up as declining user satisfaction scores and increasing escalation rates, weeks or months after the root cause.

Signs your orchestration system is drifting:

- Routing accuracy declining (agents receiving off-target tasks)

- Memory retrieval surfaces stale context more frequently

- Agents producing outputs that were accurate six months ago but aren’t now

- Increasing frequency of HITL overrides for the same categories of decisions

Maintenance practices that prevent drift:

- Scheduled routing logic audits against recent query distributions

- Memory expiration policies with regular pruning of low-confidence records

- Embedding freshness checks — semantic similarity degrades as language evolves

- Periodic re-evaluation of agent specialization boundaries as task distributions shift
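A scheduled routing audit can be as simple as comparing accuracy on a recent, hand-labeled sample against the accuracy measured at launch. The sketch below assumes you keep such a labeled sample; the function names, the 0.95 baseline, and the 5-point tolerance are illustrative values, not recommendations.

```python
def routing_accuracy(samples):
    """samples: (routed_agent, expected_agent) pairs from a labeled audit."""
    if not samples:
        raise ValueError("audit sample is empty")
    correct = sum(1 for routed, expected in samples if routed == expected)
    return correct / len(samples)

def drift_alert(baseline_accuracy, recent_samples, tolerance=0.05):
    # Flag drift when accuracy has dropped by more than the tolerance.
    recent = routing_accuracy(recent_samples)
    return (baseline_accuracy - recent) > tolerance, recent

# Hypothetical audit: one finance query was misrouted to support.
recent_audit = [
    ("finance", "finance"),
    ("support", "finance"),
    ("support", "support"),
    ("finance", "finance"),
]
drifting, accuracy = drift_alert(baseline_accuracy=0.95, recent_samples=recent_audit)
```

Because drift produces no errors, a check like this has to run on a schedule and raise its own alert; nothing else in the stack will.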

AI Agent Governance: RBAC, Audit Trails & Secure Agent Permissions

Agent Sandbox environments and Role-Based Access Control (RBAC) for agents are no longer optional in 2026 — they’re table stakes for any production system that touches sensitive data or external APIs.

The core governance principle: every agent should operate with the minimum permissions required for its designated tasks, isolated from systems it has no business touching. An agent responsible for drafting customer communications shouldn’t have write access to your CRM. An agent running data analysis shouldn’t be able to trigger financial transactions.

Beyond permission scoping, governance requires audit trails for every agent action — not just outputs, but intermediate decisions, tool calls made, and memory records accessed. When something goes wrong in a multi-agent system, reconstruction of what happened is nearly impossible without structured logging at the agent level.
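Permission scoping and audit logging fit naturally into a single choke point that every tool call passes through. A minimal sketch, assuming a hypothetical permission map and an in-memory log (a real system would write to durable, append-only storage):

```python
import json
from datetime import datetime, timezone

# Hypothetical least-privilege map: agent name -> tools it may call.
PERMISSIONS = {
    "drafting_agent": {"crm.read", "email.draft"},
    "analysis_agent": {"warehouse.read"},
}

AUDIT_LOG = []  # stand-in for durable, append-only storage

def call_tool(agent, tool, payload, tools):
    allowed = tool in PERMISSIONS.get(agent, set())
    # Log the attempt before acting, so denied calls are recorded too.
    AUDIT_LOG.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "tool": tool,
        "allowed": allowed,
        "payload": json.dumps(payload, sort_keys=True),
    })
    if not allowed:
        raise PermissionError(f"{agent} may not call {tool}")
    return tools[tool](payload)

# Hypothetical tool implementations.
TOOLS = {
    "crm.read": lambda p: {"customer": p["id"], "plan": "pro"},
    "crm.write": lambda p: "written",
}
```

With this in place, `call_tool("drafting_agent", "crm.read", {"id": 7}, TOOLS)` succeeds, while the same agent calling `crm.write` raises `PermissionError`, and both attempts end up in the log.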

The risks in AI systems in 2026 increasingly come not from model capability failures but from coordination failures — agents taking actions outside their intended scope because governance layers were either absent or poorly designed.

The AI Orchestration Tax: Real Costs, Latency & Infrastructure Overhead

Memory isn’t free. Neither is coordination. These costs compound in ways that aren’t visible in simple benchmarks.

| Cost Source | Real-World Impact |
|---|---|
| Memory retrieval latency | +200–800ms per retrieval operation |
| Multi-agent token overhead | Each agent pass consumes tokens; 4-agent systems can run 4–8x single-agent token costs |
| Vector database queries | Storage and compute costs scale with retrieval frequency |
| Validation loops | Each HITL or critic-agent pass adds latency and inference cost |
| Orchestration Drift recovery | Re-embedding, re-routing configuration, and memory pruning all require compute |

FinOps tooling for AI orchestration is now a distinct category. LangSmith provides observability for LangChain-based systems. For enterprise deployments, dedicated cost dashboards that track per-agent token consumption and retrieval costs are becoming standard — not because teams want to, but because without them, orchestration costs become invisible until they appear on an infrastructure invoice.

The practical rule: more agents don’t equal proportional quality gains, but operational costs often scale close to linearly. Budget for the orchestration tax before the system goes to production, not after.
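To see where a multiplier in the 4–8x range can come from, here is a back-of-the-envelope model. It assumes a sequential pipeline in which each agent receives the original context plus every earlier agent’s output; the token counts are invented for illustration, and real systems will differ.

```python
def pipeline_tokens(n_agents, base_context, output_per_agent):
    """Total tokens processed when each agent sees all prior outputs."""
    total = 0
    context = base_context
    for _ in range(n_agents):
        total += context + output_per_agent  # input read plus output written
        context += output_per_agent          # next agent inherits this output
    return total

single = pipeline_tokens(1, base_context=1000, output_per_agent=500)
four = pipeline_tokens(4, base_context=1000, output_per_agent=500)
ratio = four / single
```

With these numbers, one agent processes 1,500 tokens and four agents process 9,000, a 6x increase for 4x the agents, because the accumulated context is re-read on every pass.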

AI Orchestration FinOps Metrics Every Team Should Track

| Metric | What It Measures | Warning Signal |
|---|---|---|
| RPC (Requests Per Context) | Memory retrievals per user task | High RPC = drift or inefficiency in routing |
| TTR (Time To Route) | Milliseconds the semantic router adds to the cold start | TTR > 300ms suggests routing complexity needs pruning |
| Governance Overhead | % of tokens consumed by critic agents and validation loops vs. productive work | > 25% governance overhead = validation layer over-fitted |
| Memory Hit Rate | % of retrievals returning genuinely relevant context | Hit rate declining = embedding freshness issue or memory bloat |

Tracking these four metrics catches cost and quality problems before they compound. Most teams discover their RPC is far higher than expected — agents retrieving context on every turn when session-level caching would serve equally well at a fraction of the cost.
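If your orchestrator already logs per-task counters, the four metrics reduce to a few sums. The sketch below assumes a hypothetical `TaskLog` record with the fields shown; the 300ms and 25% thresholds come from the table above.

```python
from dataclasses import dataclass

@dataclass
class TaskLog:
    retrievals: int            # memory retrievals made for this task
    relevant_retrievals: int   # retrievals judged relevant
    route_ms: float            # time spent in the semantic router
    governance_tokens: int     # tokens used by critics and validation
    total_tokens: int

def finops_metrics(logs):
    retrievals = sum(log.retrievals for log in logs)
    relevant = sum(log.relevant_retrievals for log in logs)
    return {
        "rpc": retrievals / len(logs),
        "ttr_ms": sum(log.route_ms for log in logs) / len(logs),
        "governance_overhead": (sum(log.governance_tokens for log in logs)
                                / sum(log.total_tokens for log in logs)),
        "memory_hit_rate": relevant / retrievals if retrievals else 0.0,
    }

def warning_signals(metrics):
    signals = []
    if metrics["ttr_ms"] > 300:
        signals.append("TTR above 300 ms")
    if metrics["governance_overhead"] > 0.25:
        signals.append("governance overhead above 25%")
    return signals

logs = [TaskLog(4, 2, 250.0, 300, 1000), TaskLog(6, 3, 450.0, 300, 1000)]
metrics = finops_metrics(logs)
signals = warning_signals(metrics)
```

RPC and Memory Hit Rate have no fixed threshold in the table, so this sketch only reports them; in practice you would alert on their trend over time.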

Best AI Orchestration Tools in 2026 Compared

| Tool | Best For | Learning Curve | Enterprise Ready |
|---|---|---|---|
| LangGraph | DAG-based orchestration | High | High |
| LangChain | Developer workflows, prototyping | High | Medium |
| Microsoft AutoGen | Multi-agent collaboration | Medium | High |
| CrewAI | Lightweight multi-agent systems | Medium | Medium |
| Vertex AI Pipelines | GCP-native enterprise pipelines | Medium | High |
| UiPath Maestro | Enterprise RPA + AI orchestration | Medium | High |
| n8n | No-code automation | Low | Medium |
| Airflow | Data pipeline orchestration | High | High |
| Haystack | NLP-specific pipelines | Medium | Medium |

LangGraph vs. CrewAI — the decision that trips up most teams: LangGraph gives you fine-grained control over graph topology and is the right choice when your workflow has complex conditional logic, parallel branches, or non-standard routing requirements. CrewAI is faster to implement for standard multi-agent patterns where the agent roles are well-defined and the interaction patterns are relatively straightforward. If you’re prototyping, start with CrewAI. If you’re building something that will need to evolve significantly, LangGraph’s flexibility pays off.

Microsoft AutoGen is worth attention specifically for enterprise multi-agent use cases: it handles conversational agent coordination and has stronger built-in governance tooling than most open-source alternatives.

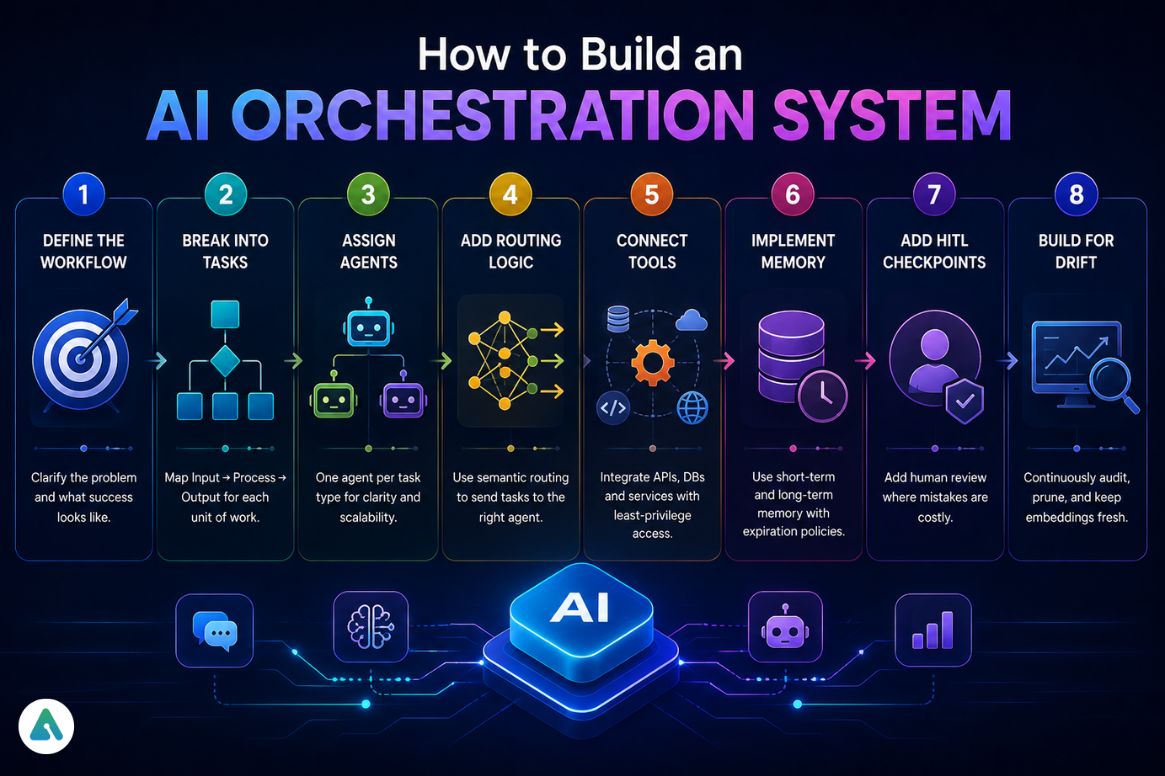

How to Build an AI Orchestration System Step-by-Step

Step 1 — Define the workflow. What problem are you actually solving? What does success look like in production, not in a demo?

Step 2 — Break into tasks. Input → Process → Output for each discrete unit of work. If a “task” requires multiple models or decision points, break it down further.

Step 3 — Assign agents. One agent per task type. Agents with broader responsibilities become bottlenecks and are harder to debug.

Step 4 — Add routing logic. Implement semantic routing from the start. Rule-based routing creates technical debt that compounds every time user behavior shifts.

Step 5 — Connect tools. APIs, databases, external services. Document every tool call each agent can make and restrict access to what’s necessary.

Step 6 — Implement memory with expiration. Short-term for in-session context. Long-term vector storage for persistent knowledge. Add expiration policies before launch, not after you encounter stale-retrieval problems.

Step 7 — Add HITL checkpoints. Identify the decisions where wrong outputs are expensive. Put humans in that loop. Automate everything else.

Step 8 — Build for Orchestration Drift. Schedule routing audits, memory pruning, and embedding freshness checks as recurring operational tasks — not one-time setup work.

Real-World AI Orchestration Case Study: Multi-Agent Content Engine

Workflow: Keyword input → Research agent collects SERP data → Analysis agent identifies content gaps → Writing agent generates article → SEO agent optimizes output.

What worked: Parallel execution of research and gap analysis cut total workflow time significantly. Semantic routing correctly directed technical queries to a specialized model and general queries to a faster, cheaper model — reducing inference costs without degrading output quality.

What broke first: Memory retrieval started surfacing outdated competitive data after three months. Articles were being optimized against a search landscape that had changed. Adding memory expiration rules tied to data freshness timestamps resolved the issue — but it required a retroactive audit of everything stored during the first quarter of operation.

Results after fixing: 60–70% faster content production, more consistent output quality, and a cost profile that stayed predictable rather than creeping upward.

What Is an Agentic Mesh? The Future of Interconnected AI Agents

An agentic mesh is a network of interconnected AI agents operating across organizational and system boundaries, coordinated through orchestration layers. Think of it as the Internet of Agents — where your company’s orchestrator talks to a vendor’s orchestrator, which coordinates with a partner’s agents, all without direct human intervention in the handoff.

Orchestration becomes the connective tissue of this mesh. The implication: in 2026, building an orchestration system isn’t just about internal workflow efficiency. It’s about how your AI infrastructure will interface with other organizations’ AI infrastructure. The governance, permission scoping, and audit trail requirements for a single internal orchestrator become significantly more complex when that orchestrator becomes a node in a larger mesh.

Two emerging standards are becoming the “USB ports” of this ecosystem — and knowing them is now baseline knowledge for anyone building serious orchestration systems.

MCP (Model Context Protocol)

Anthropic’s open standard for how agents fetch data from external sources — Google Drive, Slack, GitHub, Notion — without requiring custom connectors for each integration. Before MCP, connecting an agent to a new data source meant writing and maintaining a bespoke integration. With MCP, the connection is standardized: the agent speaks MCP, the data source speaks MCP, and the handshake is automatic. Teams using MCP-compatible tools in 2026 cut connector maintenance overhead significantly compared to teams still maintaining custom integrations per source.
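On the wire, MCP messages are JSON-RPC 2.0, and the specification defines methods such as `tools/list` and `tools/call`. The sketch below only builds the request payloads to show their shape. The tool name `search_files` and its arguments are hypothetical, and a real client would use an MCP SDK, which also handles the `initialize` handshake and the transport.

```python
import json
from itertools import count

_request_ids = count(1)

def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request string."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)

# Ask the server which tools it exposes.
list_request = jsonrpc_request("tools/list")

# Invoke one tool by name. "search_files" is a made-up tool for illustration.
call_request = jsonrpc_request(
    "tools/call",
    {"name": "search_files", "arguments": {"query": "Q3 invoice"}},
)
```

The standardization lives in this message shape: any server that answers `tools/list` and `tools/call` can be plugged in without a bespoke connector.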

A2A (Agent-to-Agent Protocol)

Google’s open standard for discovery and handshakes between agents owned by different organizations. A2A defines how one company’s orchestrator announces its agents’ capabilities, how another company’s orchestrator discovers and authenticates them, and how tasks get delegated across organizational boundaries without exposing internal infrastructure. Where MCP solves the agent-to-data connection problem, A2A solves the agent-to-agent connection problem at the mesh level.
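Capability announcement in A2A is built around an “Agent Card”, a JSON document describing an agent and its skills. The sketch below shows discovery over a list of such cards. The cards are loosely modeled on that idea: the field names, agents, and URLs here are assumptions for illustration, so check the A2A specification for the exact schema, and note that authentication is left out entirely.

```python
# Hypothetical agent cards from two partner organizations.
AGENT_CARDS = [
    {
        "name": "Vendor Invoice Agent",
        "description": "Reconciles invoices against purchase orders.",
        "url": "https://vendor.example/a2a",
        "version": "1.0.0",
        "skills": [
            {"id": "reconcile-invoices",
             "name": "Invoice reconciliation",
             "description": "Match invoices to purchase orders.",
             "tags": ["finance", "invoices"]},
        ],
    },
    {
        "name": "Partner Logistics Agent",
        "description": "Tracks shipments.",
        "url": "https://partner.example/a2a",
        "version": "2.1.0",
        "skills": [
            {"id": "track-shipment",
             "name": "Shipment tracking",
             "description": "Look up shipment status.",
             "tags": ["logistics"]},
        ],
    },
]

def discover(cards, required_tag):
    """Return (agent name, skill id, endpoint) for skills carrying the tag."""
    matches = []
    for card in cards:
        for skill in card.get("skills", []):
            if required_tag in skill.get("tags", []):
                matches.append((card["name"], skill["id"], card["url"]))
    return matches

finance_agents = discover(AGENT_CARDS, "finance")
```

The orchestrator only ever sees the published card and endpoint, which is how delegation can happen without exposing the other organization’s internal infrastructure.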

Together, MCP and A2A are pushing AI orchestration toward genuine interoperability, much as HTTP standardized how web clients and servers communicate. Systems built against these standards now will be significantly easier to extend as the mesh matures.

AI Orchestration Best Practices for Architects

- Replace linear chains with DAG-based orchestration (LangGraph or equivalent)

- Separate semantic routing from execution — don’t hardcode routing logic

- Implement memory expiration from day one — not as a fix later

- Plan for Orchestration Drift as an operational reality, not an edge case

- Scope agent permissions tightly — RBAC for agents is a governance requirement, not a nice-to-have

- Budget for the orchestration tax before production, not after

- HITL checkpoints belong at high-stakes decision points, not everywhere

Common AI Orchestration Mistakes That Break Production Systems

- Over-relying on one model when task diversity demands specialization.

- Ignoring latency and cost until infrastructure bills arrive.

- Poor routing logic that becomes increasingly misaligned as user behavior evolves.

- Storing unvalidated outputs as memory truth.

- No monitoring or observability. Systems that fail silently are worse than systems that fail loudly.

- Overcomplicated workflows where a single model would genuinely solve the problem.

The last one is underrated. Orchestration adds real complexity and real cost. The right answer is sometimes just a well-prompted single model. Use orchestration when multiple AI tools are genuinely involved, workflows branch or require validation, or automation at scale is the actual requirement. Skip it when a single model solves the problem cleanly.

AI Orchestration Health Check Checklist for Production Systems

Production orchestration systems degrade silently. This checklist catches the most common failure modes before they surface as user complaints or infrastructure bills.

- Router Re-calibration: Sample recent queries and verify they’re routing to the correct agents. If intent distributions have shifted, update routing embeddings to match current language patterns.

- Memory Pruning: Delete long-term memory records with a freshness score below 0.4. Stale records don’t just waste storage — they actively degrade retrieval quality by competing with current context.

- Credential Rotation: Audit agent-specific API keys and RBAC permissions. Remove access that was granted for completed projects. Rotate keys that haven’t been rotated in 90+ days.

- Embedding Freshness: Check whether your vector database needs re-indexing due to terminology shifts. Technical language evolves fast — embeddings trained six months ago may underperform on current query distributions.

- Governance Overhead Audit: Calculate your current Governance Overhead metric. If the critic agent token consumption exceeds 25% of total tokens, your validation layer needs calibration.

- A2A/MCP Compatibility Check: If you’re connected to external agent meshes or data sources via MCP or A2A, verify that protocol versions are current and no deprecated endpoints are still active.
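The Memory Pruning item depends on how a freshness score is computed, which the checklist leaves open. One possible definition, shown here purely as an assumption, is exponential decay from the date a record was last confirmed: a score of 1.0 when new, halving every `half_life_days`. With a 90-day half-life, the 0.4 cutoff is reached after roughly 119 days.

```python
from datetime import datetime, timedelta, timezone

def freshness(last_confirmed, now, half_life_days=90.0):
    """Assumed scoring: 1.0 when new, halving every half_life_days."""
    age_days = (now - last_confirmed).total_seconds() / 86400
    return 0.5 ** (max(age_days, 0.0) / half_life_days)

def prune(records, now, threshold=0.4):
    """Split records into those to keep and those to delete."""
    kept, removed = [], []
    for record in records:
        score = freshness(record["last_confirmed"], now)
        (kept if score >= threshold else removed).append(record)
    return kept, removed

now = datetime(2026, 6, 1, tzinfo=timezone.utc)
records = [
    {"id": "a", "last_confirmed": now - timedelta(days=30)},
    {"id": "b", "last_confirmed": now - timedelta(days=200)},
]
kept, removed = prune(records, now)
```

Whatever definition you choose, record it alongside the threshold; a cutoff of 0.4 means nothing without the scoring rule that produced it.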

AI Orchestration FAQ (2026 Guide)

Q. What is AI orchestration?

AI orchestration is the process of coordinating multiple AI models, agents, tools, APIs, and workflows into a unified system that automates execution reliably at scale.

Instead of running isolated AI tools independently, orchestration manages:

- Task routing

- Workflow sequencing

- Memory retrieval

- Tool execution

- Output validation

- Human approval checkpoints

Modern AI orchestration platforms are designed to support real-time AI workflows, multi-agent systems, and enterprise automation.

Q. What is the best AI orchestration tool in 2026?

The best AI orchestration tool depends on your technical needs and workflow complexity.

| Use Case | Recommended Tool |
|---|---|
| Complex DAG workflows | LangGraph |
| Multi-agent collaboration | Microsoft AutoGen, CrewAI |
| Enterprise RPA + AI | UiPath Maestro |
| No-code AI automation | n8n |
| NLP pipelines | Haystack |
| Lightweight workflow automation | Zapier AI |

In 2026, graph-based orchestration frameworks like LangGraph are increasingly replacing traditional linear AI chains because they support dynamic routing, memory-aware execution, and parallel workflows.

Q. What is orchestration drift in AI systems?

Orchestration drift is the gradual misalignment between routing logic, memory retrieval, or workflow behavior and actual user intent over time.

It typically occurs when:

- Language patterns evolve

- User behavior changes

- Memory systems accumulate outdated context

- AI agents reinforce incorrect outputs

Orchestration drift is becoming one of the most underreported production issues in enterprise AI systems because failures happen slowly rather than instantly.

Q. What is the difference between AI orchestration and AI agents?

AI agents perform tasks, while AI orchestration coordinates how agents work together.

| AI Agents | AI Orchestration |
|---|---|
| Execute tasks | Manage workflows |
| Generate outputs | Route tasks |
| Use tools | Coordinate tools |
| Handle individual actions | Handle sequencing, memory, monitoring, and recovery |

An orchestration layer ensures multiple agents can collaborate reliably without conflicting actions or workflow failures.

Q. What is multi-agent orchestration?

Multi-agent orchestration is a system where multiple specialized AI agents collaborate dynamically under a centralized orchestration layer.

The orchestration layer manages:

- Task distribution

- Agent communication

- Shared memory

- Output validation

- Conflict resolution

Multi-agent orchestration is increasingly used in:

- AI research systems

- Enterprise copilots

- Autonomous workflow automation

- AI software engineering teams

Q. What is an agentic mesh?

An agentic mesh is a network of interconnected AI agents and orchestration systems operating across teams, applications, or organizations.

Instead of isolated workflows, an agentic mesh allows:

- Cross-platform AI coordination

- Shared memory systems

- Distributed decision-making

- Interoperable AI workflows

Many analysts view agentic mesh infrastructure as the next evolution of enterprise AI architecture in 2026 and beyond.

Q. Is AI orchestration expensive?

Yes. AI orchestration can become expensive due to:

- Multi-agent token usage

- Vector database retrieval

- Long-context memory injection

- Infrastructure scaling

- Real-time workflow execution

Production systems often face what engineers call the “orchestration tax”:

- Increased latency

- Higher inference costs

- More complex monitoring requirements

FinOps planning, caching, smaller orchestration models (SLMs), and memory expiration policies are increasingly important for controlling AI orchestration costs.

Q. What is AI orchestration architecture?

AI orchestration architecture is a layered system that manages input processing, semantic routing, execution logic, memory retrieval, monitoring, output validation, and human-in-the-loop (HITL) checkpoints.

A modern orchestration architecture typically includes:

- Input layer

- Semantic routing layer

- Execution layer

- Memory layer

- Monitoring and validation

- HITL approval systems

- Output delivery

Advanced systems also use:

- DAG-based execution

- Vector memory databases

- Semantic routers

- Dynamic agent selection

- Memory expiration policies

Q. How do AI orchestration systems use memory?

AI orchestration systems use memory layers to maintain context across workflows and interactions.

Common memory types include:

- Short-term memory (prompt context)

- Long-term vector memory

- Episodic memory (agent history)

- Semantic memory (knowledge graphs)

Memory systems help AI agents:

- Recall prior interactions

- Retrieve relevant documents

- Maintain workflow continuity

- Reduce repetitive reasoning

However, poorly managed memory can increase latency, hallucinations, and orchestration drift.

Q. Why are DAG workflows replacing traditional AI chains?

Traditional AI chains follow fixed linear steps:

Input → Process → Output

DAG-based orchestration (Directed Acyclic Graphs) allows:

- Parallel execution

- Dynamic routing

- Conditional branching

- Better fault tolerance

This makes DAG orchestration more scalable and reliable for complex AI workflows and multi-agent systems in 2026.


Disclaimer: This article is intended for educational and informational purposes only. AI orchestration tools, frameworks, pricing, and capabilities evolve rapidly, and some features or recommendations may change over time. While every effort has been made to ensure accuracy as of 2026, readers should verify technical specifications, security requirements, and platform documentation before making implementation or business decisions. This article is not affiliated with, sponsored by, or endorsed by any company or platform mentioned.