Last updated: May 2026 | Testing methodology: 40+ hours across five API providers and three proxy configurations

AI chatbot platforms have become dramatically more personal in 2026. People are no longer just asking AI for homework help or coding advice — they’re building emotional attachments to AI characters, roleplaying for hours, and connecting third-party language models through platforms like Janitor AI.

That shift explains why searches like “Is Janitor AI safe?”, “Can Janitor AI creators see chats?”, “Is Janitor AI safe from hackers?”, and “Is DeepSeek safe on Janitor AI?” have exploded across Google, Reddit, and Discord communities.

The short answer: Janitor AI is generally safe for adults when used responsibly — but the real privacy risks usually come from the APIs, proxies, and infrastructure connected behind the scenes. That distinction matters more than ever in 2026. Most articles collapse this into a binary “safe” or “dangerous” verdict. Reality is more complicated, and your actual risk depends heavily on which AI provider you connect to, whether you use public proxies, how your API keys are managed, and how realistic your expectations about privacy are.

Understanding the Real Privacy Risks Behind Janitor AI

For most adults, Janitor AI is reasonably safe if used carefully. The platform itself is not widely associated with malware, mass account theft, or intentionally malicious behavior. However, users consistently underestimate the risks that actually matter.

| Area | Risk Level |
|---|---|
| Malware risk | Low |
| Privacy risk | Moderate |
| NSFW exposure | High |
| Child safety | Poor |
| API misuse risk | Moderate-High |
| Fake proxy risk | High |
| Emotional dependency risk | Growing concern |

The better question in 2026 isn’t “Is Janitor AI safe?” — it’s “Is my Janitor AI setup safe?” That’s a completely different conversation, and almost nobody is having it.

How Janitor AI Works Behind the Scenes

One of the biggest misunderstandings online is that Janitor AI itself powers every chatbot response. It usually doesn’t.

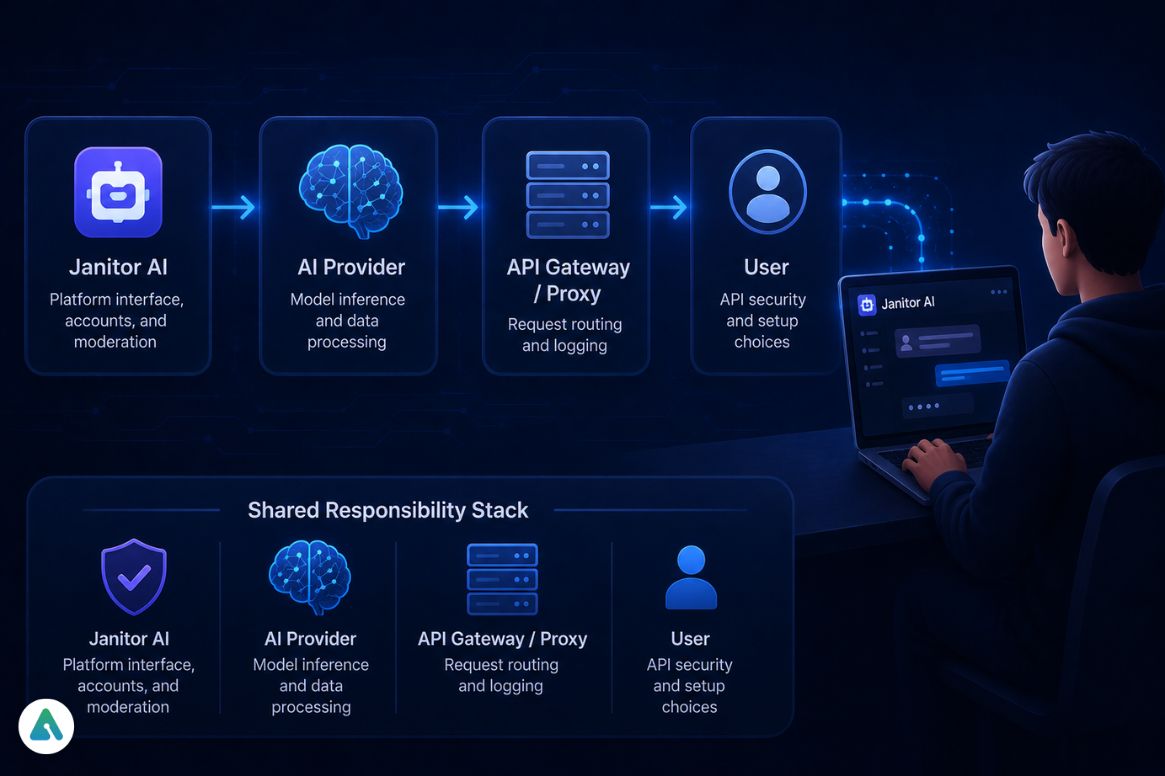

Janitor AI is primarily a character-based chat interface — the frontend, the character system, the chat manager, and the moderation layer. External AI models generate the actual responses. Those models may come from OpenAI, DeepSeek, OpenRouter, KoboldAI, Chutes AI, local LLMs, or community proxies.

That architecture creates a major privacy implication that most users never think about.

The Shared Responsibility Stack:

| Layer | Responsibility |
|---|---|
| Janitor AI | Platform interface, accounts, and moderation |
| AI provider | Model inference and data processing |
| API gateway/proxy | Request routing and logging |
| User | API security and setup choices |

Two Janitor AI users can have completely different privacy risks depending on which provider they use, whether logging is enabled, whether the API is official, and whether a public proxy sits in the middle. Blanket safety assessments are becoming meaningless precisely because of this variability.

Why Your API Setup Changes Your Privacy Risk

This is the part most Reddit threads completely oversimplify.

| Setup Type | Privacy Level | Risk Level | Recommended? |
|---|---|---|---|
| Local LLM (Ollama/LM Studio) | Maximum | Lowest | Best for full privacy |
| Official OpenAI API | Moderate-High | Low | Good balance |
| Official DeepSeek API | Moderate | Moderate | Depends on retention settings |
| OpenRouter / Aggregators | Moderate-Low | Moderate | Provider-dependent |
| Shared Community Keys | Low | High | Avoid if possible |
| Free Public Proxy APIs | Very Low | Very High | Highest risk setup |

The local LLM option deserves more attention than it typically gets in Janitor AI safety discussions.

Local LLMs vs Cloud APIs: Which Option Is More Private?

Running a local LLM through tools like Ollama or LM Studio is the only configuration that keeps your conversations entirely off external servers.

Janitor AI supports CORS (Cross-Origin Resource Sharing), which allows a locally hosted model to communicate with the Janitor AI interface directly from your own machine. When configured correctly, your prompts never leave your device — no API provider, no inference server, no logging gateway in the chain.

The trade-off: setup requires more technical comfort than pasting an API key into a settings field, and local inference is limited by your own hardware. But for users whose primary concern is genuine data privacy rather than access to frontier models, local hosting is the definitive answer.

For anyone already exploring how to run models locally, connecting one to Janitor AI via CORS is the logical next step.
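One quick sanity check before pasting an endpoint URL into any frontend is confirming that it really points at your own machine. The sketch below assumes Ollama's default OpenAI-compatible endpoint on port 11434; the model name `llama3` and the helper names are placeholders for illustration, not part of Janitor AI or Ollama. It only builds the request and never sends it.

```python
import ipaddress
import json
from urllib.parse import urlparse
from urllib.request import Request

# Assumption: Ollama's default OpenAI-compatible endpoint on this machine.
LOCAL_ENDPOINT = "http://127.0.0.1:11434/v1/chat/completions"


def is_loopback(url: str) -> bool:
    """Return True only if the URL's host is localhost or a loopback IP."""
    host = urlparse(url).hostname or ""
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        return False  # any other DNS name is treated as remote


def build_local_chat_request(prompt: str, model: str = "llama3",
                             url: str = LOCAL_ENDPOINT) -> Request:
    """Build (but do not send) a chat request, refusing any non-local URL."""
    if not is_loopback(url):
        raise ValueError(f"refusing to send prompts to non-local host: {url}")
    body = json.dumps({
        "model": model,  # placeholder model name
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return Request(url, data=body,
                   headers={"Content-Type": "application/json"},
                   method="POST")
```

If a URL fails `is_loopback`, it goes through someone else's server, and the "prompts never leave your device" guarantee no longer holds.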

What Really Happens to Your Janitor AI Conversations

Many users assume AI chats work like encrypted messaging apps. They don’t.

Most AI systems process conversations through cloud servers, inference providers, API gateways, logging systems, and moderation layers. That doesn’t automatically make Janitor AI unsafe — but it does mean you should never treat AI chats as completely private.

Can Janitor AI staff see your chats?

There’s no evidence that employees manually read all conversations. However, moderation systems, reports, debugging tools, and infrastructure access can potentially expose conversations under certain circumstances. That’s true across many AI platforms, not just Janitor AI.

The safest assumption is simple: never type anything into an AI chatbot that would seriously harm you if exposed publicly. That includes passwords, banking details, government IDs, private business information, medical records, and workplace secrets.
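That habit can be partly automated. As a rough sketch, the check below flags text that looks like an API key or a payment card number before it is pasted into a chat. The two patterns are illustrative assumptions (the `sk-` prefix is a convention some providers use, not a universal format), and a clean result does not prove a message is safe to send.

```python
import re

# Illustrative patterns only; real secrets come in many more shapes.
SENSITIVE_PATTERNS = {
    "possible API key": re.compile(r"\bsk-[A-Za-z0-9_-]{16,}"),
    "possible card number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


def find_sensitive(text: str) -> list[str]:
    """Return the labels of every pattern that matches the text."""
    return [label for label, pattern in SENSITIVE_PATTERNS.items()
            if pattern.search(text)]


if __name__ == "__main__":
    draft = "my key is sk-abcdefghijklmnop1234"
    hits = find_sensitive(draft)
    if hits:
        print("Do not send. Found:", ", ".join(hits))
```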

Is DeepSeek Safe to Connect With Janitor AI?

Many users connect DeepSeek to Janitor AI because it’s cheaper and often less restrictive than mainstream models. The part most guides miss: the model provider is only one piece of the chain.

Even if a model provider has strong privacy policies, those protections may not fully apply once third-party tools enter the workflow. In 2026, many AI providers explicitly separate their own infrastructure responsibilities from third-party platform responsibilities.

The weak point is usually proxy services, exposed API keys, unofficial browser tools, logging gateways, or public API relays — not the model itself.

When you ask, “Is DeepSeek safe on Janitor AI?” the real question is “Who else sits between the model and me?” That’s the far more important thing to understand.

The Most Common Ways Janitor AI Users Get Compromised

The platform itself is not widely associated with major hacking incidents. But users expose themselves through poor security practices far more often than through platform-level breaches.

The real risks look like this:

| Risk | Example |
|---|---|
| Password reuse | Using the same Discord password across AI platforms |
| Phishing sites | Fake Janitor AI login pages in Google results |
| Unsafe extensions | Browser tools that steal session tokens |
| Proxy scams | “Free AI” services that log every prompt |
| API leaks | Accidentally exposing paid API keys in shared configs |

In testing across multiple configurations, password reuse was the single most common vulnerability — users recycling old gaming or Discord credentials across AI platforms, dramatically increasing account takeover risk after unrelated data breaches.
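The fix for password reuse is a unique random password per site. A password manager normally handles this, but the idea fits in a few lines using Python's standard `secrets` module. The alphabet, the default length, and the function names below are arbitrary choices for illustration.

```python
import secrets
import string

# Arbitrary illustrative alphabet; adjust to each site's password rules.
ALPHABET = string.ascii_letters + string.digits + "!@#$%^&*-_"


def generate_password(length: int = 20) -> str:
    """Return a random password drawn from a cryptographically secure source."""
    if length < 12:
        raise ValueError("use at least 12 characters")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def unique_passwords(sites: list[str], length: int = 20) -> dict[str, str]:
    """Generate an independent password for every site, so one breach stays contained."""
    return {site: generate_password(length) for site in sites}
```

Because each password is generated independently, a leaked Discord credential reveals nothing about the one protecting an AI platform account.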

Why Free AI Proxies Are Becoming a Bigger Security Risk

Several “free unlimited AI proxy” tools circulating in late 2025 and early 2026 were accused in online communities of aggressive logging, hidden rate limits, prompt retention, injected ads, and API abuse.

Not every proxy is malicious. But the risk profile is substantially higher than official providers — and the “unlimited uncensored AI forever for free” framing is a reliable signal that something in the economics doesn’t add up.

The practical fix: Create a dedicated API key for Janitor AI with a hard monthly spending limit. If the key is compromised, your financial exposure stays capped, the damage remains limited, and revoking access is straightforward. Even a $5–10 monthly limit protects against runaway API abuse without meaningfully restricting normal use.
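The real cap has to be set in the provider's billing settings, because only the provider can stop a stolen key from being used. A local tally that mirrors the same limit can still be useful for noticing unexpected spend early. The sketch below is a hypothetical helper, and the per-token price passed to `estimate_cost_usd` is an assumed input rather than any provider's actual rate.

```python
from dataclasses import dataclass


@dataclass
class SpendGuard:
    """Local mirror of a monthly spending cap for one dedicated API key."""
    monthly_cap_usd: float
    spent_usd: float = 0.0

    def can_spend(self, estimated_cost_usd: float) -> bool:
        """True if this request would keep total spend at or under the cap."""
        return self.spent_usd + estimated_cost_usd <= self.monthly_cap_usd

    def record(self, cost_usd: float) -> None:
        """Add a cost to the tally, refusing anything that would exceed the cap."""
        if not self.can_spend(cost_usd):
            raise RuntimeError("monthly cap reached; check usage and rotate the key if unexpected")
        self.spent_usd += cost_usd


def estimate_cost_usd(tokens: int, usd_per_million_tokens: float) -> float:
    """Convert a token count into dollars at an assumed per-million-token price."""
    return tokens / 1_000_000 * usd_per_million_tokens
```

For example, `SpendGuard(monthly_cap_usd=5.0)` refuses to record a request once the running total would pass five dollars, which matches the low end of the limit suggested above.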

Why Janitor AI May Not Be Suitable for Teen Users

Janitor AI is probably not appropriate for most minors. The platform is heavily associated with NSFW roleplay, adult conversations, violent themes, emotionally manipulative characters, and uncensored community bots. Even with moderation systems, inappropriate content remains easy to encounter.

The concern goes beyond content filtering. Modern AI companions can simulate affection, validation, emotional intimacy, and romantic attachment in ways traditional moderation systems were never designed to handle. The dangers of teen AI chatbot use in 2026 are qualitatively different from social media risks — the parasocial dynamic is more intense, and the feedback loops are harder for young users to recognize as artificial.

The Growing Concern Around AI Emotional Dependency

Early AI safety conversations focused on malware, hacking, and data breaches. By 2026, researchers and online safety communities had started discussing something different: AI emotional dependency.

The more human-like AI becomes, the more likely users are to reveal trauma, discuss relationships, share fantasies, disclose personal information, and emotionally bond with fictional characters. Ironically, the systems that feel emotionally “safe” reduce users’ privacy caution: people share things with AI characters that they would never type into a search engine.

That doesn’t make Janitor AI uniquely dangerous. It means the psychology behind AI attachment is now a legitimate safety consideration alongside traditional technical risks. Users who understand this pattern protect themselves better than users who only think about hacking.

Janitor AI vs Character AI: Which Platform Feels Safer in 2026?

| Feature | Janitor AI | Character AI |
|---|---|---|
| NSFW restrictions | Minimal | Strong |
| Content moderation | Moderate | Heavy |
| Teen safety | Weak | Better |
| API flexibility | High | Low |
| Roleplay freedom | Very High | Limited |
| Privacy predictability | More variable | More centralized |
| Local LLM support | Yes (CORS) | No |

Character AI prioritizes moderation, brand safety, and mainstream accessibility. Janitor AI prioritizes customization, openness, and external model flexibility. That freedom attracts power users — but it also shifts more responsibility onto users themselves. The question of whether Character AI is safe involves a different set of trade-offs than Janitor AI safety, and understanding both helps users make informed platform choices.

Practical Ways to Use Janitor AI More Safely

Use a separate email address. A dedicated email reduces identity correlation, phishing exposure, and cross-platform tracking risks.

Never share sensitive personal information. Treat AI chats like cloud-hosted conversations — not encrypted diaries. Real addresses, financial details, legal documents, employer secrets, and medical records don’t belong in any AI chat.

Avoid random public proxies. If a service promises unlimited, uncensored AI forever for free, the economics don’t work. Infrastructure costs money. If users aren’t paying financially, the platform likely monetizes logs, metadata, prompts, or traffic patterns.

Use spending-limited API keys. Create isolated keys with low spending caps, provider-specific access, and revocable permissions.
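Isolated keys only help if they stay out of shared files and screenshots. As a minimal sketch, the helper below masks secret-looking values in a settings dictionary before it is posted in a Discord channel or a bug report. The hint words and the `redact_config` name are assumptions chosen for illustration, and the check errs on the side of masking too much.

```python
import copy

# Field-name fragments treated as secret; an illustrative, non-exhaustive list.
SECRET_HINTS = ("key", "token", "secret", "password")


def redact_config(config: dict) -> dict:
    """Return a copy of config with secret-looking string values masked."""
    redacted = {}
    for name, value in config.items():
        if isinstance(value, dict):
            # Recurse so nested sections are cleaned as well.
            redacted[name] = redact_config(value)
        elif isinstance(value, str) and any(h in name.lower() for h in SECRET_HINTS):
            redacted[name] = "***REDACTED***"
        else:
            redacted[name] = copy.deepcopy(value)
    return redacted
```

The original dictionary is left untouched, so the working config keeps its real key while the shared copy carries only placeholders.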

Consider local LLMs for maximum privacy. If privacy is genuinely important to your use case, local hosting via CORS is the only configuration that keeps data entirely on your device.

Maintain emotional distance from AI characters. Some users spend multiple hours daily roleplaying and invest more emotional energy in AI relationships than real ones. Setting healthy boundaries with AI companions matters more than most people expect — and recognizing when that boundary has eroded is harder than it sounds when the AI has been designed to feel emotionally responsive.

Does Unfiltered AI Automatically Mean Higher Risk?

A common misconception: “If the AI is uncensored, the platform must be dangerous.”

The bigger security risks usually involve weak infrastructure, shady proxies, poor API practices, exposed credentials, and deceptive logging policies — not the content restrictions of the model itself. An unrestricted AI model is not automatically malicious. However, less-moderated ecosystems often attract riskier third-party tools, which changes the overall security environment around them.

Frequently Asked Questions About Janitor AI Safety

Q. Is Janitor AI safe to use in 2026?

Yes, Janitor AI is generally safe for adults when used responsibly. The biggest risks usually come from third-party APIs, public proxies, weak passwords, and unsafe integrations rather than the platform itself. Users can improve safety by using trusted AI providers, spending-limited API keys, and avoiding sensitive personal information in chats.

Q. Is Janitor AI safe from hackers?

Janitor AI is not widely associated with major hacking incidents, but users can still expose themselves through phishing pages, password reuse, malicious browser extensions, or leaked API keys. Most account compromises happen because of weak security practices rather than direct platform breaches.

Q. Are Janitor AI chats private?

Janitor AI chats are not fully private by default. Depending on the platform configuration, conversations can pass through servers, APIs, logging systems, moderation layers, or third-party infrastructure. Users should avoid sharing passwords, financial information, legal documents, or other highly sensitive personal data.

Q. Can Janitor AI creators or staff see your chats?

Character creators generally cannot casually browse private conversations. However, moderation systems, infrastructure access, reports, or debugging tools may expose portions of chats under certain conditions. Like most AI chatbot platforms, users should assume that absolute privacy is not guaranteed.

Q. Is Janitor AI encrypted?

Some Janitor AI traffic is encrypted during transmission using standard web security protocols. However, encrypted traffic is different from true end-to-end encryption. Users should not assume Janitor AI offers the same privacy protections as secure messaging apps like Signal.

Q. What is the safest way to use Janitor AI?

The safest setup is using a local LLM through CORS without routing conversations through external cloud APIs. For most users, a safer practical setup includes:

- official API providers

- isolated API keys

- spending limits

- strong passwords

- avoiding free public proxies

This significantly reduces privacy and credential risks.

Q. Is DeepSeek safe on Janitor AI?

Using official DeepSeek APIs is generally safer than using unknown public proxies or shared community keys. The overall safety depends more on the full infrastructure chain — including gateways, logging systems, and browser tools — than the model name itself.

Q. Is Janitor AI safe for kids or teenagers?

Most experts would not consider Janitor AI suitable for minors. The platform contains unrestricted NSFW content, adult roleplay, emotionally intense AI interactions, and community-created characters that may expose younger users to inappropriate material.

Q. What are the biggest downsides of Janitor AI?

The biggest downsides of Janitor AI in 2026 include:

- privacy uncertainty

- NSFW exposure

- emotional dependency risks

- unsafe public proxy ecosystems

- complicated API setup

- inconsistent moderation quality

Advanced users often accept these trade-offs in exchange for greater AI freedom and customization.

Q. Can Janitor AI leak your chats?

No major evidence shows that Janitor AI intentionally leaks chats at scale. However, unsafe APIs, compromised accounts, malicious proxies, browser extensions, and weak user security practices can still expose conversations. Users can reduce the risk by choosing trusted providers and avoiding sensitive information.


Disclaimer: This article provides informational and educational content only and does not offer legal, cybersecurity, or mental health advice.

We are not affiliated with or endorsed by Janitor AI, DeepSeek, OpenAI, OpenRouter, or any other platform mentioned. AI tools, privacy policies, moderation systems, and third-party integrations can change over time, so readers should always review official documentation and use their own judgment before sharing personal or sensitive information online.