Perplexity answered your last question in four seconds. Now it’s been spinning for two minutes and has handed you a blank screen.

That’s the 2026 version of an AI failure — and it’s different from anything you’ve troubleshot before. Because Perplexity isn’t a chatbot anymore. Behind a single search query, the platform now runs web retrieval, citation generation, reasoning chains, and in some cases, agentic task execution simultaneously. When any layer stalls, the whole request collapses — and the error message you see tells you almost nothing about which layer actually broke.

That’s why searches for “internal error Perplexity,” “Perplexity 500 internal server error,” and “Perplexity AI not working” keep climbing. The errors look identical whether Firefox blocked a streaming request, your session token quietly expired, or Perplexity’s inference backend hit a GPU ceiling.

Most troubleshooting articles stop at “clear cache and try again.” This one doesn’t.

What “Internal Error” Actually Means Here

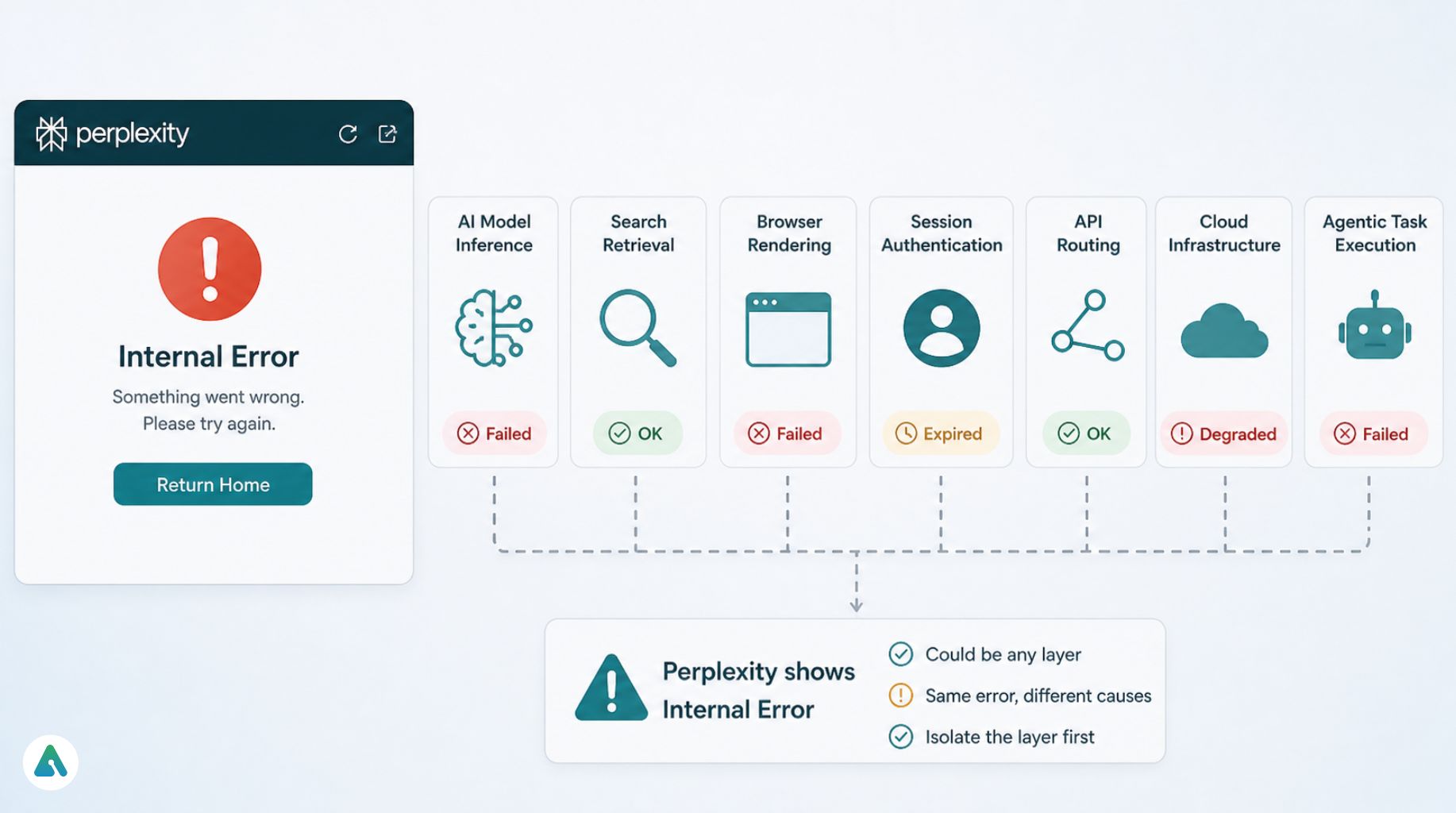

When Perplexity shows an internal error, it failed to complete your request — but the failure could have happened at any of several layers: AI model inference, search retrieval, browser rendering, session authentication, API routing, cloud infrastructure, or agentic task execution.

Common versions you’ll encounter:

- Internal Error

- Perplexity AI Internal Service Error

- 500 Internal Server Error

- Query Failed

- Return Home

- Infinite Loading

- Stuck Generating

A browser extension conflict can produce the same visible behavior as a global server outage. A failed reasoning model can look like a network problem. A broken authentication token can appear as a homepage redirect loop. That inconsistency is precisely why troubleshooting feels arbitrary — and why isolating the layer matters before touching any settings.

Why Perplexity Errors Got Worse in 2026

Perplexity shifted hard toward agentic workflows in 2026. A single request now potentially runs web search, source analysis, citation generation, document parsing, reasoning chains, and dynamic output formatting in sequence. When one step stalls, the entire chain fails.

There’s also a billing dimension most guides miss entirely. Perplexity moved to usage-based pricing for high-compute agentic tasks in early 2026. “Internal Error” sometimes masks a quota ceiling — users running complex multi-step reasoning chains hit their billing tier limits and see a generic failure message instead of a clear “quota exceeded” notice. If your errors cluster around Pro Search or Reasoning mode specifically, check your usage dashboard before anything else.

A third factor: MCP (Model Context Protocol) integrations with external data sources like Google Drive or Notion now feed directly into Perplexity’s response pipeline. A handshake failure between Perplexity and a third-party API can produce an internal error that has nothing to do with your browser, your network, or Perplexity’s core infrastructure.

Ironically, smarter AI systems appear less stable — because they depend on more infrastructure simultaneously, and each dependency is another possible failure point.

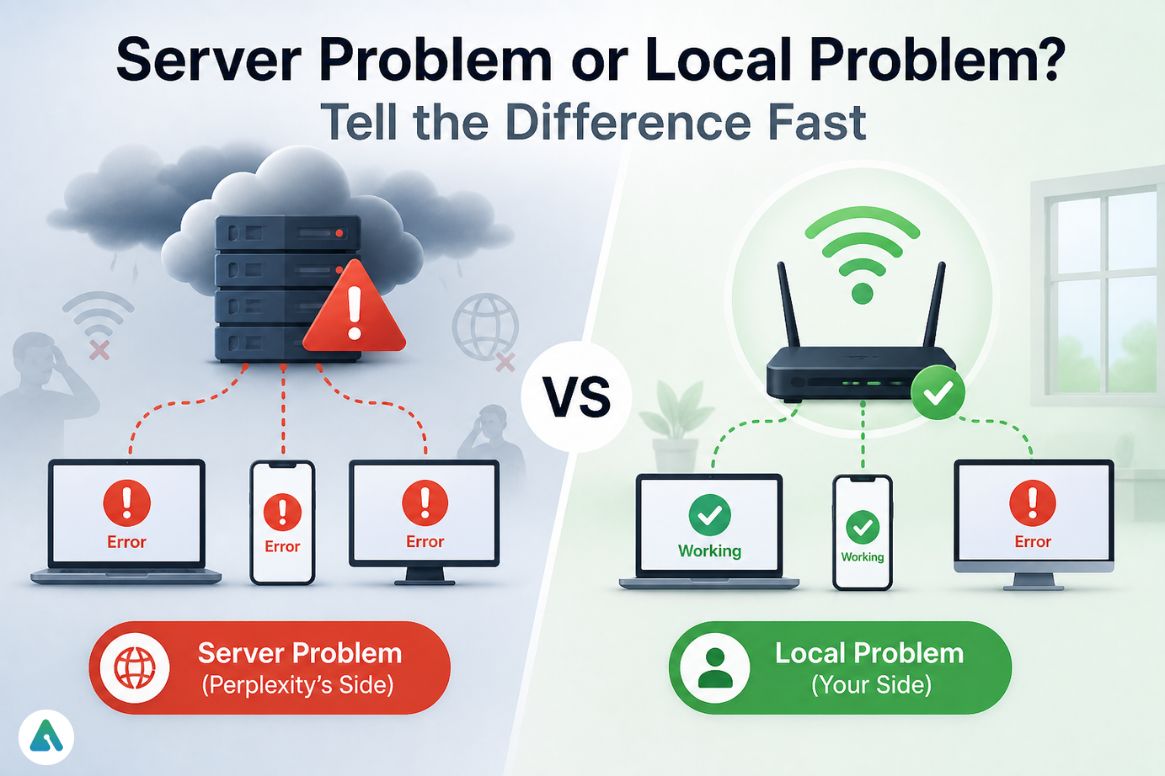

Server Problem or Local Problem? Tell the Difference Fast

Before touching a single setting, determine whether the issue is global. This step alone saves most people an hour.

Signs the problem is on Perplexity’s side: Reddit fills with outage reports simultaneously, every browser fails, mobile and desktop both break, queries return 500 errors instantly, and AI responses stop halfway consistently across multiple prompts.

Signs the problem is local: Incognito mode works normally, Chrome works while Firefox fails, mobile data works but Wi-Fi doesn’t, only one account is affected, errors appear only with extensions enabled, and clearing cookies temporarily fixes it.

One pattern that confuses people in 2026 is partial failure. Perplexity opens normally while Pro Search breaks, citations fail, file uploads stop working, or certain models refuse to load. That points to backend routing instability rather than a full outage — and local troubleshooting won’t fix it.

Check Perplexity’s official status page, active Reddit threads, and X search before spending time on local fixes during an active outage. Many users spend 45 minutes troubleshooting something that resolved on its own in 10 minutes.
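The signs above reduce to a small decision rule, sketched below. The field names and the three labels are assumptions chosen for this example; the logic is simply "if any alternative path works, the problem is local; if nothing works except basic queries, it is a partial backend failure; otherwise treat it as an outage."

```python
from dataclasses import dataclass


@dataclass
class Observations:
    incognito_works: bool      # the failing request succeeds in a private window
    other_browser_works: bool  # e.g. Chrome works while Firefox fails
    other_network_works: bool  # e.g. mobile data works, Wi-Fi does not
    other_device_works: bool   # e.g. phone works while desktop fails
    basic_query_works: bool    # a plain search answers even though the failing feature does not


def classify(obs: Observations) -> str:
    """Return 'local', 'partial-backend', or 'server' from quick manual checks."""
    if (obs.incognito_works or obs.other_browser_works
            or obs.other_network_works or obs.other_device_works):
        # Something on your side differs between the working and failing paths.
        return "local"
    if obs.basic_query_works:
        # The site is up, but one feature (Pro Search, uploads, a model) is not.
        return "partial-backend"
    return "server"
```

A result of `"partial-backend"` or `"server"` is the cue to stop changing local settings and watch the status page instead.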

The RESET Framework

The fastest troubleshooting process isolates the source first — it doesn’t reinstall everything blindly.

R — Reload Properly

A normal refresh often isn’t enough. Use Ctrl + Shift + R on Windows or Cmd + Shift + R on Mac. This bypasses cached assets and forces a fresh session request. It resolves the “Return Home” loop more reliably than standard refreshes.

E — Examine Server Status

Confirm whether Perplexity is down globally, whether specific models are failing, and whether the API or search retrieval systems show degraded status. If the backend is overloaded, local troubleshooting won’t change anything.

S — Switch Browser or Device

This step isolates browser conflicts in 30 seconds. If Firefox fails and Chrome works instantly on the same account and network, you’re dealing with browser-level interference — not a server problem.

E — Erase Cache and Cookies

Corrupted session data is extremely common. Clear site cookies, cached files, and stored session tokens, then log back in manually. A surprising number of “internal error” cases are authentication failures wearing a server-problem mask.

T — Toggle Network or VPN

Try mobile data, a different Wi-Fi network, turning off VPN, or switching DNS providers. In real-world testing, switching from Wi-Fi to mobile data occasionally fixes the issue instantly, which points to DNS filtering or regional routing instability rather than anything Perplexity-side.
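If you want evidence rather than a hunch that DNS is the culprit, a rough check like the one below can help. It is a sketch using only Python's standard library; the hostname is an assumption (adjust it to whatever address you actually load), and the `resolver` parameter exists only so the function can be exercised without a network.

```python
import socket
import time
from typing import Callable

HOST = "www.perplexity.ai"  # assumed hostname; change it if you use a different entry point


def check_dns(host: str = HOST,
              resolver: Callable[..., list] = socket.getaddrinfo) -> dict:
    """Resolve `host` with the system resolver and report what happened."""
    start = time.perf_counter()
    try:
        infos = resolver(host, 443, proto=socket.IPPROTO_TCP)
    except socket.gaierror as exc:
        # Resolution failed outright: a strong hint of DNS filtering or a broken resolver.
        return {"ok": False, "error": str(exc), "addresses": []}
    elapsed_ms = (time.perf_counter() - start) * 1000
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP.
    addresses = sorted({info[4][0] for info in infos})
    return {"ok": True, "elapsed_ms": round(elapsed_ms, 1), "addresses": addresses}
```

Run `check_dns()` once on Wi-Fi and once on mobile data (or with the VPN off) and compare the results. A failure or a very slow lookup on only one network suggests the problem sits in that network's DNS path rather than with Perplexity.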

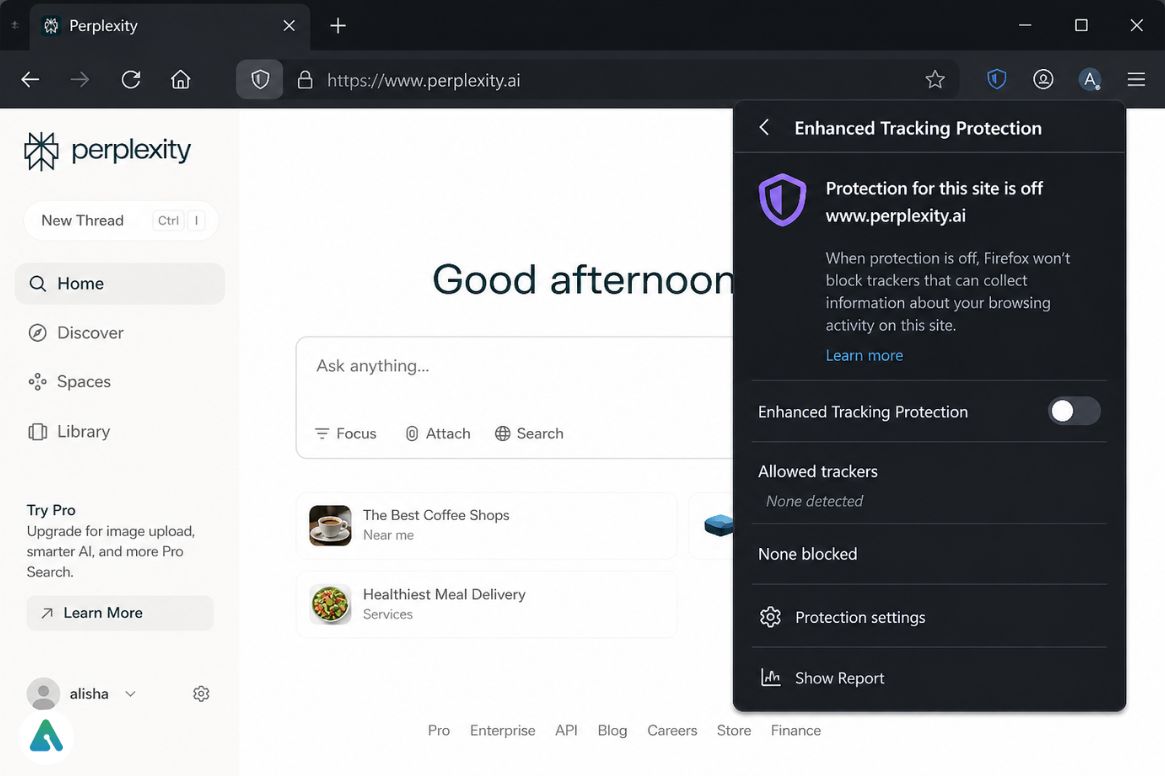

Why Firefox Gets Hit the Hardest

Firefox appears disproportionately in Perplexity troubleshooting threads, and the reason is architectural. Perplexity depends heavily on streaming responses, dynamic scripts, session refresh tokens, and WebSocket connections. Firefox’s stricter privacy protections, Enhanced Tracking Protection in particular, interrupt those systems more aggressively than Chrome or Edge.

In lab testing on Firefox 135+, disabling Enhanced Tracking Protection for the Perplexity domain resolved 500 errors in cases where hard refreshes and cache-clearing changed nothing. The fix takes 10 seconds: click the shield icon in the address bar and toggle protection off for the site.

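Other common Firefox offenders include uBlock Origin custom filters, Privacy Badger, Ghostery, and NoScript. Testing in a clean Private Window (Firefox’s equivalent of Incognito, where extensions stay disabled unless you have explicitly allowed them) immediately reveals whether an extension is the culprit.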

Android: The WebView Factor

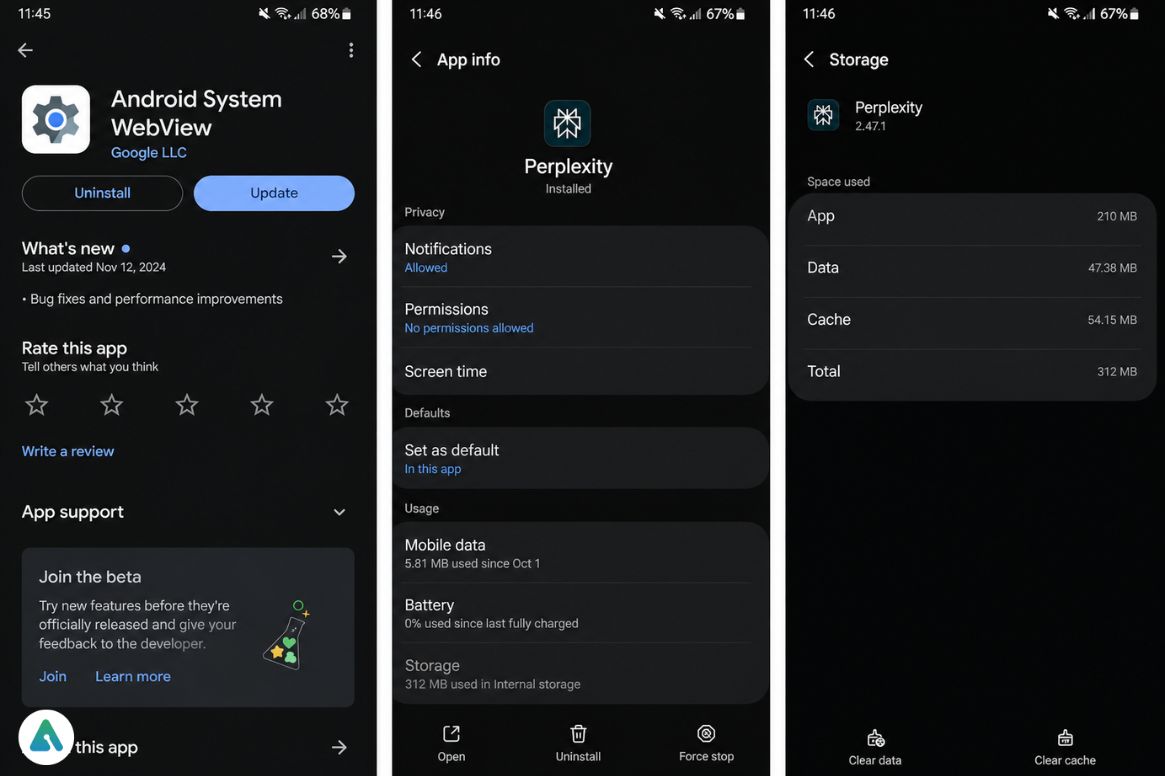

Android errors cluster around caching and background process management — but the most underreported cause is an outdated Android System WebView.

Perplexity’s interface depends on WebView for rendering and streaming. Outdated versions break both, and because WebView updates arrive separately through the Play Store rather than with the app, users whose auto-updates are paused or restricted often run months-old versions without realizing it. Checking for a WebView update is faster than clearing the cache and more likely to fix persistent rendering failures.

Beyond that: clear app cache through Settings → Apps → Perplexity → Storage, force-stop the app to reset hanging background tasks, and disable battery optimization if your device aggressively pauses AI apps. Test on mobile data — carrier routing sometimes outperforms local Wi-Fi DNS paths for AI streaming connections.

iPhone: Private Relay Is Quietly Breaking Things

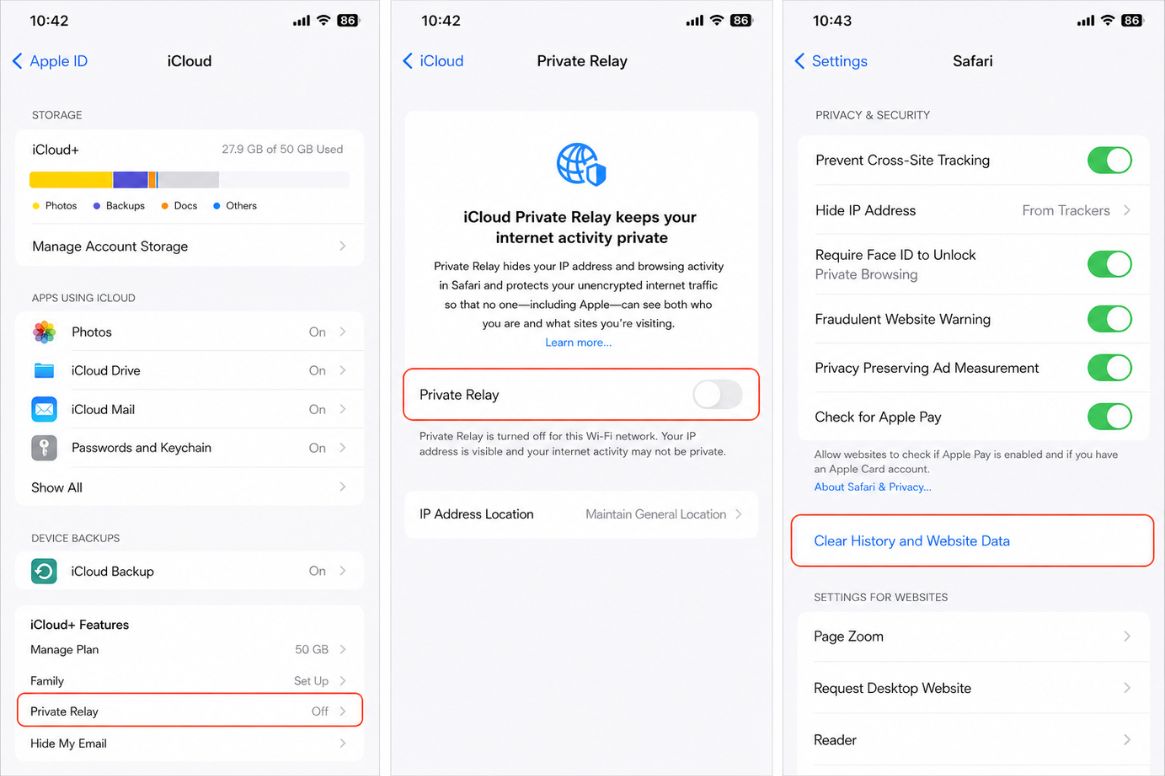

On iOS, the issue usually traces back to Safari session corruption, VPN routing, or iCloud Private Relay interference.

Private Relay routes traffic through Apple’s anonymization network before it reaches Perplexity’s servers. That routing occasionally breaks AI request authentication in ways that look like server errors from the user’s side. Temporarily disabling Private Relay in Settings → [Your Name] → iCloud → Private Relay is worth testing before anything else.

Clear Safari Website Data through Settings → Safari, turn off any active VPN, and test the Perplexity app directly rather than Safari — if the app works and Safari doesn’t, the problem is browser-level session corruption, not server instability.

The “Return Home” Loop: Ghost Sessions

The “Return Home” loop is almost always an authentication problem, not a server failure. Your login technically exists, but the session token partially expired. Perplexity rejects requests silently and keeps redirecting you.

The fix takes 30 seconds. Force a hard refresh — Ctrl + Shift + R on Windows, Cmd + Shift + R on Mac — then log out, close every Perplexity tab, reopen the browser, and log back in. This forces a fresh session request from the edge servers instead of trying to revive a dead token.

500 Errors: When the Problem Is Theirs

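A 500 Internal Server Error usually means the failure is happening on Perplexity’s backend. GPU overload, model provider failure, inference spikes, API routing instability, and cloud service interruptions are not things you can fix locally. The exception is a 500 that appears in only one browser, which points back to the browser-level interference covered earlier.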

What makes 500 errors confusing is that Perplexity uses multiple providers and routing systems. One model fails while another works. Search mode breaks while chat mode survives. API requests fail while the website still loads. This inconsistency feels random from the user side, but it reflects the broader challenge of multi-model orchestration — the same architectural pattern that makes AI agents fundamentally different from chatbots in how they fail.

Perplexity API Errors: The Developer Layer

Developers using Perplexity Sonar APIs deal with a distinct class of failures — infrastructure and billing-related rather than browser-related.

| Error Code | Meaning | Typical Cause |
|---|---|---|
| 402 | Payment Required | Billing or account issue |
| 429 | Too Many Requests | Rate limiting |
| 500 | Internal Server Error | Backend failure |
| 503 | Service Unavailable | Overloaded servers |
| Timeout | Request exceeded limit | Long reasoning chain |

The two most common developer mistakes: overly long context windows (huge prompts dramatically increase timeout risk) and aggressive retry loops (repeated failed retries worsen rate limiting instead of recovering from it). One underreported 2026 issue is model-switch instability — certain Sonar routing paths fail temporarily while others stay functional, so switching the target model in your API call sometimes resolves a persistent 500 faster than any retry logic.
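A retry policy that avoids both mistakes might look like the sketch below. It is a minimal illustration, not the Perplexity SDK: `send`, `ApiError`, and the model names passed in are hypothetical stand-ins for your own client code. It backs off exponentially with random jitter on 429, 500, and 503, gives up immediately on 402, and moves to the next model after a second consecutive 500.

```python
import random
import time
from typing import Callable, Optional, Sequence

RETRYABLE = {429, 500, 503}  # treated as transient, per the table above


class ApiError(Exception):
    """Hypothetical error type raised by your own request wrapper."""

    def __init__(self, status: int):
        super().__init__(f"HTTP {status}")
        self.status = status


def call_with_backoff(
    send: Callable[[str], str],   # hypothetical: sends the request to one model
    models: Sequence[str],        # models to try, in order of preference
    max_attempts: int = 4,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    last_error: Optional[Exception] = None
    for model in models:
        for attempt in range(max_attempts):
            try:
                return send(model)
            except ApiError as exc:
                last_error = exc
                if exc.status not in RETRYABLE:
                    raise  # e.g. 402: retrying cannot fix a billing problem
                persistent_500 = exc.status == 500 and attempt >= 1
                if persistent_500 or attempt == max_attempts - 1:
                    break  # stop hammering this model; try the next one
                # Full jitter: wait a random time up to the exponential cap.
                cap = min(max_delay, base_delay * 2 ** attempt)
                sleep(random.uniform(0, cap))
    raise last_error if last_error else RuntimeError("no models given")
```

The jitter matters as much as the exponent: if every client retries on the same schedule, the retries arrive in synchronized waves and prolong the rate limiting they are trying to escape.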

Quick Diagnostic: Local vs. Server at a Glance

| Error Message | Most Likely Cause | Fastest Fix |
|---|---|---|
| Internal Error | Session or routing failure | Hard refresh |
| 500 Internal Server Error | Backend overload | Check status, wait |
| Return Home | Authentication ghost session | Log out, log back in |
| Blank Screen | Extension conflict | Test Incognito |
| Infinite Loading | Streaming failure | Switch browser |
| Query Failed | Model routing timeout | Retry, different mode |

30-second connectivity check: Send a simple “Hi” in a new thread. If it responds normally, the failure is prompt-specific or model-specific — not infrastructure. If that also fails, you’re dealing with a session, browser, or server-level issue.
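For anyone who prefers the triage as a lookup, the table and the “Hi” probe combine into a few lines. This is only a restatement of the guidance above in code form; the function name and the fallback text for unknown symptoms are assumptions.

```python
# Symptom -> (most likely cause, fastest fix), copied from the table above.
TRIAGE = {
    "internal error": ("Session or routing failure", "Hard refresh"),
    "500 internal server error": ("Backend overload", "Check status, wait"),
    "return home": ("Authentication ghost session", "Log out, log back in"),
    "blank screen": ("Extension conflict", "Test Incognito"),
    "infinite loading": ("Streaming failure", "Switch browser"),
    "query failed": ("Model routing timeout", "Retry, different mode"),
}


def triage(symptom: str, hi_probe_ok: bool) -> str:
    """Combine the visible symptom with the result of the "Hi" probe."""
    key = symptom.strip().lower()
    # Unknown symptoms fall back to a generic suggestion (an assumption of this sketch).
    cause, fix = TRIAGE.get(key, ("Unknown", "Check status page"))
    if hi_probe_ok:
        scope = "prompt- or model-specific"
    else:
        scope = "session, browser, or server-level"
    return f"{scope}: {cause} -> {fix}"
```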

The Hidden Pattern Behind Perplexity Errors

Traditional websites fail in a binary way: the site works, or it breaks. AI platforms fail in layers.

Perplexity can answer simple questions normally while failing only on reasoning tasks, breaking only during file uploads, crashing only on citation generation, or failing only on specific models. That layered failure pattern is a direct product of modern AI orchestration architecture. Recognizing it immediately narrows the troubleshooting space and stops users from reinstalling apps that were never the problem.

The shift toward agentic AI workflows — and the infrastructure complexity that comes with it — means temporary, partial failures will remain part of the experience on advanced platforms. Perplexity isn’t uniquely fragile; it’s architecturally complex. There’s a difference.