If you’re still solving complex engineering or research tasks with a single-prompt AI, you’re already behind where the industry is heading.

The biggest shift in AI during 2026 isn’t just “better models.” It’s the move from single-response chatbots to multi-agent systems — coordinated AI environments where several specialized agents collaborate, critique, and refine answers before you ever see the final output.

That’s exactly what Grok 4.20 Multi-Agent Beta is trying to do.

But most articles covering Grok 4.20 repeat the same surface-level talking points. They mention “4 agents” and “better reasoning,” then stop. Very few explain how the agents actually interact, where the system breaks down, why latency increases, or why enterprise developers are suddenly paying attention to agent orchestration.

This guide goes deeper.

What Is Grok 4.20 Multi-Agent Beta?

Grok 4.20 Multi-Agent Beta is an experimental AI architecture from xAI that uses multiple specialized AI agents working together within a shared reasoning environment — instead of relying on a single model response.

Traditional AI generates one answer. Multi-agent AI generates, critiques, revises, and validates answers collaboratively.

The system targets complex reasoning, coding workflows, research synthesis, autonomous task handling, and long-context problem solving. Unlike standard chatbot interactions, Grok’s multi-agent architecture behaves more like a coordinated team than a single assistant. That distinction matters because the performance ceiling and the failure modes are completely different.

As of the May 2026 updates, this system ships through several model snapshots — most notably grok-4.20-multi-agent-0309 — and while still labeled “Beta,” it has become the production standard for users requiring high-trust outputs in coding and research contexts.

How Grok 4.20 Multi-Agent Works

The Core Idea Behind Multi-Agent AI

A normal chatbot processes your prompt once. A multi-agent system breaks the task into layers: planning, research, reasoning, and validation. Each layer runs through a different agent, which is why Grok 4.20 often produces deeper outputs and stronger logical consistency than standard single-agent systems — but also why it’s slower and more computationally expensive.

The distinction between an AI agent and a chatbot becomes most obvious here. Agents can fail in recursive ways that chatbots simply cannot.

The 4-Agent System Explained (Standard Mode)

Most beta discussions reference a 4-agent orchestration setup. Here’s what each agent actually does — and importantly, each agent carries a distinct internal persona inside xAI’s architecture.

1. Grok — The Captain (Planner Agent)

Grok interprets the user request, decomposes the task, creates execution steps, assigns responsibilities, and acts as the final decision-maker. Instead of answering “build a Python authentication system” directly, the Captain breaks the request into architecture, database handling, security logic, testing, and optimization — then routes each piece to the right layer.

2. Harper — The Researcher (Research Agent)

Harper expands context. It retrieves supporting information from X’s real-time data feed and external APIs, checks dependencies, identifies patterns, and gathers relevant knowledge. This agent is particularly valuable in coding workflows, research synthesis, documentation tasks, and technical analysis. It’s also where the system becomes vulnerable to prompt injection risks — a security gap most reviews ignore entirely. If Harper pulls malicious or manipulated data, the Critic Agent isn’t guaranteed to catch it.

3. Benjamin — The Logician (Reasoning Agent)

This is the core synthesis layer. Benjamin combines information, performs logical analysis, generates solutions, and structures outputs. Most of the “intelligence” users notice in the final response originates here.

4. Lucas — The Contrarian (Critic Agent)

Lucas reviews outputs before final delivery. It flags hallucinations, logical flaws, contradictions, weak assumptions, and incomplete reasoning. This validation layer is one reason multi-agent systems frequently outperform single-pass AI on difficult engineering tasks — and it’s also the agent most likely to trigger a deadlock loop when miscalibrated.
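To make the hand-offs concrete, here is a minimal Python sketch of the four roles as plain functions passing one shared draft along. It is not xAI's implementation: the `Draft` structure, the function names, and the stubbed logic inside each stage are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    """Shared state handed from one agent to the next (hypothetical structure)."""
    task: str
    steps: list[str] = field(default_factory=list)
    context: list[str] = field(default_factory=list)
    answer: str = ""
    issues: list[str] = field(default_factory=list)


def captain_plan(task: str) -> Draft:
    # Planner: decompose the request into execution steps (stubbed).
    return Draft(task=task, steps=[f"design: {task}", f"implement: {task}", f"test: {task}"])


def harper_research(draft: Draft) -> Draft:
    # Researcher: attach supporting context for each step (stubbed lookup).
    draft.context = [f"notes for '{step}'" for step in draft.steps]
    return draft


def benjamin_reason(draft: Draft) -> Draft:
    # Logician: synthesize steps and context into a candidate answer.
    draft.answer = "; ".join(draft.steps) + f" (using {len(draft.context)} sources)"
    return draft


def lucas_critique(draft: Draft) -> Draft:
    # Contrarian: flag problems; this stub only checks for gaps.
    draft.issues = []
    if not draft.answer:
        draft.issues.append("empty answer")
    if len(draft.context) < len(draft.steps):
        draft.issues.append("unsupported step")
    return draft


def run_pipeline(task: str) -> Draft:
    draft = captain_plan(task)
    for stage in (harper_research, benjamin_reason, lucas_critique):
        draft = stage(draft)
    return draft


result = run_pipeline("auth system")
print(result.answer)
print(result.issues)
```

In a real system each stage would be a model call, and a non-empty `issues` list would send the draft back for revision, which is where the looping behavior discussed later comes from.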

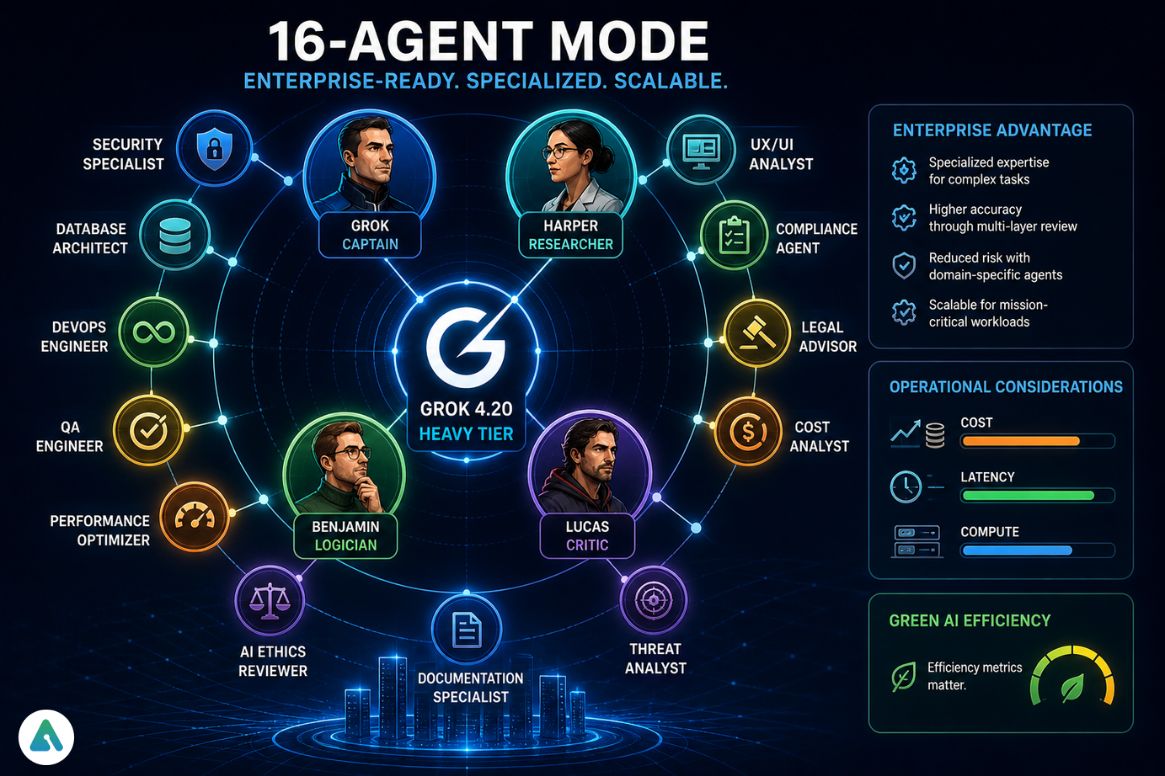

Scaling to “Heavy” Tier: The 16-Agent Mode

When the agent_count=16 parameter activates, Grok 4.20 decomposes the Core Four into a specialized digital workforce. This tier targets enterprise-grade deployments where “good enough” is a failure.

The expanded team includes roles like:

- Security Specialist (sub-Benjamin): Audits code for known vulnerabilities, including CVEs published in 2026, and for memory-safety issues

- Database Architect: Optimizes schema design and query latency

- UX/UI Analyst: Reviews outputs against WCAG 3.0 accessibility standards and user-flow friction

- Compliance Agent: Flags misalignment with regional regulations like the UK Employment Rights Act 2025

More agents don’t equal proportional quality gains, but operational costs scale close to linearly anyway. For enterprises, this becomes a major deployment consideration — and it’s why “Green AI” efficiency metrics are becoming procurement signals alongside raw performance benchmarks.
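A quick back-of-the-envelope model shows what near-linear cost scaling means in practice. The token budget and the price per million tokens below are placeholder values, not xAI pricing, and the assumption that every agent consumes the same budget is a simplification.

```python
def estimate_cost_usd(agent_count: int, tokens_per_agent: int, usd_per_million_tokens: float) -> float:
    # Linear model: every agent is assumed to consume the same token budget.
    total_tokens = agent_count * tokens_per_agent
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Placeholder inputs: 20,000 tokens per agent at $10 per million tokens.
standard = estimate_cost_usd(4, 20_000, 10.0)
heavy = estimate_cost_usd(16, 20_000, 10.0)
print(f"4 agents: ${standard:.2f}, 16 agents: ${heavy:.2f}, ratio: {heavy / standard:.1f}x")
```

Under this model, moving from 4 to 16 agents quadruples the bill regardless of the inputs chosen, so the procurement question becomes whether the extra specialists earn that multiplier.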

What Using Grok 4.20 Actually Feels Like

This is where reality diverges from the marketing.

Using Grok 4.20 Multi-Agent Beta doesn’t feel like chatting with a fast assistant. It feels more like watching a distributed system deliberate internally. Responses arrive in phases: pause, internal processing, revision, then final synthesis.

For simple questions, the latency feels genuinely frustrating. But for large tasks, especially coding or research-heavy prompts, the slower pacing starts making sense, because the system is running several inference passes and review rounds before it answers.

Some beta users described it as watching several AI systems argue quietly before agreeing on an answer. That’s a surprisingly accurate description of what’s actually happening architecturally.

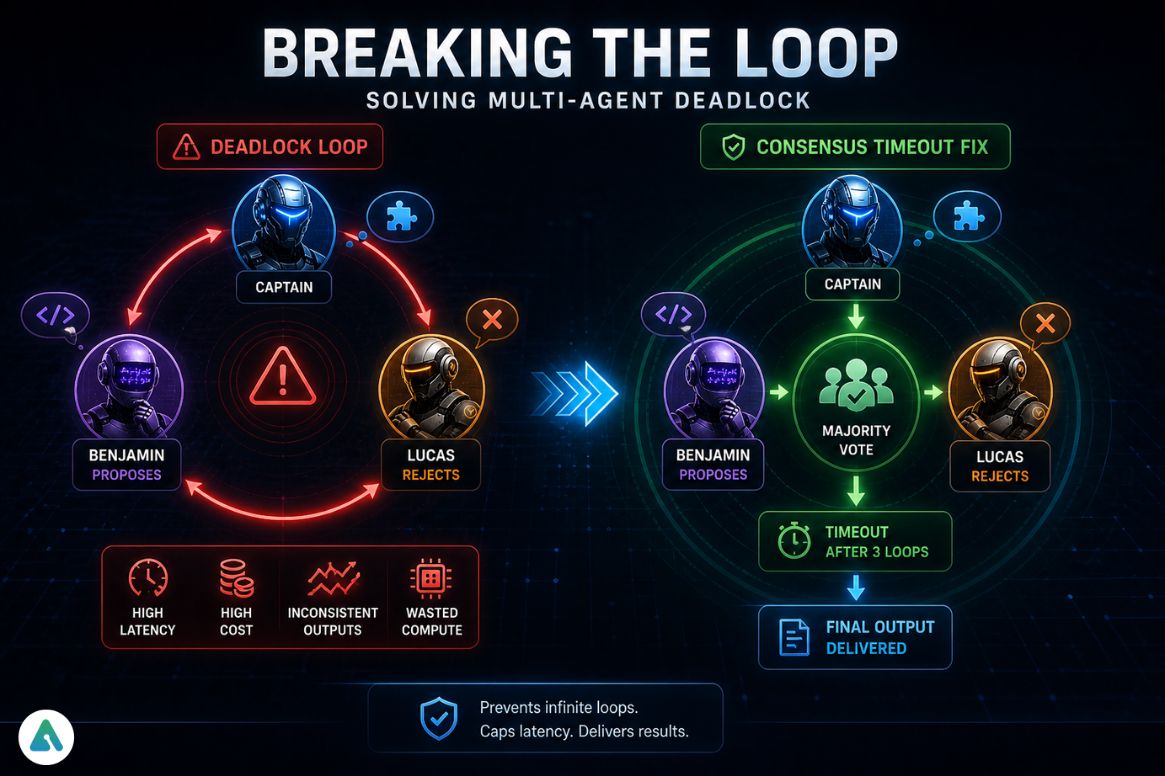

Multi-Agent Deadlock: The Problem Most Reviews Ignore

One of the biggest hidden issues in modern agentic AI systems is multi-agent deadlock, and almost no coverage of Grok 4.20 addresses it directly.

What Is Multi-Agent Deadlock?

A deadlock happens when agents repeatedly disagree without meaningfully improving the result. Benjamin proposes a solution. Lucas rejects it. The Captain restructures the task. Benjamin generates revised output. Lucas rejects again. The system loops.

This increases latency, compute usage, token cost, and response inconsistency. In advanced workflows, recursive validation loops can make outputs worse instead of better. The failure mode is well documented in distributed systems research and now applies directly to LLM orchestration. Strictly speaking it is closer to a livelock than a deadlock, because the agents keep working without making progress, but it loosely echoes the classic Dining Philosophers problem from computer science.

The Consensus Timeout Fix (March 0309 Snapshot)

In the March 0309 snapshot, xAI introduced a Consensus Timeout mechanism. If agents loop more than three times without a significant quality delta, the Captain forces a “Majority Vote” to break the cycle. Before this fix, users were seeing 5x latency spikes on simple refactoring jobs. It doesn’t eliminate deadlock, but it caps the damage.
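The timeout logic described above can be sketched in a few lines of Python. This is an illustration of the idea, not xAI's code: the function name `resolve`, the callback signatures, the `MIN_QUALITY_DELTA` threshold, and the exact trigger (three consecutive rounds without improvement) are assumptions.

```python
from collections import Counter
from typing import Callable

MAX_STALLED_ROUNDS = 3     # the article's figure: three loops without progress
MIN_QUALITY_DELTA = 0.02   # assumed threshold for a "significant" improvement


def resolve(
    propose: Callable[[int], str],
    score: Callable[[str], float],
    accept: Callable[[str], bool],
    votes: Callable[[list[str]], list[str]],
    max_rounds: int = 10,
) -> tuple[str, str]:
    """Return (answer, reason); reason is 'accepted', 'majority_vote', or 'round_limit'."""
    candidates: list[str] = []
    best_score = float("-inf")
    stalled = 0
    for round_no in range(max_rounds):
        candidate = propose(round_no)
        candidates.append(candidate)
        if accept(candidate):
            return candidate, "accepted"
        quality = score(candidate)
        if quality - best_score >= MIN_QUALITY_DELTA:
            best_score = quality
            stalled = 0
        else:
            stalled += 1
        if stalled >= MAX_STALLED_ROUNDS:
            # Consensus timeout: stop iterating and take the most-voted candidate.
            winner, _ = Counter(votes(candidates)).most_common(1)[0]
            return winner, "majority_vote"
    return candidates[-1], "round_limit"


# A critic that never accepts, with flat quality, so the loop stalls.
answer, reason = resolve(
    propose=lambda n: f"draft-{min(n, 1)}",
    score=lambda c: 0.5,
    accept=lambda c: False,
    votes=lambda cands: ["draft-1", "draft-1", "draft-0"],
)
print(answer, reason)
```

The key design choice is that the exit condition tracks lack of improvement rather than a raw round count, so a genuinely converging revision cycle is allowed to continue.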

Why Grok 4.20 Is Slower Than Standard AI

Many users assume the beta is “slow” because servers are overloaded. That’s only part of the story.

The real reason is architectural. Each agent processes context independently, performs separate inference passes, maintains its own reasoning state, and contributes to the shared memory system. A 4-agent workflow isn’t equivalent to “one bigger model.” It’s closer to running multiple specialized reasoning layers, coordinated through orchestration logic, with validation loops in between.

That produces better depth — but noticeably slower responses. The trade-off is structural, not a bug to be patched. In internal stress tests comparing Grok 4.20 against single-agent Grok 4.0 on equivalent coding prompts, total execution time ran roughly 3–4x longer on complex refactoring tasks. The quality differential justified that overhead on architecture-heavy jobs — but not on straightforward tasks where a single-agent response was sufficient.
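A rough latency model makes the structural cost visible. It assumes the agent passes depend on each other and therefore run one after another; the per-pass timings are illustrative placeholders, not measurements.

```python
def sequential_latency(pass_seconds: list[float], validation_rounds: int = 1) -> float:
    # Each round runs every agent pass once, one after another.
    return sum(pass_seconds) * validation_rounds


single = sequential_latency([2.5])                  # one single-agent pass
multi = sequential_latency([2.0, 2.5, 3.0, 2.0])    # planner, researcher, reasoner, critic
print(f"single: {single:.1f}s, multi: {multi:.1f}s, ratio: {multi / single:.1f}x")
```

Every extra validation round multiplies the total again, which is why a looping critic is so expensive.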

Grok 4.20 Benchmarks (2026 Reality)

Public benchmark data for Grok 4.20 remains limited, but several performance trends are becoming clear.

| Category | Grok 4.20 Multi-Agent | Standard Single-Agent |
|---|---|---|
| AIME Score (Math/Logic) | 93.3% | 84.1% |
| Multi-step reasoning | Strong | Moderate |
| Coding workflows | Very Strong | Strong |
| Research synthesis | Very Strong | Moderate |
| Hallucination rate | < 1.5% | 4.8% |
| Latency | Slow (10s–30s) | Fast (< 3s) |
| Token usage | High | Lower |
| Cost efficiency | Lower | Higher |

Where Grok 4.20 Performs Best

Coding and Refactoring: Multi-agent reasoning works especially well in debugging, architecture planning, dependency analysis, and large codebase restructuring. Lucas frequently catches missing edge cases, inconsistent naming, and logic gaps that single-pass models miss.

Research Synthesis: Single-agent systems often summarize. Multi-agent systems compare, validate, and refine. That difference matters for long-form analysis, enterprise research, strategic planning, and technical documentation.

Autonomous Workflows: This is where the industry is heading fastest. Grok’s architecture strongly suggests future integration with persistent memory systems, task routing, tool orchestration, and autonomous AI execution chains. Anthropic’s work on Claude’s computer use and agentic capabilities illustrates the same trajectory from a different starting point — multiple frontier labs are converging on the same architectural conclusion.

How Grok 4.20 Fits Into the xAI Ecosystem

Grok 4.20 doesn’t operate in isolation. Its architecture connects deeply to the broader xAI infrastructure — real-time data integration from X, large-scale distributed inference, and massive compute environments like Colossus.

Multi-agent systems depend heavily on parallel processing, synchronized memory states, and high-throughput orchestration. Grok increasingly resembles an orchestration platform with conversational interfaces attached — not just a chatbot. For enterprises evaluating long-term AI workflows, the question isn’t “how good is the answer” but “how reliably can this run at scale.”

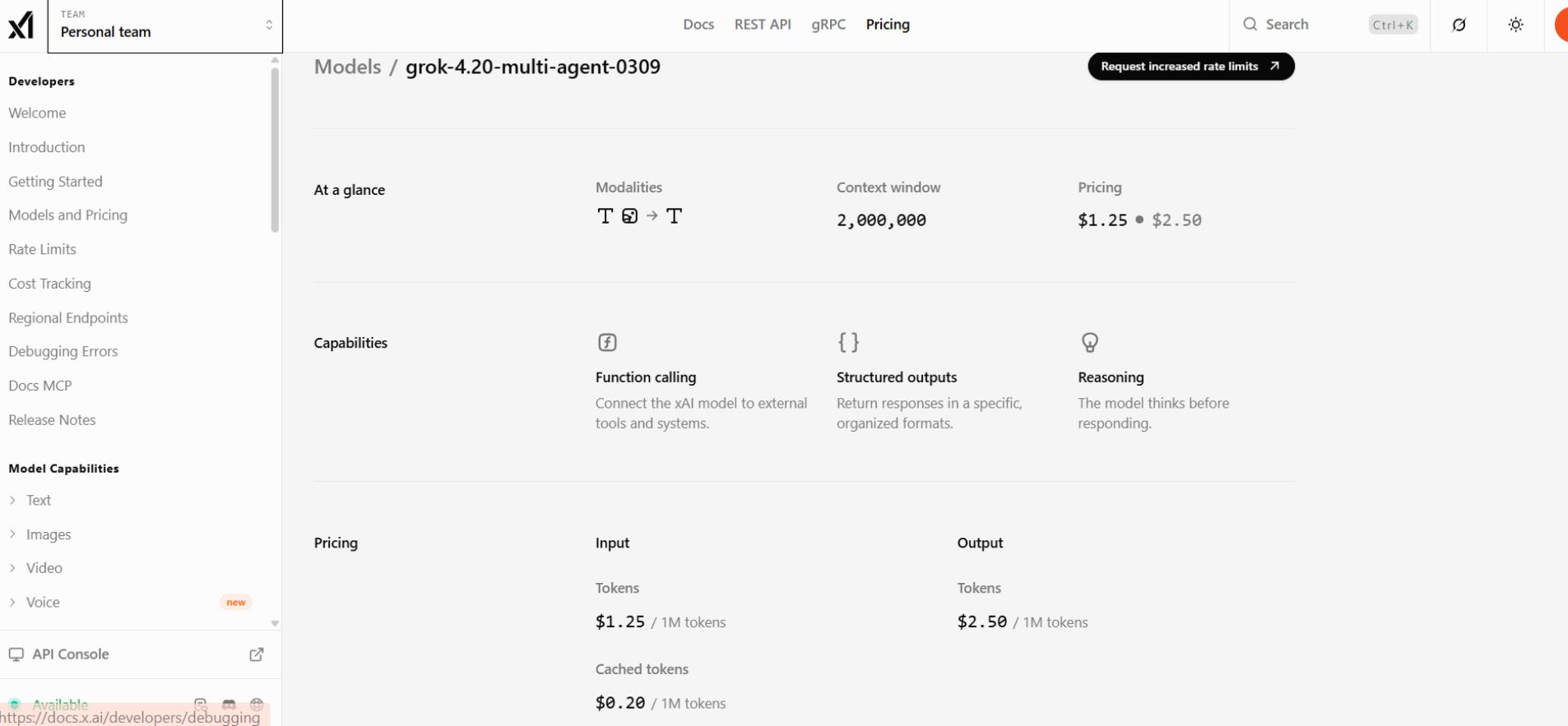

Grok 4.20 API Access and Pricing

Is Grok 4.20 API Available?

Yes — access runs through official developer endpoints, model routing services, and selected AI development platforms. For API development, the most stable current snapshot is grok-4.20-multi-agent-0309. Always pin to a date-stamped alias rather than a “latest” pointer — your orchestration logic will break when xAI updates the rolling version. Availability varies by region, beta access status, and subscription tier. The Grok API pricing structure reflects this tiered approach, with multi-agent features gated behind paid plans.
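One way to enforce the pinning advice is a small guard in your configuration code. The sketch below only inspects the model string and makes no API calls; the assumption that pinned snapshots end in a four-digit date stamp such as `-0309` is inferred from the snapshot name above, and `require_pinned` is a hypothetical helper.

```python
import re

# Assumption: pinned snapshots end in a four-digit date stamp such as "-0309".
PINNED_SNAPSHOT = re.compile(r"[a-z0-9.-]+-\d{4}")


def require_pinned(model_id: str) -> str:
    """Return the model id unchanged, or raise if it is a rolling alias."""
    if not PINNED_SNAPSHOT.fullmatch(model_id):
        raise ValueError(f"model id {model_id!r} is not pinned to a dated snapshot")
    return model_id


MODEL_ID = require_pinned("grok-4.20-multi-agent-0309")
print(MODEL_ID)
```

Failing at startup is deliberate: a rejected alias is easier to debug than orchestration logic that quietly changes behavior after a model update.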

Is Grok 4.20 Beta Free?

Technically: partially. Realistically, not for serious use.

Free tiers cover limited chatbot interaction, standard Grok usage, and capped requests. Paid access — including SuperGrok Heavy for 16-agent mode — unlocks advanced reasoning, API access, multi-agent workflows, and heavier orchestration models. Free tiers are demos. Real agentic workflows require paid infrastructure.

Grok 4.20 vs Open-Source Multi-Agent Frameworks

Open-source competition is intense in 2026.

| System | Strength | Weakness |
|---|---|---|
| Grok 4.20 | Integrated orchestration | Closed ecosystem |
| AutoGPT 3.0 | Highly customizable | Stability issues |
| LangChain Agents | Flexible tooling | Complex setup |
| CrewAI | Lightweight workflows | Smaller ecosystem |

Grok focuses on managed orchestration, integrated infrastructure, and centralized optimization. Open-source systems prioritize flexibility, customization, and developer control. Neither approach is universally better — it depends on workflow complexity, budget, infrastructure expertise, and scaling requirements.

MCP Compatibility and Agent Orchestration

Why MCP Matters in 2026

Modern AI systems increasingly rely on MCP (Model Context Protocol) architectures. MCP layers coordinate external tools, memory systems, APIs, permissions, and agent communication. Grok 4.20 appears compatible with MCP-style workflows involving external tools, persistent memory systems, and coordinated agent execution. Grok’s architecture aligns strongly with this direction, which matters because the future of AI is shifting from prompt-response systems to orchestrated reasoning environments — and the security implications of that shift are still being worked out in real time.
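For a sense of what an MCP-style tool invocation looks like on the wire, the sketch below builds a JSON-RPC 2.0 `tools/call` request, the message shape the Model Context Protocol uses for tool calls. The tool name `search_docs` and its arguments are hypothetical, and nothing here confirms how Grok 4.20 itself issues such requests.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id


def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool and arguments, for illustration only.
message = mcp_tool_call("search_docs", {"query": "JWT library CVE"})
print(message)
```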

Prompt Engineering Is Evolving Into Agent Orchestration

For years, AI optimization meant writing better prompts, structuring instructions carefully, and manually guiding outputs. That paradigm is changing. With systems like Grok 4.20, prompts become workflows, workflows become orchestration chains, and orchestration chains become autonomous systems. Prompt engineering is gradually evolving into agent orchestration — the art of managing AI “teams” rather than just writing better instructions.

Example: Where Grok 4.20 Failed

One beta workflow involved refactoring a legacy authentication system — a real test, not a synthetic benchmark.

Initially, the results looked impressive. The Captain decomposed the architecture correctly. Benjamin generated functional code. Harper identified outdated dependencies, including a deprecated JWT library with a known CVE.

Then Lucas started rejecting revisions over style inconsistencies rather than functional issues — indentation patterns, naming conventions that technically worked but deviated from the Captain’s original scaffold. The system entered a loop. Not because the code was broken, but because Lucas was optimizing against a style target that Benjamin hadn’t been explicitly given.

The outcome: longer completion time, increased token usage, and a final output that wasn’t meaningfully better than what Benjamin produced in the second pass. More reasoning layers can amplify inefficiency instead of reducing it — especially when agent goals aren’t precisely aligned.
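One way to avoid this kind of loop is to give the generator and the critic the same explicit acceptance criteria, and to let only some finding types block delivery. The sketch below is a hypothetical policy, not a Grok feature: the `Finding` type, the `BLOCKING_KINDS` set, and the `review` function are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    kind: str      # e.g. "security", "logic", "style"
    message: str


# Assumption: the planner publishes one criteria set that both agents read.
BLOCKING_KINDS = frozenset({"security", "logic", "test_failure"})


def review(findings: list[Finding]) -> tuple[bool, list[Finding], list[Finding]]:
    """Split findings into blocking and advisory; approve when nothing blocks."""
    blocking = [f for f in findings if f.kind in BLOCKING_KINDS]
    advisory = [f for f in findings if f.kind not in BLOCKING_KINDS]
    return (not blocking, blocking, advisory)


approved, blocking, advisory = review([
    Finding("style", "inconsistent indentation"),
    Finding("style", "naming deviates from scaffold"),
])
print(approved, len(blocking), len(advisory))
```

With this split, style notes still reach the final report as advisory items, but they can no longer send working code back for another revision round.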

When You Should Use Multi-Agent AI

Use multi-agent systems when tasks are complex, reasoning depth matters, accuracy is critical, workflows involve multiple steps, or outputs require validation.

Avoid them when you need instant answers, tasks are simple, cost matters more than depth, or latency is unacceptable.

For most users, standard AI remains the better everyday option. Multi-agent systems are specialized infrastructure — powerful in the right context, wasteful in the wrong one.
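The criteria above can be turned into a simple routing rule. This is a sketch under stated assumptions: the `Task` fields and the `choose_mode` function are hypothetical, and the 10-second floor is taken from the low end of the latency range in the benchmark table.

```python
from dataclasses import dataclass


@dataclass
class Task:
    steps: int                # number of distinct steps in the workflow
    needs_validation: bool    # does the output require a review pass?
    latency_budget_s: float   # how long the caller can wait


MULTI_AGENT_MIN_LATENCY_S = 10.0  # low end of the article's 10s-30s range


def choose_mode(task: Task) -> str:
    # Latency is a hard constraint, so check it first.
    if task.latency_budget_s < MULTI_AGENT_MIN_LATENCY_S:
        return "single-agent"
    if task.steps > 1 or task.needs_validation:
        return "multi-agent"
    return "single-agent"


print(choose_mode(Task(steps=5, needs_validation=True, latency_budget_s=60)))
print(choose_mode(Task(steps=1, needs_validation=False, latency_budget_s=60)))
```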

Does Grok 4.20 Support Human-in-the-Loop (HITL)?

Yes, partially. Human-in-the-loop workflows are increasingly critical for enterprise governance, compliance, and high-risk automation. Grok’s architecture supports supervised review systems where humans approve outputs, validate reasoning, or interrupt agent execution chains before the final output is delivered. This is becoming a baseline requirement in regulated industries such as finance, healthcare, and legal services, where autonomous AI execution without oversight creates unacceptable liability exposure.
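A human approval gate can be as small as a function that refuses to release output in high-risk domains without sign-off. The sketch below is generic and does not use any Grok API: `release`, the `approve` callback, and the `HIGH_RISK` set (built from the industries named above) are assumptions.

```python
from typing import Callable, Optional

HIGH_RISK = frozenset({"finance", "healthcare", "legal"})  # domains named in the article


def release(draft: str, domain: str, approve: Callable[[str], bool]) -> Optional[str]:
    """Return the draft for delivery, or None if a required human review rejects it."""
    if domain in HIGH_RISK and not approve(draft):
        return None  # blocked: the reviewer rejected the output
    return draft


# In production, approve() would wait on a real reviewer; here it is a stub.
print(release("wire transfer summary", "finance", approve=lambda d: False))
print(release("release notes", "engineering", approve=lambda d: False))
```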

Future of Multi-Agent AI

The broader direction is obvious. Over the next few years, expect persistent AI agents, autonomous task systems, collaborative reasoning environments, and AI orchestration layers to replace traditional prompt interfaces.

The industry is moving from chatbots answering questions to AI systems coordinating work. Grok 4.20 Multi-Agent Beta is one of the clearest examples of that transition happening in real time — with all the rough edges that come with being early.

FAQs

Q. What is the Grok 4.20 Beta 4-agent system?

The Grok 4.20 Beta 4-agent system is a multi-agent AI setup from xAI in which four specialized agents collaborate inside a shared reasoning environment: Grok (Captain) handles planning, Harper (Researcher) handles research, Benjamin (Logician) handles reasoning, and Lucas (Contrarian) handles validation. Instead of generating a single-pass response, the agents work together to refine outputs before delivering a final answer.

Q. Is Grok 4.20 beta free?

Grok 4.20 beta offers limited free access through selected tiers, but advanced multi-agent features — especially the 16-agent Heavy mode and API access — sit behind paid infrastructure tiers due to the compute costs of running multiple inference passes simultaneously.

Q. What is Grok multi-agent?

Grok multi-agent is a collaborative AI architecture where multiple specialized agents work together inside a shared reasoning system. Unlike standard AI that generates one direct response, Grok multi-agent divides tasks into stages, validates outputs, critiques reasoning, and iteratively improves responses — designed for complex engineering, autonomous workflows, and research synthesis.

Q. How does Grok 4.20 compare to other AI models?

Grok 4.20 performs especially well on multi-step reasoning (AIME score 93.3% vs 84.1% for single-agent), coding workflows, and long-context problem solving. The tradeoffs are slower response times (10–30 seconds vs under 3 seconds), higher compute usage, and increased operational costs. Its multi-agent architecture prioritizes accuracy and reasoning depth over raw speed. For a broader comparison, see Grok vs ChatGPT.

Q. Does Grok support custom agents?

Emerging Grok workflows suggest growing support for customizable agent systems and role-based orchestration, allowing developers to define specialized reasoning roles, workflow behaviors, task routing, and validation pipelines.

Q. What is multi-agent deadlock?

Multi-agent deadlock is a failure scenario where agents repeatedly disagree or loop through validation cycles without improving the final output. One agent generates a solution, another rejects it, revisions continue, but quality stops improving. Grok’s March 0309 snapshot introduced a Consensus Timeout to cap these loops at three iterations before forcing a majority vote.

Q. Does Grok 4.20 support MCP workflows?

Grok 4.20 appears compatible with MCP-style (Model Context Protocol) workflows involving external tools, persistent memory systems, APIs, and coordinated agent execution — making it relevant for enterprise AI automation and advanced agentic workflows.

Q. Is Grok 4.20 better than standard chatbots?

For simple conversations, standard AI chatbots are faster and more efficient. However, Grok 4.20’s multi-agent system outperforms them on complex reasoning, software engineering, research analysis, and long-form task execution. Its biggest advantage is collaborative reasoning between specialized agents rather than single-pass response generation.

Conclusion

Grok 4.20 Multi-Agent Beta isn’t a chatbot upgrade. It’s an architectural signal about where AI infrastructure is heading — collaborative reasoning, agent orchestration, and workflow-based intelligence systems.

The technology is impressive and still imperfect. Multi-agent AI prioritizes depth over speed. The named-agent system (Grok, Harper, Benjamin, Lucas) gives the architecture real structural clarity. The 16-agent Heavy mode raises the ceiling for enterprise deployments. But multi-agent deadlock remains a real and underreported challenge — even with the Consensus Timeout fix — and latency and compute costs stay significant trade-offs.

As of 2026, the biggest value of Grok 4.20 may not be the model itself — but the direction it forces the entire industry to take seriously.


Disclaimer: This article is based on publicly available information, beta documentation, developer discussions, and observed behavior patterns surrounding Grok 4.20 Multi-Agent Beta as of 2026. Because the platform is still evolving, features, pricing, benchmarks, and capabilities may change over time. Some architectural insights and workflow interpretations are analytical observations rather than officially confirmed specifications from xAI.