Running a 14B model on a home machine and chatting with a custom character through a phone while the PC does the heavy lifting sounds like something you’d need a dedicated server room for. In 2026, it’s a Tuesday afternoon setup.

That’s the shift Backyard AI represents — and why most of the guides covering it are already describing something that doesn’t exist anymore.

Most AI chat apps today are cloud-based, filtered, and operating entirely on infrastructure the user doesn’t control. Backyard AI takes the opposite position: the model runs locally, the data stays on the hardware, and the character behavior is defined by the user rather than a platform’s content policy. The trade-off is real — it requires capable hardware and some tolerance for configuration — but for the users it suits, there’s nothing else quite like it.

What Backyard AI Actually Is

Backyard AI is a platform that lets users run and interact with customizable AI characters using their own models — either locally on a PC or through a tethered connection from a mobile device.

The simplest contrast: ChatGPT processes everything in OpenAI’s cloud. Backyard AI processes everything on hardware the user controls. No data leaves the machine unless the user specifically configures an API connection to an external service.

What that enables practically: running AI locally on a PC, connecting a phone to that machine through local tethering, using community-built character cards, and controlling memory, tone, and character behavior at a level no cloud platform exposes.

It sits in the same broader category as local LLM setups for roleplay and AI interaction — but with a specific focus on character-driven conversation rather than raw model testing.

Why Backyard AI Setup Changed in 2026

Backyard AI started as a desktop application. That’s what most existing guides describe. The 2026 reality is different.

The platform has evolved into a web interface plus mobile apps (iOS and Android), plus a local tethering system that connects the phone to the PC. The PC still runs the model — that’s where the compute lives. But the user chats from their phone, and nothing goes to an external server. The inference happens at home; the interface travels with the user.

This hybrid architecture is now the core experience, and understanding it changes everything about how setup and troubleshooting work. Someone following a 2024 setup guide will be looking for UI elements that no longer exist in the same form.

How Backyard AI Works (The Technical Layer Without the Jargon)

Three components connect to make Backyard AI function:

Interface — the web or mobile chat UI where conversation happens.

Model — the AI brain running locally. Backyard AI doesn’t provide intelligence. It provides the runtime and interface. The user brings the model.

Bridge — the local tethering system or API connection that links the interface to the model. For local setups, this runs on the home network. For API setups, it routes to a hosted model.

| Mode | What Happens | Best For |
|---|---|---|
| Local (Tethered) | Model runs on home PC, phone connects over local network | Privacy, power users, serious setups |
| Cloud / API | Uses hosted models via API connection | Quick setup, lower hardware requirements |

The local mode is what distinguishes Backyard AI from mainstream cloud chat platforms. The API mode makes it accessible to users who want character customization without the hardware investment.
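The three-part split can be pictured as a small routing layer. The sketch below is illustrative only: the `Mode` enum, the `Bridge` class, and the two stand-in backends are hypothetical names invented for this example, not Backyard AI's actual code or API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict


class Mode(Enum):
    LOCAL = "local"   # model runs on the home PC
    API = "api"       # model is hosted by an external service


# A backend is anything that turns a prompt into a reply.
Backend = Callable[[str], str]


def local_backend(prompt: str) -> str:
    # Stand-in for on-device inference.
    return f"[local model] reply to: {prompt}"


def api_backend(prompt: str) -> str:
    # Stand-in for a call to a hosted model.
    return f"[hosted model] reply to: {prompt}"


@dataclass
class Bridge:
    """Routes messages from the interface to whichever backend is active."""
    mode: Mode
    backends: Dict[Mode, Backend]

    def send(self, prompt: str) -> str:
        return self.backends[self.mode](prompt)


bridge = Bridge(Mode.LOCAL, {Mode.LOCAL: local_backend, Mode.API: api_backend})
print(bridge.send("Hello"))
```

The point of the design is that the interface only ever talks to the bridge, so switching between local and hosted inference does not change how the chat itself behaves.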

Best Performing AI Models for Local Use in 2026

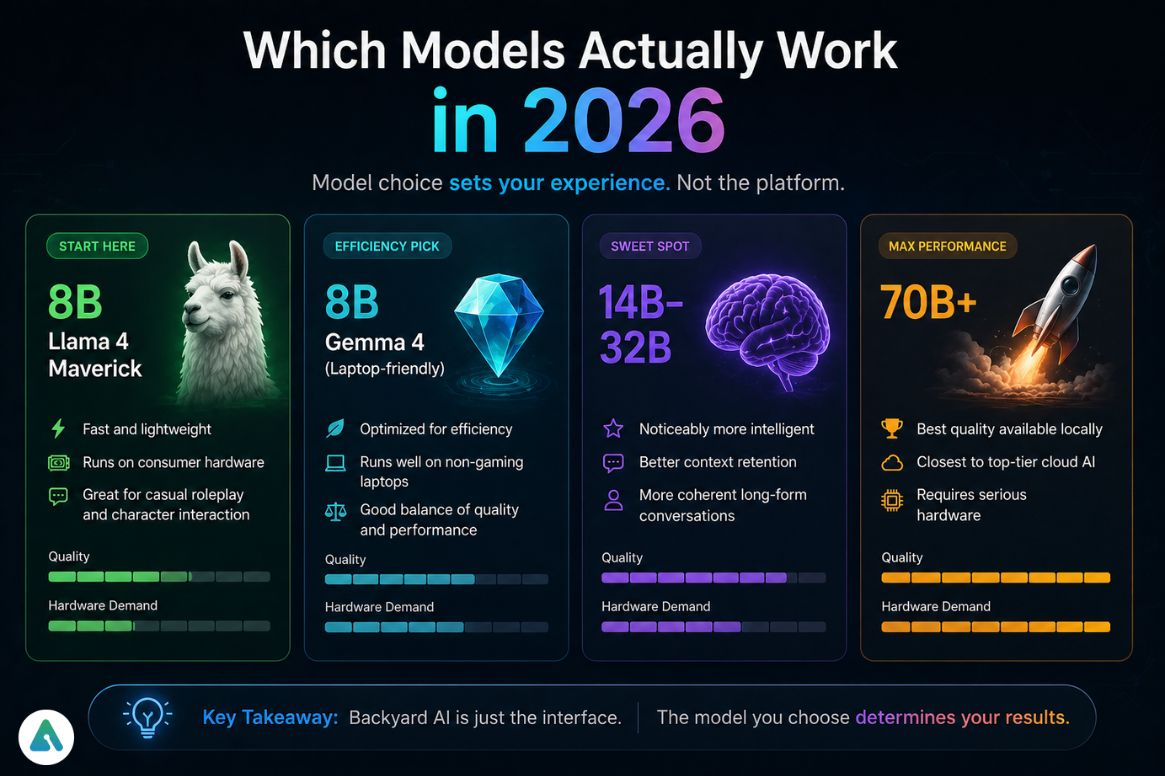

Model selection plays a key role in performance and experience.

8B-class Llama models (for example, Llama 3.1 8B): Dense general-purpose models with solid conversational quality and efficient inference, depending on hardware and quantization. A reasonable fit for mid-range local setups. Llama 4 Maverick itself is a far larger Mixture-of-Experts model (roughly 400B total parameters) and is not an 8B download.

Gemma 4 (laptop-friendly): Optimized for efficiency. Runs well on machines that aren’t dedicated gaming or workstation builds. Good balance between response quality and resource demand.

14B–32B models: The range where conversations become noticeably more intelligent. Characters hold context better, responses feel less templated, and longer interactions stay coherent. This is the sweet spot for users who’ve moved past testing.

70B+ models: The best quality available locally, and the closest experience to top-tier cloud AI. They require serious hardware to run at usable speeds.

Model choice matters more than most users expect before they start. The platform itself is relatively neutral — the quality ceiling is set by the model, not by Backyard AI’s software.

The Hardware Reality (The Section Most Guides Skip)

“The software is free. Your GPU is the subscription.” That framing captures the economics accurately.

Budget setup (~8GB VRAM): Runs 7B–8B models adequately. Decent for basic character interaction. Conversations have depth limits — the smaller context window and parameter count show in longer exchanges.

The sweet spot (~16GB VRAM): Runs 13B–14B models smoothly at 4-bit quantization. Models approaching 32B usually need heavier quantization or partial CPU offload at this size. This is where the experience shifts from “impressive for local AI” to “genuinely useful daily driver.” The investment here unlocks a noticeably different quality tier.

Powerhouse setup (32GB+ VRAM or unified memory): Runs 32B models comfortably and 70B+ models with heavy quantization, more easily toward the upper end of this range. The closest local experience to frontier cloud models. Apple Silicon Macs with M3/M4 chips and 32–64GB unified memory are a popular path here because the GPU and CPU share memory, making large models more accessible than VRAM-limited discrete GPUs at the same price.

Most users underestimate the hardware requirements and overestimate what entry-level setups deliver. Testing a 7B model and concluding “local AI isn’t very good” is a hardware conclusion, not a platform conclusion.
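A back-of-the-envelope estimate helps when matching a model to a machine. The sketch below uses the common rule of thumb that weight memory is roughly parameters times bits per weight divided by eight. The 20% overhead for the KV cache and runtime buffers is an assumption chosen for illustration, not a measured figure.

```python
def estimate_model_memory_gb(params_billions: float, bits_per_weight: float,
                             overhead_fraction: float = 0.2) -> float:
    """Rough memory needed to load a model, in GB.

    weights = parameters * bits / 8 bytes; overhead_fraction is an assumed
    allowance for the KV cache and runtime buffers, not a measured value.
    """
    weight_gb = params_billions * bits_per_weight / 8
    return weight_gb * (1 + overhead_fraction)


for size in (8, 14, 32, 70):
    print(f"{size}B at 4-bit: ~{estimate_model_memory_gb(size, 4):.1f} GB")
```

Under these assumptions an 8B model at 4-bit needs about 4.8 GB, a 32B model about 19.2 GB, and a 70B model about 42 GB, so where a given model lands in the tiers above depends heavily on quantization level and context length.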

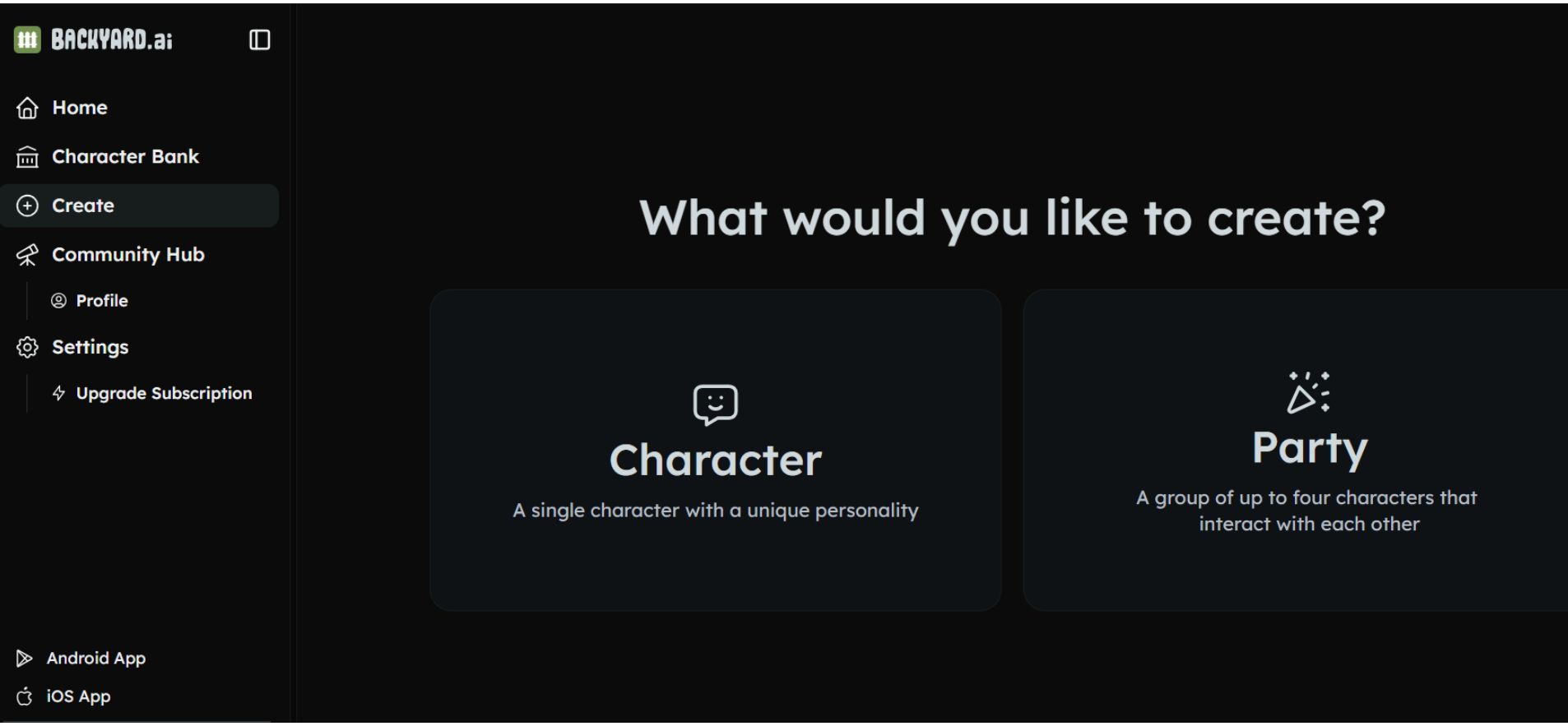

The Party Feature: Multi-Character Simulation

One of the more significant 2026 additions is group chat functionality — called Parties — where multiple AI characters run simultaneously and interact with each other.

Multiple characters with distinct personalities can be loaded into the same session. They respond to the user and to each other, enabling debates, collaborative storytelling, roleplay scenarios with multiple participants, and dynamic conversations that single-character sessions can’t produce.
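One simple way to picture a multi-character session is a round-robin loop over a shared transcript. This is a sketch of the general idea, not a description of how Parties is implemented. The `Character` class and `run_round` function are hypothetical, and `reply` is a stub standing in for a real model call.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Character:
    name: str
    persona: str

    def reply(self, transcript: List[Tuple[str, str]]) -> str:
        # Stand-in for a model call: a real setup would send the persona and
        # transcript to the model. Here we just acknowledge the last speaker.
        last_speaker, _ = transcript[-1]
        return f"({self.persona}) responding to {last_speaker}"


def run_round(characters: List[Character], transcript: List[Tuple[str, str]]) -> None:
    """Each character speaks once, seeing everything said before its turn."""
    for character in characters:
        transcript.append((character.name, character.reply(transcript)))


party = [Character("Ada", "skeptical engineer"), Character("Bram", "cheerful bard")]
transcript = [("User", "Should we explore the cave?")]
run_round(party, transcript)
for speaker, text in transcript:
    print(f"{speaker}: {text}")
```

Because every character reads the same transcript, the second speaker reacts to the first rather than only to the user, which is what makes debates and multi-party scenes possible.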

This turns Backyard AI from a chatbot into something closer to a simulation environment. Parties is the local counterpart to the group chat feature on platforms like Character AI, with one difference: character behavior is fully user-defined rather than constrained by platform moderation.

The Character Hub: The Community Layer

Backyard AI has a community ecosystem built around shareable character cards — .json and .png files that encode a character’s personality, tone, memory triggers, and behavioral patterns. Users create them, share them, and build on each other’s work.

The closest analogy is Steam Workshop for AI personalities. The character card format makes setups portable: find a card someone built for a character type that fits a use case, import it, and it brings all the prompt engineering that person invested in it.

This community layer is a significant practical advantage. The first-time setup question, “how do I make a character actually behave the way I want?” has a shortcut: find a card that’s already been tuned by someone who spent the time.

Conventions from the wider AI character card ecosystem carry over to Backyard AI’s system: the principles of writing a good card are the same, even where the platform interface differs.
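To make the idea of a portable card concrete, here is a minimal sketch of reading a JSON card and turning it into a system prompt. The field names (`name`, `personality`, `scenario`, `first_message`) and the `build_system_prompt` helper are assumptions for illustration. Real cards, including those Backyard AI imports, may use a different schema.

```python
import json
from typing import Dict

# Hypothetical card contents; real cards may use different field names.
CARD_JSON = """
{
  "name": "Mira",
  "personality": "dry-witted ship navigator",
  "scenario": "Long-haul cargo run through an asteroid belt",
  "first_message": "Course is plotted. Try not to touch anything."
}
"""


def build_system_prompt(card: Dict[str, str]) -> str:
    """Turn card fields into a single instruction block for the model."""
    return (
        f"You are {card['name']}, a {card['personality']}.\n"
        f"Scenario: {card['scenario']}\n"
        "Stay in character at all times."
    )


card = json.loads(CARD_JSON)
print(build_system_prompt(card))
print(card["first_message"])
```

Whatever the exact schema, the value of a shared card is the same: the prompt engineering lives in the file, so importing it reproduces the author's tuning without rewriting it by hand.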

The Context Window Problem (Why Characters Forget)

Context window limits are the most common frustration in extended Backyard AI sessions, and they’re architectural rather than fixable through settings.

Every AI model has a maximum amount of text it can actively process at once. As a conversation extends, earlier exchanges are pushed out of the active window to make room for new ones. The character doesn’t “forget” because of a bug; it forgets because the earlier messages are no longer in the context the model sees.

The practical effect: characters lose personality consistency in long sessions, story continuity breaks down, and details established early in a conversation disappear. This is the same context window drift that affects AI companion platforms across the category — the local-versus-cloud distinction doesn’t change the underlying model architecture.

The mitigation strategies: choose models with larger context windows (this is a model spec to check before downloading), keep prompts structured, and restate important details periodically so they stay inside the window. For serious long-form sessions, consider models in the 32B+ range, which tend to track long context more reliably. Note that context length is a separate spec from parameter count, so check both.
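The forgetting behavior is easy to reproduce with a toy sliding window. The sketch below keeps the system prompt and then fits as many of the newest messages as the budget allows. The word-count tokenizer is a deliberate simplification (real tokenizers usually produce more tokens), and `fit_to_window` is a hypothetical helper, not part of any Backyard AI API.

```python
from typing import List


def count_tokens(text: str) -> int:
    # Crude approximation: one token per whitespace-separated word.
    # Real tokenizers usually produce more tokens than this.
    return len(text.split())


def fit_to_window(system_prompt: str, messages: List[str], max_tokens: int) -> List[str]:
    """Keep the system prompt, then as many of the newest messages as fit."""
    budget = max_tokens - count_tokens(system_prompt)
    kept: List[str] = []
    for message in reversed(messages):      # walk newest to oldest
        cost = count_tokens(message)
        if cost > budget:
            break                           # everything older is dropped
        kept.append(message)
        budget -= cost
    return [system_prompt] + kept[::-1]     # restore chronological order


history = [
    "User: My character's sister is named Elowen.",
    "Bot: Noted, Elowen it is.",
    "User: We arrive at the harbor at dusk.",
    "Bot: The gulls circle as the ship docks.",
]
window = fit_to_window("You are a sea captain.", history, max_tokens=24)
print(window)
```

With a 24-token budget only the two newest messages survive, so the sister's name established at the start is no longer visible to the model. Restating key details periodically works because it moves them back into the surviving part of the window.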

Backyard AI vs. Other Local AI Tools

| Tool | Best For | Key Difference |
|---|---|---|
| Backyard AI | Character interaction, persistent personalities | Built for living with AI, not testing it |
| LM Studio | Developers, model evaluation | Model testing environment without character layer |
| Cloud AI apps | Convenience, no setup | No local control, no privacy, platform-filtered |

LM Studio and Backyard AI are often compared, and the distinction is clean: LM Studio is for evaluating models. Backyard AI is for building an ongoing character-driven experience with them. Users who want to benchmark GGUF files use LM Studio. Users who want a consistent AI character that remembers their preferences use Backyard AI.

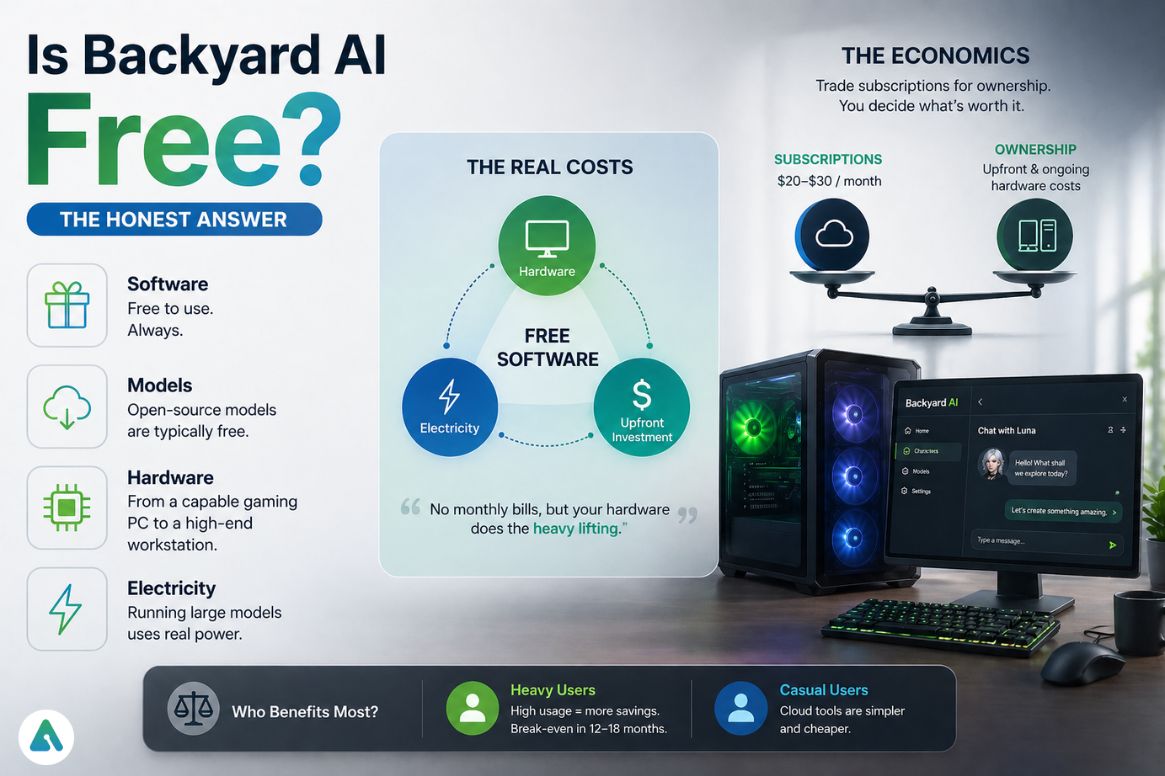

Is Backyard AI Free? (The Honest Answer)

The software itself is free. That’s accurate but misleading without context.

Models are typically free to download. Hardware capable of running useful models ranges from adequate (a gaming PC with 16GB VRAM) to expensive (a high-VRAM workstation or a MacBook Pro with 36GB unified memory). Electricity is an ongoing cost: running inference on a 30B model for hours at a time draws real power.

The economics: users trade recurring subscription fees for upfront and ongoing hardware costs. For heavy users who would otherwise pay $20–$30/month for multiple AI subscriptions, the hardware investment often amortizes favorably over 12–18 months. For casual users, cloud tools are simply cheaper.
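The break-even point is simple arithmetic: hardware cost divided by the net monthly saving. The figures in the sketch below (a $400 GPU upgrade, $30 per month in subscriptions, $5 per month in extra electricity) are hypothetical inputs chosen for illustration, so substitute real numbers before drawing conclusions.

```python
def break_even_months(hardware_cost: float, monthly_subscriptions: float,
                      monthly_electricity: float = 0.0) -> float:
    """Months until hardware spend equals what subscriptions would have cost."""
    monthly_saving = monthly_subscriptions - monthly_electricity
    if monthly_saving <= 0:
        return float("inf")   # local never pays off under these inputs
    return hardware_cost / monthly_saving


# Hypothetical figures: a $400 GPU upgrade, $30/month in subscriptions,
# and $5/month in extra electricity.
print(f"{break_even_months(400, 30, 5):.0f} months")
```

With those inputs the upgrade pays for itself in 16 months. A full workstation build costs several times more, which stretches the payback period proportionally.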

Is Backyard AI Safe?

The privacy profile is genuinely stronger than cloud alternatives when configured correctly. Data stays on the local machine. No conversation content reaches external servers unless the user explicitly sets up an API connection to a hosted model.

The risk profile shifts: instead of trusting a platform’s data handling, the user trusts their own network security and the sources from which they download models. Downloading character cards or model files from unverified sources is the primary attack surface. A misconfigured local network connection that exposes the tethering port to the broader internet is the secondary one.
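One quick sanity check for the network side is to look at which address a local service is listening on. The sketch below classifies an address using Python's standard `ipaddress` module. The `exposure_level` function and its labels are hypothetical, and how Backyard AI's tethering actually binds is not something this example assumes.

```python
import ipaddress


def exposure_level(bind_address: str) -> str:
    """Classify how widely a listening address is reachable."""
    ip = ipaddress.ip_address(bind_address)
    if ip.is_loopback:
        return "this machine only"
    if ip.is_unspecified:
        return "all interfaces (check router and firewall rules)"
    if ip.is_private:
        return "local network"
    return "publicly routable (avoid for tethering)"


for address in ("127.0.0.1", "192.168.1.20", "0.0.0.0", "8.8.8.8"):
    print(address, "->", exposure_level(address))
```

An address in a private range is what a home tethering setup would normally want. A service bound to all interfaces is only as private as the router's port forwarding and firewall rules make it.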

Understanding how AI companion platforms handle privacy helps set expectations — Backyard AI’s local-first approach offers more inherent privacy than cloud platforms, but trades platform-managed security for user-managed security.

Getting Started: The Realistic First Session

Install Backyard AI from the official site. Open the web or mobile interface. Connect to the local machine if using tethering. Load a model (start with an 8B model if the hardware’s capability is uncertain). Import a character card from the Hub or configure a basic character from scratch. Start chatting.

Everything beyond that is optimization. The common mistake is trying to configure everything before having a conversation — most of the important settings only become obvious once the baseline experience is running.

Mistakes that kill the first experience:

- Expecting a polished experience from a 7B model on marginal hardware

- Importing a complex character card before understanding how the basic interface works

- Running a 30B+ model on hardware that can’t support it and concluding the platform is slow

- Letting context windows overflow in early sessions instead of resetting when conversations drift

Frequently Asked Questions

Q. What is Backyard AI used for?

Backyard AI is used for running local AI models with customizable characters, enabling private and platform-independent conversations. Unlike typical chatbots, it focuses on persistent personalities and long-form interaction rather than one-time questions, making it popular for roleplay, simulation, and personal AI use.

Q. Is Backyard AI safe to use?

Backyard AI is generally safer than cloud AI tools when used locally, because your data stays on your own device. However, safety depends on downloading trusted models, securing your network, and avoiding misconfigured APIs. It offers privacy advantages but requires more user responsibility.

Q. Is Backyard AI free?

Backyard AI is free to install and use, and most compatible AI models are also free. The main cost comes from hardware requirements, such as a capable GPU or a high-memory system needed to run advanced models efficiently. In practice, your device acts as the “subscription.”

Q. How does Backyard AI work?

Backyard AI works by connecting a chat interface (web or mobile) to an AI model running locally or via an API. The model generates responses, while Backyard AI manages the character personality, memory behavior, and conversation interface, including mobile-to-PC tethering.

Q. Can Backyard AI be used on mobile?

Yes, Backyard AI supports mobile use through iOS and Android apps with local tethering. In this setup, the AI model runs on your home computer, while you interact with it through your phone, combining mobility with local processing and privacy.

Q. What models work best with Backyard AI?

The best models for Backyard AI depend on your hardware. Lightweight setups work well with 8B-class models, while 14B–32B models offer better quality for most users. High-end systems can run 70B+ models for more advanced and realistic conversations.

Q. Why does Backyard AI forget conversations?

Backyard AI “forgets” conversations due to context window limits in AI models. These limits restrict how much past conversation the model can actively remember. As new messages are added, older ones are removed. Models with larger context windows reduce this issue but do not eliminate it.

The Bigger Shift Backyard AI Represents

Backyard AI isn’t just a local chat tool — it’s an early instance of a structural change in how AI is distributed. The trajectory from platform-controlled cloud AI toward user-controlled local AI is real and accelerating in 2026. Local-first AI systems, personal AI environments, and custom AI personalities that exist entirely outside platform content policies are all part of the same shift.

The risks of depending entirely on centralized AI platforms — where policy changes, outages, or business decisions can alter the experience or remove access — are exactly what Backyard AI’s architecture sidesteps. The trade-off is responsibility: users who control the system also maintain it.

For users who find that trade worth making, Backyard AI is the most capable option in its category in 2026.


Disclaimer: This article is for informational purposes only and is not sponsored or affiliated with Backyard AI. We do not receive any compensation for mentioning tools or platforms. All opinions are based on independent research and analysis.