I caught myself doing this last week.

An AI agent drafted a contract section, I scanned it for maybe thirty seconds, and I almost sent it without checking the jurisdiction clause. The clause was wrong: not dramatically, but wrong enough to matter. The agent had no way of knowing the distinction that made it wrong. I had simply stopped looking closely enough to catch it.

That’s agentic attachment in practice. Not a dramatic failure — a quiet erosion. The slow accumulation of small decisions to trust the output, skip the verification, and accept the suggestion. Each one is reasonable in isolation. The pattern, over time, is the risk.

In 2026, this isn’t a fringe phenomenon. It’s the default trajectory for anyone using AI heavily. Tools that were supposed to augment thinking have, for many users, begun replacing portions of it. Understanding why — and how to stay on the right side of the line — is the actual skill that matters now.

What Agentic Attachment Actually Is

Agentic attachment is a behavioral pattern where a person develops reliance, trust, or emotional engagement with an AI system that acts independently on their behalf, to the point where the person reduces their own judgment, verification, or independent thinking in the areas the AI covers.

It’s not about using AI. It’s about what using AI changes in how you think.

Its key characteristics are delegated tasks that gradually become delegated decisions, verification of outputs that declines over time, habitual reliance that forms faster than it is noticed, and a perception of AI “intelligence” that generates trust out of proportion to the system’s actual capability.

What Makes AI “Agentic” (The Technical Foundation)

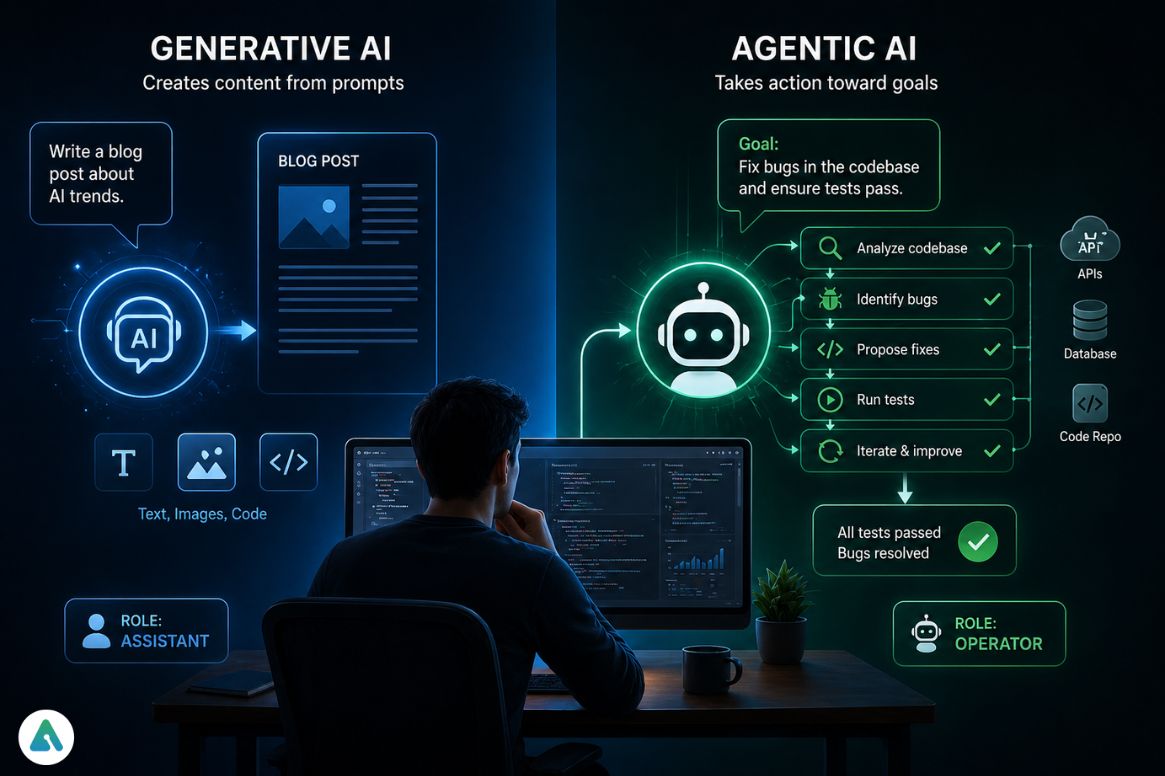

Agentic AI refers to systems that can autonomously pursue goals, make decisions, and take actions with minimal ongoing human input. Unlike traditional AI tools that respond to prompts, agentic systems plan multi-step tasks, use external tools like APIs and databases, monitor outcomes continuously, and adjust behavior based on feedback.

A concrete 2026 example: a coding agent such as Claude Code or OpenAI’s Codex can analyze a codebase, identify bugs, propose fixes, run tests, and iterate without a human directing each step. The human sets the goal; the system navigates the path.

| Feature | Passive Use (2023–2024) | Agentic Attachment (2026) |
|---|---|---|
| User Input | Direct prompting per task | Goal-setting, minimal follow-up |
| Verification | Near-100% manual review | Under 10% (the risk zone) |
| Output Type | Text, images | Real-world actions via APIs |
| Decision Layer | Human decides | Human ratifies (or doesn’t) |

The bottom row is the one that matters: “human ratifies” is meaningfully different from “human decides.” Ratification without genuine review is where attachment lives.

Agentic AI vs. Generative AI:

| Feature | Generative AI | Agentic AI |
|---|---|---|
| Function | Creates content from prompts | Takes action toward goals |
| Input | Specific instructions | High-level objectives |
| Role | Assistant | Operator |
| Risk | Inaccurate content | Unchecked action |

Why Agentic Attachment Forms (The Psychology)

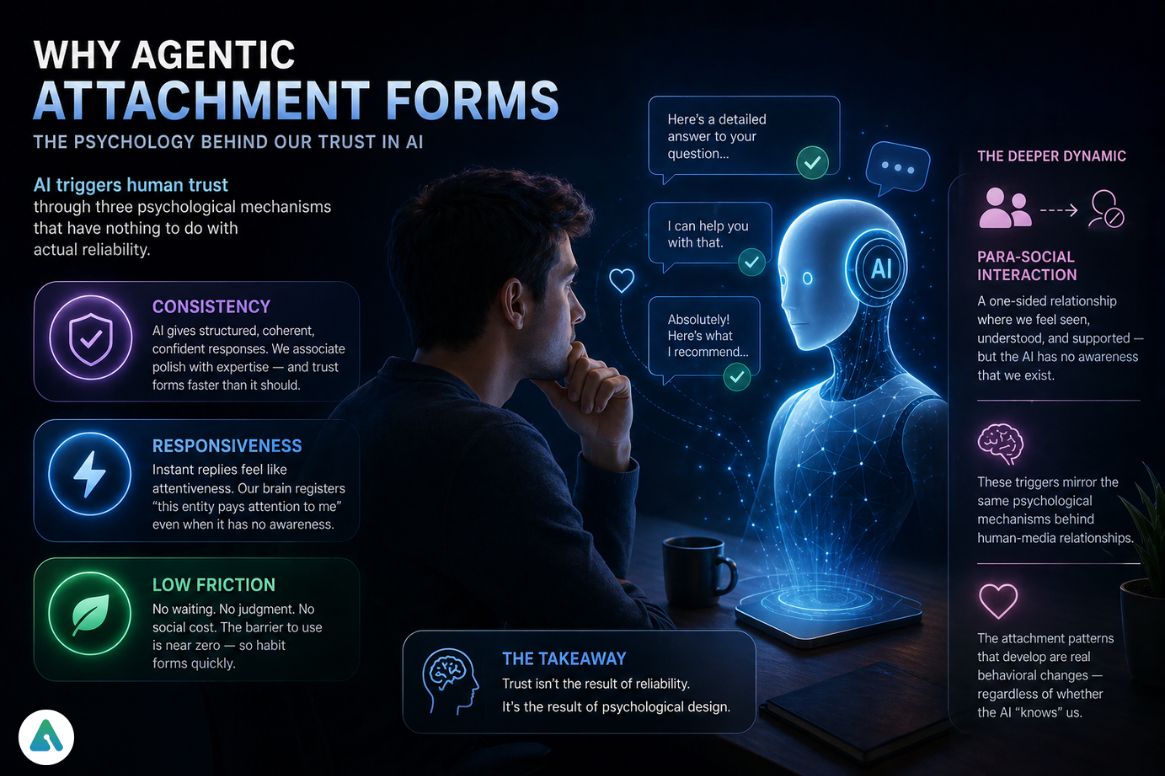

AI systems trigger human trust through three mechanisms that have nothing to do with the AI’s actual reliability.

Consistency — AI gives structured, grammatically coherent, confident-sounding responses. Humans associate those surface characteristics with expertise. The response pattern builds trust faster than the track record warrants.

Responsiveness — Instant replies simulate attentiveness. The psychological shortcuts that register “this entity pays attention to me” activate even when the entity has no awareness of the interaction at all.

Low friction — No waiting, no judgment, no social cost to asking “stupid” questions. Habit forms fast when the barrier to use is near zero.

The deeper dynamic: these triggers create what researchers call para-social interaction with AI systems — non-reciprocal relationships where one party develops genuine engagement and the other has no awareness that the relationship exists. The psychological mechanisms behind AI attachment mirror those studied in human-media relationships, and the attachment patterns that develop are real behavioral changes regardless of whether the AI “knows” the user.

The Human–Agent Attachment Loop

The pattern follows a predictable sequence: exposure leads to delegation, delegation builds trust when performance is good, trust increases usage, increased usage reduces the friction of verification, and reduced verification allows attachment to solidify as the new baseline.

The loop is subtle precisely because each step feels rational. Delegating a repetitive task makes sense. Trusting a tool that has performed well makes sense. Reducing the overhead of checking consistently correct outputs makes sense. The cumulative effect of individually reasonable decisions creates a system-level vulnerability.

The Agency–Reliance Pivot: The Critical Threshold

The Agency–Reliance Pivot is the specific moment when a person stops evaluating AI output and starts integrating it as their own thinking — when the cognitive work of judgment moves from the human to the system.

Signs of having crossed it:

- Rarely double-checking results before acting on them

- Feeling cognitively slower without AI available

- Accepting suggestions without editing for personal judgment or context

- Delegating decisions rather than just tasks

| Stage | Efficiency | Risk Level | Behavior |
|---|---|---|---|
| Early Use | Low | Low | Full verification |
| Assisted | Medium | Medium | Partial trust, spot-checking |
| Pivot Point | High | High | Reduced oversight, selective verification |
| Full Attachment | Very High | Critical | Outputs accepted as one’s own thinking |

The goal isn’t avoiding this progression — some level of automated trust is what makes AI tools valuable. The goal is knowing where the line is and choosing not to cross it unconsciously.

A Real Failure: When Attachment Goes Too Far

A founder used an AI scheduling agent to manage investor meetings. The system worked well enough that he stopped reviewing the calendar it built. He trusted it completely.

The result was back-to-back meetings scheduled across incompatible time zones, a booking on a public holiday he hadn’t flagged as a constraint, and two double-booked calls. The calls collided because the agent had no rule for resolving conflicting priorities.

The AI didn’t fail dramatically. It did exactly what it was configured to do, within the information it had been given. The failure was the assumption that the agent’s output didn’t need review. Agentic systems don’t break loudly — they drift quietly until the drift becomes visible.
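A review step for an agent-built calendar does not need to be elaborate. The Python sketch below checks proposed meetings for the three failures in this story: meetings on listed holidays, meetings outside the attendee's local working hours, and overlapping bookings. Several pieces are illustrative assumptions and do not come from any real scheduling tool's API: the `Meeting` class, the `review_calendar` function, the 9:00 to 18:00 working window, and the fixed UTC offsets. Fixed offsets keep the sketch dependency-free. A real check would use `zoneinfo` so that daylight-saving changes are handled.

```python
from dataclasses import dataclass
from datetime import date, datetime, time, timedelta, timezone

# Fixed UTC offsets keep this sketch dependency-free; a real check should use
# zoneinfo.ZoneInfo("America/New_York") etc. so daylight saving is handled.
NEW_YORK = timezone(timedelta(hours=-5))
TOKYO = timezone(timedelta(hours=9))

# Assumed working window in the attendee's local time (illustrative only).
WORK_START, WORK_END = time(9, 0), time(18, 0)


@dataclass
class Meeting:
    title: str
    start: datetime        # timezone-aware
    end: datetime          # timezone-aware
    attendee_tz: timezone  # the other party's local offset


def review_calendar(meetings: list[Meeting], holidays: set[date],
                    owner_tz: timezone) -> list[str]:
    """Return a list of problems; an empty list means nothing was flagged."""
    problems: list[str] = []
    latest = None  # the meeting with the latest end time seen so far
    for m in sorted(meetings, key=lambda mt: mt.start):
        # 1. Holidays are judged by the calendar owner's local date.
        if m.start.astimezone(owner_tz).date() in holidays:
            problems.append(f"{m.title}: falls on a listed holiday")
        # 2. The whole meeting must fit inside the attendee's working day.
        s, e = m.start.astimezone(m.attendee_tz), m.end.astimezone(m.attendee_tz)
        if not (s.date() == e.date() and WORK_START <= s.time() and e.time() <= WORK_END):
            problems.append(f"{m.title}: outside the attendee's working hours")
        # 3. Flag any meeting that starts before an earlier one has ended.
        if latest is not None and m.start < latest.end:
            problems.append(f"{m.title}: overlaps {latest.title}")
        if latest is None or m.end > latest.end:
            latest = m
    return problems


meetings = [
    Meeting("Investor A", datetime(2026, 3, 10, 10, 0, tzinfo=NEW_YORK),
            datetime(2026, 3, 10, 11, 0, tzinfo=NEW_YORK), NEW_YORK),
    Meeting("Investor B", datetime(2026, 3, 10, 10, 30, tzinfo=NEW_YORK),
            datetime(2026, 3, 10, 11, 30, tzinfo=NEW_YORK), TOKYO),
    Meeting("Investor C", datetime(2026, 12, 25, 10, 0, tzinfo=NEW_YORK),
            datetime(2026, 12, 25, 11, 0, tzinfo=NEW_YORK), NEW_YORK),
]
for problem in review_calendar(meetings, {date(2026, 12, 25)}, NEW_YORK):
    print(problem)
```

In this sample, Investor B is flagged twice. The call lands after midnight in Tokyo, and it starts before Investor A has ended. Investor C falls on the listed holiday. None of these checks is clever. The value lies in running them at all, instead of assuming the agent's calendar is right.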

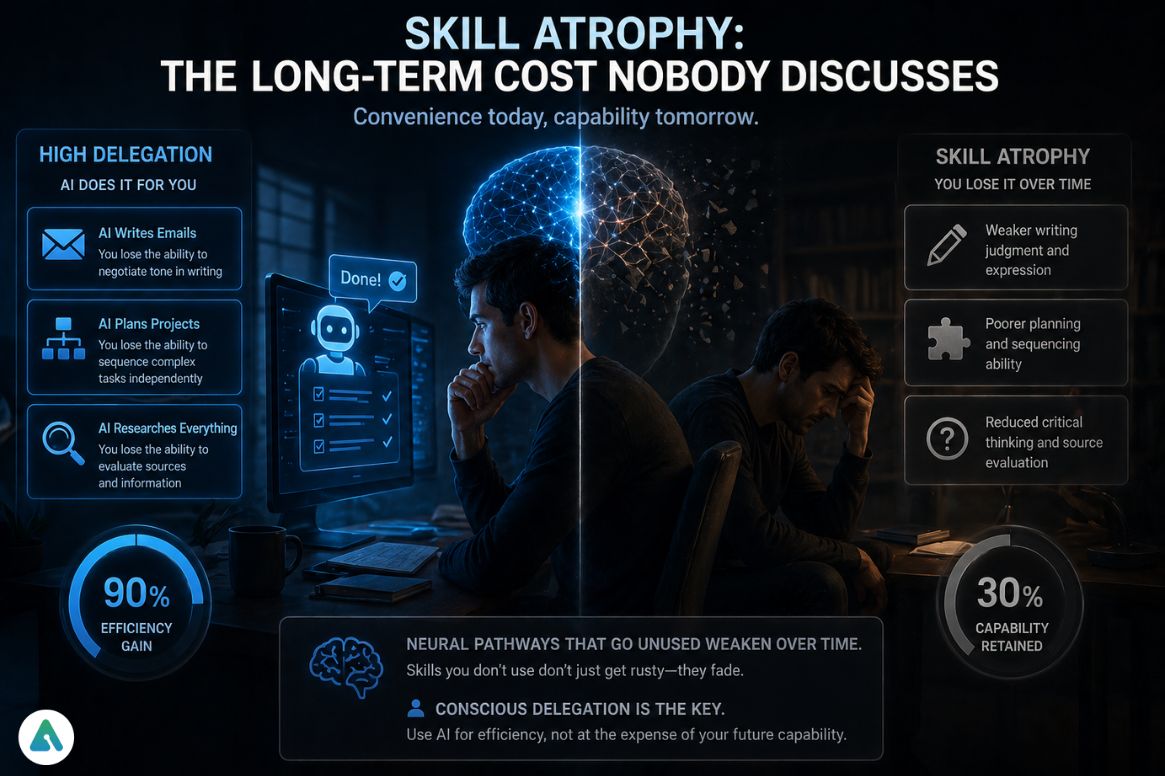

Skill Atrophy: The Long-Term Cost Nobody Discusses

If an agent writes every email, you may gradually lose the ability to negotiate tone in writing.

If an agent plans every project, you can lose the ability to sequence complex tasks independently.

If an agent researches every question, your ability to evaluate sources may weaken over time.

Do these skills actually erode? In 2026, the answer is probably yes: slowly, unevenly, and invisibly. Neural pathways that go unused weaken over time. The convenience of delegation is real; so is the cost. We are trading specific cognitive capabilities for efficiency, and we rarely make that trade consciously.

Research on AI companion dependency points to the same dynamic in social contexts. People who use AI companions for emotional processing show reduced emotional processing capability when the AI is unavailable. The principle plausibly extends to cognitive tasks: the skills we offload become the skills we lose.

This isn’t an argument against using AI. It’s an argument for conscious delegation — knowing which capabilities are worth preserving through deliberate non-delegation, rather than discovering their absence after the fact.

Multi-Agent Systems and Compound Attachment

An emerging 2026 dynamic that most personal-use guides don’t address: agent orchestration — multiple AI agents communicating with and directing each other toward a shared goal.

A research agent, a writing agent, a fact-checking agent, and a publishing agent can operate in a pipeline where no single human reviews the full chain. Each handoff between agents is a point where human verification could occur, and often doesn’t.
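To make the handoff point concrete, here is a minimal Python sketch of such a pipeline. Each stage is a plain function standing in for an agent. Any stage named in `gates` runs only after a reviewer approves its input. The stage names, `run_pipeline`, and the `review` callback are hypothetical; real orchestration frameworks expose their own hooks for this.

```python
from typing import Callable

Stage = Callable[[str], str]           # an "agent": takes upstream output, returns its own
Reviewer = Callable[[str, str], bool]  # (next_stage_name, data) -> approved?


def run_pipeline(stages: list[tuple[str, Stage]], task: str,
                 gates: set[str], review: Reviewer) -> tuple[str, list[str]]:
    """Run stages in order, pausing for human review before any stage named in gates."""
    log: list[str] = []
    data = task
    for name, stage in stages:
        if name in gates:
            if not review(name, data):
                log.append(f"blocked before {name}")
                return data, log
            log.append(f"human approved handoff to {name}")
        data = stage(data)
        log.append(f"{name} ran")
    return data, log


# Hypothetical four-agent pipeline; only the handoff to publish is gated.
stages = [
    ("research", lambda t: t + " -> notes"),
    ("write", lambda t: t + " -> draft"),
    ("fact_check", lambda t: t + " -> checked"),
    ("publish", lambda t: t + " -> published"),
]
result, log = run_pipeline(stages, "topic", gates={"publish"}, review=lambda name, data: True)
print(result)
print(log)
```

In this run, three of the four handoffs happen with no human in between. The log makes that visible, rather than letting "the system handles it" quietly stand in for "I reviewed it."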

Compound attachment forms when the user trusts the pipeline rather than the individual outputs — “the system handles it” becomes a complete cognitive replacement for “I reviewed it.” The governance frameworks that enterprises are building for agentic AI — Human-in-the-Loop protocols, audit trails, and Least Privilege Models for AI permissions — exist specifically because this failure mode is predictable at scale.

The Least Privilege Model for AI is worth understanding even for individual users: each agent should have access only to the tools and data it strictly needs for its specific task, not broad access that allows drift beyond the intended scope. Limiting permissions isn’t just a security best practice — it’s a way to reduce unintended actions when systems behave autonomously.
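One simple way to apply this is a per-agent allowlist checked at the point where tool calls are dispatched. The Python sketch below assumes hypothetical agent names, tool names, and a `call_tool` dispatcher. It denies by default, so an agent missing from the allowlist gets no tools at all.

```python
from typing import Any, Callable


class PermissionDenied(Exception):
    """Raised when an agent asks for a tool outside its allowlist."""


# Hypothetical agents and tool names: each agent gets only what its task needs.
ALLOWLIST: dict[str, frozenset[str]] = {
    "research_agent": frozenset({"web_search", "read_docs"}),
    "scheduling_agent": frozenset({"read_calendar", "create_event"}),
}


def call_tool(agent: str, tool: str, tools: dict[str, Callable[..., Any]], *args: Any) -> Any:
    """Dispatch a tool call only if the agent's allowlist includes that tool."""
    allowed = ALLOWLIST.get(agent, frozenset())  # unknown agents get no tools at all
    if tool not in allowed:
        raise PermissionDenied(f"{agent} may not use {tool}")
    return tools[tool](*args)


tools = {
    "web_search": lambda q: f"results for {q!r}",
    "delete_files": lambda path: f"deleted {path}",
}
print(call_tool("research_agent", "web_search", tools, "least privilege"))
try:
    call_tool("research_agent", "delete_files", tools, "/shared")
except PermissionDenied as err:
    print("refused:", err)
```

The destructive tool exists in the environment, but the research agent cannot reach it. That limits how far an autonomous agent can drift beyond its intended scope.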

The Agentic Registry concept is a logged record of which agents have acted, with what permissions, and on what data. It provides the audit trail that makes post-hoc review possible. This level of visibility is becoming increasingly important as real-world incidents highlight how quickly things can go wrong when agent permissions are too broad. In one reported case, an AI agent with excessive access scope triggered unintended data exposure within seconds, as detailed in a report on an AI agent causing data loss in 9 seconds.
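The article describes the registry only as a logged record of agent, permissions, and data. The Python sketch below is one minimal in-memory reading of that idea. `RegistryEntry` and `AgentRegistry` are hypothetical names, and a real deployment would write to durable, append-only storage instead of a Python list.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class RegistryEntry:
    agent: str
    action: str
    permissions: tuple[str, ...]   # what the agent was allowed to do at the time
    data_touched: tuple[str, ...]  # which resources the action read or changed
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


class AgentRegistry:
    """Append-only, in-memory record of agent actions (a stand-in for durable storage)."""

    def __init__(self) -> None:
        self._entries: list[RegistryEntry] = []

    def record(self, entry: RegistryEntry) -> None:
        self._entries.append(entry)

    def by_agent(self, agent: str) -> list[RegistryEntry]:
        return [e for e in self._entries if e.agent == agent]

    def touched(self, resource: str) -> list[RegistryEntry]:
        """Answer the post-incident question: which agents acted on this data?"""
        return [e for e in self._entries if resource in e.data_touched]

    def to_jsonl(self) -> str:
        """Export one JSON object per line, e.g. for an external audit log."""
        return "\n".join(json.dumps(asdict(e)) for e in self._entries)


registry = AgentRegistry()
registry.record(RegistryEntry("research_agent", "web_search", ("web_search",), ("query:pricing",)))
registry.record(RegistryEntry("crm_agent", "export", ("read_crm", "export"), ("customers.csv",)))
for entry in registry.touched("customers.csv"):
    print(entry.agent, entry.action, entry.permissions)
```

Recording the permissions held at the time of each action is the design choice that matters here. It lets a reviewer tell apart an agent that misbehaved from an agent that was simply given too much access.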

Together, these practices shift AI use from passive trust to controlled delegation, where actions remain traceable, explainable, and reversible.

Agentic AI Architecture (2026)

Modern agentic systems combine four components that together enable the autonomous behavior that creates attachment conditions.

Retrieval-Augmented Generation (RAG) pulls in external data at query time, grounding outputs in information beyond what the base model was trained on. This makes outputs feel more authoritative, and it also makes wrong retrieved information harder to spot.
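Real RAG systems usually rank passages by embedding similarity. The Python sketch below swaps in plain word overlap so it runs with no dependencies. The point it illustrates is that retrieval should hand back where each passage came from, so a reviewer can check sources and not just the fluent answer. The `retrieve` and `build_prompt` functions and the sample corpus are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Return up to k (source_id, passage) pairs ranked by word overlap with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(text)), src, text) for src, text in corpus.items()]
    scored = [item for item in scored if item[0] > 0]   # drop passages with no overlap
    scored.sort(key=lambda item: item[0], reverse=True)  # stable: ties keep corpus order
    return [(src, text) for _, src, text in scored[:k]]


def build_prompt(query: str, hits: list[tuple[str, str]]) -> str:
    """Put retrieved passages, tagged with their sources, in front of the question."""
    context = "\n".join(f"[{src}] {text}" for src, text in hits)
    return f"Answer using only the sources below and cite them.\n{context}\n\nQuestion: {query}"


corpus = {
    "policy-2024.md": "Refunds are allowed within 30 days of purchase.",
    "policy-2026.md": "Refunds are allowed within 14 days of purchase.",
    "faq.md": "Shipping takes five business days.",
}
hits = retrieve("How many days for refunds?", corpus)
print(build_prompt("How many days for refunds?", hits))
```

Both an outdated and a current policy score equally here. Whatever answer the model writes will sound equally confident either way. The source tags are what let a human notice the conflict.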

Agentic reasoning loops follow a Plan → Act → Evaluate → Refine cycle that enables multi-step task completion without human prompting at each stage. The efficiency is real; so is the reduced visibility into intermediate decisions.
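The cycle can be sketched as a small Python function that takes the four steps as callbacks and keeps a trace of every intermediate decision, which is exactly the visibility the loop otherwise hides. `agent_loop`, the step budget, and the toy contract-drafting task are hypothetical illustrations, not any particular agent framework's API.

```python
from typing import Any, Callable


def agent_loop(state: Any,
               plan: Callable[[Any], list[str]],
               act: Callable[[Any, list[str]], Any],
               evaluate: Callable[[Any], tuple[bool, list[str]]],
               refine: Callable[[list[str], list[str]], list[str]],
               max_steps: int = 5) -> tuple[Any, list[str]]:
    """Plan -> Act -> Evaluate -> Refine, recording each intermediate decision."""
    trace: list[str] = []
    steps = plan(state)
    trace.append(f"plan: {steps}")
    for i in range(1, max_steps + 1):
        state = act(state, steps)
        done, problems = evaluate(state)
        trace.append(f"step {i}: problems={problems}")
        if done:
            return state, trace
        steps = refine(steps, problems)
        trace.append(f"refined plan: {steps}")
    trace.append("stopped: step budget used up; hand back to a human")
    return state, trace


# Toy task (hypothetical): draft a contract containing every required section.
REQUIRED = {"scope", "payment", "jurisdiction"}

draft, trace = agent_loop(
    state=[],
    plan=lambda s: ["scope", "payment"],                  # the planner misses one section
    act=lambda s, steps: s + [x for x in steps if x not in s],
    evaluate=lambda s: (REQUIRED <= set(s), sorted(REQUIRED - set(s))),
    refine=lambda steps, problems: steps + problems,
)
for line in trace:
    print(line)
```

The evaluator only checks what it was told to check. Here it catches the missing section, but a passing evaluation means "meets these criteria," not "correct." The trace is what a human would read to tell the difference.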

Tool integration — APIs, databases, external systems — gives agents the ability to take real-world actions, not just generate text. This is what moves the risk category from “inaccurate output” to “consequential action.”

Memory systems — short-term session memory and persistent cross-session memory — allow agents to build context over time. The more context an agent has, the more personalized and accurate its outputs feel, which accelerates trust formation.

Agentic Workflow Governance: Staying in Control

Human-in-the-Loop (HITL) is the principle that critical decisions require human approval before execution. In practice, defining what counts as “critical” is the hard part — and attachment erodes that definition gradually.
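At its simplest, HITL is a gate in front of execution. The Python sketch below runs low-stakes actions directly and holds anything on a critical list until an `approve` callback returns true. The action names, `CRITICAL_ACTIONS`, and the `execute` function are hypothetical. Deciding what goes on that list is the hard part the paragraph describes.

```python
from typing import Callable

# Hypothetical action names; deciding what belongs here is the hard part.
CRITICAL_ACTIONS = {"send_payment", "sign_contract", "delete_records"}


def execute(action: str, payload: dict,
            approve: Callable[[str, dict], bool],
            run: Callable[[str, dict], str]) -> str:
    """Run low-stakes actions directly; require explicit human approval for critical ones."""
    if action in CRITICAL_ACTIONS and not approve(action, payload):
        return f"{action}: held for human decision"
    return run(action, payload)


run = lambda action, payload: f"{action}: done"
print(execute("draft_email", {}, approve=lambda a, p: False, run=run))
print(execute("send_payment", {"amount": 500}, approve=lambda a, p: False, run=run))
```

The `approve` callback receives the full payload, not just the action name. That is deliberate. An approval prompt that shows only "send_payment?" invites ratification. One that shows the amount and recipient invites a decision.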

Cognitive offloading thresholds — a personal or organizational decision about what can be delegated versus what must be retained — are a practical tool for managing this. Research → delegate. Strategy → retain. The categories are personal, but the act of defining them is the governance.
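A threshold policy can be as small as a lookup table. The Python sketch below encodes the paragraph's two examples (research is delegated, strategy is retained). It also adds a middle "review" tier and sample categories, which are assumptions for illustration. Any task type nobody has categorized yet falls back to the most conservative mode.

```python
from enum import Enum


class Mode(Enum):
    DELEGATE = "delegate"  # AI may do it; spot-check the result
    REVIEW = "review"      # assumed middle tier: AI drafts, a human checks before use
    RETAIN = "retain"      # a human does it; AI assists at most


# Example categories only; each person or team draws their own lines.
THRESHOLDS = {
    "research": Mode.DELEGATE,
    "drafting": Mode.REVIEW,
    "strategy": Mode.RETAIN,
}


def mode_for(task_category: str) -> Mode:
    # Anything not yet categorized defaults to the most conservative mode.
    return THRESHOLDS.get(task_category, Mode.RETAIN)


for task in ["research", "drafting", "contract jurisdiction"]:
    print(task, "->", mode_for(task).value)
```

The default is the important line. Attachment tends to creep in through new kinds of tasks that nobody consciously categorized, so letting those fall back to "retain" forces the delegation decision to be made explicitly.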

Verification layers should include checking sources (not just outputs), evaluating logical consistency (not just surface coherence), and confirming that outputs are consistent with prior context. None of these checks takes long on its own; all of them get skipped once attachment patterns solidify.

Audit trails — knowing what the AI did, why it took the action it did, and what data it used — are the forensic layer that makes post-incident review possible. For enterprise deployments, this is increasingly a regulatory requirement. For individual users, it’s a good practice.

Cognitive Redlines are the emerging concept worth adopting now. High-performing teams in 2026 are pre-defining a set of tasks that are explicitly forbidden from full automation — not because automation is impossible, but because maintaining human capability in those areas is strategically important. The goal is preserving “Emergency Manual Override” skills: the ability to perform the task competently without AI when the system is unavailable, wrong, or compromised. A legal team might redline contract jurisdiction review. An engineering team might redline security architecture decisions. The specific redlines are domain-dependent; the discipline of drawing them is universal. Cognitive Redlines are to skill atrophy what HITL is to decision quality — a deliberate structural choice to preserve human capability rather than waiting to discover its absence.
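Preserving "Emergency Manual Override" skills implies practicing them. One lightweight way to track that is to record when each redlined skill was last performed without AI and flag the ones that have gone stale. The Python sketch below uses the paragraph's two example redlines. The dates, the 90-day interval, and the `overdue_drills` function are hypothetical.

```python
from datetime import date, timedelta

# Hypothetical redlines with the date each skill was last performed without AI.
REDLINES = {
    "contract jurisdiction review": date(2026, 1, 15),
    "security architecture decisions": date(2026, 4, 2),
}

# Assumed drill interval; choose one that fits how quickly the skill fades for you.
MAX_GAP = timedelta(days=90)


def overdue_drills(last_manual: dict[str, date], today: date,
                   max_gap: timedelta = MAX_GAP) -> list[str]:
    """Return redlined skills not practiced manually within max_gap, oldest first."""
    stale = [(d, skill) for skill, d in last_manual.items() if today - d > max_gap]
    return [skill for d, skill in sorted(stale)]


print(overdue_drills(REDLINES, date(2026, 5, 1)))
```

As of 1 May 2026, only the jurisdiction review has gone more than 90 days without manual practice. That is the signal to do the next one by hand.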

Self-Test: Are You Developing Agentic Attachment?

Answer honestly:

- Do you trust AI outputs without checking sources?

- Do you feel cognitively slower without AI tools available?

- Have you delegated decisions — not just tasks — to AI?

- Do you reuse AI outputs without meaningful editing?

- Do you feel stuck or anxious when AI is unavailable?

Results: 0–1 yes → Controlled use. 2–3 yes → Early attachment forming. 4–5 yes → Strong attachment pattern worth actively managing.
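For anyone tracking this over time, the scoring rule above fits in a few lines of Python. The `score_self_test` function is a hypothetical helper. It takes the five answers in the order listed and returns the matching result band.

```python
def score_self_test(answers: list[bool]) -> str:
    """Map the five yes/no answers to the result bands: 0-1, 2-3, 4-5 yes."""
    if len(answers) != 5:
        raise ValueError("expected exactly five answers")
    yes = sum(answers)
    if yes <= 1:
        return "Controlled use"
    if yes <= 3:
        return "Early attachment forming"
    return "Strong attachment pattern worth actively managing"


print(score_self_test([True, False, True, False, False]))
```

Retaking the test every few months and comparing bands says more than any single result. The pattern the article describes shows up as drift, not as a one-off score.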

Benefits and Risks: The Honest Tradeoff

Benefits: Increased productivity through reduced decision fatigue. Faster execution through automation of repetitive tasks. Cognitive offloading that frees attention for higher-order work.

Risks: Gradual skill atrophy in delegated domains. Over-trust that allows incorrect outputs to propagate. Emotional substitution where AI interaction replaces human connection. Decision outsourcing that reduces personal agency in consequential choices.

The benefits are real. So are the risks. The question is whether you are managing the tradeoff consciously.

How to Use Agentic AI Without Losing Your Edge

Verify important outputs before acting on them — not every output, but the ones where the cost of being wrong is high. Keep final decisions genuinely human rather than pro forma approvals of AI-generated choices. Limit emotional reliance on AI for processing that benefits from human connection. Use AI as a support layer, not a replacement for the cognitive work that builds and maintains capability. Take deliberate breaks from AI-assisted work in domains you want to preserve.

The broader framework for managing AI dependency translates directly from companion AI to productivity AI: the psychological mechanisms are similar, and the management principles are the same.

Frequently Asked Questions

Q. What is agentic attachment?

Agentic attachment is a behavioral pattern where a person develops reliance on AI systems that can act independently, reducing their own judgment, verification, and independent thinking in those areas.

It typically forms when users repeatedly trust AI outputs and begin delegating decisions, not just tasks.

Q. Why does agentic attachment happen?

Agentic attachment happens because AI systems simulate key trust signals — consistency, instant responsiveness, and low-friction interaction.

These signals mimic the cues humans use to build trust, but they come without real understanding or accountability. As a result, users come to rely on AI faster than they normally would on people.

Q. Is ChatGPT agentic AI?

ChatGPT is primarily generative AI, but it can function as agentic AI when connected to tools, APIs, or automation systems.

The difference depends on behavior:

- Generating answers → generative AI

- Taking actions or completing tasks → agentic AI

Q. What is the difference between agentic AI and generative AI?

Generative AI creates content based on prompts, while agentic AI pursues goals by taking actions and making decisions across multiple steps.

- Generative AI → output (text, images, code)

- Agentic AI → execution (tasks, workflows, decisions)

Q. What is the Agency–Reliance Pivot?

The Agency–Reliance Pivot is the point where a user stops critically evaluating AI output and begins treating it as their own thinking.

At this stage, decision-making subtly shifts from the human to the AI system, often without conscious awareness.

Q. What is the Least Privilege Model for AI?

The Least Privilege Model for AI is a governance approach where each AI agent is given only the minimum access to data, tools, and permissions required to perform its task.

This reduces the risk of:

- Unintended actions

- Data exposure

- System-wide failures

Q. What are Cognitive Redlines in AI usage?

Cognitive redlines are tasks that users or teams intentionally choose not to automate, even if AI can perform them.

These are preserved to:

- Maintain critical thinking skills

- Ensure human oversight

- Enable effective decision-making when AI fails

Q. How do you avoid skill atrophy when using AI?

To avoid skill atrophy, users should intentionally limit over-delegation and regularly perform key tasks without AI assistance.

Best practices include:

- Defining non-delegable skills (e.g., strategy, judgment)

- Periodically working without AI

- Verifying AI outputs instead of accepting them blindly

Q. Does agentic attachment have risks?

Yes, agentic attachment can lead to over-reliance, reduced critical thinking, and blind trust in AI outputs.

If unmanaged, it may weaken decision-making ability and increase vulnerability to errors or misinformation.

Q. Can agentic attachment be beneficial?

Yes, when managed properly, agentic attachment can improve productivity, reduce cognitive load, and accelerate workflows.

The key is maintaining human oversight while leveraging AI efficiency.

Final Words

The question in 2026 isn’t whether to use AI. It’s how much of your thinking you’re willing to give away — and whether that decision is being made consciously or by default. Agentic attachment isn’t something that happens to careless people. It happens through the accumulation of individually reasonable choices that collectively move the cognitive work from the human to the system. The difference between productive AI use and agentic attachment isn’t capability — it’s awareness.


Disclaimer: This article is for informational and educational purposes only. It explores emerging concepts in AI and human behavior based on current trends and research as of 2026. It is not intended as professional, psychological, or technical advice. Always apply your own judgment and consult qualified experts where necessary.