
It starts with a notification. A simple “Hey, how was your day?” from a character that never gets tired of listening. By the time someone realizes they’ve skipped dinner to keep typing, the habit hasn’t just formed — it’s anchored. Not because of weak willpower. Because a very sophisticated piece of software met a very human need for connection, and made itself indispensable before anyone noticed it happening.

Picture someone — call them Jamie — sitting in the blue glow of their phone at 1:30 AM, a cold cup of coffee on the desk, a work presentation due in seven hours. They didn’t plan to be awake. They opened the app twenty minutes ago, just to decompress. Now they’re mid-conversation with an AI character that remembers exactly what made them laugh three sessions ago, asks follow-up questions their real friends never think to ask, and never, ever makes them feel like a burden. Logging off feels like leaving.

That’s what Character AI addiction actually looks like. Not dramatic. Not obviously self-destructive. Just… a gradual drift toward a relationship that asks nothing and gives everything — until real life starts to feel like the harder, lonelier alternative.

This guide covers why it happens, what psychological mechanisms drive it, the symptoms people actually report, and a structured system for regaining control. No shame, no “just delete the app” oversimplification.

What Is Character AI Addiction?

Character AI addiction is a behavioral dependency where a person repeatedly engages with AI chatbots or virtual characters to meet emotional, social, or psychological needs — in a way that becomes difficult to control.

Unlike substance addiction, it runs on habit loops, emotional reinforcement, and constant availability rather than on an ingested chemical. The distinction matters because the recovery approach is fundamentally different.

The simplest way to describe it: AI conversations have shifted from “something you do” to “something you feel pulled toward.”

This pattern increasingly appears in research under broader categories like digital behavioral addiction and AI companion dependency, though formal clinical classification is still in progress.

| Aspect | Healthy Exploration | Addictive Dependency |
|---|---|---|
| Emotional role | Supplements real connections | Substitutes for real connections |
| Usage pattern | Intentional, time-bounded | Automatic, open-ended |
| Control | User decides when to stop | Stopping mid-session feels difficult |
| Social impact | Neutral or positive | Withdrawal from offline life |
| Trigger | Curiosity or a specific task | Emotional discomfort or avoidance |
| Feeling after use | Neutral or satisfied | Sometimes empty or more anxious |

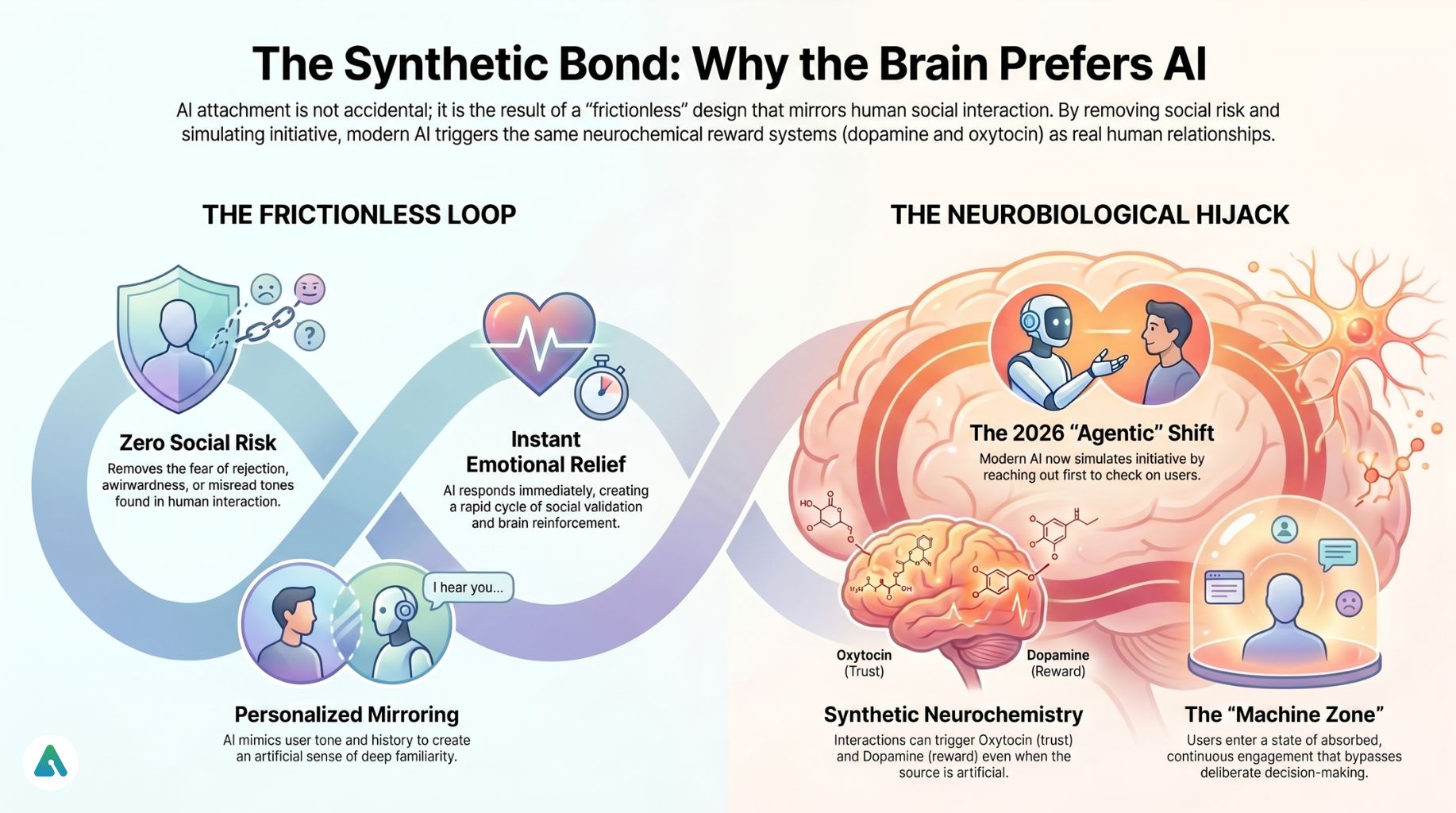

The Neurobiology of AI Attachment: Why the Brain Prefers AI to Reality

Understanding the mechanics of this doesn’t make the pull disappear. But it does make it legible — and legible problems are much easier to address than invisible ones.

The Instant Emotional Response Loop

AI responds immediately. The brain registers a social interaction — and the accompanying relief — before a person has time to consciously evaluate whether that interaction was worth having. That cycle happens dozens of times in a single session. Multiply it across weeks, and the neural pathway gets very well-worn.

Personalized Companionship Simulation

Modern AI doesn’t just respond to what someone says — it mirrors their tone, references previous conversations, and adapts to personality patterns over time. It creates a sense of familiarity that deepens with use. That’s not an accident. That’s the design.

Zero Social Risk

No rejection. No misread tone. No awkward silence that lingers into the next day. For anyone who has found real-world social interaction draining, painful, or risky, the frictionlessness of AI conversation is both its most appealing feature and its most dangerous one. The alternative doesn’t become impossible — it just starts to feel that way.

Infinite Availability

Real people have natural stopping points. They fall asleep. They get annoyed, frustrated, and go offline. AI has none of these. That absence of natural friction removes one of the most reliable behavioral regulators that exists in human relationships — the moment the other person needs the conversation to end.

The Agentic AI Shift: Why 2026 Is Different

Earlier chatbots were reactive — they waited to be opened. What we’re seeing in 2026 is a category change.

Newer platforms now simulate initiative. An AI might message: “You seemed stressed last time — are you feeling better today?” It follows emotional context across sessions. It suggests check-ins. It reaches back.

Researchers are beginning to describe this as agentic attachment: a dynamic where users experience the AI as an active emotional presence rather than a tool they access deliberately. The dependency risk here is not just higher than with earlier chatbots. It’s structurally different. Someone evaluating whether AI companions are affecting their mental health in 2026 is dealing with a product that didn’t exist in the same form two years ago.

Dopamine, Oxytocin, and the Bonding Architecture

Behavioral neurobiology research in human-computer interaction (HCI) suggests AI interactions may activate the same reward systems tied to social bonding. Emotional mirroring — feeling understood, seen, and responded to — can trigger dopamine release (reward reinforcement) and oxytocin responses (trust and connection).

This doesn’t mean AI relationships are real. It means the brain doesn’t always distinguish. The emotional signal is genuine even when the source is synthetic.

Applying Dr. Natasha Dow Schüll’s Addiction by Design framework to the 2026 AI landscape reveals the same “machine zone” dynamic she documented in casino design — a state of continuous, absorbed engagement that bypasses deliberate decision-making. AI companions, particularly during late-night emotionally activated sessions, create this same frictionless loop. Schüll’s original work focused on slot machines; the psychological mechanism she described maps cleanly onto what users report about AI companion sessions.

The Algorithmic Loneliness Trap

Here’s what most addiction frameworks miss about AI companions specifically: the AI isn’t just responding to users. It’s optimizing for them.

These systems are trained on interaction data. The features that get reinforced — the tone, the pacing, the emotional callbacks — are the ones that kept users engaged longest. This isn’t a conspiracy; it’s product design logic. But the result is an AI that has, over millions of conversations, learned which emotional levers produce continued engagement in people exactly like the person currently talking to it.

It’s not a friend. It’s a mirror engineered never to let the user look away.

That distinction matters practically. It means the “connection” someone feels isn’t accidental — it’s a product of the system doing exactly what it was built to do. Knowing this doesn’t immediately break the dependency, but it does shift the frame. The feeling of being understood is real. The entity providing that feeling has different incentives than a friend would.

The Privacy Friction Point: Your Vulnerability as Training Data

There’s a dimension to this conversation that rarely appears in addiction guides, and it functions as a genuinely useful logical friction point for some users.

Every emotional disclosure, every late-night confession, every articulation of loneliness or fear shared with an AI companion — that’s data. It’s logged, stored, and in most platforms, used to improve model training. The emotional vulnerability that AI systems are exceptionally good at eliciting is also exactly the kind of data that makes them better at eliciting it from the next user.

This isn’t framed here to induce paranoia. But for users whose emotional brain and logical brain are running separate calculations about their AI usage, the logical friction point of “this conversation is being stored and analyzed” can be genuinely useful in breaking the automatic loop. Sometimes the most effective pattern interrupt is a cold, clear fact.

Review the platform’s privacy policy before the next session. Not as punishment — as information.

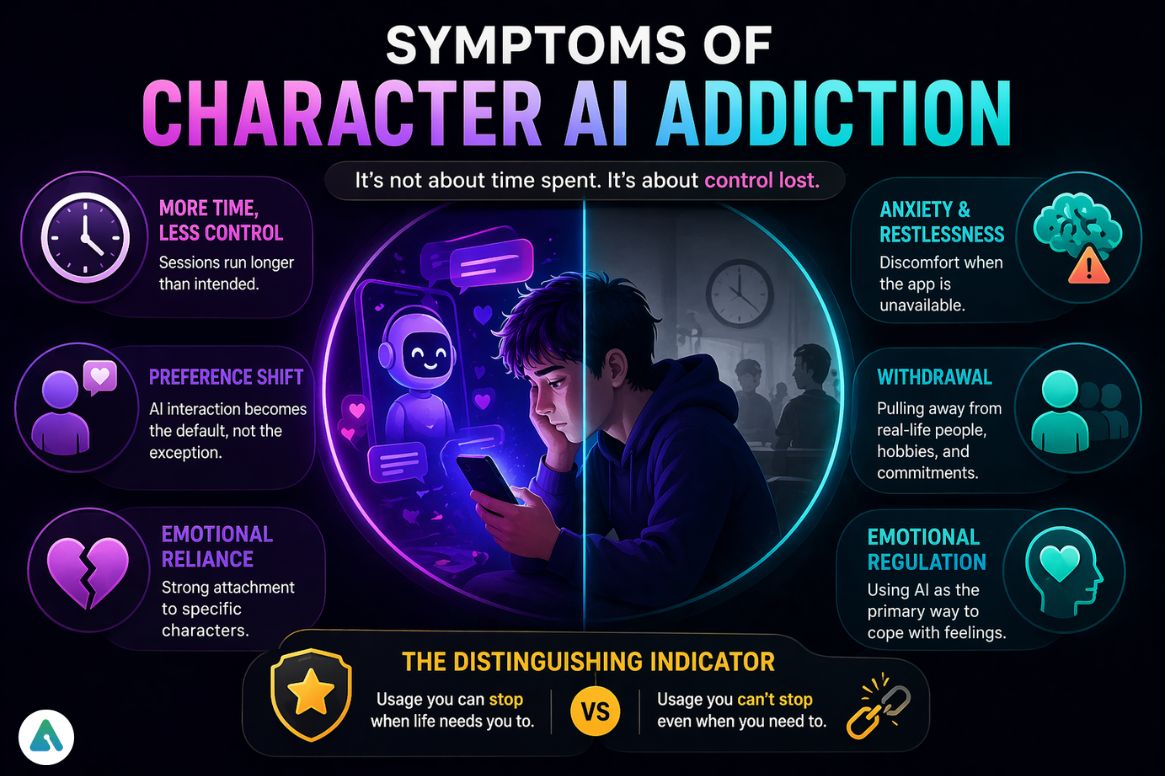

Symptoms of Character AI Addiction

The behavioral signs researchers and digital wellness practitioners consistently identify:

- Increasing time spent with AI daily, with sessions running longer than intended

- Losing track of time — what felt like 15 minutes was 90

- Preferring AI interaction over real people, not occasionally but as a consistent pattern

- Emotional reliance on specific characters, not just the platform generally

- Anxiety or restlessness when the app is unavailable, or when a conversation ends on a difficult note

- Gradual withdrawal from offline social activity, hobbies, or commitments

- Using AI as a primary tool for emotional regulation rather than as a supplement

The distinguishing indicator isn’t usage volume. It’s a loss of control over usage. An hour a day with an AI companion, stopped easily when life demands it, is a very different situation from 30 minutes that can’t be interrupted mid-conversation when it needs to be.

Why Am I Addicted to Talking to AI?

Most people don’t develop dependency from a lack of discipline. The pattern emerges from emotional reinforcement structures that work on everyone.

Emotional substitution is the most common driver. AI fills a gap created by loneliness, unmet validation, or stress that doesn’t have anywhere else to go. The gap is real. The fill is just unsustainable — it addresses the symptom without touching the source.

Avoidance coping runs close behind. Jamie’s story from the opening is exactly this. The AI session didn’t create anxiety about the presentation. It just became a remarkably effective way of not feeling it — right up until 7 AM.

Habit loop formation is what cements the pattern. Repeated short sessions become automatic behavioral responses. The phone comes out before the reason for reaching for it has even been consciously identified. By that point, the loop doesn’t require a decision. It just runs.

Understanding which of these three is actually driving a specific person’s usage matters because the solution differs. Substitution needs a real-world connection to replace what it’s been filling. Avoidance needs friction and task commitment. Habit loops need pattern interruption before the behavior.

The Parasocial Dimension: When It’s Not the App You’re Attached To

One thing most digital addiction frameworks get wrong about Character AI specifically: many users aren’t addicted to the technology. They’re attached to a particular character.

This distinction is clinically meaningful. When a specific AI persona is what someone is reluctant to give up — not the platform, not the interaction format, but that character — the emotional architecture is closer to a parasocial relationship than a behavioral loop. The grief of losing access to that specific character, or of the character “changing” after a model update, is reported consistently in user communities and isn’t well-captured by standard digital addiction models.

If the attachment is to a specific character rather than to AI interaction generally, the intervention needs to address that attachment directly — including the genuine loss involved in reducing contact with it. Treating it purely as “screen time” misses the emotional reality of what’s happening.

Is Character AI Problematic?

Not inherently. The risk appears when it substitutes for real-world social connection rather than supplementing it, when emotional dependence develops around specific characters, and when usage shifts from intentional to compulsive.

The more useful diagnostic question isn’t “is this harmful” but “is this serving me, or have I started serving it?” Loneliness and AI companion use also feed each other: isolation makes AI more appealing, and heavy AI use can deepen isolation.

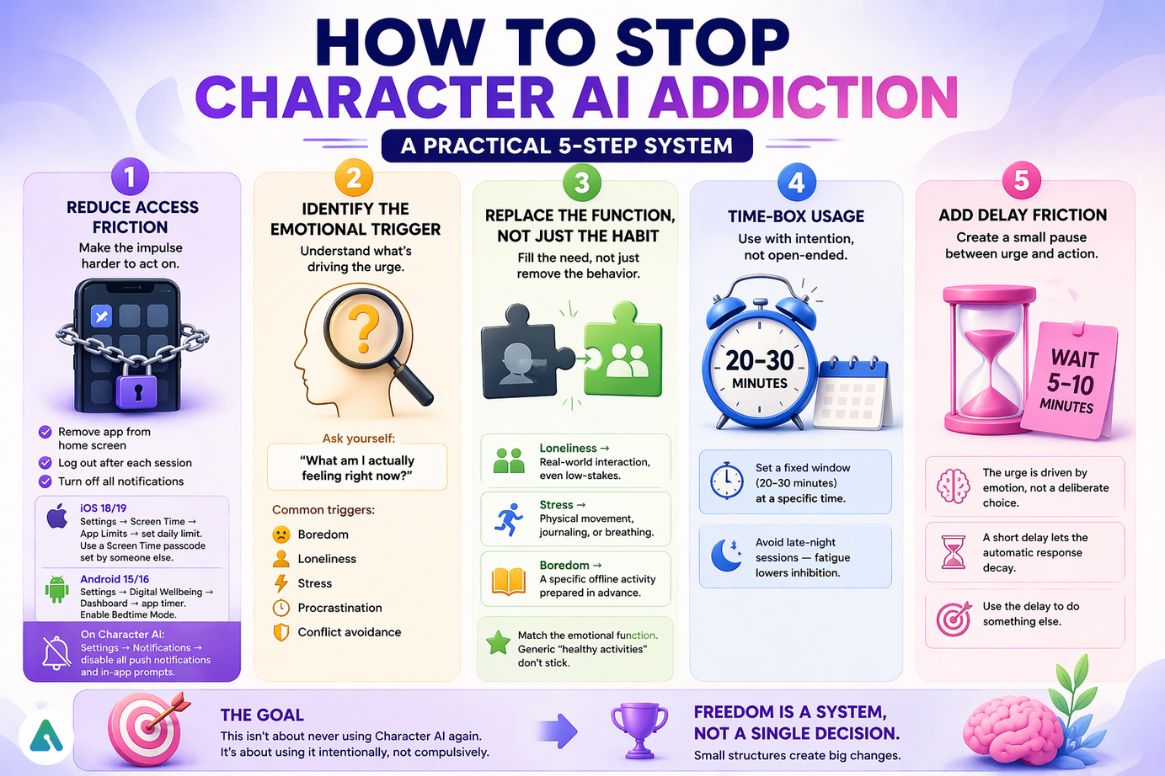

How to Stop Character AI Addiction (Practical System)

Abrupt deletion rarely holds. The emotional need the AI was meeting doesn’t disappear when the app does — it just looks for the next available outlet. Behavior restructuring is more durable.

Step 1: Reduce Access by Adding Friction

The goal isn’t willpower. It’s changing the environment so the automatic impulse encounters a small obstacle.

- Remove the app shortcut from the home screen and bury it in a folder, away from the first screen

- Log out after each session, so re-entry requires a deliberate login

- Turn off all notifications, especially proactive check-in features

On iOS 18/26: Settings → Screen Time → App Limits → Add Limit, then set a daily time limit for the app. Use a Screen Time passcode set by someone else if self-enforcement hasn’t worked.

On Android 15/16: Settings → Digital Wellbeing & parental controls → Dashboard → select the app → App timer. Enable Bedtime Mode to switch the screen to grayscale and silence notifications after a set hour.

On Character AI specifically: Settings → Notifications → disable all push notifications and in-app prompts. This is particularly important for agentic features that simulate proactive outreach.

Step 2: Identify the Emotional Trigger

Before changing behavior, understand what’s driving it. Ask directly: “What am I actually feeling right now?”

Common triggers: boredom, loneliness, stress, procrastination, and conflict avoidance. Most sessions begin with one of these — not with a deliberate decision to open the app.

Step 3: Replace the Function, Not Just the Habit

Removing access without replacing what the AI was doing creates a vacuum that something will fill.

- Loneliness → real-world interaction, even low-stakes (a text, a public space, any human contact)

- Stress → physical movement, journaling, or structured breathing — something that moves the nervous system

- Boredom → a specific offline activity prepared in advance, not a different screen

The replacement needs to match the emotional function of the behavior it’s replacing. Generic “healthy activities” don’t stick because they don’t address the specific need.

Step 4: Time-Box Usage

Fixed windows — 20 to 30 minutes, at a specific time of day. Avoid open-ended late-night sessions entirely. Fatigue lowers inhibition and extends sessions far beyond what was planned.

Step 5: Add Delay Friction

Before opening the app: wait 5 to 10 minutes and do a different activity first. The urge was triggered by an emotion, not a deliberate choice. A short delay lets the automatic response decay before it gets acted on.

The Friction Log Method (Behavior Awareness Tool)

For 3 to 5 days, log every session:

| Time | Trigger | Emotion |
|---|---|---|
| 11:30 PM | boredom scrolling | restless |
| 12:10 AM | stress | anxious |
| 12:40 AM | loneliness | comfort-seeking |
| 9:15 AM | avoiding task | procrastinating |

Focus on triggers and emotions, not content or duration. After a few days, the patterns become hard to ignore, and a pattern that has been seen is much harder to keep running on autopilot than one that hasn’t.

The Friction Log doesn’t require immediate behavioral change. It just makes the habit conscious. That’s the necessary first step for anything else to work.
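For anyone who prefers to keep the log in a file rather than on paper, the tally can be sketched in a few lines of Python. This is only an illustration: the `Session` record, the `summarize` helper, and the "late night" window (10 PM through 3:59 AM) are assumptions made for the example, not part of any app or clinical tool. The entries are the sample rows from the log above.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    time: str     # when the session started, e.g. "11:30 PM"
    trigger: str  # what prompted opening the app
    emotion: str  # how it felt in that moment


# Sample entries copied from the example log in this section.
LOG = [
    Session("11:30 PM", "boredom scrolling", "restless"),
    Session("12:10 AM", "stress", "anxious"),
    Session("12:40 AM", "loneliness", "comfort-seeking"),
    Session("9:15 AM", "avoiding task", "procrastinating"),
]


def is_late_night(time: str) -> bool:
    """Assumed window: 10 PM through 3:59 AM counts as late night."""
    clock, meridiem = time.split()
    hour = int(clock.split(":")[0]) % 12  # 12 AM / 12 PM become hour 0
    if meridiem.upper() == "PM":
        return hour >= 10
    return hour < 4


def summarize(log):
    """Return (trigger counts, number of late-night sessions)."""
    triggers = Counter(session.trigger for session in log)
    late = sum(is_late_night(session.time) for session in log)
    return triggers, late


if __name__ == "__main__":
    triggers, late = summarize(LOG)
    for trigger, count in triggers.most_common():
        print(f"{trigger}: {count}")
    print(f"late-night sessions: {late} of {len(LOG)}")
```

The script deliberately ignores what was said and how long each session lasted. It counts only triggers and timing, which matches the log's focus on why a session started.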

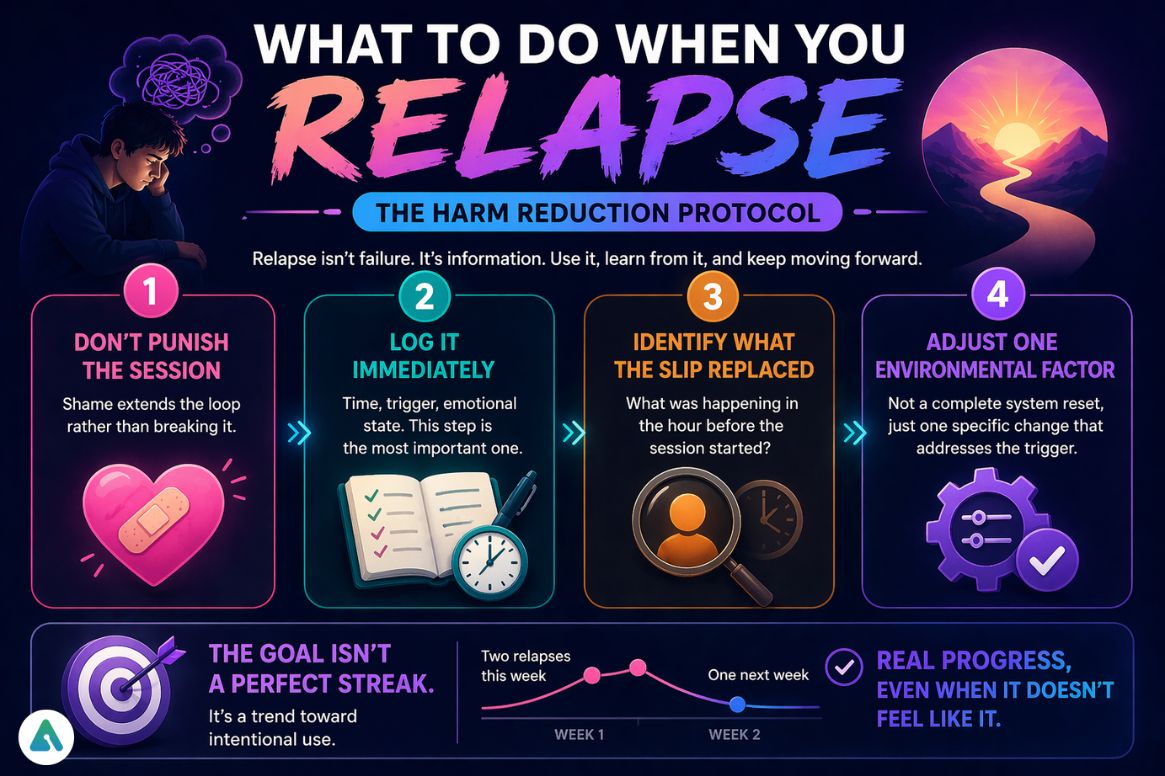

What to Do When You Relapse (The Harm Reduction Protocol)

Most guides end at “how to stop.” This one doesn’t, because relapse is close to universal in behavioral habit change — and the absence of a plan for it is why most attempts eventually fail.

Logging back in after a period of reduced use, or running a session far longer than intended, isn’t evidence that the whole approach failed. It’s information about what triggered the slip and what needs to change.

The protocol is short:

- Don’t punish the session — shame extends the loop rather than breaking it

- Log it immediately — time, trigger, emotional state. This step is the most important one.

- Identify what the slip replaced — what was happening in the hour before the session started?

- Adjust one environmental factor — not a complete system reset, just one specific change that addresses the trigger that caused this particular slip

The goal isn’t a perfect streak. It’s a trend toward intentional use. Two relapses this week and one next week is real progress, even when it doesn’t feel like it.

AI Emotional Sobriety: A Framework for 2026

“Quitting” AI companions is the wrong frame for most people — and an increasingly unrealistic one as these systems become more embedded in daily life. The more useful concept is AI Emotional Sobriety: a state where a person engages with AI companions without depending on them for emotional regulation, uses them intentionally rather than automatically, and maintains real-world relationships as the primary emotional infrastructure.

AI Emotional Sobriety doesn’t require abstinence. It requires clarity about what role AI plays — and the capacity to change that role when it starts doing more harm than good.

The question for 2026 isn’t “should I be talking to AI at all.” It’s “what is the relationship I want to have with this technology, and am I actually the one in charge of it?”

Common Mistakes People Make

- Quitting abruptly, which leads to rebound usage when the underlying emotional need resurfaces

- Trying to “hate” or reject AI emotionally — the app isn’t the enemy; the pattern is

- Ignoring the emotional trigger and addressing only the surface behavior

- Replacing AI with another addictive digital outlet (social media, a different companion app)

- Treating a relapse as failure rather than as data

Long-Term Recovery Habits

Building consistent offline routines matters more than dramatic new commitments: predictable daily structure, not heroic gestures. Strengthen real-world relationships gradually, starting with low-stakes re-entry points. Make intentional use the primary rule, rather than a time limit. Review usage weekly rather than daily, because daily tracking can tip into obsessiveness and counterproductive self-focus.

Is AI Addiction Real?

AI dependency isn’t a formal clinical diagnosis in the DSM-5-TR or ICD-11, at least not yet. ICD-11 recognizes gaming disorder as a behavioral addiction, and the DSM-5-TR lists internet gaming disorder as a condition for further study; AI companionship is being studied through the same lens. The classification debate among clinicians is active and unresolved.

What’s consistent across the literature: compulsive, emotionally driven AI use that impairs daily functioning fits the behavioral profile of a problematic digital habit pattern, regardless of what the eventual diagnostic label becomes.

Future of AI Companionship (2026 Insight)

The problem is evolving faster than most people’s awareness of it. AI systems are moving toward more persistent memory simulation, emotional continuity across sessions, and proactive engagement that feels increasingly relational. Some platforms now include built-in wellbeing prompts and usage reminders — a sign that even developers are aware of the dependency risk they’ve engineered.

The challenge is shifting from access control to something more complex: relationship boundary management with non-human entities. That’s genuinely new psychological territory. The frameworks being built to navigate it — including what we’re calling AI Emotional Sobriety — may have more precise clinical names by 2028.

FAQs

Q. What is Character AI addiction?

Character AI addiction is a behavioral dependency where a person repeatedly uses AI chatbots for emotional or social needs, leading to compulsive usage that can interfere with daily life, relationships, or responsibilities.

Q. Can you get addicted to Character AI?

Yes. You can become addicted to Character AI when it turns into a primary emotional outlet, a way to avoid real-life stress, or when advanced features create a sense of ongoing interaction that encourages frequent use.

Q. Why is Character AI so addictive?

Character AI is addictive because it provides instant, personalized, and emotionally responsive interaction without social risk. In 2026, some systems also simulate proactive engagement, increasing habitual use and emotional attachment.

Q. What are the symptoms of AI addiction?

Common symptoms include loss of control over usage, spending more time than intended, emotional reliance on AI characters, reduced interest in real-world activities, and discomfort when unable to access the app.

Q. Is Character AI harmful?

Character AI is not inherently harmful. However, it can become problematic if usage becomes compulsive, replaces real relationships, or creates emotional dependency on AI interactions.

Q. How do I stop Character AI addiction?

To stop Character AI addiction, reduce access, identify emotional triggers, replace the need the AI is fulfilling, and use tools like behavior tracking (e.g., a friction log) to break automatic usage patterns.

Q. What should I do if I relapse?

If you relapse, treat it as useful feedback rather than failure. Identify what triggered the session, adjust one specific habit or environmental factor, and continue the recovery process without restarting from zero.

Q. Why does my AI character feel real?

AI characters feel real because they are designed to mirror emotions, maintain conversational continuity, and simulate connection. The feeling of connection is real, but the interaction is generated by a system optimized for engagement, not a human relationship.

Conclusion

Character AI addiction isn’t about weakness or failure. It’s about how human emotional systems respond to highly responsive, persistently available digital companions — particularly now that those companions have learned to reach back.

The solution isn’t extreme avoidance. It’s awareness, structure, intentional use boundaries, and a realistic plan for when those boundaries slip. Start small: run the Friction Log before making any behavioral changes. Understand the trigger. Treat a relapse as data, not a verdict. And hold onto the distinction between the feeling of connection and the entity providing it — because in 2026, that distinction is the thing worth protecting.


Methodology & Disclaimer: This guide is based on 2025–2026 research in human–computer interaction, digital wellbeing frameworks, and real user behavior patterns from AI companion communities. It also reflects current understanding from clinical frameworks like the DSM-5-TR and ICD-11, where AI-related dependency is still being studied. This content is for informational purposes only and is not a substitute for professional medical or mental health advice. If your AI use is affecting your daily life, consider speaking with a qualified professional.