Your Twitch chat feels alive… until it doesn’t. Messages slow down, engagement dips, and suddenly you’re carrying the stream alone.

That’s exactly where Character AI changes the game in 2026. With features like Auto-Memory, PipSqueak personality tuning, and hybrid logic setups, AI isn’t just replying anymore — it’s becoming part of the show.

This guide breaks down how to link Character AI to Twitch chat, but more importantly, how to do it properly in 2026 — with low latency, safe integration, and real-world-tested setups.

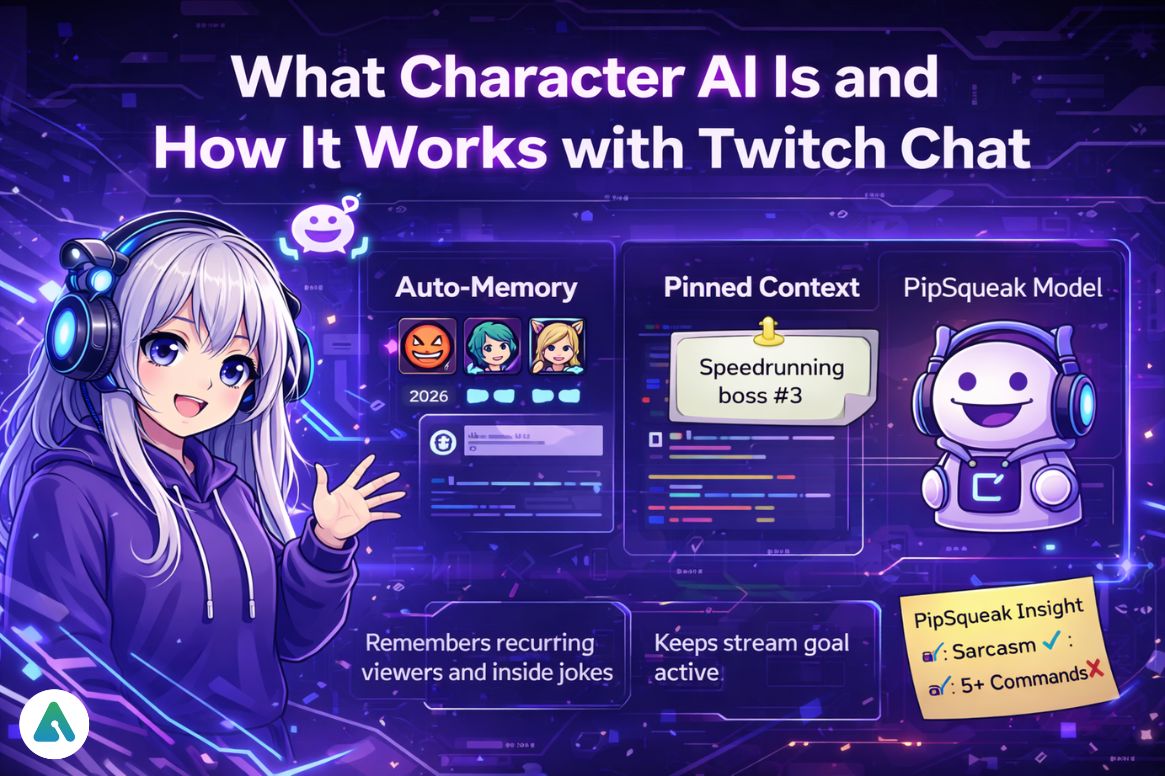

What Character AI Is and How It Works with Twitch Chat

Character AI is a personality-driven AI system that generates real-time responses based on tone, memory, and context. When connected to Twitch chat, it can respond to viewers in near real time, maintain long-term memory of recurring users, and act as a co-host, narrator, or live moderator.

Understanding how Character AI’s PipSqueak model handles personality consistency is worth doing before building any integration — the model behaves differently from earlier versions, and that affects how triggers and commands should be structured.

2026 upgrades that matter for streaming:

- Auto-Memory: Remembers recurring viewers and inside jokes across sessions

- Pinned Context: Keeps your stream goal active throughout the broadcast (e.g., “Speedrunning boss #3”)

- PipSqueak Model: More consistent personality with less “AI drift” than the default model

Dev Log Insight: In testing, PipSqueak handled sarcasm better than the default model — but broke slightly when more than 5 commands were fired at once. Keep your triggers tight.

Why Streamers Are Linking Character AI to Twitch

- Engagement boost: AI fills dead chat moments without manual effort

- Retention: Viewers stay longer when chat feels interactive and responsive

- Scalability: Handles large raids without requiring manual responses for every user

From the Booth: A 400-viewer raid hit mid-stream. The AI tried to greet every user individually and completely froze. Lesson learned: always batch responses or use cooldowns.
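If the reply logic lives in your own middleware script rather than in a bot tool's built-in settings, a cooldown gate is only a few lines. This is a minimal Python sketch under that assumption: the class name, the 30-second per-viewer and 3-second global values, and the injectable clock are all illustrative choices, not Streamer.bot or Character AI features.

```python
import time


class CooldownGate:
    """Per-user and global cooldowns for AI replies (hypothetical helper)."""

    def __init__(self, user_cooldown=30.0, global_cooldown=3.0, clock=time.monotonic):
        self.user_cooldown = user_cooldown      # seconds before the same viewer can trigger again
        self.global_cooldown = global_cooldown  # minimum seconds between any two AI replies
        self.clock = clock                      # injectable so the gate can be tested
        self._last_user = {}
        self._last_any = None

    def allow(self, user):
        """Return True (and record the reply) only if both cooldowns have expired."""
        now = self.clock()
        if self._last_any is not None and now - self._last_any < self.global_cooldown:
            return False
        last = self._last_user.get(user)
        if last is not None and now - last < self.user_cooldown:
            return False
        self._last_any = now
        self._last_user[user] = now
        return True
```

Rejected requests are not recorded, so a viewer who triggers during a cooldown is not penalized with a longer wait.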

For streamers thinking about the broader psychology of AI interaction with audiences, it’s worth understanding that viewers can form genuine attachment to AI personas — which is both an engagement advantage and a responsibility to manage carefully.

Tools You Need for the Integration (2026 Stack)

| Tool | Type | Key 2026 Feature | Best For |
|---|---|---|---|
| Streamer.bot | Desktop | C# + WebSocket support | Advanced control & low latency |
| Speaker.bot | Audio | Neural TTS voice output | AI voice streaming |
| Meld Studio | Overlay | Interactive speech bubbles | Visual chat interaction |
| StreamElements | Cloud | Multi-channel automation | Beginners |
| Ollama (Local) | Self-hosted | Zero API cost | Privacy-focused setups |

For privacy-focused setups, local LLM options are worth exploring seriously — particularly Ollama, which lets you run inference locally with zero API costs and no data leaving your machine. This matters when viewer chat data is involved.

Step-by-Step: How to Link Character AI to Twitch Chat

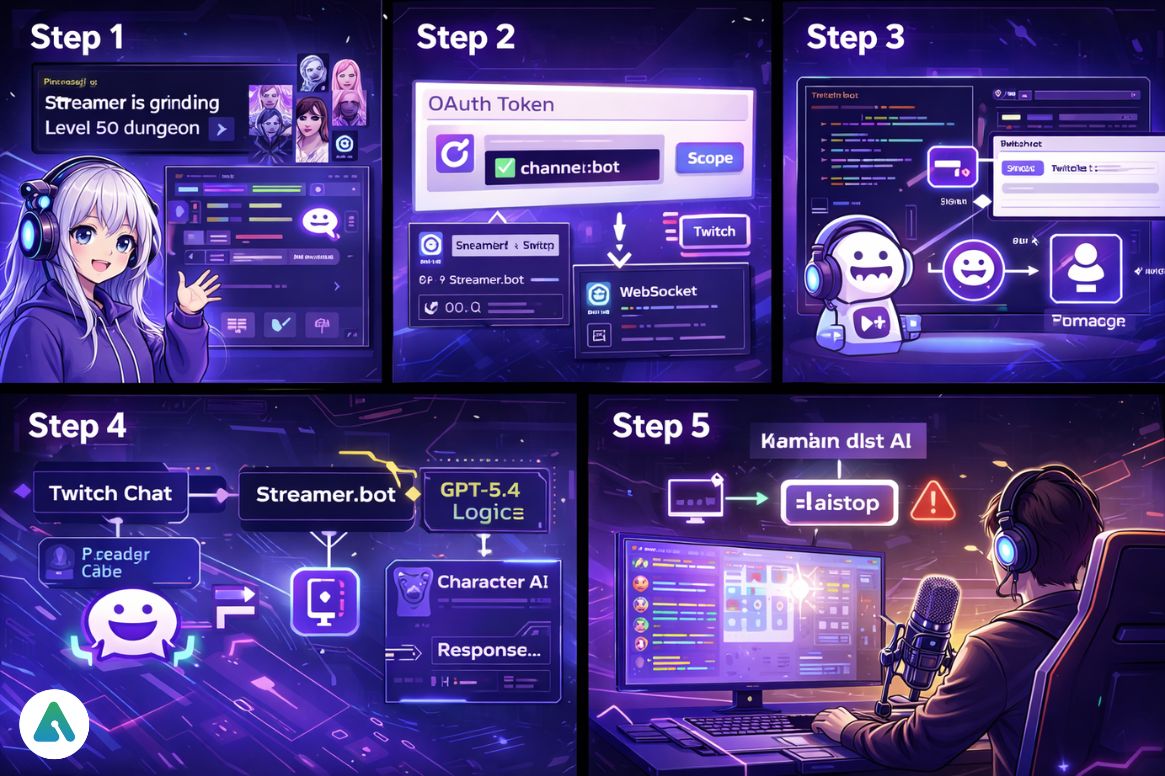

Step 1: Create Your Character AI Setup

Build the AI personality and add Pinned Messages before doing anything else.

Example pinned context: “Streamer is grinding Level 50 dungeon”

This prevents the AI from losing context mid-stream — one of the most common causes of immersion-breaking responses during live broadcasts. The Character AI group chat and multi-character features are also worth exploring if the stream concept involves multiple AI personalities interacting simultaneously.

Step 2: Set Up Twitch Bot with Correct OAuth Scope

Critical 2026 requirement: When generating the OAuth token, the channel:bot scope must be enabled.

This allows the bot to operate as a separate entity, reduces the risk of Twitch flagging automation activity, and improves stability during high chat activity. Skipping this step is the most common reason new integrations get flagged or throttled.
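Scopes are requested through Twitch's standard OAuth authorization URL at id.twitch.tv. Here is a minimal Python sketch that builds that URL for the authorization code flow. The client ID, redirect URI, and state value are placeholders. Any scopes beyond channel:bot (for example user:read:chat or user:write:chat for the bot account) are assumptions that depend on how your bot tool connects, so check them against your tool's instructions.

```python
from urllib.parse import urlencode

TWITCH_AUTHORIZE_URL = "https://id.twitch.tv/oauth2/authorize"


def build_authorize_url(client_id, redirect_uri, scopes, state):
    """Build a Twitch authorization-code URL requesting the given scopes."""
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,   # must match a redirect URL registered for your app
        "scope": " ".join(scopes),      # Twitch expects one space-separated scope string
        "state": state,                 # random value you verify on the redirect (CSRF check)
    }
    # urlencode percent-encodes ':' and '/' and turns spaces into '+'
    return f"{TWITCH_AUTHORIZE_URL}?{urlencode(params)}"


url = build_authorize_url(
    client_id="your-client-id",                 # placeholder
    redirect_uri="http://localhost:3000/callback",  # placeholder
    scopes=["channel:bot"],
    state="random-state-value",                 # placeholder
)
```

Open the resulting URL while logged in as the account that should grant the permission, then exchange the returned code for a token as your tool describes.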

Step 3: Connect via Streamer.bot (Recommended)

Link the Twitch account, connect the bot account, and use WebSocket or event triggers. Advanced users can use C# scripts to parse incoming chat messages, clean them before they reach the AI, and send structured prompts rather than raw chat strings.
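Streamer.bot scripts are written in C#, but the parse, clean, and structure idea is easy to show in a short Python sketch, for example as a middleware step. The bracketed prompt layout, the 200-character cap, and the "5+ repeated characters" spam rule are illustrative assumptions, not a format Character AI requires.

```python
import re
from dataclasses import dataclass

URL_RE = re.compile(r"https?://\S+")
REPEAT_RE = re.compile(r"(.)\1{4,}")  # the same character 5 or more times in a row


@dataclass
class ChatMessage:
    user: str
    text: str


def clean_text(text, max_len=200):
    """Strip links, collapse character spam and whitespace, and cap the length."""
    text = URL_RE.sub("[link]", text)
    text = REPEAT_RE.sub(lambda m: m.group(1) * 3, text)  # "!!!!!!!!" -> "!!!"
    text = " ".join(text.split())
    return text[:max_len]


def build_prompt(msg, stream_goal):
    """Turn one chat line into a structured prompt instead of a raw string."""
    return (
        f"[Stream goal] {stream_goal}\n"
        f"[Viewer] {msg.user}\n"
        f"[Message] {clean_text(msg.text)}\n"
        "[Instruction] Reply in character in one or two sentences."
    )


prompt = build_prompt(ChatMessage("alice", "is this boss hard??????"), "Speedrunning boss #3")
```

Keeping the stream goal in every prompt mirrors the pinned-context idea from Step 1 for setups where the prompt is assembled outside Character AI.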

Step 4: Add Hybrid Logic Layer (Pro Setup)

Instead of routing raw chat directly to the AI, use this flow:

Twitch Chat → Streamer.bot → GPT-5.4 Logic → Character AI → Response

This prevents looping responses, filters spam before the AI sees it, and meaningfully improves response quality. Most latency and content problems in 2026 integrations come from sending unfiltered chat directly to the AI without a logic layer.
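The model-based step in that flow is not shown here. What follows is a minimal Python sketch of the rule-based filtering that can run before any model call: dropping the bot's own messages (the usual cause of reply loops), commands, very short lines, and copy-paste repeats. The class name, the 20-second duplicate window, and the thresholds are hypothetical.

```python
import time
from collections import deque


class ChatFilter:
    """Rule-based pre-filter that decides which chat lines reach the AI."""

    def __init__(self, bot_name, dup_window=20.0, clock=time.monotonic):
        self.bot_name = bot_name.lower()
        self.dup_window = dup_window   # seconds during which identical text is dropped
        self.clock = clock
        self._recent = deque()         # (timestamp, normalized text), oldest first

    def _is_duplicate(self, norm, now):
        # Forget entries older than the window, then look for an exact match
        while self._recent and now - self._recent[0][0] > self.dup_window:
            self._recent.popleft()
        return any(text == norm for _, text in self._recent)

    def should_forward(self, user, text):
        now = self.clock()
        if user.lower() == self.bot_name:
            return False  # never feed the bot its own output (prevents reply loops)
        norm = " ".join(text.lower().split())
        if len(norm) < 3 or norm.startswith("!"):
            return False  # too short, or a command handled by its own trigger
        if self._is_duplicate(norm, now):
            return False  # copy-paste spam
        self._recent.append((now, norm))
        return True
```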

Step 5: Add Voice Output (Optional but Powerful)

Route audio using VB-Audio Cable to OBS and connect Speaker.bot for TTS. This turns the AI into a live co-host with an actual voice presence on stream — a significant engagement upgrade over text-only responses.

Step 6: Test and Add a Kill Switch (Do Not Skip)

Before going live: test in a private stream, simulate spam and raid scenarios, and set a kill switch command.

Example: !aistop

This instantly disconnects the AI, stops all message output, and prevents content violations during unpredictable moments. This is not optional — it’s the difference between a recoverable moment and a policy incident.
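The output side of a kill switch can be sketched in Python like this. Only the broadcaster or mods can flip it. Stopping also clears replies that were already queued, and sending re-checks the switch so a stop issued mid-batch still wins. The !aistart re-enable command and the class itself are hypothetical additions for illustration. Disconnecting the AI backend itself depends on your tool and is not shown.

```python
class KillSwitch:
    """Chat-command kill switch for AI output (hypothetical helper)."""

    def __init__(self, stop_cmd="!aistop", start_cmd="!aistart"):
        self.stop_cmd = stop_cmd
        self.start_cmd = start_cmd
        self.enabled = True
        self.pending = []  # AI replies queued but not yet posted

    def handle_command(self, text, is_mod):
        """Return True if the message was a kill-switch command that was applied."""
        cmd = text.strip().lower()
        if not is_mod or cmd not in (self.stop_cmd, self.start_cmd):
            return False  # ignore non-mods and unrelated messages
        if cmd == self.stop_cmd:
            self.enabled = False
            self.pending.clear()  # drop already-queued replies, not just new ones
        else:
            self.enabled = True
        return True

    def enqueue(self, reply):
        if self.enabled:
            self.pending.append(reply)

    def flush(self, post):
        # Re-check the switch before every send so a stop takes effect immediately
        while self.enabled and self.pending:
            post(self.pending.pop(0))
```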

Unofficial API Warning (2026 Reality Check)

As of 2026, Character AI still does not offer a public free API. Most integrations rely on reverse-engineered endpoints, web automation, or middleware scripts.

The risks are real: account suspension, rate limits, waiting room delays, and potential data exposure. Understanding what’s happened to Character AI’s platform and the direction the company is taking helps set realistic expectations for how long unofficial integration methods will remain viable.

Best practice: Use unofficial methods only in testing environments. Avoid logging private viewer data under any circumstances.

Latency vs Quality (The Real Bottleneck)

The biggest practical issue in 2026 is the 2–3 second delay per AI response. How to reduce it:

- Use predictive buffering

- Queue responses in Streamer.bot rather than sending them immediately

- Limit the number of active triggers firing simultaneously

- Preload common responses for predictable chat patterns
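Three of those tactics (queuing, capping how many AI requests run at once, and serving preloaded replies) combine naturally. A minimal Python asyncio sketch follows, assuming `generate` is whatever async call reaches your AI backend. The canned replies and the limit of 2 in-flight requests are illustrative. Predictive buffering is not covered here.

```python
import asyncio

# Hypothetical canned replies for predictable chat patterns (no AI call needed)
PRELOADED = {
    "gg": "GG! That boss never stood a chance.",
    "first": "Welcome to the front row.",
}


async def answer(text, generate, limit):
    """Serve a preloaded reply if one matches, else call the AI under a concurrency cap."""
    key = text.strip().lower()
    if key in PRELOADED:
        return PRELOADED[key]
    async with limit:  # at most max_in_flight AI requests run at the same time
        return await generate(text)


async def run_queue(messages, generate, max_in_flight=2):
    limit = asyncio.Semaphore(max_in_flight)
    # gather returns results in input order, so replies post in the order chat arrived
    return await asyncio.gather(*(answer(m, generate, limit) for m in messages))
```

Preloaded matches return immediately, so they never wait behind slower AI calls.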

Dev Insight: Perceived delay dropped by 40% just by batching greetings instead of replying to each user individually.
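Batching greetings means collecting names for a short window and sending one message instead of one reply per viewer. A minimal Python sketch, where the 15-second window, the five-name cap, and the message wording are assumptions to tune for your stream:

```python
class GreetingBatcher:
    """Collect new chatters and greet them in one message per window."""

    def __init__(self, window=15.0, max_names=5):
        self.window = window        # seconds to collect names before greeting
        self.max_names = max_names  # names listed before summarizing the rest
        self._names = []
        self._started = None

    def add(self, user, now):
        if user not in self._names:  # greet each viewer once per batch
            self._names.append(user)
        if self._started is None:
            self._started = now

    def flush_if_due(self, now):
        """Return one combined greeting once the window has elapsed, else None."""
        if self._started is None or now - self._started < self.window:
            return None
        names, self._names, self._started = self._names, [], None
        shown = ", ".join(names[: self.max_names])
        extra = len(names) - self.max_names
        if extra > 0:
            return f"Welcome {shown} and {extra} more!"
        return f"Welcome {shown}!"
```

During a raid, this turns hundreds of potential AI calls into a single message per window.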

Troubleshooting the “Waiting Room” Problem

Free Character AI users still face queue delays. When this happens, the AI stops responding, and the chat goes dead. Fallback options:

- Use manual fallback commands like !hype or !lore to keep chat active

- Switch to a local model via Ollama temporarily

- Consider whether Character AI Plus makes sense for streaming use — the subscription removes most queue restrictions
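Switching to Ollama can be automated with a timeout. A minimal Python sketch follows. `ollama_generate` calls Ollama's local HTTP API (`/api/generate` on its default port 11434, with streaming off). `reply_with_fallback` uses it whenever the primary backend errors or takes too long. Because Character AI has no official API, `primary` stands in for whatever unofficial call your setup uses. The model name `llama3.2` and the 5-second timeout are assumptions; use any model you have pulled.

```python
import json
import urllib.request
from concurrent.futures import ThreadPoolExecutor

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def ollama_generate(prompt, model="llama3.2", timeout=30):
    """Call a local Ollama model through its HTTP API (non-streaming)."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


_pool = ThreadPoolExecutor(max_workers=2)


def reply_with_fallback(prompt, primary, fallback, timeout=5.0):
    """Try the primary backend; if it raises or exceeds `timeout`, use the fallback."""
    future = _pool.submit(primary, prompt)
    try:
        return future.result(timeout=timeout)
    except Exception:  # covers the futures TimeoutError as well as backend errors
        return fallback(prompt)  # primary is stuck or failing; keep chat alive


# Usage (hypothetical primary): reply_with_fallback(text, my_cai_call, ollama_generate)
```

A timed-out primary call keeps running in its worker thread, so in a real setup, also put a timeout on the primary request itself.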

Sample Setup: “The Grumpy RPG Narrator”

- Tone: Sarcastic, witty

- Commands: !roll, !boss, !fail

- Memory: Remembers repeat viewers across sessions

- Voice: Enabled via OBS

Result: chat becomes part of the story rather than a side channel.
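One way to wire the narrator's commands is to map each one to a prompt for the persona. This is a hypothetical Python sketch: the templates are invented for illustration, and !roll rolls a d20 locally so the AI narrates a fixed result instead of choosing the number itself.

```python
import random

# Hypothetical prompt templates for the "Grumpy RPG Narrator" commands
COMMANDS = {
    "!boss": "Narrate, sarcastically, the entrance of the next boss.",
    "!fail": "Mock {user}'s latest failure in one witty line.",
}


def handle_narrator_command(user, text, rng=random):
    """Map a chat command to a prompt for the narrator persona, or None if unknown."""
    stripped = text.strip()
    cmd = stripped.lower().split()[0] if stripped else ""
    if cmd == "!roll":
        roll = rng.randint(1, 20)  # rolled locally so the AI can't fudge the number
        return f"{user} rolled a {roll} on a d20. React in character."
    template = COMMANDS.get(cmd)
    return template.format(user=user) if template else None
```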

AI Safety and Ethics (2026 Requirements)

To stay compliant and keep viewer trust:

- Use Character AI’s edit feature to correct outputs in real time

- Avoid storing sensitive viewer information

- Monitor responses actively during live streams — don’t set and forget

- Have the kill switch command ready at all times
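If you keep any logs at all for debugging, one option is to scrub them before they are written. A minimal Python sketch: it replaces usernames with a salted hash and masks email- and phone-like strings. The regexes are rough assumptions that will miss some formats. Salted hashing is pseudonymization rather than true anonymization, so keep the salt secret and store as little as possible.

```python
import hashlib
import re

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")  # rough: 9+ digits with separators


def pseudonymize(user, salt):
    """Replace a username with a stable, salted hash (case-insensitive)."""
    digest = hashlib.sha256((salt + user.lower()).encode()).hexdigest()
    return "viewer-" + digest[:10]


def sanitize_log_line(user, text, salt):
    """Mask email- and phone-like strings and pseudonymize the sender."""
    text = EMAIL_RE.sub("[email]", text)
    text = PHONE_RE.sub("[phone]", text)
    return f"{pseudonymize(user, salt)}: {text}"
```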

The safety considerations around AI chatbot interactions are worth reviewing before any public deployment. What’s acceptable in a private test environment isn’t necessarily appropriate in a live stream context with a mixed-age audience.

Future Trends Worth Watching

- Two-way AI voice conversations with real-time response

- Fully autonomous AI co-streamers managing portions of the show

- Emotion-aware chat responses that adapt tone to chat sentiment

- AR/VR AI avatar integration for immersive stream environments

Frequently Asked Questions

Q. How do I link Character AI to Twitch chat without coding?

You can link Character AI to Twitch chat without coding by using tools like Streamer.bot or StreamElements. These platforms offer visual workflow builders that let you connect Twitch chat, set triggers, and automate AI responses without writing code. Streamer.bot is more advanced, while StreamElements is easier for beginners.

Q. What is the channel:bot scope in Twitch?

The channel:bot scope is a Twitch API permission that allows a bot to join chat as a separate automated account instead of using your main profile. In 2026, this scope is required to prevent Twitch from flagging your bot for automated behavior and ensure stable, real-time chat integration.

Q. Why is my Character AI delayed in Twitch chat?

Character AI responses are delayed mainly due to AI processing time and server latency, which can add a 2–3 second delay. To reduce this:

- Use response queues in Streamer.bot

- Implement predictive buffering

- Limit the number of active chat triggers

These steps improve perceived speed during live streams.

Q. Can Character AI speak on Twitch streams?

Yes, Character AI can speak on Twitch by converting text to voice. You can route audio using VB-Audio Cable, connect it to OBS, and use Speaker.bot for text-to-speech output. This allows your AI to act as a live co-host with voice interaction.

Q. Is the Character AI API official in 2026?

No, Character AI does not provide an official public API for most users in 2026. Most Twitch integrations rely on unofficial methods such as web automation or reverse-engineered endpoints. These methods can lead to account suspension, rate limits, or instability, so they should be used cautiously—preferably in test environments.

Q. How can I reduce AI spam or over-response in Twitch chat?

To prevent AI spam, limit triggers and add cooldown timers in tools like Streamer.bot. You can also batch responses instead of replying to every message. This keeps chat readable and avoids overload during high activity.

Q. What is the best setup for low-latency AI Twitch chat in 2026?

The best low-latency setup includes:

- Streamer.bot for trigger control

- A response queue system

- Optional middleware (e.g., GPT-based logic filtering)

- Lightweight Character AI personalities

This setup balances speed, quality, and stability for real-time interaction.

Q. How do I stop Character AI instantly during a live stream?

Set up a kill switch command (e.g., !aistop) in your bot tool like Streamer.bot. This command immediately disables AI responses, helping you prevent unwanted outputs or technical issues during live streams.

Final Words

Linking Character AI to Twitch chat in 2026 is about control, safety, and performance as much as it is about setup. Proper OAuth configuration, smart trigger management, a hybrid logic layer, and a working kill switch are the foundations. Start small, test privately, and scale once the system behaves predictably under pressure.


Disclaimer: This guide is for informational purposes only and reflects best practices as of 2026. Some integrations may use unofficial methods that can carry risks, including account issues or instability. Always review platform guidelines, test setups privately, and ensure your AI usage is appropriate for your audience and stream.