Every second, your brain is a chaotic construction site. It takes the hum of your laptop, the shifting light of your screen, and the syntax of this sentence, and builds a coherent “reality” from raw sensory data. For decades, we have tried to watch this process through the foggy window of fMRI machines—expensive, slow, and claustrophobically limited.

But Meta AI’s recent release of TRIBE v2 (TRImodal Brain Encoder) marks a “Kitty Hawk moment” for neuroscience. We are moving from simply observing the brain to building a computational “simulation layer” that approximates human neural activity at scale.

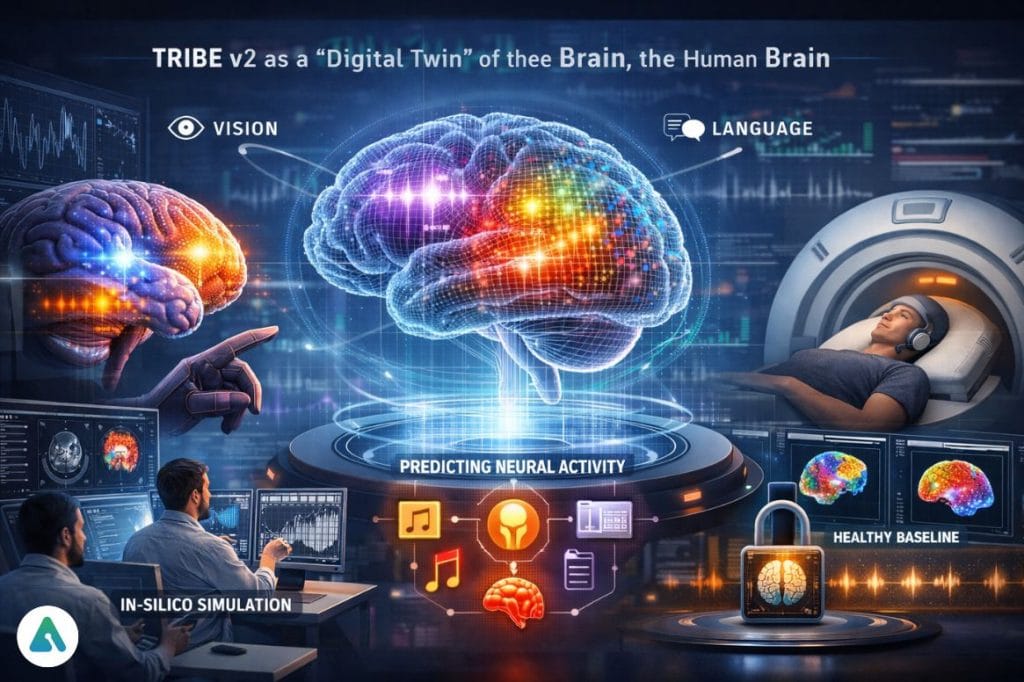

What is TRIBE v2? (The Digital Brain Twin)

At its core, TRIBE v2 is a foundation model for human perception. Instead of putting a volunteer in a million-dollar scanner to see how they react to a podcast, researchers can feed that podcast into TRIBE v2 and get a predicted response.

The model acts as a “digital twin,” predicting the 3D map of neural activation (the fMRI signature) that a typical healthy human brain would be expected to produce. It does this across three modalities simultaneously: Vision, Sound, and Language.

The 70x Resolution Breakthrough

Earlier brain models were “blurry.” They could tell you that the visual cortex was active, but they couldn’t resolve finer-grained patterns within it. TRIBE v2 offers a 70-fold increase in spatial resolution, mapping activity across roughly 70,000 voxels (the small 3D units that make up an fMRI image). That resolution is what makes it possible to distinguish the brain’s reaction to a face from its reaction to a landscape, or the subtle shift between hearing a word and reading it.

Technical Authority: Solving the “Biological Lag”

What many standard news reports miss is the technical hurdle of timing. Neurons fire in milliseconds, but the blood-flow signal that fMRI measures takes about five seconds to peak after the neural activity that triggers it. The shape of this delayed response is described by the hemodynamic response function (HRF).

TRIBE v2 doesn’t just process data; it is built to account for this “biological clock.” Its architecture includes a temporal transformer that aligns fast-changing stimulus features with the slow rise and fall of the blood-flow response. By accounting for this roughly 5-second lag, the model reaches a level of predictive accuracy that earlier models did not.
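To make the lag concrete, here is a minimal sketch of the textbook way to model it: convolving a stimulus time course with a double-gamma HRF. This is not how TRIBE v2 handles timing (the article attributes that to a temporal transformer). The function names and the shape parameters (6 and 16, with a 1/6 undershoot ratio) are assumptions chosen for illustration, picked so that the response peaks near five seconds.

```python
import math

def gamma_pdf(t, shape):
    """Gamma density with unit scale; zero for t <= 0."""
    if t <= 0:
        return 0.0
    return math.exp((shape - 1) * math.log(t) - t - math.lgamma(shape))

def canonical_hrf(t, peak_shape=6.0, undershoot_shape=16.0, undershoot_ratio=1 / 6):
    """Double-gamma HRF: an early positive lobe minus a smaller, later undershoot.
    All parameter values here are illustrative assumptions."""
    return gamma_pdf(t, peak_shape) - undershoot_ratio * gamma_pdf(t, undershoot_shape)

def convolve_with_hrf(stimulus, dt, hrf_length_s=30.0):
    """Causal discrete convolution of a stimulus time course with the HRF."""
    kernel = [canonical_hrf(i * dt) for i in range(int(hrf_length_s / dt))]
    response = []
    for n in range(len(stimulus)):
        acc = 0.0
        for k in range(min(n + 1, len(kernel))):
            acc += stimulus[n - k] * kernel[k]
        response.append(acc * dt)
    return response

dt = 0.1                      # seconds per sample
stimulus = [0.0] * 400        # 40 seconds of silence...
stimulus[0] = 1.0 / dt        # ...with one brief event at t = 0
bold = convolve_with_hrf(stimulus, dt)
peak_time = bold.index(max(bold)) * dt
print(f"Simulated response peaks about {peak_time:.1f} s after the event")
```

The event happens at time zero, but the simulated signal peaks seconds later and then dips below baseline. Any model that predicts fMRI from a stimulus has to absorb this delay somewhere.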

Expert Insight: TRIBE v2 uses a “Modality Dropout” training strategy. By occasionally “blinding” the model to one sense (like audio) during training, Meta forced the AI to learn how the brain integrates information. This makes it robust enough to predict brain activity even when fed incomplete sensory data.
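The idea behind modality dropout can be sketched in a few lines. This is a toy illustration, not Meta's training code: the function name, the dictionary layout, the per-modality drop probability, and the rule that at least one modality always survives are all assumptions.

```python
import random

def apply_modality_dropout(features, drop_prob, rng):
    """Return a copy of `features` with randomly chosen modalities zeroed out.

    `features` maps a modality name to a list of numbers (a stand-in for an
    embedding). Each modality is dropped independently with probability
    `drop_prob`; if every modality would be dropped, one is kept at random
    so the model always has some input. Both rules are assumptions.
    """
    kept = [name for name in features if rng.random() >= drop_prob]
    if not kept:
        kept = [rng.choice(sorted(features))]
    return {
        name: list(values) if name in kept else [0.0] * len(values)
        for name, values in features.items()
    }

rng = random.Random(0)
batch = {"video": [0.2, 0.7], "audio": [0.5, 0.1], "text": [0.9, 0.3]}
masked = apply_modality_dropout(batch, drop_prob=0.3, rng=rng)
print(masked)
```

Because the model cannot count on any single input stream being present during training, it has to learn predictions that still hold when one stream is missing.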

The “In-Silico” Revolution: Science Without Subjects

The most profound impact of TRIBE v2 is the birth of In-Silico Neuroscience. In aerodynamics, engineers test plane designs in digital wind tunnels before building a physical prototype. TRIBE v2 brings this to brain science.

Researchers can now:

Simulate Research in Seconds: Test how the brain might respond to new stimuli without recruiting a single participant.

Screen Hypotheses: Use the model to “filter out” dead-end theories, ensuring that expensive human lab time is reserved for only the most promising breakthroughs.

Establish a “Healthy Baseline”: By comparing a patient’s real scan to TRIBE’s “average” prediction, clinicians could identify where neural processing departs from the expected pattern in conditions like Alzheimer’s, aphasia, or epilepsy.
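The baseline comparison in the last item can be sketched as a per-voxel deviation score. This is a hypothetical illustration with made-up numbers, not a clinical method or part of TRIBE v2: the function names, the idea of dividing by a per-voxel residual spread, and the threshold of 3 are all assumptions.

```python
def deviation_map(measured, predicted, residual_std):
    """Per-voxel z-like score: how far the measured signal sits from the
    predicted baseline, in units of the typical prediction error."""
    return [(m - p) / s for m, p, s in zip(measured, predicted, residual_std)]

def flag_voxels(z_scores, threshold=3.0):
    """Indices of voxels whose deviation exceeds the (assumed) threshold."""
    return [i for i, z in enumerate(z_scores) if abs(z) > threshold]

# Toy data for four voxels; real maps would have tens of thousands.
measured     = [0.9, 1.1, -0.2, 2.5]   # patient's scan
predicted    = [1.0, 1.0,  0.0, 0.5]   # model's "healthy" prediction
residual_std = [0.2, 0.2,  0.2, 0.5]   # typical error per voxel in healthy scans

z = deviation_map(measured, predicted, residual_std)
print(flag_voxels(z))
```

Only the last voxel stands out here. In practice, a flagged region would be a starting point for further investigation rather than a diagnosis.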

Ethics and the Future of Neural Privacy

As we build digital twins of the mind, we must address the “Neural Friction” that comes with it. If an AI can accurately predict how a typical brain will respond to a specific image or sound, the door swings open for Computational Neuromarketing. Imagine media engineered to trigger the strongest predicted neural response before it’s even launched. While TRIBE v2 is an openly released tool for scientific good, it reminds us that we are entering an era where the “black box” of human perception is finally being decoded.

Final Verdict

TRIBE v2 is not a mind-reading machine; it is an infrastructure for understanding. By releasing the model under a CC BY-NC license, which permits non-commercial use with attribution, Meta has put the keys to this digital wind tunnel in the hands of the global scientific community.

For the first time, we aren’t just looking at the brain—we are learning to speak its language.
