By March 2026, the global economy has entered a new phase of structural reconfiguration. Artificial Intelligence “inference”—the act of a trained model generating an output—has evolved from a Silicon Valley novelty into core infrastructure.

ChatGPT is no longer just an application. It is increasingly functioning as a sovereign-scale utility.

Following a historic $110 billion private funding round announced on February 27, 2026, OpenAI has cemented its role as a central architect of this emerging “Inference Economy”—a system now handling over 1 trillion queries annually.

This is not merely a software revolution. It is the emergence of an energy-intensive intelligence layer, one whose estimated electricity demand rivals that of small nations.

The Scale of 2026 Engagement

- Weekly Active Users: 900 million

- Annual Query Volume: 1.17 trillion

- Daily Energy Consumption: ~60.7 GWh (based on industry-modeled estimates of per-query energy use)
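These three figures can be cross-checked against one another. The sketch below uses only the numbers quoted in the list and back-calculates two values: the per-query energy implied by the daily total, and the average number of queries per weekly user. The function and constant names are illustrative, and spreading queries evenly across all weekly active users is a simplifying assumption.

```python
# Figures quoted in the list above (modeled estimates, not measurements).
WEEKLY_ACTIVE_USERS = 900e6
ANNUAL_QUERIES = 1.17e12
DAILY_ENERGY_GWH = 60.7


def implied_wh_per_query(daily_gwh: float, annual_queries: float) -> float:
    """Per-query energy (Wh) implied by a daily total and an annual query count."""
    annual_wh = daily_gwh * 1e9 * 365  # GWh/day -> Wh/year
    return annual_wh / annual_queries


def queries_per_user_per_week(annual_queries: float, weekly_users: float) -> float:
    """Average weekly queries per weekly active user (assumes uniform usage)."""
    return annual_queries / 52 / weekly_users


wh = implied_wh_per_query(DAILY_ENERGY_GWH, ANNUAL_QUERIES)
per_user = queries_per_user_per_week(ANNUAL_QUERIES, WEEKLY_ACTIVE_USERS)
print(f"Implied energy per query: {wh:.1f} Wh")
print(f"Average queries per user per week: {per_user:.0f}")
```

Under these assumptions the daily figure works out to roughly 19 Wh per query and about 25 queries per user per week.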

At 900 million weekly users, roughly one in nine people on Earth, we’ve crossed a tipping point.

We didn’t just build AI. We plugged a new country into the power grid.

The Reasoning Tax: From Speed to Depth

The transition from earlier GPT-4 systems to GPT-5-class reasoning models marks a fundamental shift: from fast responses to deliberate cognition.

Modern systems now rely on structured reasoning processes—often described as “Chain of Thought”—to solve complex scientific, analytical, and coding problems.

This added intelligence comes at a cost: the “Reasoning Tax.”

Energy Intensity Comparison (Estimated)

- Traditional search query: ~0.3 Wh

- GPT-5-level prompt: ~18.9 Wh

- Increase: ~63× higher energy per query
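The comparison above is simple arithmetic, and it can be extended to an annual total. The sketch below reproduces the multiplier and then scales the per-prompt estimate by the 1.17 trillion annual queries quoted earlier. Charging every query at the full GPT-5-level figure is an assumption made here for illustration, so the result is best read as an upper-end estimate.

```python
SEARCH_WH = 0.3           # estimated energy per traditional search query
REASONING_WH = 18.9       # estimated energy per GPT-5-level prompt
ANNUAL_QUERIES = 1.17e12  # annual query volume quoted earlier in the article

# How many times more energy a reasoning prompt uses than a search query.
ratio = REASONING_WH / SEARCH_WH

# Assumption: every query is billed at the reasoning-level energy cost.
annual_twh = ANNUAL_QUERIES * REASONING_WH / 1e12  # Wh -> TWh
daily_gwh = annual_twh * 1e3 / 365                 # TWh/year -> GWh/day

print(f"Energy multiplier: {ratio:.0f}x")
print(f"Annual energy: {annual_twh:.1f} TWh")
print(f"Daily energy: {daily_gwh:.1f} GWh")
```

The annual total comes to about 22 TWh and the daily figure to roughly 60.6 GWh, which matches the article's ~60.7 GWh to within rounding of the inputs.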

Even with improvements in token efficiency, the “thinking phase” of advanced models requires significantly more compute.

Every prompt now carries a power bill.

We have effectively traded energy efficiency for cognitive precision—a shift that redefines the economics of information itself.

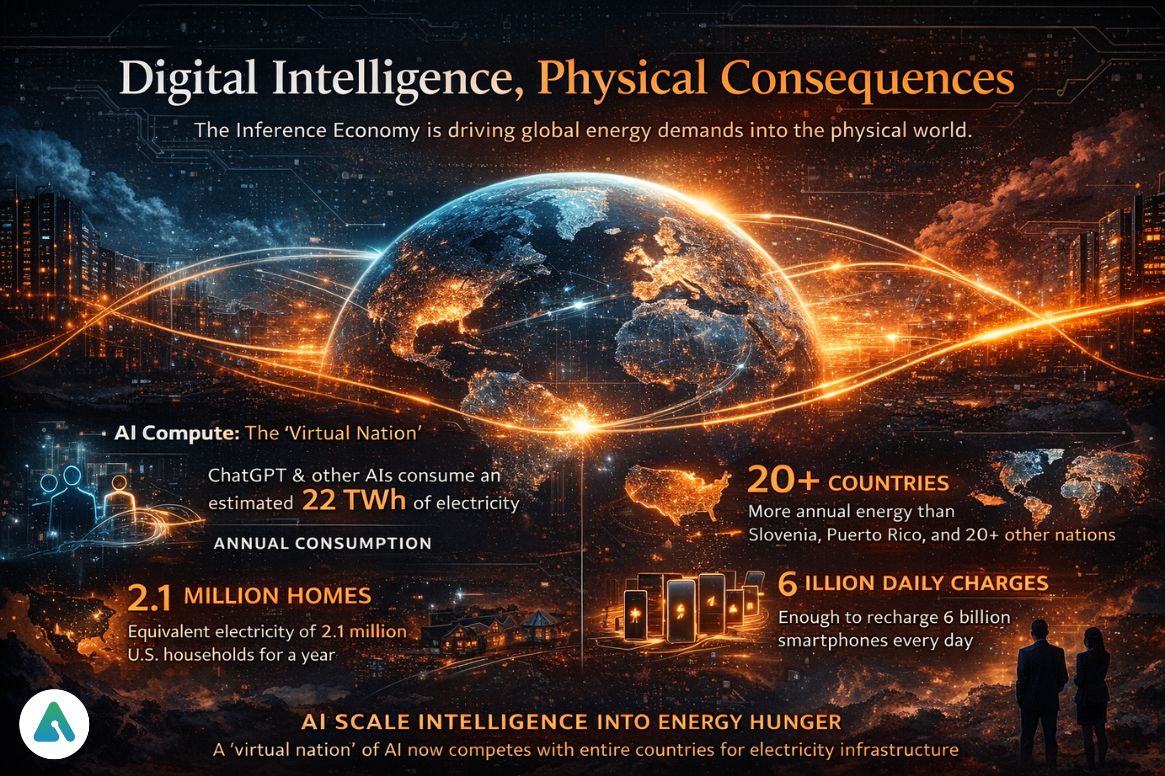

Digital Intelligence, Physical Consequences

Abstract numbers like terawatt-hours obscure the real story. The Inference Economy must be understood in physical terms.

Based on modeled estimates, ChatGPT’s annual energy consumption (~22 TWh) translates into:

- Enough electricity to power the United States for ~44 hours

- More than the annual electricity consumption of Slovenia or Puerto Rico

- Enough to power ~2.1 million U.S. homes for a full year

- Equivalent to charging billions of smartphones daily
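Each equivalence above rests on a reference value that the list does not state. A rough way to audit them is to work backwards from the ~22 TWh figure, as in the sketch below. The function names are illustrative, and the 15 Wh smartphone charge is an assumption introduced here, not a number from the article.

```python
ANNUAL_TWH = 22.0      # modeled annual consumption quoted above
HOURS_PER_YEAR = 8760  # non-leap year

# Assumption, not from the article: energy for one full smartphone charge.
ASSUMED_PHONE_CHARGE_WH = 15.0


def implied_us_annual_twh(hours_powered: float) -> float:
    """U.S. annual consumption implied by 'powers the U.S. for N hours'."""
    return ANNUAL_TWH / hours_powered * HOURS_PER_YEAR


def implied_kwh_per_home(homes: float) -> float:
    """Annual kWh per home implied by 'powers N homes for a year'."""
    return ANNUAL_TWH * 1e9 / homes  # 1 TWh = 1e9 kWh


def phone_charges_per_day(charge_wh: float = ASSUMED_PHONE_CHARGE_WH) -> float:
    """Full smartphone charges per day that the annual total could supply."""
    return ANNUAL_TWH * 1e12 / 365 / charge_wh  # 1 TWh = 1e12 Wh


print(f"Implied U.S. consumption: {implied_us_annual_twh(44):,.0f} TWh/year")
print(f"Implied household use:    {implied_kwh_per_home(2.1e6):,.0f} kWh/year")
print(f"Phone charges per day:    {phone_charges_per_day() / 1e9:.1f} billion")
```

The back-calculated values, about 4,380 TWh per year for the United States and roughly 10,500 kWh per household, can then be compared against published statistics to judge how well each equivalence holds up.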

AI doesn’t just scale intelligence—it scales electricity demand.

For policymakers and grid operators, this represents a paradigm shift:

A “virtual nation” of compute has effectively come online—one that competes with real populations for energy allocation.

The Business of Brute Force

Despite its massive energy footprint, the model is economically resilient.

OpenAI’s subscription ecosystem, spanning consumer to enterprise tiers, has created a powerful form of energy arbitrage: electricity is bought as a commodity input and resold as premium-priced intelligence.

Estimated Annual Subscription Revenue (2026)

- Go: $768M

- Plus: $7.68B

- Pro: $6.0B

- Business: $1.35B

- Enterprise: $2.16B

Total: ~$18.0 billion

Against this:

- Estimated electricity cost: ~$3.0 billion (~17%)

This suggests a critical insight:

The cost of intelligence is high—but still massively profitable.
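The margin claim can be checked by summing the five tier estimates and dividing the electricity estimate by that sum. The sketch below does so and also derives the electricity price implied by the ~22 TWh annual figure. All inputs are the article's own estimates; the variable names are illustrative, and the calculation ignores every cost other than electricity.

```python
# Estimated 2026 subscription revenue by tier, in billions of USD (from the list above).
TIER_REVENUE_B = {
    "Go": 0.768,
    "Plus": 7.68,
    "Pro": 6.0,
    "Business": 1.35,
    "Enterprise": 2.16,
}
ELECTRICITY_COST_B = 3.0  # estimated annual electricity cost, billions of USD
ANNUAL_TWH = 22.0         # modeled annual consumption quoted earlier

total_revenue_b = sum(TIER_REVENUE_B.values())
electricity_share = ELECTRICITY_COST_B / total_revenue_b

# 1 TWh = 1e9 kWh, so (billions of USD) / TWh gives USD per kWh directly.
implied_usd_per_kwh = ELECTRICITY_COST_B / ANNUAL_TWH

print(f"Total subscription revenue: ${total_revenue_b:.2f}B")
print(f"Electricity share of revenue: {electricity_share:.1%}")
print(f"Implied electricity price: ${implied_usd_per_kwh:.3f}/kWh")
```

The implied price of about $0.14 per kWh is a useful sanity check: if the actual rate paid is lower, the electricity share of revenue shrinks accordingly.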

Even more importantly:

- Energy cost is a variable constraint

- Intelligence pricing is a scalable premium

As AI firms invest in renewables and negotiate preferential energy rates, they are quietly evolving into something new:

Hybrid entities—part software company, part energy operator.

The Real Competition: Intelligence vs Efficiency

The competitive landscape is no longer defined solely by model capability.

It is increasingly shaped by a deeper question:

Who can deliver the most intelligence per watt?

- ChatGPT dominates as the default interface—but carries a high reasoning cost

- Google leverages ecosystem integration to offset standalone usage

- Specialized players optimize for retrieval efficiency

- Enterprise systems prioritize workflow embedding over raw intelligence

This creates a growing divide:

- “Finding economy” (search): low-cost, retrieval-based

- “Explaining economy” (AI): high-cost, synthesis-driven

As users shift toward explanation over retrieval, value—and energy consumption—concentrates in inference systems.

The Emerging Constraint: Energy, Not Compute

The next bottleneck in AI is no longer data or algorithms.

It is energy.

As usage scales beyond one billion weekly users, three pressures intensify:

- Grid strain

- Carbon accountability

- Regulatory scrutiny

Governments may soon treat large-scale AI systems as:

- Strategic infrastructure

- Regulated energy consumers

- Or even carbon-liability entities

The future of AI may be decided not in labs—but in power plants.

Final Synthesis: The Intelligence–Energy Trade-Off

The Inference Economy forces a fundamental question:

Is the intelligence gained worth the energy consumed?

So far, the market’s answer is clear: yes.

But this equilibrium depends on a fragile balance:

- affordable energy

- continued efficiency gains

- and sustained willingness to pay for machine intelligence

Because at planetary scale, one truth is becoming unavoidable:

Every act of artificial intelligence is also an act of energy consumption.

And in 2026, that cost is no longer theoretical—it is infrastructural.
