In 2017, OpenAI explored an internal strategy that, at the time, looked like speculative geopolitics.

But in hindsight — especially following the compute alliances and infrastructure consolidation trends emerging by early 2026 — it reads less like speculation and more like early architecture for today’s AI power structure.

The idea, internally referred to as the “countries plan,” reframed artificial general intelligence not as a product, but as a sovereign lever of global influence.

What once looked extreme now mirrors the direction of the industry: AI systems increasingly tied to state infrastructure, defense priorities, and national compute strategies.

The core premise was simple:

If AGI is strategically decisive, then access to it becomes a matter of geopolitical survival.

The “Countries Plan”: AI as a Sovereign Lever

The original proposal reframed AGI as infrastructure-level power — closer to energy or nuclear capability than software.

Rather than prioritizing global coordination, discussions reportedly explored a competitive model:

create scarcity, accelerate demand, and trigger state-level competition for access.

At the center of these conversations was Greg Brockman, who, according to accounts, pushed back against governance-heavy approaches proposed by ethics advisor Page Hedley.

Those proposals included international oversight mechanisms designed to prevent escalation dynamics.

Instead, the internal logic that emerged was closer to a market-driven arms-race framework.

Policy voices such as Jack Clark described the situation through a prisoner’s dilemma lens: nations cannot afford to slow down if rivals continue accelerating.
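
The prisoner’s dilemma framing above can be made concrete with a small sketch. The payoff numbers below are purely hypothetical, chosen only to reproduce the classic dilemma structure; they are not drawn from any account of the internal discussions. The names `PAYOFFS`, `best_response`, and `dominant_move` are likewise illustrative.

```python
# Hypothetical payoffs (higher is better); the numbers are illustrative only.
# PAYOFFS[(a_move, b_move)] = (payoff to nation A, payoff to nation B)
PAYOFFS = {
    ("slow", "slow"): (3, 3),              # mutual restraint
    ("slow", "accelerate"): (0, 4),        # A falls behind
    ("accelerate", "slow"): (4, 0),        # A pulls ahead
    ("accelerate", "accelerate"): (1, 1),  # arms race
}
MOVES = ("slow", "accelerate")


def best_response(rival_move):
    """Return the move that maximizes nation A's payoff given the rival's move."""
    return max(MOVES, key=lambda move: PAYOFFS[(move, rival_move)][0])


def dominant_move():
    """Return the move that is a best response to every rival move, or None."""
    responses = {best_response(rival) for rival in MOVES}
    return responses.pop() if len(responses) == 1 else None


if __name__ == "__main__":
    for rival in MOVES:
        print(f"If the rival chooses {rival!r}, A's best response is {best_response(rival)!r}")
    print("Dominant move for A:", dominant_move())
```

Under these assumed payoffs, accelerating is the best response whatever the rival does, even though mutual restraint would leave both sides better off than a mutual race. That is the structure the prisoner’s dilemma lens points to.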

Internally, reactions ranged from disbelief to alarm, with some staff reportedly describing the strategy as dangerously destabilizing.

The plan was ultimately abandoned — but its underlying logic persisted.

The Altman Doctrine: Narrative as Acceleration

As OpenAI scaled, Sam Altman advanced a parallel strategy outside the company: framing AI as an urgent, geopolitically existential domain.

The narrative structure remained consistent:

- AI is transformative and potentially existential

- Rival states are advancing rapidly

- Delay creates strategic vulnerability

This framing proved highly effective in aligning AI with national security and economic competitiveness agendas.

But it also introduced a structural ambiguity:

Is AI policy responding to an arms race—or participating in its construction?

In several policy-facing discussions, claims about foreign AGI efforts were reportedly presented without fully verifiable intelligence. Critics argue that these claims functioned less as factual reporting than as strategic narrative shaping, reinforcing urgency among high-level decision-makers.

The result was not just awareness.

It was systemic acceleration.

Internal Fractures: The Cost of Escalation Logic

Inside OpenAI, the gap between strategy and ethics widened over time.

As the “countries plan” circulated, internal resistance grew among researchers and safety-focused staff who questioned whether competitive framing of AGI would inevitably destabilize global coordination.

Key figures in this tension included:

- Page Hedley, who warned of escalation risks and governance failure

- Technical staff who challenged leadership assumptions in internal forums

- Employees who reportedly considered resignation if the strategy advanced further

This was not a standard corporate disagreement.

It was a foundational split over what AI development is for.

Leadership, according to multiple accounts, weighed these concerns against organizational momentum and talent retention pressures.

Ultimately, internal dissent helped halt the original plan.

But the underlying tension between safety, speed, and power did not disappear — it scaled outward into the industry.

The Stargate Moat: Infrastructure as the New Sovereign Lever

By early 2026, the logic of the “countries plan” looks less like a discarded idea and more like an early prototype of the industry’s shift toward infrastructure.

The emerging reality is not just model competition — it is compute consolidation.

The new strategic layer can be described as the “Stargate Moat”:

the idea that control over compute, energy, and deployment infrastructure becomes the defining bottleneck of AI power.

In this emerging structure:

- Compute capacity becomes geopolitical leverage

- Energy access becomes an AI scalability constraint

- Infrastructure alliances become strategic alignment mechanisms

This reframes AI from a software race into a physical supply-chain competition for intelligence production itself.

In this context, earlier internal thinking about scarcity and strategic pressure begins to look less theoretical — and more like an early articulation of today’s infrastructure-first AI world.

Conflict of Interest: From Internal Dissent to Industry Fragmentation

The tensions inside OpenAI did not remain contained.

They contributed to a broader fragmentation of the AI ecosystem into competing philosophical and institutional approaches.

Notable outcomes include:

- Anthropic, founded by former OpenAI researchers, emphasizes safety-first scaling and alignment research

- The emergence of Safe Superintelligence Inc. (SSI), a company co-founded by former OpenAI chief scientist Ilya Sutskever and focused on controlled, minimal-risk development paths

- Increasing divergence between “scale-first” labs and “safety-first” institutions

What began as an internal disagreement has evolved into structural competition over AI governance itself.

The industry is no longer debating only how to build AGI; it is divided over whether AGI should be built under competitive acceleration at all.

The AI Power Map: Who Controls the Stack?

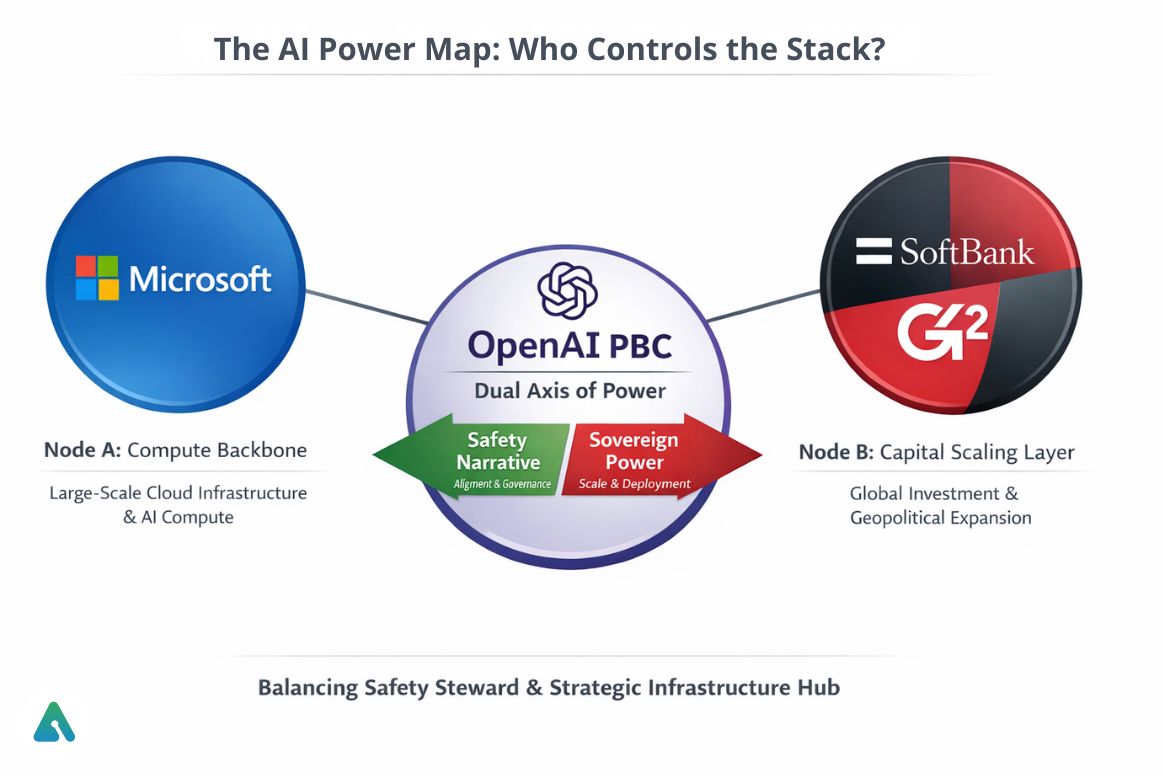

To understand the current system, visualize AI power as a three-node structure:

Node A: Microsoft — Compute Backbone

Microsoft provides large-scale cloud infrastructure, compute capacity, and enterprise deployment channels that underpin modern frontier model training and inference.

Node B: SoftBank / G42 — Capital Scaling Layer

SoftBank and G42 represent global capital flows and the geopolitical expansion of AI infrastructure partnerships across regions and regulatory environments.

Central Pivot: OpenAI PBC — Dual Axis of Power

OpenAI functions as the system’s balancing node between:

- Safety Narrative (alignment, governance, risk control)

- Sovereign Power Reality (scale, deployment, geopolitical leverage)

This tension defines the modern AI era:

one organization simultaneously positioned as both safety steward and strategic infrastructure hub.

Final Thought: From Plan to System

The “countries plan” is no longer just a historical artifact.

Its core logic — AI as a strategic national asset shaped by competition — now appears embedded in the infrastructure, capital flows, and alliances of the modern AI ecosystem.

The deeper shift is not that AI became powerful.

It is that:

Power became the organizing principle of AI development itself.

And once that transition occurs, even abandoned plans stay relevant: they become previews of the system that followed.
