Two letters. One massive misunderstanding.

Search for “groq vs grok” and you’ll see people asking if Elon Musk owns a chip company, whether Groq is a new chatbot, or which one is “better.”

That’s the wrong comparison.

This guide clears it up properly — not just at the surface level, but at the AI infrastructure, cost, and performance layer that actually matters in 2026.

Because this isn’t a tool vs tool battle.

It’s software vs hardware — and the future economics of AI.

The 10-Second Answer

| | Groq | Grok |
|---|---|---|
| Type | AI hardware company | AI chatbot / LLM |
| Founded | 2016 | 2023 |
| Founder | Jonathan Ross | xAI (Elon Musk) |
| What it does | Runs AI models extremely fast | Answers questions & generates content |
| Who uses it | Developers & AI platforms | X (Twitter) users |
| Layer | Infrastructure | Application |

They are not competitors.

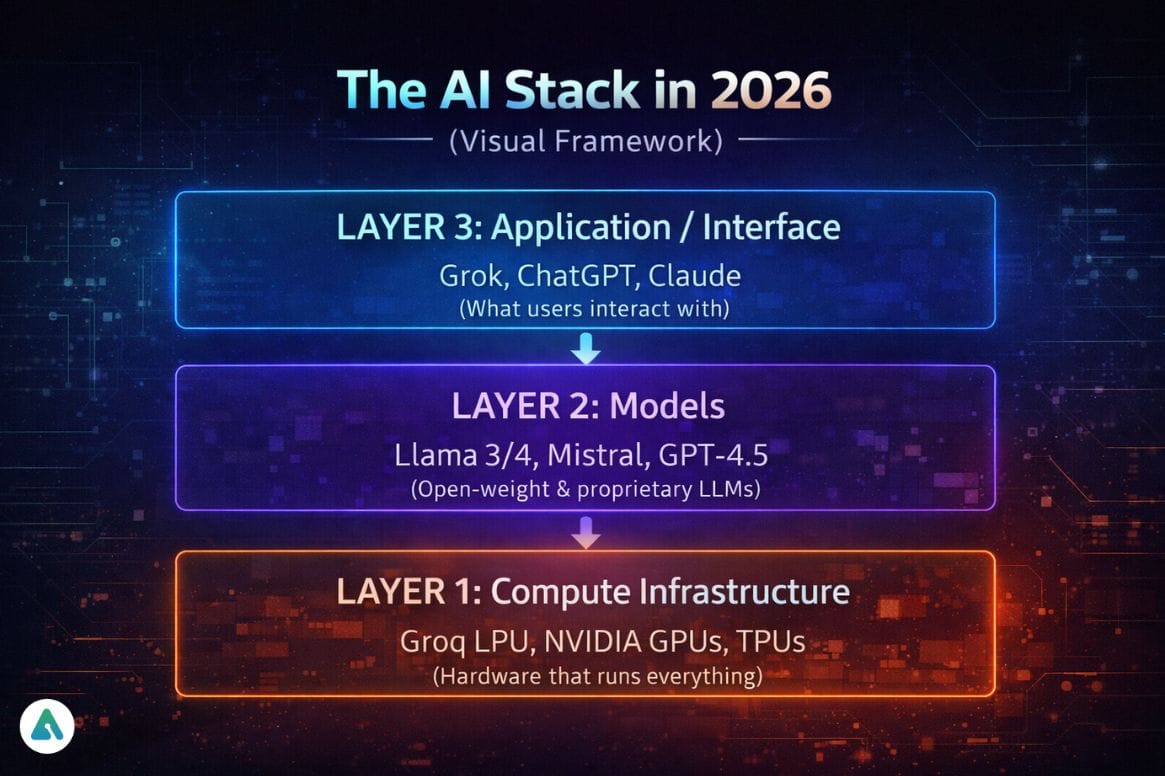

They exist on different layers of the same AI stack.

Why Everyone Is Confused

Near-identical names, both trending in AI at the same time, created a perfect storm.

But the real reason this keyword is exploding is deeper. People are trying to understand:

- Where AI performance actually comes from

- Why inference speed suddenly matters

- Who controls AI costs in the long run

That leads straight to Groq.

What Is Groq?

Groq is an AI hardware company that builds LPUs (Language Processing Units) — chips designed specifically to run large language models at ultra-low latency.

It was founded by Jonathan Ross, who previously led the development of Google’s first TPU.

That lineage matters.

TPUs proved that domain-specific AI hardware beats general-purpose compute at scale.

Groq applies that same idea — but for inference instead of training.

Why Groq Matters in 2026

Every serious AI product team is now tracking:

Inference cost per user

Not the training cost.

Inference is:

- the biggest recurring AI expense

- the main latency bottleneck

- the difference between a usable product and a frustrating one

Groq’s architecture is designed for:

- real-time token streaming

- deterministic performance

- no batching delays

That’s why it shows up first in:

- voice AI

- AI agents

- real-time copilots

- high-scale APIs

What Is Grok?

Grok is a large language model chatbot built by xAI and integrated into X.

It’s a user-facing AI assistant that focuses on:

- real-time internet context

- social platform integration

- conversational responses with personality

It competes with:

- ChatGPT

- Gemini

- Claude

- Perplexity

It does not compete with Groq.

Understanding how Grok compares to ChatGPT requires evaluating user experience and feature sets, while Groq operates at an entirely different infrastructure layer.

The Core Difference: Engine vs Car

Here’s the simplest mental model:

Groq = the engine

Grok = the car

One runs AI.

The other is AI.

And here’s the important nuance most articles miss:

Grok could run on Groq hardware.

That’s because software and infrastructure are separate layers.

Key insight: Groq operates at the infrastructure layer (Layer 1), enabling faster performance for any application-layer product (Layer 3), including Grok if xAI chose to use it.

Why Groq Is Fast (The Non-Marketing Explanation)

The Memory Bottleneck Problem

Traditional GPUs rely on HBM (high-bandwidth memory).

That means:

Model → fetch data → compute → send back → repeat

This movement creates latency.

Groq uses a tightly coupled architecture with SRAM, allowing:

- continuous token flow

- deterministic speed

- no batching requirement

In real products, that translates to:

- instant voice responses

- no “thinking…” delay

- consistent performance per user

As of early 2026, public demos show Groq running open-weight models at hundreds of tokens per second per user stream without the batching behavior typical in GPU deployments.

That changes product design — not just benchmarks.
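Claims about streaming speed are easy to check yourself by timing a token stream. The sketch below is illustrative only: `simulated_stream` is a hypothetical stand-in for a real streaming response (it is not Groq's API), and `measure_stream` works on any iterable of tokens, so the same function could wrap whatever client you actually use.

```python
import time
from typing import Iterable, Iterator, Tuple


def simulated_stream(n_tokens: int, first_token_delay: float,
                     per_token_delay: float) -> Iterator[str]:
    """Hypothetical stand-in for a streaming model response."""
    time.sleep(first_token_delay)          # wait before the first token
    for i in range(n_tokens):
        if i:
            time.sleep(per_token_delay)    # gap between later tokens
        yield f"tok{i}"


def measure_stream(stream: Iterable[str]) -> Tuple[float, float, int]:
    """Return (time_to_first_token_s, tokens_per_second, token_count)."""
    start = time.perf_counter()
    first = None
    count = 0
    for _ in stream:
        if first is None:
            first = time.perf_counter()    # timestamp of the first token
        count += 1
    end = time.perf_counter()
    if first is None:
        raise ValueError("stream produced no tokens")
    total = end - start
    tps = count / total if total > 0 else float("inf")
    return first - start, tps, count


ttft, tps, n = measure_stream(simulated_stream(20, 0.05, 0.001))
print(f"first token after {ttft * 1000:.0f} ms, {tps:.0f} tokens/s over {n} tokens")
```

Time to first token and tokens per second are reported separately because users feel them differently: the first governs the "thinking…" pause, the second governs how long the rest of the answer takes.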

Real-World Performance Benchmarks (2026 Data)

| Model | Hardware | Tokens/Second | Latency (First Token) |
|---|---|---|---|
| Llama 3.1 70B | Groq LPU | ~800 TPS | <50ms |
| Llama 3.1 70B | NVIDIA H100 | ~150 TPS | ~200ms |
| Mixtral 8x7B | Groq LPU | ~750 TPS | <40ms |
| Mixtral 8x7B | NVIDIA H100 | ~180 TPS | ~180ms |

Note: Performance varies with batch size, context length, and optimization. Groq’s advantage is most visible in single-stream, real-time applications.

These performance characteristics directly impact user experience in applications requiring high-speed AI coding assistants or real-time conversational systems.
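To see what the table means for a user, the two columns can be combined into a rough end-to-end response time: first-token latency plus the time to stream the remaining tokens. This is a simplified sketch using the Llama 3.1 70B rows above; the 300-token response length is an assumption, and the Groq first-token figure uses the table's upper bound of 50ms.

```python
def response_time_s(tokens: int, tokens_per_second: float,
                    first_token_ms: float) -> float:
    """Approximate wall-clock time for one streamed response."""
    return first_token_ms / 1000 + tokens / tokens_per_second


RESPONSE_TOKENS = 300  # assumed length of a typical answer

groq = response_time_s(RESPONSE_TOKENS, 800, 50)    # table: ~800 TPS, <50ms
h100 = response_time_s(RESPONSE_TOKENS, 150, 200)   # table: ~150 TPS, ~200ms

print(f"Groq LPU: {groq:.3f} s")
print(f"H100:     {h100:.3f} s")
print(f"Ratio:    {h100 / groq:.1f}x")
```

On these inputs the gap is roughly 0.4 seconds versus 2.2 seconds for a single stream, which is the difference between a reply that feels instant and one that visibly loads.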

The Energy Factor: Performance Per Watt (2026 Sustainability Lens)

AI infrastructure’s environmental impact is now a board-level concern.

| Metric | Groq LPU | NVIDIA H100 |
|---|---|---|
| Power consumption | ~300W per chip | ~700W per chip |
| Performance per watt | High | Medium |
| Cooling requirements | Lower | Higher |

For data center operators scaling AI services, energy efficiency isn’t just about sustainability — it’s about operational cost at scale.

On the figures above, a Groq chip draws less than half the power of an H100 (~300W vs ~700W) while delivering higher single-stream throughput, making it attractive for high-volume deployments.

Understanding AI’s environmental impact requires examining infrastructure choices at the hardware level, not just model selection.
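A rough way to put a number on "performance per watt" is energy per generated token: power divided by tokens per second. The sketch below combines the per-chip power figures in this section with the single-stream Llama 3.1 70B throughput quoted earlier. It rests on a strong assumption, labeled in the code: that one chip serves one stream. Real deployments may spread a model across many chips, and GPUs usually serve many streams at once, so treat the output as an illustration of the formula rather than a measured result.

```python
def joules_per_token(watts_per_chip: float, tokens_per_second: float,
                     chips: int = 1) -> float:
    """Energy per token in joules (watts = joules per second).

    ASSUMPTION: `chips` defaults to 1, which idealizes a single chip
    serving a single stream. Raise it to model multi-chip deployments.
    """
    return watts_per_chip * chips / tokens_per_second


lpu = joules_per_token(300, 800)    # ~300W per chip, ~800 TPS
h100 = joules_per_token(700, 150)   # ~700W per chip, ~150 TPS

print(f"Groq LPU: {lpu:.3f} J/token")
print(f"H100:     {h100:.3f} J/token")
```

The `chips` parameter is there so the comparison can be rerun with the chip count of an actual deployment instead of the one-chip idealization.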

Developer’s Perspective: The “CUDA Tax” Reality

Here’s what most tech blogs won’t tell you:

The “CUDA Tax” is real — most teams I talk to want Groq’s speed but are terrified of the migration debt. That is the hurdle Groq has to clear in 2026.

If Groq Is So Fast — Why Isn’t Everyone Using It?

Because NVIDIA’s real moat isn’t hardware.

It’s CUDA.

CUDA means:

- Every framework is optimized for it

- Every ML engineer knows it

- Every production stack depends on it

Switching hardware = rewriting your platform.

So Groq adoption is happening first where:

- latency = revenue

- real-time UX is mandatory

- teams are infrastructure-native

What Models Actually Work on Groq? (Compatibility Check 2026)

Fully supported open-weight models:

- Llama family: 3.1 (8B, 70B, 405B), Llama 3.2

- Mistral: 7B, Mixtral 8x7B, Mixtral 8x22B

- Gemma: 2B, 7B

- Qwen: 2.5 series

Optimization status:

- ✅ Production-ready for inference

- ⚠️ Fine-tuning requires external systems

- ⚠️ Custom models need porting to Groq’s runtime

For developers building on proprietary platforms, comparing whether platforms use OpenAI or custom infrastructure reveals similar trade-offs between flexibility and performance optimization.

LPU vs GPU for AI Inference

| | GPU | LPU (Groq) |
|---|---|---|
| Best for | Training & flexible workloads | High-speed inference |
| Latency | Variable | Deterministic |
| Batching | Required for efficiency | Not required |
| Real-time apps | Limited | Ideal |
| Cost at scale | Higher per response | Designed to drop |
| Ecosystem | Mature (CUDA) | Growing |
| Energy efficiency | Moderate | High |

This is why the conversation in 2026 is shifting from:

model quality → inference economics

Why This Matters for Your 2026 Budget

Groq’s deterministic speed connects directly to reducing churn in AI apps:

The Latency-Retention Connection

Every 100ms of added latency in conversational AI reduces user retention by an estimated 3-5% (an extrapolation from Google’s research on response-time impact to AI applications).

For a SaaS product with 100k monthly active users:

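- 200ms average latency (typical GPU): 12% churn increase (an illustrative assumption; the 3-5% per 100ms estimate alone implies roughly 4.5-7.5% for a 150ms gap)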

- 50ms average latency (Groq-class infrastructure): baseline churn

Revenue impact:

If your ARPU is $20/month:

12% churn difference × 100k users = 12,000 lost users

12,000 × $20 = $240k monthly revenue at risk

Suddenly, hardware decisions become product decisions.
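The revenue arithmetic above is simple enough to parameterize, which makes it easy to rerun with your own user count, ARPU, and churn estimate. This is a minimal sketch; the function name is made up for illustration, and the inputs are the article's example figures, not measured data.

```python
def monthly_revenue_at_risk(active_users: int, extra_churn_rate: float,
                            arpu: float) -> float:
    """Revenue lost per month if `extra_churn_rate` of users leave."""
    lost_users = active_users * extra_churn_rate
    return lost_users * arpu


# Article's example: 100k MAU, 12% extra churn, $20 ARPU
at_risk = monthly_revenue_at_risk(100_000, 0.12, 20.0)
print(f"${at_risk:,.0f} monthly revenue at risk")

# The result scales linearly with the churn estimate, so a more
# conservative 5% assumption gives a proportionally smaller figure.
conservative = monthly_revenue_at_risk(100_000, 0.05, 20.0)
print(f"${conservative:,.0f} at 5% extra churn")
```

Because the result is linear in the churn rate, the churn assumption is the number worth validating with your own retention data before drawing budget conclusions.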

Cost Comparison at Scale

| Workload | GPU Inference Cost | Groq (Projected) | Savings |
|---|---|---|---|
| 10M tokens/day | ~$150/day | ~$60/day | 60% |
| 100M tokens/day | ~$1,200/day | ~$480/day | 60% |
| 1B tokens/day | ~$11,000/day | ~$4,400/day | 60% |

Estimates based on 2026 pricing trends; actual costs vary by contract terms and optimization level.
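The cost table can be broken down into an implied price per million tokens and an annualized saving, which are often easier to compare against a vendor quote. The sketch below uses only the table's projected figures; the `summarize` helper is hypothetical and exists just for this illustration.

```python
def summarize(tokens_millions_per_day: float, gpu_cost: float,
              groq_cost: float) -> dict:
    """Derive per-million-token prices and savings from daily costs."""
    return {
        "gpu_per_million": gpu_cost / tokens_millions_per_day,
        "groq_per_million": groq_cost / tokens_millions_per_day,
        "savings_pct": round(100 * (gpu_cost - groq_cost) / gpu_cost, 1),
        "annual_savings": (gpu_cost - groq_cost) * 365,
    }


# (millions of tokens per day, GPU $/day, Groq projected $/day)
rows = [(10, 150, 60), (100, 1_200, 480), (1_000, 11_000, 4_400)]

for tokens_m, gpu, groq in rows:
    s = summarize(tokens_m, gpu, groq)
    print(f"{tokens_m:>5}M/day: "
          f"GPU ${s['gpu_per_million']:.2f}/M, "
          f"Groq ${s['groq_per_million']:.2f}/M, "
          f"{s['savings_pct']}% saved, "
          f"${s['annual_savings']:,.0f}/year")
```

Viewed this way, the table assumes the per-token price falls with volume on both sides (from $15 to $11 per million tokens on GPU) while the relative saving stays fixed at 60%.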

Common Myths (Debunked)

| Myth | Reality |
|---|---|
| “Groq is just a wrapper” | Groq designs custom silicon (LPUs) from scratch. It’s hardware, not software. |
| “Grok is hardware” | Grok is a chatbot/LLM running on xAI’s Colossus GPU cluster, not hardware. |
| “Groq is owned by Elon Musk” | Groq was founded by Jonathan Ross in 2016, unrelated to xAI or Elon Musk. |
| “You need Groq to run Grok” | Grok runs on xAI’s own infrastructure. Groq is a potential alternative, not a requirement. |
| “Groq replaces GPUs entirely” | Groq excels at inference, but GPUs remain dominant for training and flexible research workloads. |

Real-World Use Case Comparison

You care about Groq if:

- You’re building an AI product

- You run an LLM API

- You pay inference bills

- You need real-time responses

- You’re scaling voice AI

- You’re competing on latency

You care about Grok if:

- You use AI daily

- You’re comparing chatbots

- You live inside the X ecosystem

- You want real-time internet context

- You prefer personality in AI responses

Different audiences. Different decisions.

The Inference Economics Shift

In 2024–2025:

Pay per token

In 2026:

Pay for reserved AI capacity

Why?

Because predictable latency is more valuable than theoretical peak throughput.

That’s where Groq fits — and why this keyword is really about AI business models, not branding confusion.

The shift mirrors broader trends in how AI platforms monetize infrastructure versus applications, where margins accumulate at different layers of the stack.
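Whether reserved capacity beats pay-per-token on cost alone comes down to a break-even volume. The sketch below shows that calculation. Both prices are hypothetical placeholders chosen to keep the arithmetic readable; they are not quotes from Groq or any other vendor.

```python
def pay_per_token_cost(tokens: int, price_per_million: float) -> float:
    """Monthly bill under usage-based pricing."""
    return tokens / 1_000_000 * price_per_million


def break_even_tokens(reserved_monthly_fee: float,
                      price_per_million: float) -> float:
    """Monthly token volume above which the flat reserved fee is cheaper."""
    return reserved_monthly_fee / price_per_million * 1_000_000


# HYPOTHETICAL prices, for illustration only.
RESERVED_FEE = 10_000.0      # flat monthly fee for reserved capacity
PRICE_PER_MILLION = 5.0      # usage-based price per million tokens

threshold = break_even_tokens(RESERVED_FEE, PRICE_PER_MILLION)
print(f"Break-even at {threshold:,.0f} tokens/month")
```

Below the threshold, reserved capacity costs more per token, so the case for it rests on the predictable latency described above rather than on price.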

The Big 2026 Insight Most People Miss

The chatbot gets the attention.

The infrastructure gets the margin.

Grok is visible.

Groq is structural.

And historically, structural layers capture the long-term value.

This pattern appears across tech history:

- Amazon (AWS) → cloud infrastructure wins

- Google (Search Ads) → distribution layer wins

- NVIDIA (CUDA + chips) → compute layer wins

In AI, the battle for inference infrastructure will determine who controls costs — and therefore profits.

Founder’s Note: What We’re Watching

In conversations with AI founders throughout 2025-2026, three patterns emerged:

- Latency anxiety — Teams shipping AI products live in fear of the “thinking…” spinner killing retention

- Cost shock — Month 6 is when inference bills become existential threats

- Hardware lock-in — Everyone wants options but migration costs feel impossible

Groq represents the first credible alternative to NVIDIA’s inference dominance. Whether it succeeds depends less on technical performance (which is proven) and more on ecosystem momentum.

The question isn’t “Is Groq faster?” — it is.

The question is: “Can Groq build enough tooling and support to make migration worth the risk?”

That’s the 2026 battle.

Future Outlook

Groq

- Major inference competitor to NVIDIA

- Default for real-time AI workloads

- Key player in AI cost wars

- Ecosystem development critical

Grok

- Native intelligence layer for X

- Social-first AI experience

- Competing on UX and data freshness

- Integration depth with X platform

Technical analysis of Grok’s model architecture and Colossus infrastructure provides additional context on how xAI approaches the compute layer differently from Groq’s specialized hardware strategy.

FAQs

Q. Is Groq related to Grok?

No. Groq is an AI hardware company. Grok is a chatbot created by xAI.

Q. Which came first, Groq or Grok?

Groq was founded in 2016. Grok launched in 2023.

Q. Is Groq owned by Elon Musk?

No. Groq was founded by Jonathan Ross, formerly of Google’s TPU team.

Q. Can Grok run on Groq hardware?

Yes. Grok is software and could theoretically run on any compatible inference infrastructure, including Groq.

Q. Is Groq a competitor to NVIDIA?

In AI inference, yes. In training, NVIDIA still dominates.

Q. Why is Groq important for AI speed?

Its architecture removes memory bottlenecks and enables deterministic, real-time token generation without batching delays.

Q. Is Grok better than ChatGPT?

It depends on your use case. Grok focuses on real-time social context and X integration, while ChatGPT excels at general-purpose tasks and reasoning.

Q. What is an LPU and how does it differ from a GPU?

An LPU (Language Processing Unit) is specialized silicon designed specifically for inference workloads, using SRAM for memory and deterministic execution. GPUs are general-purpose accelerators optimized for training and flexible computation.

Q. How much faster is Groq than GPU inference?

In real-time, single-stream applications, Groq can deliver 4-5x faster token generation (800+ TPS vs 150-200 TPS on H100 for models like Llama 3.1 70B).

Q. Does Groq reduce AI costs?

Yes. At scale, Groq’s architecture can reduce inference costs by an estimated 50-60% compared to GPU-based deployments, primarily through better energy efficiency and higher throughput per chip.

Conclusion

The groq vs grok debate isn’t about which AI is better.

It’s about understanding the AI stack:

Grok → the experience

Groq → the system that makes experiences fast and affordable

If you’re a user, Grok matters.

If you’re building an AI product, Groq might determine whether your margins survive.

And in 2026, the real AI race is being won at the infrastructure layer.


Disclaimer: This article is for informational purposes only and reflects independent analysis based on public data and industry trends. Performance figures, cost estimates, and future projections may vary by use case, configuration, and vendor pricing. All product names and trademarks belong to their respective owners. This is not financial or investment advice.