Most AI tools still depend on you. You ask, they answer, you come back and repeat the cycle. The interaction model hasn’t changed much since the first chatbots — it’s just gotten faster and smarter.

Genspark AI is trying to break that model entirely.

In a test on a competitor research task, the experience was noticeably different from most other tools in the current AI landscape. Instructions went in, the laptop closed, and hours later the platform returned structured insights, sourced information, and a usable report, all without a single follow-up prompt. That’s not chatbot behavior. That’s an AI agent executing a workflow autonomously.

In 2026, Genspark isn’t positioning itself against tools like ChatGPT. It’s trying to replace entire workflows. With features like Claw (a persistent cloud computer), a Mixture-of-Agents architecture, and a phone-call agent, it’s become one of the more genuinely interesting platforms in a crowded field. This guide covers what it actually does, what it costs, where it works well, and where the hype gets ahead of the reality.

What Genspark AI Actually Is

Genspark AI is an AI agent platform built around cloud-based task execution. The key distinction from most AI tools is that it doesn’t just respond to queries — it plans, executes, and delivers outcomes, often continuing to work after the user has logged off.

The shift matters more than it might initially sound. Most of what gets called “AI” in 2026 is still fundamentally a response system — a very capable one, but one that waits for input and stops when the conversation ends. Genspark’s architecture is built around persistence. The agent keeps going.

This puts it in the same emerging category as other agentic AI systems that are beginning to move beyond content generation into actual task completion. The difference is that Genspark has built a full cloud infrastructure layer to support that behavior, rather than bolting agent features onto an existing chat interface.

Genspark Claw: The AI Agent That Works While You Sleep

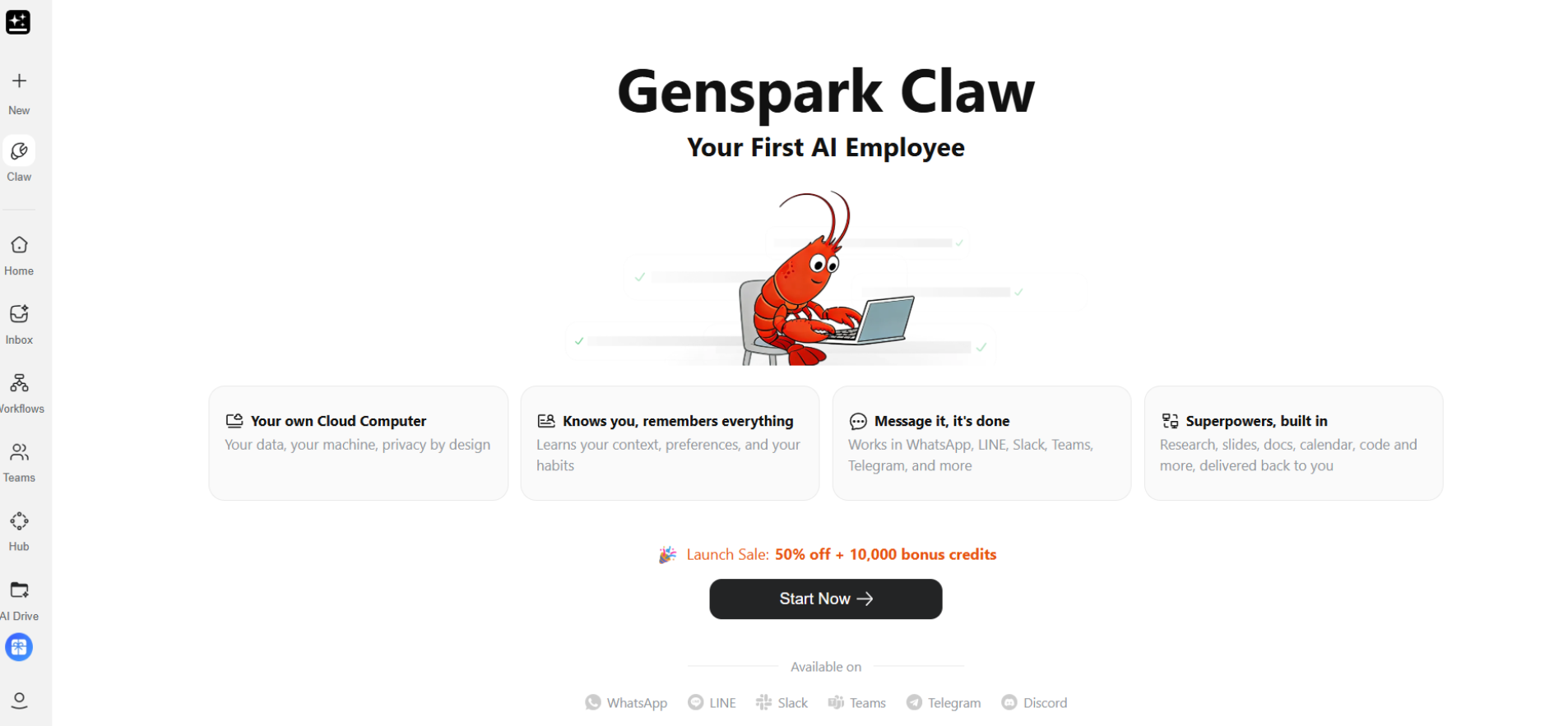

The most important and most misunderstood part of Genspark’s platform is Claw.

Claw is a persistent AI agent that runs on a dedicated virtual machine in the cloud. It can execute tasks continuously without requiring the user to be present — the agent keeps working through long-running workflows, scrapes and organizes information over time, and delivers structured outputs when the task is complete. Connecting it to tools like Slack or Telegram means results can surface in whatever workspace a team is already using.

The practical implication is significant. A research task that would otherwise require a human to sit at a computer running searches, organizing findings, and synthesizing sources can be handed off to Claw with a natural language brief. The human reviews the output rather than generating it. For operations that involve a lot of research, analysis, or structured data collection, that’s a meaningful shift in how time gets spent.

This is also where the “AI that works while you sleep” framing earns its credibility, and where the distinction from standard AI assistants becomes clearest. A ChatGPT session ends when the window closes. Claw doesn’t.

How the Mixture-of-Agents System Works

Under the hood, Genspark doesn’t run a single AI model. It uses what the company calls a Mixture of Agents architecture — multiple models running in parallel, with a reflection layer that evaluates and synthesizes their outputs.

In practice, this means responses are cross-checked rather than generated by a single source. Models from the GPT series, Claude, and Gemini are reportedly involved, each generating an answer that a verification layer then compares against the others. The best version is selected and refined before the user sees anything.

The rationale is straightforward: AI hallucination — the tendency for language models to produce confident but incorrect information — is one of the most persistent reliability problems in the field. Running multiple models and comparing outputs doesn’t eliminate the problem, but it reduces the chance that a single model’s error makes it into the final response unchallenged. It’s a consensus mechanism applied to AI outputs.

Most tools give one answer. Genspark gives a vetted one. Whether that vetting is robust enough to justify the claim is something each user will need to evaluate for their specific use case — but the architectural approach is sound.
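To make the consensus idea concrete, here is a minimal Python sketch of the general pattern: send one prompt to several models in parallel, then pick the answer most of them agree on. It is not Genspark's implementation. The three model functions are hypothetical stubs, and the reflection step is simplified to a majority vote over normalized answers, which is an assumption made purely for illustration.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# Hypothetical stand-ins for real model calls (assumption: prompt in, answer out).
def model_a(prompt: str) -> str:
    return "Paris"

def model_b(prompt: str) -> str:
    return "paris "

def model_c(prompt: str) -> str:
    return "Lyon"  # simulates one model getting the answer wrong

def normalize(answer: str) -> str:
    # Strip whitespace and case so trivially different answers count as equal.
    return answer.strip().lower()

def mixture_of_agents(prompt: str, models: List[Callable[[str], str]]) -> dict:
    # Fan the same prompt out to every model in parallel.
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        answers = list(pool.map(lambda m: m(prompt), models))
    # Simplified "reflection" step: majority vote over normalized answers.
    votes = Counter(normalize(a) for a in answers)
    winner, count = votes.most_common(1)[0]
    return {"answer": winner, "agreement": count / len(answers), "raw": answers}

result = mixture_of_agents(
    "What is the capital of France?", [model_a, model_b, model_c]
)
print(result["answer"], round(result["agreement"], 2))
```

The agreement score is the useful part for a reviewer: a low value signals that the models disagreed and the answer deserves a closer look. A production system would more likely use another model to judge and merge the candidates than a simple vote.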

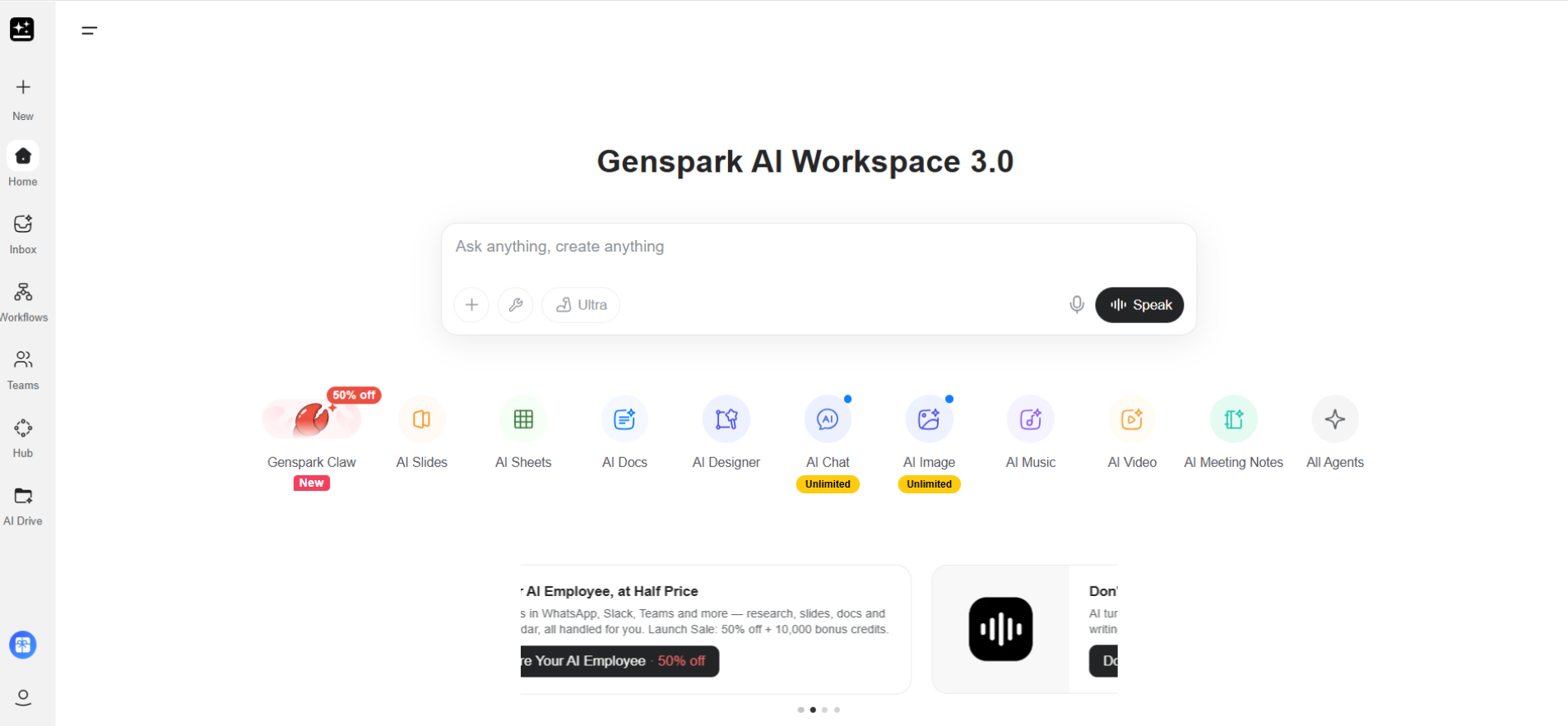

What Genspark Can Actually Do in 2026

The platform has expanded considerably from its earlier incarnation as an AI research tool. Core capabilities now include:

Autonomous research is the clearest strength. Multi-source analysis, structured outputs, and the ability to run tasks over extended periods without supervision make this genuinely useful for competitive intelligence, market research, and anything that would otherwise require hours of manual aggregation.

Content creation — articles, presentations, and reports — works through the same agent infrastructure, meaning longer-form content can be generated and structured without the back-and-forth that most AI writing tools require.

AI video generation runs a script-to-video pipeline that is fully automated. This is credit-intensive (more on that below) but represents a meaningful capability for teams that produce video content regularly.

Call for Me is the feature that most clearly signals where this category is heading. The agent places real phone calls on the user’s behalf — booking reservations, handling customer support interactions, scheduling appointments. This moves Genspark from “software that does digital tasks” into “software that operates in the physical world on your behalf.” The implications for how AI interacts with real-world systems are worth thinking through carefully, particularly around verification and accountability.

Genspark Workspace 2.0 introduced Speakly (a voice-first interface that works like an AI keyboard), AI Inbox 2.0 (which organizes emails and suggests replies automatically), and improved Claw automation with faster execution and better third-party integrations.

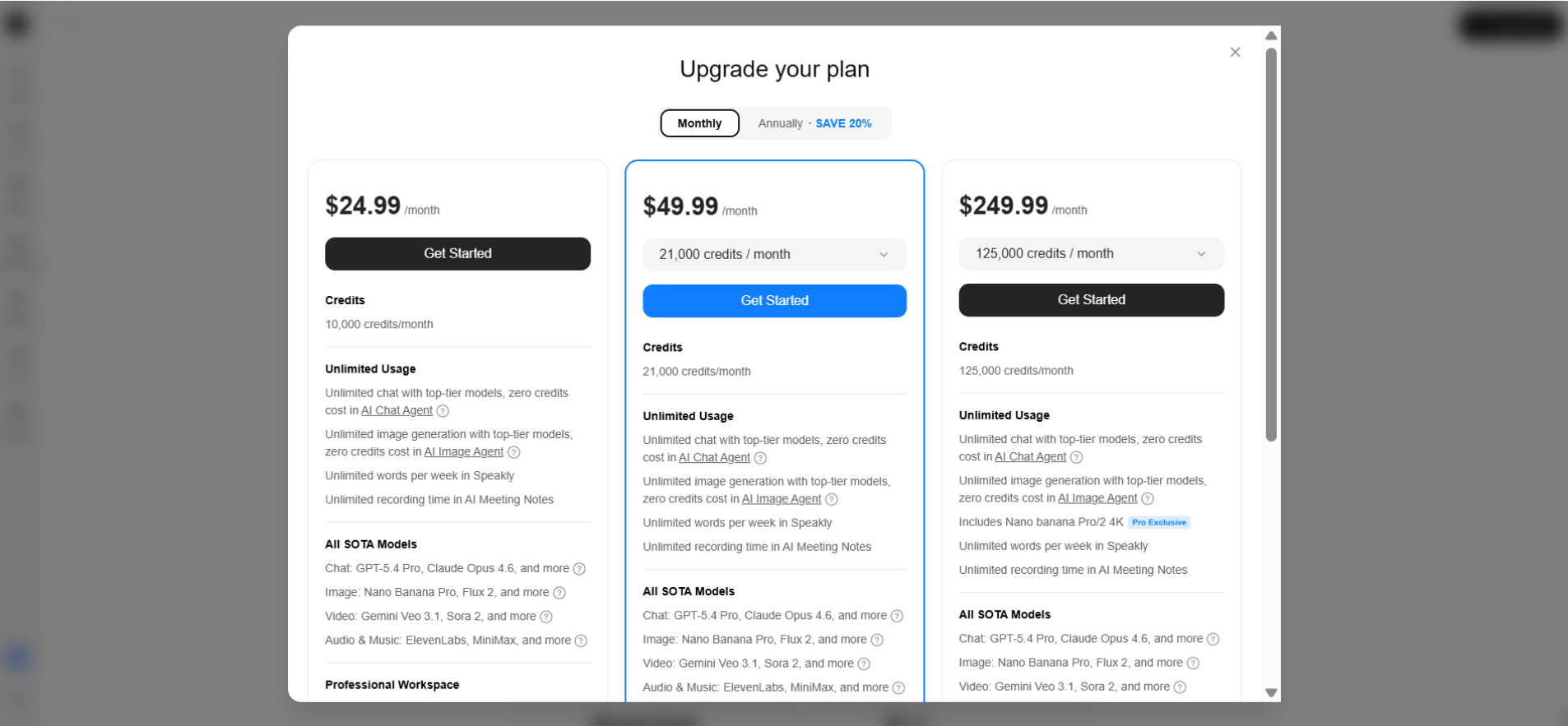

Pricing: What’s Free, What Costs Credits, and How It Actually Works

Genspark doesn’t follow the typical “unlimited usage” model. Instead, it runs on a credit-based system tied to computing power, which changes how you use it.

The free plan gives you limited daily access to core features, including basic agent tasks, chat, and generation. It’s enough to explore the platform, but not enough for consistent, heavy workflows.

Where things shift is with credits.

Every action—whether it’s research, image generation, or video—consumes credits based on how complex the task is. Longer outputs, higher-quality models, and multi-step workflows all increase usage. In simple terms, you’re paying for how much work the system actually does.

Core Usage (Agents & Research)

| Task Type | Free Plan | Plus Plan | Pro Plan |
|---|---|---|---|
| Deep Research | ~1/day | ~50/month | ~625/month |
| Data Search | ~1/day | ~50/month | ~625/month |
| Fact Check | ~1/day | ~40/month | ~500/month |

Image Generation (Examples)

| Model | Free | Plus | Pro |
|---|---|---|---|
| Flux Ultra | ~4/day | ~400/month | ~5000/month |
| Qwen Image | ~12/day | ~1111/month | ~13889/month |
| Recraft V3 | ~6/day | ~588/month | ~7353/month |

Video Generation (5s Clips)

| Tool | Free | Plus | Pro |
|---|---|---|---|
| Vidu | ~2/day | ~120/month | ~1506/month |
| PixVerse V5 | ~1/day | ~100/month | ~1250/month |
| Sora 2 | Not available | ~40/month | ~500/month |

The biggest credit usage comes from:

- Claw (long-running autonomous tasks)

- AI video generation

- Advanced research workflows

There are a few important rules behind the scenes. Credits reset each billing cycle, unused credits don’t carry over, and extra credit packs expire after a limited time. Usage also varies depending on model complexity and system demand.

What this means in practice is simple: you don’t use Genspark casually.

You use it when the output is worth it.

A competitor analysis that saves hours of manual work? Easy decision. A quick question you could ask a chatbot? Probably not.

That’s why Genspark isn’t really competing for everyday users. It’s built for people who think in terms of time saved, not prompts used.

You’re not paying for access—you’re paying for outcomes.
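The budgeting logic in this section can be sketched as a quick check to run before committing to a workflow. The monthly allowance and per-task credit costs below are hypothetical placeholders, not Genspark's published rates. Replace them with the figures shown in your own account.

```python
# All numbers are illustrative assumptions, not Genspark's published rates.
MONTHLY_CREDITS = 10_000
COST_PER_TASK = {
    "deep_research": 200,  # hypothetical credits per research task
    "image": 25,           # hypothetical credits per generated image
    "video_clip": 100,     # hypothetical credits per 5s video clip
}

def plan_usage(planned: dict, monthly_credits: int = MONTHLY_CREDITS) -> dict:
    # Sum the credit cost of every planned task type.
    total = sum(COST_PER_TASK[task] * count for task, count in planned.items())
    return {
        "total": total,
        "remaining": monthly_credits - total,
        "fits": total <= monthly_credits,
    }

plan = plan_usage({"deep_research": 20, "image": 100, "video_clip": 30})
print(plan)
```

Because unused credits don't carry over, a plan that leaves a large remainder is as much a signal to adjust as one that overshoots.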

Genspark vs. ChatGPT vs. Perplexity: What Each Is Actually For

The comparison question comes up constantly, but it’s slightly the wrong framing. These tools aren’t really competing for the same jobs.

| Feature | Genspark AI | ChatGPT | Perplexity |
|---|---|---|---|
| Autonomous task execution | ✅ | ❌ | ❌ |
| Cloud-based persistent agent | ✅ | ❌ | ❌ |
| Multi-model architecture | ✅ | Partial | ❌ |
| Real-world actions (calls, automation) | ✅ | ❌ | ❌ |
| Always-on background tasks | ✅ | ❌ | ❌ |

The cleaner way to think about this: ChatGPT is a thinking tool — the best available for reasoning through problems, drafting, and generating ideas in a back-and-forth conversation. Perplexity is a search tool — optimized for fast, sourced answers to direct questions. Genspark is an execution system — designed for tasks that have a defined outcome and can be handed off rather than collaborated on in real time.

There’s some overlap with how people already use general-purpose AI chatbots for specific workflows, but Genspark’s value proposition is more about replacing labor than augmenting it.

Real-World Use Cases That Hold Up to Scrutiny

Some early enterprise reports suggest significant efficiency gains from using Genspark for research-heavy workflows. In one widely cited example, a marketing team reported up to 80% faster data analysis when using Genspark for competitive research. While the exact figure isn’t independently verified, it aligns with what you’d expect when manual aggregation work is automated.

That context matters. “Faster” often means faster than a process that involves multiple tabs, tools, and manual synthesis. The real value isn’t just speed—it’s the shift from doing the work to reviewing it.

Where Genspark consistently makes sense:

- Marketing and research teams running ongoing competitive analysis, industry monitoring, or content production at scale. The overnight workflow is the clearest example—brief the agent at the end of the day, review structured outputs the next morning.

- Founders and solo operators who need capabilities that would normally require hiring. Running research, content creation, and basic automation through a single system is a practical use case, not a theoretical one.

- Operations teams dealing with repetitive information workflows—data collection, organization, and reporting. These are tasks where humans are often acting as manual processors, which makes them ideal for automation.

There is a trade-off.

The more you rely on automated research, the more important it becomes to verify outputs and maintain critical oversight. Genspark reduces workload, but it doesn’t eliminate the need for judgment.

That balance—automation with review—is where most of the real value shows up.

Credibility and Funding (The “Is This Real?” Check)

Genspark has crossed the threshold where the “interesting startup” framing stops being adequate. As of 2026, the company carries a valuation above $1.25B, with backing from Emergence Capital and Mirae Asset. CEO Eric Jing leads a team that includes engineers from Google and Baidu.

That funding history matters not just as a credibility signal but as an indicator of runway. AI agent platforms are expensive to run — the compute costs of running multi-model architectures and persistent cloud agents are substantial. A well-funded company is more likely to still be operating and improving in 18 months than a bootstrapped alternative with similar features.

The broader AI funding landscape in 2026 has become more discerning after the early-cycle enthusiasm of 2023–2024. The companies attracting serious institutional capital are the ones with differentiated architectures and demonstrated enterprise use, not just impressive demos. Genspark fits that description better than most.

The Bigger Picture: From Tools to Digital Employees

Genspark sits at the leading edge of a category shift that’s worth naming clearly. The trajectory in AI productivity is moving from tools (respond to inputs) to assistants (help complete tasks with guidance) to agents (execute outcomes autonomously). Most of the AI landscape is still in the first two categories. Genspark is genuinely operating in the third.

The “digital employee” framing — software that handles defined workloads rather than just answering questions — changes how organizations should think about AI adoption. The question stops being “which AI tool should we subscribe to” and becomes “which workflows can we hand off entirely, and to what.” That’s a different kind of evaluation, and it requires thinking about accountability, output quality review, and appropriate human oversight in ways that prompt-based tools don’t demand.

The risks that come with delegating real-world actions to AI systems — including phone calls made on users’ behalf, automated outreach, and unsupervised research — deserve serious attention alongside the efficiency gains. The Call for Me feature is impressive. It’s also the kind of feature that creates new failure modes if used without thought.

Common Mistakes Users Make

Using it like ChatGPT. The prompting instinct — ask a question, get an answer, ask another — doesn’t translate well to an agent platform. Genspark rewards task framing: define an outcome, specify constraints, let the system work. Users who treat it as a faster chatbot miss most of the value.

Ignoring credits until they’re gone. The free tier makes it easy to get started without thinking about credit consumption, so new users often hit a limit mid-task. Mapping out credit costs for planned workflows before starting saves frustration.

Over-trusting automation. Every Claw output, every multi-model research report, and every generated article needs human review before use. The system is more reliable than single-model tools, but “more reliable” isn’t “infallible.” Treating automated outputs as drafts rather than finished products is the right default.

Frequently Asked Questions

Q. What is Genspark AI?

An AI agent platform that executes multi-step tasks autonomously using cloud-based infrastructure and a multi-model architecture. It’s designed for task completion rather than conversation.

Q. Is Genspark AI actually free?

Partly. The free plan gives limited daily access to core features such as AI chat and image generation. Heavier use and advanced capabilities, including Claw tasks, video generation, and web automation, run on a credit system.

Q. What is Genspark Claw?

A persistent cloud-based AI agent that executes tasks continuously, including after the user has logged off. It runs on a dedicated virtual machine and can integrate with tools like Slack and Telegram.

Q. How is Genspark different from ChatGPT?

ChatGPT is optimized for conversation and reasoning — it’s a thinking tool. Genspark is optimized for autonomous execution — it’s a task completion system. They serve different jobs and the best choice depends on what the work actually requires.

Q. Does Genspark AI make real phone calls?

Yes. The Call for Me feature places actual phone calls on the user’s behalf for tasks like booking, customer support interactions, and scheduling. This represents one of the clearest examples of AI moving from digital assistance into real-world action.

Q. What is the Mixture of Agents system?

Multiple AI models run simultaneously on the same task, and a reflection layer evaluates and synthesizes their outputs before returning a result. The goal is to reduce hallucination and improve reliability through consensus rather than relying on a single model’s output.

Q. Who is Genspark actually best suited for?

Professionals and teams with high-volume research, content, or data workflows where the cost of human time is concrete. Solo operators, marketing teams, and operations-heavy businesses tend to find the clearest ROI. Casual users who primarily need a conversational AI will likely find the credit model frustrating relative to simpler alternatives.

Final Words

Genspark AI isn’t the finished version of what AI agents will eventually become. The credit system creates friction, output quality still requires human review, and “always-on execution” is more useful in some workflows than others. But it’s ahead of most platforms in one dimension that actually matters: it does the work, rather than just explaining how the work could be done.

For anyone running research-heavy, content-heavy, or workflow-heavy operations, that distinction is worth taking seriously in 2026.


Disclaimer: This article is based on information verified from official sources. Features, pricing, and capabilities may change over time. Always confirm the latest details directly with the official platform before making decisions.