Most AI creative tools in 2026 are polished — and restricted. That’s the trade-off.

Tools like Midjourney and Adobe Firefly produce clean results, but they filter aggressively. Prompts get blocked. Styles get sanitized. Outputs feel safe in a way that frustrates anyone doing experimental or edge-case creative work.

That’s exactly why PixelDojo is gaining traction. It sits on the opposite end: faster, less restricted, and built for raw creative control. But that freedom comes with trade-offs most guides don’t mention — credit burn, inconsistent outputs, temporal instability in video generation, and a learning curve hidden behind a deceptively simple UI.

This guide covers what PixelDojo actually does in 2026, which models it uses and why that matters, where it fails with specific examples, and how to get usable results without burning through credits on bad prompts.

What PixelDojo AI Actually Is

PixelDojo AI is a multi-model AI generation platform that allows users to create images, animations, and short videos using both filtered and unfiltered prompts across engines, including Flux 1.1 Pro, Stable Diffusion 3.5, and integrated video models.

The critical technical distinction most reviews miss: PixelDojo is not building these models. It functions as an API aggregator — a unified interface that routes prompts to open-weight models like Flux Dev and Schnell, bypassing the safety layers baked into corporate platforms like Adobe Firefly or DALL-E 3. The “unfiltered” experience comes from the models themselves, not from PixelDojo removing restrictions. Understanding this is important for setting realistic expectations about what creative freedom actually means here.

What makes it practically different from competitors:

- Supports unfiltered prompt generation across multiple model backends

- Bridges multiple AI models in one interface

- Includes image → video workflows

- Offers LoRA-based character consistency training

- Allows users to integrate their own API keys (see the credit strategy section below)

This isn’t just an AI art tool. It’s closer to a modular AI creation stack for creators who want granularity over automation — the opposite philosophy from Sora, which prioritizes cinematic output quality over user control.

What We Tested (Real Use, Not Theory)

Test 1: Image Generation (Flux 1.1 Pro Ultra)

Prompt: “Futuristic glass building, reflections, rainy night, cinematic lighting.”

Result: Strong lighting overall, but glass reflections broke repeatedly across generations. The fix required two adjustments: a prompt weight of reflection::1.2 and the negative prompt "distorted, warped surfaces". After those changes, consistency improved meaningfully.

Test 2: Image-to-Video Conversion

Input: Architectural render

Credits used: ~18 credits for 3 usable clips

The most frustrating part wasn’t quality — it was temporal consistency. The “morphing” effect hits hard in architectural content: windows turned into doors by frame 48 in multiple test runs. The fix that worked: lowering inference steps to 25 produced a more stable output, if slightly less detailed. Motion strength above 0.3 broke realism consistently. Anything above 0.4 is essentially unusable for photorealistic content.

This is not Sora-level video synthesis. It’s motion layering applied to static images — simulated motion rather than true scene understanding. Knowing that distinction upfront prevents disappointment.
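The thresholds reported in this test can be captured as a small pre-flight check before spending video credits. This is a minimal sketch, not a PixelDojo feature: the function name and the labels are hypothetical, and the two limits come only from the test runs described above.

```python
# Limits observed in the image-to-video test above (photorealistic content only).
REALISM_LIMIT = 0.3   # above this, realism broke consistently
UNUSABLE_LIMIT = 0.4  # above this, output was essentially unusable


def rate_motion_strength(value, photorealistic=True):
    """Classify a motion strength value against the tested thresholds."""
    if value < 0:
        raise ValueError("motion strength must be non-negative")
    if not photorealistic:
        # The thresholds were only tested on photorealistic content.
        return "style-dependent"
    if value <= REALISM_LIMIT:
        return "stable"
    if value <= UNUSABLE_LIMIT:
        return "degraded"
    return "unusable"


for strength in (0.25, 0.35, 0.5):
    print(strength, rate_motion_strength(strength))
```

Stylized content is deliberately left unrated here, since the test only covered an architectural render.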

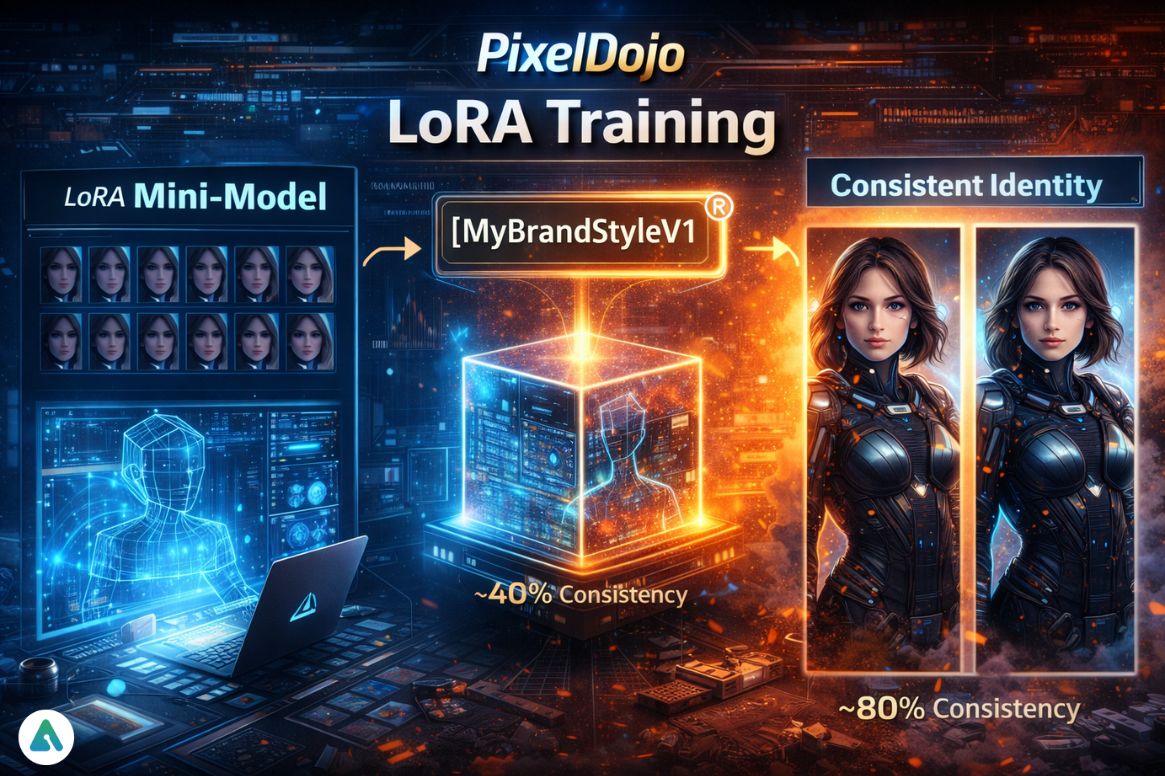

Test 3: Character Consistency (LoRA Training)

Uploaded: 12 face images

Before training: ~40% facial consistency across generations

After training: ~80% facial consistency across generations

This is where PixelDojo becomes genuinely powerful for production workflows. Without LoRA, faces shift between generations in ways that make consistent character-driven content impossible. With it, you get something that approaches repeatability.

Technical Stack: How PixelDojo Actually Works

PixelDojo routes prompts through open-weight model infrastructure rather than building proprietary models. The practical effect: generation quality and behavior depend on which underlying model handles the request.

Available models in 2026:

- Flux 1.1 Pro / Ultra — highest output quality, most expensive per credit

- Flux Dev — development-tier model, good balance for iterative testing

- Flux Schnell — lightweight fast-generation mode, free or minimal credit cost, inconsistent quality

- Stable Diffusion 3.5 — strong for anime, illustrated styles, and non-photorealistic outputs

- Video inference models — motion layering applied to static images

Flux 1.1 Pro / Ultra is the best quality option. Schnell is cheap but inconsistent, so it’s useful for prompt testing before committing premium credits, not for final outputs. Because PixelDojo aggregates rather than builds these models, model availability can shift: it depends on the underlying API contracts, not on PixelDojo’s own development roadmap.

PixelDojo Pricing Explained: Credits, Costs, and Optimization Tips

Credit burn is the silent killer for PixelDojo users who don’t plan a prompt strategy before generating.

| Feature | Credit Cost | Notes |
|---|---|---|
| Flux Schnell | Free / Unlimited | Low quality, good for testing |
| Flux Pro | 2–6 credits | Balanced quality and cost |
| Flux Ultra | 8–12 credits | Best image quality |
| Video Generation | 4–20 credits | Expensive at scale |

Testing prompts directly on Flux Ultra burns 40–60 credits quickly without producing a single usable final output. The 2–1–1 Rule addresses this: generate 2 images on a cheap model first, upgrade 1 to the high-quality model once the prompt is working, then animate 1 final version. This prevents spending premium credits to discover that a prompt direction doesn’t work.
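To make the arithmetic behind the 2–1–1 Rule concrete, here is a rough sketch that totals the credit ranges from the pricing table for two workflows. The helper name and the "five Ultra iterations" scenario are illustrative assumptions; real costs depend on resolution, duration, and current pricing.

```python
# (min, max) credits per generation, taken from the pricing table above.
CREDIT_COST = {
    "schnell": (0, 0),
    "pro": (2, 6),
    "ultra": (8, 12),
    "video": (4, 20),
}


def workflow_cost(steps):
    """Return (min, max) total credits for a list of (model, count) steps."""
    low = sum(CREDIT_COST[model][0] * count for model, count in steps)
    high = sum(CREDIT_COST[model][1] * count for model, count in steps)
    return low, high


# 2-1-1 Rule: 2 cheap tests, 1 premium upgrade, 1 animation.
two_one_one = workflow_cost([("schnell", 2), ("ultra", 1), ("video", 1)])

# Assumed naive approach: 5 prompt iterations directly on Ultra, then 1 animation.
naive = workflow_cost([("ultra", 5), ("video", 1)])

print("2-1-1 credits (min, max):", two_one_one)
print("naive credits (min, max):", naive)
```

Under these assumptions the 2–1–1 workflow lands at 12–32 credits, while iterating on Ultra costs 44–80 credits for the same single finished clip.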

API key integration (the advanced credit strategy): PixelDojo supports plugging in your own fal.ai or Replicate API keys. Creators who do this access Flux Pro generation at approximately $0.04 per megapixel — effectively bypassing the platform’s retail credit markup. For high-volume creators, this changes the economics significantly.
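For a sense of scale, the per-megapixel figure can be turned into a quick estimate. This is a back-of-the-envelope sketch only: it uses the approximate $0.04 rate quoted above, the function name is hypothetical, and it does not model provider-specific billing rules such as rounding or minimum charges.

```python
PRICE_PER_MEGAPIXEL_USD = 0.04  # approximate rate quoted above; check current provider pricing


def image_cost_usd(width, height, count=1):
    """Estimate the cost of `count` images at the given pixel dimensions."""
    megapixels = (width * height) / 1_000_000
    return megapixels * PRICE_PER_MEGAPIXEL_USD * count


single = image_cost_usd(1024, 1024)
batch = image_cost_usd(1024, 1024, count=500)

print(f"one 1024x1024 image: ${single:.4f}")
print(f"500 images:          ${batch:.2f}")
```

At this rate a 1024×1024 image comes to roughly four cents, and a batch of 500 to about $21, which is the kind of number to compare against what the same volume would cost in platform credits.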

LoRA Training: The Feature That Makes It Production-Ready

LoRA (Low-Rank Adaptation) training is PixelDojo’s highest-value feature and the most commonly skipped by casual users.

What it does: Trains a mini-model on specific images to maintain a consistent visual identity across generations. Without it, faces and characters shift every generation. With it, you get a repeatable identity that makes story-based content, AI influencer visuals, and brand consistency actually achievable.

When training a LoRA, choose a unique, invented trigger word rather than a common descriptive term. For example, use [MyBrandStyleV1] rather than “modern” or “cinematic.” If the trigger word overlaps with vocabulary that exists in the base model’s training data, the model gets confused between its base training and the custom LoRA data, and outputs drift unpredictably. An invented word with no prior associations gives the model a clean hook for the custom style.

Practical workflow: Upload 12–20 images representing the consistent identity, train the LoRA with a unique trigger word, and include that trigger word in every subsequent generation prompt. Character consistency jumps from approximately 40% to approximately 80% based on testing.
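The trigger-word advice can be enforced with a small helper that rejects common descriptive terms and prepends the trigger to every prompt. This is a sketch under stated assumptions: the word list is a tiny illustrative sample, the function names are hypothetical, and placing the trigger at the start of the prompt is a convention chosen here, not documented PixelDojo behavior.

```python
# Tiny illustrative sample of common descriptive terms; not exhaustive.
COMMON_STYLE_WORDS = {"modern", "cinematic", "anime", "portrait", "realistic", "vintage"}


def is_distinct_trigger(word):
    """Heuristic check: long enough and not a common descriptive term."""
    cleaned = word.strip().lower()
    return len(cleaned) >= 6 and cleaned not in COMMON_STYLE_WORDS


def with_trigger(prompt, trigger):
    """Return the prompt with the LoRA trigger word included exactly once."""
    if not is_distinct_trigger(trigger):
        raise ValueError(f"trigger word {trigger!r} is too generic")
    if trigger in prompt:
        return prompt
    return f"{trigger}, {prompt}"


print(with_trigger("portrait, soft window light", "MyBrandStyleV1"))
print(is_distinct_trigger("cinematic"))
```

Routing every prompt through one helper like this makes it harder to forget the trigger word, which would silently fall back to the base model's behavior.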

Settings Cheat Sheet

| Style | Model | Prompt Tip | Motion Strength | Notes |
|---|---|---|---|---|
| Cinematic | Flux Ultra | “cinematic lighting, 35mm lens” | 0.2–0.3 | Best realism |
| Anime | SD 3.5 | “anime style, clean lines” | 0.4 | Stable output |
| Hyperreal | Flux Pro | “8K, ultra detailed, sharp focus” | 0.2 | Avoid over-detailing |
| Social Clips | Schnell | Simple, direct prompts | 0.5 | Fast and cheap |

Prompt weighting matters more than most users realize. Small additions like face::1.3 or reflection::1.2 fix outputs that otherwise seem randomly broken. Getting comfortable with weight syntax eliminates a significant portion of the “why does this keep failing” frustration.
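As an illustration of the weight syntax, the sketch below assembles a prompt with `term::weight` suffixes and a separate negative prompt, using the values from the glass-building test. The function name, the dictionary keys, and the choice to append weights at the end of the prompt are assumptions made for the example; check how the selected model actually parses weights before relying on it.

```python
def weighted_prompt(base, weights=None, negative=None):
    """Build a prompt string with term::weight suffixes and an optional negative prompt."""
    parts = [base.strip().rstrip(".")]
    for term, weight in (weights or {}).items():
        parts.append(f"{term}::{weight}")
    result = {"prompt": ", ".join(parts)}
    if negative:
        result["negative_prompt"] = ", ".join(negative)
    return result


request = weighted_prompt(
    "Futuristic glass building, reflections, rainy night, cinematic lighting.",
    weights={"reflection": 1.2},
    negative=["distorted", "warped surfaces"],
)
print(request["prompt"])
print(request["negative_prompt"])
```

Keeping weights in a dictionary makes it easy to adjust one value at a time between generations, so each credit spent tests a single change.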

Safety, Legal Reality, and Non-Consensual Imagery (2026 Update)

PixelDojo operates with fewer content restrictions than corporate platforms, but it isn’t ungoverned — and the legal landscape around synthetic media has tightened significantly in 2026.

What the platform does: PixelDojo has implemented automated CSAM filters and removal processes in compliance with the 2026 Joint Statement of Data Protection Authorities. These aren’t optional or aspirational — they’re compliance requirements that major AI generation platforms now operate under.

What changed with the TAKE IT DOWN Act: The U.S. law, signed in May 2025 with platform notice-and-removal requirements taking effect in 2026, created legal obligations around non-consensual intimate imagery that apply to both platform operators and content creators. For users generating realistic faces or synthetic identity content, the platform providing compliant removal channels doesn’t transfer responsibility away from the creator. If generated content depicts a real, identifiable person in a harmful way, the creator carries legal exposure regardless of whether the tool itself is “allowed” to generate it.

Practical guidance: Don’t generate realistic faces of identifiable real people without explicit consent. The platform’s removal channels exist for identifiable individuals to request takedowns, and under the TAKE IT DOWN Act covered platforms must remove reported non-consensual intimate imagery within 48 hours of a valid request. Understanding the broader risks of AI-generated synthetic media is worth doing before pushing into photorealistic face generation territory.

The tool’s privacy posture: browser-based usage with no persistent prompt saving in most configurations. No major data storage concerns for the generation process itself.

Where PixelDojo Fits in the 2026 AI Generation Landscape

PixelDojo is not competing with Sora on high-end video synthesis or with Midjourney on pure art quality. It dominates a different layer: fast, semi-unfiltered content creation for creators who need granularity, iteration speed, and model flexibility in one interface.

That positioning explains the search traffic pattern: low volume, high intent. Users searching “pixeldojo ai” know specifically what they’re looking for. They’ve already evaluated the mainstream options and found them too restricted for their workflow.

The relevant context is the trade-off between user control and output polish: the creative AI space in 2026 is bifurcating between safety-first tools optimized for mainstream use and flexibility-first tools optimized for creators who know what they’re doing. PixelDojo is firmly in the second camp.

For creators building visual AI content at scale, the dependency and workflow considerations that apply to AI companion platforms apply here too — over-reliance on a single aggregator platform creates vulnerability if model availability shifts or pricing changes.

Common Mistakes That Waste Credits and Time

Burning credits too early. Jumping directly to Flux Ultra to test a new prompt direction is the fastest way to exhaust credits without producing anything useful. Always test on Schnell first.

Ignoring prompt weighting. Small syntax additions like face::1.3 can change outputs dramatically. Users who skip this spend credits regenerating when a single prompt tweak would fix the issue.

Motion strength above 0.4. Anything above this threshold produces instability in photorealistic content. The platform allows higher values — they don’t reliably produce better results.

Skipping LoRA training. This is the biggest missed opportunity for anyone doing character-driven or brand-consistent content. The training investment pays back immediately in reduced generation waste.

Using common trigger words for LoRA. An invented unique trigger word prevents the base model’s existing associations from contaminating custom LoRA outputs.

Frequently Asked Questions

Q. Is PixelDojo AI unfiltered?

No — PixelDojo AI is not fully unfiltered. It allows more flexible prompting than tools like Midjourney and DALL-E 3, but still enforces moderation rules. These include automated CSAM filtering and compliance with 2026 data protection regulations.

Key takeaway: “Unfiltered” describes relative creative freedom — not the absence of safeguards.

Q. How much do PixelDojo AI videos cost?

PixelDojo AI video generation typically costs 4 to 20 credits per clip, depending on quality and duration.

For high-volume creators, integrating external APIs like fal.ai or Replicate can reduce costs by bypassing platform markup.

Tip: Always test prompts on cheaper models before generating video.

Q. What models does PixelDojo AI use in 2026?

PixelDojo AI uses multiple third-party models, including:

- Flux 1.1 Pro / Dev / Ultra

- Flux Schnell

- Stable Diffusion 3.5

It acts as an API aggregator, routing prompts to these models instead of building its own.

Why it matters: Output quality and behavior depend on the selected model.

Q. Can PixelDojo AI create consistent characters?

Yes — with LoRA training.

- Without LoRA: ~40% facial consistency

- With LoRA (12–20 images + unique trigger word): up to ~80% consistency

Best practice: Use a unique, invented trigger word to avoid conflicts with base model training data.

Q. Is PixelDojo AI better than Midjourney?

Not directly — they serve different purposes.

- Midjourney → higher-quality, polished images

- PixelDojo AI → more control, flexibility, and workflow options

Simple answer:

- Choose Midjourney for art quality

- Choose PixelDojo for control and experimentation

Q. Is PixelDojo AI legally safe to use in 2026?

PixelDojo AI includes compliance systems like automated filtering and content removal channels. However, users are legally responsible for what they generate under the TAKE IT DOWN Act and related regulations.

Important: Creating realistic images of identifiable people without consent can lead to legal risk.

Q. How do you avoid wasting credits on PixelDojo AI?

Use the 2–1–1 Rule:

- Generate 2 test images on a cheap model (e.g., Flux Schnell)

- Upgrade 1 image to a premium model (Flux Pro/Ultra)

- Animate 1 final version

Bonus tip: Use external APIs (fal.ai or Replicate) for lower-cost scaling.

Final Verdict

PixelDojo AI is messy — and that’s what makes it useful for the right kind of creator. It gives you something most tools don’t: iterative control without heavy corporate filtering. The credit system requires strategy. LoRA is the real power feature that separates casual users from production workflows, and the video generation is motion layering rather than true synthesis. Understanding those distinctions turns PixelDojo from a tool people experiment with into one they build production pipelines around.


Disclaimer: This article is based on hands-on testing and publicly available information as of 2026. AI tools like PixelDojo evolve quickly, so features, pricing, and model availability may change over time. The insights shared here are meant to guide your workflow, not replace your own judgment, especially when it comes to legal and ethical use. Always review the platform’s latest policies and use AI responsibly.