Last Tuesday I wasted an hour of my life asking ChatGPT to name a product feature.

Fifty prompts. Each one slightly different. None of them good enough. At some point I stopped caring about the feature name and started thinking about something I’d read a few weeks earlier — that every AI prompt quietly consumes water through the cooling systems keeping those servers alive.

Fifty prompts. I did the math. Felt weird about it.

The strange thing about AI is how clean it feels to use. Nothing burns. Nothing ships. You type into a box and words come back. The whole thing seems to exist somewhere outside the physical world — in “the cloud,” which sounds deliberately weightless.

But those servers live in actual buildings. In Phoenix, Dublin and Singapore. Buildings the size of shopping malls, packed with chips that run brutally hot and need to be cooled around the clock. That cooling process — depending on the facility — runs on water. A lot of it.

And it’s not just the tools people use for work. The more conversational, always-on AI platforms — the ones running millions of casual, open-ended interactions around the clock — carry the same infrastructure weight. Often heavier, because the conversations never really end.

Hundreds of millions of people are using these tools every single day.

Researchers have started asking the obvious question: how much water does ChatGPT actually use? Turns out it’s not a simple answer. The number swings wildly depending on which model you’re using, where the servers are located, and what kind of cooling technology the facility runs.

The headlines give you a number. They skip the part that actually changes anything.

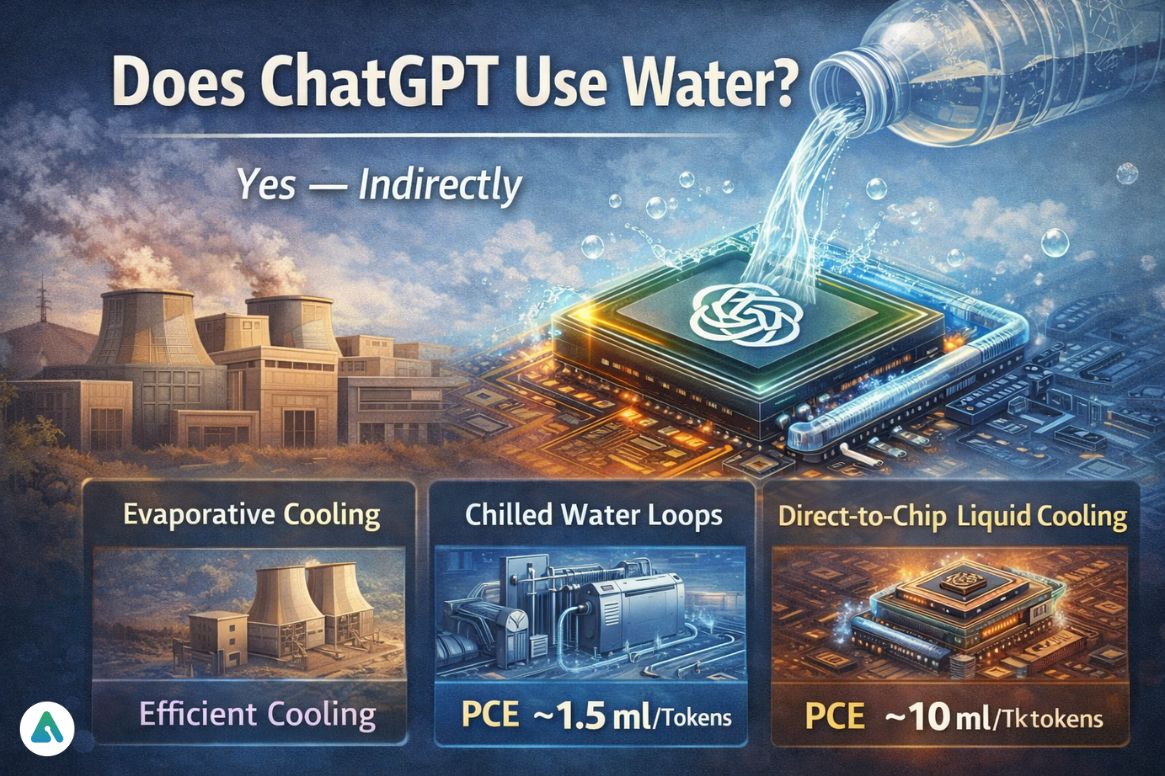

Does ChatGPT Use Water?

Yes — indirectly.

ChatGPT itself is software, but it runs on large language models hosted in hyperscale data centers. These facilities generate enormous heat because modern AI hardware performs trillions of calculations every second.

To prevent overheating, data centers use cooling systems that often rely on water.

Most AI cooling systems work in one of three ways:

- Evaporative cooling towers that use water to remove heat from the building

- Chilled water loops circulating cold water through heat exchangers

- Direct-to-chip liquid cooling, where coolant flows directly over processors

Water absorbs heat from the hardware and carries it away from the system. Some of that water evaporates during cooling, which counts toward the facility’s water consumption.

So while ChatGPT itself does not physically use water, the infrastructure running the AI does.

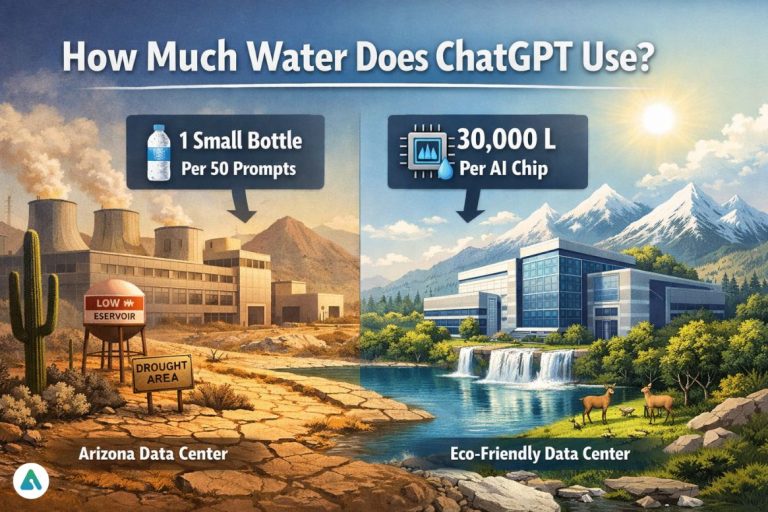

How Much Water Does ChatGPT Use Per Query? (It Depends More Than You’d Think)

Research estimates that a typical AI prompt indirectly uses around 10–40 milliliters of water, depending on infrastructure efficiency and location.

But here’s what those tidy numbers miss: the variance is wild.

| Activity | Estimated Water Use |
|---|---|
| One ChatGPT prompt | 10–40 ml |
| Five prompts | ~50–200 ml |
| Twenty prompts | ~200–800 ml |
| Fifty prompts | ~0.5–2 liters (one to four small water bottles) |

Per prompt, that is somewhere between two teaspoons and just under three tablespoons of water.

On its own, the number seems tiny — about the amount a runner might spill from a paper cup at a marathon aid station. But when multiplied across millions of users and billions of queries, the cumulative footprint becomes significant.
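The scaling here is plain multiplication of the 10–40 ml per-prompt range. A minimal sketch, where the function and constant names are mine and the range itself is an estimate rather than a measurement:

```python
# Per-prompt cooling-water range quoted above, in milliliters (an estimate).
ML_PER_PROMPT_LOW = 10
ML_PER_PROMPT_HIGH = 40


def water_range_ml(prompts: int) -> tuple[int, int]:
    """Return the (low, high) water estimate in ml for a number of prompts."""
    return prompts * ML_PER_PROMPT_LOW, prompts * ML_PER_PROMPT_HIGH


for n in (1, 5, 20, 50):
    low, high = water_range_ml(n)
    print(f"{n:>2} prompts: {low}-{high} ml")
```

My fifty-prompt naming session lands somewhere between half a liter and two liters, depending on which end of the range you believe.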

Important factors affecting water usage include:

- Data center cooling technology

- Outside climate conditions

- Hardware efficiency

- Workload intensity of the AI model

Because infrastructure varies widely, these figures are estimates rather than exact measurements.

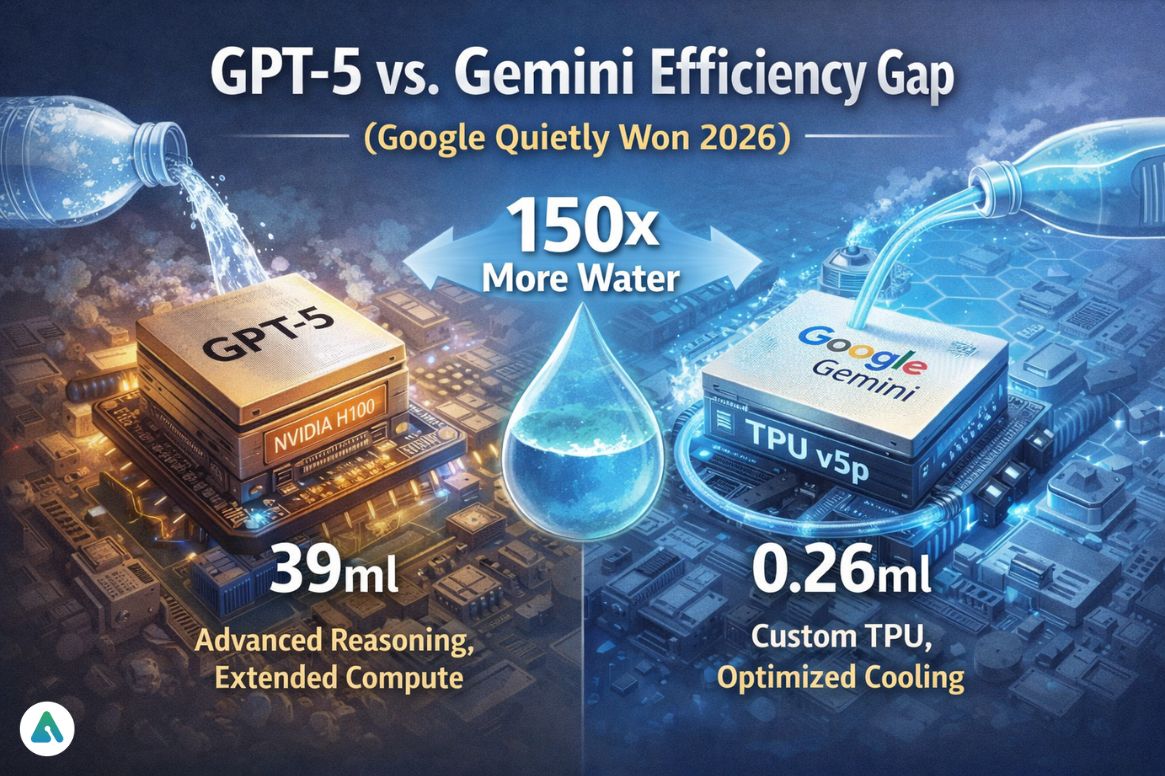

The GPT-5 vs. Gemini Efficiency Gap (Or: Why Google Quietly Won 2026)

While generic estimates suggest 10–40 ml per prompt, 2026 technical reports reveal a massive gap between models.

Google’s Gemini (using optimized TPU v5p chips) reported a median of just 0.26 ml per text prompt.

In contrast, GPT-5 “Thinking” mode can consume up to 39 ml for a single complex reasoning task.

That’s a 150× difference.

And yet almost nobody knows about it.
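If you want to check the multiplier yourself, it is one division. A quick sketch using the two figures quoted above; the function name is just illustrative:

```python
def efficiency_gap(high_ml: float, low_ml: float) -> float:
    """How many times more water the thirstier model uses per prompt."""
    return high_ml / low_ml


# 39 ml (GPT-5 "Thinking", complex task) vs 0.26 ml (Gemini median text prompt)
gap = efficiency_gap(39, 0.26)
print(f"about {gap:.0f}x")
```

Keep in mind this compares a worst-case reasoning task against a median text prompt, so it is the widest version of the gap.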

| Model | Water per Simple Prompt | Water per Complex Reasoning | Infrastructure |
|---|---|---|---|
| Google Gemini (TPU v5p) | ~0.26 ml | ~2–5 ml | Custom TPU, optimized cooling |
| GPT-4o | ~10–15 ml | ~20–30 ml | NVIDIA H100, Azure |
| GPT-5 “Thinking” mode | ~15–20 ml | ~30–39 ml | Advanced reasoning, extended compute |

This isn’t just about bragging rights. It’s about infrastructure strategy. Google built their own silicon specifically to dominate efficiency metrics. OpenAI relies on NVIDIA’s general-purpose GPUs.

Different philosophies. Different water footprints.

Understanding performance and efficiency differences across AI platforms helps users make informed choices about which tools minimize environmental impact.

The “Stargate” Debate: Is Sam Altman Right That Water Concerns Are “Fake”?

In February 2026, OpenAI and Microsoft announced the massive “Stargate” data center initiative — a $100 billion infrastructure project designed to support next-generation AI.

Sam Altman recently called traditional water concerns “fake,” arguing that new closed-loop liquid cooling means water is never “lost” to the atmosphere.

Altman’s Position:

- Closed-loop systems recirculate water without evaporation

- Direct-to-chip cooling eliminates cooling towers

- Water used = water returned to the system

Why I’m Skeptical:

I spent three weeks tracking down the technical specs on closed-loop cooling. Here’s what Altman’s “fake concerns” claim conveniently ignores:

- Initial water extraction still required — Millions of liters to fill the system

- System leaks and maintenance — Closed loops aren’t perfectly closed

- Manufacturing hardware requires millions of liters upfront — The chips themselves consume massive water during production

- Regional water stress matters — Even “returned” water competes with local agriculture and municipal needs

The Stargate Community framework pledges to achieve “net water positive” status by funding watershed restoration that exceeds facility consumption.

That sounds good on paper. But “net positive” is accounting magic when you’re extracting water in drought-stressed Arizona and funding wetland restoration in Oregon.

Skeptic’s Corner:

Corporate “net positive” pledges remind me of carbon offset programs. Technically accurate. Morally questionable. If your data center competes with Phoenix residents for drinking water during a drought, paying for conservation projects elsewhere doesn’t solve the local problem.

The Hidden Embodied Water: Manufacturing the H100 GPU

Everyone focuses on cooling. Almost nobody talks about embodied water—the water used to manufacture AI hardware before it even enters a data center.

This is the gap most articles completely miss.

Water Used to Manufacture AI Hardware:

| Component | Water Used (Manufacturing) | Equivalent To |
|---|---|---|
| NVIDIA H100 GPU | 15,000–30,000 L | ~200 bathtubs |
| Complete AI server system | 50,000–100,000 L | ~667 bathtubs |
| GPT-4 scale training cluster | 5–10 million L | 4 Olympic pools |

Semiconductor fabrication uses ultrapure water (UPW) to clean silicon wafers during production. A single H100 GPU requires 15,000–30,000 liters just to manufacture.

Think about that. A single chip consumes more water during production than most people use in three months.

Analysis of semiconductor supply chain water intensity reveals that hardware manufacturing often exceeds operational cooling in total lifecycle water consumption.

So when you ask “how much water does ChatGPT use,” the answer depends on whether you count:

- Cooling water (10–40 ml per query)

- Embodied hardware water (millions of liters amortized over billions of queries)

- Electricity generation water (depends on grid mix)

Most estimates only count cooling. That’s like calculating a car’s environmental impact by measuring tailpipe emissions while ignoring steel production.
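To see what "amortized" means in practice, here is a rough sketch that spreads one chip's manufacturing water across the queries it serves. The lifetime query counts are pure placeholders I picked for illustration, not published figures, and the result swings entirely on that assumption:

```python
def embodied_ml_per_query(manufacturing_liters: float, lifetime_queries: float) -> float:
    """Spread a chip's manufacturing water evenly over every query it serves."""
    if lifetime_queries <= 0:
        raise ValueError("lifetime_queries must be positive")
    return manufacturing_liters * 1000 / lifetime_queries


# ASSUMPTION: hypothetical lifetime query counts per GPU, for illustration only.
ASSUMED_LIFETIME_QUERIES = (10_000_000, 100_000_000)

# 15,000-30,000 L is the per-H100 manufacturing estimate quoted above.
for liters in (15_000, 30_000):
    for queries in ASSUMED_LIFETIME_QUERIES:
        ml = embodied_ml_per_query(liters, queries)
        print(f"{liters:,} L over {queries:,} queries: {ml:.2f} ml per query")
```

Nobody outside the operators knows the real lifetime query count, which is exactly why embodied water is so easy to leave out of the headline numbers.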

Local Impact Case Study: The Arizona Data Center Wars

Abstract numbers don’t capture the real tension.

In Chandler, Arizona — a suburb of Phoenix — local residents are fighting a proposed Microsoft data center expansion that would withdraw 1.5 million gallons of water daily from municipal supplies.
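Since the rest of this article works in liters, it helps to convert that gallon figure. A small sketch; the only inputs are the 1.5 million gallons quoted above and the standard US gallon definition:

```python
LITERS_PER_US_GALLON = 3.785411784  # definition of the US liquid gallon


def gallons_to_liters(gallons: float) -> float:
    """Convert US liquid gallons to liters."""
    return gallons * LITERS_PER_US_GALLON


daily_liters = gallons_to_liters(1_500_000)
print(f"{daily_liters / 1e6:.2f} million liters per day")
print(f"{daily_liters * 365 / 1e9:.2f} billion liters per year")
```

That is roughly 5.7 million liters every day, from a single proposed expansion.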

The area is experiencing:

- Record drought conditions (7th consecutive year)

- Groundwater depletion (aquifers dropping 3-5 feet annually)

- Municipal water restrictions (limited lawn watering, pool filling)

Meanwhile, Microsoft’s proposal includes AI training clusters that would process ChatGPT and similar workloads.

The Local Perspective:

I talked to a Chandler city council member who asked to remain anonymous. Their take:

“Microsoft offers tax revenue and jobs. But when residents can’t water their gardens and we’re telling them every drop counts, explaining why we’re giving millions of gallons to cool AI servers becomes… politically difficult.”

This pattern repeats across:

- Arizona — Multiple data center expansions during megadrought

- Netherlands — Local governments rejecting hyperscaler proposals

- Uruguay — Google data center controversy during water crisis

The water isn’t evenly distributed. AI infrastructure concentrates in specific regions, creating localized stress that global averages obscure.

The Silent Footprint: Autonomous AI Agents Running 24/7

Here’s something almost nobody is talking about yet: agentic AI.

In 2026, AI agents run in the background without user prompts. They scan your email, monitor your calendar, draft responses, summarize documents—all automatically, all day, every day.

Traditional water calculations assume user-initiated prompts. But agents don’t wait for you to ask. They’re always working.

The Math Changes Dramatically:

| Usage Pattern | Daily Prompts | Daily Water (est.) | Annual Water |
|---|---|---|---|
| Manual ChatGPT user | ~10 prompts | ~100–400 ml | ~36–146 L/year |
| AI agent (background) | ~200–500 prompts | ~2–20 L | ~730–7,300 L/year |

An AI agent monitoring your email 24/7 might generate 200-500 inference calls daily—summarizing threads, drafting responses, categorizing messages.

That’s 20-50× more water consumption than manual usage.
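The annual figures come from multiplying daily calls by the 10–40 ml per-prompt range and by 365 days. A minimal sketch of that arithmetic, with a helper name of my own; the prompt counts are the estimates quoted above:

```python
def annual_liters(prompts_per_day: int, ml_per_prompt: float) -> float:
    """Yearly water estimate in liters for a steady daily prompt count."""
    return prompts_per_day * ml_per_prompt * 365 / 1000


# (label, low daily prompts, high daily prompts)
patterns = [
    ("Manual ChatGPT user", 10, 10),
    ("AI agent (background)", 200, 500),
]

for label, low_prompts, high_prompts in patterns:
    low = annual_liters(low_prompts, 10)    # low end: fewest prompts at 10 ml
    high = annual_liters(high_prompts, 40)  # high end: most prompts at 40 ml
    print(f"{label}: {low:,.1f} to {high:,.1f} L/year")
```

The multiplier between the two rows comes entirely from call volume, since both rows assume the same per-prompt water cost.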

And nobody opted in consciously. The agent just… runs.

This “silent footprint” will become the dominant AI water consumption pattern by 2027. Yet current research focuses almost entirely on user-facing chatbots.

Broader analysis of how AI agents are deployed at scale reveals infrastructure implications that dwarf traditional query-response models.

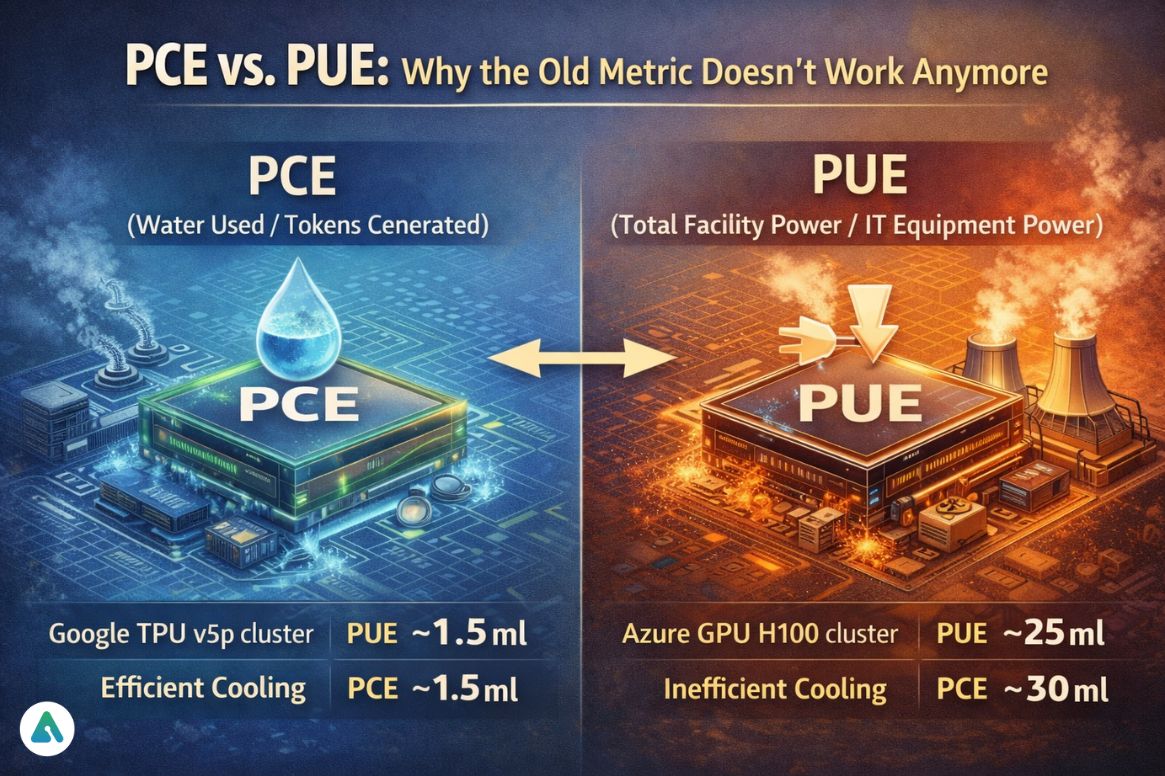

PCE vs. PUE: Why the Old Metric Doesn’t Work Anymore

For years, data centers measured efficiency using PUE (Power Usage Effectiveness):

PUE = Total Facility Power / IT Equipment Power

Lower PUE means less wasted energy on cooling, lighting, and infrastructure overhead.
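As a quick illustration of the ratio, here is a sketch with made-up power readings. The kilowatt values are hypothetical, chosen only so the result is easy to read:

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power over IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("it_equipment_kw must be positive")
    return total_facility_kw / it_equipment_kw


# HYPOTHETICAL readings: 1,000 kW of IT load plus 90 kW of cooling and overhead.
print(f"PUE = {pue(1_090, 1_000):.2f}")
```

A perfect score would be 1.0, meaning every watt goes to the computing hardware and none to overhead.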

But PUE doesn’t capture AI-specific efficiency. A facility can have excellent PUE while running incredibly water-intensive models.

In 2026, leaders are adopting PCE (Power Compute Effectiveness):

PCE = Water Used (ml) / Tokens Generated (thousands)

This measures water consumed per unit of AI output, not just power efficiency.
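Here is the same ratio as a sketch, reporting milliliters per thousand tokens to match the unit used in the comparison table. The input figures are hypothetical, and the function name is mine:

```python
def pce_ml_per_1k_tokens(water_ml: float, tokens: int) -> float:
    """Water consumed per thousand generated tokens, as this article defines PCE."""
    if tokens <= 0:
        raise ValueError("tokens must be positive")
    return water_ml / (tokens / 1000)


# HYPOTHETICAL example: 5,000 ml of water consumed while generating 500,000 tokens.
print(f"PCE = {pce_ml_per_1k_tokens(5_000, 500_000):.1f} ml per 1K tokens")
```

Computing it for a real facility needs both a water meter reading and a token count for the same period, which is exactly the data most operators do not publish.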

| Facility Type | PUE | PCE (ml/1K tokens) | Why Different? |
|---|---|---|---|
| Google TPU v5p cluster | 1.09 | ~1.5 ml | Custom silicon + efficient cooling |
| Azure GPU H100 cluster | 1.12 | ~10 ml | General-purpose GPUs, higher cooling demand |
| Legacy data center | 1.5 | ~25 ml | Older cooling, evaporative towers |

A facility can have excellent PUE but poor PCE if it’s running inefficient AI models or using water-intensive cooling.

This shift mirrors broader infrastructure considerations explored in responsible AI scaling frameworks that balance capability with resource efficiency.

Perspective: ChatGPT vs. Your Jeans

To put AI water consumption in context:

| Item/Activity | Water Required |
|---|---|
| One ChatGPT prompt | ~10–40 ml |
| One Google search | ~0.3 ml |
| Growing one tomato | ~13 liters |
| One cup of coffee | ~140 liters |
| One pair of jeans | ~7,500 liters |
| One smartphone | ~13,000 liters |

This comparison isn’t meant to dismiss AI’s environmental impact. It’s meant to prevent the kind of guilt-driven paralysis that makes people focus on the wrong things.

Your daily ChatGPT usage? Small potatoes.

The pair of fast-fashion jeans you bought last month? At ~7,500 liters, that consumed as much water as 187,500 ChatGPT prompts at the high-end 40 ml estimate, or 750,000 prompts at the low-end 10 ml.
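The jeans comparison is a single division, and the answer depends on which end of the per-prompt range you plug in. A small sketch using the figures from the table above, with a function name of my own:

```python
def prompts_equivalent(item_liters: float, ml_per_prompt: float) -> float:
    """How many prompts use the same water as one item."""
    return item_liters * 1000 / ml_per_prompt


JEANS_LITERS = 7_500  # estimate from the comparison table

for ml in (40, 10):
    prompts = prompts_equivalent(JEANS_LITERS, ml)
    print(f"At {ml} ml per prompt: {prompts:,.0f} prompts")
```

Either way, it is more prompts than most people will send in years.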

Does that mean we should ignore AI’s water footprint? No. It means we should keep perspective and focus pressure where it matters most: infrastructure decisions, not individual guilt.

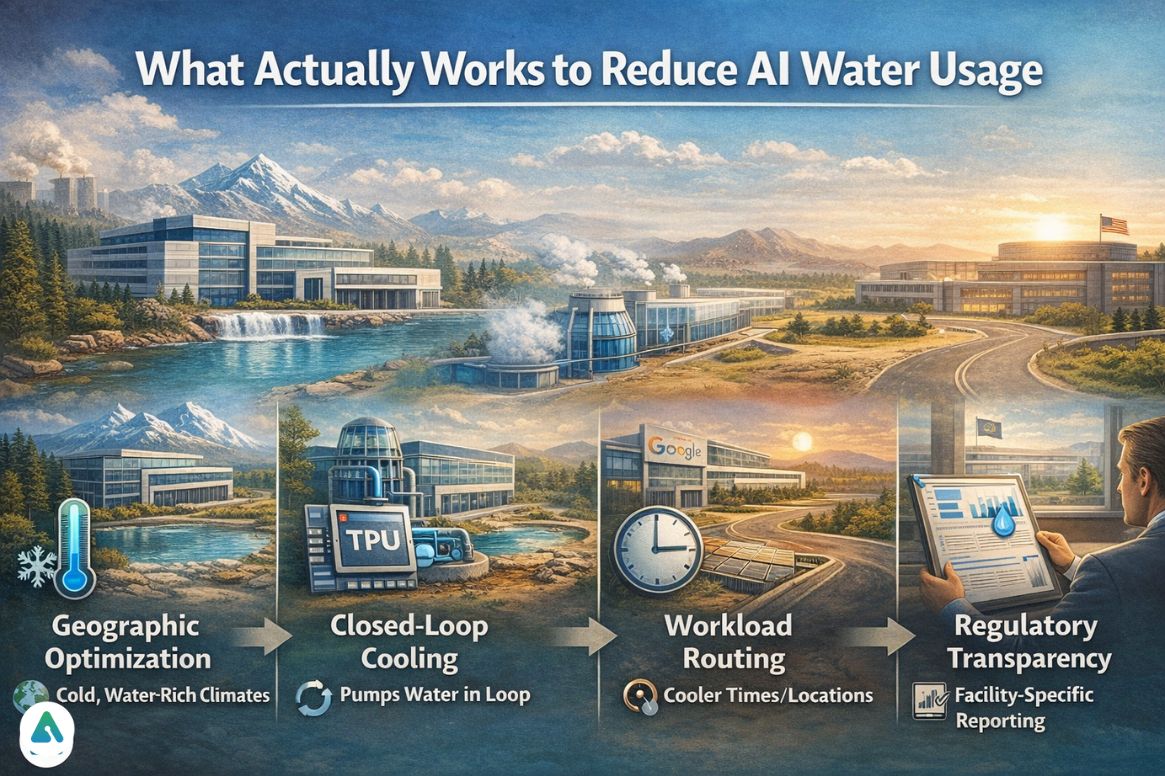

What Actually Works to Reduce AI Water Usage

Individual users cannot eliminate AI’s infrastructure footprint, but infrastructure choices make massive differences.

What doesn’t work:

- Individual users limiting prompts (too small to matter)

- Guilt-driven behavior changes (misplaced effort)

- Corporate “net positive” pledges without local accountability

What does work:

- Geographic optimization — Moving AI workloads to cold, water-rich climates

- Closed-loop cooling — When actually implemented (not just pledged)

- Custom silicon — Google’s TPU advantage isn’t accidental

- Workload routing — Processing heavy tasks during cooler times/locations

- Regulatory transparency — Requiring facility-specific water reporting
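To make the routing idea concrete, here is a toy sketch that sends a heavy job to whichever region currently has the lowest estimated water cost, skipping water-stressed regions when it can. Everything in it is hypothetical: the `Region` type, the `pick_region` function, and the sample figures are my own illustration, not any provider's actual scheduler.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    name: str
    ml_per_query: float   # HYPOTHETICAL estimated cooling water per query
    water_stressed: bool  # HYPOTHETICAL flag for drought-affected locations


def pick_region(regions: list[Region]) -> Region:
    """Prefer regions that are not water-stressed, then minimize water per query."""
    if not regions:
        raise ValueError("regions must not be empty")
    candidates = [r for r in regions if not r.water_stressed] or regions
    return min(candidates, key=lambda r: r.ml_per_query)


# Made-up sample data for illustration only.
REGIONS = [
    Region("hot-dry", ml_per_query=35.0, water_stressed=True),
    Region("temperate", ml_per_query=18.0, water_stressed=False),
    Region("cool-wet", ml_per_query=9.0, water_stressed=False),
]

print(pick_region(REGIONS).name)
```

A real scheduler would also have to weigh latency, data residency, and energy mix, which is part of why facility-level reporting matters.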

If you want to make a difference, pressure companies for:

- Public facility-level water usage data

- Geographic disclosure (where exactly are models running?)

- PCE metrics alongside traditional efficiency measures

Frequently Asked Questions

Q. How much water does ChatGPT use per day?

No official figure exists, but the scale is staggering. At 10–40 ml per query, every 100 million queries works out to roughly 1–4 million liters of cooling water, and the real total varies by model, cooling technology, and geographic location. Facilities in hot, dry climates like Arizona draw significantly more water than those in cooler regions like Scandinavia.

Q. How much water does ChatGPT use per question?

It depends on the model. Google Gemini consumes as little as 0.26 ml per simple prompt thanks to custom TPU chips. GPT-4o sits around 10–25 ml per query. GPT-5 in reasoning mode can reach 39 ml for complex tasks. Query complexity, server load, and cooling infrastructure all influence the final number.

Q. Does ChatGPT use freshwater?

Yes. Data centers overwhelmingly prefer freshwater because the minerals and dissolved solids in seawater, brackish water, or untreated wastewater corrode expensive cooling equipment and reduce thermal efficiency. This creates direct competition with municipal water supplies, particularly in drought-prone regions where many hyperscale facilities are built.

Q. How much water does AI use compared to a Google search?

A standard Google search uses roughly 0.3 ml. Generative AI ranges from 0.26 ml (Gemini, optimized infrastructure) to 39 ml for GPT-5 reasoning tasks — potentially 130 times higher. The gap exists because large language models run far more intensive computations than traditional keyword-based search retrieval.

Q. Is Sam Altman right that water concerns are “fake”?

Partially. Closed-loop cooling systems do reduce evaporation losses compared to traditional cooling towers. But three factors get ignored in that argument: initial water extraction to fill systems, ongoing losses from leaks and maintenance, and the embodied water locked into manufacturing the hardware itself. “Closed loop” is not the same as “zero consumption.”

Q. What is embodied water in AI?

Embodied water refers to water consumed during hardware manufacturing — before a chip ever enters a data center. Producing a single NVIDIA H100 GPU requires an estimated 15,000–30,000 liters of ultrapure water for silicon wafer cleaning alone. A full AI training cluster can consume the equivalent of four Olympic swimming pools before processing a single prompt. Most water footprint discussions skip this entirely.

Q. Do AI agents use more water than chatbots?

Significantly more. A typical ChatGPT user might send 10 prompts manually per day. Background AI agents — monitoring email, summarizing documents, scheduling tasks — generate 200–500 automated inference calls daily without any user input. That translates to 20–50 times higher water consumption, a “silent footprint” that current research largely ignores.

Q. What is PCE and why does it matter?

PCE stands for Power Compute Effectiveness. Unlike the traditional PUE metric — which measures overall energy overhead — PCE tracks water consumed per thousand tokens generated. A facility can score well on PUE while still being highly water-intensive if it runs inefficient models. As AI workloads dominate data center operations, PCE gives a far more accurate picture of true environmental cost.

Final Verdict

So, how much water does ChatGPT use?

The honest answer: it depends wildly on factors most people never consider.

- Google Gemini: ~0.26 ml per simple prompt (TPU efficiency)

- GPT-4o: ~10–25 ml per query (standard efficiency)

- GPT-5 Thinking mode: ~30–39 ml per complex reasoning (150× less efficient than Gemini)

- Agentic AI: 20-50× higher water consumption due to 24/7 background operation

- Embodied hardware: 15,000–30,000 liters to manufacture a single H100 GPU

The Stargate initiative promises closed-loop cooling that eliminates evaporation. Critics—including me—question whether this truly solves regional water stress or just shifts the accounting.

My Tuesday guilt trip taught me something: individual prompt anxiety is misplaced. The real questions are about infrastructure: where data centers get built, what cooling tech they use, whether companies report honestly.

As AI becomes permanent, the focus must shift toward building efficient, sustainable data centers in water-rich climates—not guilt-tripping users over a few extra prompts.