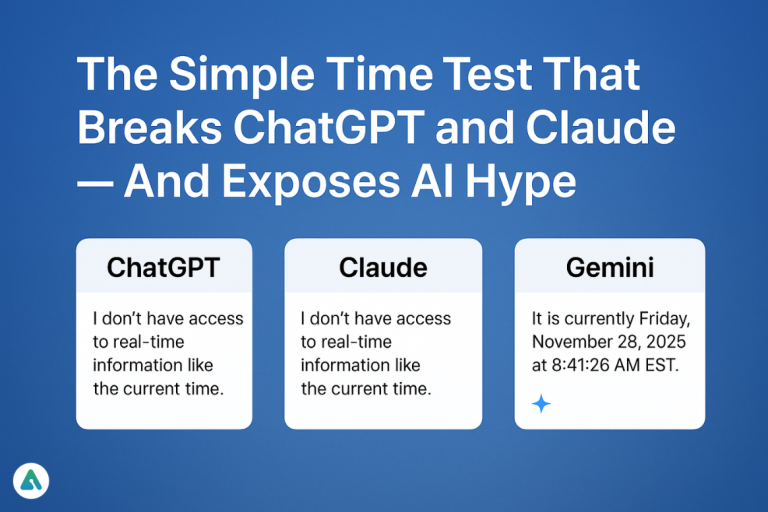

In an era where AI tools are marketed as “smart,” “instant,” and “assistant-level,” the reality is far more uneven. A simple test exposes the gap between perception and capability:

Ask different AI models: “What time is it right now?”

The results are telling — and surprisingly inconsistent.

ChatGPT and Claude: Powerful, But Blind to the Present

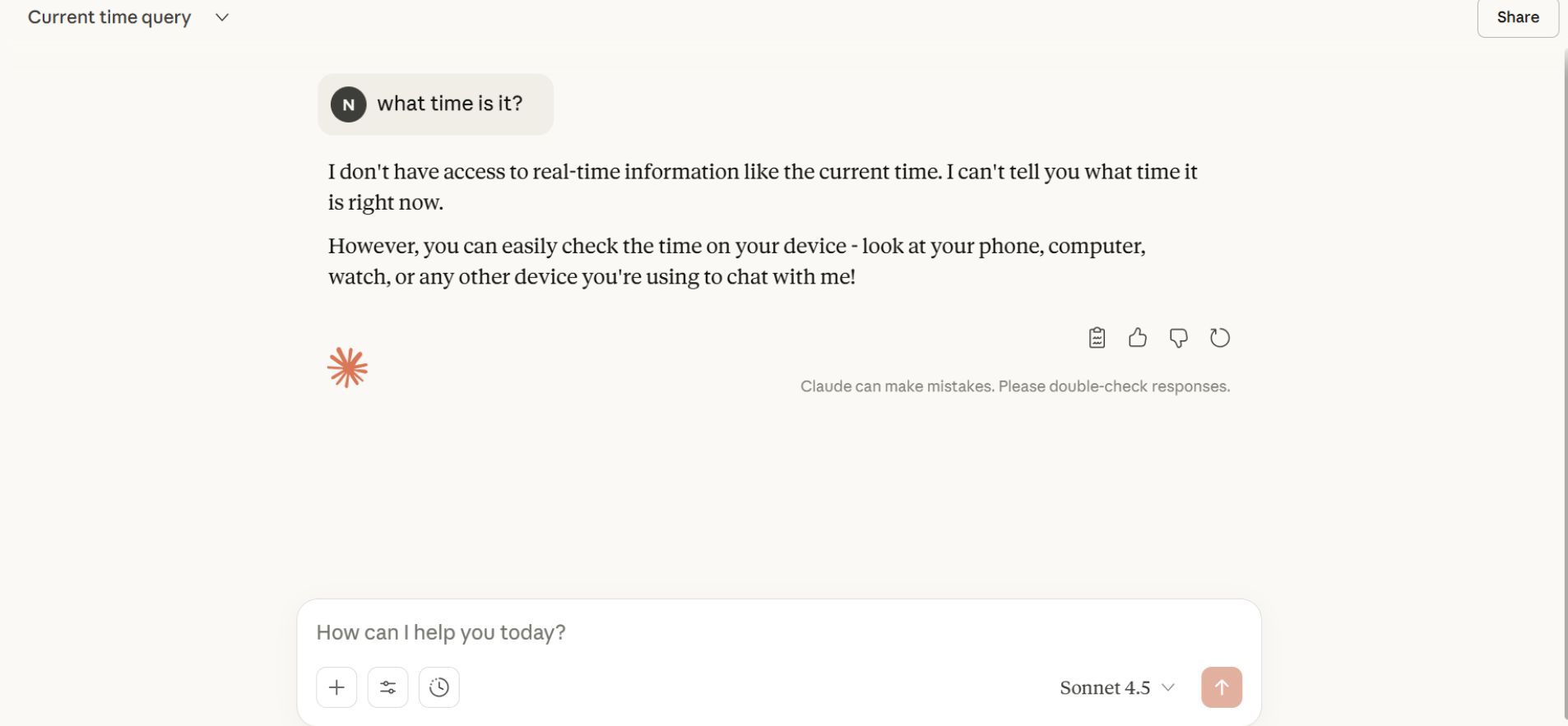

Despite their impressive language skills, ChatGPT and Claude fail at this basic question. They respond with disclaimers such as:

“I don’t have access to real-time information like the current time.”

This is exactly what Claude outputs, as shown in the screenshot above, and ChatGPT behaves the same way.

These systems can write essays, refactor code, and summarize complex documents — yet cannot perform the simplest function expected of a personal assistant.

This isn’t an accident. It’s a design choice.

Large language models like ChatGPT and Claude operate as predictive text engines, not real-time connected agents. Unless the application around them supplies it, they don’t see your device clock, don’t track your location, and don’t have an internal concept of “now.” They stitch together responses based on patterns learned in training, not present-moment awareness.

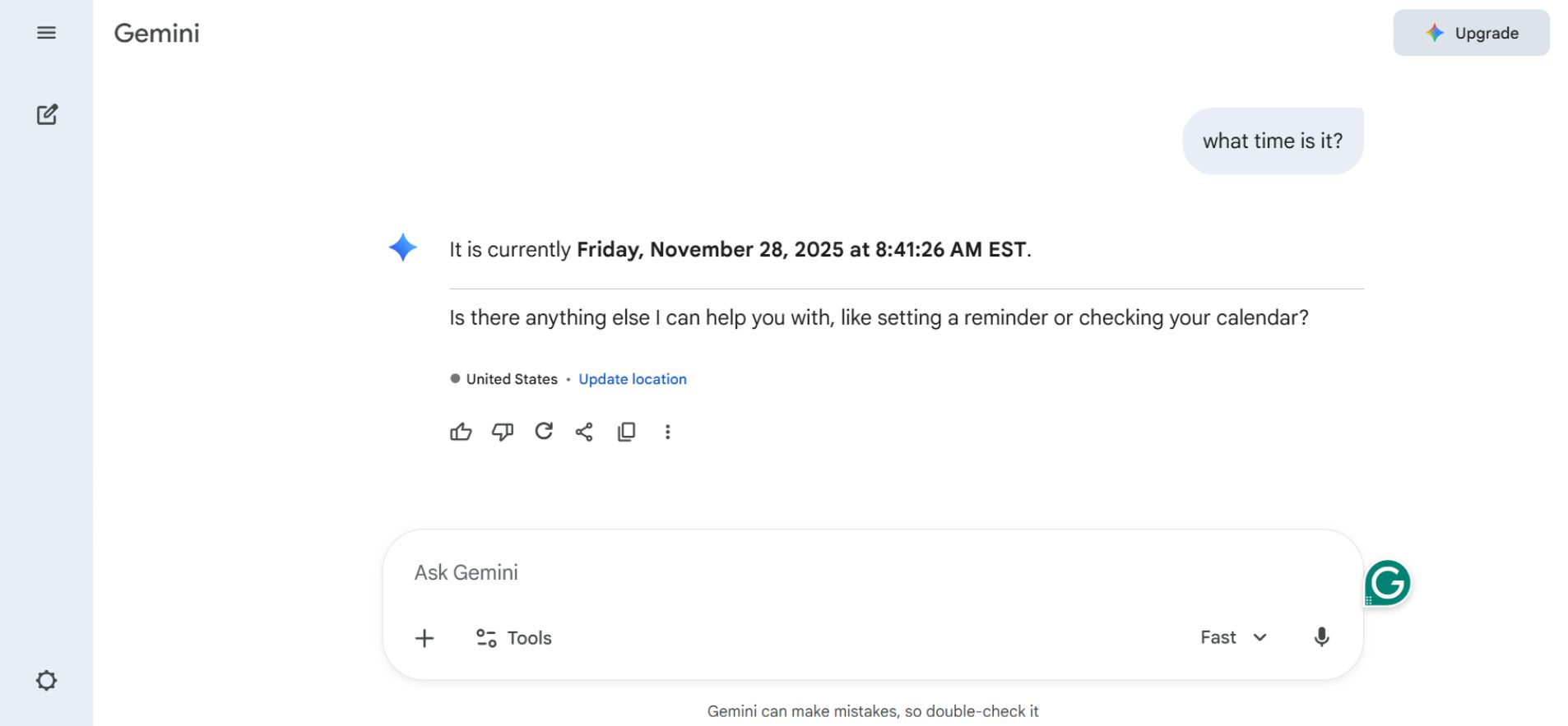

Gemini: A Different Architecture, A Different Result

Ask Google Gemini the same question, and you get a precise timestamp:

“It is currently Friday, November 28, 2025 at 8:41:26 AM EST.”

This accuracy isn’t because Gemini “guesses better.”

It’s because it is architecturally built to access real-time tools, including:

- system time
- search
- maps
- calendars
- external APIs

Gemini is not just a language model — it’s a multi-tool AI agent that consults live data sources before generating an answer.

This is why it can tell the time.

It’s also why ChatGPT and Claude, answering from the model alone, cannot.
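To make the "consults live data sources before generating an answer" step concrete, here is a minimal sketch of a tool-calling loop. It is not Gemini's actual implementation: `fake_model`, `TOOLS`, and `get_current_time` are hypothetical names invented for illustration, and the "model" is a stand-in that only decides whether it needs a tool. The point is simply that the clock reading comes from ordinary code, not from the text predictor.

```python
from datetime import datetime, timezone
from typing import Optional

def get_current_time() -> str:
    """Hypothetical tool: the only place 'now' enters the system."""
    return datetime.now(timezone.utc).isoformat(timespec="seconds")

# Hypothetical tool registry the host application exposes to the model.
TOOLS = {"get_current_time": get_current_time}

def fake_model(prompt: str, tool_result: Optional[str] = None) -> dict:
    """Stand-in for a language model (an assumption, not a real API).

    It never reads the clock itself. It either asks for a tool or
    turns a tool result it was handed into a sentence.
    """
    if tool_result is not None:
        return {"type": "text", "content": f"It is currently {tool_result}."}
    if "time" in prompt.lower():
        return {"type": "tool_call", "name": "get_current_time"}
    return {"type": "text", "content": "I can answer that from training data alone."}

def answer(prompt: str) -> str:
    """Host loop: run any requested tool, then let the model finish."""
    reply = fake_model(prompt)
    if reply["type"] == "tool_call":
        result = TOOLS[reply["name"]]()       # the fact comes from the tool
        reply = fake_model(prompt, tool_result=result)
    return reply["content"]

print(answer("What time is it right now?"))
```

Remove the `TOOLS` lookup from `answer` and the stand-in model has no way to produce a timestamp at all, which is the situation the disclaimer quoted earlier describes.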

Why This Difference Matters

The “time test” might sound trivial, but it exposes three deeper issues about today’s AI landscape.

1. The Illusion of Competence

Chatbots are often treated as if they have awareness or real-world intelligence.

But a system that cannot tell the current time clearly lacks:

- presence
- situational awareness
- real-time grounding

If users mistake predictive text for genuine intelligence, they will inevitably overtrust these tools in areas where accuracy matters.

2. Hidden Design Trade-offs

Developers intentionally avoid giving ChatGPT and Claude a constant feed of time/location data. Doing so could:

- consume valuable context window space
- introduce instability
- require constant tool integrations
- complicate model behavior

In other words, technical convenience takes priority over “assistant-like” functionality.

Gemini, however, solves this differently by separating the roles:

The model predicts text, while external tools provide facts.
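For contrast, the "constant feed" approach from the trade-off list can be sketched in a few lines: the host application stamps a clock reading onto every prompt, whether or not the question needs it. This is an illustrative sketch only. `build_prompt` and the header format are assumptions rather than how any vendor does it, the fixed timestamp is just the example time quoted earlier converted to UTC, and character count is used as a rough proxy for context-window cost.

```python
from datetime import datetime, timezone
from typing import Optional

def build_prompt(user_message: str, now: Optional[datetime] = None) -> str:
    """Hypothetical helper: prepend a clock reading to the user's message."""
    now = now or datetime.now(timezone.utc)
    header = f"[Current UTC time: {now.strftime('%Y-%m-%d %H:%M:%S')}]"
    return f"{header}\n{user_message}"

# Fixed example value so the output is reproducible.
fixed = datetime(2025, 11, 28, 13, 41, 26, tzinfo=timezone.utc)
message = "Summarize this paragraph for me."

prompt = build_prompt(message, now=fixed)
print(prompt)

# Extra characters sent on every turn, even when time is irrelevant.
overhead = len(prompt) - len(message)
print(f"overhead per message: {overhead} characters")
```

The cost of one header is small, but it is paid on every message, and it grows as location, calendar, and other live data are added. A tool interface pays that cost only when the model asks for the fact.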

3. User Expectations Are Out of Sync with Reality

AI models are advertised as assistants — but most aren’t built like assistants.

- ChatGPT and Claude → LLMs with no built-in real-time awareness
- Gemini → LLM + tool interface = real-time agent

This mismatch leads to confusion. Users expect capabilities that these models simply weren’t designed to provide.

A Necessary Correction: Not All Limitations Are Fatal

It’s important not to overstate the critique.

While ChatGPT and Claude cannot tell the time, they remain exceptional at what they are built for:

- brainstorming
- drafting
- code generation
- explanation
- analysis
- creative work
- rewriting and editing

Their weakness is real-time awareness, not intelligence as a whole.

The takeaway isn’t that these models are unreliable — it’s that they are reliable within their intended scope.

The Real Lesson: Don’t Confuse Prediction With Presence

The inability of major AI models to tell the time is more than a quirky bug — it’s a reminder of a fundamental truth:

LLMs do not perceive the world. They generate predictions, not observations.

Until users and companies fully internalize that distinction, AI will continue to be misunderstood, overhyped, and misapplied.

Gemini shows what future “AI assistants” may become:

systems that combine language modeling with tool access.

ChatGPT and Claude show what LLMs fundamentally are today:

powerful but blind engines of language.

Recognizing the difference isn’t negativity — it’s realism.

And realism is the only way forward.
