TL;DR: AI isn’t “breaking” in 2026. It’s doing something more dangerous—producing answers that feel right enough to trust, even when they’re wrong.

There’s a strange shift happening in how we talk about AI.

A year or two ago, the conversation was full of caveats—hallucinations, edge cases, weird failures. Now, the tone feels… settled. As if the major problems are behind us, and what remains is just scaling.

That’s why a recent critique from Ars Technica hits a nerve. It doesn’t argue that AI is failing in some dramatic, headline-grabbing way. Instead, it points at something quieter:

We’re starting to trust systems that still don’t actually know what they’re saying.

The narrative is getting smoother than the reality

Part of this comes from how AI is being framed publicly.

Leaders like Sam Altman often talk about progress in terms of acceleration—models getting better, safer, more useful over time. And to be fair, that’s not wrong. The systems are improving.

But something gets lost in that framing. The messy middle disappears.

You don’t hear as much about the small, frequent failures anymore—the ones that don’t go viral but still matter. The wrong summary. The fabricated source. The confident but slightly off explanation that no one double-checks.

Those haven’t gone away. We’ve just stopped centering them.

A familiar failure we keep repeating

If this sounds abstract, it isn’t.

Back in 2023, the Mata v. Avianca case made headlines when a lawyer submitted AI-generated citations that didn’t exist. It became the go-to example of “don’t trust ChatGPT blindly.”

That should have been a turning point.

It wasn’t.

Similar incidents have kept happening—less visible, less reported, but very real. The pattern is almost boring at this point:

- The AI produces something polished

- The human assumes it’s correct

- No one checks until it’s too late

What’s changed isn’t the failure. It’s our tolerance for it.

Why this keeps happening (and isn’t going away)

Under the hood, nothing about these systems has fundamentally changed.

Large language models are still probabilistic. They don’t verify facts; they generate statistically likely sequences of text. That leads to a few persistent traits:

- They can invent details that fit perfectly

- They can give different answers to the same question

- They sound equally confident whether they’re right or wrong
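To make the second trait concrete, here is a toy sketch of sampling. The prompt, the candidate continuations, and the probabilities are all made up for illustration; no real model or API is involved. The point is only that drawing from a probability distribution can return different answers to the same question.

```python
import random

# Hypothetical next-token probabilities for a prompt such as
# "The case was decided in ..." (invented numbers, not from a real model).
NEXT_TOKEN_PROBS = {"2019": 0.45, "2020": 0.35, "2018": 0.20}


def sample_answer(rng: random.Random) -> str:
    """Pick one continuation in proportion to its probability."""
    tokens = list(NEXT_TOKEN_PROBS)
    weights = list(NEXT_TOKEN_PROBS.values())
    return rng.choices(tokens, weights=weights, k=1)[0]


def ask_many(n: int, seed: int = 0) -> list[str]:
    """Ask the same 'question' n times and collect the sampled answers."""
    rng = random.Random(seed)
    return [sample_answer(rng) for _ in range(n)]


if __name__ == "__main__":
    # Same prompt, repeated draws: the answers need not agree.
    print(ask_many(5))
```

Nothing in the sampled string tells you whether it came from the 45% option or the 20% one, which is roughly why the output reads as equally confident either way.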

Engineers will tell you this is expected behavior. Some will even argue it’s a reasonable tradeoff—flexibility over rigidity.

And they’re not entirely wrong.

But here’s the catch: users don’t experience these systems as “probability engines.”

They experience them as answers.

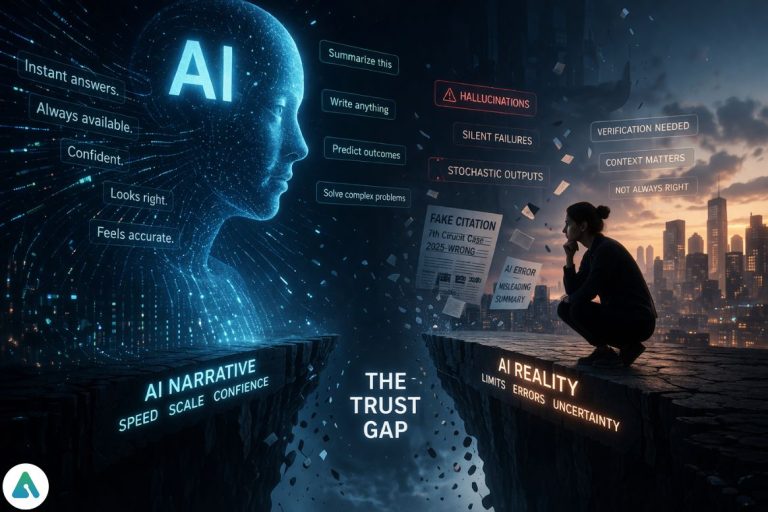

The trust gap is widening—quietly

This creates a gap that’s easy to miss but hard to fix.

On one side, you have what AI actually is:

a system that predicts, approximates, and sometimes guesses.

On the other side, you have what it feels like:

a system that knows.

That gap shows up in subtle ways:

- People skip verification because the output looks finished

- Teams move faster, assuming the base layer is reliable

- Errors slip through not because they’re hidden, but because they don’t look like errors

I’ve seen this personally in small ways. You ask for something straightforward, get a clean, structured answer, and move on. Later, when you revisit it, something doesn’t quite add up. Not obviously wrong—just off.

That’s the problem. There’s no clear failure signal.

The economic reason no one slows down

If the risks are known, why isn’t anyone pumping the brakes?

Because, in many cases, speed wins.

AI can compress hours of work into minutes. Even with a small error rate, the productivity gain often outweighs the downside, at least in the short term. A team might accept a 5–10% error rate if it means moving 10x faster.

Until something breaks in a visible way, the system feels like it’s working.

This is why over-reliance doesn’t look like a mistake. It looks like efficiency.
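The speed-versus-error tradeoff above can be put into rough numbers. This is a back-of-the-envelope sketch, not a real cost model: it assumes the manual path is error-free, that every task carries the same error rate, and that the cost of an uncaught error can be expressed in hours. The function name and parameters are hypothetical.

```python
def break_even_error_cost(manual_hours: float, speedup: float, error_rate: float) -> float:
    """Largest cost per error (in hours) at which the AI-assisted path
    still wins on expected time.

    Expected AI cost  = manual_hours / speedup + error_rate * cost_per_error
    Expected manual   = manual_hours  (assumed error-free, a simplification)
    Setting the two equal and solving for cost_per_error gives the value below.
    """
    ai_hours = manual_hours / speedup
    return (manual_hours - ai_hours) / error_rate


if __name__ == "__main__":
    for rate in (0.05, 0.10):
        limit = break_even_error_cost(manual_hours=10, speedup=10, error_rate=rate)
        print(f"error rate {rate:.0%}: AI wins while an error costs under {limit:.0f} hours")
```

For a 10-hour task done 10x faster, the break-even works out to about 180 hours per error at a 5% error rate and about 90 hours at 10%. Under these assumptions the shortcut pays off unless a single mistake is very expensive, which is exactly the situation where nobody is checking.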

Not everyone agrees that this is a problem

It’s worth noting that not all researchers buy into the more critical framing.

Some argue that what’s being called “over-trust” is just a normal phase of technology adoption. People misused calculators at first. They misunderstood search engines. Over time, norms developed.

From that perspective, AI isn’t uniquely dangerous—it’s just new.

There’s truth to that. But there’s also a key difference:

AI doesn’t just retrieve information. It generates it.

That changes the stakes.

The rise of “shadow AI” inside companies

Another layer that’s getting less attention than it should: employees are using AI even when companies haven’t formally approved it.

This “shadow AI” behavior is spreading because:

- It’s faster than internal tools

- It feels more helpful than rigid systems

- It often produces better first drafts

But it also means decisions are being influenced by systems that haven’t been vetted, logged, or monitored.

In some organizations, leaders are trying to limit AI use while employees quietly rely on it more each week. That mismatch creates risk that doesn’t show up in official workflows—but still shapes outcomes.

A simple way to not get burned

You don’t need a full governance framework to reduce risk. But you do need some friction.

A practical approach I’ve seen work is a lightweight check:

- Ask twice: If the answer changes, treat it as unstable

- Check one source: Even a quick verification often catches the major issues

- Look for specifics: Vague confidence is often a red flag

- Pause on high-stakes use: If it matters, don’t trust it blindly

It’s not perfect, but it reintroduces something that’s been quietly disappearing: skepticism.
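The "ask twice" step is easy to automate. Below is a minimal sketch, assuming you already have some function that sends a prompt to a model and returns text; `ask` is a hypothetical stand-in for that call, not any particular vendor's API. The comparison is deliberately crude (exact match after normalizing case and whitespace), so two differently worded answers that agree in substance will still be flagged.

```python
from typing import Callable


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def ask_twice(ask: Callable[[str], str], prompt: str) -> tuple[str, bool]:
    """Send the same prompt twice and report whether the answers match.

    `ask` is a placeholder for whatever model call you use.
    Returns the first answer and a flag: True if both answers agree.
    """
    first = ask(prompt)
    second = ask(prompt)
    stable = normalize(first) == normalize(second)
    return first, stable


if __name__ == "__main__":
    # Fake model that changes its answer between calls, for demonstration only.
    replies = iter(["The ruling was in 1999.", "The ruling was in 2001."])
    answer, stable = ask_twice(lambda prompt: next(replies), "When was the ruling?")
    if not stable:
        print("Answers differ: treat as unstable and verify before using.")
```

A matching pair does not prove the answer is right; a model can be consistently wrong. The check only catches the unstable cases cheaply, which is why the other three steps still matter.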

The bigger shift: AI is becoming a filter for reality

This is the part that feels under-discussed.

AI is no longer just helping us do things. It’s shaping how we understand things. We search through it, write through it, and increasingly, think through it.

That means its mistakes don’t just stay contained. They propagate.

Not as obvious errors—but as slightly distorted versions of reality.

So what’s actually wrong?

Nothing, in a technical sense.

These systems are doing what they were designed to do.

What’s off is the environment around them:

- The narrative is cleaner than the reality

- The trust is higher than the reliability

- The usage is deeper than the understanding

And that combination is where things start to get unstable.

Final thought

AI doesn’t need to be perfect to change how we work and think.

It just needs to be convincing enough.

That’s what makes this moment tricky. The failures aren’t dramatic anymore. They’re subtle, easy to miss, and often only visible in hindsight.

And once you stop noticing them, you stop questioning the system.

That’s not when AI becomes dangerous.

It’s when it becomes the default.
