The two most-hyped AI companies in the world don’t have customers. They have parents.

Two weeks ago, Amazon and Google pledged a combined $65 billion in fresh investment into Anthropic — a company that had already raised $30 billion in February and is reportedly eyeing another $50 billion raise at a $900 billion valuation. Amazon separately committed to a $138 billion, eight-year compute deal with OpenAI. These are not the moves of investors backing a product people want. These are life-support payments from hyperscalers keeping their own infrastructure business alive.

Ed Zitron, author of Where’s Your Ed At and host of the Better Offline podcast, has been the most consistent and forensically detailed skeptic of the AI bubble for years. His latest premium analysis goes further than anything he’s published before — and the central argument is one the financial press has almost entirely refused to engage with: there is no AI compute demand story. There is only a circular funding loop.

The Loop Nobody Will Name

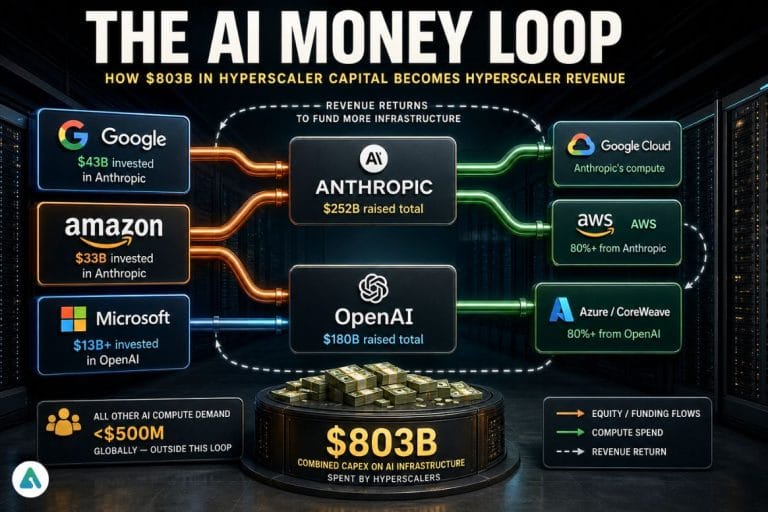

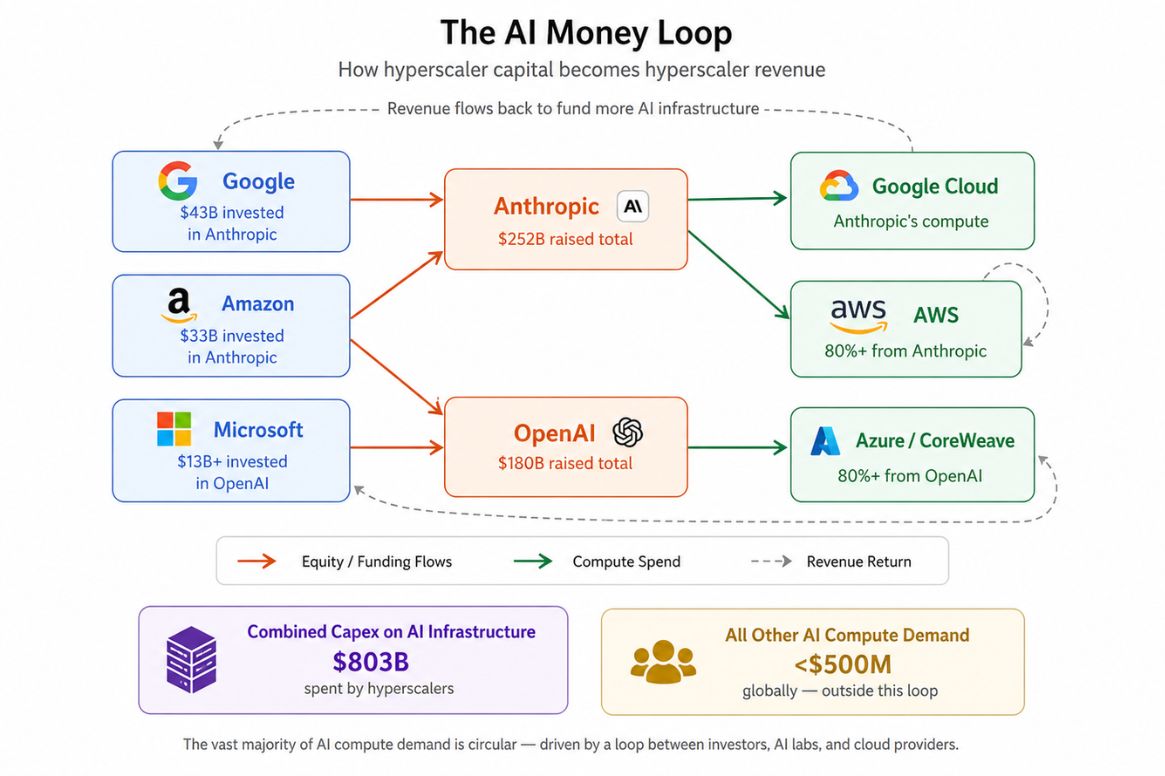

Here’s how the machine actually works. Google, Amazon, and Microsoft have collectively spent $803 billion in capex on AI infrastructure. They’ve also funneled a combined $78 billion directly into OpenAI and Anthropic via equity investments. Those two companies then spend almost all of that money back on AWS, Azure, and Google Cloud — the very infrastructure their investors built.

Zitron estimates that Anthropic accounts for over 80% of Amazon’s $15 billion annualized AI revenue. OpenAI accounts for more than 80% of Microsoft’s 2GW of AI capacity and drives the majority of its $37 billion annualized AI run rate. And 67% of CoreWeave’s revenue is Microsoft paying for OpenAI’s training compute.

Strip out OpenAI, Anthropic, Google, Microsoft, Amazon, CoreWeave, and Meta, and less than $500 million in actual external AI compute demand exists across the entire industry. Not $500 billion. $500 million.

That’s the demand story. It’s three companies feeding themselves.

Anthropic’s Numbers Are Science Fiction

The financial projections Anthropic is shopping around make the circular nature of this even more obvious. Per reporting from The Information, Anthropic projects $18 billion in revenue for 2026, scaling to $148 billion by 2029 — while losing $11 billion in both 2026 and 2027, then somehow swinging to profitability. The Wall Street Journal separately reported that Anthropic plans to spend at least $86 billion on training costs alone through 2029.
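To put that revenue curve in perspective, here is a quick back-of-the-envelope sketch of the compound annual growth it implies. It uses only the two revenue figures reported above; the function name and the assumption of smooth compounding over three years are illustrative, not anything Anthropic or The Information has published.

```python
def implied_annual_growth(start_revenue: float, end_revenue: float, years: int) -> float:
    """Compound annual growth rate needed to get from start to end.

    Assumes smooth compounding, which is a simplification for illustration.
    """
    return (end_revenue / start_revenue) ** (1 / years) - 1


# Figures from the reporting cited above, in billions of dollars.
revenue_2026 = 18.0
revenue_2029 = 148.0

growth = implied_annual_growth(revenue_2026, revenue_2029, 2029 - 2026)
print(f"Implied annual revenue growth, 2026 to 2029: {growth:.0%}")
```

Going from $18 billion to $148 billion is more than an eightfold increase, so on these numbers revenue has to slightly more than double every year for three years running.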

Nobody, including Anthropic, can explain the mechanism by which those losses become profits. The company is burning money at a rate that requires perpetual hyperscaler subsidy to survive — and the hyperscalers keep writing the checks because the alternative is admitting that the entire infrastructure buildout has no real customer base.

Microsoft, notably, has started edging toward the exit. CFO Amy Hood quietly disconnected Microsoft from OpenAI’s welfare payments, allowing Oracle to absorb that risk instead. Microsoft’s latest 10-Q mentions Anthropic exactly once — as part of the word “philanthropic” on page 59.

The Hyperscaler Defense — And Why It Doesn’t Hold

There is a counterargument worth acknowledging: hyperscalers aren’t stupid, and they know the current revenue loop is circular. The bull case is that they’re building for future demand — that Fortune 500 companies, healthcare systems, manufacturers, and governments will eventually replace OpenAI and Anthropic as the dominant compute customers. In this view, the current structure is simply the cost of securing early infrastructure dominance before enterprise adoption scales.

It’s a coherent argument. It’s also one that’s been made every year since 2023, with the “enterprise wave” perpetually 18 months away. Real demand doesn’t consistently fail to arrive on schedule. And crucially, if this enterprise wave were genuinely coming, you’d expect to see it showing up in early absorption data — small contracts, pilot programs, incremental capacity leases. Zitron has been looking for that evidence for years. He can’t find more than $500 million of it outside the existing funding chain.

The forecast-based investment thesis requires believing that $148 billion in annual AI revenue materializes for Anthropic alone by 2029 — without a remotely credible path from here to there.

The Google SPV Trick

The baroque financial engineering required to sustain this illusion keeps getting stranger. Google has started creating Special Purpose Vehicles with investment firms to effectively sell its own TPU chips to itself via Anthropic — then bill Anthropic for the compute, completing a loop that begins and ends at Google’s own balance sheet. Broadcom facilitated $63 billion in TPU sales to Anthropic under this structure.

This isn’t innovation. It’s a very expensive way to book revenue.

$3 Trillion or Bust

The stakes are not abstract. Big Tech needs $3 trillion in new AI revenue by 2030 to justify the capex already committed. Monetizing the 15.2GW of data centers under construction and expected online by the end of 2027 alone requires $157 billion in annual revenue, and that infrastructure is being built almost entirely to service two companies that lose $22 billion a year between them.
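For a sense of scale, the figures in this piece can be set side by side in a few lines of arithmetic. This is a rough sketch, not a model: it treats Zitron's "less than $500 million" of external demand as an annual upper bound, which is an assumption made here for comparison, and the variable names are purely illustrative.

```python
# Figures quoted in this article, in US dollars.
required_annual_revenue = 157e9   # needed to monetize capacity under construction
capacity_gw = 15.2                # data centers expected online by end of 2027
external_demand_ceiling = 500e6   # Zitron's estimate; assumed annual for this comparison

# Annual revenue each gigawatt would have to generate.
revenue_per_gw = required_annual_revenue / capacity_gw

# How many times larger the required revenue is than the external demand estimate.
demand_gap_multiple = required_annual_revenue / external_demand_ceiling

print(f"Required revenue per GW: ${revenue_per_gw / 1e9:.1f} billion a year")
print(f"Required revenue vs. external demand: {demand_gap_multiple:.0f}x")
```

On those assumptions, each gigawatt has to earn a bit over $10 billion a year, and the required revenue is several hundred times the external demand Zitron says he can find.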

Real demand doesn’t require its suppliers to also be its investors, its customers, and its primary source of revenue. Real demand shows up in contracts from companies that aren’t already in the funding chain.

The AI compute boom is the most expensive circular argument in the history of technology. And the only people who don’t seem to have noticed are the ones getting paid not to.
