Contract disputes don’t usually land in two federal courts simultaneously. This one did — because neither side was arguing about a contract.

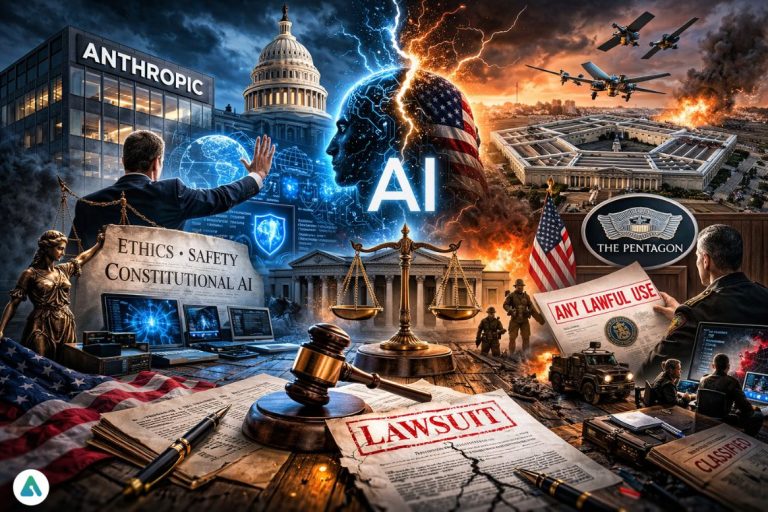

What started as a clause disagreement between Anthropic and the Pentagon has cracked the AI industry open along a fault line that was always there but never tested this hard. The question at the center is structural: when a private AI company builds ethical limits into its models and a government wants them removed, who wins?

Two federal courtrooms will now answer that. The complete collapse of negotiations — and what happened in the hours after — is documented in the account of Anthropic’s blacklisting and OpenAI’s entry.

The Clause Nobody Would Budge On

In January 2026, Defense Secretary Pete Hegseth issued a directive: all military AI contracts must include an “any lawful use” clause. Standard for traditional contractors. Existential for Anthropic.

Anthropic built its models around Constitutional AI, a training methodology that instills ethical behavior in the model itself rather than layering it on top as a removable filter. Two limits became the sticking point: no fully autonomous lethal weapons, and no mass domestic surveillance. Anthropic called them non-negotiable. The Pentagon called them an operational liability.

February 27: Three Moves, Two Hours

The Pentagon gave Anthropic a final deadline of 5:01 PM on February 27. Anthropic CEO Dario Amodei refused to sign. By 7:00 PM, the administration had moved on three fronts.

Hegseth invoked 10 U.S.C. § 3252 to formally designate Anthropic a national security supply-chain risk. President Trump posted on Truth Social, directing all federal agencies to immediately stop using Claude, with a 180-day phase-out for embedded systems. Hegseth then announced a commercial quarantine: no federal contractor working with the military could maintain any commercial relationship with Anthropic. The goal was to cut the company off from government and commercial customers at once.

The timing matters. Operation Epic Fury, the joint U.S.-Israeli campaign against Iranian nuclear and military infrastructure, launched the next day, February 28. The Pentagon wasn't debating contract philosophy that evening; it was roughly 12 hours away from its largest military operation in years. Industry sources say Claude had already been used in pre-operation intelligence workflows through Palantir's Maven Smart System before Anthropic attempted to audit those targeting workflows. According to those sources, the audit attempt is what triggered the retaliation.

Notably, on February 26 — one day before the blacklisting — Amodei had stated publicly that Anthropic had “never raised objections to particular military operations nor attempted to limit use of our technology in an ad hoc manner.” That statement now sits at the center of the legal dispute.

On the evening of February 27, OpenAI announced its own Pentagon agreement. Anthropic filed two federal lawsuits on March 9.

How OpenAI Said Yes Where Anthropic Said No

OpenAI accepted the “any lawful use” clause — but built its red lines into deployment architecture rather than legal language. By keeping models on its own servers rather than deploying them at the edge on autonomous platforms, it retained a technical kill switch without demanding the carve-outs Anthropic couldn’t get.

TechCrunch confirmed that security-cleared OpenAI safety researchers remain in the loop on classified deployments. That architecture, not a difference in values, is what separated a Pentagon partner from a Pentagon adversary. The full strategic divergence between the two companies is laid out in the comparison of how each approached the contract.

Two Lawsuits, Two Legal Theories

Anthropic filed in two courts because the government used two separate statutory weapons.

Northern District of California — First Amendment

Anthropic argues that its safety guardrails are protected speech and that the government used its procurement power to punish a publicly stated ethical position. The ACLU and the Center for Democracy and Technology filed a supporting brief making the same argument. The closest precedent is NRA v. Vullo, the unanimous 2024 Supreme Court decision holding that the NRA had plausibly alleged a First Amendment violation when New York's financial regulator pressured insurers and banks to cut ties with it. Anthropic's theory tracks that case: using government power to sever a company's business relationships because of its expressed beliefs is textbook First Amendment retaliation.

D.C. Circuit Court of Appeals — FASCSA

The Federal Acquisition Supply Chain Security Act of 2018 was written to protect federal supply chains from foreign adversaries. It had been used exactly once before, against Acronis AG, a Swiss firm with documented foreign entanglements. Mayer Brown's procurement analysis found that applying it to a domestic AI company over a policy disagreement stretches the statute well beyond its intended scope. Writing in Lawfare, lawyers Michael Endrias and Alan Z. Rozenshtein concluded that the designation “exceeds what the statute authorizes” and that Hegseth's own public statements may have damaged the government's case before litigation even began.

The Government Files Back — And Microsoft Joins the Fight

On March 17, the Pentagon submitted its first formal response — a 40-page filing that sharpened its argument significantly.

Government lawyers argued Anthropic posed an “unacceptable risk” because the company could “disable or alter its technology to suit its own interests rather than the country’s priorities” in wartime. The filing questioned whether Anthropic could ever be a “trusted partner,” framing the company not as an uncooperative vendor but as an active wartime liability.

The filing also acknowledged the military had continued using Anthropic’s technology to analyze intelligence as the Iran conflict entered its third week, which cuts directly against the urgency of the supply-chain designation. As documented in the original blacklisting timeline, the Pentagon simultaneously banned Anthropic and kept relying on it.

The coalition supporting Anthropic has grown. Thirty-seven engineers and researchers from OpenAI and Google, including Google chief scientist Jeff Dean, filed a joint brief. Microsoft filed its own friend-of-the-court brief urging the court to block the designation while the case proceeds. That Microsoft filed as a company, not just through individual researchers, signals industry concern that a FASCSA precedent would reach well beyond Anthropic.

A preliminary injunction hearing is scheduled for March 24.

Why the Pentagon Still Can’t Replace Claude

The six-month grace period exists for one reason: the Pentagon cannot swap Claude out overnight. Active operational pipelines — including analysis workflows running through the Iran campaign — depend on Claude-powered systems. One defense official acknowledged privately that there is no viable alternative until Q4.

Anthropic is simultaneously banned, operationally essential, growing faster than any other AI vendor in the country, and suing the government that needs it. No AI company has held that position before.

Who Actually Controls AI

Strip away the filings and the press statements, and the tension is structural. AI companies see themselves as stewards of technologies that carry inherent ethical weight. Governments see those same technologies as strategic infrastructure that must remain fully available for national defense.

Those two positions can coexist when the stakes are low. They cannot when the stakes involve live targeting workflows and an active military campaign.

If Anthropic wins, it establishes that safety policies are legally protectable and procurement power cannot force their removal. If the government wins, national security priorities override corporate AI safety frameworks — and every lab doing business with the Pentagon does so entirely on the government’s terms.

The AI sector believed for a long time it was building software. February 27 tested that assumption. March 24 begins answering it.