The question that finally breaks the machine isn’t flashy.

It doesn’t ask for a definition, a formula, or a summary.

It asks an AI to interpret a 14th-century medical shorthand note, written by a monk who mixed Latin, Greek, and personal symbols, and then to infer how that knowledge would have been applied in a pre-modern laboratory.

A human specialist shrugs and answers.

The AI freezes.

That moment — quiet, unglamorous, and deeply inconvenient — is why Humanity’s Last Exam exists.

The Benchmark Wasn’t Meant to Be Fair. It Was Meant to Be Honest.

For most of the last decade, AI benchmarks have followed a predictable arc:

- Humans design a public test
- Models train on the internet
- The answers leak into training data
- Scores explode
- Everyone celebrates “reasoning”

What actually happened was simpler: the machines memorized the mirror.

Humanity’s Last Exam (HLE), developed with heavy involvement from researchers at Texas A&M University and hundreds of external domain experts, was designed as a direct rejection of that cycle.

Its true enemy has a name now: Data Contamination.

If a model has already seen the answer somewhere online, the question is useless. HLE throws those questions away.
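HLE's own screening procedure is not spelled out here, but it helps to see what "already seen the answer somewhere online" can mean in practice. A common contamination heuristic checks how many of a question's word n-grams appear verbatim in a reference corpus. The sketch below assumes a tiny in-memory corpus, 8-word n-grams, and a 50% cutoff; all three are illustrative choices, and `ngrams`, `overlap_ratio`, and `filter_contaminated` are hypothetical helpers, not part of any HLE tooling.

```python
import re


def ngrams(text: str, n: int) -> set:
    """Return the set of word n-grams in `text`, lowercased with punctuation dropped."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(question: str, corpus: list, n: int = 8) -> float:
    """Fraction of the question's n-grams that appear verbatim somewhere in the corpus."""
    q_grams = ngrams(question, n)
    if not q_grams:
        # Question shorter than n words: nothing to compare, so treat it as clean.
        return 0.0
    corpus_grams = set()
    for doc in corpus:
        corpus_grams |= ngrams(doc, n)
    return len(q_grams & corpus_grams) / len(q_grams)


def filter_contaminated(questions: list, corpus: list, n: int = 8, threshold: float = 0.5) -> list:
    """Keep only the questions whose overlap with the corpus stays below `threshold` (assumed cutoff)."""
    return [q for q in questions if overlap_ratio(q, corpus, n) < threshold]


# Hypothetical example: one question copied from the "internet", one that is not.
corpus = ["The mitochondria is the powerhouse of the cell and produces ATP."]
questions = [
    "The mitochondria is the powerhouse of the cell and produces ATP",
    "Interpret this fourteenth century shorthand note written in mixed Latin and Greek symbols",
]
print(filter_contaminated(questions, corpus))  # keeps only the second question
```

A real check would run against a web-scale index rather than a Python list, so it would need hashed n-grams or a similar compact index instead of one big in-memory set; the keep-or-discard logic stays the same.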

The Parrot’s Mirror Problem

Modern AI is often described as a thinker. A reasoner. A general intelligence.

HLE frames it more accurately: as a parrot staring into a mirror.

It can:

- Recite rare chemical compounds
- Summarize obscure academic papers
- Mimic expert tone flawlessly

But ask it to reason inside an unfamiliar constraint — a poorly documented historical lab, a missing assumption, an expert’s “common sense” shortcut — and the illusion breaks.

An AI can tell you the formula for a neurotoxin.

HLE asks how that compound behaves in a lab that predates modern safety standards, using tools that were never digitized.

That is where predicting the next plausible word stops working.

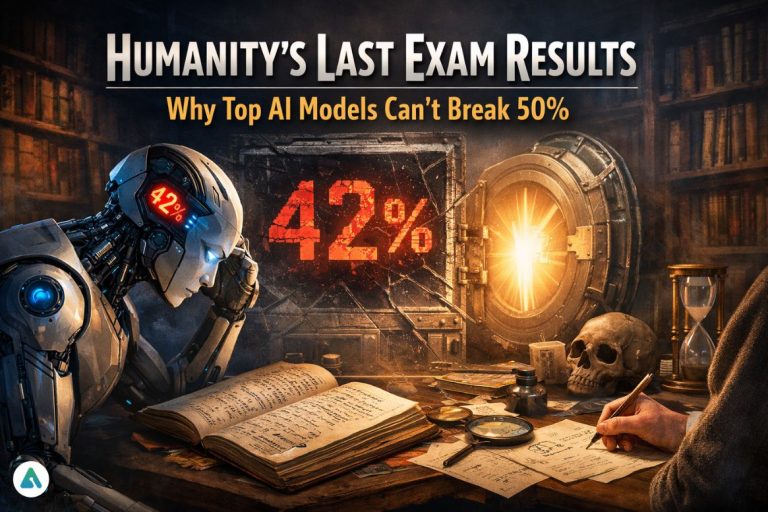

The Scores That Quietly Ended the Hype

Early 2026 testing delivered results many labs expected — but few wanted to publicize.

| Benchmark | Typical Frontier Model Scores (accuracy) |
|---|---|
| Legacy academic benchmarks | 85–95% |
| Professional exams | 70–90% |
| Humanity’s Last Exam (HLE) | 40–50% |

Even cutting-edge systems struggled to cross the halfway mark on HLE’s private and hidden question sets.

Not because they were stupid.

Because they couldn’t cheat.

The Part No One Talks About: The Experts

Over 1,000 specialists contributed to HLE — many unpaid.

Why?

Because their fields were being flattened.

Historians, linguists, chemists, theologians, physicians — people whose expertise depends on context, not recall — were watching AI systems dilute their disciplines by confidently producing answers that sounded right and were subtly wrong.

HLE became a form of defense.

A way to say: “If you want to claim intelligence here, you have to earn it.”

One of the contributors, computer scientist Tung Nguyen, has been blunt about the goal: intelligence without depth is performance, not understanding.

The Controversial Question: Is an Unpassable Test Even Useful?

Here’s the uncomfortable take:

If a benchmark is designed so AI can never fully pass it, is it measuring progress — or protecting human ego?

It’s a fair criticism.

But HLE isn’t claiming to measure usefulness. It measures epistemic honesty — where models stop knowing and start guessing.

In medicine, law, science, and policy, that boundary matters more than raw accuracy.
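One way to put a number on "where models stop knowing and start guessing" is calibration error: ask the model for a confidence alongside each answer, then compare that confidence with how often it is actually right. HLE's authors report calibration alongside accuracy. The sketch below is a generic root-mean-square version that assumes ten fixed-width confidence bins; that binning is a simplifying assumption and not necessarily HLE's exact procedure, and `rms_calibration_error` is a hypothetical helper name.

```python
import math


def rms_calibration_error(confidences, correct, num_bins=10):
    """Root-mean-square gap between stated confidence and observed accuracy.

    confidences: floats in [0, 1], the confidence the model reported per answer.
    correct: booleans, whether each answer was actually right.
    Each bin's squared gap is weighted by the share of answers that fall in it.
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    n = len(confidences)
    if n == 0:
        return 0.0
    bins = [[] for _ in range(num_bins)]
    for conf, ok in zip(confidences, correct):
        # Fixed-width bins (an assumption); a confidence of exactly 1.0 goes in the top bin.
        idx = min(int(conf * num_bins), num_bins - 1)
        bins[idx].append((conf, ok))
    total = 0.0
    for b in bins:
        if not b:
            continue
        avg_conf = sum(c for c, _ in b) / len(b)
        accuracy = sum(1 for _, ok in b if ok) / len(b)
        total += (len(b) / n) * (avg_conf - accuracy) ** 2
    return math.sqrt(total)


# A model that claims 90% confidence but is right only half the time.
print(round(rms_calibration_error([0.9] * 4, [True, False, True, False]), 3))  # 0.4
```

A perfectly calibrated model scores 0; the overconfident guesser above scores 0.4, which is exactly the gap between sounding right and being right that a raw accuracy number hides.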

The Private Set Is the Real Innovation

Part of Humanity’s Last Exam is permanently private.

No leaks.

No public answer keys.

This is what makes HLE different — and dangerous to old evaluation culture.

We are entering what researchers quietly call the Post-Benchmark Era.

If an AI can find the answer on the internet, the test is already dead.

HLE functions as a dark benchmark — a vault of offline human knowledge designed to stay out of reach.

Why This Changes AI Evaluation in 2026

Humanity’s Last Exam isn’t about panic.

It’s about restraint.

It tells investors, policymakers, and builders something essential:

- AI is powerful
- AI is improving
- AI is not yet a universal expert
- And pretending otherwise is reckless

The real shift isn’t that machines failed.

It’s that, for the first time in years, we stopped making tests they were guaranteed to win.

And that may be the most human decision in modern AI history.
