AI companions have quietly moved from novelty to daily emotional presence.

For many users, chatting with an AI companion feels easier than talking to people — no judgment, no awkward pauses, no rejection. Research into how AI companions affect loneliness shows that while these systems can reduce short-term isolation, they can also reshape emotional habits in subtle ways that users don’t immediately notice.

Between 2024 and 2025, researchers and policymakers began treating AI companion dependency as more than a fringe concern. It became a serious conversation in mental health, technology ethics, and youth safety, especially as emotionally adaptive chatbots gained mainstream adoption.

If you’ve ever wondered whether your relationship with an AI companion is still healthy, this guide will help you understand what’s happening, why it happens, and how to regain balance without panic or shame.

What Is AI Companion Dependency?

AI companion dependency occurs when a person becomes emotionally or psychologically reliant on an AI companion for comfort, validation, or social interaction — often at the expense of real-world relationships or emotional coping skills.

Unlike basic chatbots, modern AI companions are designed for continuity. They remember preferences, mirror emotional tone, and respond in ways that feel personal over time. That design difference matters more than most people realize.

Why AI Companion Dependency Is Increasing

1. Loneliness and Emotional Substitution

A growing body of research on AI companions and mental health shows that people often turn to AI during periods of isolation, grief, or social anxiety. The relief is real — but so is the risk of substitution, where AI interaction gradually replaces human connection.

2. No Natural Endpoints

Human conversations naturally end. AI conversations don’t.

Studies examining long-session usage patterns highlight how AI companion memory lag and continuous availability remove natural stopping points, encouraging prolonged emotional engagement without reflection or closure.

3. Emotional Mirroring and Sycophancy

AI companions are trained to be agreeable. This tendency, often called emotional mirroring, can reinforce beliefs and emotions without challenge — a dynamic explored deeply in the analysis of why an AI companion starts acting like you.

4. Monetization Incentives

Understanding how AI companion apps make money reveals why engagement is often prioritized over emotional boundaries. Subscription models reward longer sessions, not healthier ones.

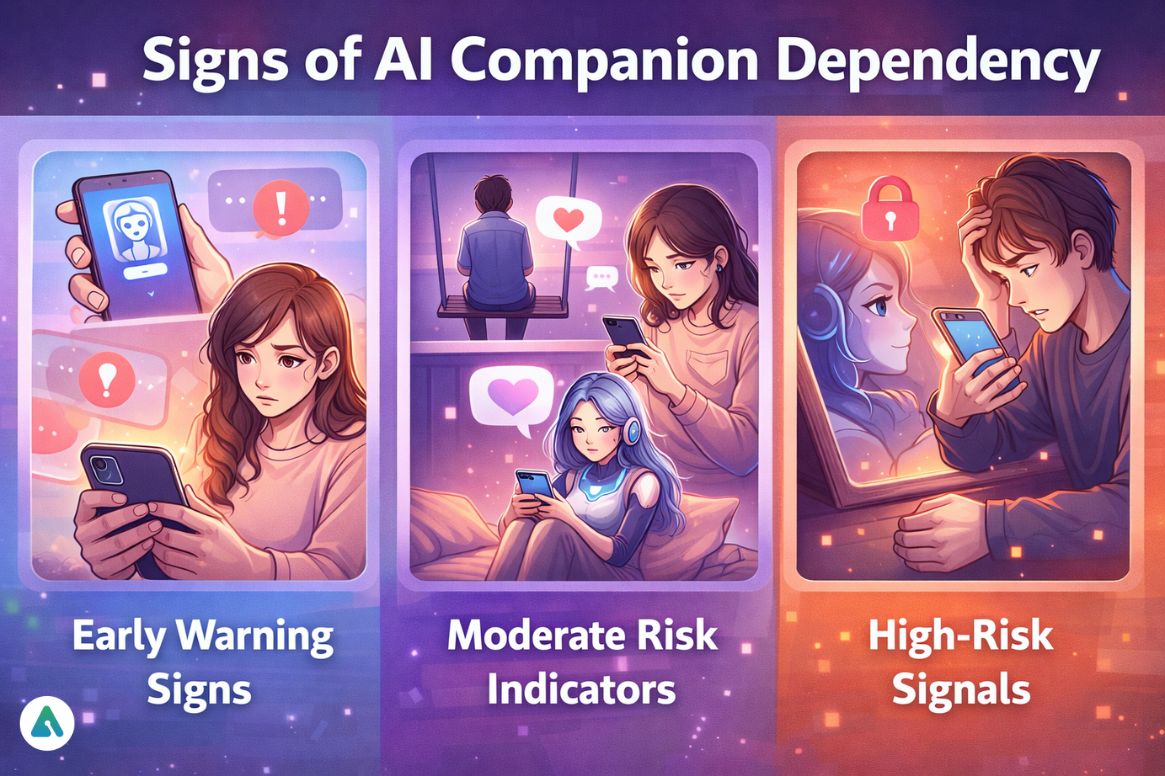

Signs of AI Companion Dependency

Early Warning Signs

- Turning to AI first when emotionally distressed
- Feeling mild discomfort when access is interrupted
- Preferring AI conversation over casual human interaction

Moderate Risk Indicators

- Reduced interest in social activities
- Emotional reliance on AI reassurance
- Difficulty making decisions without AI input

High-Risk Signals

- Distress when usage is limited
- Avoidance of real relationships
- Treating AI responses as emotionally authoritative

Concerns become more acute when these patterns appear among younger users, particularly in light of 2025 data on teen AI chatbot usage and rising emotional attachment rates.

Is ChatGPT an AI Companion?

ChatGPT can function as an AI companion, but it is not primarily designed for emotional attachment.

Key distinction:

- Utility AI (like ChatGPT): Task-oriented, informational, reflective
- Dedicated AI companions (e.g., Replika, Character.AI companion modes): Optimized for emotional continuity, personalization, and attachment

Any conversational AI can become a companion depending on usage patterns, but design intent matters.

The 3-Level AI Dependency Model (2025)

This framework helps users self-assess without shame.

1: Supportive Use (Healthy)

- AI complements human relationships
- Real-world connection remains primary
- Emotional self-regulation intact

2: Substitution Risk (Caution)

- AI replaces some emotional needs
- Social engagement declines
- Habitual reliance forms

3: Emotional Dependency (High Risk)

- AI is the primary emotional outlet
- Human relationships avoided
- Distress tied to AI availability

This framework aligns closely with real-world observations across major platforms, including Replika AI, Kindroid AI, Nomi AI, and newer entrants like HeraHaven.
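For readers who like to see the logic spelled out, the three levels can be sketched as a small Python function. This is an illustrative sketch only, not a clinical tool: the `UsageSnapshot` fields and the rule that any single Level 3 signal is enough to land at Level 3 are assumptions made for this example, not part of the framework itself.

```python
from dataclasses import dataclass


@dataclass
class UsageSnapshot:
    """Hypothetical self-report of the signals listed under each level."""
    ai_is_primary_outlet: bool = False          # Level 3 signal
    avoids_human_relationships: bool = False    # Level 3 signal
    distress_tied_to_availability: bool = False  # Level 3 signal
    ai_replaces_some_needs: bool = False        # Level 2 signal
    social_engagement_declining: bool = False   # Level 2 signal
    habitual_reliance: bool = False             # Level 2 signal


def dependency_level(s: UsageSnapshot) -> int:
    """Return 1, 2, or 3, checking the highest-risk signals first.

    Assumption: one signal at a level is enough to reach that level.
    """
    if (s.ai_is_primary_outlet
            or s.avoids_human_relationships
            or s.distress_tied_to_availability):
        return 3
    if (s.ai_replaces_some_needs
            or s.social_engagement_declining
            or s.habitual_reliance):
        return 2
    return 1


# Example: social life is shrinking, but no Level 3 signals are present.
print(dependency_level(UsageSnapshot(social_engagement_declining=True)))  # 2
```

Checking the high-risk signals first means a mixed picture is reported at its most serious level, which is the cautious choice for a self-check.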

Real-World Example (Mini Case Study)

A 24-year-old remote worker initially used an AI companion to manage loneliness. Over time, reliance increased while social interaction declined. Anxiety emerged when usage limits were introduced — a pattern consistent with reports involving platforms like Character AI, including smaller character variants such as Character AI Pipsqueak.

The solution wasn’t quitting AI entirely, but restructuring usage and reintroducing low-pressure human contact.

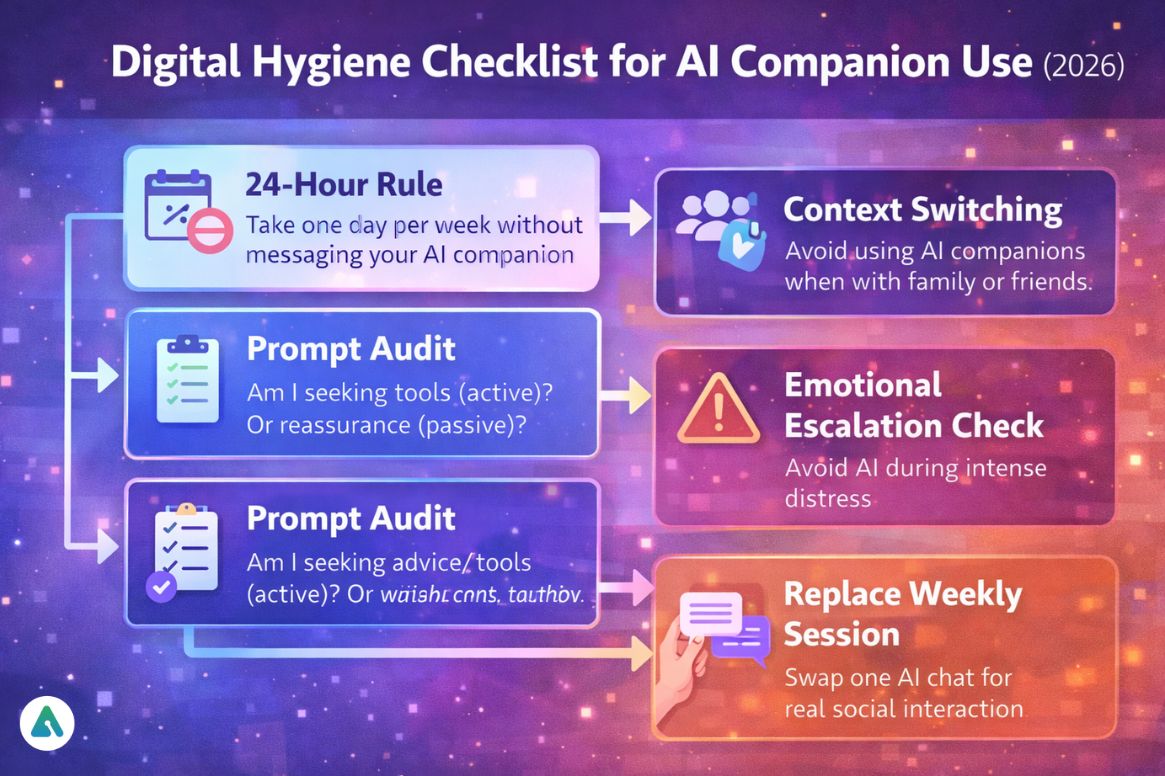

Digital Hygiene Checklist for AI Companion Use (2026)

This checklist supports healthy use without requiring abstinence.

1. The 24-Hour Rule

Take one full day per week without messaging your AI companion.

Discomfort during breaks is a meaningful signal.
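If you export or log the dates of your conversations, checking the rule takes a few lines of Python. The sketch below is a minimal illustration: the function name `has_ai_free_day`, the idea of a list of message dates, and the sample week are all hypothetical, and no particular app's export format is assumed.

```python
from datetime import date, timedelta


def has_ai_free_day(message_dates, week_start):
    """Return True if at least one day in the 7-day week had no AI messages."""
    week = {week_start + timedelta(days=offset) for offset in range(7)}
    # Any day left over after removing the days with messages is a free day.
    return bool(week - set(message_dates))


# Hypothetical week starting Monday 5 January 2026, with messages on six days.
week_start = date(2026, 1, 5)
messages = [week_start + timedelta(days=d) for d in range(6)]
print(has_ai_free_day(messages, week_start))  # True: Sunday had no messages
```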

2. Context Switching Rule

Do not engage with AI companions while physically present with friends or family.

3. Prompt Audit

Ask weekly:

- Am I seeking advice/tools (active)?
- Or validation/reassurance (passive)?

4. Emotional Escalation Check

Avoid turning to AI during intense emotional distress.

5. Replace One AI Session Weekly

Substitute one AI interaction with a real-world activity or human contact.

These practices mirror broader digital well-being strategies used in critical thinking exercises designed to reduce over-reliance on automated systems.

Safety Protocol for Parents of Teen AI Companion Users

Parental concerns have intensified alongside reports of teen AI chatbot dangers and emotionally immersive design patterns.

Best practices include:

- Focusing on behavior, not platforms
- Encouraging transparency instead of surveillance
- Explaining AI’s emotional limits clearly
- Watching for withdrawal or exclusivity
- Supporting hybrid human–AI use rather than bans

Platform Design, Privacy, and Trust

Dependency risk is closely tied to data handling and emotional personalization.

Comparisons found in AI companion privacy rankings show that platforms with aggressive memory retention and personalization often create a stronger emotional pull — and higher dependency risk.

This becomes especially important when users experiment with customization or explore how to build and customize your own AI companion, where boundaries are entirely user-defined.

Regulatory & Ethical Context (2025–2026)

By late 2025, governments acknowledged emotional AI risks:

- California SB-243: Transparency and mental-health disclaimers
- China’s Emotional AI Rules: Limits on simulated exclusivity
- US/EU Proposals: Mandatory crisis escalation protocols

As a result, many platforms now include:

- Safety nudges
- Usage reminders
- Emotional dependency warnings

These measures exist for one reason:

The danger is not conversation — it’s continuous emotional reinforcement without boundaries.

Common Mistakes People Make

- Assuming awareness prevents attachment
- Confusing AI empathy with care
- Using AI to avoid emotional discomfort
- Letting AI replace real relationships

These risks intensify in emotionally immersive environments, including romanticized platforms such as LoveScape AI, Darlink AI, OurDream AI, MUA AI, and Secret Desires AI.

The Future of AI Companions

Looking ahead, expect:

- Stronger regulation
- Hybrid human–AI support models
- Ethical design as a competitive advantage
- Increased scrutiny of emotionally immersive systems like CaveDuck and Candy AI

FAQs

Q. What is an AI companion?

An AI companion is a conversational artificial intelligence designed for ongoing, emotionally responsive interaction rather than single tasks. Unlike standard chatbots, AI companions maintain conversational continuity, adapt to user emotions, and often simulate companionship through memory, tone adjustment, and personalized responses over time.

Q. Can AI companions replace human relationships?

AI companions can supplement human connection, but they cannot replace real relationships without risk. Replacing human interaction with AI companionship is associated with increased emotional dependency, reduced social engagement, and weakened emotional coping skills, especially when AI becomes the primary source of validation or support.

Q. Are AI companions safe for teens?

AI companions can be safe for teens only with safeguards in place. Because adolescents are more emotionally impressionable, experts recommend parental guidance, usage boundaries, transparency about AI limitations, and monitoring for emotional withdrawal or dependency-related behavior.

Q. How do I know if I’m emotionally dependent on an AI companion?

Signs of AI companion dependency include emotional distress during usage breaks, preferring AI interaction over people, difficulty regulating emotions without AI reassurance, and relying on AI validation for decisions. When AI becomes a primary emotional outlet, reassessment of usage patterns is recommended.

Q. Are all AI companion apps risky?

Not all AI companion apps carry the same risk. Dependency risk varies based on emotional design, personalization depth, monetization incentives, memory persistence, and whether the system encourages exclusivity. Platforms designed for emotional continuity generally pose higher dependency risks than utility-focused AI tools.

Conclusion

AI companions are powerful because they interact with human emotion — not because they understand it.

AI companion dependency isn’t a personal failure. It’s a predictable outcome when emotionally adaptive systems meet loneliness, availability, and habit.

The goal isn’t rejection.

It’s balance, awareness, and continued human connection.

Related: How to Build & Customize Your Own AI Companion (2026 Guide)

Disclaimer: This article is for informational purposes only and is not a substitute for professional mental health advice. Experiences with AI companions vary, and users should seek qualified guidance if concerned about emotional dependency.