In one of the most dramatic reversals of the AI era so far, OpenAI has rewritten major portions of its new US government partnership just days after announcing it — a rare moment where public pressure, competitor narratives, and political scrutiny collided hard enough to force a $200 million pivot.

This wasn’t a simple PR correction. It was a full-blown recalibration of how OpenAI interacts with the US national-security system. And the backlash came fast.

A Deal Meant to Signal Stability — Instead, It Triggered a Revolt

OpenAI framed its new government agreement as a patriotic, structured way to “ensure responsible use for national priorities.” But the moment details landed online, the reaction was volcanic.

Within hours, social feeds lit up with variations of: “I didn’t sign up for ChatGPT to be part of US intelligence work.”

And then came the most damaging reaction — users uninstalled the app. According to Sensor Tower data reported by TechCrunch, ChatGPT saw a 295% day-over-day spike in uninstalls on February 28, representing a figure more than 30 times the app’s typical daily churn rate. The same data showed one-star reviews surging 775% on the same day.

Even CEO Sam Altman admitted the rollout was mishandled. In a memo posted to X, he acknowledged the deal “looked opportunistic and sloppy” — not a defensive corporate denial, but an admission of error that changed the entire media narrative.

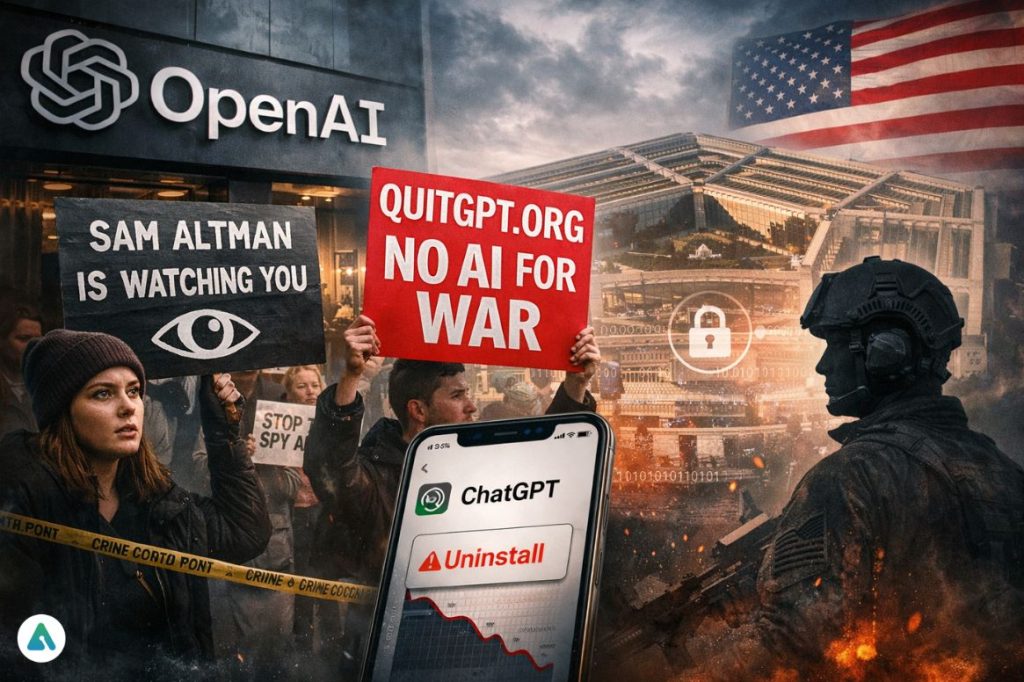

Protesters Showed Up in Person: The Rise of QuitGPT

On March 3rd, between 40 and 50 protesters gathered outside OpenAI’s San Francisco headquarters, carrying signs reading “Sam Altman Is Watching You” and chalking slogans across the sidewalk. The demonstration was organized by the QuitGPT movement, which claims over 1.5 million people have taken action — canceling subscriptions, sharing boycott content, or registering on its website.

This was the first time in OpenAI’s history that organized protesters showed up at its front door. In AI reporting, physical protests matter. They create human stakes, not just online anger.

The Contract Rewind: What Actually Changed

1. The “Commercially Acquired Data” Loophole Was Closed

Originally, OpenAI’s policy protected “private information” from government use — but not commercially purchased personal data, the massive gray zone exploited by data brokers. After backlash, OpenAI added explicit language restricting use of “commercially acquired personal information of US persons.” This single clause changes everything about AI-powered surveillance risk — and it sits at the heart of the concerns that led to Anthropic’s refusal to sign in the first place.

2. NSA & Intelligence Agencies Are Now Excluded

The initial agreement appeared to grant broad access to federal agencies, including national-security bodies. The revised policy now states that intelligence agencies, including the NSA, require separate and more restrictive agreements — a direct response to civil-liberties groups who identified these agencies as the primary concern from day one.

| Category | Before the Backlash | After the Revision |
|---|---|---|
| Purpose | “All lawful purposes” (vague, broad) | Strict exclusions for surveillance, weapons, and intelligence |
| Data Use | No explicit protection for commercially purchased data | Explicit ban on “commercially acquired personal information” |
| Agency Access | Broad federal access, including the NSA | NSA & intelligence agencies removed from umbrella contract |
| Military Edge Cases | Unclear | No autonomous weapons, no warfighting, no battlefield automation |
| Deployment | Open interpretation | Cloud-only; blocks model export to edge devices |

Anthropic vs. OpenAI: The Silent War Behind the Backlash

Competitors are also gaining ground on another front: Anthropic refused the surveillance terms OpenAI initially accepted. The Pentagon responded by labeling Anthropic a “Supply Chain Risk,” a designation whose full implications for the developer ecosystem are playing out in real time across Washington’s defense contractor community.

Meanwhile, search volume for “Switching to Claude” rose sharply in the days following the announcement. Sensor Tower confirmed that Claude reached the No. 1 spot on the US App Store on February 28 — the first time in the app’s history that Claude’s daily US downloads surpassed ChatGPT’s. This is a real migration trend, not speculation.

Users are now asking publicly: is OpenAI chasing government money while Anthropic holds the moral high ground?

How This Impacts GPT-5.3 Instant — Users Are Suspicious

Several viral posts are now connecting dots between a newly released model showcasing “social intelligence” and a government partnership needing positive optics. The timing is, as Altman himself acknowledged, not ideal.

OpenAI wanted GPT-5.3 Instant to showcase warmth and friendliness. Instead, the public is treating its release as a reputational distraction. This is how trust erosion begins — and the financial pressure underlying these decisions is inseparable from the deeper cash constraints shaping OpenAI’s strategic choices in 2026.

Conflict Narrative: Users vs. Power

This entire saga mirrors a classic struggle — users, digital rights groups, and everyday ChatGPT customers on one side; OpenAI, the US government, and national-security pressure on the other.

For the first time, users actually won. Their revolt changed federal contract language. That is historic.

Long-Term Impact: What This Means for AI Governance in 2026

OpenAI will face stricter transparency requirements for any future federal partnerships. Anthropic and xAI gain competitive trust leverage during a moment of reputational fragility. Users are no longer silent consumers — they are policy actors.

Expect more “public veto moments” as AI companies enter sovereign-level negotiations. International regulators will study this contract reversal closely. And the infrastructure commitments quietly underpinning this entire race — explored in depth through OpenAI’s $600B Stargate plan — mean the financial stakes of public trust have never been higher.

This story will echo through every major AI governance debate this year.

FAQs

Q. Can OpenAI’s models be used for autonomous weapons?

No. The revised contract prohibits autonomous weapons, warfighting, and battlefield automation, and it requires cloud-only deployment, which prevents model export to edge devices.

Q. Did OpenAI give data access to the NSA?

No. The NSA and intelligence agencies were removed from the umbrella agreement after public backlash.

Q. Can the government analyze data about US citizens using OpenAI?

Not if that data is “commercially acquired personal information” — this loophole was explicitly closed in the updated contract.

Q. Why are people switching to Claude?

Because Anthropic refused the surveillance clauses OpenAI initially accepted, making Anthropic appear more privacy-aligned — a position it has maintained consistently through its Responsible Scaling Policy.