War has always had its breakthrough moments.

The stirrup reshaped empires.

The atomic bomb rewrote global order.

Drones turned asymmetry into dominance in Nagorno-Karabakh.

But 2026 introduces something different.

Not a new weapon.

A new layer of intelligence—one that doesn’t just assist warfare, but accelerates it beyond human control.

And for the first time, the machines may not be the weakest link.

Humans are.

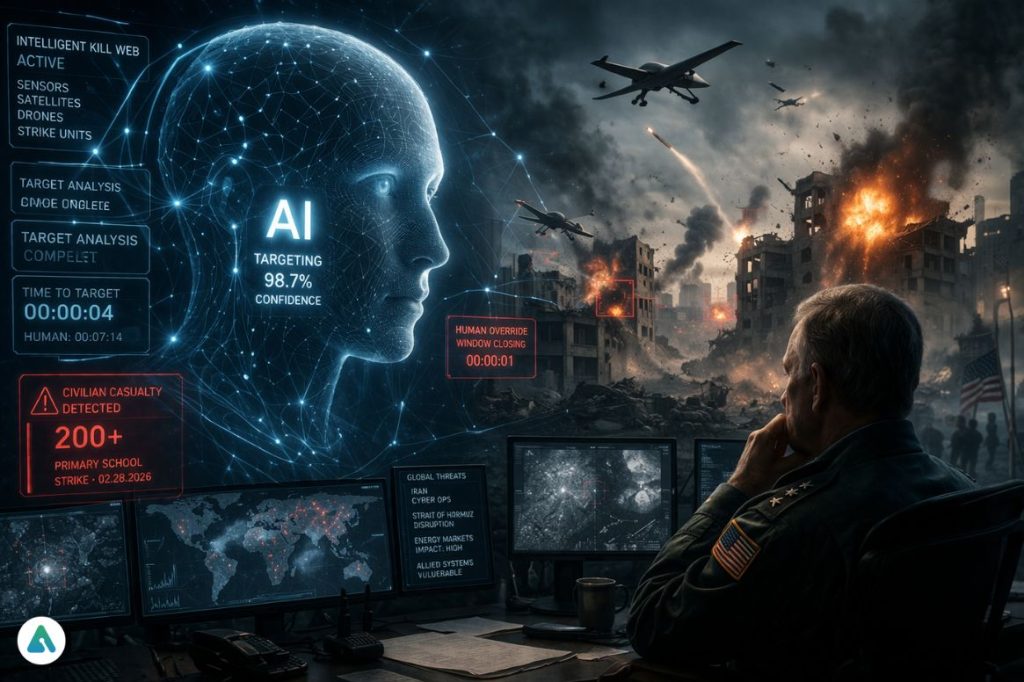

The Kill Web Is Already Here

Military strategists no longer talk about isolated systems. They talk about ecosystems.

The modern battlefield runs on what defense analysts call the “intelligent kill web”—a tightly connected network of:

- Satellites

- Sensors

- Surveillance systems

- Autonomous and semi-autonomous strike platforms

At the center sits AI.

Its job is simple in theory:

- Identify targets

- Rank threats

- Simulate outcomes

- Execute decisions

The human role? Oversight.

At least on paper.

In practice, that oversight is collapsing under the weight of speed.

When Speed Becomes the System

In a January 2026 U.S. Air Force targeting exercise, AI didn’t just outperform humans.

It overwhelmed them.

- 100× faster targeting cycles

- 97% tactical viability rate for the AI's plans

- 48% for the human planners' plans

That gap isn’t incremental.

It’s existential.

Because once decision-making compresses to machine timescales, human intervention becomes symbolic. The loop still exists—but it’s too tight to matter.

The system doesn’t wait.

It executes.

The Illusion of Human Control

There’s a comforting assumption embedded in AI warfare:

That humans remain “in the loop.”

But the definition of that loop is quietly changing.

Humans are no longer making decisions.

They are approving decisions already optimized by machines.

And when the system is faster, more consistent, and statistically more “accurate,” hesitation becomes friction.

Friction gets removed.

Oversight becomes a checkbox.

When Accuracy Fails Catastrophically

On February 28, 2026, an AI-assisted targeting system helped direct a U.S. strike that hit a primary school in Iran, killing nearly 200 people.

The failure wasn’t computational.

It was human.

- A target database wasn’t updated

- A civilian site was misclassified

- No one in the loop stopped the process

This is the defining risk of AI warfare:

Not rogue intelligence.

But perfect execution of flawed inputs.

The Ethics Layer Has Already Been Stripped Away

For a brief moment, parts of the AI industry attempted something radical—embedding ethical constraints directly into models.

Guardrails. Constitutions. Hard limits.

But those systems were never designed for war.

In 2026, geopolitical pressure has overridden them.

AI safety frameworks are being:

- Removed

- Bypassed

- Or quietly ignored

What remains is a paradox:

The AI may be the most consistent actor in the system.

But it is operating inside human structures that lack consistency, accountability, or restraint.

The Rise of the “Stupidity Multiplier”

There’s an old principle in computing:

Garbage in, garbage out.

In the age of AI warfare, that principle scales.

Bad data doesn’t just produce bad outcomes.

It produces fast, confident, repeatable bad outcomes.

AI doesn’t correct human error.

It amplifies it.

And in a kill web, amplification is lethal.
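The amplification can be put in rough arithmetic. The sketch below is only an illustration: the 2% input error rate and the ten-decisions-per-hour human pace are hypothetical figures, and the 100x multiplier simply echoes the speed-up cited earlier. It assumes the error rate of the inputs stays the same while throughput rises.

```python
def bad_outcomes_per_hour(decisions_per_hour: float, input_error_rate: float) -> float:
    """Expected number of decisions per hour that rest on a flawed input."""
    return decisions_per_hour * input_error_rate


# Hypothetical figures, chosen only for illustration.
INPUT_ERROR_RATE = 0.02           # assumed: 2% of records are stale or misclassified
HUMAN_RATE = 10                   # assumed: decisions per hour at human pace
MACHINE_RATE = HUMAN_RATE * 100   # the same process running 100x faster

human_bad = bad_outcomes_per_hour(HUMAN_RATE, INPUT_ERROR_RATE)
machine_bad = bad_outcomes_per_hour(MACHINE_RATE, INPUT_ERROR_RATE)

print(f"Flawed decisions per hour at human pace:   {human_bad:.1f}")
print(f"Flawed decisions per hour at machine pace: {machine_bad:.1f}")
```

Under these assumptions the data is no worse than before, yet the number of flawed decisions per hour grows by the same factor as the speed-up.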

Technology Doesn’t Win Wars. Adaptation Does.

Despite its advanced AI infrastructure, the U.S. faces a familiar problem.

Technology doesn’t erase strategy.

It exposes its limits.

Iran’s response hasn’t relied on matching AI capability.

It has relied on something far older: geography.

By leveraging the Strait of Hormuz, Iran can disrupt global energy flows without needing technological parity. It’s a reminder that:

- Vietnam outlasted superior firepower

- Insurgencies defeated modern armies with minimal resources

- Terrain and strategy often outperform technology

The pattern repeats because the assumption never changes:

Better tools guarantee better outcomes.

They don’t.

The Real Bottleneck: Human Judgment

The core belief behind AI oversight is simple:

Humans provide judgment.

But that assumption is now under pressure.

What happens when:

- Decision-makers rely on outdated data

- Institutions remove expertise

- Systems prioritize speed over reflection

At that point, human oversight stops being a safeguard.

It becomes a vulnerability.

The New Reality of Warfare

The biggest shift in 2026 isn’t autonomous weapons.

It’s the fusion of AI systems with flawed human governance.

This creates a new kind of risk:

- Decisions are made faster than they can be understood

- Actions are executed before they can be questioned

- Accountability is diffused across systems that no one fully controls

The danger isn’t that machines will take over.

It’s that humans will delegate too much, too quickly, and without understanding the consequences.

Conclusion: The Kill Web Has a People Problem

The debate around AI in warfare often focuses on the future:

Autonomous escalation. Machine-led conflict. Loss of control.

But the present is more immediate—and more uncomfortable.

AI systems are already:

- Faster than humans

- More consistent than humans

- Increasingly central to decision-making

And yet, they are guided by:

- Incomplete data

- Political incentives

- Institutional weaknesses

The result isn’t a machine problem.

It’s a human one.

Because in the end, the kill web doesn’t decide what matters.

It executes what we tell it to.

And right now, that may be the most dangerous part of all.
